#Lew Schulman

Link

Website: https://www.f6s.com/lewschulman

Address: 1700 Pennsylvania Ave. NW, Washington, DC 20036

Phone: 800-601-5605

Lew Schulman is a co-founder of iBUILD, the home construction platform for whole-house developments, partial construction projects, and repairs. Mr. Schulman is a Navy veteran focused on innovative social entrepreneurship, start-ups, and philanthropy, having launched four scalable solutions to various social issues around the globe. A seasoned CEO with global leadership experience, he applied his experience to affordable housing innovation after first finding success in finance and the fishing industry. For iBUILD, Mr. Schulman has pioneered housing production systems in the US and abroad, resulting in the creation of thousands of affordable homes.


Text

Lew Schulman

Website: https://www.multifamilyexecutive.com/person/lew-schulman

Address: 1700 Pennsylvania Ave. NW, Washington, DC 20036

Phone: 800-601-5605

Lew Schulman is a co-founder of iBUILD, the home construction platform for whole-house developments, partial construction projects, and repairs. Mr. Schulman is a Navy veteran focused on innovative social entrepreneurship, start-ups, and philanthropy, having launched four scalable solutions to various social issues around the globe. A seasoned CEO with global leadership experience, he applied his experience to affordable housing innovation after first finding success in finance and the fishing industry. For iBUILD, Mr. Schulman has pioneered housing production systems in the US and abroad, resulting in the creation of thousands of affordable homes.



Photo

Streaming LIVE on Facebook this Sunday at 7:30 pm: Join us for “Perspectives on Race and Representation: An Evening with the Racial Imaginary Institute.” Taking the debate around Dana Schutz’s painting, Open Casket, as a starting point, the program will look at questions about the ethics of representation and the responsibilities of artists and museums. The Whitney is partnering with Claudia Rankine and the Racial Imaginary Institute to convene this conversation with artists, scholars, and critics to gain their insights into these issues in relation to the 2017 Biennial and our contemporary moment.

There will be contributions from Elizabeth Alexander, Christopher Benson, LeRonn P. Brooks, Ken Chen, Malik Gaines, Lyle Ashton Harris, Terrance Hayes, Ajay Kurian, Christopher Y. Lew, Casey Llewellyn, Mia Locks, Claudia Rankine, Sarah Schulman, Christina Sharpe, and Herb Tam, among others.

#Whitney Biennial#Race#Representation#Dana Schutz#Claudia Rankine#Racial Imaginary Institute#Ethics of Representation#Elizabeth Alexander#Christopher Benson#LeRonn P. Brooks#Ken Chen#Malik Gaines#Lyle Ashton Harris#Terrance Hayes#Ajay Kurian#Casey Llewellyn#Sarah Schulman#Christina Sharpe#Herb Tam#Christopher Y. Lew#Mia Locks#Art#American Art#Whitney Museum#Whitney Museum of American Art


Text

Lew Schulman

Website: http://mffhousing.com/mffblog/tag/ibuild/

Address: 1700 Pennsylvania Ave. NW, Washington, DC 20036

Phone: 800-601-5605

Lew Schulman is a co-founder of iBUILD, the home construction platform for whole-house developments, partial construction projects, and repairs. Mr. Schulman is a Navy veteran focused on innovative social entrepreneurship, start-ups, and philanthropy, having launched four scalable solutions to various social issues around the globe. A seasoned CEO with global leadership experience, he applied his experience to affordable housing innovation after first finding success in finance and the fishing industry. For iBUILD, Mr. Schulman has pioneered housing production systems in the US and abroad, resulting in the creation of thousands of affordable homes.



Text

ZOOT SUIT KILLERS

Joseph Annunziata

On November 20, 1942, a Brooklyn jury returned guilty verdicts on a pair of Williamsburg teens, 16-year-old Neil Simonelli and 18-year-old Joseph Annunziata, for the murder of Irwin Goodman, their math teacher at William J. Gaynor High School. The two of them had never much liked Goodman, a 36-year-old father of two. When he reported them to the principal for smoking in the boys' room, they walked eight blocks to Simonelli's home, where they picked up a pistol, then back to the school. They confronted Goodman and got into a scuffle with him. The gun, which Annunziata was holding, went off, perhaps accidentally, fatally shooting Goodman through the back. Because the jury entertained a doubt that the shooting was premeditated, they convicted the boys of murder in the second degree. The pair went off to Sing Sing together to begin sentences of 20 years to life. Had the verdict been first-degree murder, they could have been the youngest New Yorkers ever executed.

The city's newspapers, from the New York Times to the Brooklyn Eagle, provided extensive coverage of the case, and there was commentary in national magazines like Time. What fascinated them all, beyond the crime itself, was the boys' lifestyle and attire: uniformly, the press described Simonelli and Annunziata as "jitterbugs," "Zoot Suit Youths" and "Zoot Suit Killers."

Neil Simonelli

Whether or not anyone in the press had actually seen Simonelli and Annunziata wearing zoot suits was a moot point. By 1942, "zoot suit" was a metonym for "juvenile delinquent." What the black leather jacket and the hoodie were to later generations, the zoot suit was to the war years.

When the zoot suit first appeared it was mostly associated with black youths and the jitterbug in neighborhoods like Harlem. It consisted of an outrageously outsized jacket, with superwide padded shoulders, that hung down to the knees and the fingertips. The pants were exaggerated as well, ballooning and deeply pleated, then pegged tight at the ankles. A broad-brimmed or porkpie hat, pointed or platform shoes, a long watch chain, and a variety of tie styles completed the ensemble.

At first it was seen as a rather comical and harmless style, just another example of young people going to silly sartorial extremes. It began to look more sinister amid increasing worries about what life in wartime was doing to America's families and children.

The Depression and Dust Bowl 1930s had already wreaked havoc on the American family, turning millions into homeless migrants, splitting off husbands who went on the bum seeking any work they could find, forcing some mothers and daughters into prostitution, and enticing some young men into lives of crime and gangsterism. The war brought new dislocations and disorder. Some 15 million Americans were uprooted again, trekking across the country seeking defense work. Many moved more than once during the war, and few returned to their point of origin after it.

From 1940 well into 1943, the Selective Service exempted fathers with dependent children. But with the military's ever-expanding need for manpower, fathers eventually began to be drafted. The government started sending monthly checks to servicemen's families in 1942, but in expensive cities like New York it often wasn't enough to run a household. By 1944, more than a million servicemen's wives had taken jobs.

Kids were working too. In the Depression years, new legislation against child labor had been enacted, largely to prevent kids from taking scarce jobs away from adult males. Now, as labor shortages grew more severe, many states and localities rolled back those restrictions. As a result, by 1944 high school enrollment had fallen 25%, while the employment of youths 14 to 18 had more than doubled. An estimated 2 million high schoolers had dropped out to take jobs, and many planned not to go back to school.

The impact of all this on kids' lives could be profound. They might lose their father for the duration, or forever. They might follow their parents from one defense job to another, always the new kids in the neighborhood and at school. If they stayed in school, whether dad was gone and mom worked or both parents worked, kids now found themselves with lots of free, unsupervised time. If they dropped out and took jobs, they had cash in their pockets to spend any way they wanted.

And they were growing up in wartime. Teenage boys too young to be sent to fight knew that in a year or two or three they might well be. In the meantime they wanted to look and feel as manly as their fathers and older brothers in uniform. According to law enforcement, teenage gang activity and street fighting escalated, and the violence grew more serious; where teen gangs had formerly used fists and clubs, they now wielded zip guns and flick-knives, sometimes inflicting deadly harm. Teenage girls as well as boys took to drinking, smoking, and sexual pickups, in full eat-drink-and-be-merry mode. Adults labeled it "war degeneracy." It's no coincidence that the terms "youth culture" and "teenager" (or "teen-ager") were also coined in this period. They were something new, a generation of latchkey kids, army brats, war orphans.

The story of Simonelli and Annunziata neatly encapsulated what was seen as a broader trend. Youth crime figures in the first full year of the war were so disturbing, J. Edgar Hoover said, that a "counter-offensive" was necessary to prevent "a breakdown on our home front." He told a graduating class at the FBI Academy, "Something has happened to our moral fibers when the nation's youths under voting age accounted for 15 per cent of all murders, 35 per cent of all robberies, 58 per cent of all car thefts and 50 per cent of all burglaries." Later studies showed that nationwide juvenile delinquency arrests rose 72 per cent during the war. In Brooklyn, it was 100 per cent.

By 1942, the year of Simonelli and Annunziata, the zoot was identified as much with this behavior as with lindy-hopping and jitterbugging. That year, the War Production Board actually declared the zoot suit unpatriotic, because it was a waste of material in a time of rationing. The wide, pleated skirts girls wore for jitterbugging (and showing off their underwear) were denounced on the same grounds.

In 1943, one in five arrests was of someone under 18. But that year offered clear evidence that at least some of those arrests were the result of harassment and bias as much as bad behavior. That June, white sailors and soldiers in Los Angeles went on a rampage, attacking Mexican American teens all over the city. The "pachucos" fought back, and a week of rioting followed. The national press, against all evidence that the white servicemen had instigated a race riot, chose to call it a "zoot suit riot."

A new raft of stories followed, as journalists competed to define what the zoot was, what it meant, who wore it, and who invented it. Claimants to the latter ranged from a busboy in Atlanta to tailors in Memphis, Chicago, and L.A. The New Yorker, not surprisingly, decided that it started in Gotham. "With some friendly cooperation from the editors of the Amsterdam News, an uptown newspaper published by and for colored people, we got in touch with Lew Eisenstein, proprietor of Lew's Pants Store, on 125th Street," a "Talk of the Town" piece called "Zoot Lore" explained that June. Supposedly Lew's wife first pegged some loose pants in 1934, and the rest of the zoot suit followed in due course. Lew took credit for adding the long watch chain. Their claims were, of course, disputed by others.

The zoot suit would live on past the war, mostly worn by black and Hispanic men, though the influence of its wide shoulders and voluminous pants could be discerned in all men's suits in the early 1950s. Concerns about juvenile delinquency also continued after the war, rising to a level of national panic in the 1950s.

The story of the zoot suit killers lived on in its own way. In 1947, Irving Shulman's pulp novel The Amboy Dukes, set in wartime Brooklyn, was a shock sensation, selling five million copies even as it was banned in some locales for its sex and violence. Shulman, who was from Brooklyn himself and spent the war years writing for the War Department in Washington, clearly used Simonelli and Annunziata as the models for his lead characters Frank Goldfarb and Benny Semmel. They're a pair of juvenile delinquents in Jewish Brownsville, products of its "ugly gray and red tenements, tombstones of disease, unrest and smoldering violence… It was as if nothing bright would ever shine on Amboy Street." While their parents do defense work, Frank and Benny hook school almost constantly to hang out with their gang, the Amboy Dukes. They make money selling counterfeit gas ration coupons on the black market, and spend it on liquor, marijuana, zip guns and whores. They too accidentally shoot and kill a teacher in a scuffle, and come to a worse end for it than their real-life models.

Lurid yet relentlessly downbeat, The Amboy Dukes both looked back to the worst of wartime New York and ahead to 1950s juvenile delinquent tales like Blackboard Jungle and Shulman's own Rebel Without a Cause. (He would also write a novelization of West Side Story.) After the scandal kicked up by its first appearance, later editions dialed back the sex and violence and, interestingly, deracinated the two anti-heroes by giving them less Jewish-sounding surnames. In 1949 it was adapted for the film City Across the River.

by John Strausbaugh


Text

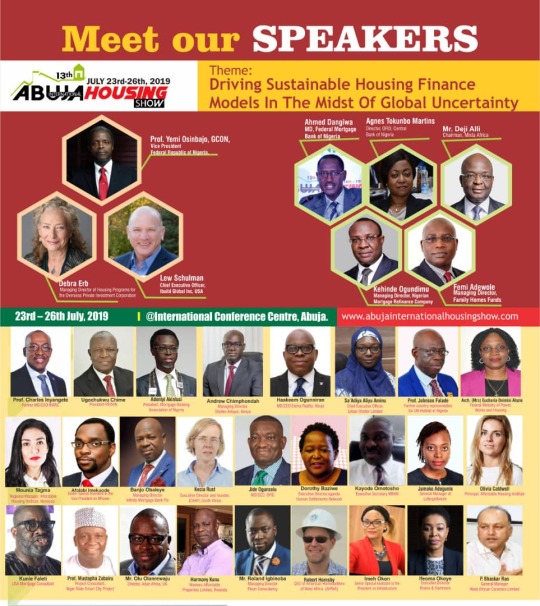

13th Abuja International Housing Show Will Host Real Estate, Bank CEOs, Others

The 13th edition of the prestigious Abuja International Housing Show will play host to a strong delegation of Real Estate Executives and Investors, Bank CEOs and ranking stakeholders in the housing and construction industry from across the world.

According to the event's convener and coordinator, Bar. Festus Adebayo, this year’s edition will also showcase cutting-edge industry innovations as stakeholders gather for the largest housing show on the continent.

In an interview with Nigeria's premier Housing Development TV program, the show's coordinator revealed that registration has commenced and that all hands are on deck to deliver the best show ever, especially at a time when new ideas are needed to grow Nigeria's economy.

The 13th Abuja International Housing Show is scheduled to be held from July 23rd to 26th, 2019, at the International Conference Centre, Abuja.

According to Bar. Adebayo, the theme of this year's edition is "Driving Sustainable Housing Finance Models in the Midst of Global Uncertainty," a testament to how the show continues to be an invaluable experience for building professionals in Nigeria and across the globe.

As always, speakers will be drawn from across the world, with the experience and knowledge to share valuable information on the subject matter and the ultimate goal of pushing forward a unified and progressive housing and construction industry.

Adebayo listed some of this year's innovations, including the introduction of a CEOs Forum, a women in the housing sector initiative, an international real estate investment forum, and a segment for youth in real estate development.

According to him, “The CEOs Forum is unique in the sense that leaders in real estate, banking, building materials and technology will be meeting to address the issue of affordable housing finance holistically.”

“The show will also feature youth in real estate development. Young people are coming into the industry with a lot of great ideas, and they need the right mentorship and guidance in order to achieve maximum results. Also, the show will present opportunities for those seeking entrance into the industry. Opportunities like this are rare to come by and I am sure they can't afford to miss out,” he added.

On the subject of opportunities, participants at the show will have access to first-hand information on the best mortgage and finance schemes available, as well as on how to start as a small real estate investor.

As befits the show, this year's edition will be declared open by the Vice President of Nigeria, Prof. Yemi Osinbajo. Other top attendees will include Lew Schulman, Chief Executive Officer, Build Modal Inc, USA; Debra Erb, Managing Director of Housing Program for Overseas Private Investment Corporation; Ahmed Dangiwa, MD of Federal Mortgage Bank of Nigeria; Agnes Tokumbo Martins, Director, CBN; Femi Adewole, MD, Family Homes Fund; Kehinde Ogundimu, MD, Nigeria Mortgage Refinance Company PLC; Ugochukwu Chime, REDAN President; Robert Hornsby, CEO, American Homebuilders of West Africa; Olivia Caldwell; Kecia Rust; Mounia Tagwa; Afolabi Imokuode; Ime Okon; Deji Alli of Mixta Africa; Ifeoma Okoye; Saadiya Aliyu; Hakeem Ogunniran; and other international experts.

Ghana’s Minister of Women Affairs, Hon. Freda Prempeh, who has been nominated for a Performance Award, will also deliver an address as a guest of honour and will lead a host of Ghanaian Parliamentarians.

The guest speakers for the housing finance section of the 13th Abuja Housing Show are drawn from the World Bank, African Union of Housing Finance, Central Bank of Nigeria, Institute of Affordable Housing (USA), American Homebuilders of West Africa, Family Homes Fund, the Presidency, Shelter Afrique (Kenya), Federal Mortgage Bank of Nigeria, Nigeria Mortgage Refinance Company, UN Habitat, and OPIC Investment (USA), along with Umar Shuaibu, Coordinator of the Abuja Metropolitan Management Council, among others.

According to the coordinator, this year's edition will feature over 30 speakers and 350 exhibitors from no fewer than 20 countries.

By Felix Ojonugbwa


Text

Upon Listening In On Perspectives on Race and Representation

I improvisationally composed this piece whilst listening to a live feed from the Whitney Museum of American Art concerning Perspectives on Race and Representation streaming from their Facebook page. The talk included many well-established artists including Elizabeth Alexander, Christopher Benson, LeRonn P. Brooks, Ken Chen, Malik Gaines, Lyle Ashton Harris, Terrance Hayes, Ajay Kurian, Christopher Y. Lew, Casey Llewellyn, Mia Locks, Claudia Rankine, Sarah Schulman, Christina Sharpe, and Herb Tam.

Much of the talk revolves around Dana Schutz’s painting, Open Casket, it is, to quote their Facebook, “a starting point, tonight’s program looks at questions about the ethics of representation and the responsibilities of artists and museums. The Whitney is partnering with Claudia Rankine and the Racial Imaginary Institute to convene this conversation with artists, scholars, and critics to gain their insights into these issues in relation to the 2017 Biennial and our contemporary moment.”

This piece is largely unedited. It for me functions as archive in order for me to return to it and meditate upon at some later time. It also represents a listening and reacting to the experience of a mediated reflection upon the events in real time. In all honesty this piece of writing is a series of questions and definitions trying to transcribe, in some medium, what acts such as these are engaging in. It is my reacting and consequential producing. It is a product and a reaction.

For context, a Facebook friend shared the live stream and, because I had sufficient free time, I began to listen in about halfway through, just as Claudia Rankine was finishing her portion. First, I began taking notes as if in a lecture and then realized I was doing something else altogether.

---

Who is authorized to destroy?

What laws are of humans and not also upon them?

Does the destruction of property frighten us because of its connection to the body

Nothing is property without propriety, right?

propriety

noun

the state or quality of conforming to conventionally accepted standards of behavior or morals

Apropos - being both relevant and opportune

If the A- hear is negational then is what does that make propos, irrelevant and inopportune?

Propos - French for about

prop -

noun

a pole or beam used as a support or to keep something in position, typically one that is not an integral part of the thing supported.

verb

position something underneath (someone or something) for support.

noun

a portable object other than furniture or costumes used on the set of a play or movie.

mid 19th century: abbreviation of property.

abbreviation

proposition

proprietor

pro,

professional or

you positive?

Let us not confuse David with Goliath! The prophet extolls this! Why?

What profit does the prophet make from this ex

-tolling?

ex- prefix

from Latin meaning ‘out of’

What was the original toll?

What has come out of toll?

What is the toll of the body?

What is the toll on the body?

How much does the casket cost?

What is the use of an Open Casket when we will bury or burn the damned thing anyways?

What is the Damned Thing?

The body, the body, inside the casket is the body, are we trying to avoid the body by putting it in a casket?

Can the body be painted?

Can the body be put away in a painting?

I’ve heard of a painting embodying but what of a body empainting?

em-

noun

a unit for measuring the width of printed matter, equal to the height of the type size being used.

late 18th century: the letter M represented as a word, since it is approximately this width.

pronoun

short for them, especially in informal use.

Middle English: originally a form of hem, dative and accusative third person plural pronoun in Middle English; now regarded as an abbreviation of them.

prefix

variant spelling of en-

abbreviation

electromagnetic.

Engineer of Mines.

enlisted man (men).

ing-

denoting a verbal action relating to an occupation, skill, etc.

"banking"

denoting something involved in an action or process but with no corresponding verb.

"scaffolding"

-ing

forming present participles used as adjectives.

"charming"

-ing

a thing belonging to or having the quality of.

What is the body?

We put the body in the Earth, does the earth own the body?

When the government taxes us for land use do they own the body?

If they own the body, should they take responsibility for it?

What is the dead body?

What can the dead body do?

If the owner can be said to be responsible for the body, the body must be troubling something, causing some issue for another body?

Is that other body living?

Wait, is the body a zombie or some other undead?

un- prefix

Old English, of Germanic origin; from an Indo-European root shared by Latin in- and Greek a-

in-

adjective

(of a person) present at one’s home or office

fashionable

(of the ball in tennis or similar games) landing within the designated playing area

adverb

expressing movement with the result that someone or something becomes enclosed or surrounded by something else

expressing the situation of being enclosed or surrounded by something

expressing arrival at a destination

(of the tide) rising or at its highest level

(of an infielder or outfielder) playing closer to home plate than usual (of a pitch)

Old English in (preposition), inn, inne (adverb), of Germanic origin; related to Dutch and German in (preposition), German ein (adverb), from an Indo-European root shared by Latin in and Greek en.

a- prefix

What is a prefix?

What if I take the word apart?

What if I take the word a-

part?

What if I take the word, a part?

Is this an autopsy of the word?

Do I upturn all the quiet graves disturb the bodies or the Earth when I define, or search into the etymology?

Is the word a body?

Is the body propos or apropos in this sentence?

Whose opportunity is the body?

Is life a sentence?

sent - english

verb

simple past tense and past participle of send

sent - french

a smell, a stink, something foul-smelling

a feeling,

a touching,

a groping

en - within; inside from Greek

expressing a conversion into a specified state or location

ce - this

Am I trying to make this a poem?

Is this a poem?

I don’t want to lie when I say I don’t know, but I wonder how?

If I want to ask the question, will it come out as just a statement?

How am I responsible to other human beings in anything I make?

Is that question complicated enough?

How do we not know?

Am I yelling?

Am I yelling?

Am I yelling?

What is right now?

What is now?

What now?

now

adverb

at the present time or moment

used, especially in conversation, to draw attention to a particular statement or point in a narrative.

conjunction

as a consequence of the fact

adjective

fashionable; up to date

---

I’ve also added the video of the talk if anyone might be interested in listening to it themselves. Thank you.

https://www.facebook.com/whitneymuseum/videos/10154262121821433/

#open casket#emmet till#writing#listening#responding#definitions#whitneymuseum#claudia rankine#Malik Gaines


Text

Lew Schulman iBUILD

Website: https://sketchfab.com/lewschulman2

Address: 3401 Quorum Drive, Fort Worth, TX 76137

Phone: +1 (800) 622-6433

If you are in need of fire restoration for your commercial or corporate building, Interstate Restoration is ready to help. Our team is available 24/7 for emergencies and can work with both commercial and corporate facilities. Interstate is different from other companies because we come in immediately to assess and mitigate damage from fire and smoke, and we also handle the reconstruction. Fire restoration would normally require the use of several companies, but Interstate Restoration will provide a full repair of the damage. This is a guarantee that other individual companies cannot promise you. The Interstate process of fire restoration includes safety, containment, preservation, decontamination, restoration and repair, and recommissioning. You can trust our company to help after the devastation of a fire.

Facebook: https://www.facebook.com/InterstateRest/


Text

Soft Robotics and Artificial Intelligence

*Obviously rather a lot of thought has been going into this.

https://www.researchgate.net/publication/329243898_Deep_Reinforcement_Learning_for_Soft_Robotic_Applications_Brief_Overview_with_Impending_Challenges

Deep Reinforcement Learning for Soft Robotic Applications: Brief Overview with Impending Challenges

by Sarthak Bhagat

Abstract

The increasing trend of studying the innate softness of robotic structures and amalgamating it with the benefits of the extensive developments in the field of embodied intelligence has led to the sprouting of a relatively new yet extremely rewarding sphere of technology. The fusion of current deep reinforcement algorithms with the physical advantages of a soft bio-inspired structure certainly directs us to a fruitful prospect of designing completely self-sufficient agents that are capable of learning from observations collected from their environment to achieve a task they have been assigned. For soft robotic structures possessing countless degrees of freedom, it is often not easy (sometimes not even possible) to formulate the mathematical constraints necessary for training a deep reinforcement learning (DRL) agent for the task at hand; hence, we resort to imitation learning techniques, owing to the ease of manually performing tasks such as manipulation that could be comfortably mimicked by our agent. Deploying current imitation learning algorithms on soft robotic systems has been observed to provide satisfactory results, but there are still challenges in doing so. This review article thus posits an overview of various such algorithms, along with instances of them being applied to real-world scenarios and yielding state-of-the-art results, followed by brief descriptions of various pristine branches of DRL research that may be centers of future research in this field of interest.

(...)


Robotics 2019, 8, 4 30 of 36

Ho, S.; Banerjee, H.; Foo, Y.Y.; Godaba, H.; Aye, W.M.M.; Zhu, J.; Yap, C.H. Experimental characterization of a dielectric elastomer fluid pump and optimizing performance via composite materials. J. Intell. Mater. Syst. Struct. 2017, 28, 3054–3065. [CrossRef] [CrossRef]

Shepherd, R.F.; Ilievski, F.; Choi, W.; Morin, S.A.; Stokes, A.A.; Mazzeo, A.D.; Chen, X.; Wang, M.; Whitesides, G.M. Multigait soft robot. Proc. Natl. Acad. Sci. USA 2011, 108, 20400–20403. [CrossRef] [PubMed] [CrossRef]

Banerjee, H.; Pusalkar, N.; Ren, H. Single-Motor Controlled Tendon-Driven Peristaltic Soft Origami Robot.

J. Mech. Robot. 2018, 10, 064501. [CrossRef] [CrossRef]

Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction; MIT Press: Cambridge, MA, USA, 1998.

Dayan, P. Improving generalization for temporal difference learning: The successor representation.

Neural Comput. 1993, 5, 613–624. [CrossRef] [CrossRef]

Kulkarni, T.D.; Saeedi, A.; Gautam, S.; Gershman, S.J. Deep Successor Reinforcement Learning. arXiv 2016,

arXiv:1606.02396.

Barreto, A.; Dabney, W.; Munos, R.; Hunt, J.J.; Schaul, T.; van Hasselt, H.P.; Silver, D. Successor features

for transfer in reinforcement learning. In Proceedings of the Advances in Neural Information Processing

Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 4055–4065.

Zhang, J.; Springenberg, J.T.; Boedecker, J.; Burgard, W. Deep reinforcement learning with successor features

for navigation across similar environments. In Proceedings of the 2017 IEEE/RSJ International Conference

on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 2371–2378.

Fu, M.C.; Glover, F.W.; April, J. Simulation optimization: A review, new developments, and applications. In Proceedings of the 37th Conference on Winter Simulation, Orlando, FL, USA, 4–7 December 2005;

pp. 83–95.

Szita, I.; Lörincz, A. Learning Tetris using the noisy cross-entropy method. Neural Comput. 2006,

18, 2936–2941. [CrossRef] [CrossRef] [PubMed]

Schulman, J.; Moritz, P.; Levine, S.; Jordan, M.; Abbeel, P. High-Dimensional Continuous Control Using

Generalized Advantage Estimation. arXiv 2015, arXiv:1506.02438.

Williams, R.J. Simple statistical gradient-following algorithms for connectionist reinforcement learning.

Mach. Learn. 1992, 8, 229–256. [CrossRef] [CrossRef]

Silver, D.; Lever, G.; Heess, N.; Degris, T.; Wierstra, D.; Riedmiller, M. Deterministic policy gradient

algorithms. In Proceedings of the ICML, Beijing, China, 21–26 June 2014.

Sutton, R.S. Dyna, an integrated architecture for learning, planning, and reacting. ACM SIGART Bull. 1991,

2, 160–163. [CrossRef] [CrossRef]

Weber, T.; Racanière, S.; Reichert, D.P.; Buesing, L.; Guez, A.; Rezende, D.J.; Badia, A.P.; Vinyals, O.; Heess, N.;

Li, Y.; et al. Imagination-Augmented Agents for Deep Reinforcement Learning. arXiv 2017, arXiv:1707.06203.

Kalweit, G.; Boedecker, J. Uncertainty-driven imagination for continuous deep reinforcement learning. In Proceedings of the Conference on Robot Learning, Mountain View, CA, USA, 13–15 November 2017;

pp. 195–206.

Banerjee, H.; Pusalkar, N.; Ren, H. Preliminary Design and Performance Test of Tendon-Driven Origami-Inspired

Soft Peristaltic Robot. In Proceedings of the 2018 IEEE International Conference on Robotics and Biomimetics

(IEEE ROBIO 2018), Kuala Lumpur, Malaysia, 12–15 December 2018.

Cianchetti, M.; Ranzani, T.; Gerboni, G.; Nanayakkara, T.; Althoefer, K.; Dasgupta, P.; Menciassi, A. Soft Robotics

Technologies to Address Shortcomings in Today’s Minimally Invasive Surgery: The STIFF-FLOP Approach.

Soft Robot. 2014, 1, 122–131. [CrossRef] [CrossRef]

Hawkes, E.W.; Blumenschein, L.H.; Greer, J.D.; Okamura, A.M. A soft robot that navigates its environment

through growth. Sci. Robot. 2017, 2, eaan3028. [CrossRef]

Atalay, O.; Atalay, A.; Gafford, J.; Walsh, C. A Highly Sensitive Capacitive-Based Soft Pressure Sensor Based

on a Conductive Fabric and a Microporous Dielectric Layer. Adv. Mater. 2017. [CrossRef] [CrossRef]

Truby, R.L.; Wehner, M.J.; Grosskopf, A.K.; Vogt, D.M.; Uzel, S.G.M.; Wood, R.J.; Lewis, J.A. Soft Somatosensitive

Actuators via Embedded 3D Printing. Adv. Mater. 2018, 30, e1706383. [CrossRef] [PubMed] [CrossRef]

Bishop-Moser, J.; Kota, S. Design and Modeling of Generalized Fiber-Reinforced Pneumatic Soft Actuators.

IEEE Trans. Robot. 2015, 31, 536–545. [CrossRef] [CrossRef]

Mnih, V.; Kavukcuoglu, K.; Silver, D.; Rusu, A.A.; Veness, J.; Bellemare, M.G.; Graves, A.; Riedmiller, M.;

Fidjeland, A.K.; Ostrovski, G.; et al. Human-level control through deep reinforcement learning. Nature 2015,518, 529. [CrossRef] [PubMed] [CrossRef] [PubMed]

Robotics 2019, 8, 4 31 of 36

Katzschmann, R.K.; DelPreto, J.; MacCurdy, R.; Rus, D. Exploration of underwater life with an acoustically controlled soft robotic fish. Sci. Robot. 2018, 3, eaar3449. [CrossRef]

Van Hasselt, H.; Guez, A.; Silver, D. Deep Reinforcement Learning with Double Q-Learning. In Proceedings of the AAAI, Phoenix, AZ, USA, 12–17 February 2016; Volume 2, p. 5.

Wang, Z.; Schaul, T.; Hessel, M.; Van Hasselt, H.; Lanctot, M.; De Freitas, N. Dueling Network Architectures for Deep Reinforcement Learning. arXiv 2015, arXiv:1511.06581.

Gu, S.; Lillicrap, T.; Sutskever, I.; Levine, S. Continuous deep q-learning with model-based acceleration. In Proceedings of the International Conference on Machine Learning, New York, NY, USA, 19–24 June 2016; pp. 2829–2838.

Gu, S.; Holly, E.; Lillicrap, T.; Levine, S. Deep reinforcement learning for robotic manipulation with asynchronous off-policy updates. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 3389–3396.

Mnih, V.; Badia, A.P.; Mirza, M.; Graves, A.; Lillicrap, T.; Harley, T.; Silver, D.; Kavukcuoglu, K. Asynchronous methods for deep reinforcement learning. In Proceedings of the International Conference on Machine Learning, New York, NY, USA, 19–24 June 2016; pp. 1928–1937.

Wang, J.X.; Kurth-Nelson, Z.; Tirumala, D.; Soyer, H.; Leibo, J.Z.; Munos, R.; Blundell, C.; Kumaran, D.; Botvinick, M. Learning to Reinforcement Learn. arXiv 2016, arXiv:1611.05763.

Wu, Y.; Mansimov, E.; Grosse, R.B.; Liao, S.; Ba, J. Scalable trust-region method for deep reinforcement learning using kronecker-factored approximation. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 5279–5288.

Levine, S.; Koltun, V. Guided policy search. In Proceedings of the International Conference on Machine Learning, Atlanta, GA, USA, 16 June–21 June 2013; pp. 1–9.

Schulman, J.; Levine, S.; Abbeel, P.; Jordan, M.; Moritz, P. Trust region policy optimization. In Proceedings of the International Conference on Machine Learning, Lille, France, 6 July–11 July 2015; pp. 1889–1897.

Kakade, S.; Langford, J. Approximately optimal approximate reinforcement learning. In Proceedings of the ICML, Sydney, Australia, 8–12 July 2002; Volume 2, pp. 267–274.

Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal Policy Optimization Algorithms.arXiv 2017, arXiv:1707.06347.

Mirowski, P.; Pascanu, R.; Viola, F.; Soyer, H.; Ballard, A.J.; Banino, A.; Denil, M.; Goroshin, R.; Sifre, L.; Kavukcuoglu, K.; et al. Learning to Navigate in Complex Environments. arXiv 2016, arXiv:1611.03673.

Riedmiller, M.; Hafner, R.; Lampe, T.; Neunert, M.; Degrave, J.; Van de Wiele, T.; Mnih, V.; Heess, N.; Springenberg, J.T. Learning by Playing-Solving Sparse Reward Tasks from Scratch. arXiv 2018, arXiv:1802.10567.

Yu, T.; Finn, C.; Xie, A.; Dasari, S.; Zhang, T.; Abbeel, P.; Levine, S. One-Shot Imitation from Observing

Humans via Domain-Adaptive Meta-Learning. arXiv 2018, arXiv:1802.01557.

Levine, S.; Finn, C.; Darrell, T.; Abbeel, P. End-to-end training of deep visuomotor policies. J. Mach. Learn. Res.

2016, 17, 1334–1373.

Jaderberg, M.; Mnih, V.; Czarnecki, W.M.; Schaul, T.; Leibo, J.Z.; Silver, D.; Kavukcuoglu, K. Reinforcement

Learning with Unsupervised Auxiliary Tasks. arXiv 2016, arXiv:1611.05397.

Schaul, T.; Quan, J.; Antonoglou, I.; Silver, D. Prioritized Experience Replay. arXiv 2015, arXiv:1511.05952.

Bengio, Y.; Louradour, J.; Collobert, R.; Weston, J. Curriculum learning. In Proceedings of the 26th Annual

International Conference on Machine Learning, Montreal, QC, Canada, 14–18 June 2009; pp. 41–48.

Zhang, J.; Tai, L.; Boedecker, J.; Burgard, W.; Liu, M. Neural SLAM. arXiv 2017, arXiv:1706.09520.

Florensa, C.; Held, D.; Wulfmeier, M.; Zhang, M.; Abbeel, P. Reverse Curriculum Generation for

Reinforcement Learning. arXiv 2017, arXiv:1707.05300.

Pathak, D.; Agrawal, P.; Efros, A.A.; Darrell, T. Curiosity-driven exploration by self-supervised

prediction. In Proceedings of the International Conference on Machine Learning (ICML), Sydney, Australia,

6–11 August 2017; Volume 2017.

Sukhbaatar, S.; Lin, Z.; Kostrikov, I.; Synnaeve, G.; Szlam, A.; Fergus, R. Intrinsic Motivation and Automatic

Curricula via Asymmetric Self-Play. arXiv 2017, arXiv:1703.05407.

Fortunato, M.; Azar, M.G.; Piot, B.; Menick, J.; Osband, I.; Graves, A.; Mnih, V.; Munos, R.; Hassabis, D.;

Pietquin, O.; et al. Noisy Networks for Exploration. arXiv 2017, arXiv:1706.10295.

Plappert, M.; Houthooft, R.; Dhariwal, P.; Sidor, S.; Chen, R.Y.; Chen, X.; Asfour, T.; Abbeel, P.;

Andrychowicz, M. Parameter Space Noise for Exploration. arXiv 2017, arXiv:1706.01905.

Robotics 2019, 8, 4 32 of 36

Rafsanjani, A.; Zhang, Y.; Liu, B.; Rubinstein, S.M.; Bertoldi, K. Kirigami skins make a simple soft actuator crawl. Sci. Robot. 2018. [CrossRef]

Zhu, Y.; Mottaghi, R.; Kolve, E.; Lim, J.J.; Gupta, A.; Fei-Fei, L.; Farhadi, A. Target-driven visual navigation in indoor scenes using deep reinforcement learning. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 30–31 May 2017; pp. 3357–3364.

He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

Kolve, E.; Mottaghi, R.; Gordon, D.; Zhu, Y.; Gupta, A.; Farhadi, A. AI2-THOR: An Interactive 3d

Environment for Visual AI. arXiv 2017, arXiv:1712.05474.

Tai, L.; Paolo, G.; Liu, M. Virtual-to-real deep reinforcement learning: Continuous control of mobile robots

for mapless navigation. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots

and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 31–36.

Chen, Y.F.; Everett, M.; Liu, M.; How, J.P. Socially aware motion planning with deep reinforcement learning. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS),

Vancouver, BC, Canada, 24–28 September 2017; pp. 1343–1350.

Long, P.; Fan, T.; Liao, X.; Liu, W.; Zhang, H.; Pan, J. Towards Optimally Decentralized Multi-Robot Collision

Avoidance via Deep Reinforcement Learning. arXiv 2017, arXiv:1709.10082.

Thrun, S.; Burgard, W.; Fox, D. Probabilistic Robotics (Intelligent Robotics and Autonomous Agents);

The MIT Press: Cambridge, MA, USA, 2001.

Gupta, S.; Davidson, J.; Levine, S.; Sukthankar, R.; Malik, J. Cognitive Mapping and Planning for Visual

Navigation. arXiv 2017, arXiv:1702.03920.

Gupta, S.; Fouhey, D.; Levine, S.; Malik, J. Unifying Map and Landmark Based Representations for Visual

Navigation. arXiv 2017, arXiv:1712.08125.

Parisotto, E.; Salakhutdinov, R. Neural Map: Structured Memory for Deep Reinforcement Learning. arXiv

2017, arXiv:1702.08360.

Kümmerle, R.; Grisetti, G.; Strasdat, H.; Konolige, K.; Burgard, W. G2o: A general framework for graph

optimization. In Proceedings of the 2011 IEEE International Conference on Robotics and Automation (ICRA),

Shanghai, China, 9–13 May 2011; pp. 3607–3613.

Parisotto, E.; Chaplot, D.S.; Zhang, J.; Salakhutdinov, R. Global Pose Estimation with an Attention-Based

Recurrent Network. arXiv 2018, arXiv:1802.06857.

Schaul, T.; Horgan, D.; Gregor, K.; Silver, D. Universal value function approximators. In Proceedings of the

International Conference on Machine Learning, Lille, France, 6 July–11 July 2015; pp. 1312–1320.

Zhu, J.Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired Image-to-Image Translation Using Cycle-Consistent

Adversarial Networks. arXiv 2017, arXiv:1703.10593.

Khan, A.; Zhang, C.; Atanasov, N.; Karydis, K.; Kumar, V.; Lee, D.D. Memory Augmented Control Networks.

arXiv 2017, arXiv:1709.05706.

Bruce, J.; Sünderhauf, N.; Mirowski, P.; Hadsell, R.; Milford, M. One-Shot Reinforcement Learning for Robot

Navigation with Interactive Replay. arXiv 2017, arXiv:1711.10137.

Chaplot, D.S.; Parisotto, E.; Salakhutdinov, R. Active Neural Localization. arXiv 2018, arXiv:1801.08214.

Savinov, N.; Dosovitskiy, A.; Koltun, V. Semi-Parametric Topological Memory for Navigation. arXiv 2018,

arXiv:1803.00653.

Heess, N.; Sriram, S.; Lemmon, J.; Merel, J.; Wayne, G.; Tassa, Y.; Erez, T.; Wang, Z.; Eslami, A.; Riedmiller, M.; et al.

Emergence of Locomotion Behaviours in Rich Environments. arXiv 2017, arXiv:1707.02286.

Calisti, M.; Giorelli, M.; Levy, G.; Mazzolai, B.; Hochner, B.; Laschi, C.; Dario, P. An octopus-bioinspired solutiontomovementandmanipulationforsoftrobots. Bioinspir.Biomim.2011,6,036002.[CrossRef][PubMed]

[CrossRef]

Martinez, R.V.; Branch, J.L.; Fish, C.R.; Jin, L.; Shepherd, R.F.; Nunes, R.M.D.; Suo, Z.; Whitesides, G.M.

Robotic tentacles with three-dimensional mobility based on flexible elastomers. Adv. Mater. 2013, 25, 205–212.

[CrossRef] [PubMed] [CrossRef]

Caldera, S. Review of Deep Learning Methods in Robotic Grasp Detection. Multimodal Technol. Interact.

2018, 2, 57. [CrossRef] [CrossRef]

Zhou, J.; Chen, S.; Wang, Z. A Soft-Robotic Gripper with Enhanced Object Adaptation and Grasping

Reliability. IEEE Robot. Autom. Lett. 2017, 2, 2287–2293. [CrossRef] [CrossRef]

Robotics 2019, 8, 4 33 of 36

Finn, C.; Tan, X.Y.; Duan, Y.; Darrell, T.; Levine, S.; Abbeel, P. Deep Spatial Autoencoders for Visuomotor Learning. arXiv 2015, arXiv:1509.06113.

Tzeng, E.; Devin, C.; Hoffman, J.; Finn, C.; Peng, X.; Levine, S.; Saenko, K.; Darrell, T. Towards Adapting Deep Visuomotor Representations from Simulated to Real Environments. arXiv 2015, arXiv:1511.07111v3.

Fu, J.; Levine, S.; Abbeel, P. One-shot learning of manipulation skills with online dynamics adaptation and neural network priors. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 4019–4026.

Kumar, V.; Todorov, E.; Levine, S. Optimal control with learned local models: Application to dexterous manipulation. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), New York, NY, USA, 16–20 May 2016; pp. 378–383.

Gupta, A.; Eppner, C.; Levine, S.; Abbeel, P. Learning dexterous manipulation for a soft robotic hand from human demonstrations. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Deajeon, Korea, 9–14 October 2016; pp. 3786–3793.

Popov, I.; Heess, N.; Lillicrap, T.; Hafner, R.; Barth-Maron, G.; Vecerik, M.; Lampe, T.; Tassa, Y.; Erez, T.; Riedmiller, M. Data-Efficient Deep Reinforcement Learning for Dexterous manipulation. arXiv 2017, arXiv:1704.03073.

Prituja,A.;Banerjee,H.;Ren,H.ElectromagneticallyEnhancedSoftandFlexibleBendSensor:AQuantitative Analysis with Different Cores. IEEE Sens. J. 2018, 18, 3580–3589. [CrossRef] [CrossRef]

Sun, J.Y.; Zhao, X.; Illeperuma, W.R.; Chaudhuri, O.; Oh, K.H.; Mooney, D.J.; Vlassak, J.J.; Suo, Z. Highly stretchable and tough hydrogels. Nature 2012, 489, 133–136. [CrossRef] [CrossRef]

Tzeng,E.;Hoffman,J.;Zhang,N.;Saenko,K.;Darrell,T.DeepDomainConfusion:MaximizingforDomain Invariance. arXiv 2014, arXiv:1412.3474.

Goodfellow,I.;Pouget-Abadie,J.;Mirza,M.;Xu,B.;Warde-Farley,D.;Ozair,S.;Courville,A.;Bengio,Y. Generative adversarial nets. In Proceedings of the Advances in Neural Information Processing Systems (NIPS 2014), Montreal, QC, Canada, 8–13 December 2014; pp. 2672–2680.

Radford, A.; Metz, L.; Chintala, S. Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks. arXiv 2015, arXiv:1511.06434.

Arjovsky, M.; Chintala, S.; Bottou, L. Wasserstein gan. arXiv 2017, arXiv:1701.07875.

Hoffman,J.;Tzeng,E.;Park,T.;Zhu,J.Y.;Isola,P.;Saenko,K.;Efros,A.A.;Darrell,T.Cycada:Cycle-Consistent

AdversarialDomainAdaptation. arXiv2017,arXiv:1711.03213.

Doersch, C. Tutorial on Variational Autoencoders. arXiv 2016, arXiv:1606.05908v2.

Szabó,A.;Hu,Q.;Portenier,T.;Zwicker,M.;Favaro,P.ChallengesinDisentanglingIndependentFactorsof

Variation. arXiv 2017, arXiv:1711.02245v1.

Mathieu, M.; Zhao, J.J.; Sprechmann, P.; Ramesh, A.; LeCun, Y. Disentangling factors of variation in

deep representations using adversarial training. In Proceedings of the NIPS 2016, Barcelona, Spain,

5–10 December 2016.

Bousmalis,K.;Irpan,A.;Wohlhart,P.;Bai,Y.;Kelcey,M.;Kalakrishnan,M.;Downs,L.;Ibarz,J.;Pastor,P.;

Konolige, K.; et al. Using Simulation and Domain Adaptation to Improve Efficiency of Deep Robotic

Grasping. arXiv 2017, arXiv:1709.07857.

Tobin,J.;Fong,R.;Ray,A.;Schneider,J.;Zaremba,W.;Abbeel,P.Domainrandomizationfortransferring

deep neural networks from simulation to the real world. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 23–30.

Peng, X.B.; Andrychowicz, M.; Zaremba, W.; Abbeel, P. Sim-to-Real Transfer of Robotic Control with Dynamics Randomization. arXiv 2017, arXiv:1710.06537.

Rusu,A.A.;Vecerik,M.;Rothörl,T.;Heess,N.;Pascanu,R.;Hadsell,R.Sim-to-RealRobotLearningfrom Pixels with Progressive Nets. arXiv 2016, arXiv:1610.04286.

Zhang,J.;Tai,L.;Xiong,Y.;Liu,M.;Boedecker,J.;Burgard,W.VrGogglesforRobots:Real-to-SimDomain Adaptation for Visual Control. arXiv 2018, arXiv:1802.00265.

Ruder, M.; Dosovitskiy, A.; Brox, T. Artistic style transfer for videos and spherical images. Int. J. Comput. Vis.2018, 126, 1199–1219. [CrossRef] [CrossRef]

Koenig, N.P.; Howard, A. Design and use paradigms for Gazebo, an open-source multi-robot simulator.IROS. Citeseer 2004, 4, 2149–2154.

Robotics 2019, 8, 4 34 of 36

Maddern, W.; Pascoe, G.; Linegar, C.; Newman, P. 1 year, 1000 km: The Oxford RobotCar dataset. Int. J. Robot. Res. 2017, 36, 3–15. [CrossRef] [CrossRef]

Dosovitskiy,A.;Ros,G.;Codevilla,F.;Lopez,A.;Koltun,V.CARLA:AnOpenUrbanDrivingSimulator.arXiv 2017, arXiv:1711.03938.

Chen,L.C.;Papandreou,G.;Kokkinos,I.;Murphy,K.;Yuille,A.L.SemanticImageSegmentationwithDeep Convolutional Nets and Fully Connected Crfs. arXiv 2014, arXiv:1412.7062.

Yang, L.; Liang, X.; Xing, E. Unsupervised Real-to-Virtual Domain Unification for End-to-End Highway Driving. arXiv 2018, arXiv:1801.03458.

Uesugi,K.;Shimizu,K.;Akiyama,Y.;Hoshino,T.;Iwabuchi,K.;Morishima,K.Contractileperformanceand controllability of insect muscle-powered bioactuator with different stimulation strategies for soft robotics.Soft Robot. 2016, 3, 13–22. [CrossRef] [CrossRef]

Niiyama,R.;Sun,X.;Sung,C.;An,B.;Rus,D.;Kim,S.PouchMotors:PrintableSoftActuatorsIntegrated with Computational Design. Soft Robot. 2015, 2, 59–70. [CrossRef] [CrossRef]

Gul,J.Z.;Sajid,M.;Rehman,M.M.;Siddiqui,G.U.;Shah,I.;Kim,K.C.;Lee,J.W.;Choi,K.H.3Dprintingfor soft robotics—A review. Sci. Technol. Adv. Mater. 2018, 19, 243–262. [CrossRef] [PubMed] [CrossRef]

Umedachi, T.; Vikas, V.; Trimmer, B. Highly deformable 3-D printed soft robot generating inching and crawling locomotions with variable friction legs. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems, Tokyo, Japan, 3–7 November 2013; pp. 4590–4595.

Mutlu,R.;Tawk,C.;Alici,G.;Sariyildiz,E.A3Dprintedmonolithicsoftgripperwithadjustablestiffness. In Proceedings of the IECON 2017—43rd Annual Conference of the IEEE Industrial Electronics Society, Beijing, China, 29 October–1 November 2017; pp. 6235–6240.

Lu,N.;HyeongKim,D.FlexibleandStretchableElectronicsPavingtheWayforSoftRobotics.SoftRobot.2014, 1, 53–62 [CrossRef] [CrossRef]

Rohmer, E.; Singh, S.P.; Freese, M. V-REP: A versatile and scalable robot simulation framework. In Proceedings of the 2013 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Tokyo, Japan, 3–8 November 2013; pp. 1321–1326.

Shah,S.;Dey,D.;Lovett,C.;Kapoor,A.Airsim:High-fidelityvisualandphysicalsimulationforautonomous vehicles. In Field and Service Robotics; Springer: Berlin, Germany, 2018; pp. 621–635.

Pan,X.;You,Y.;Wang,Z.;Lu,C.VirtualtoRealReinforcementLearningforAutonomousDriving.arXiv2017, arXiv:1704.03952.

Savva,M.;Chang,A.X.;Dosovitskiy,A.;Funkhouser,T.;Koltun,V.MINOS:MultimodalIndoorSimulator for Navigation in Complex Environments. arXiv 2017, arXiv:1712.03931.

Wu,Y.;Wu,Y.;Gkioxari,G.;Tian,Y.BuildingGeneralizableAgentswithaRealisticandRich3DEnvironment.arXiv 2018, arXiv:1801.02209.

Coevoet, E.; Bieze, T.M.; Largilliere, F.; Zhang, Z.; Thieffry, M.; Sanz-Lopez, M.; Carrez, B.; Marchal, D.; Goury, O.; Dequidt, J.; et al. Software toolkit for modeling, simulation, and control of soft robots. Adv. Robot.2017, 31, 1208–1224. [CrossRef] [CrossRef]

Duriez, C.; Coevoet, E.; Largilliere, F.; Bieze, T.M.; Zhang, Z.; Sanz-Lopez, M.; Carrez, B.; Marchal, D.; Goury, O.; Dequidt, J. Framework for online simulation of soft robots with optimization-based inverse model. In Proceedings of the 2016 IEEE International Conference on Simulation, Modeling, and Programming for Autonomous Robots (SIMPAR), San Francisco, CA, USA, 13–16 December 2016; pp. 111–118.

Olaya,J.;Pintor,N.;Avilés,O.F.;Chaparro,J.Analysisof3RPSRoboticPlatformMotioninSimScapeand MATLAB GUI Environment. Int. J. Appl. Eng. Res. 2017, 12, 1460–1468.

Coevoet,E.;Escande,A.;Duriez,C.Optimization-basedinversemodelofsoftrobotswithcontacthandling.IEEE Robot. Autom. Lett. 2017, 2, 1413–1419. [CrossRef]

Yekutieli,Y.;Sagiv-Zohar,R.;Aharonov,R.;Engel,Y.;Hochner,B.;Flash,T.Dynamicmodeloftheoctopus arm. I. Biomechanics of the octopus reaching movement. J. Neurophysiol. 2005, 94, 1443–1458. [CrossRef] [PubMed] [CrossRef]

Zatopa,A.;Walker,S.;Menguc,Y.Fullysoft3D-printedelectroactivefluidicvalveforsofthydraulicrobots.Soft Robot. 2018, 5, 258–271. [CrossRef]

Ratliff, N.D.; Bagnell, J.A.; Srinivasa, S.S. Imitation learning for locomotion and manipulation. In Proceedings of the 2007 7th IEEE-RAS International Conference on Humanoid Robots, Pittsburgh, PA, USA, 29 November–1 December 2007; pp. 392–397.

Robotics 2019, 8, 4 35 of 36

Langsfeld,J.D.;Kaipa,K.N.;Gentili,R.J.;Reggia,J.A.;Gupta,S.K.Towards Imitation Learning of Dynamic Manipulation Tasks: A Framework to Learn from Failures. Available online: https://pdfs.semanticscholar. org/5e1a/d502aeb5a800f458390ad1a13478d0fbd39b.pdf (accessed on 18 January 2019).

Ross,S.;Gordon,G.;Bagnell,D.Areductionofimitationlearningandstructuredpredictiontono-regret online learning. In Proceedings of the Fourteenth International Conference on Artificial Intelligence and Statistics, Fort Lauderdale, FL, USA, 11–13 April 2011; pp. 627–635.

Bojarski, M.; Del Testa, D.; Dworakowski, D.; Firner, B.; Flepp, B.; Goyal, P.; Jackel, L.D.; Monfort, M.; Muller, U.; Zhang, J.; et al. End to end Learning for Self-Driving Cars. arXiv 2016, arXiv:1604.07316.

Tai, L.; Li, S.; Liu, M. A deep-network solution towards model-less obstacle avoidance. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 2759–2764.

Giusti, A.; Guzzi, J.; Ciresan, D.C.; He, F.L.; Rodríguez, J.P.; Fontana, F.; Faessler, M.; Forster, C.; Schmidhuber, J.; Di Caro, G.; et al. A Machine Learning Approach to Visual Perception of Forest Trails for Mobile Robots. IEEE Robot. Autom. Lett. 2016, 1, 661–667. [CrossRef] [CrossRef]

Codevilla,F.;Müller,M.;Dosovitskiy,A.;López,A.;Koltun,V.End-to-EndDrivingviaConditionalImitation Learning. arXiv 2017, arXiv:1710.02410.

Duan,Y.;Andrychowicz,M.;Stadie,B.C.;Ho,J.;Schneider,J.;Sutskever,I.;Abbeel,P.;Zaremba,W.One-Shot Imitation Learning. In Proceedings of the NIPS, Long Beach, CA, USA, 4–9 December 2017.

Finn, C.; Yu, T.; Zhang, T.; Abbeel, P.; Levine, S. One-Shot Visual Imitation Learning via Meta-Learning.arXiv 2017, arXiv:1709.04905.

Finn, C.; Abbeel, P.; Levine, S. Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks. arXiv2017, arXiv:1703.03400.

Eitel, A.; Hauff, N.; Burgard, W. Learning to Singulate Objects Using a Push Proposal Network. arXiv 2017, arXiv:1707.08101.

Ziebart, B.D.; Maas, A.L.; Bagnell, J.A.; Dey, A.K. Maximum Entropy Inverse Reinforcement Learning. In Proceedings of the AAAI, Chicago, IL, USA, 13–17 July 2008; Volume 8, pp. 1433–1438.

Okal, B.; Arras, K.O. Learning socially normative robot navigation behaviors with bayesian inverse reinforcement learning. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–20 May 2016; pp. 2889–2895.

Pfeiffer,M.;Schwesinger,U.;Sommer,H.;Galceran,E.;Siegwart,R.Predictingactionstoactpredictably: Cooperative partial motion planning with maximum entropy models. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Deajeon, Korea, 9–14 October 2016; pp. 2096–2101.

Kretzschmar,H.;Spies,M.;Sprunk,C.;Burgard,W.Sociallycompliantmobilerobotnavigationviainverse reinforcement learning. Int. J. Robot. Res. 2016, 35, 1289–1307. [CrossRef] [CrossRef]

Wulfmeier,M.;Ondruska,P.;Posner,I.MaximumEntropyDeepInverseReinforcementLearning.arXiv2015, arXiv:1507.04888.

Ho, J.; Ermon, S. Generative adversarial imitation learning. In Proceedings of the Advances in Neural Information Processing Systems, Barcelona, Spain, 5–10 December 2016; pp. 4565–4573.

Baram, N.; Anschel, O.; Mannor, S. Model-Based Adversarial Imitation Learning. arXiv 2016, arXiv:1612.02179.

Wang, Z.; Merel, J.S.; Reed, S.E.; de Freitas, N.; Wayne, G.; Heess, N. Robust imitation of diverse behaviors. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA,

USA, 4–9 December 2017; pp. 5320–5329.

Li,Y.;Song,J.;Ermon,S.InferringtheLatentStructureofHumanDecision-MakingfromRawVisualInputs.

arXiv 2017, arXiv:1604.07316.

Tai, L.; Zhang, J.; Liu, M.; Burgard, W. Socially-Compliant Navigation through Raw Depth Inputs with

Generative Adversarial Imitation Learning. arXiv 2017, arXiv:1710.02543.

Stadie, B.C.; Abbeel, P.; Sutskever, I. Third-Person Imitation Learning. arXiv 2017, arXiv:1703.01703.

Wehner,M.;Truby,R.L.;Fitzgerald,D.J.;Mosadegh,B.;Whitesides,G.M.;Lewis,J.A.;Wood,R.J.Anintegrated

design and fabrication strategy for entirely soft, autonomous robots. Nature 2016, 536. [CrossRef]

Katzschmann, R.K.; de Maille, A.; Dorhout, D.L.; Rus, D. Physical human interaction for an inflatable manipulator. In Proceedings of the 2011 IEEE/EMBC Annual International Conference of the Engineering in

Medicine and Biology Society, Boston, MA, USA, August 30–3 September 2011; pp. 7401–7404.

Robotics 2019, 8, 4 36 of 36

Rogóz, M.; Zeng, H.; Xuan, C.; Wiersma, D.S.; Wasylczyk, P. Light-driven soft robot mimics caterpillar locomotion in natural scale. Adv. Opt. Mater. 2016, 4.

Katzschmann,R.K.;Marchese,A.D.;Rus,D.HydraulicAutonomousSoftRoboticFishfor3DSwimming. In Proceedings of the ISER, Marrakech and Essaouira, Morocco, 15–18 June 2014.

Katzschmann,R.K.;deMaille,A.;Dorhout,D.L.;Rus,D.Cyclichydraulicactuationforsoftroboticdevices. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 3048–3055.

DelPreto,J.;Katzschmann,R.K.;MacCurdy,R.B.;Rus,D.ACompactAcousticCommunicationModulefor Remote Control Underwater. In Proceedings of the WUWNet, Washington, DC, USA, 22–24 October 2015.

Marchese, A.D.; Onal, C.D.; Rus, D. Towards a Self-contained Soft Robotic Fish: On-Board Pressure

Generation and Embedded Electro-permanent Magnet Valves. In Proceedings of the ISER, Quebec City, QC,

Canada, 17–21 June 2012.

Narang, Y.S.; Degirmenci, A.; Vlassak, J.J.; Howe, R.D. Transforming the Dynamic Response of Robotic

Structures and Systems Through Laminar Jamming. IEEE Robot. Autom. Lett. 2018, 3, 688–695. [CrossRef]

[CrossRef]

Narang,Y.S.;Vlassak,J.J.;Howe,R.D.MechanicallyVersatileSoftMachinesThroughLaminarJamming.

Adv. Funct. Mater. 2017, 28, 1707136. [CrossRef] [CrossRef]

Kim, T.; Yoon, S.J.; Park, Y.L. Soft Inflatable Sensing Modules for Safe and Interactive Robots. IEEE Robot.

Autom. Lett. 2018, 3, 3216–3223. [CrossRef] [CrossRef]

Qi, R.; Lam, T.L.; Xu, Y. Mechanical design and implementation of a soft inflatable robot arm for safe

human-robot interaction. In Proceedings of the 2014 IEEE International Conference on Robotics and

Automation (ICRA), Hong Kong, China, 31 May–7 June 2014; pp. 3490–3495.

Zeng, H.; Wani, O.M.; Wasylczyk, P.; Priimagi, A. Light-Driven, Caterpillar-Inspired Miniature Inching

Robot. Macromol. Rapid Commun. 2018, 39, 1700224. [CrossRef] [CrossRef]

Banerjee, H.; Ren, H. Optimizing double-network hydrogel for biomedical soft robots. Soft Robot. 2017,

4, 191–201. [CrossRef] [CrossRef]

Henderson,P.;Islam,R.;Bachman,P.;Pineau,J.;Precup,D.;Meger,D.DeepReinforcementLearningthat

Matters. arXiv 2017, arXiv:1709.06560.

Vecerík, M.; Hester, T.; Scholz, J.; Wang, F.; Pietquin, O.; Piot, B.; Heess, N.; Rothörl, T.; Lampe, T.;

Riedmiller, M.A. Leveraging Demonstrations for Deep Reinforcement Learning on Robotics Problems

with Sparse Rewards. arXiv 2017, arXiv:1707.08817v1.

Nair,A.;McGrew,B.;Andrychowicz,M.;Zaremba,W.;Abbeel,P.OvercomingExplorationinReinforcement

Learning with Demonstrations. arXiv 2017, arXiv:1709.10089.

Gao, Y.; Lin, J.; Yu, F.; Levine, S.; Darrell, T. Reinforcement Learning from Imperfect Demonstrations.

arXiv 2018, arXiv:1802.05313.

Zhu, Y.; Wang, Z.; Merel, J.; Rusu, A.; Erez, T.; Cabi, S.; Tunyasuvunakool, S.; Kramár, J.; Hadsell, R.;

de Freitas, N.; et al. Reinforcement and Imitation Learning for Diverse Visuomotor Skills. arXiv 2018,

arXiv:1802.09564.

Nichol, A.; Schulman, J. Reptile: A Scalable Metalearning Algorithm. arXiv 2018, arXiv:1803.02999.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

0 notes

Text

Construction

Website: https://www.archilovers.com/lew-schulman-ibuild/

Address: 1700 Pennsylvania Ave. NW, Washington, DC 20036

Phone: 800-601-5605

Lew Schulman is a co-founder of iBUILD, the home construction platform for whole-house developments, partial construction projects, and repairs. A Navy veteran, Mr. Schulman focuses on innovative social entrepreneurship, start-ups, and philanthropy, and has launched four scalable solutions to social issues around the globe. He is a seasoned CEO with global leadership experience who turned to affordable housing innovation after first finding success in finance and the fishing industry. At iBUILD, Mr. Schulman has pioneered housing production systems in the US and abroad, resulting in the creation of thousands of affordable homes.

0 notes

Video


Focus On, 14.18 from Princeton Community Television on Vimeo.

Dan Schulman, PayPal CEO

Lew Goldstein interviews PayPal CEO Dan Schulman. In this exclusive interview, Schulman, a Princeton High School graduate, discusses his vision for PayPal, how companies have a moral obligation to try to be a force for good, the need to support local businesses, and the importance of social activism throughout his life.

0 notes

Text

Can America Build a Luxury Powerhouse to Rival Europe’s LVMH?

The new 700,000-square-foot headquarters of Coach is a state-of-the-art campus in one of New York’s newest skyscrapers. Showrooms along a 15-story atrium look out over tourists walking the High Line, the elevated railroad track-turned-park, and terraces on the 23rd floor poke out from a dine-in cafe that offers sushi and sandwiches. There’s even a special chicken wing bar for staffers who don’t want the usual lunch fare.

A lot of work remains to be done, though. The building occupies the southeast corner of the city’s new $20 billion Hudson Yards complex, and cranes have loomed around the 52-story glass tower since the brand moved in two years ago. Even now, the buzz of jackhammers and welding machines greets Coach’s 1,200 or so employees each morning as they enter their pristine new office.

Inside, a similarly radical restructuring is underway. Sales at Coach are just starting to recover after a disastrous three-year stretch from 2012 to 2015, when the label shed $928 million, or more than 18 percent, of its annual revenue. During that time, shares plummeted more than 62 percent, from an all-time high of $77.28 to $28.93.

To restore the fading fashion house, the plan is to turn it into America’s answer to European luxury conglomerates such as Kering and LVMH, which run wide-ranging portfolios of brands. LVMH, the world’s largest luxury company at nearly $50 billion in annual revenue, owns everything from Louis Vuitton clothing and Veuve Clicquot Champagne to Guerlain perfumes, TAG Heuer watches, and Sephora cosmetics.

The man steering this strategy, perched in a corner office high above the Hudson River, is taking a page out of his former boss’s playbook. Victor Luis, a 52-year-old executive, ran two divisions at LVMH before joining Coach: fashion label Givenchy in Japan and Baccarat crystal glassware in the U.S. An immigrant from São Miguel, a little Portuguese island in the Atlantic, he has a master’s degree in international economics and, from the looks of it, a Ph.D. in swagger.

Since his promotion to the top job in January 2014, Luis has announced two acquisitions: a $574 million deal for women’s shoemaker Stuart Weitzman and, last July, $2.4 billion for Kate Spade, one of the brand’s nemeses. He announced layoffs, culled about a third of his domestic store fleet, and hired replacements for several high-level executives, including former brand chiefs Craig Leavitt and Wendy Kahn. He eliminated the Jack Spade menswear business. He has also severely cut down on promotional activity, such as flash sales and discounted merchandise, purposely hurting sales in the hope that it would wean customers off lower-priced fare.

Perhaps the most controversial announcement, at least for the millions of shoppers who buy Coach’s bags and wallets, occurred last fall, when Luis gave the 77-year-old fashion house a new corporate name: Tapestry Inc. The move signals that Luis is looking to reposition the company as an American LVMH, one that has evolved beyond “core fashion.”

This year’s performance has been much better, with the stock up about 18 percent to $52.03 through Tuesday’s close. Coach, Tapestry’s biggest business at more than $4 billion, is coming off a strong 12-month run, with same-store sales, a key metric for the retail industry, turning positive over the holiday season last year. “The biggest question mark for us—and for me—was how much time do these things take?” Luis says. “Anxiousness? Short-term concern? Absolutely.”

A Brief History of American Luxury

It wasn’t always like this. Coach was known as an originator of what’s called “affordable luxury.” The company began in 1941 as a leather goods workshop in New York that sold only men’s goods: bags, wallets, flask-holders. It didn’t sell women’s handbags until Lillian and Miles Cahn bought the factory 20 years later. Some of the label’s oldest pieces are still stored in its archive, deep in the labyrinth of its headquarters. They’re relics that designers now use to jog their creativity.

Many of those bags were designed by Bonnie Cashin, who was hired in 1962 and is considered a pioneer of women’s sportswear. In her 12 years there, she transformed Coach from leather shop to fashion house. Her shoulder bags with interchangeable straps, bucket bags and clutches became mainstays, and her signature brass turn lock, which was inspired by the toggles on the roof of her convertible, is still used on many of the brand’s styles today.

In 1985, the Cahns sold the company to the Sara Lee Corp., a now-defunct consumer goods conglomerate, and Coach expanded quickly. It hit $100 million in sales by 1989 and made longtime executive Lew Frankfort its president. Appointed CEO in 1995, he spent the next 19 years turning Coach into a multibillion-dollar global luxury powerhouse. Head designer Reed Krakoff became a fashion superstar, thanks to runway-worthy leather goods that could also be sold to the masses—at much lower prices than European peers could offer. When Sara Lee spun off its leather goods business in 2000, Coach had just surpassed the half-billion mark in annual revenue.

Krakoff’s most lasting contribution came in 2001, when the label released a line of bags covered in interlocking Cs, a design that coincided with the very beginning of fashion’s logo craze: Abercrombie & Fitch had its logo tees, Gap had its logo sweatshirts, and Coach had its logo bags. The print was applied to premium leather satchels, as well as to its cheap nylon tote bags. In a little over a decade, Coach would grow into one of the world’s largest handbag labels, peaking at nearly $5.1 billion in annual revenue.

Frankfort and Krakoff left Coach in 2014. The company said that the CEO’s departure was part of a long-term succession plan and that it didn’t require an interim chief for the transition. Frankfort took a role as an executive-in-residence at private-equity firm Sycamore Partners. Krakoff, too, left before Coach had found a replacement. (He is now the creative head of U.S. jeweler Tiffany & Co.)

Luis spent eight years under their leadership and watched the empire they built come crashing down, in a very literal sense. Coach’s old industrial building, at 516 West 34th St., has since been taken down. One executive kept a brick as a souvenir.

Six months after Luis became CEO, executives held an investor day to reveal their turnaround plans. It would get worse before it got better, they said. A 2014 company-wide memo asked employees not to panic, even though sales would be down more than 20 percent for the quarter. “That’s not a pretty number,” says Luis. “Even if you know it’s coming, it never feels good.”

In Search of “Elevation”

On the bottom floor of Tapestry’s new headquarters, seamstresses and leatherworkers sit at sewing machines, churning out sample clutches and hobo bags among spools of bonded leather and rubber fleece. Upstairs, a squad of designers sketch at high desks, surrounded by sheets of fabric. Pin-up boards line the merchandising floor, a vast menu of styles for a brand that sells thousands of different products.

On the 19th floor is the glossy C-suite. Senior management has experienced near-total turnover under Luis, and new faces now run the company’s global supply chain, finance, international business development, and technology. All three of Tapestry’s labels have new top executives, each recruited from outside the company. Kate Spade is run by fashion veteran Anna Bakst, who came over from Michael Kors in late March. In April, Stuart Weitzman announced that its new boss was Eraldo Poletto, the former head of Italian fashion house Salvatore Ferragamo.

Coach CEO Joshua Schulman, who joined from Neiman Marcus Group last June, is the company’s longest-tenured brand chief. The former president of posh department store Bergdorf Goodman speaks conceptually about Coach’s “brand DNA” (a label’s most distinctive attributes), the impact of “omnichannel commerce” (selling seamlessly both online and in stores), and where each new handbag line fits into his theoretical product “pyramid” (higher margin items with a smaller market at the top; lower ones with a bigger market at the bottom).

Coach has begun to diversify its offerings beyond handbags. It started selling ready-to-wear apparel, and it plans to expand into new product categories and grow its menswear selection, which accounts for about 20 percent of the business. Its merchandise now includes outerwear, jewelry, watches, scarves, and fragrances. Schulman is open to expanding into home décor and other segments, when the time comes.

“Elevate” is a word that Coach executives use on a near-constant basis, whether it’s elevated product, elevated price points, or an elevated brand. The average price of a Coach handbag was once under $300. Now, according to Schulman, the sweet spot for price is from $300 to $500. The Rogue, at $795, is Coach’s most expensive line of handbags. Made from glove-tanned pebble leather, it has detachable straps and suede lining and can also come in bold patterns and embellishments. In the most recent season, a version designed with die-cut snakeskin tea roses was priced at an elevated $1,500.

In February, the brand welcomed celebrities and influencers to a runway show for Coach 1941, an upscale offshoot of its main brand, designed by creative head Stuart Vevers. “He’s taken the brand in directions that it had never been,” says Schulman. The catwalk itself was more abstract art than clothing showcase, presented as an eerie forest full of video monitors gone haywire. As the show closed, lights dimmed and strobes pulsed as the models hurried through the set. You couldn’t see the clothes at all—not that it mattered. This was about artistic credibility.

“Maybe a shopper who buys a Fendi or a Dior might come in and buy Coach apparel or Coach footwear, because it does now have a luxury point of view,” says Erinn Murphy, an analyst at Piper Jaffray. “That customer would have never bought a logo-oriented Coach tote from seven or eight years ago.”

More Brands, More Problems?

Tapestry’s other brands remain in recovery from a variety of ailments. Stuart Weitzman’s business largely relies on two styles: an over-the-knee, super-tall boot called the “5050” and a line of minimalist “Nudist” sandals with a delicate ankle strap. If consumers aren’t wooed with compelling versions of those franchises for even one season, it can mean disaster. Earlier this year, the shoemaker ran into production delays with new styles, forcing the company to admit that the issue would persist through next winter. On top of that, Tapestry ousted Stuart Weitzman’s creative director, Giovanni Morelli, in May, citing issues with his “behavior.”

With $1.4 billion in annual revenue when it was acquired, Kate Spade had different problems, primarily that it had torpedoed its own brand with constant online flash sales. As a more youthful, less serious brand, it sells sneakers covered in rose gold glitter, jacquard dresses in multi-color daisies, and giant, heart-shaped hoop earrings. But the label’s whimsical items were often too strange for luxury shoppers unwilling to shell out $300 for bags that looked, for example, like a giant cat’s head. Weak traffic at its outlet stores forced the brand to offer deeper discounts. Even worse, several seasons of inventory missteps hindered stores, which failed to stock enough of the merchandise that people actually wanted.