Microsoft Azure MVP with a passion for web and the cloud. Works as an IT consultant at Active Solution. Speaks regularly at conferences and user groups. Loves to learn about new and useful technologies. +46 738 031398


Statistics for BankID logins with Active Login Monitor

In February 2019 we launched the first version of our open source project Active Login: a set of libraries intended to make it easier to use BankID as a login method in .NET-based web applications, in a secure and stable way. The response has been enormous, and thanks to the open source model we and our customers have been able to focus on more value-creating work than the routine work of login integration.

This morning we had the pleasure of seeing the download count tip over a quarter of a million, a real milestone for the project! Statistics in open source are a chapter of their own, but 250,000 is an indication of the project's impact on the Swedish market. In the picture below you can see the physical counter we have mounted in our office, which keeps track of the number of downloads in real time.

Something that has been requested both internally and externally is better insight into the number of logins, the types of logins, login errors and so on. Based on this need we have developed a dashboard that gives a good overview of the logins made through Active Login, and today we are happy to share it!

Active Login Monitor

Active Login Monitor is an Azure dashboard that gives you an overview of BankID logins if you use Active Login. Among other things, you can see:

Number of logins, per day and per week

Number of errors, per error code and per error type

Number of users, per device and per login type

Detailed event information

Active Login Monitor is open source and is available as an ARM template, so that it can easily be integrated into your existing release flow. The documentation also contains a large number of example KQL queries that you can integrate into existing dashboards.

On GitHub you will find documentation on how to get started, and if you need a helping hand we offer support.


100,000 downloads and the launch of Active Login Authentication 4.0.0

We are very proud and happy that Active Login, the open source library that simplifies BankID implementations, has passed 100,000 downloads! A big thank you to everyone who has contributed to its success. We at Active Solution are happy to help companies with modern, secure login solutions where BankID is an important piece of the puzzle.

Today we are happy to announce that the next version of Active Login Authentication, 4.0.0, is available for production use.

New features

In broad terms, we have focused on:

Better support for monitoring through Azure Monitor and Azure Application Insights

Better support for running Active Login in Kubernetes by fine-tuning IP addresses

Use App Link (https://app.bankid.com/) on the Android browsers that support it

Better identification of client devices

Verified support for .NET 5

More info

As usual, you can find more information about Active Login on GitHub. Details about this release are available in our Release Notes.

Feedback

If you want to contribute code or input to the project, write to us on GitHub.

Support, or implementing BankID in your website or app

Do you need support, or do you want to get started with BankID as a secure login on your website or in your app? We at Active Solution offer commercial arrangements for a quick start. Read more on our website.


Solving "The recordings URI contains invalid data." in Azure Speech - Batch Transcription

... or "How to remove a thumbnail from an mp3 using ffmpeg?"

Update 2020-12-10: Since writing this post, the team at Microsoft have rolled out an update of the transcription service that allows embedded media, so the workaround described in this blog post won’t be necessary any more. They have also introduced a new, more powerful API (v3) for Batch transcription; an introduction is available here.

While building the site RadioText.net (which I've blogged about here) I found that a few of the files I tried to transcribe returned an undocumented error: "The recordings URI contains invalid data." Below I describe the solution to the problem, or at least to my variant of it.

The problem

So, the problem appeared when using Azure Speech Services - Batch transcription. Speech Services is one of the AI services in Azure provided through Azure Cognitive Services. Batch Transcription allows for large scale, asynchronous transcription of audio files.

Initiating the transcription went fine. It is done using an HTTP POST like this (the details will of course vary depending on your preferences):

HTTP POST: https://westeurope.cris.ai/api/speechtotext/v2.0/Transcriptions/

{
  "Name": "RadioText - Episode 1482554",
  "Description": "RadioText",
  "RecordingsUrl": "https://STORAGEACCOUNT.blob.core.windows.net/media/Audio.mp3",
  "Locale": "sv-SE",
  // ...
}

The error is returned when you query the transcription status:

HTTP GET: https://westeurope.cris.ai/api/speechtotext/v2.0/Transcriptions/TRANSCRIPTION-GUID

{
  "recordingsUrl": "https://STORAGEACCOUNT.blob.core.windows.net/media/Audio.mp3",
  // ...
  "statusMessage": "The recordings URI contains invalid data.",
  "status": "Failed",
  "locale": "sv-SE",
  // ...
}

Video stream / JPG

After some investigation, I found that the issue seems to be undocumented but comes from the batch service requiring that the audio file being transcribed contains audio streams exclusively. The mp3 files I was trying to transcribe had an embedded video stream containing a jpg file - basically, the thumbnail used in media players. At the moment, batch transcription can't handle audio files containing such an image/video stream (confirmed with the team at Microsoft).

Solution

Until support for these media files is built into the service, it's easy for us to extract only the audio ourselves using ffmpeg. The following command will copy the audio stream(s) from Input.mp3 and output them as Output.mp3 - ready to use with the batch service. It's documented under Stream selection in the ffmpeg documentation.

ffmpeg -i Input.mp3 -map 0:a -codec:a copy Output.mp3

If we break it down:

ffmpeg: The utility

-i Input.mp3: Use Input.mp3 as input, could also be a URL (https://STORAGEACCOUNT.blob.core.windows.net/media/Audio.mp3).

input docs

-map 0:a: Use the audio (a) from the first input file (0)

map docs

-codec:a copy: Set the codec option for audio (a) to copy only. This makes it very efficient, as it does not need to re-encode anything.

Stream copy

Output.mp3: Output the new file that only contains the audio stream(s) into Output.mp3

There might be other options or tweaks that work for you, but I found this to be fast and work well to solve my problem.
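To decide whether a file needs this treatment at all, one option is to list its stream types with ffprobe before calling ffmpeg. The following is a minimal Python sketch under that assumption, not part of the original pipeline: the helper names are hypothetical, and the ffprobe arguments are one way to print one codec type per line.

```python
import subprocess

def parse_stream_types(ffprobe_output: str) -> list:
    """Turn ffprobe output with one codec type per line into a list."""
    return [line.split(",")[0].strip()
            for line in ffprobe_output.splitlines() if line.strip()]

def needs_audio_only_copy(stream_types: list) -> bool:
    """True if the file carries anything besides audio (e.g. an embedded thumbnail)."""
    return any(stream_type != "audio" for stream_type in stream_types)

def build_strip_command(input_path: str, output_path: str) -> list:
    """The ffmpeg call described above, as an argument list (no shell quoting needed)."""
    return ["ffmpeg", "-i", input_path, "-map", "0:a", "-codec:a", "copy", output_path]

def strip_if_needed(input_path: str, output_path: str) -> bool:
    """Probe the file and run ffmpeg only when a non-audio stream is present."""
    probe = subprocess.run(
        ["ffprobe", "-v", "error", "-show_entries", "stream=codec_type",
         "-of", "csv=p=0", input_path],
        capture_output=True, text=True, check=True,
    )
    if not needs_audio_only_copy(parse_stream_types(probe.stdout)):
        return False
    subprocess.run(build_strip_command(input_path, output_path), check=True)
    return True
```

Calling strip_if_needed("Input.mp3", "Output.mp3") requires ffmpeg and ffprobe on the PATH; the three helper functions above it are pure and can be tried without them.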

Calling ffmpeg from CSharp

In my case, I wanted to run the above ffmpeg command as part of my transcription pipeline and therefore call it from C#. The below code is a minimal approach to do so:

using System.Diagnostics;

const string ffmpegLocation = "PATH_TO_FFMPEG.EXE";
var inputFile = "Input.mp3";
var outputFile = "Output.mp3";

var processStartInfo = new ProcessStartInfo
{
    FileName = ffmpegLocation,
    // FileName already points at ffmpeg, so the arguments must not repeat the program name.
    Arguments = $"-i \"{inputFile}\" -map 0:a -codec:a copy \"{outputFile}\"",
    UseShellExecute = false,
    RedirectStandardOutput = true,
    CreateNoWindow = true
};

// Start ffmpeg and block until it has written the output file.
using var process = Process.Start(processStartInfo);
process.WaitForExit();

Contribute

I hope this helps, and if you have any tweaks you want to share, please let me know. I'm at @PeterOrneholm.


Have we reached peak Corona in Swedish news?

I recently blogged about the site RadioText - a site that automatically transcribes Swedish news radio shows using AI services in Microsoft Azure. I realized I had plenty of data available for some interesting analytics, so I decided to scan all news from Swedish Radio Ekot since 2019-12-01 and look for words related to Corona/Covid-19. This information was then visualized in graphs using Power BI to show the correlation with the statistics from Swedish authorities.

Below you can see the graphs (Swedish). The graphs are based on automatic computer transcription and are not 100% accurate, but they should show the overall trends. It has analyzed more than 340 of broadcasts with more than 2,300 hours of audio. The numbers are the sum of each word per week. It’s worth noting that Ekot started to broadcast more episodes when the Corona crisis began.
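The weekly sums can be sketched roughly as follows. This is a minimal Python illustration, not the code behind the graphs: the (date, text) input shape, the keyword list and the sample sentences are all made up for the example.

```python
from collections import Counter, defaultdict
from datetime import date
import re

def weekly_word_counts(episodes, keywords):
    """Sum keyword occurrences per ISO (year, week) over (date, transcription) pairs."""
    wanted = {keyword.lower() for keyword in keywords}
    totals = defaultdict(Counter)
    for broadcast_date, text in episodes:
        iso_year, iso_week, _ = broadcast_date.isocalendar()
        # Lowercase and split on word characters so "Corona" and "corona" count together.
        for word in re.findall(r"\w+", text.lower()):
            if word in wanted:
                totals[(iso_year, iso_week)][word] += 1
    return totals

# Made-up sample transcriptions, only to show the shape of the data.
episodes = [
    (date(2020, 2, 24), "Corona sprids i Italien. Italien stänger skolor."),
    (date(2020, 2, 26), "Nya fall av corona."),
    (date(2020, 3, 23), "Kris i ekonomin efter corona."),
]
counts = weekly_word_counts(episodes, ["corona", "italien", "kris"])
```

Here counts[(2020, 9)] holds the sums for week 9 and counts[(2020, 13)] those for week 13, which is the kind of table a charting tool can plot directly.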

A quick reflection on the data is that initially “China” and “Virus” were mentioned quite often, but a few weeks later Corona became the most common word. During week 9 Italy peaked, as that was the week people from Stockholm went skiing there. The word “Crisis” started to become common around week 13/14.

Have we reached peak Corona?

But, to my question in the title - Have we reached peak Corona? According to my graphs we have, at least regarding the word Corona / Covid-19. It is starting to decline - or it’s understood by everybody so that the word isn’t used as much even if the news is regarding Corona.

Overview

Niche words

Week 14


Introducing RadioText.net - Transcribing news episodes from Sveriges Radio

RadioText.net is a site that transcribes news episodes from Swedish Radio and makes them accessible. It uses multiple AI-based services in Azure from Azure Cognitive Services like Speech-to-Text, Text Analytics, Translation, and Text-to-Speech.

By using all of the services, you can listen to "Ekot" from Swedish Radio in English :) Disclaimer: The site is primarily a technical demo, and should be treated as such.

Background

Just to give you (especially non-Swedish people) some background: Sveriges Radio (Swedish Radio) is the public service radio in Sweden, like the BBC is in the UK. Swedish Radio does produce some shows in languages like English, Finnish and Arabic, but the majority is (for natural reasons) produced in Swedish.

The main news show is called Ekot ("The Echo"). It is broadcast at least once every hour, and the broadcasts range from 1 minute to 50 minutes. The spoken language for Ekot is Swedish.

For some time, I've been wanting to build a public demo with the AI services in Azure Cognitive Services, but as always with AI, you need some datasets to work with. It just so happens that Sveriges Radio has an open API with access to all of their publicly available data, including the audio archive, enabling me to work with the speech APIs.

Architecture

The site runs in Azure and is heavily dependent on Cognitive Services. It's split into two parts, Collect & Analyze and Present & Read.

Collect & Analyze

The collect & analyze part is a series of actions that will collect, transcribe, analyze and store the information about the episodes.

It's built using .NET Core 3.1 and can be hosted as an Azure Function, a container or something else that can run continuously or on a set interval.

The application periodically looks for a new episode of Ekot using the Sveriges Radio open API. There is a NuGet-package available that wraps the API for .NET (disclaimer, I'm the author of that package...). Once a new episode arrives, it caches the relevant data in Cosmos DB and the media in Blob Storage.

JSON Response: https://api.sr.se/api/v2/episodes/get?id=1464731&format=json

The reason to cache the media is that the batch version of Speech-to-text requires the media to be in Blob Storage.

Once all data is available locally, it starts the asynchronous transcription using Cognitive Services Speech-to-text API. It specifically uses the batch transcription which supports transcribing longer audio files. Note that the default speech recognition only supports 15 seconds because it is (as I've understood it) more targeted towards understanding "commands".

The raw result of the transcription is stored in Blob-storage, and the most relevant information is stored in Cosmos DB.

The transcription contains the combined result (a long string of all the text) and the individual words with timestamps. A sample of such a file can be found below:

Original page at Sveriges Radio: Nyheter från Ekot 2020-03-20 06:25

Original audio: Audio.mp3

Transcription (Swedish): Transcription.json

This site only uses the combined result but could improve the user experience by utilizing the data of individual words.
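As one illustration of what the per-word data could be used for, the sketch below groups timed words into subtitle cues in SRT format. It is a minimal Python sketch, not code from the site: the word dictionaries with "word", "offset" and "duration" in seconds are an assumed shape, so a real transcription file would need to be mapped into it first.

```python
def format_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return f"{hours:02}:{minutes:02}:{secs:02},{millis:03}"

def words_to_srt(words, max_words_per_cue: int = 7) -> str:
    """Group timed words into fixed-size cues and render them as SRT text."""
    cues = []
    for start in range(0, len(words), max_words_per_cue):
        group = words[start:start + max_words_per_cue]
        begin = group[0]["offset"]
        end = group[-1]["offset"] + group[-1]["duration"]
        text = " ".join(item["word"] for item in group)
        cues.append(
            f"{len(cues) + 1}\n{format_timestamp(begin)} --> {format_timestamp(end)}\n{text}\n"
        )
    return "\n".join(cues)

# Hypothetical word timings (seconds), only to show the shape of the input.
words = [
    {"word": "Det", "offset": 0.0, "duration": 0.2},
    {"word": "här", "offset": 0.25, "duration": 0.2},
    {"word": "är", "offset": 0.5, "duration": 0.1},
    {"word": "Ekot", "offset": 0.75, "duration": 0.5},
]
srt = words_to_srt(words, max_words_per_cue=2)
```

Fixed-size groups keep the sketch short; splitting cues at pauses or punctuation would probably read better in practice.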

All of the texts (title, description, transcription) are translated into English and Swedish (if those were not the original language of the audio) using Cognitive Services Translator Text API.

A sample can be found here: https://radiotext.net/episode/1464731

All texts mentioned above are analyzed using Cognitive Services Text Analytics API, which provides sentiment analysis, key phrases and (most importantly) named entities. Named entities are a great way to filter and search the episodes. They are better than keywords, because each one is not only a word but also carries the category it belongs to. The result is stored in Cosmos DB.
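To illustrate why the category makes entities more useful than plain keywords, here is a minimal Python sketch of such a filter. The Episode class, the (name, category) pairs and the sample data are assumptions for the example, not the shape stored by the site.

```python
from dataclasses import dataclass, field

@dataclass
class Episode:
    title: str
    # (name, category) pairs, e.g. ("Stockholm", "Location"); this shape is an assumption.
    entities: list = field(default_factory=list)

def filter_by_entity(episodes, name, category=None):
    """Return episodes mentioning the entity, optionally restricted to one category."""
    name = name.lower()
    return [
        episode for episode in episodes
        if any(
            entity_name.lower() == name
            and (category is None or entity_category == category)
            for entity_name, entity_category in episode.entities
        )
    ]

# Made-up episodes: the same word appears with two different categories.
episodes = [
    Episode("Morning news", [("Stockholm", "Location"), ("Volvo", "Organization")]),
    Episode("Evening news", [("Volvo", "Person")]),
]
```

A keyword search for "Volvo" matches both episodes, while filter_by_entity(episodes, "Volvo", "Organization") only returns the one where it was recognized as an organization.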

The translated transcriptions are then converted back into audio using Cognitive Services Text-to-Speech. It produces one for English and one for Swedish. For English, there is support for the Neural Voice and I'm impressed by the quality, it's almost indistinguishable from a human. The voice for Swedish is fine, but you will hear that it's computer-generated. The generated audio is stored in Blob Storage.

Original audio: Audio.mp3

English audio (JessaNeural, en-US): Speaker_en-US-JessaNeural.mp3

Swedish audio (HedvigRUS, sv-SE): Speaker_sv-SE-HedvigRUS.mp3

Last but not least, a summary of the most relevant data from the previous steps is denormalized and stored in Cosmos DB (using Table API).

Present & Read

The site that presents the data is available at https://radiotext.net/. It's built using ASP.NET Core 3.1 and is deployed as a Linux Docker container to Dockerhub and then released to an Azure App Service.

Currently, it lists all episodes and allows for in-memory filtering and search. From the listing, you can see the first part of the transcription in English and listen to the English audio.

By entering the details page, you can explore the data in multiple languages as well as the original information from the API.

Immersive reader

Immersive Reader is a tool/service that's been available for some time as part of Office, for example in OneNote. It's a great way to make reading and understanding texts easier. My wife works as a speech and language pathologist and she says that this tool is a great way to enable people to understand texts. I've incorporated the service into Radiotext to allow the user to read the news using this tool.

Primarily, it can read the text for you, and highlight the words that are currently being read:

It can also explain certain words, using pictures:

And if you are learning about grammar, it can show you grammar details like which words are nouns, verbs, and adjectives:

I hadn't used this service before, but it shows great potential for making texts more accessible. Combined with Speech-to-text, it can also make audio more accessible.

Cost

I've tried to get a grip on what it would cost to run this service, and I estimate that running all services for one episode of Ekot (5 minutes) costs roughly €0.20. That includes transcribing, translating, analyzing and generating audio for multiple languages.

Speech pricing

Translation pricing

Text analytics pricing

Also, there will be a cost for running the web, analyzer, and storage.

Ideas for improvement

The current application was done to showcase and explore a few services, but it's not in any way feature complete. Here are a few ideas on the top of my mind.

Live audio transcription: Speech to text supports live audio transcription, so we could transcribe the live radio feed. This could be combined with the subtitles idea below.

Improve accuracy with Custom Speech: Using Custom Speech we could improve the accuracy of the transcriptions by training it on some common domain-specific words. For example, the jingle is often treated as words, while it should not be.

Enable subtitles: Using the timestamp data from the transcription, subtitles could be generated. That would enable a scenario where we can combine the original audio with subtitles.

Multiple voices: A natural part of a news episode are interviews. And naturally, in interviews, there are multiple people involved. The audio I'm generating now is reading all texts with the same voice, so in scenarios when there are conversations it sounds kind of strange. Using conversation transcription it could find out who says what and generate the audio with multiple voices.

Improve long audio: The current solution will fail when generating audio for long texts. The Long Audio API allows for that.

Handle long texts: Both translation and text analytics have limitations on the length of the texts. At the moment, the texts are cut if they are too long, but they could be split into multiple chunks and then analyzed and concatenated again.

Search using Azure Search: At the moment the "search" and "filtering" functionality is done in memory, just for demo purposes. Azure Search allows for a much better search experience and could be added for that. Unfortunately, it does not allow for automatic indexing of Cosmos DB Table API at the moment.

Custom Neural Voice: I've always wanted to be a newsreader, and using Custom Neural Voice I might be able to do so ;) Custom Neural Voice can be trained on your voice and used to generate the audio. But, even if we could do this, it doesn't mean we should. Custom Neural Voice is one (maybe the only?) service you need to apply for to be able to use. In the world of fake news, I would vote for not implementing this.
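The "Handle long texts" idea above could be approached by splitting at sentence boundaries before calling the services. The following is a minimal Python sketch under that assumption; max_chars is a parameter to be set from the limit of whichever service is called, and no actual service limit is assumed here.

```python
import re

def split_into_chunks(text: str, max_chars: int) -> list:
    """Split text at sentence boundaries into chunks of at most max_chars characters.

    A single sentence longer than max_chars is cut hard as a last resort.
    """
    if max_chars <= 0:
        raise ValueError("max_chars must be positive")
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        # Oversized sentence: flush what we have, then cut it into fixed-size pieces.
        while len(sentence) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks
```

Each chunk can then be translated or analyzed on its own. Translated chunks can simply be joined again, while analysis results such as entities would need to be merged and de-duplicated.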

Disclaimer

This is an unofficial site, not built or supported by Sveriges Radio. It's based on the open data in their public API. It's built as a demo showcasing some technical services.

Most of the information is automatically extracted and/or translated by the AI in Azure Cognitive Services. It's based on the information provided by Swedish Radio API. It is not verified by any human and there will most likely be inaccuracies compared to the source.

All data is retrieved from the Swedish Radio Open API (Sveriges Radios Öppna API) and is Copyright © Sveriges Radio.

Try it out and contribute

The source code is available on GitHub and the Docker image is available on Docker Hub.

Hope you like it. Feel free to contribute :)


Introducing Cognitive Workbench

Working more and more with Cognitive Services in Azure, I saw the need for a tool to explore and visualize the API.

Cognitive Workbench is a site to learn about and demo the capabilities of Azure Cognitive Services. The site aims to complement already existing demos, samples and sites by making it easier to try out the capabilities, visualize results and explain how the services work.

The site is targeted towards people familiar with Microsoft Azure and Cognitive Services and will require you to create resources in Azure and bring your own keys.

You can use the site at: https://cognitive-workbench.azurewebsites.net/

The source code is available on GitHub and the Docker image is available on Docker Hub.

As of today, the site covers these services and APIs:

Vision

Computer Vision

AnalyzeImage (ImageType, Faces, Adult, Categories, Color, Tags, Description, Objects, Brands)

AreaOfInterest

RecognizeText

RecognizePrintedText

BatchReadFile

Face

Detect (Age, Gender, HeadPose, Smile, FacialHair, Glasses, Emotion, Hair, Makeup, Occlusion, Accessories, Blur, Exposure, Noise)

Samples

Computer Vision

Input

Analyze

OCR

Face

Contributions

Feel free to contribute. A few ideas of improvements are available as GitHub issues.

Thanks to @NicoRobPro for some great additions to this site.


CogBox.net - A jukebox enhanced with cognitive skills

Some time ago, we at Active Solution arranged an internal hackathon with a focus on music and modern web technologies. I decided I wanted to see how I could combine AI and music from Spotify - and then Cognitive Jukebox was born!

Introducing Cognitive Jukebox

CogBox.net is a jukebox enhanced with cognitive skills. Take a selfie and the application will play music from the year you were born.

The application is built using modern web technologies, AI services in Azure and music from Spotify.

Frontend

Using JavaScript, the stream from the webcam is captured, and once the user takes a photo it's sent to the ASP.NET Core backend.

navigator.mediaDevices
    .getUserMedia({ audio: false, video: { facingMode: { exact: "user" } } })
    .then(function (mediaStream) {
        videoElement.srcObject = mediaStream;
    });

Age detection

The backend forwards the image to Cognitive Services in Azure. Using the Face API we can extract information such as estimated age, emotions, gender etc. In this case we focus on the estimated Age.

var analyzeImageResult = await _computerVisionClient.AnalyzeImageInStreamAsync(
    file.OpenReadStream(),
    new List<VisualFeatureTypes>
    {
        VisualFeatureTypes.Description,
        VisualFeatureTypes.Faces
    });
var age = analyzeImageResult.Faces.First().Age;

Spotify Music

Given that age, we can figure out the year of birth of the user. Spotify allows us to filter music by year, so the Spotify Web API can then be used to get some top tracks from that year. The OSS lib SpotifyAPI-NET made it very easy to consume from .NET.

var searchItems = api.SearchItems("year:" + year, SearchType.Track);
var mp3Url = searchItems.Tracks.Items.First().PreviewUrl;
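The step from estimated age to search query is simple arithmetic, sketched here in Python. It is only an illustration with hypothetical function names; the "year:" filter is the one used in the snippet above, and rounding the age to a whole year is an assumption.

```python
from datetime import date
from typing import Optional

def estimated_birth_year(age: float, today: Optional[date] = None) -> int:
    """Subtract the (rounded) estimated age from the current year."""
    today = today or date.today()
    return today.year - round(age)

def spotify_year_query(year: int) -> str:
    """Build the search string that filters tracks by release year."""
    return f"year:{year}"
```

For an estimated age of 30 on a photo taken in 2019, this gives the query "year:1989". Since the age is an estimate and the birthday may not have passed yet, the result can easily be a year or two off.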

Cost

There are a few basic costs involved, but the part that could become expensive is the analysis of images. I've estimated it to cost roughly $1 USD per 1000 images analyzed.

The Spotify Web API is free to use, but comes with rate limits. If I hit those, the site would require the user to log in and use their own account.

Interested in even more details?

If you or your company want to know more about services like this, I deliver a session/workshop called "Democratizing AI with Azure Cognitive Services" which shows the potential of Cognitive Services through fun and creative examples. Please drop me an email if that sounds interesting.

Summary

This site brings zero to no value, but it was a fun way of exploring a few technologies and maybe it will give a smile to those who use it :)

I've published all the source code to GitHub and the Docker image that powers the site is available on Docker Hub.

If you like it, please share it with friends and family. I've noticed that people like it the most when it subtracts a few years from your age.... :)

Any feedback or cheers are always welcome. I'm @PeterOrneholm on Twitter.


Active Login 3.0 - Implement Mobile BankID in .NET

Just over 9 months ago, in February 2019, we launched Active Login 1.0: a collection of libraries that drastically reduces the time it takes to implement BankID / Mobile BankID in .NET/ASP.NET.

9 months pass quickly, and in that time we have reached almost 30,000 downloads on NuGet, received over 100 stars on GitHub and seen the project grow into what today appears to be the largest open source project around BankID in all of Sweden!

In its simplest form, it now takes only a few lines of code to get started with BankID in ASP.NET, something that previously could take weeks to get right:

services
    .AddAuthentication()
    .AddBankId(builder =>
    {
        builder
            .AddOtherDevice()
            .AddSameDevice();
    });

The project itself is completely free to use and available under the MIT license. Thanks to our solid knowledge in the area, we have seen great demand for helping companies on a consulting basis with training, implementation and verification. We know that Active Login is used today in industries such as finance, security, real estate, municipalities and education.

More and more people use Active Login to enable BankID in Identity Server, Azure AD B2C or in combination with other solutions, and it is fun to assist in these areas. Get in touch if you or your company want help getting started with secure bank login.

Active Login 3.0 (Alpha)

We are happy that an alpha version of Active Login 3.0.0 is now available for download on NuGet!

Of course, all the source code is on GitHub.

What's new in 3.0

In version 3 we have made thousands of smaller and larger changes to code, texts, the build pipeline and more. In broad terms, the following is new:

Support for authenticating the user with BankID's QR code. This is now the default configuration.

Support for cancelling a login in progress. The user can then choose an alternative login method, and the session with BankID is cancelled.

Updated texts for buttons, status and more according to BankID's new guidelines.

Updated documentation that should be clearer and easier to follow.

Updated samples for ASP.NET Core 3.

We have also made some technical updates:

Our API wrappers (*.Api) now require .NET Standard 2.0. We no longer multi-target .NET Framework. Note that .NET Framework >= 4.6.1 supports .NET Standard 2.0, so you can still use .NET Framework if you run a reasonably modern version.

Our UI packages (*.AspNetCore) now require ASP.NET Core 3.1. These packages therefore no longer have strong naming, as this should only be relevant for .NET Framework.

The builds in Azure DevOps are now defined in code using Azure Pipelines YAML.

You can find a more detailed walkthrough on GitHub.

New: QR code

The biggest visible new feature in 3.0 is the support for letting the user initiate the login using BankID's QR code. With this, the need to enter a personal identity number disappears. Support for personal identity numbers remains, but should only be used if there are special reasons.

QR code support is enabled by default, but you need to choose a QR code implementation. We have built a package that uses the third-party library QRCoder, and you enable it by calling .UseQrCoderQrCodeGenerator(), for example like this:

services
    .AddAuthentication()
    .AddBankId(builder =>
    {
        builder
            .UseSimulatedEnvironment()
            .AddSameDevice()
            .AddOtherDevice()
            .UseQrCoderQrCodeGenerator();
    });

New: Cancel

Users can now cancel a login in progress. When this happens, BankID is contacted and the session is cancelled. The user is then sent back to the start page.

If Active Login is used in, for example, Identity Server, cancelReturnUrl must be overridden to take into account the returnUrl specified by the client.

You do this as part of AuthenticationProperties, for example like this:

public IActionResult ExternalLogin(string provider, string returnUrl)
{
    var props = new AuthenticationProperties
    {
        RedirectUri = Url.Action(nameof(ExternalLoginCallback)),
        Items =
        {
            { "returnUrl", returnUrl },
            { "scheme", provider },
            { "cancelReturnUrl", Url.Action("Login", "Account", new { returnUrl }) }
        }
    };

    return Challenge(props, provider);
}

Example: Azure AD B2C

We have seen great demand for using Active Login to enable BankID in Azure AD B2C. Thanks to Identity Server + Active Login, Azure AD B2C can talk to BankID.

Get in touch if you want assistance in setting this up.

Thanks

A big thank you to the people who have contributed code, documentation, tests and discussion. Thanks to you, Active Login becomes a better product.

The following people have been involved in the latest release in one way or another:

Peter Örneholm (@PeterOrneholm)

Daniel Kvist (@span)

Nikolay Krondev (@nikolaykrondev)

Viktor Andersson (@viktorvan)

Peter Hedberg (@Palpie)

Håkan Sjö Ballina (@HSBallina)

Fredrik Lundin (@fredrik-lundin)

Fredrik Karlsson (@FredrikK)

Conny Sjögren (@Zonnex)

A special thanks to Daniel Kvist, who made a heroic effort to get QR code support and cancel support in place!

Test, discuss and give feedback

We are very grateful for all the feedback we can get. If you find any bugs, report them on GitHub as a GitHub Issue.

We are now active on Slack and Twitter. Feel free to follow us there and join the discussion!

The plan ahead

We plan to launch the stable version (ready for production) of 3.0 later this year. If you have ideas or needs for the future, open an issue on GitHub so we know what to plan for the next version.


BirdOrNot.net - Is it a bird or not?

As a technical person and developer, I'm a big fan of XKCD which makes entertaining comics about our industry. A couple of years ago I stumbled upon this one:

Turns out that it was published in September 2014, almost exactly 5 years ago, which is the time the team would need to solve the problem according to the comic. So, I thought, this should be solved now, right? And it turns out it is!

Today I'm proud to announce: BirdOrNot.net

BirdOrNot.net uses the Artificial Intelligence (AI) powered object detection capability in Azure Cognitive Services to find out if the image contains a bird and, in some cases, it even finds out what species it is. I didn't have to hire a team of researchers for five years. Instead, Microsoft did and made the result available as a service in Azure.

Using that specific service, I was able to go from idea to working concept in under an hour, with a few more hours spent on CSS :)

Below you can see a few samples. Feel free to try it out yourself on the site: https://birdornot.net/

It builds on the default object detection model provided by Azure Cognitive Services, which can detect anything from buildings and vehicles to animals. It does a very good job of detecting birds, but as it's not specialized on birds, there will be cases where it makes mistakes. Still, it shows how the power of AI and ML can be used by everyone who knows how to call a web service.

If you boil it down, these are the few lines that power the site:

var imageAnalysis = await computerVisionClient.AnalyzeImageAsync(
    url,
    new List<VisualFeatureTypes> { VisualFeatureTypes.Objects });
var isBird = imageAnalysis.Objects.Any(x => x.ObjectProperty.Equals("bird"));
Console.WriteLine(isBird ? "It is a bird." : "It is not a bird.");

You can see a simple sample with the code above at GitHub.

Behind the scenes

The source code is fully available on GitHub, so feel free to dig around to learn how it works. If you want to run it yourself, the image that runs on the site is available on Docker Hub (peterorneholm/orneholmbirdornotweb).

docker run --env AzureComputerVisionSubscriptionKey=XYZ --env AzureComputerVisionEndpoint=ABC peterorneholm/orneholmbirdornotweb

For those of you who are interested, here is a brief explanation of the setup.

Architecture

Object recognition

The service is using Azure Cognitive Services to analyse the image. It fetches the following info:

VisualFeatureTypes.Objects (Used for detecting the birds and getting their bounding rectangles. Checks the object hierarchy to detect species.)

VisualFeatureTypes.Description (Used to describe the image with text and tags.)

VisualFeatureTypes.Adult (Used to decline images classed as Adult, Gory or Racy.)
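The hierarchy check mentioned for VisualFeatureTypes.Objects can be sketched as follows. This is a minimal Python illustration rather than the site's code, and it assumes each detected object is a dictionary with an "object" label and an optional nested "parent", which is how I understand the JSON returned by the service to be shaped.

```python
def hierarchy_names(detected_object: dict) -> list:
    """Names from the most specific label up through its parents."""
    names = []
    node = detected_object
    while node:
        names.append(node["object"])
        node = node.get("parent")
    return names

def describe_bird(detected_object: dict):
    """Return (is_bird, species); species is None when only 'bird' itself was detected."""
    names = [name.lower() for name in hierarchy_names(detected_object)]
    if "bird" not in names:
        return False, None
    species = detected_object["object"] if names[0] != "bird" else None
    return True, species

# Hypothetical detection result, only to show the assumed shape.
eagle = {"object": "eagle", "parent": {"object": "bird", "parent": {"object": "animal"}}}
```

With this shape, a more specific label such as "eagle" still counts as a bird because "bird" appears among its parents, and the most specific label doubles as the species.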

Code

The website is written in ASP.NET Core 3 and packaged as a container running Linux.

Hosting

The container is published to dockerhub and then automatically deployed to Azure App Service for easy management and scale out if needed.

Cache

The costly part of the application (in both money and time) is the analysis of an image, so the result for every unique URL is cached. The application uses the IDistributedCache interface and is configured to use Azure Cache for Redis (hosted) as the implementation.
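The caching idea is the classic cache-aside pattern, sketched here in Python. It is only an illustration: a plain dictionary stands in for the distributed cache, and the function names and the choice of hashing the URL into a key are assumptions for the example.

```python
import hashlib

def cache_key(image_url: str) -> str:
    """Derive a fixed-length key, so long URLs don't become long cache keys."""
    return "analysis:" + hashlib.sha256(image_url.encode("utf-8")).hexdigest()

def analyze_with_cache(image_url, analyze, cache):
    """Return the cached analysis for this URL, calling analyze() only on a miss."""
    key = cache_key(image_url)
    if key in cache:
        return cache[key]
    result = analyze(image_url)
    cache[key] = result
    return result
```

With a shared cache behind it, the expensive analysis runs once per unique URL no matter how many visitors request the same image.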

In case the site goes viral, I'm distributing it through Azure Front Door. By doing so I have an HTTP cache at the edge, so that the samples and landing page will have no impact on the backend and be served immediately.

Analytics

The site is monitored through Azure Application Insights / Azure Monitor. I store anonymous telemetry on how the site is used, and by collecting feedback on whether or not the audience agrees with the AI engine, it could be used to improve the service over time.

I've activated a custom alert that will send me an email in case the traffic to the site would rapidly increase.

Cost

There are a few basic costs involved, but the part that could become expensive is the analysis of images. I've estimated it to cost roughly 5 USD per 1000 images analyzed. By caching the results I prevent the samples from costing me any money, and I will be able to handle the cost for quite a lot of images.
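As a back-of-the-envelope illustration of that estimate, here is a small sketch. AnalysisCost is a hypothetical helper, and the rate is only the rough figure mentioned above, not an official price.

```csharp
public static class AnalysisCost
{
    // Assumed rate from the estimate above: roughly 5 USD per 1000 analyzed images.
    private const decimal UsdPerThousandImages = 5m;

    // Only cache misses (unique URLs) trigger a paid analysis.
    public static decimal EstimateUsd(int uniqueImages)
    {
        return uniqueImages / 1000m * UsdPerThousandImages;
    }
}
```

At this rate, 20 000 unique images would cost about 100 USD, no matter how many times each cached result is viewed.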

Next steps

Here are a few things I've thought about implementing:

Custom Vision: I would like to add some custom models to detect "bird-like" things, like Big Yellow Bird, Jack Sparrow etc. I could use Azure Custom Vision for this, just give me some time and I'll fix it :)

The XKCD above also mentions the task of detecting if the photo is taken in a national park. I've thought of using Azure Maps to find the POI based on GPS coordinates stored in the picture.

Make it a PWA and publish it to Store(s)

Interested in even more details?

If you or your company want to know more about services like this, I deliver a session/workshop called "Democratizing AI with Azure Cognitive Services", which shows the potential of Cognitive Services through fun and creative examples. Please drop me an email if you want more details.


Async routes stopped working in ASP.NET Core 3

I’m part of the team that maintains Active Login, an ASP.NET authentication provider for Swedish BankID. The package targets .NET Standard 2.0 and should therefore need no updates for ASP.NET Core 3, but we have usage samples written in ASP.NET Core 2.2, so I set out to update them. The PR that goes from 2.2 to 3.0 can be found here, if anyone is interested.

While doing the upgrade I realized the package didn’t work properly. After some debugging I found that the wrong URL was resolved for my API actions.

TL;DR: In ASP.NET Core 3, if you have Action methods suffixed with Async but a route path that does not include Async, refer to them without the Async suffix when resolving a URL through (for example) Url.Action(). This seems to be a breaking change from ASP.NET Core 2.2 which is not officially documented.

So, change Url.Action("ActionAsync", "Controller") into Url.Action("Action", "Controller") when upgrading from Core 2.2 to Core 3.0.

In more depth:

After some googling I found this GitHub issue. The change seems to be considered minor, which is why I didn’t find it in the official documentation. I might have used the routing in a way not many people do, but I thought it was worth writing this post for those who run into the same issue.

I created a repo with a minimal setup to verify the behavior. It seems that when running ASP.NET Core 3, you should refer to the action without the Async suffix to get a correct URL from Url.Action (or IUrlHelper).

ASP.NET Core 2.2

ASP.NET Core 3.0

Another change is that if the action can’t be found, it will fall back to the url pattern convention. In 2.2 and below it returned an empty string. My guess is that this is related to the new endpoint routing.
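To make the TL;DR concrete, here is a minimal sketch with hypothetical names (SampleController, StatusAsync, MvcSetup) that are not taken from Active Login. As far as I know the trimming is controlled by MvcOptions.SuppressAsyncSuffixInActionNames, which defaults to true in ASP.NET Core 3.0, so setting it to false should bring back the 2.2 names; treat that part as an assumption to verify in your own app.

```csharp
using System.Threading.Tasks;
using Microsoft.AspNetCore.Mvc;
using Microsoft.Extensions.DependencyInjection;

// Hypothetical controller: the method name ends with Async, the route path does not.
[Route("api/sample")]
public class SampleController : Controller
{
    [HttpGet("status")]
    public Task<IActionResult> StatusAsync()
    {
        return Task.FromResult<IActionResult>(Ok());
    }

    [HttpGet("links")]
    public IActionResult Links()
    {
        // ASP.NET Core 2.2 resolved the action by its full method name:
        //   Url.Action("StatusAsync", "Sample")
        // ASP.NET Core 3.0 trims the suffix from the action name, so use:
        var statusUrl = Url.Action("Status", "Sample");
        return Ok(statusUrl);
    }
}

public static class MvcSetup
{
    // Assumed opt-out: call from Startup.ConfigureServices to keep the 2.2 action names.
    public static void KeepAsyncSuffix(IServiceCollection services)
    {
        services.AddControllersWithViews(options =>
        {
            options.SuppressAsyncSuffixInActionNames = false;
        });
    }
}
```

Updating the calls to Url.Action is probably the cleaner fix; the opt-out is mostly useful if many links depend on the old names.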

So, hopefully this can save someone a few hours of debugging… :)


How the cloud got me a free Swedish massage

Ok, technically, it's my employer Active Solution that provides free Swedish massage for me as an employee (according to The Daily Show it is part of our Stockholm Syndrome). The issue, though, is that the weekly massage has a limited number of slots. You can sign up in advance, but I never seem to prioritize that, and when Wednesday morning comes, all slots are usually taken.

Having worked at this place for a couple of years, I’ve realized a few things though: there will always be one or two people who need to hand their slot over to someone else at the last minute. The way we handle this is as simple as sending out an email to everyone stating:

“Available massage time at 11 o’clock!”

You reply and you get the slot. But I’m too late to the game, every - single - time.

I thought, how can I beat the game and always be first to grab those available slots? And this is where the cloud comes in :)

Introducing Massage Email Bot

To be able to grab those available slots I wanted to implement some kind of automatic reply to those specific emails. You can do conditional auto replies in Outlook, but those rules are only evaluated on the client, which requires your computer and Outlook to be running at all times. That didn’t work for this scenario.

Being the developer I am, I thought of writing an Azure Function that grabs emails through the Microsoft Graph Outlook APIs and does some magic there, but it all seemed like too much orchestration for such a simple task. I quickly evaluated Logic Apps (which could have solved it) but eventually turned to Microsoft Flow (which, from what I understand, is a more end-user-friendly version of the same infrastructure as Logic Apps). Microsoft Flow is at its core quite similar to IFTTT, which I use for automating other things.

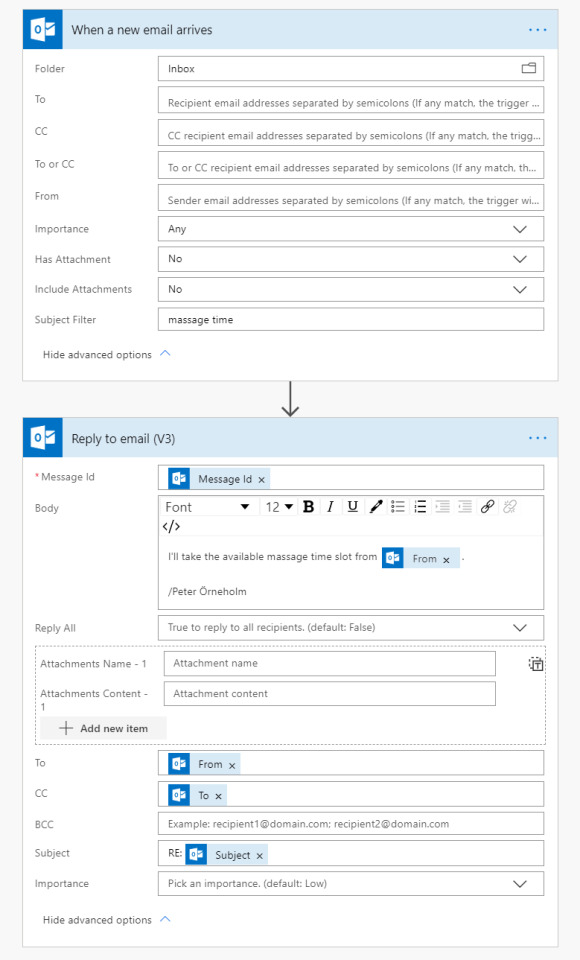

In Microsoft Flow I used the Office 365 Outlook connector to listen for incoming emails, filter them by subject line and then send a customized auto reply. My first version looked like this:

But after trying it out I realized a few flaws. Most importantly, it would introduce an infinite loop and completely flood my inbox: because the auto reply also included “Massage time” in the subject line, the same flow would trigger on the auto reply, and so it would go on forever. I also wanted to avoid any auto reply outside of the organisation, so I wanted to filter on our email domain.

Using the conditional block I made sure to only reply on the initial email and ended up with this flow.

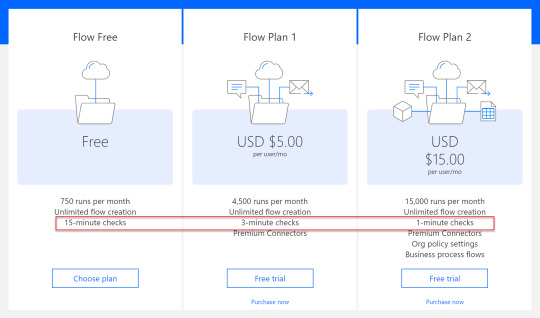
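The condition can be sketched as a plain predicate. This is only an illustration in C#, since the real logic lives in the Flow condition block; ShouldAutoReply, the "Re:" check and the domain example.com are assumptions and not the values I actually used.

```csharp
using System;

public static class MassageBot
{
    // Hypothetical values; the real subject filter and domain are not shown in the post.
    private const string Trigger = "massage time";
    private const string OwnDomain = "@example.com";

    public static bool ShouldAutoReply(string subject, string sender)
    {
        // Only react to emails that announce an available slot.
        var announcesSlot = subject.IndexOf(Trigger, StringComparison.OrdinalIgnoreCase) >= 0;

        // Replies also contain the trigger phrase, so skip them to avoid an infinite loop.
        var isReply = subject.TrimStart().StartsWith("Re:", StringComparison.OrdinalIgnoreCase);

        // Never auto reply to senders outside the organisation.
        var isInternal = sender.EndsWith(OwnDomain, StringComparison.OrdinalIgnoreCase);

        return announcesSlot && !isReply && isInternal;
    }
}
```

The important part is the second check: without it, the bot's own reply would match the subject filter and trigger the flow again.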

I was ready to try it out, but realized it was too slow! It could take several minutes to send the reply. It turned out that the free plan only runs the flow roughly every 15 minutes, and I needed to step up to a Premium plan to get 1-minute checks. I would have loved it if the Outlook connector could take advantage of the notification API and react immediately instead of scanning the inbox at intervals. But you could get a 90-day free trial of Premium, so I did, and now it was quick enough.

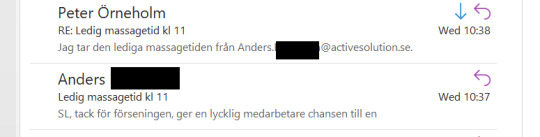

So, finally Wednesday and massage time. As usual I had no slot and was quite occupied so didn’t have time to check my inbox and then it happened. My bot had grabbed an available slot for me and my time paid off ;)

Ideas for improvement

Calendar integration: I did try to parse the time in the email and create a slot in my calendar (and also verify that I was actually available), but people write the time in so many ways that it wasn’t easily solvable. I tried running it through Cognitive Services Text Analytics, but it only catches the most obvious cases, which I could just as easily grab with a simple regular expression. It might be that the Swedish model is not as accurate as the English one.

Multi language: At Active Solution we speak multiple languages, so it would be nice if it supported multiple languages. Maybe overkill, but there is a translator connector that could provide a generic solution to that “problem”.

Summary

I’ve actually turned off this flow now, to give my colleagues a fair chance of grabbing those slots, but it was a fun way of exploring a new technology. I think Microsoft Flow can really automate boring and simple tasks so that you can spend time on more interesting things. For some scenarios you’ll have to step up your pricing plan, but the free tier will handle most of your personal scenarios.


Introducing ActiveLogin.Authentication

Today we are introducing ActiveLogin.Authentication 1.0.0, a collection of libraries that simplify integrating Swedish BankID into an ASP.NET Core application. It is easy to install from NuGet, and all source code is available on GitHub.

BankID is today a widespread and rapidly growing authentication method for both public and private services. In 2018 the total number of uses (authentication and signing) reached 3.3 billion, an increase of 0.8 billion compared to the year before. But the threshold for implementing BankID login correctly in a modern ASP.NET environment is relatively high: the technical documentation contains no code samples and no technical support is provided.

We saw growing demand from our customers to integrate BankID into their applications, while many of them struggled to get a working solution in place. Our ambition was for a BankID integration to be as simple as installing a NuGet package.

Get started with Active Login

Active Login comes in two flavors, BankID and GrandID. The first is a direct integration with the API provided by BankID, while GrandID goes through an external service of the same name provided by Svensk e-identitet. The direct integration offers greater flexibility and the ability to customize the user interface through Razor Class Libraries. It does, however, require a certificate issued by one of the five major banks that resell BankID. With GrandID you need no certificate and can get started considerably faster with the API keys you order from Svensk e-identitet.

The full documentation on how to use Active Login is available on GitHub, but a short intro follows below to give you a feel for how it is used.

BankID

Install the NuGet package

Install the NuGet package ActiveLogin.Authentication.BankId.AspNetCore with the tool of your choice, for example the dotnet CLI.

dotnet add package ActiveLogin.Authentication.BankId.AspNetCore

Get started in the development environment

Active Login is registered in ConfigureServices(…) in Startup.cs. Enable .UseSimulatedEnvironment() to run against an in-memory implementation of the API that does not require you to have a certificate in place.

using ActiveLogin.Authentication.BankId.AspNetCore;

services
    .AddAuthentication()
    .AddBankId(builder =>
    {
        builder
            .UseSimulatedEnvironment()
            .AddSameDevice()
            .AddOtherDevice();
    });

Move on to the test and production environments

A full description of how to use the test or production environment, and how to customize the package, can be found in the full documentation on GitHub.

BankID via GrandID

Install the NuGet package

Install the NuGet package ActiveLogin.Authentication.GrandId.AspNetCore with the tool of your choice, for example the dotnet CLI.

dotnet add package ActiveLogin.Authentication.GrandId.AspNetCore

Get started in the development environment

Active Login is registered in ConfigureServices(…) in Startup.cs. Enable .UseSimulatedEnvironment() to run against an in-memory implementation of the API that does not require you to have API keys in place.

using ActiveLogin.Authentication.GrandId.AspNetCore;

services
    .AddAuthentication()
    .AddGrandId(builder =>
    {
        builder
            .UseSimulatedEnvironment()
            .AddBankIdSameDevice(options => { })
            .AddBankIdOtherDevice(options => { });
    });

Move on to the test and production environments

A full description of how to use the test or production environment, and how to customize the package, can be found in the full documentation on GitHub.

Customize Active Login

Besides getting started very quickly with a basic setup, you can also customize it. Perhaps you only want to allow Mobile BankID, add more languages than the built-in ones (Swedish / English) or completely customize the look and feel. All of this is technically possible and documented on GitHub.

As an example, you can restrict which types of BankID are allowed and control whether the user may use biometric login.

.AddBankId(builder =>
{
    builder.UseProductionEnvironment()
        .AddSameDevice(options =>
        {
            // Only allow Mobile BankID
            options.BankIdCertificatePolicies = BankIdCertificatePolicies.GetPoliciesForProductionEnvironment(
                BankIdCertificatePolicy.MobileBankId
            );

            // Don't allow biometric login options such as fingerprint or face id
            options.BankIdAllowBiometric = false;
        });
});

Azure

The majority of the projects we work on at Active Solution are built to be hosted in Microsoft Azure. Active Login itself has no direct dependency on Azure, but we have built extra libraries to make it easier to use Azure as the platform.

For example, with the library ActiveLogin.Authentication.BankId.AspNetCore.Azure you can easily store the certificate you receive from BankID in Microsoft Azure Key Vault, so that it is handled securely.

builder.UseProductionEnvironment()
    .UseClientCertificateFromAzureKeyVault(Configuration.GetSection("ActiveLogin:BankId:ClientCertificate"))

There is also an Azure ARM template that you can start from when you want to spin up an environment to host your login solution in.

Personal identity numbers

To let the user enter their personal identity number (personnummer) in different ways (10 digits, 12 digits, with or without a hyphen, etc.), we built a separate package as part of Active Login for validating and normalizing Swedish personal identity numbers. Read more about it in the following blog post.

Do you want to contribute to the development of Active Login?

Active Login is an open source project and we would love to see more people contribute. All source code is available on GitHub under the MIT license. Basic knowledge of Git and GitHub is required to get started. Submit your code changes as a pull request. Don't forget to write a thorough description and to provide tests that cover the changes you have made. Do you have ideas for new functionality, or have you run into problems? Create a new issue to start a discussion on the topic.

Last but not least, feel free to give our repo a star if you like the project :)

Author

This blog post was written by Elin Fokine, one of the lead developers behind ActiveLogin.Authentication and an IT consultant and software developer at Active Solution.


Introducing ActiveLogin.Identity.Swedish

Today we are introducing ActiveLogin.Identity.Swedish 2.0.0, a .NET library for parsing, validating and normalizing Swedish personal identity numbers (personnummer). It is easy to install from NuGet, and all source code is available on GitHub.

In several customer projects we have needed to handle personal identity numbers. In its simplest form this can be a user entering a personal identity number in a form, or us receiving one from another system.

When we saw the need for validation and normalization of personal identity numbers in several projects, we looked into which libraries were available. The ones we found either relied on simple regular expressions that did not validate the checksum, or could only validate but not normalize the number. So we chose to implement a solution ourselves that would solve these problems.

ActiveLogin.Identity.Swedish is built on .NET Standard and can therefore be used in, for example, .NET Core and .NET Framework. It is easy to install and runs on several platforms such as Windows, Linux and macOS.

The library handles personal identity numbers both with the century digits (12 digits, YYYYMMDDBBBC) and without (10 digits, YYMMDD-BBBC or YYMMDD+BBBC). All invalid characters and whitespace are stripped before parsing, and the library can parse any input string that contains a valid personal identity number with 10 or 12 digits. The checksum is of course also verified to ensure that the number is correct. For examples of valid and invalid input, see the tests.
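The checksum of a Swedish personal identity number is calculated with the Luhn algorithm over the ten-digit form. A minimal, standalone sketch of that check could look as follows. HasValidChecksum is a hypothetical helper for illustration and not the library's API, and it deliberately skips validation of the date part.

```csharp
using System.Linq;

public static class PersonalIdentityNumberChecksum
{
    // Hypothetical helper, not part of ActiveLogin.Identity.Swedish.
    public static bool HasValidChecksum(string input)
    {
        // Strip everything but ASCII digits, e.g. the "-" or "+" delimiter and whitespace.
        var digits = input.Where(c => c >= '0' && c <= '9').Select(c => c - '0').ToArray();

        // For the 12-digit form, drop the two century digits.
        if (digits.Length == 12)
        {
            digits = digits.Skip(2).ToArray();
        }
        if (digits.Length != 10)
        {
            return false;
        }

        // Luhn: multiply the digits alternately by 2 and 1, then sum the digits of each product.
        var sum = 0;
        for (var i = 0; i < digits.Length; i++)
        {
            var product = digits[i] * (i % 2 == 0 ? 2 : 1);
            sum += product > 9 ? product - 9 : product;
        }
        return sum % 10 == 0;
    }
}
```

For the test number 990807-2391 the weighted digit sum is 50, which is divisible by 10, so the checksum is accepted in both its 10-digit and 12-digit form.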

Example usage in C#

Install the package, for example with the dotnet CLI

dotnet add package ActiveLogin.Identity.Swedish

Parse a personal identity number

using System;
using ActiveLogin.Identity.Swedish;

if (SwedishPersonalIdentityNumber.TryParse("990807-2391", out var personalIdentityNumber))
{
    Console.WriteLine($"Personal identity number with 10 digits: {personalIdentityNumber.To10DigitString()}");
    Console.WriteLine($"Personal identity number with 12 digits: {personalIdentityNumber.To12DigitString()}");
}
else
{
    Console.WriteLine("The personal identity number is not valid");
}

// Output:
// Personal identity number with 10 digits: 990807-2391
// Personal identity number with 12 digits: 199908072391

Example usage in F#

Since the library is implemented in F#, we also expose an F#-friendly API for those who prefer it.

Install the package, preferably with Paket

paket add ActiveLogin.Identity.Swedish

Parse a personal identity number

open ActiveLogin.Identity.Swedish.FSharp

let pin =
    "990807-2391"
    |> SwedishPersonalIdentityNumber.parse
    |> Error.handle

pin
|> SwedishPersonalIdentityNumber.to10DigitString
|> printfn "Personal identity number with 10 digits: %s"

pin
|> SwedishPersonalIdentityNumber.to12DigitString
|> printfn "Personal identity number with 12 digits: %s"

// Output:
// Personal identity number with 10 digits: 990807-2391
// Personal identity number with 12 digits: 199908072391

Helper functions

The library also contains a number of helper functions for reading data out of a personal identity number, such as date of birth, age and gender. Since there is no way to guarantee that this information is correct, we chose to give these functions a Hint suffix.

In C# the methods are exposed as extension methods in the namespace ActiveLogin.Identity.Swedish.Extensions. They can be used as follows:

using System;
using ActiveLogin.Identity.Swedish;
using ActiveLogin.Identity.Swedish.Extensions;

var pin = new SwedishPersonalIdentityNumber(1999, 8, 7, 239, 1);

Console.WriteLine($"Date of birth: {pin.GetDateOfBirthHint().ToShortDateString()}");
Console.WriteLine($"Age: {pin.GetAgeHint().ToString()}");
Console.WriteLine($"Gender: {pin.GetGenderHint().ToString()}");

// Output:
// Date of birth: 8/7/99
// Age: 19
// Gender: Male

In F# the functions are found in a Hints module:

open ActiveLogin.Identity.Swedish.FSharp

let pin =
    { Year = 1999
      Month = 8
      Day = 7
      BirthNumber = 239
      Checksum = 1 }
    |> SwedishPersonalIdentityNumber.create
    |> Error.handle

pin
|> SwedishPersonalIdentityNumber.Hints.getDateOfBirthHint
|> fun date -> date.ToShortDateString()
|> printfn "Date of birth: %s"

pin
|> SwedishPersonalIdentityNumber.Hints.getAgeHint
|> Option.iter (printfn "Age: %i")

pin
|> SwedishPersonalIdentityNumber.Hints.getGenderHint
|> printfn "Gender: %A"

// Output:
// Date of birth: 8/7/99
// Age: 19
// Gender: Male

Tests

The implementation was developed test-driven, and today there are more than 200 tests ensuring that we follow the definition in the Swedish Population Registration Act (Folkbokföringslagen, FOL 18 §). To be compatible with GDPR, the tests use the test personal identity numbers that Skatteverket (the Swedish Tax Agency) provides as open data.

Do you want to contribute to the development of Active Login?

Active Login is an open source project and we would love to see more people contribute. All source code is available on GitHub under the MIT license. Basic knowledge of Git and GitHub is required to get started. Submit your code changes as a pull request. Don't forget to write a thorough description and to provide tests that cover the changes you have made. Do you have ideas for new functionality, or have you run into problems? Create a new issue to start a discussion on the topic.

Last but not least, feel free to give our repo a star if you like the project :)

Author

This blog post was written by Viktor Andersson, one of the lead developers behind ActiveLogin.Identity and an IT consultant and software developer at Active Solution.


Azure Portal Dashboard Contributor

With Azure RBAC you can delegate access at different scopes and with different allowed actions. The list of built-in roles is comprehensive, but it cannot cover every scenario. One that I have missed a couple of times is related to Portal Dashboards.

By default I like to lock down modification of resources for “human” accounts and only allow service principals to create and modify them, while giving read access to logs, Application Insights etc. But there is sometimes one exception, and that’s Portal Dashboards. I know you can automate their creation, but I have found the syntax a bit tricky, and dashboards tend to be something you want to experiment with a bit.

So, because you can create your own Role definitions I’ve put together a template that you can use to create a role called Portal Dashboard Contributor.

The template will give read, write and delete permissions:

Microsoft.Portal/dashboards/read

Microsoft.Portal/dashboards/write

Microsoft.Portal/dashboards/delete

Assign it either on a subscription, to allow modification of all dashboards within it, or preferably on a specific resource group.

You will find the role template in this GitHub Gist: https://gist.github.com/PeterOrneholm/356bf53bec9ce42bc3d560c7faed7e6b

Make sure to replace the scopes with whatever Subscription(s) you want to apply it to.

You will find more docs on how to create custom roles here: https://docs.microsoft.com/en-us/azure/role-based-access-control/custom-roles


Introducing Orneholm.ApplicationInsights.HealthChecks! - ASP.NET Core Health Checks publisher for Application Insights

ASP.NET Core Health Checks is a great feature introduced in ASP.NET Core 2.2. It allows you to define a set of health checks that can verify the health status of your application by checking a range of conditions, like access to a database, Azure storage or maybe an external SMTP server.

The most common scenario is to publish an endpoint that reports the health of the application so that external monitoring solutions, like a Kubernetes cluster, Azure Traffic Manager or Application Insights Availability tests, can check it.

Speaking of Application Insights Availability, it is a great service that can ping your web application from locations around the globe to verify that it is available. But even with the health check endpoint enabled, you still would not get the exact details on what wasn’t working in case it reports unhealthy. Likewise, if the application is behind a firewall, no external service would be able to ping it. That leads us to...

Orneholm.ApplicationInsights.HealthChecks uses the IHealthCheckPublisher APIs to periodically push the health check reports, including details, to Application Insights as Availability telemetry.
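To illustrate the publisher hook the package builds on, here is a minimal sketch of an IHealthCheckPublisher that writes each report to the console instead of Application Insights. ConsoleHealthCheckPublisher is a hypothetical class for illustration only; it is not the package's implementation.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Diagnostics.HealthChecks;

// Hypothetical publisher: the health check service calls PublishAsync periodically.
public class ConsoleHealthCheckPublisher : IHealthCheckPublisher
{
    public Task PublishAsync(HealthReport report, CancellationToken cancellationToken)
    {
        Console.WriteLine($"Overall: {report.Status} ({report.TotalDuration.TotalMilliseconds} ms)");

        // Each entry carries the details that a plain ping of the endpoint would not show.
        foreach (var entry in report.Entries)
        {
            Console.WriteLine($"{entry.Key}: {entry.Value.Status} - {entry.Value.Description}");
        }

        return Task.CompletedTask;
    }
}
```

Registering it next to AddHealthChecks(), for example with services.AddSingleton<IHealthCheckPublisher, ConsoleHealthCheckPublisher>(), should be enough for the built-in background service to start calling it. Since the application pushes the reports itself, this also works behind a firewall.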

Now you can track the health in Azure Portal:

You can also get full details by querying the data in Analytics:

And plot those durations as a scatter chart to get a visual overview:

The package is now available on NuGet: https://www.nuget.org/packages/Orneholm.ApplicationInsights.HealthChecks/

All source code is published under the MIT-license at GitHub: https://github.com/PeterOrneholm/Orneholm.ApplicationInsights

If you have feedback or questions, please file an issue on GitHub or ping me on Twitter! Enjoy!


Resolve “HTTP Error 500.30 - ANCM In-Process Start Failure” when running ASP.NET Core 2.2 in Azure App Service or IIS

I was recently deploying a web project that I work on in my spare time to Azure and realized it would not start. Instead (after waiting almost a minute for a response) I got the following error: "HTTP Error 500.30 - ANCM In-Process Start Failure"

My setup was ASP.NET Core 2.2 targeting netcoreapp2.2 and running the in-process hosting model in IIS under Azure App Service (Windows). After some time debugging I found that this was the line that broke it:

After looking at the source code for WebHostBuilderKestrelExtensions I realized you should use .ConfigureKestrel(...) instead of .UseKestrel(...) when using the in-process hosting model; otherwise Kestrel and IIS seem to fight over which server to use.

There is actually a note saying you should do this in the migration guide from ASP.NET Core 2.1 to 2.2, but I had missed that.

TL;DR:

If you run ASP.NET Core 2.2 using the in-process hosting model and want to configure Kestrel, make sure to use .ConfigureKestrel(…) instead of .UseKestrel(…) in your Program.cs.
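A minimal sketch of what such a Program.cs could look like in ASP.NET Core 2.2. The AddServerHeader setting is just an assumed example of a Kestrel option, not the one from my project, and Startup is an empty placeholder.

```csharp
using Microsoft.AspNetCore;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.Hosting;

public class Program
{
    public static void Main(string[] args)
    {
        CreateWebHostBuilder(args).Build().Run();
    }

    public static IWebHostBuilder CreateWebHostBuilder(string[] args) =>
        WebHost.CreateDefaultBuilder(args)
            // Calling .UseKestrel(...) here would register Kestrel as the server
            // and conflict with IIS when hosting in-process.
            .ConfigureKestrel(options =>
            {
                options.AddServerHeader = false; // assumed example setting
            })
            .UseStartup<Startup>();
}

// Placeholder startup class so the sketch is complete.
public class Startup
{
    public void Configure(IApplicationBuilder app)
    {
    }
}
```

ConfigureKestrel only adjusts the Kestrel options and leaves the choice of server untouched, so the same code should work both in-process under IIS and when Kestrel is the server.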

Update 2019-04-04:

I’ve got reports that this does not always solve the issue, but there are instructions from the ASP.NET team on GitHub that can solve the same error (from what I’ve heard): https://github.com/dotnet/core/blob/master/release-notes/2.2/2.2-known-issues.md


Forecast your Application Insights cost with the new pricing options

Azure Application Insights is basically Application Monitoring as a Service, provided by Microsoft as part of their cloud offering. Application Insights was in preview for quite a long time, but during Connect 2016 they announced that it is now generally available. Once a service in Azure goes GA, the preview prices no longer apply, and in addition they announced that they are changing the pricing model.

Previously you were billed by how many data points you sent per month, independent of how much data (like custom properties) each point included. Now they have changed to bill you by the amount of data instead, which is what similar services in the industry do. There is also an enterprise option if you need continuous export or OMS integration. For full details, have a look at the pricing page: https://azure.microsoft.com/en-us/pricing/details/application-insights/

All new accounts you create in Application Insights will use the new pricing model, but existing ones will use the old one until February 1st (2017) when they will all automatically be converted. If you are interested in how this will affect your bill there is actually a chart available to have a look at.

Navigate to your AI resource in the portal and choose “Quota + pricing” in the left panel. At the bottom you will find two charts: one showing the data points count (relevant for the old pricing model) and, at the very bottom, one showing the daily data volume (relevant for the new pricing model).

Given these numbers you should be able to figure out how it will affect your AI usage and billing.
