#Mukesh Agrawal

Photo

🔰Jharkhand Orthopedic Association - PG Teaching Webinar Series - Episode 2

🔆Sunday 19th June 2022, 10 am - 12:30 pm

🔆Click to Watch: https://bit.ly/OrthoTV-JOA-PG-2

🔆National Faculty

Prof. (Dr.) Harpal Singh Selhi

Prof. (Dr.) Along Chandra Agrawal

Dr. Ram Chadda

Prof. (Dr.) Debdutta Chatterjee

🔆JOA OFFICE BEARERS

Dr. Mukesh Prasad - President JOA

Dr. Manjit S Sandhu - President Elect JOA

Dr. Govind Gupta - Vice President JOA

Dr. Nirmal Kumar - Secretary JOA

Dr. S Ayan - Treasurer JOA

🔆PG TEACHING TEAM

Dr. Manish Raj

Dr. Rajesh Thakur

Dr. Saubhik Das

Dr. Birendra Kumar

🔆Topics with Speakers

What is expected from postgraduate students while managing patients and wound care in wards - from a consultant's perspective :- Prof. (Dr.) Debdutta Chatterjee

Managing patients in emergency :- Everything a postgraduate student should know :- Prof. (Dr.) Harpal Singh Selhi

How to approach the final theory examination :- Key to success :- Prof. (Dr.) Along Chandra Agrawal

Development of communication skills: A must-know for postgraduate students :- Dr. Ram Chadda

OrthoTV Team : Dr. Ashok Shyam, Dr. Neeraj Bijlani

Streaming Live on OrthoTV www.orthotvonline.com

#orthopedics #orthopaedics #orthopedicsurgery #OrthoTwitter

0 notes

Text

Door Bell (2017)

Door Bell (2017) is an Indian sci-fi film directed by Agrawal Mukesh Narayan. The film stars Shubhra Ghosh, Nataliya Kozhenova, Nishant Kumar and Tanisha Singh. It premiered on 27 Oct 2017. In the film, a married couple tries to decipher their unusual childbirth.

Door Bell…

View On WordPress

0 notes

Text

Tiroda's Mukesh Agrawal felicitated with his family in Dubai

Tiroda: Mukesh Agrawal, a well-known businessman from Tiroda, founder of Meritorious Public School and director of Shyam Traders, was felicitated along with his family at a small function in Dubai on behalf of the Ambuja company for providing excellent service to customers in the cement business. (Tiroda’s Mukesh Agarwal’s family felicitated in Dubai)

Mukesh Agrawal not only provides excellent service in the cement business but also…

View On WordPress

0 notes

Photo

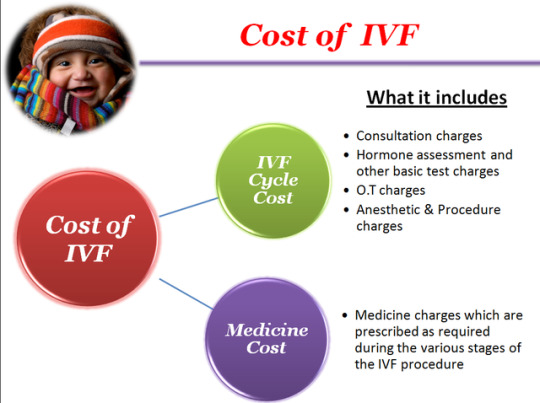

Cost of IVF in Mumbai | Elawoman

#Dr. Jagdip Shah#Dr. Mukesh Agrawal#Cost of IVF in Mumbai#Dr. Yashodhara Mhatre#Dr. Bella Jagtap Palnitkar#Dr. Sheetal Sawankar

0 notes

Video

youtube

In conversation with an amazing team from Surdaan: Ameya, Sanchay, Kavni, Kriti and Milli.

Inspiration Masters, LLC is dedicated to making a positive difference in the lives of people on the planet by helping everyone search their purpose of life, find what they are passionate about, and then helping with tools, techniques, and strategies to reach their true purpose in life by following their passion.

As a part of our “Inspiring Series”, we invite the difference makers, entrepreneurs, artists, business owners, and individuals who have interesting stories to share which can inspire everyone.

This week we had the privilege of meeting an amazing team from Surdaan: Ameya, Sanchay, Kavni, Kriti and Milli.

Surdaan will be holding their fifth Annual Charity Benefit Concert on August 9th. Each year high school students of Alaap School of Music come together for Surdaan - summer musical concert. The students showcase their singing talents while using their voices to help others in need. Surdaan 2020 is extra special. This year the musical event will be online allowing the kids to reach audiences all over the world. And the funds raised from ticket sales and donations will benefit 2 charities.

For those purchasing tickets in the US, each ticket is $25 per family. You can purchase additional tickets for $10 for family and friends in India under your bundle. In the US, payment will be accepted in the form of cash, cheques, or Zelle.

For residents of India wishing to purchase a ticket, the price is 500 rupees per family. In India, payments will be accepted through Google Pay. Please check out our previous post for more information on how to purchase tickets.

For more information regarding this event or tickets please contact or email [email protected].

instagram.com/surdaan/

https://www.facebook.com/surdaan/

For ticket: https://www.facebook.com/events/3998581140213296/

Phone: 972.948.8476

Email: [email protected]

Website: www.inspirationmasters.com

Facebook Page: www.facebook.com/inspirationmasters/

YouTube: https://www.youtube.com/channel/UCaqsWGFDdqc2Puhu3UKuvNw…

#inspiringseries #inspiration #motivation #inspirationmasters #positivedifference #followyourpassions #inspirationmasters #becomeremarkablenow #inspiringseries #purposeoflife #livelife #liveyourdreams #followyourdreams #passion #learn #irving #coppell #Lascolinas #carollton #inspire #DFWmetroplex #dallas #southlake #lewisville #frisco #plano #allen #bollywood #charitableorganizations #CityHouse #transitionalhousing #nonprofit

#CharityEvent #Concert #ClassicalSinging #BollywoodSinging #Fundraising

Jay Pujara with an amazing team from Surdaan: Ameya, Sanchay, Kavni, Kriti and Milli.

7 notes

Video

youtube

Song: Tata Kardene (2022) - Harmaan Nazim

Music: Vivek Kar, Lyrics: Mukesh Mishra

Singer: Harmaan Nazim

-- Tata Kardene : Official Music Video | Jay Patel & Akriti Agrawal | Harmaan Nazim | Vivek Kar (via Zee Music Company)

0 notes

Text

Bollywood movies Shot in Sydney, Australia

Famous Bollywood films shot in Australia include Bachna Ae Haseeno, Chak De! India, Dil Chahta Hai, From Sydney With Love, Heyy Babyy, Hindustani, Love Story 2050, Ramaiya Vastavaiya, Salaam Namaste, Singh Is Kinng and Bhaag Milkha Bhaag. ‘Shaadi Ke Side Effects’ (starring Farhan Akhtar & Vidya Balan) had a song, “Tumse Pyar Ho Gaya”, that was shot in and around the Gold Coast in Queensland, Australia. The Gold Coast is well known for its white sandy beaches, world-class theme parks, and the awesome hinterland.

Soldier – 1998 film directed by Abbas-Mustan starring Raakhee, Bobby Deol and Preity Zinta.

Bachna Ae Haseeno – 2008 film directed by Siddharth Anand, with the star power of Ranbir Kapoor, Bipasha Basu, Deepika Padukone and Minissha Lamba.

Chak De! India – 2007 film directed by Shimit Amin and Rob Miller and produced by Aditya Chopra, with music by Salim–Sulaiman and a screenplay by Jaideep Sahni. Shah Rukh Khan played the lead.

Salaam Namaste – 2005 film directed by Siddharth Anand and produced by Aditya Chopra and Yash Chopra. The film stars Saif Ali Khan, Preity Zinta, Arshad Warsi, Tania Zaetta and Jugal Hansraj. It is a remake of the 1995 Hollywood film Nine Months.

Dil Chahta Hai – 2001 film starring Aamir Khan, Saif Ali Khan, Akshaye Khanna, Preity Zinta, Sonali Kulkarni, and Dimple Kapadia. Movie is written and directed by Farhan Akhtar.

Shaadi Ke Side Effects – 2014 film directed by Saket Chaudhary and starring Farhan Akhtar and Vidya Balan. The film is produced by Balaji Motion Pictures and Pritish Nandy Communications.

Bhaag Milkha Bhaag- 2013 film directed by Rakeysh Omprakash Mehra based on the life of Milkha Singh. The movie stars Farhan Akhtar, Sonam Kapoor, Meesha Shafi, Divya Dutta, Pavan Malhotra, Yograj Singh and Prakash Raj.

Singh Is Kinng – 2008 film starring Akshay Kumar and Katrina Kaif in lead roles. Directed by Anees Bazmee and produced by Vipul Amrutlal Shah.

Principal Saab – 1998 film starring Amitabh Bachchan, Ajay Devgan, Sonali Bendre and Ashish Vidhyarthi. Directed by Tinnu Anand.

Aap Mujhe Achche Lagne Lage – 2002 film directed by Vikram Bhatt, starring Hrithik Roshan and Amisha Patel. Music by Rajesh Roshan.

Crook – 2010 film starring Emraan Hashmi and Neha Sharma in the lead. It is directed by Mohit Suri and produced by Mukesh Bhatt. The film is based on the controversy concerning the alleged racial attacks on Indian students in Australia between 2007 and 2010.

We Are Family – 2010 film directed by Siddharth Malhotra and produced by Karan Johar. The film stars Kajol, Kareena Kapoor Khan and Arjun Rampal.

Heyy Babyy – 2007 film starring Akshay Kumar, Vidya Balan, Fardeen Khan, Riteish Deshmukh, Juanna Sanghvi and Boman Irani. It's a remake of the Malayalam film Thoovalsparsham. Directed by Sajid Khan.

Hadh Kar Di Aapne – 2000 Hindi film directed by Manoj Agrawal. The film stars Govinda and Rani Mukerji.

Koi Aap Sa – 2005 film directed by Partho Mitra. It stars Aftab Shivdasani and Natassha.

Prem Aggan – 1998 film written and directed by Feroz Khan. The film stars Fardeen Khan and Meghna Kothari in the lead roles.

0 notes

Photo

3 BHK FLAT IN KOLAR ROAD

Happy to fulfil our commitment towards our customers.

It has always been a great feeling to hand over the key of happiness to our valued customers. Thanks, Mr. Mukesh Rai Ji, for showing your faith in Agrawal Construction Co.

0 notes

Text

Meet ‘Top 50 Newsmaker Indians in 2020’ surveyed by Fame India & Asia Post Survey

New Delhi: India is considered a land full of opportunities. Like most other democracies, this country too is very politically and economically charged. Everyone appears to have a view on how the nation should run. People here have created benchmarks of social service. Their efforts prompted us to prepare a list of newsmaker Indians who created ripples of impact. Hence, Fame India & Asia Post together came up with the list of ‘Top 50 Newsmaker Indians in 2020’.

This list is a survey of such famous countrymen, who created change through their efforts in the lives of people. There are hundreds of people who, from their own places, are working towards strengthening the nation. All these people have tried to change, develop and strengthen the possibilities in people’s lives with their excellent works and efforts.

The list includes people from all walks of life: politicians, bureaucrats, film stars, journalists, artists, educationists, industrialists and spiritual leaders. They are involved in the public sphere, trying to make people’s lives simpler and more capable, and setting a benchmark in social work.

Fame India & Asia Post has tried to compile the list of these newsmakers together that will motivate more Indians to work harder for a brighter and better India. At the release of the list, editorial director of the Magazine Fame India, US Sonthalia said “These are those inspirational Indians who have tirelessly worked towards the betterment of common people. This positive initiative of Fame India, along with selecting them, makes other empowered people like them realize that they should move strongly on their duty path”.

Here is the list of ‘Top 50 Newsmakers in 2020’ by Fame India & Asia Post Survey:

Sandeep Marwah – Educationist, Philanthropist, International Media Person, Social Reformer (Chairman – AAFT); Krishnaswamy Kasturirangan – Chairman – National Education Policy Committee; K Parasaran – Law Advocate – Shri Ramlala Virajman; Gen. Manoj Mukund Narwane – Chief of Army Staff; MS Swaminathan – Agricultural Scientist; Manoj Modi – Famous Corporate & Industrial Strategist, Director – Reliance Group; Gupteshwar Pandey (IPS) – DGP, Bihar, Indian Police Services

Manan Mishra – Chairman – Bar Council of India; Dr Pratap Chandra Reddy – Health, Chairman – Apollo Hospital; Harish Salve – Senior Advocate – Supreme Court; Muthayya Vanitha – Scientist, Project Director – Chandrayaan Mission; Dr Devi Prasad Shetty – Founder – Narayan Hospital; BL Santhosh – Politics, National General Secretary – BJP; Girish Chandra Murmu – CAG

Sanjay Kothari – Commissioner – Central Vigilance Commission; Shaktikanta Das – Governor – RBI; Rakesh Jhunjhunwala – Investment Banker, known for share market predictions; Jagdeep Dhankhar – Governor, West Bengal; Champat Rai – Social worker, General Secretary, Shri Ram Janma Bhoomi Teerth Kshetra Trust, Ayodhya; Sourav Ganguly – BCCI Chief; Swami Awadheshanand Giri – Spiritual Guru, President – Juna Akhada; Madhu Pandit Dasa – Social Reformer, President – Akshaya Patra Foundation; Sant Balbir Singh Seechewal – Social Reformer, Environmental hero, Punjab

Jyotiraditya Scindia – MP – Rajya Sabha; Prof Dr Jagat Ram – Director – PGI Chandigarh; Prashant Kishore – Political Strategist, Founder – I-PAC; Dr Kishore Singh – HOD – Oncology, LNJP Hospital, Delhi; Dr Shiv Kumar Sarin – Director – ILBS, Delhi; Ravi Kalra – Social Reformer, Founder – Earth Saviors Foundation; Sambhaji Bhide – Social Reformer, Founder – Shri Shivpratisthan Hindusthan, Maharashtra; Dr MV Padma Srivastava – HOD – Neurology Department, AIIMS, Delhi; Dr Prakash Baba Amte – Social Reformer, Tribal Welfare – Maharashtra; Dr Uma Kumar – Head – Rheumatology Department, AIIMS, Delhi

Ela Ramesh Bhatt – Social Reformer, Founder – Seva Foundation; Rajendra Singh – Social Reformer, Famous Environmentalist; MA Yusuf Ali – Businessman and philanthropist, Chairman Lulu Group International, Dubai; Ashok Bhagat – Social Reformer, Tribal Welfare, Secretary, Vikas Bharti, Jharkhand; Ajit Mohan – Youth Icon, Managing Director – Facebook India; Dr C. Rajkumar – Dynamic Educationist, Vice-Chancellor – OP Jindal Global University; Sunitha Krishnan – Social Reformer, Co-founder – Prajjavala

Ashish Dhawan – Entrepreneur, Educationist, Philanthropist, Chairman Trustee – Ashoka University; Manish Maheshwari – Youth Icon, Managing Director – Twitter India; Sonu Sood – Film Actor, social worker, Philanthropist; Abhinandan Sharma – Wing Commander – Indian Air Force; Mahesh Savani – Industrialist & philanthropist, Chairman – Savani Group; Mukesh Patel – Industrialist & Social Service, Director – Hindwa Group; Sharma – Journalism, News Director – TV9 Bharatvarsha; Pratap Chand Agrawal – Educationist, philanthropist; Sanjay Bihari – Social Reformer, Founder, Samarth Bihar; Manish Mundra – film producer, philanthropist

0 notes

Text

IPL auction: Complete players' list and their base price

The IPL 2018 auction will take place in Bengaluru on January 27 and 28 where a total of 578 players will go under the hammer.

A fierce bidding war is expected as Indian stars Gautam Gambhir, off-spinners Ravichandran Ashwin and Harbhajan Singh, Ajinkya Rahane, mystery spinner Kuldeep Yadav and openers KL Rahul and Murali Vijay will be on the franchises’ radar.

The list also includes overseas players such as Glenn Maxwell, Chris Gayle, Shane Watson, Rashid Khan and Eoin Morgan.

In the 10th season, Mumbai Indians led by Rohit Sharma defeated Pune to lift the IPL trophy for the third time.

The 11th season of the much-awaited Indian Premier League will begin on 6th April 2018.

The opening ceremony of the tournament will take place on April 6 in Mumbai while the first match of the new season will be played at the same venue on April 7.

The tournament will continue until May 27, with Mumbai once again hosting the final match.

Here is the complete list of players and their base price.

Base Price ₹2,00,00,000

Batsman

KL Rahul, Murali Vijay, Brendon McCullum, Eoin Morgan, Cameron White, Chris Lynn, Colin Ingram

Bowler

Josh Hazlewood, Rashid Khan Arman, Karn Sharma, Yuzvendra Singh Chahal, Mitchell Johnson, Liam Plunkett, Pat Cummins

Wicket Keeper

Quinton De Kock, Dinesh Karthik, Robin Uthappa

All-Rounder

James Faulkner, Marcus Stoinis, Chris Woakes, Angelo Mathews, David Willey, Corey Anderson, Kedar Jadhav

==========

Base Price ₹1,50,00,000

Batsman

Aaron Finch, Jason Roy, Hashim Amla, Evin Lewis, Travis Head, Shaun Marsh, Michael Klinger, Lendl Simmons, David Miller

Bowler

Kagiso Rabada, Trent Boult, Kyle Abbott, Kuldeep Singh Yadav, Nathan Coulter-Nile, Amit Mishra, Mohit Sharma, Nathan Lyon, Steven Finn, Harry Gurney, Mark Wood, Jaydev Unadkat

Wicket Keeper

Jonny Bairstow, Jos Buttler, Peter Handscomb

All-Rounder

Moises Henriques, Ravi Bopara, Jason Holder, Moeen Ali, M.S. Washington Sundar

==========

Base Price ₹1,00,00,000

Batsman

Manish Pandey, Dwayne Smith, Alex Hales

Bowler

Tymal Mills, Andrew Tye, Mohammed Siraj, Adam Zampa, Mohammad Shami, Dale Steyn, Mustafizur Rahman, Samuel Badree, Imran Tahir, Tim Southee, Jason Behrendorff, Mitchell McClenaghan, Lasith Malinga, Ranganath Vinay Kumar, Umesh Yadav, Piyush Chawla

Wicket Keeper

Parthiv Patel, Wriddhiman Saha, Sanju Samson, Sam Billings

All-Rounder

Daniel Christian, Carlos Brathwaite, Ben Cutting, Jean-Paul Duminy, Shane Watson, Chris Jordan, Tom Curran

==========

Base Price ₹75,00,000

Batsman

Martin Guptill, Darren Bravo, Cheteshwar Pujara, Ross Taylor, Usman Khawaja

Bowler

Peter Siddle, Jerome Taylor, Lockie Ferguson, Morne Morkel, Ishant Sharma, Shardul Narendra Thakur, Adam Milne, Marchant De Lange

Wicket Keeper

Naman Ojha, Johnson Charles, Luke Ronchi

All-Rounder

Darren Sammy, Colin De Grandhomme, Yusuf Pathan, Adil Rashid, Joe Denly, Samit Patel, Wayne Parnell

==========

Base Price ₹50,00,000

Batsman

Reeza Hendricks, Mandeep Hardev Singh, Anton Devcich, Upul Tharanga, Karun Nair, Billy Stanlake, Joe Burns, Manoj Tiwary, Saurabh Tiwary, Tamim Khan, Aiden Markram, Faiz Fazal, Abhinav Mukund, Venugopal Rao, Dean Elgar, Najibullah Zadran

Bowler

Ben Laughlin, Ronsford Beaton, Dhawal Kulkarni, Sandeep Sharma, Gulbadin Naib, Ish Sodhi, Duanne Olivier, Michael Beer, Sachithra Senanayaka, Dawlat Zadran, Aaron Phangiso, Beuran Hendricks, Lakshan Sandakan, Aravind Sreenath, Barinder Singh Sran, Sean Abbott, Ben Wheeler, Kesrick Williams, Lungisani Ngidi, Ashoke Dinda, Praveen Kumar, Mujeeb Zadran, Pragyan Ojha, Jhye Richardson, Rahul Sharma, Joel Paris, Varun Aaron, Parvinder Awana, Munaf Patel, Scott Boland, Dushmantha Chameera, Shannon Gabriel, Akila Dhananjaya, Keshav Maharaj, Dane Paterson, Ben Hilfenhaus, Seth Rance, Fawad Ahmed, Tabrez Shamsi, Neil Wagner, Shapoor Zadran, Abhimanyu Mithun, Sheldon Cottrell, Matt Henry, Nuwan Kulasekara, Suranga Lakmal, Manpreet Gony, Pankaj Singh, Sudeep Tyagi

Wicket Keeper

Glenn Phillips, Denesh Ramdin, Niroshan Dickwella, Kusal Janith Perera, Nicolas Pooran, Alex Carey, Chadwick Walton, Tom Latham, M Shahzad Mohammadi, Shafiqullah Shafaq, Ambati Rayudu

All-Rounder

Gurkeerat Singh Mann, John Hastings, Sikandar Butt, Graeme Cremer, Rishi Dhawan, Solomon Mire, Ryan McLaren, Parveez Rasool, Shabbir Rahaman, Vernon Philander, Abul Raju, Paul Stirling, Malcolm Waller, Dilshan Munaweera, Thisara Perera, Pawan Negi, Seekkuge Prasanna, Ashton Agar, Mohammad Nabi, Rahmat Shah Zarmatai, Dwaine Pretorius, David Wiese, Asela Gunarathna, Dhananjaya Silva, Andile Phehlukwayo, Jonathan Carter, Rovman Powell, Mitchell Santner, Jayant Yadav, Irfan Pathan, Marlon Samuels, Andre Fletcher, Stuart Binny, Hilton Cartwright, Dasun Shanka, Dawid Malan, Farhaan Behardien, Jon-Jon Trevor Smuts, Ashley Nurse, Scott Kuggeleijn, Robbie Frylinck, Wiaan Mulder, Colin Munro, Vaughn Van Jaarsveld, Rayad Emrit, Mohammad Mahmudullah, Isuru Udana

==========

Base Price ₹40,00,000

Batsman

Tom Cooper

Bowler

Thomas Helm, Mitchell Swepson, Shahbaz Nadeem, T Natarajan

Wicket Keeper

Ishan Kishan

All-Rounder

Rajat Bhatia, Kevon Cooper, Vijay Shankar, Krunal Pandya, Deepak Hooda, Michael Neser, Jofra Archer

==========

Base Price ₹30,00,000

Batsman

Suryakumar Yadav, Christiaan Jonker, Vishnu Solanki, Alex Ross, Daniel Hughes

Bowler

Iqbal Abdullah, Siddarth Kaul, Anureet Singh, Pradeep Sangwan, Basil Thampi, Gurvinder Singh, Aniket Choudhary, Ankit Singh Rajpoot

Wicket Keeper

Ben McDermott

All-Rounder

Cameron Delport, Javon Searless, Roshon Primus

==========

Base Price ₹20,00,000

Batsman

Manprit Juneja, Mayank Siddana, Armaan Jaffer, Shivam Chauhan, Sachin Baby, Prithvi Shaw, Ankeet Bawane, Siddhesh Dinesh Lad, Apoorv Vijay Wankhade, Virat Singh, Marcus Harris, Ricky Bhui, Rassie Van der Dussen, Rajesh Bishnoi Sr, Paras Dogra, D.B Ravi Teja, Paul Valthaty, Amandeep Khare, Rinku Singh, Tanmay Agarwal, Ankit Lamba, Sarthak Ranjan, Priyank Panchal, Pratham Singh, Ishank Jaggi, Manjot Kalra, Anmolpreet Singh, Ruturaj Gaikwad, Sharad Lumba, Shubham Singh Rohilla, Himanshu Rana, Akshath Reddy, R Samarth, Mohammed Asaduddin, Abhinav Manohar, Rohan Marwaha, Rajat Patidar, Yash Sehrawat, Ravi Chauhan, Samit Gohil, Ramandeep Singh, Abhijeet Tomar, Jiwanjot Singh Chauhan, Abhimanyu Easwaran, Chirag Gandhi, Shubman Gill, Rahul Tripathi, Manan Vohra, Mayank Agarwal, Unmukt Chand

Bowler

Syed Khaleel Ahmed, Nidheesh M D Dinesan, Junior Dala, Karan Thakur, Anurag Verma, Lizaad Williams, Tanveer Ulhaq, Kushang Patel, Shelly Shaurya, A. Aswin Crist, Aaron Summers, Royston Dias, Kartik Tyagi, Tejas Singh Baroka, Abu Nechim Ahmed, Rahul Shukla, Bhargav Bhatt, Shadab Jakati, Sarabjit Ladda, Pravin Tambe, Ben Dwarshuis, Ajit Chahal, Deepak Chaudhary, Pradeep Dadhe, Domnic Joseph Muthuswamy, Babasafi Pathan, Monu Singh, Pradeep Thippeswamy, Kuldip Yadav, Krishnappa Gowtham, K.C. Cariappa, Mihir Hirwani, Akshay Wakhare, Manjeetkumar Chaudhary, Kulwant Khejroliya, Lukman Iqbal Meriwala, Navdeep Saini, Vikas Tokas, Yuvraj Chudasama, Rahul Chahar, Ronit More, Veer Pratap Singh, Varun Khanna, Pawan Suyal, Sandeep Warrier, J Suchith, Ashish Hooda, R. Sai Kishore, Rahil S Shah, Harmeet Singh, Ishwar Chaudhary, Parikshit Valsangkar, Avesh Khan, Amit Mishra, Cheepurupalli Stephen, Rajwinder Singh, Shubek Gill, Vinay Choudhary, Mayank Markande, Zahir Khan Pakteen, Ankit Soni, Lalit Yadav, Pardeep Sahu, Chama Milind, Umar Nazir Mir, Yarra Raj, Oshane Thomas, Athisayaraj V, Zeeshan Ansari, Siddharth Desai, Jiyas K, Alexandar Rama Doss, Nathu Singh, M. Ashwin, Shivil Kaushik, Baltej Dhanda, Armaan Jain, Mohsin Khan, Mukesh Kumar Singh, Arshdeep Singh, Rishi Arothe, Asif K M, Ravi Kiran Majeti, Ishan Porel, Aditya Thakare, Sandeep Lamichhane, Subodh Bhati, Mohan Prasath, Abhishek Sakuja, Javed Khan, Ashok Sandhu, Tushar Deshpande, Sayan Ghosh, Jaskaran Singh, Prasidh Krishna, Rajneesh Gurbani

Wicket Keeper

Ankush Bains, C.M. Gautam, Aditya Tare, N Jagadeesan, Nikhil Shankar Naik, Smit Patel, K.B Arun Karthik, Kona Srikar Bharat, Shreevats Goswami, Mahesh Rawat, Gitansh Khera, Jitesh Sharma, Vishnu Vinod, Sheldon Jackson, Kedar Devdhar, Prashant Chopra, Anuj Rawat, Harvik Desai, Anmol Malhotra, Dhruv Raval, Rohith Ravikumar, Mohammad Nazim Siddiqui, Mayank Sidhu, Sandeep Kumar Tomar, Sadiq Hassan Kirmani, Jaskaranvir Singh Sohi, Abhishek Gupta, Hamza Tariq, Rahul Yadav, Kyle Mayers

All-Rounder

Vyshak Vijay Kumar, Jaydev Shah, Shashank Singh, Manzoor Dar, Aman Khan, Diwesh Pathania, Shamss Mulani, Salman Nizar, Dafedar, Khizar Anwar, Mandeep Singh, Shubham Ranjane, Sidhant Dobal, Vinod Kumar C.V., Thomas Kaber, Midhun S, Akhil Arvind Herwadkar, Shamar Springer, Ashok Menaria, Jack Wildermuth, Odean Smith, Yogesh Nagar, Milind Kumar, Shubham Agrawal, Akshdeep Nath, Yomahesh Kumar, Vivek Singh, Mohammed Bilal, Arun Chaprana, Rajat Paliwal, Abhimanyu Rana, Sarang Rawat, Fabid, Farook Ahmed, Arjun Sharma, Shreyas Gopal, Akash Sudan, Sandeep Bavanaka, Karan Kaila, Aryaman Vikram Birla, Gaurav Gambir, Ankit Kaushik, Patrick Kruger, Sohraab Dhaliwal, Aditya Sarvate, Amish Sidhu, Shadley Van Schalkwyk, Vignesh Moorthy, Arjun Nair, Kanishk Seth, Shivam Dubey, Hanuma Vihari, Puneet Datey, Ninad Rathva, Siddhant Sharma, Mrinank Singh, Manan Sharma, Chintan Gaja, Amit Mishra, Jalaj Saxena, Bipul Sharma, Shreekant Wagh, Syed, Mehdi Hasan, Harshal Patel, Sumit Ruikar, Ashish Reddy, Kuldeep Hooda, Shaurya Sanandia, Vaibhav Rawal, Pankaj Jaswal, Anustup Majumdar, Dhruv Shorey, Kshitiz Sharma, Swapnil Singh, Himmat Singh, Writtick Chatterjee, Chris Green, Ryan Ninan, Rohan Prem, Rahul Tewatia, Puneed Datey, R. Sanjay Yadav, Imtiaz Ahmed, Atit Sheth, Dinesh Salunkhe, Pavan Deshpande, Shivam Sharma, Chaitanya Bishnoi, Indrajith Baba, Jatin Saxena, Shivam Mavi, Sagar Trivedi, Amit Verma, Akash Parkar, Nitish Rana, Anukul Roy, Akash Bhandari, Pratyush Singh, Ankit Sharma, Anirudha Ashok Joshi, Saurabh Kumar, Praveen Dubey, Kunal Chandela, Aamir Gani, Pulkit Narang, Riyan Parag, Karanveer Singh, Sumeet Verma, Cameron Gannon, Akshay Karnewar, Tajinder Dhillon, Govinda Poddar, Rajesh Sharma, Deepak Chahar, Antony Dhas, Kishore Pramod Kamath, Nikhil Gangta, Jay Gokul Bista, Sumanth Bodapati, Mahipal Lomror, Deepak Punia, Mayank Dagar, Kamlesh Nagarkoti, Darcy Short, Baba Aparajith, Abhishek Sharma, Milind Tandon.

4 notes

Text

Mukesh Ambani, Ratan Tata and Ritesh Agrawal light diyas on PM Narendra Modi's appeal to fight coronavirus: Mukesh Ambani, Ratan Tata, Kiran Mazumdar-Shaw and Ritesh Agrawal also lit diyas to show solidarity in the fight against corona; the lights at Antilia went off at exactly 9 pm

On PM Narendra Modi's appeal, people across the country lit diyas at 9 pm on Sunday night and expressed their resolve in the fight against corona. Alongside ordinary people, veteran businessman Mukesh Ambani was also seen lighting diyas with his wife Nita Ambani. Ratan Tata, chairman of the Tata Group, who donated the large sum of Rs 1,500 crore during this crisis, also lit diyas to show solidarity against corona…

0 notes

Text

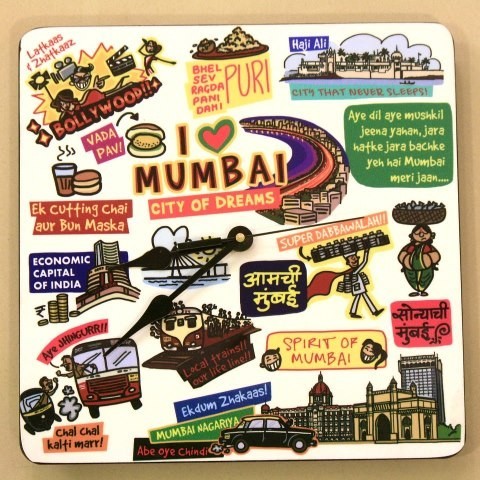

Fantastic facts about Mumbai

Mumbai (known as Bombay until 1995) is a natural harbour on the west coast of India and the capital of Maharashtra state. It is India's largest city, its financial capital, and one of the world's most populous cities. With approximately 13 million people, the city is the second most populous in the world. Together with the neighbouring cities of Navi Mumbai and Thane, it forms the world's fourth-largest urban agglomeration, with around 19.1 million people.

Interesting facts about Mumbai that make you proud:

1. Rail transport in India

Railways were introduced in India by the British. The Red Hill Railway, the country's first train, ran from Red Hills to Chintadripet bridge in Madras in 1837. It was built by Arthur Cotton and was used primarily to transport laterite stone for road-building work in Madras. The first passenger train in India ran between Bombay (Bori Bunder) and Thane on 16 April 1853. The 14-carriage train was hauled by three steam locomotives: Sahib, Sindh and Sultan. It carried 400 people and ran on a line of 34 kilometres (21 mi) built and operated by the GIPR. Today Mumbai's rail network is known as the lifeline of Mumbai.

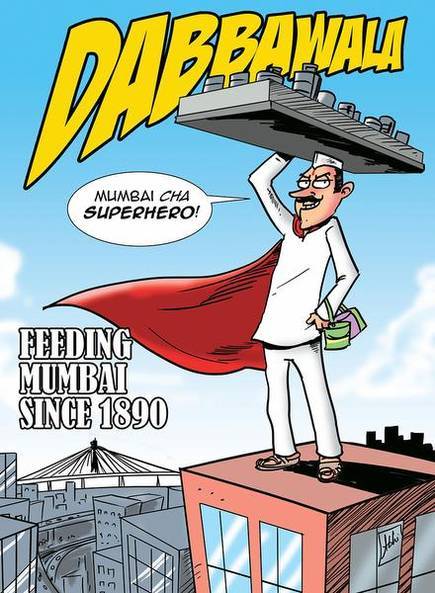

2. Mumbai’s dabbawalas

The dabbawalas constitute a lunchbox delivery and return system that delivers hot lunches from homes and restaurants to people at work in India, especially in Mumbai. In 1890, in Bombay, Mahadeo Havaji Bachche started a lunch delivery service with a hundred men. In 1930, he organised the dabbawalas into a group. Later, in 1956, a charitable trust was registered under the name Nutan Mumbai Tiffin Box Suppliers Trust. The lunchboxes are picked up in the late morning, delivered mostly by bicycle and railway train, and returned empty in the afternoon. The dabbawalas are also used by meal suppliers in Mumbai, who pay them to ferry lunchboxes with ready-cooked meals from central kitchens to customers and back, which has increased demand from all quarters. Currently, at least 5,000 dabbawalas are involved in the business. They form part of an organised cooperative business that also offers job security. The charge currently ranges from Rs 600 to Rs 1,000 depending on the distance. A large number of dabbawalas come from the Varkari community of Maharashtra. Dr. Pawan Girdharilal Agrawal is the CEO of the renowned Mumbai Dabbawalas.

3. India’s first bus service

India’s first motor bus route started on July 15, 1926, and ran between Afghan Church and Crawford Market, Mumbai. The bus fare for the journey was 4 annas, i.e. 25 paise.

4. Bombay to Mumbai

The city's official name changed from Bombay to Mumbai when the regional political party Shiv Sena came to power in 1995. The Shiv Sena saw Bombay as a legacy of British colonialism and wanted the city's name to reflect its Maratha heritage, hence renaming it to pay tribute to the goddess Mumba Devi.

5. India’s first airport

India's first civil aviation airport, now known as the Juhu aerodrome, is located just ahead of the Nanavati Hospital on one of suburban Mumbai's arterial roads. When it first opened its gates in 1928, it was known as the Vile Parle Flying Club. The first civil flight that took off from this airport was fittingly piloted by J R D Tata, the father of Indian civil aviation. The flight, part of the Tata Aviation Service, the forerunner to Tata Airlines and Air India, departed from the Drigh Road airstrip, Karachi, on October 15, 1932. After a stopover at Ahmedabad, it landed at the Juhu Aerodrome to complete India's first civil flight. Juhu Aerodrome served as the city's sole airport for 26 years. Today, the aerodrome, which has one runway, hosts a flying club and a heliport. It is occasionally used for shoots.

6. Most expensive houses

Antilia is a private home in South Mumbai, India. It is the residence of Indian billionaire Mukesh Ambani and his family, who moved into it in 2012. At 27 storeys, 173 metres (570 feet) tall, and 400,000 square feet, and with amenities such as three helipads, a 168-car garage, a ballroom, an 80-seat theatre, terrace gardens, a spa, and a temple, the skyscraper-mansion is one of the world's largest and most elaborate private homes. As of November 2014, it was valued at $2 billion, making it the world's most valuable private residence and the second most valuable residential property after the British crown property Buckingham Palace. Its controversial design and ostentatious use by a single family have made it infamous in India and beyond, drawing severe criticism in the architectural press and mockery in popular media. It is located on Altamont Road, Cumballa Hill in Mumbai.

7. India’s 1st car owner lived in Mumbai

The first person to own a car in India was Sir Jamshedji Tata, the founder of the Tata empire. It was in 1901 that an Indian first bought a car.

8. Bandra-Worli Sea Link

The famous Bandra-Worli Sea Link in Mumbai is also known as the Rajiv Gandhi Sea Link. At 5,600 m, it is the fourth-longest bridge above water in India. It is a cable-stayed bridge with pre-stressed concrete-steel viaducts on either side that links Bandra in the Western Suburbs of Mumbai with Worli in South Mumbai. The bridge is a part of the proposed Western Freeway that will link the Western Suburbs to Nariman Point in Mumbai's main business district. The sea link reduces travel time between Bandra and Worli during peak hours from 20–30 minutes to 10 minutes. As of October 2009, BWSL had an average daily traffic of around 37,500 vehicles.

9. Global city (Alpha city)

Mumbai is the commercial capital of India and has evolved into a global financial hub. From being an ancient fishing community and a colonial centre of trade, Mumbai has become South Asia's largest city and home of the world's most prolific film industry. Mumbai is not just well connected within India; it is also globally connected. It is a global city, or Alpha world city, that acts as a primary connection point for the global economic network.

10. Country’s 1st palace with electricity

Taj Hotels is a chain of luxury hotels run by the Indian Hotels Company Limited. Founded in 1903 by Jamshedji Tata, the founder of the Tata Group, the company is part of the Tata Group, one of India's largest business conglomerates. The Taj Mahal Palace Hotel was the first hotel in India to have electricity. It was also the first in the country to have American fans, German elevators, and Turkish baths.

0 notes

Text

Citizenship Bill: Protesters defy curfew in Assam; Army conducts flag march

Election News

People defied curfew in Guwahati on Thursday morning to protest against the Citizenship (Amendment) Bill as the situation remained tense throughout Assam, with the Army conducting a flag march in the city.

Guwahati, the epicentre of anti-CAB protests, was placed under indefinite curfew last night while the Army was called in at four places and Assam Rifles personnel were deployed in Tripura on Wednesday as the two northeastern states plunged into chaos over the hugely emotive Citizenship (Amendment) Bill or CAB.

The All Assam Students' Union has called for a protest at 11 am in Guwahati.

The Krishak Mukti Sangram Samiti appealed to people to come out on the roads for a peaceful protest. People were on the roads during the night despite the curfew.

The Army conducted a flag march in the city on Thursday morning. Vehicles were stranded in various cities of Assam due to heavy blockades. Half a dozen vehicles were burnt. The houses of BJP and AGP leaders were attacked in various parts of the state. "Curfew is on till further orders. We are monitoring the situation very closely. So far the situation is under control," Mukesh Agrawal told PTI. An RSS official told PTI that the organisation's offices in Dibrugarh, Sadiya and Tezpur were attacked. The BJP office in Tezpur was also attacked.

Late in the night, curfew was also imposed in Dibrugarh for an indefinite period as protesters targeted the houses of Assam Chief Minister Sarbananda Sonowal and Union minister Rameswar Teli in the district. As tens of thousands of anti-CAB protesters descended on the streets of Assam on Wednesday, clashing with police and plunging the state into chaos of a magnitude unseen since the violent six-year movement by students that ended with the signing of the Assam Accord, Guwahati was placed under curfew...

0 notes

Photo

With the blessings of Baba Balaji, yesterday I completed one more year of my journey. This year was very dynamic: along with the happiness of our #little #champ, it brought some sorrowful moments, like my father's operation and a leg fracture. However, with the blessings of Baba and the love and grace of seniors and friends, I was able to conquer the negative and enjoy the positive. Special thanks to my sister, my life partner Payal Anshul Singhal, my mentor Mukesh Agrawal (at Mehandipur Balaji Temple) https://www.instagram.com/p/B0R3HIrnyvk/?igshid=vwzgywjlcw3h

0 notes

Text

Top 10 Cited Papers Software Engineering & Applications Research Articles From 2017 Issue

http://www.airccse.org/journal/ijsea/vol8.html

International Journal of Software Engineering & Applications (IJSEA)

ISSN : 0975 - 9018 ( Online ); 0976-2221 ( Print )

http://www.airccse.org/journal/ijsea/ijsea.html

Citation Count – 04

Factors on Software Effort Estimation

Simon WU Iok Kuan

Faculty of Business Administration, University of Macao, Macau, China

ABSTRACT

Software effort estimation is an important process in the system development life cycle, as inaccurate estimates by project designers may affect the success of software projects. In the past few decades, various effort prediction models have been proposed by academicians and practitioners. Traditional estimation techniques include Lines of Code (LOC), the Function Point Analysis (FPA) method and Mark II Function Points (Mark II FP), which have proven unsatisfactory for predicting the effort of all types of software. In this study, the author proposes a regression model to predict the effort required to design small and medium scale application software. To develop such a model, the author used 60 completed software projects developed by a software company in Macau. From the projects, the author extracted factors and applied them to a regression model. A model predicting software effort with an accuracy of MMRE = 8% was constructed.
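The abstract reports accuracy as MMRE without defining it. Assuming the usual definition, the Mean Magnitude of Relative Error (the average of |actual − predicted| / actual over all projects), a minimal Python sketch of the metric might look like the following. The function name and the effort figures are hypothetical and only illustrate the arithmetic; they are not taken from the paper.

```python
from statistics import mean


def mmre(actual, predicted):
    """Mean Magnitude of Relative Error: mean(|a - p| / a) over all projects.

    Assumes the common textbook definition; the paper may compute it differently.
    """
    if not actual or len(actual) != len(predicted):
        raise ValueError("need two non-empty sequences of equal length")
    if any(a <= 0 for a in actual):
        raise ValueError("actual effort values must be positive")
    return mean(abs(a - p) / a for a, p in zip(actual, predicted))


# Hypothetical effort figures (e.g. person-hours) for three projects.
actual_effort = [100.0, 200.0, 400.0]
predicted_effort = [110.0, 180.0, 400.0]

score = mmre(actual_effort, predicted_effort)
print(f"MMRE = {score:.1%}")
```

Under this definition a lower value is better, and MMRE = 8% would mean the model's predictions are off by 8% of the actual effort on average.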

KEYWORDS

Effort Estimation, Software Projects, Software Applications, System Development Life Cycle.

For More Details : http://aircconline.com/ijsea/V8N1/8117ijsea03.pdf

Volume Link: http://www.airccse.org/journal/ijsea/vol8.html

Citation Count – 03

A Brief Program Robustness Survey

Ayman M. Abdalla, Mohammad M. Abdallah and Mosa I. Salah

Faculty of Science and I.T, Al-Zaytoonah University of Jordan, Amman, Jordan

ABSTRACT

Program Robustness is now more important than before, because of the role software programs play in our life. Many papers defined it, measured it, and put it into context. In this paper, we explore the different definitions of program robustness and different types of techniques used to achieve or measure it. There are many papers about robustness. We chose the papers that clearly discuss program or software robustness. These papers stated that program (or software) robustness indicates the absence of ungraceful failures. There are different types of techniques used to create or measure a robust program. However, there is still a wide space for research in this area.

KEYWORDS

Robustness, Robustness measurement, Dependability, Correctness.

For More Details: http://aircconline.com/ijsea/V8N1/8117ijsea01.pdf

Volume Link: http://www.airccse.org/journal/ijsea/vol8.html

Citation Count – 02

Culture Effect on Requirements Elicitation Practice in Developing Countries

Ayman Sadig¹ and Abd-El-Kader Sahraoui²

¹Ahfad University for Women and SUST, Khartoum, Sudan

²LAAS-CNRS, Université de Toulouse, CNRS, U2J, Toulouse, France

ABSTRACT

Requirement elicitation is a very important step in developing any new application. This paper examines the effect of culture on requirement elicitation in developing countries.

This research looks at the requirement elicitation (RE) process in 10 different parts of the world, including the Arab world, India, China, Africa and South America. The focus is on how culture affects RE and gives every place its own RE practice. The data were collected through surveys and direct interviews. The results show an astonishing effect of culture on RE.

The conclusion is that culture deeply affects which technique is chosen for requirement elicitation. RE in Thailand will be very different from RE in the Arab world. For example, in Thailand respect for the leader is critical and any questioning of a manager's methods will create a problem, while in the Arab world the decision tree is a favourite RE technique because visuals are liked much more than documents.

KEYWORDS

Culture impact, requirement elicitation.

For More Details: http://aircconline.com/ijsea/V8N1/8117ijsea05.pdf

Volume Link: http://www.airccse.org/journal/ijsea/vol8.html

REFERENCES

[1] Lee, S.hyun. & Kim Mi Na, (2008) “This is my paper”, ABC Transactions on ECE, Vol. 10, No. 5, pp120-122.

[2] Gizem, Aksahya & Ayese, Ozcan (2009) Coomunications & Networks, Network Books, ABC Publishers.

[3] Sadiq .M and Mohd .S (2009), Article in an International journal, “Elicitationand Prioritization of Software Requirements”. Internation Journal of RecentTrends in Engineering, Vol.2, No.3, pp. 138-142.

[4] Bergey, John, et al. Why Reengineering Projects Fail. No. CMU/SEI-99-TR-010. CARNEGIE-MELLON UNIV PITTSBURGH PA SOFTWARE ENGINEERING INST, 1999.

[5] Goguen, J. A., Linde, C. (1993): Techniques for Requirements Elicitation, International Symposium on Requirements Engineering, pp. 152-164, January 4-6, San Diego, CA.

[6] Robertson, S., Robertson, J. (1999) Mastering the Requirements Process, Addison Wesley: Great Britain.

[7] Iqbal, Tabbassum, and Mohammad Suaib. "Requirement Elicitation Technique:-A Review Paper." Int. J. Comput. Math. Sci 3.9 (2014).

[8] HOFSTEDE G (1980) Culture’s Consequences: International Differences in Work- Related Values. Sage, Newbury Park, CA.

[9] SCHEIN EH (1985) Organisational Culture and Leadership. Jossey-Bass, San Francisco, CA.

[10] LYTLE AL, BRETT JM, BARSNESS ZI, TINSLEY CH and JANSSENS M (1999) A paradigm for confirmatory cross-cultural research in organizational behavior. Research in Organizational Behavior 17, 167–214, https:// lirias.kuleuven.be/handle/123456789/31199.

[11] Kluckhohn, K (1954) Culture and behavior. In G. Lindsey (ED.) handbook of social psychology

[12] TRIANDIS HC (1995) Individualism & Collectivism. Westview Press, Boulder, CO.

[13] ROKEACH M (1973) The Nature of Human Values. Free Press, New York.

[14] KARAHANNA E, EVARISTO JR and SRITE M (2005) Levels of culture and individual behavior: an integrative perspective. Journal of Global Information Management 13(2), 1–20

[15] Fernández, Daniel Méndez, and Stefan Wagner. "Naming the pain in requirements engineering: A design for a global family of surveys and first results from Germany." Information and Software Technology 57 (2015): 616-643.

[16] Davis, Gordon B. "Strategies for information requirements determination."IBM systems journal 21.1 (1982): 4-30.

[17] Rouibah, Kamel. "Social usage of instant messaging by individuals outside the workplace in Kuwait: A structural equation model." Information Technology & People 21.1 (2008): 34-68.

[18] Byrd, Terry Anthony, Kathy L. Cossick, and Robert W. Zmud. "A synthesis of research on requirements analysis and knowledge acquisition techniques."MIS quarterly (1992): 117-138.

[19] Arnott, David, Waraporn Jirachiefpattana, and Peter O'Donnell. "Executive information systems development in an emerging economy." Decision Support Systems 42.4 (2007): 2078-2084.

[20] Kontio, Jyrki, Laura Lehtola, and Johanna Bragge. "Using the focus group method in software engineering: obtaining practitioner and user experiences."Empirical Software Engineering, 2004. ISESE'04. Proceedings. 2004 International Symposium on. IEEE, 2004.

[21] Agarwal, Ritu, Atish P. Sinha, and Mohan Tanniru. "The role of prior experience and task characteristics in object-oriented modeling: an empirical study." International journal of human-computer studies 45.6 (1996): 639-667.

[22] Liu, Lin, et al. "Understanding chinese characteristics of requirements engineering." 2009 17th IEEE International Requirements Engineering Conference. IEEE, 2009.

[23] Rouibah, Kamel, and Sulaiman Al-Rafee. "Requirement engineering elicitation methods: A Kuwaiti empirical study about familiarity, usage and perceived value." Information management & computer security 17.3 (2009): 192-217.

[24] Liu, Lin, et al. "Understanding chinese characteristics of requirements engineering." 2009 17th IEEE International Requirements Engineering Conference. IEEE, 2009.

[25] Fernández, Daniel Méndez, and Stefan Wagner. "Naming the pain in requirements engineering: A design for a global family of surveys and first results from Germany." Information and Software Technology 57 (2015): 616-643.

[26] Winschiers-Theophilus, Heike, et al. "Determining requirements within an indigenous knowledge system of African rural communities." Proceedings of the 2010 Annual Research Conference of the South African Institute of Computer Scientists and Information Technologists. ACM, 2010.

[27] Mursu, Anja, et al. "Information systems development in a developing country: Theoretical analysis of special requirements in Nigeria and Africa." System Sciences, 2000. Proceedings of the 33rd Annual Hawaii International Conference on. IEEE, 2000.

[28] Anwar, Fares, and Rozilawati Razali. "A practical guide to requirements elicitation techniques selection-An empirical study." Middle-East Journal of Scientific Research 11.8 (2012): 1059-1067.

[29] HELLIWELL J, LAYARD R and SACHS J (2013) World happiness report 2013, United Nations.

[30] HOFSTEDE G and HOFSTEDE GJ (2005) Cultures and Organisations: Software of the Mind. McGraw-Hill, New York.

[31] Thanasankit, Theerasak, and Brian Corbitt. "Cultural context and its impact on requirements elicitation in Thailand." EJISDC: The Electronic Journal on Information Systems in Developing Countries 1 (2000): 2.

[32] Komin, S. (1990). Psychology of the Thai People: Values and Behavioral Patterns. Bangkok, Thailand: NIDA (National Institute of Development Administration).

[33] Khan, Shadab, Aruna B. Dulloo, and Meghna Verma. "Systematic review of requirement elicitation techniques." (2014).

[34] Sadig, Ayman. "Requirements Engineering Practice in Developing Countries: Elicitation and Traceability Processes." Proceedings of the International Conference on Software Engineering Research and Practice (SERP). The Steering Committee of The World Congress in Computer Science, Computer Engineering and Applied Computing (WorldComp), 2016.

[35] Wiegers, Karl, and Joy Beatty. Software requirements. Pearson Education, 2013.

Citation Count – 02

A User Story Quality Measurement Model for Reducing Agile Software Development Risk

Sen-Tarng Lai

Department of Information Technology and Management, Shih Chien University, Taipei, Taiwan

ABSTRACT

In the age of mobile communications, IT environments and technologies change rapidly. Requirements change is a challenge that every software project must face; if its impact can be overcome, software development risks can be effectively reduced. Agile software development uses the Iterative and Incremental Development (IID) process and focuses on workable software and client communication, which makes it well suited to handling requirements change during the development process. In agile development, user stories are important documents for client communication and serve as acceptance test criteria. However, agile development pays little attention to formal requirements analysis and artifact traceability, which creates potential risks for software change management. This paper analyzes and collects the critical quality factors of user stories and proposes the User Story Quality Measurement (USQM) model. By applying the USQM model, the requirements quality of agile development can be enhanced and the risks of requirements change can be reduced.

KEYWORDS

Agile development, user story, software project, quality measurement, USQM.

For More Details : http://aircconline.com/ijsea/V8N2/8217ijsea05.pdf

Volume Link : http://www.airccse.org/journal/ijsea/vol8.html

REFERENCES

[1] S. A. Bohner and R. S. Arnold, 1996. Software Change Impact Analysis, IEEE Computer Society Press, CA, pp. 1-26.

[2] S. A. Bohner, 2002. Software Change Impacts: An Evolving Perspective, Proc. of IEEE Intl Conf. on Software Maintenance, pp. 263-271.

[3] B. W. Boehm, 1991. Software risk management: Principles and practices, IEEE Software, 8(1), 1991, pp. 32-41.

[4] A. Cockburn, 2002. Agile Software Development, Addison-Wesley.

[5] M. Cohn and D. Ford, 2003. Introducing an Agile Process to an Organization, IEEE Computer, vol. 36 no. 6 pp. 74-78, June 2003.

[6] V. Szalvay, “An Introduction to Agile Software Development,” Danube Technologies Inc., 2004.

[7] C. Larman and V. R. Basili, 2003. Iterative and Incremental Development: A Brief History, IEEE Computer, June 2003.

[8] C. Larman, 2004. Agile and Iterative Development: A Manager's Guide, Boston: Addison Wesley.

[9] S. R. Schach, 2010. Object-Oriented Software Engineering, McGraw-Hill Companies.

[10] J. L. Eveleens and C. Verhoef, 2010. “The Rise and Fall of the Chaos Report Figures,” IEEE Software, vol. 27, no. 1, pp. 30-36.

[11] The Standish group, 2009. “New Standish Group report shows more project failing and less successful projects,” April 23, 2009.

(http://www.standishgroup.com/newsroom/chaos_2009.php)

[12] B. W. Boehm, 1989. “Tutorial: Software Risk Management,” IEEE CS Press, Los Alamitos, Calif.

[13] R. Fairley, 1994. “Risk management for Software Projects,” IEEE Software, vol. 11, no. 3, pp. 57-67.

[14] R. S. Pressman, 2010. Software Engineering: A Practitioner’s Approach, McGraw-Hill, New York, 2010.

[15] David S. Frankel, 2003. Model Driven Architecture: Applying MDA to Enterprise Computing, John Wiley & Sons.

[16] Mike Cohn, 2004. User Stories Applied: For Agile Software Development, Addison-Wesley Professional; 1 edition.

[17] Ron Jeffries, 2001. “Essential XP: Card, Conversation, Confirmation,” Posted on: August 30, 2001. (http://xprogramming.com/index.php)

[18] Bill Wake, 2003. “INVEST in Good Stories, and SMART Tasks,” Posted on August 17, 2003, (http://xp123.com/articles/invest-in-good-stories-and-smart-tasks/)

[19] Bill Wake, 2012. “Independent Stories in the INVEST Model,” Posted on: February 8, 2012, (http://xp123.com/articles/independent-stories-in-the-invest-model/)

[20] T. J. McCabe, 1976. A Complexity Measure, IEEE Trans. On Software Eng., Vol. 2, No 4, pp.308-320.

[21] M. H. Halstead, 1977, Elements of Software Science, North-Holland, New York.

[22] Ivar Jacobson and Pan-Wei Ng, 2004, Aspect-Oriented Software Development with Use Cases, Addison-Wesley Boston, 2004.

[23] Ralph Young, 2001, Effective Requirements Practices, Addison-Wesley, Boston, 2001.

[24] S. D. Conte, H. E. Dunsmore and V. Y. Shen, 1986. Software Engineering Metrics and Models, Benjamin/Cummings, Menlo Park.

[25] N. E. Fenton, 1991, Software Metrics - A Rigorous Approach, Chapman & Hall.

[26] D. Galin, 2004. Software Quality Assurance – From theory to implementation, Pearson Education Limited, England.

Citation Count – 19

A Survey of Verification Tools Based on Hoare Logic

Nahid A. Ali

College of Computer Science & Information Technology, Sudan University of Science & Technology, Khartoum, Sudan

ABSTRACT

The quality and correctness of software are of great concern in computer systems. Formal verification tools can be used to provide confidence that a software design is free from certain errors. This paper surveys tools that perform automatic software verification to detect programming errors or prove their absence. The two tools considered are both based on Hoare logic: KeY-Hoare and the Hoare Advanced Homework Assistant (HAHA). A detailed example using these tools is provided, underlining their differences when applied to practical problems.
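As a rough illustration of what a Hoare-logic check is about, here is a minimal Python sketch. It is not taken from the paper, from KeY-Hoare, or from HAHA. A Hoare triple {P} S {Q} states that if precondition P holds before statement S runs, then postcondition Q holds afterwards. The helper `holds_on_samples`, the statement `increment`, and the sample states are hypothetical names chosen for this example. The sketch only tests a triple on a finite set of states, whereas a verification tool aims to prove it for all states.

```python
from typing import Callable, Dict, Iterable

State = Dict[str, int]


def holds_on_samples(
    pre: Callable[[State], bool],
    stmt: Callable[[State], State],
    post: Callable[[State], bool],
    states: Iterable[State],
) -> bool:
    """Check the triple {pre} stmt {post} on the given sample states only.

    This is testing, not proof: a True result says nothing about
    states that were not sampled.
    """
    for state in states:
        if pre(state):
            # Run the statement on a copy so the sample is not mutated.
            result = stmt(dict(state))
            if not post(result):
                return False
    return True


def increment(state: State) -> State:
    """The statement S:  x := x + 1."""
    state["x"] = state["x"] + 1
    return state


# Hypothetical sample states with x ranging from -5 to 5.
samples = [{"x": value} for value in range(-5, 6)]

# {x >= 0} x := x + 1 {x > 0} holds on every sample.
valid = holds_on_samples(
    lambda s: s["x"] >= 0, increment, lambda s: s["x"] > 0, samples
)

# {true} x := x + 1 {x > 0} fails, for example when x = -5.
invalid = holds_on_samples(
    lambda s: True, increment, lambda s: s["x"] > 0, samples
)
```

Weakening the precondition from `x >= 0` to `true` breaks the triple, which is the kind of error a Hoare-logic tool is meant to expose.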

KEYWORDS

Hoare Logic, Software Verification, Formal Verification Tools, KeY-Hoare Tool, Hoare Advanced Homework Assistant Tool

For More Details : http://aircconline.com/ijsea/V8N2/8217ijsea06.pdf

Volume Link : http://www.airccse.org/journal/ijsea/vol8.html

REFERENCES

[1] D'silva, Vijay and Kroening, Daniel and Weissenbacher, Georg, "A survey of automated techniques for formal software verification." IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, vol. 27(7), pp. 1165-1178, 2008.

[2] C. A. R. Hoare, "An Axiomatic Basis for Computer Programming," Communications of the ACM, vol. 12, no. 10, pp. 576 - 580, 1969.

[3] R. W. Floyd, "Assigning Meanings to Programs," Mathematical Aspects of Computer Science, vol. 19, no. 1, pp. 19-32, 1967.

[4] Mili, Ali; Tchier, Fairouz, Software Testing: Concepts and Operations, Hoboken, New Jersey: John Wiley & Sons, 2015.

[5] "Isabelle," [Online]. Available: http://www.cl.cam.ac.uk/research/hvg/Isabelle/.

[6] S. Owre, J. Rushby and N. Shankar, "PVS: A Prototype Verification System," in 11th International Conference on Automated Deduction (CADE), vol. 607, Springer-Verlag, 1992, pp. 748-752.

[7] "Symbolic Model Verifier," [Online]. Available: http://www.cs.cmu.edu/~modelcheck/smv.html.

[8] J. Winkler, "The Frege Program Prover FPP," in Internationales Wissenschaftliches Kolloquium, vol. 42, 1997, pp. 116-121.

[9] D. Crocker, "Perfect Developer: A Tool for Object-Oriented Formal Specification and Refinement," Tools Exhibition Notes at Formal Methods Europe, 2003.

[10] Hähnle, Reiner; Bubel, Richard, "A Hoare-Style Calculus with Explicit State Updates," Formal Methods in Computer Science Education (FORMED), pp. 49-60, 2008.

[11] "Hoare Advanced Homework Assistant (HAHA)," [Online]. Available: http://haha.mimuw.edu.pl/.

[12] T. Sznuk and A. Schubert, "Tool Support for Teaching Hoare Logic," in Software Engineering and Formal Methods, Springer, 2014, pp. 332-346.

[13] "Key-Hoare System," [Online]. Available: http://www.key-project.org/download/hoare/.

[14] L. de Moura and N. Bjørner, "Z3: An efficient SMT solver," in Tools and Algorithms for the Construction and Analysis of Systems, Springer, 2008, pp. 337-340.

[15] C. Barrett, C. L. Conway, M. Deters, L. Hadarean, D. Jovanović, T. King, A. Reynolds and C. Tinelli, "CVC4," in Computer Aided Verification, Springer, 2011, pp. 171-177.

[16] Feinerer, Ingo and Salzer, Gernot, "A comparison of tools for teaching formal software verification," Formal Aspects of Computing, vol. 21(3), pp. 293–301, 2009.

Citation Count – 18

The Impact of Software Complexity on Cost and Quality - A Comparative Analysis Between Open Source and Proprietary Software

Anh Nguyen-Duc

IDI, NTNU, Norway

ABSTRACT

Early prediction of software quality is important for better software planning and control. In early development phases, design complexity metrics are considered useful indicators of software testing effort and some quality attributes. Although many studies investigate the relationship between design complexity and cost and quality, it is unclear what we have learned beyond the scope of individual studies. This paper presents a systematic review of the influence of software complexity metrics on quality attributes. We aggregated Spearman correlation coefficients from 59 different data sets from 57 primary studies using a tailored meta-analysis approach. We found that fault proneness and maintainability are the most frequently investigated attributes. The Chidamber & Kemerer metric suite is the most frequently used, but not all of its metrics are good indicators of quality attributes. Moreover, the impact of these metrics does not differ between proprietary and open source projects. The results provide some implications for building quality models across project types.
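The abstract does not spell out its tailored meta-analysis approach, so the following is only a sketch of one common way to aggregate correlation coefficients across data sets: fixed-effect pooling with the Fisher z-transformation. The function name `pooled_correlation`, the `(r, n)` input format, and the example numbers are assumptions made for illustration and are not taken from the paper. Each coefficient is transformed with `atanh`, weighted by `n - 3`, averaged, and transformed back with `tanh`.

```python
import math
from typing import List, Tuple


def pooled_correlation(studies: List[Tuple[float, int]]) -> float:
    """Pool correlation coefficients with a Fisher z fixed-effect model.

    Each study is a pair (r, n): a correlation coefficient and the
    sample size it was computed from. Weights are n - 3, the inverse
    of the usual variance approximation for Fisher z. For Spearman
    coefficients the variance is often scaled by a constant, which
    cancels out in the weighted mean.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for r, n in studies:
        if n <= 3:
            raise ValueError("each study needs a sample size above 3")
        if not -1.0 < r < 1.0:
            raise ValueError("r must lie strictly between -1 and 1")
        z = math.atanh(r)      # Fisher z-transformation
        weight = n - 3
        weighted_sum += weight * z
        total_weight += weight
    # Back-transform the weighted mean z to the correlation scale.
    return math.tanh(weighted_sum / total_weight)


# Hypothetical (r, n) pairs for one complexity metric and one quality attribute.
example = [(0.20, 30), (0.60, 30)]
pooled = pooled_correlation(example)
```

The pooled value always lies between the smallest and largest input coefficient, and larger data sets pull it toward their own estimate.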

KEYWORDS

Design Complexity, Software Engineering, Open source software, Systematic literature review

For More Details : http://aircconline.com/ijsea/V8N2/8217ijsea02.pdf

Volume Link : http://www.airccse.org/journal/ijsea/vol8.html

REFERENCES

[1] T. DeMarco, “A metric of estimation quality,” Proceedings of the May 16-19, 1983, national computer conference, Anaheim, California: ACM, 1983, pp. 753-756.