Photo

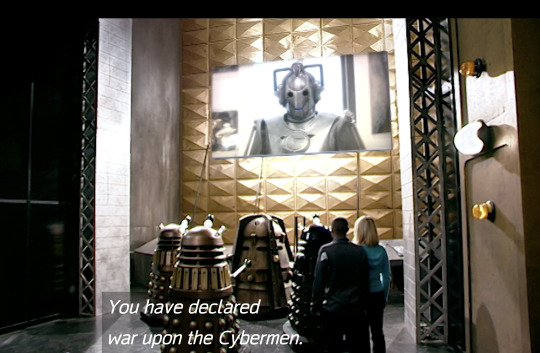

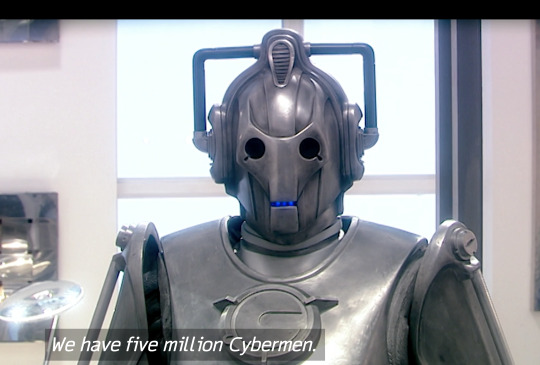

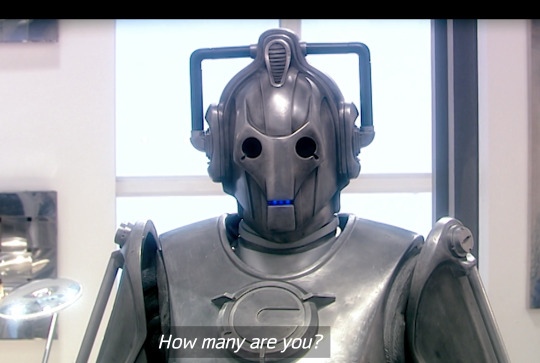

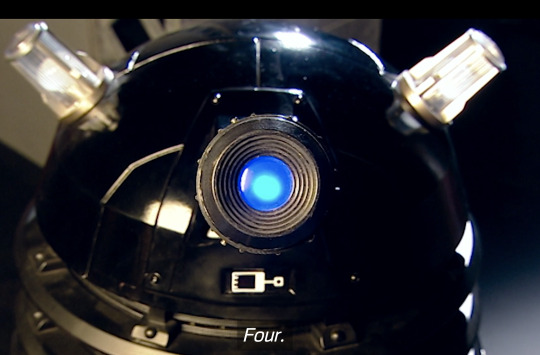

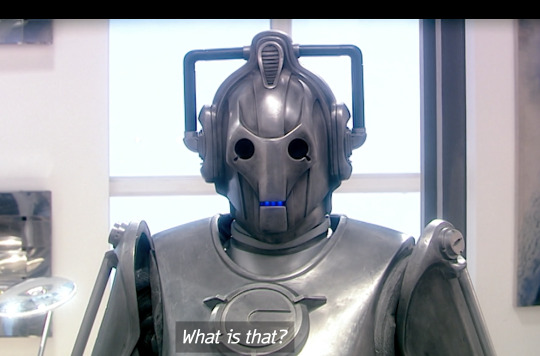

possibly one of the most hilarious exchanges on doctor who

148K notes


Text

blackkittycrew

"what? what is your problem?? always in here showing off your four fangs >:("

10K notes


Text

Well you should’ve named yourself something else then

22K notes


Text

applying for internships and i think the job market may be irreparable

23K notes


Text

There was a paper in 2016 exploring how an ML model was differentiating between wolves and huskies with really high accuracy. They found that, for whatever reason, the model seemed to *really* like looking at snow in images, as in that's what it paid attention to most.

Then it hit them. *oh.*

*all the images of wolves in our dataset have snow in the background*

*this little shit figured it was easier to just learn how to detect snow than to actually learn the difference between huskies and wolves. because snow = wolf*

Shit like this happens *so often*. People think training models is like this exact coding programmer hackerman thing when it's more like corralling a bunch of sentient crabs that can do calculus, but like, at the end of the day they're still fucking crabs.
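For anyone who wants to watch the crabs do it, here is a minimal toy sketch of the same failure. It is not the paper's model or method, just a tiny hand-rolled logistic regression on made-up numbers. Everything in it is an assumption for illustration: the `animal` feature stands in for a weak, noisy "actually looks like a wolf" signal, `snow` is 1 when there is snow in the background, and the names `make_data`, `train`, and `accuracy` are hypothetical helpers.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def make_data(n, snow_matches_wolf, rng):
    """Return (features, labels). Label 1 = wolf, 0 = husky (toy data)."""
    xs, ys = [], []
    for i in range(n):
        y = i % 2  # alternate classes so the data is balanced
        # Weak, noisy "real" signal about the animal itself.
        animal = (0.5 if y else -0.5) + rng.gauss(0.0, 1.0)
        # Background: in training, snow appears exactly when the label is wolf.
        snow = float(y) if snow_matches_wolf else float(1 - y)
        xs.append((animal, snow))
        ys.append(y)
    return xs, ys

def train(xs, ys, epochs=1000, lr=0.5):
    """Full-batch gradient descent on the logistic loss."""
    w_animal = w_snow = bias = 0.0
    n = len(xs)
    for _ in range(epochs):
        g_animal = g_snow = g_bias = 0.0
        for (animal, snow), y in zip(xs, ys):
            err = sigmoid(w_animal * animal + w_snow * snow + bias) - y
            g_animal += err * animal
            g_snow += err * snow
            g_bias += err
        w_animal -= lr * g_animal / n
        w_snow -= lr * g_snow / n
        bias -= lr * g_bias / n
    return w_animal, w_snow, bias

def accuracy(weights, xs, ys):
    w_animal, w_snow, bias = weights
    correct = 0
    for (animal, snow), y in zip(xs, ys):
        pred = 1 if w_animal * animal + w_snow * snow + bias > 0 else 0
        correct += (pred == y)
    return correct / len(xs)

rng = random.Random(0)
train_x, train_y = make_data(200, snow_matches_wolf=True, rng=rng)
# Shifted test set: huskies in the snow, wolves on grass.
shift_x, shift_y = make_data(200, snow_matches_wolf=False, rng=rng)

weights = train(train_x, train_y)
w_animal, w_snow, bias = weights
train_acc = accuracy(weights, train_x, train_y)
shifted_acc = accuracy(weights, shift_x, shift_y)

print("weight on animal feature:", round(w_animal, 2))
print("weight on snow feature:  ", round(w_snow, 2))
print("accuracy with snow = wolf:", train_acc)
print("accuracy with snow swapped:", shifted_acc)
```

In this toy setup the model should lean much harder on `snow` than on `animal`, look great on the training data, and fall apart once the backgrounds are swapped. Same crabs, smaller tank.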

25K notes



Text

*bleeding to death because the paramedics can’t break the windows to get me out of my stupid fucking truck* heha well at least i dont have to worry about the friggin Zombie Apocalypse… awesomesauce 😎

31K notes


Text

She's just trying to pay her student loans

(Source)

22K notes




Text

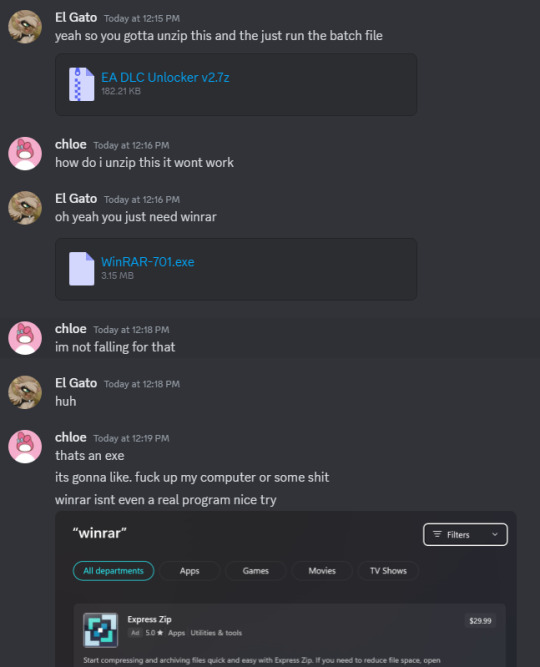

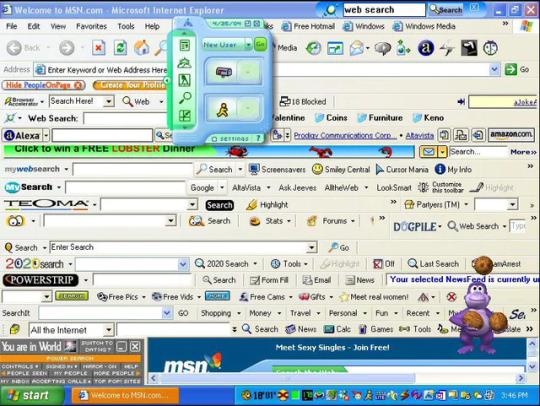

I'm sorry, but I adamantly disagree.

This is very good behavior.

They didn't recognize the file extension.

They didn't recognize the EXE program.

And so they refused to open them.

That is excellent internet security hygiene.

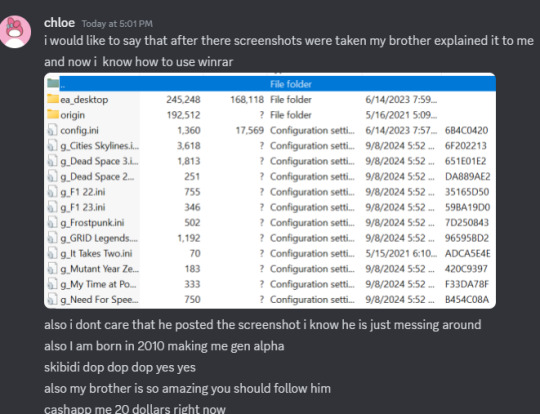

They went to a trusted person (their brother) and verified WinRAR was legit and then proceeded to unpack the files.

How is this not *encouraging* for gen alpha? Is it just because they didn't know what WinRAR was? Who cares? I'm just proud they were being careful.

Unlike my boomer uncle who once installed so many spam search toolbars that there was no screen real estate left to show webpages.

16K notes
