#apache pyspark training


How to Read and Write Data in PySpark

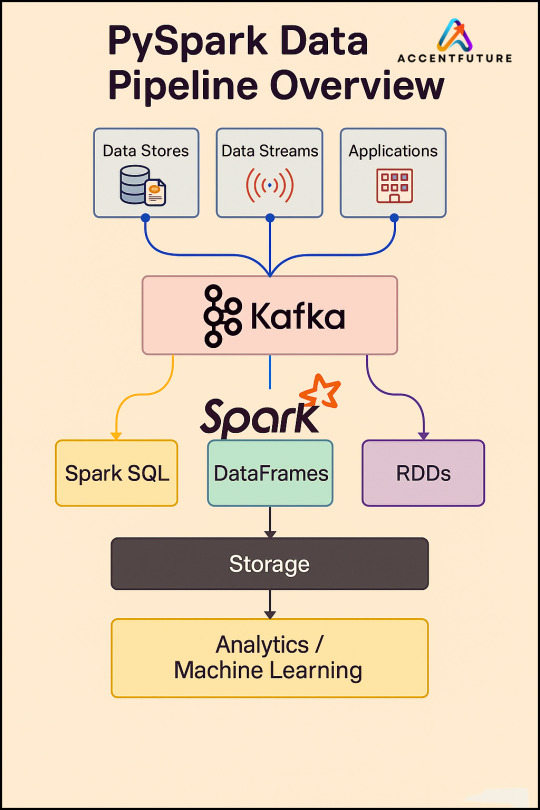

PySpark is the Python API for Apache Spark, the engine behind many large-scale data processing workloads. One of the most important PySpark skills is reading and writing data in formats such as CSV, JSON, and Parquet.

In this blog, you’ll learn how to:

Initialize a Spark session

Read data from various formats

Write data to different formats

See expected outputs for each operation

Let’s dive in step-by-step.

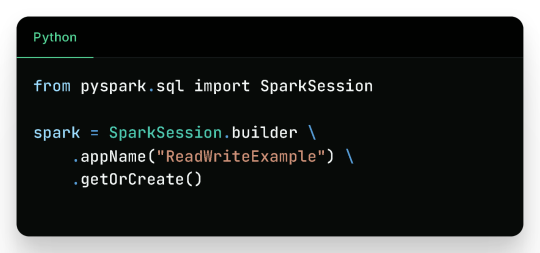

Getting Started

Before reading or writing, start by initializing a SparkSession.

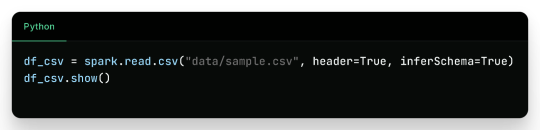
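The original code for this step was an image that did not survive extraction. As a minimal sketch, assuming PySpark is installed, a session can be created as follows. The function name `build_session`, the app name, and the `local[*]` master are illustrative placeholders, not values from the post.

```python
def build_session(app_name="ReadWriteDemo", master="local[*]"):
    """Create a SparkSession, or reuse the one already running.

    app_name and master are illustrative placeholders.
    """
    # Imported lazily so this module can be loaded without PySpark installed.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .appName(app_name)   # name shown in the Spark UI
        .master(master)      # local[*] = run locally on all available cores
        .getOrCreate()       # returns the existing session if there is one
    )
```

Calling `spark = build_session()` once at the top of a script is usually enough; on a managed cluster the `master` setting is typically supplied by the platform instead.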

Reading Data in PySpark

1. Reading CSV Files

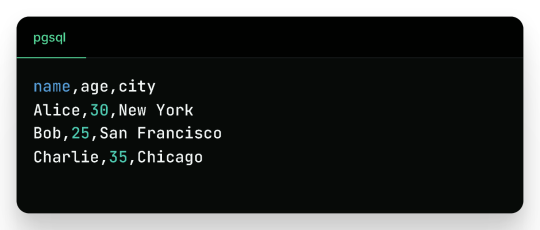

Sample CSV Data (sample.csv):

Output:

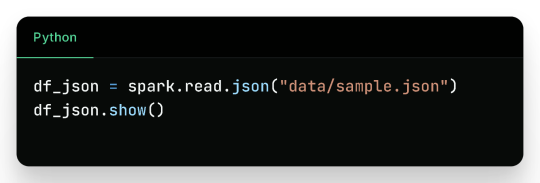

2. Reading JSON Files

Sample JSON (sample.json):

Output:

3. Reading Parquet Files

Parquet is optimized for performance and often used in big data pipelines.

Assuming the parquet file has similar content:

Output:

4. Reading from a Database (JDBC)

Sample Table employees in MySQL:

Output:

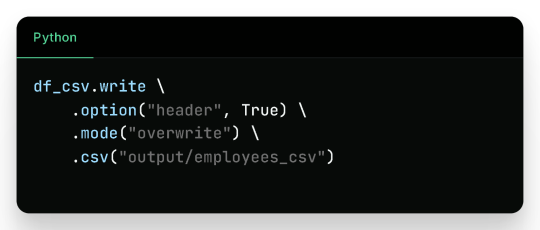
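The read examples in this section were also images. The sketch below shows one common way to express the four reads, assuming an existing SparkSession is passed in as `spark`. The helper names, the default MySQL driver class, and every path, URL, and credential you would pass in are assumptions for illustration, not the post's original code.

```python
def read_csv(spark, path):
    # header=True uses the first line as column names;
    # inferSchema=True makes Spark scan the data to guess column types.
    return spark.read.csv(path, header=True, inferSchema=True)


def read_json(spark, path, multiline=False):
    # Spark expects one JSON object per line by default; set multiline=True
    # for a single pretty-printed document or a JSON array.
    return spark.read.option("multiLine", multiline).json(path)


def read_parquet(spark, path):
    # Parquet files carry their own schema, so no options are needed.
    return spark.read.parquet(path)


def read_jdbc(spark, url, table, user, password,
              driver="com.mysql.cj.jdbc.Driver"):
    # The JDBC driver JAR must be on Spark's classpath for this to work.
    return (
        spark.read.format("jdbc")
        .option("url", url)
        .option("dbtable", table)
        .option("user", user)
        .option("password", password)
        .option("driver", driver)
        .load()
    )
```

For example, `read_csv(spark, "sample.csv").show()` would print the rows, and `read_jdbc(spark, "jdbc:mysql://localhost:3306/mydb", "employees", user, password)` would load the table, where the host and database name are hypothetical.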

Writing Data in PySpark

1. Writing to CSV

Output Files (folder output/employees_csv/):

Sample content:

2. Writing to JSON

Sample JSON output (employees_json/part-*.json):

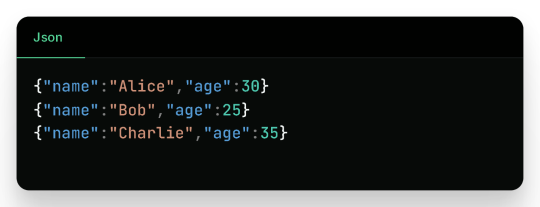

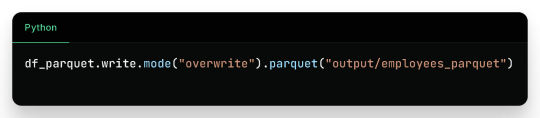

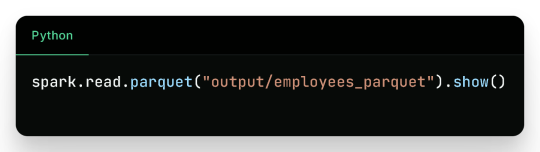

3. Writing to Parquet

Output:

Binary Parquet files saved inside output/employees_parquet/

You can verify the contents by reading it again:

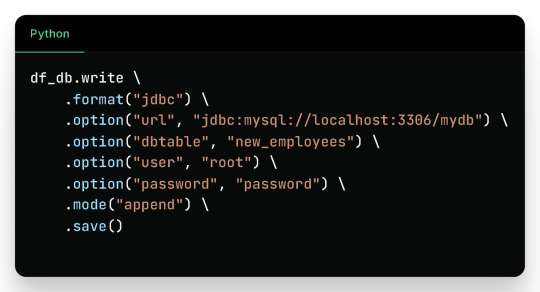

4. Writing to a Database

Check the new_employees table in your database — it should now include all the records.
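As with the reads, the write snippets were lost with the images. A hedged sketch of the four writes, assuming `df` is an existing DataFrame; the helper names, default modes, and driver class are illustrative assumptions rather than the post's code.

```python
def write_csv(df, path, mode="overwrite"):
    # Spark writes a folder of part-* files, not a single CSV file.
    df.write.mode(mode).option("header", True).csv(path)


def write_json(df, path, mode="overwrite"):
    # Output is JSON Lines: one object per line in each part file.
    df.write.mode(mode).json(path)


def write_parquet(df, path, mode="overwrite"):
    # Parquet stores the schema alongside the compressed columnar data.
    df.write.mode(mode).parquet(path)


def write_jdbc(df, url, table, user, password, mode="append",
               driver="com.mysql.cj.jdbc.Driver"):
    # The JDBC driver JAR must be on Spark's classpath for this to work.
    (
        df.write.format("jdbc")
        .option("url", url)
        .option("dbtable", table)
        .option("user", user)
        .option("password", password)
        .option("driver", driver)
        .mode(mode)
        .save()
    )
```

For example, `write_parquet(df, "output/employees_parquet")` followed by `spark.read.parquet("output/employees_parquet").show()` is a quick round-trip check.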

Write Modes in PySpark

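| Mode | Description |
| --- | --- |
| overwrite | Replaces any existing data at the target |
| append | Adds the new rows to the existing data |
| ignore | Silently skips the write if the target already exists |
| error / errorifexists | (default) Raises an error if the target already exists |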
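To make the four modes concrete, here is a toy model in plain Python. It is not Spark code: `apply_write_mode` is a hypothetical helper that treats the target as a list of rows, or `None` when nothing has been written yet, and returns what the target would contain afterwards.

```python
def apply_write_mode(existing, new_rows, mode="errorifexists"):
    """Toy model of Spark save modes.

    existing: rows already at the target, or None if the target does not exist.
    new_rows: rows being written.
    Returns the rows the target holds after the write.
    """
    if mode not in ("overwrite", "append", "ignore", "error", "errorifexists"):
        raise ValueError(f"unknown mode: {mode}")
    if existing is None:
        # Nothing there yet: every mode simply writes the new rows.
        return list(new_rows)
    if mode == "overwrite":
        return list(new_rows)
    if mode == "append":
        return list(existing) + list(new_rows)
    if mode == "ignore":
        # Existing data wins; the write is silently skipped.
        return list(existing)
    # "error" and "errorifexists" are the default behaviour.
    raise FileExistsError("target already exists")
```

In PySpark itself the mode is chosen on the writer, for example `df.write.mode("append").parquet(path)`.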

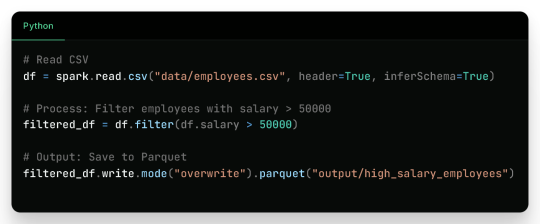

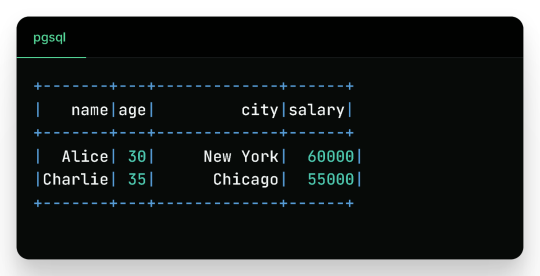

Real-Life Use Case

Filtered Output:

Wrap-Up

Reading and writing data in PySpark is efficient, scalable, and easy once you understand the syntax and options. This blog covered:

Reading from CSV, JSON, Parquet, and JDBC

Writing to CSV, JSON, Parquet, and back to Databases

Example outputs for every format

Best practices for production use

Keep experimenting and building real-world data pipelines — and you’ll be a PySpark pro in no time!

🚀Enroll Now: https://www.accentfuture.com/enquiry-form/

📞Call Us: +91-9640001789


🌍Visit Us: AccentFuture

#apache pyspark training#best pyspark course#best pyspark training#pyspark course online#pyspark online classes#pyspark training#pyspark training online


Navigating the Data Landscape: A Deep Dive into ScholarNest's Corporate Training

In the ever-evolving realm of data, mastering the intricacies of data engineering and PySpark is paramount for professionals seeking a competitive edge. ScholarNest's Corporate Training offers an immersive experience, providing a deep dive into the dynamic world of data engineering and PySpark.

Unlocking Data Engineering Excellence

Embark on a journey to become a proficient data engineer with ScholarNest's specialized courses. Our Data Engineering Certification program is meticulously crafted to equip you with the skills needed to design, build, and maintain scalable data systems. From understanding data architecture to implementing robust solutions, our curriculum covers the entire spectrum of data engineering.

Pioneering PySpark Proficiency

Navigate the complexities of data processing with PySpark, a powerful Apache Spark library. ScholarNest's PySpark course, hailed as one of the best online, caters to both beginners and advanced learners. Explore the full potential of PySpark through hands-on projects, gaining practical insights that can be applied directly in real-world scenarios.

Azure Databricks Mastery

As part of our commitment to offering the best, our courses delve into Azure Databricks learning. Azure Databricks, seamlessly integrated with Azure services, is a pivotal tool in the modern data landscape. ScholarNest ensures that you not only understand its functionalities but also leverage it effectively to solve complex data challenges.

Tailored for Corporate Success

ScholarNest's Corporate Training goes beyond generic courses. We tailor our programs to meet the specific needs of corporate environments, ensuring that the skills acquired align with industry demands. Whether you are aiming for data engineering excellence or mastering PySpark, our courses provide a roadmap for success.

Why Choose ScholarNest?

Best PySpark Course Online: Our PySpark courses are recognized for their quality and depth.

Expert Instructors: Learn from industry professionals with hands-on experience.

Comprehensive Curriculum: Covering everything from fundamentals to advanced techniques.

Real-world Application: Practical projects and case studies for hands-on experience.

Flexibility: Choose courses that suit your level, from beginner to advanced.

Navigate the data landscape with confidence through ScholarNest's Corporate Training. Enrol now to embark on a learning journey that not only enhances your skills but also propels your career forward in the rapidly evolving field of data engineering and PySpark.

#data engineering#pyspark#databricks#azure data engineer training#apache spark#databricks cloud#big data#dataanalytics#data engineer#pyspark course#databricks course training#pyspark training


Can I use Python for big data analysis?

Yes, Python is a powerful tool for big data analysis. Here’s how Python handles large-scale data analysis:

Libraries for Big Data:

Pandas:

While primarily designed for datasets that fit in memory, Pandas can handle larger datasets when paired with tools like Dask or when memory usage is optimized (for example, by reading files in chunks or using smaller dtypes).

NumPy:

Provides support for large, multi-dimensional arrays and matrices, along with a collection of mathematical functions to operate on these arrays.

Dask:

A parallel computing library that extends Pandas and NumPy to larger datasets. It allows you to scale Python code from a single machine to a distributed cluster.

Distributed Computing:

PySpark:

The Python API for Apache Spark, which is designed for large-scale data processing. PySpark can handle big data by distributing tasks across a cluster of machines, making it suitable for large datasets and complex computations.

Dask:

Also provides distributed computing capabilities, allowing you to perform parallel computations on large datasets across multiple cores or nodes.

Data Storage and Access:

HDF5:

A file format and set of tools for managing complex data. Python’s h5py library provides an interface to read and write HDF5 files, which are suitable for large datasets.

Databases:

Python can interface with various big data databases like Apache Cassandra, MongoDB, and SQL-based systems. Libraries such as SQLAlchemy facilitate connections to relational databases.

Data Visualization:

Matplotlib, Seaborn, and Plotly: These libraries allow you to create visualizations of large datasets, though for extremely large datasets, tools designed for distributed environments might be more appropriate.

Machine Learning:

Scikit-learn:

While not specifically designed for big data, Scikit-learn can be used with tools like Dask to handle larger datasets.

TensorFlow and PyTorch:

These frameworks support large-scale machine learning and can be integrated with big data processing tools for training and deploying models on large datasets.

Python’s ecosystem includes a variety of tools and libraries that make it well-suited for big data analysis, providing flexibility and scalability to handle large volumes of data.

Drop us a message to learn more!


Data Science Tutorial for 2025: Tools, Trends, and Techniques

Data science continues to be one of the most dynamic and high-impact fields in technology, with new tools and methodologies evolving rapidly. As we enter 2025, data science is more than just crunching numbers—it's about building intelligent systems, automating decision-making, and unlocking insights from complex data at scale.

Whether you're a beginner or a working professional looking to sharpen your skills, this tutorial will guide you through the essential tools, the latest trends, and the most effective techniques shaping data science in 2025.

What is Data Science?

At its core, data science is the interdisciplinary field that combines statistics, computer science, and domain expertise to extract meaningful insights from structured and unstructured data. It involves collecting data, cleaning and processing it, analyzing patterns, and building predictive or explanatory models.

Data scientists are problem-solvers, storytellers, and innovators. Their work influences business strategies, public policy, healthcare solutions, and even climate models.

Essential Tools for Data Science in 2025

The data science toolkit has matured significantly, with tools becoming more powerful, user-friendly, and integrated with AI. Here are the must-know tools for 2025:

1. Python 3.12+

Python remains the most widely used language in data science due to its simplicity and vast ecosystem. In 2025, the latest Python versions offer faster performance and better support for concurrency—making large-scale data operations smoother.

Popular Libraries:

Pandas: For data manipulation

NumPy: For numerical computing

Matplotlib / Seaborn / Plotly: For data visualization

Scikit-learn: For traditional machine learning

XGBoost / LightGBM: For gradient boosting models

2. JupyterLab

The evolution of the classic Jupyter Notebook, JupyterLab, is now the default environment for exploratory data analysis, allowing a modular, tabbed interface with support for terminals, text editors, and rich output.

3. Apache Spark with PySpark

Handling massive datasets? PySpark (Python's interface to Apache Spark) is ideal for distributed data processing across clusters. It is offered as a managed service on cloud platforms like Databricks and connects to warehouses like Snowflake through Spark connectors.

4. Cloud Platforms (AWS, Azure, Google Cloud)

In 2025, most data science workloads run on the cloud. Services like Amazon SageMaker, Azure Machine Learning, and Google Vertex AI simplify model training, deployment, and monitoring.

5. AutoML & No-Code Tools

Tools like DataRobot, Google AutoML, and H2O.ai now offer drag-and-drop model building and optimization. These are powerful for non-coders and help accelerate workflows for pros.

Top Data Science Trends in 2025

1. Generative AI for Data Science

With the rise of large language models (LLMs), generative AI now assists data scientists in code generation, data exploration, and feature engineering. Tools like OpenAI's ChatGPT and GitHub Copilot help automate repetitive tasks.

2. Data-Centric AI

Rather than obsessing over model architecture, 2025’s best practices focus on improving the quality of data—through labeling, augmentation, and domain understanding. Clean data beats complex models.

3. MLOps Maturity

MLOps—machine learning operations—is no longer optional. In 2025, companies treat ML models like software, with versioning, monitoring, CI/CD pipelines, and reproducibility built-in from the start.

4. Explainable AI (XAI)

As AI impacts sensitive areas like finance and healthcare, transparency is crucial. Tools like SHAP, LIME, and InterpretML help data scientists explain model predictions to stakeholders and regulators.

5. Edge Data Science

With IoT devices and on-device AI becoming the norm, edge computing allows models to run in real-time on smartphones, sensors, and drones—opening new use cases from agriculture to autonomous vehicles.

Core Techniques Every Data Scientist Should Know in 2025

Whether you’re starting out or upskilling, mastering these foundational techniques is critical:

1. Data Wrangling

Before any analysis begins, data must be cleaned and reshaped. Techniques include:

Handling missing values

Normalization and standardization

Encoding categorical variables

Time series transformation
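The first three techniques above can be sketched in plain Python with no external libraries. The function names are illustrative; in practice Pandas or Scikit-learn provide equivalents, and filling gaps with the mean is only one of several imputation strategies.

```python
from statistics import mean, pstdev


def fill_missing(values):
    """Replace None with the mean of the values that are present."""
    present = [v for v in values if v is not None]
    fill = mean(present)
    return [fill if v is None else v for v in values]


def min_max(values):
    """Normalization: rescale values into the range [0, 1]."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [0.0 if span == 0 else (v - lo) / span for v in values]


def standardize(values):
    """Standardization: shift to mean 0 and scale to unit (population) std."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]


def one_hot(labels):
    """Encode each label as a 0/1 vector over the sorted distinct categories."""
    categories = sorted(set(labels))
    return [[1 if label == c else 0 for c in categories] for label in labels]
```

For instance, `min_max([10, 20, 30])` gives `[0.0, 0.5, 1.0]` and `one_hot(["b", "a", "b"])` gives `[[0, 1], [1, 0], [0, 1]]`.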

2. Exploratory Data Analysis (EDA)

EDA is about understanding your dataset through visualization and summary statistics. Use histograms, scatter plots, correlation heatmaps, and boxplots to uncover trends and outliers.

3. Machine Learning Basics

Classification (e.g., predicting if a customer will churn)

Regression (e.g., predicting house prices)

Clustering (e.g., customer segmentation)

Dimensionality Reduction (e.g., PCA, t-SNE for visualization)

4. Deep Learning (Optional but Useful)

If you're working with images, text, or audio, deep learning with TensorFlow, PyTorch, or Keras can be invaluable. Hugging Face’s transformers make it easier than ever to work with large models.

5. Model Evaluation

Learn how to assess model performance with:

Accuracy, Precision, Recall, F1 Score

ROC curve and AUC

Cross-validation

Confusion Matrix
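As a small worked example, the confusion-matrix counts and the four headline metrics for a binary classifier can be computed by hand. This is a plain-Python sketch with illustrative function names; Scikit-learn's metrics module offers production versions of the same calculations.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary problem."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1; 0.0 when a ratio is undefined."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}
```

With `y_true = [1, 0, 1, 1, 0, 0]` and `y_pred = [1, 0, 0, 1, 1, 0]` the counts are 2 true positives, 1 false positive, 1 false negative, and 2 true negatives, so all four metrics come out to 2/3.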

Final Thoughts

As we move deeper into 2025, data science continues to be an exciting blend of math, coding, and real-world impact. Whether you're analyzing customer behavior, improving healthcare diagnostics, or predicting financial markets, your toolkit and mindset will be your most valuable assets.

Start by learning the fundamentals, keep experimenting with new tools, and stay updated with emerging trends. The best data scientists aren’t just great with code—they’re lifelong learners who turn data into decisions.


Best Azure Data Engineer Course In Ameerpet | Azure Data

Understanding Delta Lake in Databricks

Introduction

In modern data engineering, managing large volumes of data efficiently while ensuring reliability and performance is a key challenge. Delta Lake, an open-source storage layer developed by Databricks, is designed to address it. It enhances Apache Spark's capabilities by providing ACID transactions, schema enforcement, and time travel, making data lakes more reliable and efficient.

What is Delta Lake?

Delta Lake is an optimized storage layer built on Apache Parquet that brings the reliability of a data warehouse to big data processing. It eliminates the limitations of traditional data lakes by adding ACID transactions, scalable metadata handling, and schema evolution. Delta Lake integrates seamlessly with Azure Databricks, Apache Spark, and other cloud-based data solutions, making it a preferred choice for modern data engineering pipelines.

Key Features of Delta Lake

1. ACID Transactions

One of the biggest challenges in traditional data lakes is data inconsistency due to concurrent read/write operations. Delta Lake supports ACID (Atomicity, Consistency, Isolation, Durability) transactions, ensuring reliable data updates without corruption. It uses Optimistic Concurrency Control (OCC) to handle multiple transactions simultaneously.
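The idea behind optimistic concurrency control can be shown with a single-process toy. This is not Delta Lake's implementation, which records commits in a transaction log and can let non-conflicting writers both succeed; `VersionedTable` and `ConflictError` are hypothetical names used only for illustration.

```python
class ConflictError(Exception):
    """Raised when another writer committed first."""


class VersionedTable:
    """Toy table that only accepts commits based on the latest version."""

    def __init__(self):
        self.version = 0
        self.rows = []

    def begin(self):
        # A writer remembers which version it read; no lock is taken.
        return self.version

    def commit(self, read_version, new_rows):
        # Validate at commit time: fail if the table moved on in the meantime.
        if read_version != self.version:
            raise ConflictError(
                f"read version {read_version}, table is at {self.version}"
            )
        self.rows = self.rows + list(new_rows)
        self.version += 1
        return self.version
```

Two writers that both call `begin()` at version 0 illustrate the behaviour: the first `commit` succeeds and moves the table to version 1, the second raises `ConflictError` and has to re-read and retry.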

2. Schema Evolution and Enforcement

Delta Lake enforces schema validation to prevent accidental data corruption. If a schema mismatch occurs, Delta Lake will reject the data, ensuring consistency. Additionally, it supports schema evolution, allowing modifications without affecting existing data.

3. Time Travel and Data Versioning

Delta Lake maintains historical versions of data using log-based versioning. This allows users to perform time travel queries, enabling them to revert to previous states of data. This is particularly useful for auditing, rollback, and debugging purposes.
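A time travel read might look like the sketch below, assuming a SparkSession with the Delta Lake package available is passed in as `spark`. The helper names and any table path you pass are placeholders.

```python
def read_version(spark, path, version):
    # versionAsOf selects a specific commit number of the Delta table.
    return (
        spark.read.format("delta")
        .option("versionAsOf", version)
        .load(path)
    )


def read_as_of(spark, path, timestamp):
    # timestampAsOf selects the table state at a point in time,
    # given as a timestamp string such as "2024-01-01".
    return (
        spark.read.format("delta")
        .option("timestampAsOf", timestamp)
        .load(path)
    )
```

Comparing `read_version(spark, path, 0)` with the current table is a simple way to audit what changed since the first commit.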

4. Scalable Metadata Handling

Traditional data lakes struggle with metadata scalability, especially when handling billions of files. Delta Lake optimizes metadata storage and retrieval, making queries faster and more efficient.

5. Performance Optimizations (Data Skipping and Caching)

Delta Lake improves query performance through data skipping and caching mechanisms. Data skipping allows queries to read only relevant data instead of scanning the entire dataset, reducing processing time. Caching improves speed by storing frequently accessed data in memory.
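Data skipping is easiest to see with per-file minimum and maximum statistics. The sketch below is a plain-Python illustration of the principle, not Delta Lake's code; the file names and the stats layout are hypothetical.

```python
def files_to_scan(file_stats, lo, hi):
    """Return the files whose [min, max] range can overlap the query [lo, hi].

    file_stats: list of dicts with "path", "min", and "max" for one column.
    Files whose range lies entirely outside the query range are skipped.
    """
    return [
        f["path"]
        for f in file_stats
        if f["max"] >= lo and f["min"] <= hi
    ]


# Hypothetical statistics for an order_id column spread over three files.
stats = [
    {"path": "part-0.parquet", "min": 1, "max": 100},
    {"path": "part-1.parquet", "min": 101, "max": 200},
    {"path": "part-2.parquet", "min": 201, "max": 300},
]

# A filter such as order_id BETWEEN 150 AND 180 only needs the middle file.
selected = files_to_scan(stats, 150, 180)
```

Skipping works best when data is laid out so that each file covers a narrow range of the filtered column; if every file spans the full range, nothing can be skipped.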

6. Unified Batch and Streaming Processing

Delta Lake enables seamless integration of batch and real-time streaming workloads. Structured Streaming in Spark can write and read from Delta tables in real-time, ensuring low-latency updates and enabling use cases such as fraud detection and log analytics.

How Does Delta Lake Work in Databricks?

Delta Lake is tightly integrated with Azure Databricks and Apache Spark, making it easy to use within data pipelines. Below is a basic workflow of how Delta Lake operates:

Data Ingestion: Data is ingested into Delta tables from multiple sources (Kafka, Event Hubs, Blob Storage, etc.).

Data Processing: Spark SQL and PySpark process the data, applying transformations and aggregations.

Data Storage: Processed data is stored in Delta format with ACID compliance.

Query and Analysis: Users can query Delta tables using SQL or Spark.

Version Control & Time Travel: Previous data versions are accessible for rollback and auditing.

Use Cases of Delta Lake

ETL Pipelines: Ensures data reliability with schema validation and ACID transactions.

Machine Learning: Maintains clean and structured historical data for training ML models.

Real-time Analytics: Supports streaming data processing for real-time insights.

Data Governance & Compliance: Enables auditing and rollback for regulatory requirements.

Conclusion

Delta Lake in Databricks bridges the gap between traditional data lakes and modern data warehousing solutions by providing reliability, scalability, and performance improvements. With ACID transactions, schema enforcement, time travel, and optimized query performance, Delta Lake is a powerful tool for building efficient and resilient data pipelines. Its seamless integration with Azure Databricks and Apache Spark makes it a preferred choice for data engineers aiming to create high-performance and scalable data architectures.

Trending Courses: Artificial Intelligence, Azure AI Engineer, Informatica Cloud IICS/IDMC (CAI, CDI),

Visualpath stands out as the best online software training institute in Hyderabad.

For More Information about the Azure Data Engineer Online Training

Contact Call/WhatsApp: +91-7032290546

Visit: https://www.visualpath.in/online-azure-data-engineer-course.html

#Azure Data Engineer Course#Azure Data Engineering Certification#Azure Data Engineer Training In Hyderabad#Azure Data Engineer Training#Azure Data Engineer Training Online#Azure Data Engineer Course Online#Azure Data Engineer Online Training#Microsoft Azure Data Engineer#Azure Data Engineer Course In Bangalore#Azure Data Engineer Course In Chennai#Azure Data Engineer Training In Bangalore#Azure Data Engineer Course In Ameerpet


Data Preparation for Machine Learning in the Cloud: Insights from Anton R Gordon

In the world of machine learning (ML), high-quality data is the foundation of accurate and reliable models. Without proper data preparation, even the most sophisticated ML algorithms fail to deliver meaningful insights. Anton R Gordon, a seasoned AI Architect and Cloud Specialist, emphasizes the importance of structured, well-engineered data pipelines to power enterprise-grade ML solutions.

With extensive experience deploying cloud-based AI applications, Anton R Gordon shares key strategies and best practices for data preparation in the cloud, focusing on efficiency, scalability, and automation.

Why Data Preparation Matters in Machine Learning

Data preparation involves multiple steps, including data ingestion, cleaning, transformation, feature engineering, and validation. According to Anton R Gordon, poorly prepared data leads to:

Inaccurate models due to missing or inconsistent data.

Longer training times because of redundant or noisy information.

Security risks if sensitive data is not properly handled.

By leveraging cloud-based tools like AWS, GCP, and Azure, organizations can streamline data preparation, making ML workflows more scalable, cost-effective, and automated.

Anton R Gordon’s Cloud-Based Data Preparation Workflow

Anton R Gordon outlines an optimized approach to data preparation in the cloud, ensuring a seamless transition from raw data to model-ready datasets.

1. Data Ingestion & Storage

The first step in ML data preparation is to collect and store data efficiently. Anton recommends:

AWS Glue & AWS Lambda: For automating the extraction of structured and unstructured data from multiple sources.

Amazon S3 & Snowflake: To store raw and transformed data securely at scale.

Google BigQuery & Azure Data Lake: As powerful alternatives for real-time data querying.

2. Data Cleaning & Preprocessing

Cleaning raw data eliminates errors and inconsistencies, improving model accuracy. Anton suggests:

AWS Data Wrangler: To handle missing values, remove duplicates, and normalize datasets before ML training.

Pandas & Apache Spark on AWS EMR: To process large datasets efficiently.

Google Dataflow: For real-time preprocessing of streaming data.

3. Feature Engineering & Transformation

Feature engineering is a critical step in improving model performance. Anton R Gordon utilizes:

SageMaker Feature Store: To centralize and reuse engineered features across ML pipelines.

Amazon Redshift ML: To run SQL-based feature transformation at scale.

PySpark & TensorFlow Transform: To generate domain-specific features for deep learning models.

4. Data Validation & Quality Monitoring

Ensuring data integrity before model training is crucial. Anton recommends:

AWS Deequ: To apply statistical checks and monitor data quality.

SageMaker Model Monitor: To detect data drift and maintain model accuracy.

Great Expectations: For validating schemas and detecting anomalies in cloud data lakes.

Best Practices for Cloud-Based Data Preparation

Anton R Gordon highlights key best practices for optimizing ML data preparation in the cloud:

Automate Data Pipelines – Use AWS Glue, Apache Airflow, or Azure Data Factory for seamless ETL workflows.

Implement Role-Based Access Controls (RBAC) – Secure data using IAM roles, encryption, and VPC configurations.

Optimize for Cost & Performance – Choose the right storage options (S3 Intelligent-Tiering, Redshift Spectrum) to balance cost and speed.

Enable Real-Time Data Processing – Use AWS Kinesis or Google Pub/Sub for streaming ML applications.

Leverage Serverless Processing – Reduce infrastructure overhead with AWS Lambda and Google Cloud Functions.

Conclusion

Data preparation is the backbone of successful machine learning projects. By implementing scalable, cloud-based data pipelines, businesses can reduce errors, improve model accuracy, and accelerate AI adoption. Anton R Gordon’s approach to cloud-based data preparation enables enterprises to build robust, efficient, and secure ML workflows that drive real business value.

As cloud AI evolves, automated and scalable data preparation will remain a key differentiator in the success of ML applications. By following Gordon’s best practices, organizations can enhance their AI strategies and optimize data-driven decision-making.


How to Integrate Hadoop with Machine Learning & AI

Introduction

With the explosion of big data, businesses are leveraging Machine Learning (ML) and Artificial Intelligence (AI) to gain insights and improve decision-making. However, handling massive datasets efficiently requires a scalable storage and processing solution—this is where Apache Hadoop comes in. By integrating Hadoop with ML and AI, organizations can build powerful data-driven applications. This blog explores how Hadoop enables ML and AI workflows and the best practices for seamless integration.

1. Understanding Hadoop’s Role in Big Data Processing

Hadoop is an open-source framework designed to store and process large-scale datasets across distributed clusters. It consists of:

HDFS (Hadoop Distributed File System): A scalable storage system for big data.

MapReduce: A parallel computing model for processing large datasets.

YARN (Yet Another Resource Negotiator): Manages computing resources across clusters.

Apache Hive, HBase, and Pig: Tools for data querying and management.
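The MapReduce model listed above can be illustrated with the classic word count, here as a single-machine sketch in plain Python. On a real cluster the map and reduce phases run in parallel on many nodes and the shuffle moves data between them; the function names are illustrative.

```python
from collections import defaultdict


def map_phase(lines):
    """Map: emit a (word, 1) pair for every word in every line."""
    for line in lines:
        for word in line.split():
            yield word.lower(), 1


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: collapse each key's values into a single count."""
    return {key: sum(values) for key, values in groups.items()}


def word_count(lines):
    return reduce_phase(shuffle(map_phase(lines)))
```

For example, `word_count(["big data", "Big Data tools"])` returns `{"big": 2, "data": 2, "tools": 1}`.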

Why Use Hadoop for ML & AI?

Scalability: Handles petabytes of data across multiple nodes.

Fault Tolerance: Ensures data availability even in case of failures.

Cost-Effectiveness: Open-source and works on commodity hardware.

Parallel Processing: Speeds up model training and data processing.

2. Integrating Hadoop with Machine Learning & AI

To build AI/ML applications on Hadoop, various integration techniques and tools can be used:

(a) Using Apache Mahout

Apache Mahout is an ML library that runs on top of Hadoop.

It supports classification, clustering, and recommendation algorithms.

Works with MapReduce and Apache Spark for distributed computing.

(b) Hadoop and Apache Spark for ML

Apache Spark’s MLlib is a powerful machine learning library that integrates with Hadoop.

Spark has been reported to run some in-memory workloads up to 100x faster than MapReduce, making it well suited to iterative ML training.

Supports supervised & unsupervised learning, deep learning, and NLP applications.

(c) Hadoop with TensorFlow & Deep Learning

Hadoop can store large-scale training datasets for TensorFlow and PyTorch.

HDFS and Apache Kafka help in feeding data to deep learning models.

Can be used for image recognition, speech processing, and predictive analytics.

(d) Hadoop with Python and Scikit-Learn

PySpark (Spark’s Python API) enables ML model training on Hadoop clusters.

Scikit-Learn, TensorFlow, and Keras can fetch data directly from HDFS.

Useful for real-time ML applications such as fraud detection and customer segmentation.

3. Steps to Implement Machine Learning on Hadoop

Step 1: Data Collection and Storage

Store large datasets in HDFS or Apache HBase.

Use Apache Flume or Kafka for streaming real-time data.

Step 2: Data Preprocessing

Use Apache Pig or Spark SQL to clean and transform raw data.

Convert unstructured data into a structured format for ML models.

Step 3: Model Training

Choose an ML framework: Mahout, MLlib, or TensorFlow.

Train models using distributed computing with Spark MLlib or MapReduce.

Optimize hyperparameters and improve accuracy using parallel processing.

Step 4: Model Deployment and Predictions

Deploy trained models on Hadoop clusters or cloud-based platforms.

Use Apache Kafka and HDFS to feed real-time data for predictions.

Automate ML workflows using Oozie and Airflow.

4. Real-World Applications of Hadoop & AI Integration

1. Predictive Analytics in Finance

Banks use Hadoop-powered ML models to detect fraud and analyze risk.

Credit scoring and loan approval use HDFS-stored financial data.

2. Healthcare and Medical Research

AI-driven diagnostics process millions of medical records stored in Hadoop.

Drug discovery models train on massive biomedical datasets.

3. E-Commerce and Recommendation Systems

Hadoop enables large-scale customer behavior analysis.

AI models generate real-time product recommendations using Spark MLlib.

4. Cybersecurity and Threat Detection

Hadoop stores network logs and threat intelligence data.

AI models detect anomalies and prevent cyber attacks.

5. Smart Cities and IoT

Hadoop stores IoT sensor data from traffic systems, energy grids, and weather sensors.

AI models analyze patterns for predictive maintenance and smart automation.

5. Best Practices for Hadoop & AI Integration

Use Apache Spark: For faster ML model training instead of MapReduce.

Optimize Storage: Store processed data in Parquet or ORC formats for efficiency.

Enable GPU Acceleration: Use TensorFlow with GPU-enabled Hadoop clusters for deep learning.

Monitor Performance: Use Apache Ambari or Cloudera Manager for cluster performance monitoring.

Security & Compliance: Implement Kerberos authentication and encryption to secure sensitive data.

Conclusion

Integrating Hadoop with Machine Learning and AI enables businesses to process vast amounts of data efficiently, train advanced models, and deploy AI solutions at scale. With Apache Spark, Mahout, TensorFlow, and PyTorch, organizations can unlock the full potential of big data and artificial intelligence.

As technology evolves, Hadoop’s role in AI-driven data processing will continue to grow, making it a critical tool for enterprises worldwide.

Want to Learn Hadoop?

If you're looking to master Hadoop and AI, check out Hadoop Online Training or contact Intellimindz for expert guidance.


Training Proposal: PySpark for Data Processing


Introduction: This proposal outlines a 3-day PySpark training program designed for 10 participants. The course aims to equip data professionals with the skills to leverage Apache Spark using the Python API (PySpark) for efficient large-scale data processing [5]. Participants will gain hands-on experience with PySpark, covering fundamental concepts to advanced techniques, enabling them to tackle…


Pyspark Training


BigQuery Studio From Google Cloud Accelerates AI operations

Google Cloud is well positioned to provide enterprises with a unified, intelligent, open, and secure data and AI cloud. Thousands of customers across many industries use Dataproc, Dataflow, BigQuery, BigLake, and Vertex AI for data-to-AI operations. Google Cloud presents BigQuery Studio, a unified, collaborative workspace for its data analytics suite that speeds up data-to-AI workflows, from data ingestion and preparation through analysis, exploration, and visualization to ML training and inference. It enables data professionals to:

Utilize BigQuery’s built-in SQL, Python, Spark, or natural language capabilities to leverage code assets across Vertex AI and other products for specific workflows.

Improve cooperation by applying best practices for software development, like CI/CD, version history, and source control, to data assets.

Enforce security standards consistently and obtain governance insights within BigQuery by using data lineage, profiling, and quality.

The following features of BigQuery Studio assist you in finding, examining, and drawing conclusions from data in BigQuery:

A SQL editor with code completion, query validation, and an estimate of bytes processed.

Embedded Python notebooks built on Colab Enterprise, with built-in support for BigQuery DataFrames and one-click Python development runtimes.

A PySpark editor for creating stored Python procedures for Apache Spark.

Asset management and version history, based on Dataform, for code assets including notebooks and stored queries.

Assistive code generation in notebooks and the SQL editor, powered by Gemini generative AI (Preview).

Dataplex features for data profiling, data quality checks, and data discovery.

The option to view work history by project or by user.

The capability of exporting stored query results for use in other programs and analyzing them by linking to other tools like Looker and Google Sheets.

To get started with BigQuery Studio, follow the guidelines under Enable BigQuery Studio for Asset Management. This process enables the following APIs:

Compute Engine API: required to use Python functions in your project.

Dataform API: required to store code assets, such as notebook files.

Vertex AI API: required to run Colab Enterprise Python notebooks in BigQuery.

Single interface for all data teams

Disparate technologies force analytics practitioners to use different connectors for data ingestion, switch between coding languages, and move data assets between systems. The result is an inconsistent experience that significantly slows the time-to-value of an organization’s data and AI initiatives.

By providing an end-to-end analytics experience on a single, purpose-built platform, BigQuery Studio tackles these issues. Its integrated workspace combines a SQL interface and a notebook interface (powered by Colab Enterprise, currently in preview), so data engineers, data analysts, and data scientists can complete end-to-end tasks such as data ingestion, pipeline creation, and predictive analytics in the coding language of their choice.

For instance, data scientists and other analytics users can now analyze and explore data at the petabyte scale using Python within BigQuery in the well-known Colab notebook environment. The notebook environment of BigQuery Studio supports browsing datasets and schemas, autocompletion of dataset and column names, and querying and transforming data. Additionally, the same Colab Enterprise notebook is available in Vertex AI for ML workflows such as model training and customization, deployment, and MLOps.

Additionally, BigQuery Studio offers a single pane of glass for working with structured, semi-structured, and unstructured data of all types across cloud environments like Google Cloud, AWS, and Azure by utilizing BigLake, which has built-in support for Apache Parquet, Delta Lake, and Apache Iceberg.

One of the top platforms for commerce, Shopify, has been investigating how BigQuery Studio may enhance its current BigQuery environment.

Maximize productivity and collaboration

By extending software development best practices like CI/CD, version history, and source control to analytics assets like SQL scripts, Python scripts, notebooks, and SQL pipelines, BigQuery Studio enhances cooperation among data practitioners. To ensure that their code is always up to date, users will also have the ability to safely link to their preferred external code repositories.

BigQuery Studio not only facilitates collaboration among people but also offers an AI-powered collaborator for coding help and contextual chat. Duet AI in BigQuery can automatically recommend functions and code blocks for Python and SQL based on the context of each user and their data. The new chat interface lets data practitioners get specialized, real-time help on specific tasks using natural language, reducing the need for trial and error and for searching documentation.

Unified security and governance

By helping users understand data, recognize quality concerns, and diagnose problems, BigQuery Studio enables enterprises to derive trusted insights from trusted data. To help ensure that data is accurate, dependable, and of high quality, data practitioners can profile data, track data lineage, and enforce data-quality constraints. BigQuery Studio will surface tailored metadata insights later this year, such as dataset summaries or suggestions for further investigation.

Additionally, because data no longer needs to be copied, moved, or shared outside of BigQuery for advanced workflows, BigQuery Studio enables administrators to enforce security policies for data assets consistently. Unified credential management across BigQuery and Vertex AI enforces policies for fine-grained security without the need to manage additional external connections or service accounts. For instance, data analysts can now use Vertex AI’s foundation models for images, video, text, and language translation for tasks like sentiment analysis and entity detection over BigQuery data using simple SQL in BigQuery, without sharing data with outside services.

Read more on Govindhtech.com

#BigQueryStudio#BigLake#AIcloud#VertexAI#BigQueryDataFrames#generativeAI#ApacheSpark#MLOps#news#technews#technology#technologynews#technologytrends#govindhtech


Big Data vs. Traditional Data: Understanding the Differences and When to Use Python

In the evolving landscape of data science, understanding the nuances between big data and traditional data is crucial. Both play pivotal roles in analytics, but their characteristics, processing methods, and use cases differ significantly. Python, a powerful and versatile programming language, has become an indispensable tool for handling both types of data. This blog will explore the differences between big data and traditional data and explain when to use Python, emphasizing the importance of enrolling in a data science training program to master these skills.

What is Traditional Data?

Traditional data refers to structured data typically stored in relational databases and managed using SQL (Structured Query Language). This data is often transactional and includes records such as sales transactions, customer information, and inventory levels.

Characteristics of Traditional Data:

Structured Format: Traditional data is organized in a structured format, usually in rows and columns within relational databases.

Manageable Volume: The volume of traditional data is relatively small and manageable, often ranging from gigabytes to terabytes.

Fixed Schema: The schema, or structure, of traditional data is predefined and consistent, making it easy to query and analyze.
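To make these characteristics concrete, here is a minimal sketch using Python's built-in sqlite3 module. The sales table, its columns, and the sample rows are hypothetical and chosen only for illustration; the point is that the schema is declared up front and the data is queried with plain SQL.

```python
import sqlite3

# In-memory relational database; the "sales" table and its rows are
# hypothetical examples, not data from any real system.
conn = sqlite3.connect(":memory:")

# Fixed schema: every row must match these predefined columns and types.
conn.execute(
    "CREATE TABLE sales ("
    "id INTEGER PRIMARY KEY, "
    "customer TEXT NOT NULL, "
    "amount REAL NOT NULL)"
)

rows = [("Alice", 120.0), ("Bob", 75.5), ("Alice", 30.0)]
conn.executemany("INSERT INTO sales (customer, amount) VALUES (?, ?)", rows)

# Structured rows and columns make aggregation a single SQL statement.
totals = conn.execute(
    "SELECT customer, SUM(amount) FROM sales "
    "GROUP BY customer ORDER BY customer"
).fetchall()

print(totals)
```

At this scale a single machine and a single database file are enough, which is the practical meaning of "manageable volume".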

Use Cases of Traditional Data:

Transaction Processing: Traditional data is used for transaction processing in industries like finance and retail, where accurate and reliable records are essential.

Customer Relationship Management (CRM): Businesses use traditional data to manage customer relationships, track interactions, and analyze customer behavior.

Inventory Management: Traditional data is used to monitor and manage inventory levels, ensuring optimal stock levels and efficient supply chain operations.

What is Big Data?

Big data refers to extremely large and complex datasets that cannot be managed and processed using traditional database systems. It encompasses structured, unstructured, and semi-structured data from various sources, including social media, sensors, and log files.

Characteristics of Big Data:

Volume: Big data involves vast amounts of data, often measured in petabytes or exabytes.

Velocity: Big data is generated at high speed, requiring real-time or near-real-time processing.

Variety: Big data comes in diverse formats, including text, images, videos, and sensor data.

Veracity: Big data can be noisy and uncertain, requiring advanced techniques to ensure data quality and accuracy.

Use Cases of Big Data:

Predictive Analytics: Big data is used for predictive analytics in fields like healthcare, finance, and marketing, where it helps forecast trends and behaviors.

IoT (Internet of Things): Big data from IoT devices is used to monitor and analyze physical systems, such as smart cities, industrial machines, and connected vehicles.

Social Media Analysis: Big data from social media platforms is analyzed to understand user sentiments, trends, and behavior patterns.

Python: The Versatile Tool for Data Science

Python has emerged as the go-to programming language for data science due to its simplicity, versatility, and robust ecosystem of libraries and frameworks. Whether dealing with traditional data or big data, Python provides powerful tools and techniques to analyze and visualize data effectively.

Python for Traditional Data:

Pandas: The Pandas library in Python is ideal for handling traditional data. It offers data structures like DataFrames that facilitate easy manipulation, analysis, and visualization of structured data.

SQLAlchemy: Python's SQLAlchemy library provides a powerful toolkit for working with relational databases, allowing seamless integration with SQL databases for querying and data manipulation.

Python for Big Data:

PySpark: PySpark, the Python API for Apache Spark, is designed for big data processing. It enables distributed computing and parallel processing, making it suitable for handling large-scale datasets.

Dask: Dask is a flexible parallel computing library in Python that scales from single machines to large clusters, making it an excellent choice for big data analytics.

When to Use Python for Data Science

Understanding when to use Python for different types of data is crucial for effective data analysis and decision-making.

Traditional Data:

Business Analytics: Use Python for traditional data analytics in business scenarios, such as sales forecasting, customer segmentation, and financial analysis. Python's libraries, like Pandas and Matplotlib, offer comprehensive tools for these tasks.

Data Cleaning and Transformation: Python is highly effective for data cleaning and transformation, ensuring that traditional data is accurate, consistent, and ready for analysis.

Big Data:

Real-Time Analytics: When dealing with real-time data streams from IoT devices or social media platforms, Python's integration with big data frameworks like Apache Spark enables efficient processing and analysis.

Large-Scale Machine Learning: For large-scale machine learning projects, Python's compatibility with libraries like TensorFlow and PyTorch, combined with big data processing tools, makes it an ideal choice.

The Importance of Data Science Training Programs

To effectively navigate the complexities of both traditional data and big data, it is essential to acquire the right skills and knowledge. Data science training programs provide comprehensive education and hands-on experience in data science tools and techniques.

Comprehensive Curriculum: Data science training programs cover a wide range of topics, including data analysis, machine learning, big data processing, and data visualization, ensuring a well-rounded education.

Practical Experience: These programs emphasize practical learning through projects and case studies, allowing students to apply theoretical knowledge to real-world scenarios.

Expert Guidance: Experienced instructors and industry mentors offer valuable insights and support, helping students master the complexities of data science.

Career Opportunities: Graduates of data science training programs are in high demand across various industries, with opportunities to work on innovative projects and drive data-driven decision-making.

Conclusion

Understanding the differences between big data and traditional data is fundamental for any aspiring data scientist. While traditional data is structured, manageable, and used for transaction processing, big data is vast, varied, and requires advanced tools for real-time processing and analysis. Python, with its robust ecosystem of libraries and frameworks, is an indispensable tool for handling both types of data effectively.

Enrolling in a data science training program equips you with the skills and knowledge needed to navigate the complexities of data science. Whether you're working with traditional data or big data, mastering Python and other data science tools will enable you to extract valuable insights and drive innovation in your field. Start your journey today and unlock the potential of data science with a comprehensive training program.

#Big Data#Traditional Data#Data Science#Python Programming#Data Analysis#Machine Learning#Predictive Analytics#Data Science Training Program#SQL#Data Visualization#Business Analytics#Real-Time Analytics#IoT Data#Data Transformation


Data Engineering Bootcamp Training – Featuring Everything You Need to Accelerate Growth

If you want your team to master data engineering skills, you should explore the potential of data engineering bootcamp training focusing on Python and PySpark. That will provide your team with extensive knowledge and practical experience in data engineering. Here is a closer look at the details of how data engineering bootcamps can help your team grow.

Big Data Concepts and Systems Overview for Data Engineers

This foundational data engineering bootcamp module offers a comprehensive understanding of big data concepts, systems, and architectures. It covers distributed computing and technologies such as Apache Spark and Hadoop ecosystem components, equipping teams to manage complex data engineering challenges in real-world settings.

Translating Data into Operational and Business Insights

Contrary to what many people assume, data engineering is much more than just processing data. It also involves extracting actionable insights to drive business decisions. Data engineering bootcamp courses emphasize translating raw data into operational and business insights. Learners are equipped with techniques to transform, aggregate, and analyze data so that they can deliver meaningful insights to stakeholders.

Data Processing Phases

Efficient data engineering requires a deep understanding of the data processing life cycle. Data engineering bootcamps introduce teams to the phases of data processing: ingestion, storage, processing, and visualization. Employees also gain practical experience in designing and deploying data processing pipelines using Python and PySpark. This translates into more efficient and reliable data workflows.

Running Python Programs, Control Statements, and Data Collections

Python is one of the most popular programming languages and is widely used for data engineering. For this reason, data engineering bootcamps offer an introduction to Python programming and cover basic concepts such as running Python programs, control statements, and common data collections. Additionally, teams learn how to write efficient and secure Python code to process and manipulate data.

Functions and Modules

Effective data engineering workflows demand modular, reusable code. This module focuses on functions and modules in Python, enabling teams to encapsulate logic in functions and organize code into reusable modules. It also introduces participants to good code organization, which improves productivity and maintainability in data engineering projects.
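As a small illustration of what turning logic into functions can look like, here is a sketch that is not taken from the course material. The function names, record fields, and sample data are all hypothetical.

```python
def clean_record(record):
    """Normalize one raw record: tidy the name and convert the amount to float."""
    return {
        "name": record["name"].strip().title(),
        "amount": float(record["amount"]),
    }


def total_by_name(records):
    """Clean each record with clean_record, then sum the amounts per name."""
    totals = {}
    for rec in map(clean_record, records):
        totals[rec["name"]] = totals.get(rec["name"], 0.0) + rec["amount"]
    return totals


# Hypothetical raw input with inconsistent formatting.
raw = [
    {"name": " alice ", "amount": "10.5"},
    {"name": "BOB", "amount": "4"},
    {"name": "Alice", "amount": "2"},
]

totals = total_by_name(raw)
print(totals)
```

Saved in a file of its own, these two functions could be imported by any pipeline script, which is the kind of reuse that modules make possible.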

Data Visualization in Python

Clear data visualization is vital to communicating key insights and findings to stakeholders. This data engineering bootcamp module emphasizes visualization techniques that use Python libraries such as Seaborn and Matplotlib. During the course, teams learn how to design informative and visually striking charts, plots, and dashboards to communicate complex data relationships effectively.

Final word

To sum up, data engineering bootcamp training using Python and PySpark provides a gateway for teams to venture into the rapidly growing realm of data engineering. The training endows them with a solid foundation in big data concepts, practical experience in Python, and hands-on skills in data processing and visualization. Ensure that you choose an established course provider to enjoy the maximum benefits of data engineering courses.

For more information visit: https://www.webagesolutions.com/courses/WA3020-data-engineering-bootcamp-training-using-python-and-pyspark


Hadoop Python

This post looks at how Hadoop and Python work together. Hadoop is a widely used framework for storing and processing big data in a distributed environment across clusters of computers. Python is a popular programming language known for its simplicity and versatility.

Integrating Python with Hadoop can be highly beneficial for handling big data tasks. Python can interact with the Hadoop ecosystem using libraries and frameworks like Pydoop, Hadoop Streaming, and Apache Spark with PySpark.

Pydoop: A Python interface to Hadoop that allows you to write Hadoop MapReduce programs and interact with HDFS using pure Python.

Hadoop Streaming: A utility that comes with Hadoop and allows you to create and run MapReduce jobs with any executable or script as the mapper and the reducer.

Apache Spark and PySpark: While technically not a part of the Hadoop ecosystem, Apache Spark is often used in conjunction with Hadoop. PySpark is the Python API for Spark, allowing you to use Python to write Spark applications for big data processing.
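To show the shape of a Hadoop Streaming job, here is a minimal word-count sketch that simulates the map, sort, and reduce steps locally. In a real streaming job the mapper and reducer would be two separate scripts that read lines from standard input and print to standard output. The function names and sample text are hypothetical, and the tab-separated key and value layout follows the usual streaming convention.

```python
from itertools import groupby


def mapper(lines):
    # Emit one "word<TAB>1" record per word, as a streaming mapper would print.
    for line in lines:
        for word in line.split():
            yield f"{word.lower()}\t1"


def reducer(sorted_records):
    # Records arrive sorted by key, so equal words are adjacent
    # and can be summed with groupby.
    pairs = (record.split("\t") for record in sorted_records)
    for word, group in groupby(pairs, key=lambda pair: pair[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


# Local simulation of map -> shuffle/sort -> reduce on hypothetical input.
sample = ["big data with Python", "Python with Hadoop"]
result = list(reducer(sorted(mapper(sample))))
print(result)
```

The call to sorted() stands in for the shuffle and sort phase that Hadoop performs between the mapper and the reducer.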

Using Python with Hadoop is particularly advantageous due to Python’s simplicity and the extensive set of libraries available for data analysis, machine learning, and data visualization.

If you are training or informing others about integrating Python with Hadoop, it is essential to cover these tools and their practical applications, so that your audience can use Python effectively in a Hadoop-based environment for big data tasks.


You can find more information about Hadoop Training in this Hadoop Docs Link

Conclusion:

Unogeeks is the №1 IT Training Institute for Hadoop Training. Anyone Disagree? Please drop in a comment

You can check out our other latest blogs on Hadoop Training here — Hadoop Blogs

Please check out our Best In Class Hadoop Training Details here — Hadoop Training


— — — — — — — — — — — -

For Training inquiries:

Call/Whatsapp: +91 73960 33555

Mail us at: [email protected]

Our Website ➜ https://unogeeks.com

Follow us:

Instagram: https://www.instagram.com/unogeeks

Facebook: https://www.facebook.com/UnogeeksSoftwareTrainingInstitute

Twitter: https://twitter.com/unogeeks

#unogeeks #training #ittraining #unogeekstraining


Mastering Big Data Tools: Scholarnest's Databricks Cloud Training

In the ever-evolving landscape of data engineering, mastering the right tools is paramount for professionals seeking to stay ahead. Scholarnest, a leading edtech platform, offers comprehensive Databricks Cloud training designed to empower individuals with the skills needed to navigate the complexities of big data. Let's explore how this training program, which covers data engineering, Databricks, and PySpark, sets the stage for a transformative learning journey.

Diving into Data Engineering Mastery:

Data Engineering Course and Certification:

Scholarnest's Databricks Cloud training is structured as a comprehensive data engineering course. The curriculum is curated to cover the breadth and depth of data engineering concepts, ensuring participants gain a robust understanding of the field. Upon completion, learners receive a coveted data engineer certification, validating their expertise in handling big data challenges.

Databricks Data Engineer Certification:

The program places a special emphasis on Databricks, a leading big data analytics platform. Participants have the opportunity to earn the Databricks Data Engineer Certification, a recognition that holds substantial value in the industry. This certification signifies proficiency in leveraging Databricks for efficient data processing, analytics, and machine learning.

PySpark Excellence Unleashed:

Best PySpark Course Online:

A highlight of Scholarnest's offering is its PySpark course, which it presents as the best PySpark course online. PySpark, the Python API for Apache Spark, is a pivotal tool in the data engineering arsenal. The course delves into PySpark's intricacies, enabling participants to harness its capabilities for data manipulation, analysis, and processing at scale.

PySpark Training Course:

The PySpark training course is thoughtfully crafted to cater to various skill levels, including beginners and those looking for a comprehensive, full-course experience. The hands-on nature of the training ensures that participants not only grasp theoretical concepts but also gain practical proficiency in PySpark.

Azure Databricks Learning for Real-World Applications:

Azure Databricks Learning:

Recognizing the industry's shift towards cloud-based solutions, Scholarnest's program includes Azure Databricks learning. This module equips participants with the skills to leverage Databricks in the Azure cloud environment, aligning their knowledge with contemporary data engineering practices.

Best Databricks Courses:

Scholarnest stands out for offering one of the best Databricks courses available. The curriculum is designed to cover the entire spectrum of Databricks functionalities, from data exploration and visualization to advanced analytics and machine learning.

Learning Beyond Limits:

Self-Paced Training and Certification:

The flexibility of self-paced training is a cornerstone of Scholarnest's approach. Participants can learn at their own speed, ensuring a thorough understanding of each concept before progressing. The self-paced model is complemented by comprehensive certification, validating the mastery of Databricks and related tools.

Machine Learning with PySpark:

Machine learning is seamlessly integrated into the program, providing participants with insights into leveraging PySpark for machine learning applications. This inclusion reflects the program's commitment to preparing professionals for the holistic demands of contemporary data engineering roles.

Conclusion:

Scholarnest's Databricks Cloud training transcends traditional learning models. By combining in-depth coverage of data engineering principles, hands-on PySpark training, and Azure Databricks learning, this program equips participants with the knowledge and skills needed to excel in the dynamic field of big data. As the industry continues to evolve, Scholarnest remains at the forefront, ensuring that professionals are not just keeping pace but leading the way in data engineering excellence.

#data engineering#data engineering course#data engineering certification#databricks data engineer certification#pyspark course#databricks courses online#best pyspark course online#best pyspark course#pyspark online course#databricks learning#data engineering courses in bangalore#data engineering courses in india#azure databricks learning#pyspark training course#big data


PySpark SQL: Introduction & Basic Queries

Introduction

In today’s data-driven world, the volume and variety of data have exploded. Traditional tools often struggle to process and analyze massive datasets efficiently. That’s where Apache Spark comes into the picture — a lightning-fast, unified analytics engine for big data processing.

For Python developers, PySpark — the Python API for Apache Spark — offers an intuitive way to work with Spark. Among its powerful modules, PySpark SQL stands out. It enables you to query structured data using SQL syntax or DataFrame operations. This hybrid capability makes it easy to blend the power of Spark with the familiarity of SQL.

In this blog, we'll explore what PySpark SQL is, why it’s so useful, how to set it up, and cover the most essential SQL queries with examples — perfect for beginners diving into big data with Python.

Agenda

Here's what we'll cover:

What is PySpark SQL?

Why should you use PySpark SQL?

Installing and setting up PySpark

Basic SQL queries in PySpark

Best practices for working efficiently

Final thoughts

What is PySpark SQL?

PySpark SQL is a module of Apache Spark that enables querying structured data using SQL commands or a more programmatic DataFrame API. It offers:

Support for SQL-style queries on large datasets.

A seamless bridge between relational logic and Python.

Optimizations using the Catalyst query optimizer and Tungsten execution engine for efficient computation.

In simple terms, PySpark SQL lets you use SQL to analyze big data at scale — without needing traditional database systems.

Why Use PySpark SQL?

Here are a few compelling reasons to use PySpark SQL:

Scalability: It can handle terabytes of data spread across clusters.

Ease of use: Combines the simplicity of SQL with the flexibility of Python.

Performance: Optimized query execution ensures fast performance.

Interoperability: Works with various data sources — including Hive, JSON, Parquet, and CSV.

Integration: Supports seamless integration with DataFrames and MLlib for machine learning.

Whether you're building dashboards, ETL pipelines, or machine learning workflows — PySpark SQL is a reliable choice.

Setting Up PySpark

Let’s quickly set up a local PySpark environment.

1. Install PySpark:

pip install pyspark

2. Start a Spark session:

from pyspark.sql import SparkSession

spark = SparkSession.builder \
    .appName("PySparkSQLExample") \
    .getOrCreate()

3. Create a DataFrame:

data = [("Alice", 25), ("Bob", 30), ("Clara", 35)]
columns = ["Name", "Age"]
df = spark.createDataFrame(data, columns)
df.show()

4. Create a temporary view to run SQL queries:

df.createOrReplaceTempView("people")

Now you're ready to run SQL queries directly!

Basic PySpark SQL Queries

Let’s look at the most commonly used SQL queries in PySpark.

1. SELECT Query

spark.sql("SELECT * FROM people").show()

Returns all rows from the people table.

2. WHERE Clause (Filtering Rows)

spark.sql("SELECT * FROM people WHERE Age > 30").show()

Filters rows where Age is greater than 30.

3. Adding a Derived Column

spark.sql("SELECT Name, Age, Age + 5 AS AgeInFiveYears FROM people").show()

Adds a new column AgeInFiveYears by adding 5 to the current age.

4. GROUP BY and Aggregation

Let’s update the data so that some names have multiple entries, and register it under the same view name (this replaces the earlier people view):

data2 = [("Alice", 25), ("Bob", 30), ("Alice", 28), ("Bob", 35), ("Clara", 35)]
df2 = spark.createDataFrame(data2, columns)
df2.createOrReplaceTempView("people")

Now apply aggregation:

spark.sql("""
    SELECT Name, COUNT(*) AS Count, AVG(Age) AS AvgAge
    FROM people
    GROUP BY Name
""").show()

This groups records by Name and calculates the number of records and average age.

5. JOIN Between Two Tables

Let’s create another table:

jobs_data = [("Alice", "Engineer"), ("Bob", "Designer"), ("Clara", "Manager")]
df_jobs = spark.createDataFrame(jobs_data, ["Name", "Job"])
df_jobs.createOrReplaceTempView("jobs")

Now perform an inner join:

spark.sql("""
    SELECT p.Name, p.Age, j.Job
    FROM people p
    JOIN jobs j ON p.Name = j.Name
""").show()

This joins the people and jobs tables on the Name column.

Tips for Working Efficiently with PySpark SQL

Use LIMIT for testing: Avoid loading millions of rows in development.

Cache wisely: Use .cache() when a DataFrame is reused multiple times.

Check performance: Use .explain() to view the query execution plan.

Mix APIs: Combine SQL queries and DataFrame methods for flexibility.

Conclusion

PySpark SQL makes big data analysis in Python much more accessible. By combining the readability of SQL with the power of Spark, it allows developers and analysts to process massive datasets using simple, familiar syntax.

This blog covered the foundational aspects: setting up PySpark, writing basic SQL queries, performing joins and aggregations, and a few best practices to optimize your workflow.

If you're just starting out, keep experimenting with different queries, and try loading real-world datasets in formats like CSV or JSON. Mastering PySpark SQL can unlock a whole new level of data engineering and analysis at scale.

PySpark Training by AccentFuture

At AccentFuture, we offer customizable online training programs designed to help you gain practical, job-ready skills in the most in-demand technologies. Our PySpark Online Training will teach you everything you need to know, with hands-on training and real-world projects to help you excel in your career.

What we offer:

Hands-on training with real-world projects and 100+ use cases

Live sessions led by industry professionals

Certification preparation and career guidance

🚀 Enroll Now: https://www.accentfuture.com/enquiry-form/

📞 Call Us: +91–9640001789

📧 Email Us: [email protected]

🌐 Visit Us: AccentFuture
