#OSD

Text

1K notes

Text

✧ lil dream and horror

@calcium-cat

#my art#undertale#calcium-cat#osd#i forgot i had this doodle laying around so i decided to finish it up hehe#i hope you like it!!#gift art#one small dream#horror sans#horror#cuties#uncle/dad sans#dream sans#babybones#💛🖤#<3333

811 notes

Text

Computers, displays and OSD, Die Another Day (2002)

#Die Another Day#Bond-a-Thon#Bond a Thon#computers#vintage#technology!#OSD#screens#LCD#computer graphics#junkfooddaily#dailyflicks#00sedit#filmgifs#movieedit#GIF#my gifs#dadedit#dieanotherdayedit#bondedit#Die Another Day Rewatch#Hide and Queue

83 notes

Text

Ice Story 2nd: RE_PRAY

95 notes

Text

Rules:

When sending an ask, address it to the character you want to ask. (e.g. “Dream, what do you . . .”) Asks without a character specified will not be answered.

You can ask/talk to more than one character per ask! (e.g. “Hey Dream and Nightmare, what do you . . .”)

You can ask pretty much anything, be it story/lore-wise or other. Just please be appropriate and respectful!

You can only ask/talk to those on the character list. (For example, you cannot ask Blue or Ink anything, at least for right now!)

You can “gift” any of the characters something either through ask or a submission!

All asks will be answered via text only. I will also try to answer questions at least once a week on this blog, if not more, depending on how much time and energy (or asks) I have. Please bear with me if it takes a while to get to your ask!

Character List: Names are color coded so you will know who is speaking.

OSD!Nightmare

OSD!Little Dream

OSD!Killer

OSD!Cross

OSD!Horror

OSD!Dust

Mod!Cal

(These characters are from my fanfic One Small Dream, but the original characters and their canon belong to their respective creators!)

#OSD#one small dream#OSD ask blog#skelesona#calluna#calcium cat#utmv#nightmare sans#horror sans#dust sans#cross sans#killer sans#dream sans#babybones#big brother night#little dream#gremlin gang#masterpost#i think i drew dream a bit to small#but sometimes#i cannot resist the tinyness <3#art#my art

799 notes

Text

A new paper, the Ocean Species Discoveries (OSD), describes a ground-breaking experiment that united 25 independent taxonomists from ten countries. The initiative boasts the discovery of eleven new marine species from all over the globe, occurring at depths from 5.2 to 7081 meters. It also represents a significant step forward in accelerating the pace at which new marine species are described and published. Accelerating global change continues to threaten Earth's vast biodiversity, including in the oceans, which remain largely unexplored. To date, only a small fraction of an estimated two million total living marine species have been named and described.

Continue Reading.

57 notes

Text

"Open" "AI" isn’t

Tomorrow (19 Aug), I'm appearing at the San Diego Union-Tribune Festival of Books. I'm on a 2:30PM panel called "Return From Retirement," followed by a signing:

https://www.sandiegouniontribune.com/festivalofbooks

The crybabies who freak out about The Communist Manifesto appearing on university curriculum clearly never read it – chapter one is basically a long hymn to capitalism's flexibility and inventiveness, its ability to change form and adapt itself to everything the world throws at it and come out on top:

https://www.marxists.org/archive/marx/works/1848/communist-manifesto/ch01.htm#007

Today, leftists signal this protean capacity of capital with the -washing suffix: greenwashing, genderwashing, queerwashing, wokewashing – all the ways capital cloaks itself in liberatory, progressive values, while still serving as a force for extraction, exploitation, and political corruption.

A smart capitalist is someone who, sensing the outrage at a world run by 150 old white guys in boardrooms, proposes replacing half of them with women, queers, and people of color. This is a superficial maneuver, sure, but it's an incredibly effective one.

In "Open (For Business): Big Tech, Concentrated Power, and the Political Economy of Open AI," a new working paper, Meredith Whittaker, David Gray Widder and Sarah B Myers document a new kind of -washing: openwashing:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4543807

Openwashing is the trick that large "AI" companies use to evade regulation and neutralize critics, by casting themselves as forces of ethical capitalism, committed to the virtue of openness. No one should be surprised to learn that the products of the "open" wing of an industry whose products are neither "artificial," nor "intelligent," are also not "open." Every word AI hucksters say is a lie, including "and," and "the."

So what work does the "open" in "open AI" do? "Open" here is supposed to invoke the "open" in "open source," a movement that emphasizes a software development methodology that promotes code transparency, reusability and extensibility, which are three important virtues.

But "open source" itself is an offshoot of a more foundational movement, the Free Software movement, whose goal is to promote freedom, and whose method is openness. The point of software freedom was technological self-determination, the right of technology users to decide not just what their technology does, but who it does it to and who it does it for:

https://locusmag.com/2022/01/cory-doctorow-science-fiction-is-a-luddite-literature/

The open source split from free software was ostensibly driven by the need to reassure investors and businesspeople so they would join the movement. The "free" in free software is (deliberately) ambiguous, a bit of wordplay that sometimes misleads people into thinking it means "Free as in Beer" when really it means "Free as in Speech" (in Romance languages, these distinctions are captured by translating "free" as "libre" rather than "gratis").

The idea behind open source was to rebrand free software in a less ambiguous – and more instrumental – package that stressed cost-savings and software quality, as well as "ecosystem benefits" from a co-operative form of development that recruited tinkerers, independents, and rivals to contribute to a robust infrastructural commons.

But "open" doesn't merely resolve the linguistic ambiguity of libre vs gratis – it does so by removing the "liberty" from "libre," the "freedom" from "free." "Open" changes the pole-star that movement participants follow as they set their course. Rather than asking "Which course of action makes us more free?" they ask, "Which course of action makes our software better?"

Thus, by dribs and drabs, the freedom leeches out of openness. Today's tech giants have mobilized "open" to create a two-tier system: the largest tech firms enjoy broad freedom themselves – they alone get to decide how their software stack is configured. But for all of us who rely on that (increasingly unavoidable) software stack, all we have is "open": the ability to peer inside that software and see how it works, and perhaps suggest improvements to it:

https://www.youtube.com/watch?v=vBknF2yUZZ8

In the Big Tech internet, it's freedom for them, openness for us. "Openness" – transparency, reusability and extensibility – is valuable, but it shouldn't be mistaken for technological self-determination. As the tech sector becomes ever-more concentrated, the limits of openness become more apparent.

But even by those standards, the openness of "open AI" is thin gruel indeed (that goes triple for the company that calls itself "OpenAI," which is a particularly egregious openwasher).

The paper's authors start by suggesting that the "open" in "open AI" is meant to imply that an "open AI" can be scratch-built by competitors (or even hobbyists), but that this isn't true. Not only is the material that "open AI" companies publish insufficient for reproducing their products, even if those gaps were plugged, the resource burden required to do so is so intense that only the largest companies could do so.

Beyond this, the "open" parts of "open AI" are insufficient for achieving the other claimed benefits of "open AI": they don't promote auditing, or safety, or competition. Indeed, they often cut against these goals.

"Open AI" is a wordgame that exploits the malleability of "open," but also the ambiguity of the term "AI": "a grab bag of approaches, not… a technical term of art, but more … marketing and a signifier of aspirations." Hitching this vague term to "open" creates all kinds of bait-and-switch opportunities.

That's how you get Meta claiming that LLaMa-2 is "open source," despite being licensed in a way that is absolutely incompatible with any widely accepted definition of the term:

https://blog.opensource.org/metas-llama-2-license-is-not-open-source/

LLaMa-2 is a particularly egregious openwashing example, but there are plenty of other ways that "open" is misleadingly applied to AI: sometimes it means you can see the source code, sometimes that you can see the training data, and sometimes that you can tune a model, all to different degrees, alone and in combination.

But even the most "open" systems can't be independently replicated, due to raw computing requirements. This isn't the fault of the AI industry – the computational intensity is a fact, not a choice – but when the AI industry claims that "open" will "democratize" AI, they are hiding the ball. People who hear these "democratization" claims (especially policymakers) are thinking about entrepreneurial kids in garages, but unless these kids have access to multi-billion-dollar data centers, they can't be "disruptors" who topple tech giants with cool new ideas. At best, they can hope to pay rent to those giants for access to their compute grids, in order to create products and services at the margin that rely on existing products, rather than displacing them.

The "open" story, with its claims of democratization, is an especially important one in the context of regulation. In Europe, where a variety of AI regulations have been proposed, the AI industry has co-opted the open source movement's hard-won narrative battles about the harms of ill-considered regulation.

For open source (and free software) advocates, many tech regulations aimed at taming large, abusive companies – such as requirements to surveil and control users to extinguish toxic behavior – wreak collateral damage on the free, open, user-centric systems that we see as superior alternatives to Big Tech. This leads to the paradoxical effect of passing regulation to "punish" Big Tech that ends up simply shaving an infinitesimal percentage off the giants' profits, while destroying the small co-ops, nonprofits and startups before they can grow to be a viable alternative.

The years-long fight to get regulators to understand this risk has been waged by principled actors working for subsistence nonprofit wages or for free, and now the AI industry is capitalizing on lawmakers' hard-won consideration for collateral damage by claiming to be "open AI" and thus vulnerable to overbroad regulation.

But the "open" projects that lawmakers have been coached to value are precious because they deliver a level playing field, competition, innovation and democratization – all things that "open AI" fails to deliver. The regulations the AI industry is fighting also don't necessarily implicate the speech concerns that are core to protecting free software:

https://www.eff.org/deeplinks/2015/04/remembering-case-established-code-speech

Just think about LLaMa-2. You can download it for free, along with the model weights it relies on – but not detailed specs for the data that was used in its training. And the source-code is licensed under a homebrewed license cooked up by Meta's lawyers, a license that only glancingly resembles anything from the Open Source Definition:

https://opensource.org/osd/

Core to Big Tech companies' "open AI" offerings are tools, like Meta's PyTorch and Google's TensorFlow. These tools are indeed "open source," licensed under real OSS terms. But they are designed and maintained by the companies that sponsor them, and optimize for the proprietary back-ends each company offers in its own cloud. When programmers train themselves to develop in these environments, they are gaining expertise in adding value to a monopolist's ecosystem, locking themselves in with their own expertise. This is a classic example of software freedom for tech giants and open source for the rest of us.

One way to understand how "open" can produce a lock-in that "free" might prevent is to think of Android: Android is an open platform in the sense that its source code is freely licensed, but the existence of Android doesn't make it any easier to challenge the mobile OS duopoly with a new mobile OS; nor does it make it easier to switch from Android to iOS and vice versa.

Another example: MongoDB, a free/open database tool that was adopted by Amazon, which subsequently forked the codebase and tuned it to work on their proprietary cloud infrastructure.

The value of open tooling as a stickytrap for creating a pool of developers who end up as sharecroppers who are glued to a specific company's closed infrastructure is well-understood and openly acknowledged by "open AI" companies. Zuckerberg boasts about how PyTorch ropes developers into Meta's stack, "when there are opportunities to make integrations with products, [so] it’s much easier to make sure that developers and other folks are compatible with the things that we need in the way that our systems work."

Tooling is a relatively obscure issue, primarily debated by developers. A much broader debate has raged over training data – how it is acquired, labeled, sorted and used. Many of the biggest "open AI" companies are totally opaque when it comes to training data. Google and OpenAI won't even say how many pieces of data went into their models' training – let alone which data they used.

Other "open AI" companies use publicly available datasets like the Pile and CommonCrawl. But you can't replicate their models by shoveling these datasets into an algorithm. Each one has to be groomed – labeled, sorted, de-duplicated, and otherwise filtered. Many "open" models merge these datasets with other, proprietary sets, in varying (and secret) proportions.

Quality filtering and labeling for training data is incredibly expensive and labor-intensive, and involves some of the most exploitative and traumatizing clickwork in the world, as poorly paid workers in the Global South make pennies for reviewing data that includes graphic violence, rape, and gore.

Not only is the product of this "data pipeline" kept a secret by "open" companies, the very nature of the pipeline is likewise cloaked in mystery, in order to obscure the exploitative labor relations it embodies (the joke that "AI" stands for "absent Indians" comes out of the South Asian clickwork industry).

The most common "open" in "open AI" is a model that arrives built and trained, which is "open" in the sense that end-users can "fine-tune" it – usually while running it on the manufacturer's own proprietary cloud hardware, under that company's supervision and surveillance. These tunable models are undocumented blobs, not the rigorously peer-reviewed transparent tools celebrated by the open source movement.

If "open" was a way to transform "free software" from an ethical proposition to an efficient methodology for developing high-quality software, then "open AI" is a way to transform "open source" into a rent-extracting black box.

Some "open AI" has slipped out of the corporate silo. Meta's LLaMa was leaked by early testers, republished on 4chan, and is now in the wild. Some exciting stuff has emerged from this, but despite this work happening outside of Meta's control, it is not without benefits to Meta. As an infamous leaked Google memo explains:

> Paradoxically, the one clear winner in all of this is Meta. Because the leaked model was theirs, they have effectively garnered an entire planet's worth of free labor. Since most open source innovation is happening on top of their architecture, there is nothing stopping them from directly incorporating it into their products.

https://www.searchenginejournal.com/leaked-google-memo-admits-defeat-by-open-source-ai/486290/

Thus, "open AI" is best understood as "free product development" for large, well-capitalized AI companies, conducted by tinkerers who will not be able to escape these giants' proprietary compute silos and opaque training corpuses, and whose work product is guaranteed to be compatible with the giants' own systems.

The instrumental story about the virtues of "open" often invokes auditability: the fact that anyone can look at the source code makes it easier for bugs to be identified. But as open source projects have learned the hard way, the fact that anyone can audit your widely used, high-stakes code doesn't mean that anyone will.

The Heartbleed vulnerability in OpenSSL was a wake-up call for the open source movement – a bug that endangered every secure webserver connection in the world, which had hidden in plain sight for years. The result was an admirable and successful effort to build institutions whose job it is to actually make use of open source transparency to conduct regular, deep, systemic audits.

In other words, "open" is a necessary, but insufficient, precondition for auditing. But when the "open AI" movement touts its "safety" thanks to its "auditability," it fails to describe any steps it is taking to replicate these auditing institutions – how they'll be constituted, funded and directed. The story starts and ends with "transparency" and then makes the unjustifiable leap to "safety," without any intermediate steps about how the one will turn into the other.

It's a Magic Underpants Gnome story, in other words:

Step One: Transparency

Step Two: ??

Step Three: Safety

https://www.youtube.com/watch?v=a5ih_TQWqCA

Meanwhile, OpenAI itself has gone on record as objecting to "burdensome mechanisms like licenses or audits" as an impediment to "innovation" – all the while arguing that these "burdensome mechanisms" should be mandatory for rival offerings that are more advanced than its own. To call this a "transparent ruse" is to do violence to good, hardworking transparent ruses all the world over:

https://openai.com/blog/governance-of-superintelligence

Some "open AI" is much more open than the industry-dominating offerings. There's EleutherAI, a donor-supported nonprofit whose model comes with documentation and code, licensed Apache 2.0. There are also some smaller academic offerings: Vicuna (UCSD/CMU/Berkeley), Koala (Berkeley) and Alpaca (Stanford).

These are indeed more open (though Alpaca – which ran on a laptop – had to be withdrawn because it "hallucinated" so profusely). But to the extent that the "open AI" movement invokes (or cares about) these projects, it is in order to brandish them before hostile policymakers and say, "Won't someone please think of the academics?" These are the poster children for proposals like exempting AI from antitrust enforcement, but they're not significant players in the "open AI" industry, nor are they likely to be for so long as the largest companies are running the show:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4493900

I'm kickstarting the audiobook for "The Internet Con: How To Seize the Means of Computation," a Big Tech disassembly manual to disenshittify the web and make a new, good internet to succeed the old, good internet. It's a DRM-free book, which means Audible won't carry it, so this crowdfunder is essential. Back now to get the audio, Verso hardcover and ebook:

http://seizethemeansofcomputation.org

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/08/18/openwashing/#you-keep-using-that-word-i-do-not-think-it-means-what-you-think-it-means

Image: Cryteria (modified) https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0 https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#llama-2#meta#openwashing#floss#free software#open ai#open source#osi#open source initiative#osd#open source definition#code is speech

253 notes

Text

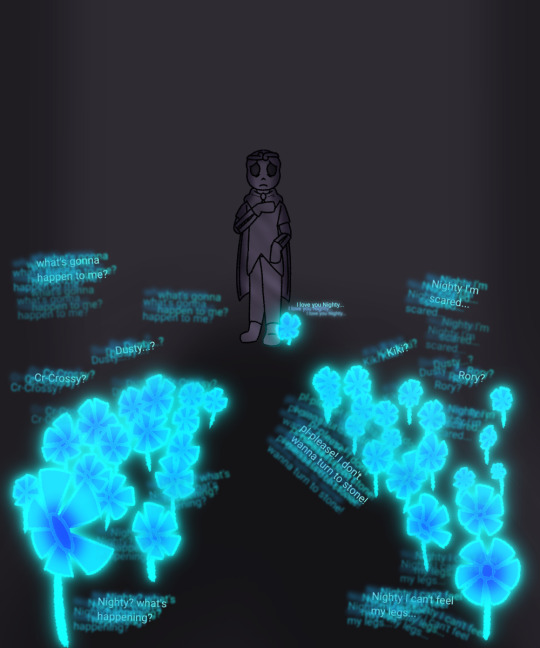

Memories.

His hand used to be holding something... But it's not anymore :)

OSD belongs to @calcium-cat

275 notes

Text

Antidepressants 💊

alts under the cut :3

#art#my art#artist on tumblr#digital art#fanart#eeveeboo#pastelvoidsystem#bbno$#bbnomoney#bbnomula#osd

12 notes

Text

A Little Broken Dream

Dream wandered the halls of the castle. Everyone was busy, and Dream was bored. He wandered for a while, before spotting the bundle of lavender he’d given Nightmare. It reminded him of something, but he didn’t know what. It made his head hurt to try and remember. He could vaguely recall having an argument with Nighty, and Nighty was sulking about it. He remembered going out to the southern glen, where the lavender grew. He was going to get some as an apology… but the memory was hazy, really hazy. He found that his feet were moving. Maybe going to the flower field outside the castle would help him remember? He wasn’t supposed to leave without the others, but not being able to figure out what the memory was frustrated him. So he walked, remembering as he went.

Nightmare was really upset that fateful day, and Dream wanted to make it right. He got to the flower field and remembered arriving at the glen. He knew which flowers were the best, and he made sure to take his time. A big handful of the prettiest flowers made for a more sincere apology, so he spent a very long time picking lavender. When Dream was done, he walked in the direction he would need to go if this really was the southern glen, back to where the tree would have been.

He remembered a deep feeling of wrongness in his soul, as if something bad was happening. Anxious, he sped up, almost running, when suddenly, Dream was enveloped in the memory, as if it were happening then and there. Nightmare was standing in front of the stump that had been their Tree. The village was destroyed, with dust and blood everywhere; there were even corpses lying around.

The village was dead.

The Tree was dead.

His brother was…

Dream looked at Nightmare. Though the goop covering his body was not new to the little skeleton, his soul filled with shock. This was the day… This was when Nighty turned into what he was now. “What happened?” He couldn’t stop himself from asking. His brother looked hurt, and furious. “You did this.” Nightmare looked so angry, so upset. Dream couldn’t believe it. He hadn’t been there when it had happened. He dropped the bundle of flowers, falling to his knees as the memory faded.

Nightmare had to go through that all alone, because Dream wasn’t there.

He’d failed.

He’d failed as a guardian, and as a brother.

The little skeleton sobbed, unable to believe he’d basically abandoned his brother when the worst thing that could ever happen did happen. He just knelt there, crying for who knew how long. Eventually, he ran out of tears, his magic level too low for him to be able to cry. Still, he sat, his soul breaking into a thousand pieces. It made him feel sick, knowing he’d done such a thing.

It was then, finally, that Nightmare appeared. Seeing how broken his brother was, he came closer. Dream was too lost in thought to hear him, his mind hazy and his skull aching. With how low Dream’s magic levels were, it made him feel much worse than he would have ordinarily. Nightmare’s hands, firm on the little skeleton’s shoulders, snapped Dream back to reality somewhat. “Dream, what happened?” It was clear Nighty had asked the question several times, and he sounded very worried. “I failed.” It was the only thing Dream said, the words that repeated over and over in his mind.

Dream was vaguely aware of Nightmare CHECKing him, making sure he was alright. The next thing Dream knew, he was in Nightmare’s arms. When had Nighty picked him up? He had no idea. Dream didn’t have enough magic left to form tears, but he still sobbed, shaking and whimpering as his brother held him. Nightmare wrapped his arms firmly around the little skeleton, offering soft comforts to his tiny brother. Dream didn’t know what the larger, goopy skeleton was saying, but Nighty’s gentle tone helped him calm down a little.

Once Dream’s whimpers became less frequent, his shaking less violent, Nightmare teleported to the castle. With how low the little skeleton’s magic levels were, Nightmare knew he had to get Dream to drink something, before his dehydration became dangerous. Cross was the first to spot Dream, and immediately Nightmare was met with a rush of questions. Nightmare’s answers were short, and there were some questions Nightmare couldn’t answer. Nightmare explained that Dream was severely dehydrated at that point, and Cross was quick to get the tiny skeleton something to drink. Dream drank it very quickly, still sniffling.

Once Dream had finished, he looked up at Nightmare, with massive wobbly eyelights. “I’m sorry.” The words were small and tearful, as Dream was crying again. “Sorry? What for?” Nightmare didn’t know what was wrong; his focus was on Dream. He was severely caught off-guard when Dream curled into his chest, and mumbled, “For not being there.”

One Small Dream belongs to @calcium-cat

#osd#angst#one small dream#here have some soul-crushing angst#I regret nothing#where was nightmare all day? who knows#tw death

184 notes

Text

536 notes

Text

Last night while my friend was info dumping sonic lore she told midnight (as in the time of night) to f off, but I've got OSD still spinning in my head so I thought she meant the nickname nightmare goes by in the fic jshfjdjd 💀

OSD and 'Midnight' belong to @calcium-cat

Go read their fic, it's adorable

#shitpost#tokki#pepsketch#OSD#one small dream#midnight#nightmare#undertale au#my first drawing of nightmare ever djfhhd

80 notes

Text

Terminator Zero (2024)

21 notes

Text

Painfully obsessed with this one

178 notes
