#xlog

Explore tagged Tumblr posts

Text

youtube

It's the numbers that show us how we have grown and how we keep growing, with the help of a tireless team and a group of entrepreneurial clients. Get to know the XLOG experience in under 1 minute.

2 notes


Text

Are anyone else's allergies worse this year? I used to just get snotty and sneezy, but now I physically cannot breathe because my sinuses are clogged. Not with my you’d or anything, it's just clogged.

It’s literally physically unbearable being myself right now

0 notes

Photo

Grand Line Concert VCR behind

321 notes


Video

Lay Zhang '莲 (Lit)' MV Behind The Scenes

#a freaking behind the scenes#I love a LAY Vlog#Xlog#eeeep#The details#some of these you miss in the MV#seriously#look at them#and that ponytail#it freaking SENDS#YIXING#*throws him an evil look*#*but also loves him for it*

13 notes


Text

Self-Diagnosis DID Tests

by x (with help from a few others, mainly real-life buds)

We decided to post this in case you need some help self-diagnosing to determine whether you are a DID/OSDD system. It is not the best, but this is what we personally did to figure things out. You can try other methods, of course; people experience things differently. Once again, this is a personal list and not 100% accurate. We are glad if it helps, but if it doesn't, you can always find other ways.

Before you start (this part is optional), it's recommended to have at least one real-life person you trust (plus the alters who are taking part) to accompany you through some of these. If you can't find anyone, you can just pick the items on this list that don't require another person in another body.

The whole thing requires at least two participating alters to cooperate, plus a medium to write down your results (a notebook, phone notes, anything). A notebook is recommended, though: it's faster, and it might help you recognize differences in handwriting between the participating alters.

It's also HIGHLY RECOMMENDED to still see a professional even if you end up sure of the results. At the very least, bring your results to a professional for confirmation. This whole thing can only help you figure things out; it is not a confirmation in itself.

It's not much, but we hope it helps.

Try taking a picture of yourself when you are fronting, and tell the alter to do the same when they front after you. Try a co-fronting selfie and a totally -alone- selfie too.

Try to focus on switching and record it on video. Watch it afterwards.

Each of you, recite your own memories: which ones are blurry and which are not. Try to write those down.

Look at your favorite series/character/anything and see whether your reaction and your alter's reaction to it differ.

Have one of you write something down for the other while fronting, and have the other read it later.

Try to "guess" (or remember) what they wrote before opening it to read.

If you have another person helping you, ask them to help write down your differences in behavior, even small ones (and facial details, such as a difference in eye width).

Have your friend show one alter something, anything, simple enough to memorize. After a switch, have the other alter recite what it was.

Identify switch triggers and reactions to being called by certain names (having a friend helps more here).

Try all of the above with the non-fronting alter switching co-consciousness on and off, and see if there are differences. (I don't even know how to put it in words, because somehow I am always co-con.) Note the difference between remembering clearly, remembering blurrily, and remembering-but-it's-on-the-tip-of-your-tongue, and write them all down.

Examine all of your handwriting.

When you are not fronting but the other is, try to notice their behavior in public, and later write it down under their name. Let your alter do the same for you, including speech patterns and habits. (Much easier with a friend, really.)

Try talking (in your head or out loud) while co-fronting and see if there is any difference.

Most of these are mainly meant to determine whether you really differ from your alter (if you doubt whether you are the same person or not), and some may focus a bit too much on a few aspects. If the results show differences, memory lapses and distinct emotions, you can tell that you and that alter are two different people.

Sorry it's not really written in the best way, but I kinda tried. Feel free to add.

#dissociative identity disorder#did#actuallydid#xlog#help#self help#self diagnosis#idk what to tag this as

46 notes


Text

ZHANG YIXING from zhang yixing's studio (weibo)

#zhang yixing#lay zhang#exo#exo lay#kpop#cpop#exosnet#my gifs#yixingnet#exo gifs#from yixing's weibo#from yixing's xlog#weibo#k idol#c idol#chinese idol

193 notes


Video

tumblr

210723 Lay’s Studio - Xlog Red

14 notes


Text

can anyone bring my will to make gifsets back because where did she go

#personal#i also would like to fire whoever in yixing's team is editing his xlogs bc those picsart effects and frames are hideous

1 note


Text

OpenSER xlog

OpenSER xlog code

Typical module-loading lines are `loadmodule textops.so`, `loadmodule maxfwd.so` and `loadmodule xlog.so`; a Kamailio config may load `textops.so`, `siputils.so` and `xlog.so`. One Kamailio sample config describes itself as implementing "the basic P-CSCF functionality", and Kamailio configs enable optional features with `#!define WITH_MYSQL`, `#!define WITH_AUTH` and `#!define WITH_USRLOCDB`.

Setting the usrloc module's `db_mode` parameter to 2 tells OpenSER to store contact information in MySQL. Strictly, mode 2 is write-back: contacts are still kept in memory and flushed to the database periodically, whereas mode 3 uses the database only. The mysql module is therefore needed to store user locations in a database. The xlog() function is used to log the processing details on the screen, and the OpenSER server is run in debug mode as a terminal process.

From forum posts: "The goal of the implementation is to load balance requests from my SIP provider to a farm of 10 Asterisk servers for media processing." and "Hey, I am new to OpenSIPS/OpenSER and just finished writing my first config."

In Kamailio, we often wish to add headers, view the contents of headers and act on them, or rewrite headers (with a disclaimer about not rewriting Vias, as that goes beyond the purview of a SIP …). The SIP RFC allows multiple headers to share the same name; for example, it is very common for a request to carry several Via headers.

The xlog module makes it possible to print user-formatted log or debug messages from OpenSIPS scripts, similar to the printf function: a C-style printf specifier is replaced with a part of the SIP request or another system variable. Section 1.2, Implemented Specifiers, shows what can be printed out. `$$` is the pseudo-variable marker and represents the character `$`. Section 3.2, "The list of pseudo-variables in OpenSER", lists the predefined pseudo-variables in alphabetical order.

`log_level` gets or sets the logging level of one or all OpenSIPS processes. Parameters: level (optional), the logging level from -3 to 4 (see the meaning of the values); pid (optional), a Unix PID validated by OpenSIPS. With no argument, `log_level` prints a table with the current logging levels of all processes. If a level is given, it is set for every process; if a pid is also given, the level changes only for that process.

`subscribers_list` lists event subscribers, for example `opensips-cli -x mi subscribers_list E_RTPPROXY_STATUS` or `opensips-cli -x mi subscribers_list E_RTPPROXY_STATUS unix:/tmp/event.` Parameters: an event name (optional) and socket (optional), the external application socket. With no parameter, the command returns information about all events and their subscribers; if an event is specified, only the external applications subscribed to that event are returned; if the socket is also specified, only that one subscriber's information is returned.

For the command that lists TCP/TLS connections, the output is an array with one object per connection, with the attributes ID, type, state, source, destination, lifetime and alias port.

OpenSIPS is a GPL implementation of a multi-functionality SIP server that aims to deliver a high-level technical solution (performance, security and …).

Changelog: "Updated to latest upstream version: 1.0.1. Added support for multiple modules, including accounting, mysql, sms, xlog."
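A minimal config fragment showing how these pieces fit together, assuming OpenSER 1.x-style syntax. Module file names (for example `mysql.so`) and paths vary between OpenSER, Kamailio and OpenSIPS releases, so check your version's documentation; the DB URL and credentials below are placeholders, not values from the original posts. It sets usrloc's `db_mode` to 2 and logs each request with xlog() using the `$rm` (method), `$fu` (From URI), `$ru` (Request-URI) and `$si` (source IP) pseudo-variables.

```
# Sketch only: OpenSER 1.x-style config; DB URL and credentials are placeholders
loadmodule "mysql.so"
loadmodule "usrloc.so"
loadmodule "maxfwd.so"
loadmodule "textops.so"
loadmodule "xlog.so"

# db_mode 2 = write-back: contacts cached in memory, flushed to MySQL periodically
modparam("usrloc", "db_mode", 2)
modparam("usrloc", "db_url", "mysql://user:password@localhost/openser")

route {
    # Log method, From URI, Request-URI and source IP at INFO level
    xlog("L_INFO", "$rm from $fu to $ru (src $si)\n");

    # xdbg() prints a formatted message at debug level
    xdbg("processing $rm for $ru\n");
}
```

With the server running in debug mode in a terminal, these lines appear on screen as each request is routed.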


The command lists all ongoing TCP/TLS connections from OpenSIPS. Pseudo-variables can be used with the following OpenSER modules: avpops, through the function avp_printf(), and xlog, through the functions xlog() and xdbg().
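To illustrate the substitution idea (a `$` marker followed by a name is replaced with a value taken from the SIP message, and `$$` yields a literal `$`), here is a toy sketch in Python. It is not OpenSER's implementation: the `expand` function, the dictionary standing in for a parsed message, and the `<null>` placeholder for unknown names are all assumptions made for this example.

```python
import re

# Toy pattern: "$$" (escaped marker) or "$" followed by a lowercase name
PV_PATTERN = re.compile(r"\$(\$|[a-z]+)")


def expand(fmt: str, msg: dict) -> str:
    """Replace $name tokens with values from msg (hypothetical helper)."""
    def repl(m: re.Match) -> str:
        name = m.group(1)
        if name == "$":
            return "$"  # "$$" represents a literal "$"
        # Unknown names become "<null>" (a choice made for this sketch)
        return msg.get(name, "<null>")
    return PV_PATTERN.sub(repl, fmt)


# Stand-in for a parsed request; keys mirror $rm, $fu, $ru, $si
msg = {
    "rm": "INVITE",
    "fu": "sip:alice@example.com",
    "ru": "sip:bob@example.com",
    "si": "192.0.2.10",
}
print(expand("$rm from $fu to $ru (src $si)", msg))
```

A single regular-expression pass keeps the escape handling simple: because `$$` is matched as one token, the second `$` is never mistaken for the start of a new name.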

0 notes

Photo

Yixing // Xlog Blue 200914

#zhang yixing#yixing#lay#exo#exosnet#the smirk dimple combo deadly as ever#lay zhang#xinggif#mine#gif

1K notes


Text

[ENG] EXO YouTube Channel/Vlogs Updates - 2022

EXO

Mihawk Wedding Vlog

XIUMIN

220220 OrpheuXiu Vlog

221007 Kangta 'Street Alcohol Fighter' Vlog

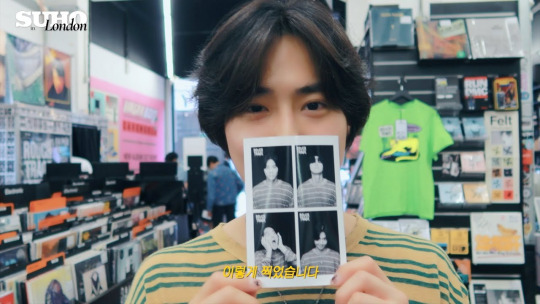

SUHO

220322 Suho's Day Vlog

220426 'Finally GF Met EXO'

220624 SUHO in Dubai Pt.1

220701 SUHO in Dubai Pt.2

220907 SUHO in London

LAY

220110 Xlog Red

220114 Zhang Yixing watches the fireworks

220129 Chinese New Year Gala BTS Vlog

220204 Xlog practising 'Wish the World Well-being' Stage

220211 Xlog Blue BTS of "Health to the World"

220220 Chromosome Ent. Update - Making Dumplings

220401 Xlog Red Yixing performing the magic trick

220415 Xlog Red Process of Creating Music

220429 Xlog Red Boss is Eating Well

220708 BTS Youku live stream

220812 Xlog Blue BTS Concert

220819 Xlog Blue BTS Concert

220826 Xlog Blue BTS Concert

220902 Xlog Blue BTS Concert

220909 Xlog Blue Rap of China Related

220916 Xlog Blue BTS Concert

220930 Xlog Blue Veil MV Vlog

221007 One Day in Beijing

221021 Third postcard from the sea

221126 Ninth Postcard

221208 VOGUE BTS

221227 Glass Man Cover Shoots BTS

BAEKHYUN

220117 Favourite 'haha' | ceramic art

220214 Valentine's Day Flower Arrangement

220317 Mongryong Walk

220417 Overcoming | Painting with Oil Pastel

220517 With Love Light Friend

220617 Kyoong Who Reads Books

220717 Kyoong Radio

220817 Mukbang ASMR

220917 Chuseok homecoming playlist

221017 Kyoong Who Reads Books

221111 Burning Artistic Spirit | Pepero Making

221217 Kyoong Radio

CHEN

220511 "Hold You Tight"

220623 "An Unfamiliar Day"

220812 "You Never Know"

220821 Dare U Naeju London Vlog

221209 "Reminisce"

221223 "On The Snow"

CHANYEOL

220726 NNG Update - nature_1 hour

D.O.

221029 Lee Sieon 'Bad Prosecutor' Vlog

KAI

220105 Jonginie's Peaches Vlog

220130 KAIst EP. 05

220417 KAIst EP. 06

220531 KAIst EP. 07

220710 Dare U Naeju Germany Vlog

220822 Jonginie’s various schedule

221116 Dare U Naeju Paris Vlog

SEHUN

220108 Choi Heeseo’s ‘Now, We Are Breaking Up’ Vlog

25 notes


Photo

yixing :: xlog blue 200920

#zhang yixing#yixingnet#yixing#exo#mgroupsedit#bia.gifs#hello to my first yixing gifs on this blog!!!#that last gif flex!

234 notes


Photo

《joker》photo shoot behind

400 notes


Text

We get MOREEEE???

youtube

4 notes


Photo

xlog blue: behind the scenes of yixing x Fantasy Westward Journey

2K notes


Text

too tired to keep this personal log updated but we gained a new member, a new kid.

dunno what to call them rn but we're calling them chibi for now. a literal kid, messy when talking. but for some reason they have full memory access. perhaps they have been here for longer than we thought? we don't know tho. but they front a lot for some reason, through sudden switches. tho then again we're all pretty drained these days. but chibi has been nice and agreeable anyway when we talk about the fronting, kinda cute. they just want to help and kinda try to defend us in some way too.

chibi is bilingual, barely able to say a full sentence without braining too hard. very honest. nice kid overall, even tho i worry about how our system will function in public now.

0 notes