#filter bubbles

Text

Unravelling the gender politics of young men [Pt 2]

This is the second of three pieces on gender, politics, and young people. Part 1 is here.

—

There is some unfinished business from the previous piece on the gendered politics of young people. ‘Young’ is 18-29.

So let’s start, briefly, with the politics of young women, radicalised in the last decade (in the UK, Germany, and the US), and the last half decade in South Korea.

In his FT piece…

0 notes

Text

The Algorithm's Effect on Users

How Does The Algorithm Engage with Users?

In the Gendered Lives reading “Media Representations and the Creation of Knowledge,” Kirk and Okazawa-Rey write briefly about the role of social media in news circulation and how it works. They state, “As a condition of using such sites, these companies have unprecedented access to our personal information to direct specific advertising our way. Moreover, online business models that seek to maximize ‘clicks’ to earn advertising revenue encourage posting information that is ‘click-worthy’ regardless of whether it is accurate” (Kirk and Okazawa-Rey, 2020). In other words, a common method used by advertisers and social media users to gain more interaction with their content is to appeal to the need for belief affirmation and curiosity.

Algorithms used by TikTok and other social media platforms filter the content presented to each user so that they are not presented with views or news that challenge their personal beliefs, essentially submerging them in an online sphere of constant positive feedback for every opinion or experience they have. This has a degrading effect on media literacy: people who see a post that agrees with their values or beliefs are less likely to question it. If you like pineapple on pizza, and you see someone else on TikTok who claims that pineapple is the best possible topping for pizza, then you aren’t going to question that post because you already believe it to be true.

This effect is reinforced by the fact that people often get their news from accounts that post about the news, and these accounts are more prone to bias since they do not operate the same way as newspapers or newscasters. The same level of fact-checking or extensive research simply doesn’t exist on a platform where it’s so easy to post and repost, which leads to the rapid spread of misinformation and bias. TikTok is also somewhat infamous for its young user base, consisting largely of teens and adults under 30, and even younger children if they are given access to a device. Young and impressionable minds will be the most affected by the biases they take in from TikTok because they don’t yet have the maturity, critical thinking skills, or media literacy to see that the content they are fed might be subverting their thinking. Instead of learning how to think about real-world events and other people from their education and community, they’re learning through an algorithm. So, when presented with information or ideas that may be false or that reflect negatively on a group of people, users will absorb them without question. That absorption may take the form of reposting, liking, or simply scrolling past the post without challenging it.

How Does The Algorithm Warp Users’ Sense of Self & Others?

A small social science review paper on how TikTok’s algorithm shapes users’ identities discusses in more detail how people find themselves trapped in a cycle of repeatedly consuming content about, by, and for the same groups. The authors write, “Due to the opaqueness or “black box-ed” nature of algorithms, users experience them just through their perceptions. Because what people see on social media is largely personalized, it shapes how people see themselves and others (Bhandari and Bimo 2022) but also impacts their behavior on social media platforms (DeVito 2021)” (Ionescu & Licu, 2023). This means that users who do not think critically about the information they see take in opinions without considering alternate perspectives. Learning hatred without empathy means these users, in their filter bubbles, will continue to perpetuate hatred.

In their research, Ionescu and Licu also talk about how these “filter bubbles” create what’s called a crystal framework, where “The main characteristics of the “crystal” were: reflective (parts of their self-concept were reflected back to them in the feed); multifaceted; has a refinement strategy; is diffractive” (Ionescu & Licu, 2023). When placed into filter bubbles, users are put into a network of fellow users who share their ideologies and opinions, meaning that they are constantly surrounded by others who think, act, and look the same as them. This can snowball into a sort of ‘us vs. them’ mentality on social media platforms. As users engage with others of a like mind (a like mind which the platform’s algorithm has also curated), they gradually take in opinions about, and potentially hatred of, groups who hold differing opinions, without taking the chance to think critically about what they are seeing of those groups.

When young users are put into one of these filter bubbles where all they see is a continuous stream of derogatory and discriminatory content, it normalizes the attitudes and language of misogyny. It generates a misogynistic worldview that the mind takes on.

How Do We See “Filter Bubbles” and Internalized Hatred Manifesting?

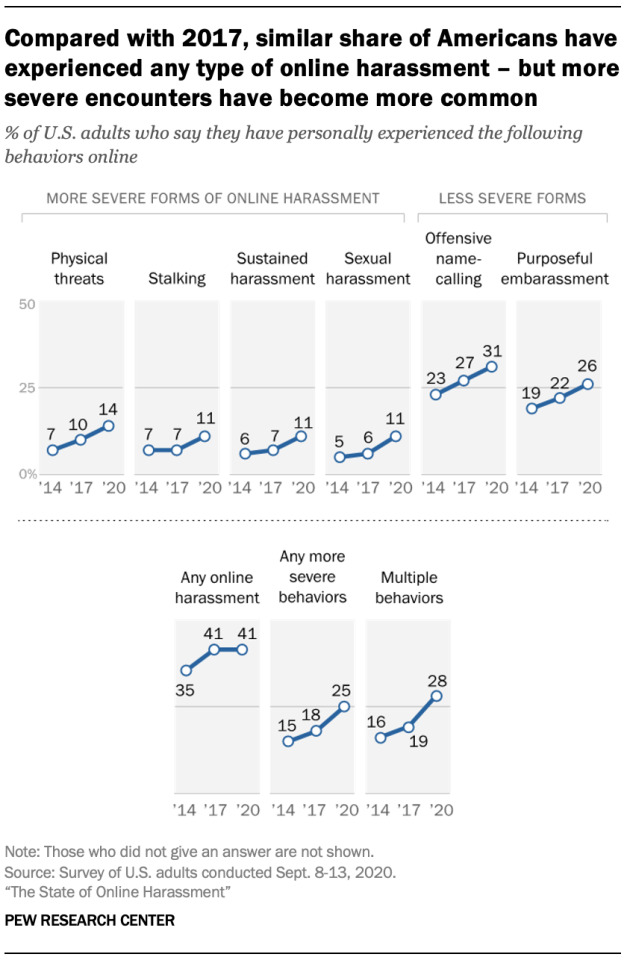

The way this influx of filtered content shapes how users see themselves in relation to others can manifest in the form of online harassment. Misogynists and others with similar beliefs, when presented with content advancing a more feminist perspective, will lash out because they may feel that their sense of identity and the way they see the world is being attacked. A Pew Research Center survey on online harassment found that the overall amount of online harassment has increased since 2017, and the report notes, “Beyond politics, more also cite their gender or their racial and ethnic background as reasons why they believe they were harassed online” (Vogels, 2021).

As shown in the data, cases of online harassment have increased notably since 2017. We cannot say for certain, but it may be unwise to rule out TikTok’s notoriously addictive algorithm as playing a part in this increase, as the platform was released in September 2016. It is also worth noting that the amount of harassment faced by users varies depending on their race, with Black and Hispanic users saying they often find themselves harassed due to their race (Vogels, 2021). This indicates that the backlash faced by women on TikTok is not only gendered but also racial. The report also states that “50% of lesbian, gay or bisexual adults who have been harassed online say they think it occurred because of their sexual orientation” (Vogels, 2021). The takeaway here is that these trends and the way they generalize women result in more persecution targeting minority groups of women.

We find that through the perpetuation of misogyny, racism, homophobia, and transphobia in these filter bubbles, online spaces are made more dangerous to individuals belonging to minority groups.

How Does This Relate to a Watered-Down and Dehumanizing Image of Women?

Trends such as girl math versus boy math and the Roman Empire trend are made general to garner as large an audience as possible. While there may be a target audience for these trends, they will inevitably gain the attention of users who may not relate to the experiences or who find them distasteful. This means that trends meant to be somewhat silly and uniquely catered to women with specific experiences may suddenly be co-opted by people with deep internalized prejudices and turned into something hateful or degrading. We can watch these trends devolve into another way to simply accuse women of being too unintelligent, too childish, too one-minded, fit only for sex & reproduction & motherhood, and simply not human enough to garner the respect of men.

Trends that gain a lot of attention on TikTok are used as a tool by and for misogynists to reaffirm what they already believe, that “modern” women are impudent and dismissive. Algorithms ensnare people into hateful and sexist communities that teach them how to twist every aspect of a woman to fit their mold of what a woman should be. They then go out into other parts of social media to take trends and content posted by women and either harass them for it or take the trend and turn it into a narrative demeaning women.

SOURCES

Ionescu, Claudiu Gabriel, and Monica Licu. “Are TikTok Algorithms Influencing Users’ Self-Perceived Identities and Personal Values? A Mini Review.” Social Sciences 12, no. 8: 465. May 30, 2023. https://doi.org/10.3390/socsci12080465

Kirk, Gwyn, and Margo Okazawa-Rey. “Gendered Lives: International Perspectives.” New York: Oxford University Press, 2020.

Vogels, Emily A. “The State of Online Harassment.” Pew Research Center: Internet, Science & Tech, January 13, 2021.

#Tiktok#Ruby#Filter Bubbles#algorithm#How does it affect brains?#Users#doomscrolling#my videos on tiktok are all the same content is it because of this?#yes it is

0 notes

Text

Sometimes, I feel like I have found a way to mildly transcend any clique that was made for me, and stumble upon messages for another. It gives me a taste of the filter bubbles. While I am certainly in one as well, sometimes I can sense where they are made.

Self

1 note

Text

Breaking Out of the Information Cocoon Room: Why Diversifying Your Information Sources is Crucial

In today’s digital age, we have access to more information than ever before. From news articles to social media posts, we are constantly bombarded with information from a wide range of sources. However, with so much information available, it’s becoming increasingly difficult to separate fact from fiction. This is where the concept of the “Information Cocoon Room” comes into play.

#critical thinking#diversifying information sources#echo chambers#filter bubbles#Information Cocoon Room#Media Literacy

0 notes

Text

🫧🫧🫧

#xiaoven#xiao#venti#genshin impact#genshin xiao#genshin venti#an AU where Xiao is a mermaid that lives in Venti's bathtub#they often have bubble baths together#which leads to shenanigans 👀👀#take that as you will lol#anyway was trying to capture that 90s anime filter type of thing i tried my best 🥲#art tag

331 notes

Text

in another universe TLT is a coming of age fantasy YA tragedy about Silas having to leave home with his nephew/guardian at the age of 16 to seek glory for his house and then not only LOSING that guardian in a terrible mistake, but also losing faith in his culture/religion as a whole as he rejects everything he has been taught to stand for and want. And as he stands up for himself in his final moments and realizes his terrible miscalculation he is KILLED by the shambling, possessed body of his guardian only for his soul to be then swept away and thrust into a confusing dreamscape where nothing is real and once again he must reject his world in order to chase after something new. the universe does not forgive but maybe he can force its hand

#trb.txt#silas octakiseron#thank god we got GIDEON NAV instead#sorry i woke up at 3am w the thirsties and remembered silas is only 16....#would of course never trade him for gideon nav but i do thnk he is given shockingly little sympathy by both the narrative & also the reader#but like that is likely by design he is SO mean to gideon and she filters pretty much everything we see of him except the tiny bit#in the river bubble#but i do think abt how he is 16 and harrow is 17-turns-18 and issac is like 14 idk!!!!#i am not sure what i am saying anymore#i like the side characters? im going back to bed

271 notes

Text

Kinnporsche x textposts pt. 19: The It Couple [more]

BONUS:

#this better show up or im gonna give up for good#i figured out it was the pic of kinn hugging porsches midriff while his hair was being dried that was too n*fw for tumblr 😞#its a shame it was such a cute screencap and i had a great post paired with it too :((( gonna have to put it under the wavy filter or smth#kinnporsche#kinnporsche memes#kptp:mine#oh the speech bubble is from 'fungirl' by elizabeth pich btw. go read its a nice one!!!!!#kpts#kp

257 notes

Text

I have posted this before but then i edited it

#Beeper#photo manipulation#you can do this to your chickens as well#Use the artistic filter lines only on one layer then posterize the layer under it then do some corrections since#it isnt perfect#then utilize Clip studios built in chat bubble makers then boom done

319 notes

Text

guys i think ive solved all of shipping

(click for full resolution)

if only there was a joke to be made between apples and corporate tech giants

obligatory alt ending:

side by side with the original

#i took 3000 years trying to draw lucifers wings only to cover them in thought bubble#100 step plan to get vox over alastor#(spoiler it doesnt work vox is still polyamorous)#but it would be SO FUNNY#hazbin hotel#fuck how does tagging work#hazbin lucifer#hazbin vox#alastor#vox#staticapple#aroace alastor#onewaybroadcast#fucking#zero sided radio apple idk???#<adding a space so it doesnt fuck with anyones searches#fuck do i even tag the other characters#ehh#valentino#<him and him alone for filtering#applestatic#appletv#which is another one i see sometimes#one sided radiostatic#i think ive covered all my bases#myart#THE READ MORE BROKE AAAAAAAA#should be better now

123 notes

Text

you let tim wear an a for two (2) preseason games and he forgets how to act

#i want to wrap him bubble wrap and then push him onto the ground#every single one of these players has so much more nhl experience than you timothy#tim stützle#ottawa senators#sens lb#why does this look like it has a shitty instagram filter on it

160 notes

Text

i made some modern arc fushi icons

#fnae#fumetsu no anata e#to your eternity#no fancy filters or anything#but i edited out speech bubbles and all that#some are LQ because the panels were small but sdfghdsjkdfghj

135 notes

Text

Guys, they lived in a literal filter bubble.

#in case you don't know a filter bubble is when you are only fed content that matches/affirms/suits your beliefs online#doctor who#dot and bubble#dw spoilers

27 notes

Text

A story in two parts.

#I’ve been wanting to draw Caine x bubble for months tho#being drunk tired finally removed my filter long enough to do it

24 notes

Text

Random photos of my pool's water

#this was above surface and i kept asking my sister to splash the water to get the bubbles LMAO#also at night with the pool light on i didn't use filters

12 notes

Text

Bubble bath. 🫧 💜

9 notes

Text

#mood#alternative moodboard#messy moodboard#white moodboard#stray kids moodboard#skz moodboard#moodboard#moodb#lee know moodboard#lee know icon#icon lee know#lee know layouts#layouts lee know#layouts#icons#skz layouts#icons stray kids#lee know with filter#lee know bubble#lee minho layouts#lee minho stray kids#layouts lee minho#lee minho icon#icon lee minho#Spotify#solo harry#harry styles songs

216 notes
