#hpc services

Text

Future Applications of Cloud Computing: Transforming Businesses & Technology

Cloud computing is revolutionizing industries by offering scalable, cost-effective, and highly efficient solutions. From AI-driven automation to real-time data processing, the future applications of cloud computing are expanding rapidly across various sectors.

Key Future Applications of Cloud Computing

1. AI & Machine Learning Integration

Cloud platforms are increasingly being used to train and deploy AI models, enabling businesses to harness data-driven insights. The future applications of cloud computing will further enhance AI's capabilities by offering more computational power and storage.

2. Edge Computing & IoT

With IoT devices generating massive amounts of data, cloud computing provides the processing and storage capacity to handle it. Edge computing, which processes data close to where it is generated, will reduce latency and improve performance.

3. Blockchain & Cloud Security

Cloud-based blockchain solutions will offer enhanced security, transparency, and decentralized data management. As cybersecurity threats evolve, the future applications of cloud computing will focus on advanced encryption and compliance measures.

4. Cloud Gaming & Virtual Reality

With high-speed internet and powerful cloud servers, cloud gaming and VR applications are set to grow rapidly. Because rendering happens on remote servers, cloud computing can deliver immersive entertainment and education experiences with minimal local hardware.

Conclusion

The future applications of cloud computing are poised to redefine business operations, healthcare, finance, and more. As cloud technologies evolve, organizations that leverage these innovations will gain a competitive edge in the digital economy.

🔗 Learn more about cloud solutions at Fusion Dynamics! 🚀

#Keywords#services on cloud computing#edge network services#available cloud computing services#cloud computing based services#cooling solutions#cloud backups for business#platform as a service in cloud computing#platform as a service vendors#hpc cluster management software#edge computing services#ai services providers#data centers cooling systems#https://fusiondynamics.io/cooling/#server cooling system#hpc clustering#edge computing solutions#data center cabling solutions#cloud backups for small business#future applications of cloud computing

Text

Unveiling the Future of AI: Why Sharon AI is the Game-Changer You Need to Know

Artificial Intelligence (AI) is no longer just a buzzword; it’s the backbone of innovation in industries ranging from healthcare to finance. As businesses look to scale and innovate, leveraging advanced AI services has become crucial. Enter Sharon AI, a cutting-edge platform that’s reshaping how organizations harness AI’s potential. If you haven’t heard of Sharon AI yet, it’s time to dive in.

Why AI is Essential in Today’s World

The adoption of artificial intelligence has skyrocketed over the past decade. From chatbots to complex data analytics, AI is driving efficiency, accuracy, and innovation. Businesses that leverage AI are not just keeping up; they’re leading their industries. However, one challenge remains: finding scalable, high-performance computing solutions tailored to AI.

That’s where Sharon AI steps in. With its GPU-based computing infrastructure, the platform offers solutions that are not only powerful but also sustainable, addressing the growing need for eco-friendly tech.

What Sets Sharon AI Apart?

Sharon AI specializes in providing advanced compute infrastructure for high-performance computing (HPC) and AI applications. Here’s why Sharon AI stands out:

Scalability: Whether you’re a startup or a global enterprise, Sharon AI offers flexible solutions to match your needs.

Sustainability: Their commitment to building net-zero energy data centers, like the 250 MW facility in Texas, highlights a dedication to green technology.

State-of-the-Art GPUs: Incorporating NVIDIA H100 GPUs ensures top-tier performance for AI and HPC workloads.

Reliability: Operating from U.S.-based data centers, Sharon AI guarantees secure and efficient service delivery.

Services Offered by Sharon AI

Sharon AI’s offerings are designed to empower businesses in their AI journey. Key services include:

GPU Cloud Computing: Scalable GPU resources tailored for AI and HPC applications.

Sustainable Data Centers: Energy-efficient facilities ensuring low carbon footprints.

Custom AI Solutions: Tailored services to meet industry-specific needs.

24/7 Support: Expert assistance to ensure seamless operations.

Why Businesses Are Turning to Sharon AI

Businesses today face growing demands for data-driven decision-making, predictive analytics, and real-time processing. Traditional computing infrastructure often falls short, making Sharon AI’s advanced solutions a must-have for enterprises looking to stay ahead.

For instance, industries like healthcare benefit from Sharon AI’s ability to process massive datasets quickly and accurately, while financial institutions use their solutions to enhance fraud detection and predictive modeling.

The Growing Demand for AI Services

Searches related to AI solutions, HPC platforms, and sustainable computing are increasing as businesses seek reliable providers. By offering innovative solutions, Sharon AI is positioned as a leader in this space.

If you’re searching for providers or services such as GPU cloud computing, NVIDIA GPU solutions, or AI infrastructure services, Sharon AI is a name you’ll frequently encounter. Their offerings are designed to cater to the rising demand for efficient and sustainable AI computing solutions.

Text

#High-Performance Computing HPC as a Service Market#High-Performance Computing HPC as a Service Market Share#High-Performance Computing HPC as a Service Market Size#High-Performance Computing HPC as a Service Market Research#High-Performance Computing HPC as a Service Industry#What is High-Performance Computing HPC as a Service?

Text

800G OSFP - Optical Transceivers - Fibrecross

800G OSFP and QSFP-DD transceiver modules are high-speed optical solutions designed to meet the growing demand for bandwidth in modern networks, particularly in AI data centers, enterprise networks, and service provider environments. These modules support data rates of 800 gigabits per second (Gbps), making them ideal for applications requiring high performance, high density, and low latency, such as cloud computing, high-performance computing (HPC), and large-scale data transmission.

Key Features

OSFP (Octal Small Form-Factor Pluggable):

Features 8 electrical lanes, each capable of 100 Gbps using PAM4 modulation, achieving a total of 800 Gbps.

Larger form factor compared to QSFP-DD, allowing better heat dissipation (up to 15W thermal capacity) and support for future scalability (e.g., 1.6T).

Commonly used in data centers and HPC due to its robust thermal design and higher power handling.

QSFP-DD (Quad Small Form-Factor Pluggable Double Density):

Also uses 8 lanes at 100 Gbps each for 800 Gbps total throughput.

Smaller and more compact than OSFP, with a thermal capacity of 7-12W, making it more energy-efficient.

Backward compatible with earlier QSFP modules (e.g., QSFP28, QSFP56), enabling seamless upgrades in existing infrastructure.
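The lane arithmetic behind both form factors can be checked with a short sketch. It uses only the figures quoted above (8 lanes at 100 Gbps each, PAM4 modulation); the function names are hypothetical, and the symbol-rate figure is a nominal value that ignores FEC and encoding overhead, so real modules signal somewhat faster.

```python
# Illustrative arithmetic only; the constants mirror figures quoted in the text.
LANES = 8                 # electrical lanes per module (OSFP and QSFP-DD)
LANE_RATE_GBPS = 100      # per-lane data rate in Gbps
PAM4_BITS_PER_SYMBOL = 2  # PAM4 has 4 amplitude levels, i.e. 2 bits per symbol


def module_throughput_gbps(lanes: int, lane_rate_gbps: float) -> float:
    """Aggregate module throughput as lanes x per-lane rate."""
    return lanes * lane_rate_gbps


def nominal_symbol_rate_gbaud(lane_rate_gbps: float, bits_per_symbol: int) -> float:
    """Symbol rate needed per lane, ignoring FEC and encoding overhead."""
    return lane_rate_gbps / bits_per_symbol


total = module_throughput_gbps(LANES, LANE_RATE_GBPS)
baud = nominal_symbol_rate_gbaud(LANE_RATE_GBPS, PAM4_BITS_PER_SYMBOL)
print(f"{total} Gbps total, about {baud} GBd per lane before overhead")
```

Because PAM4 carries two bits per symbol, each lane needs roughly half the symbol rate that simple on/off signalling would require for the same data rate.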

Applications

Both form factors are tailored for:

AI Data Centers: Handle massive data flows for machine learning and AI workloads.

Enterprise Networks: Support high-speed connectivity for business-critical applications.

Service Provider Networks: Enable scalable, high-bandwidth solutions for telecom and cloud services.

Differences

Size and Thermal Management: OSFP’s larger size supports better cooling, ideal for high-power scenarios, while QSFP-DD’s compact design suits high-density deployments.

Compatibility: QSFP-DD offers backward compatibility, reducing upgrade costs, whereas OSFP often requires new hardware.

Use Cases: QSFP-DD is widely adopted in Ethernet-focused environments, while OSFP excels in broader applications, including InfiniBand and HPC.

Availability

Companies like Fibrecross, FS.com, and Cisco offer a range of 800G OSFP and QSFP-DD modules, supporting various transmission distances (e.g., 100m for SR8, 2km for FR4, 10km for LR4) over multimode or single-mode fiber. These modules are hot-swappable, high-performance, and often come with features like low latency and high bandwidth density.

For specific needs, such as short-range (SR) or long-range (LR) transmission, choosing between OSFP and QSFP-DD depends on your infrastructure, power requirements, and future scalability plans.

Text

Stray flashlight sucked by F-35 engine caused $4 million in damage

By Fernando Valduga 01/19/2024 - 20:18 in Incidents, Military

The F-35's ALIS system should soon be replaced by a new cloud-based platform.

A handheld flashlight left inside the engine inlet of a USAF F-35 fighter was sucked into the engine during a maintenance operation at Luke Air Force Base, Arizona, in March 2023, causing almost $4 million in damage, according to a new accident investigation report.

The investigation, released on January 18, cited the maintainer's failure to follow joint and U.S. Air Force guidelines as the main cause of the accident, which damaged the $14 million engine badly enough that it could not be repaired locally.

However, the investigators also cited problems with the F-35's Autonomic Logistics Information System (ALIS) as a substantially contributing factor. ALIS is intended to integrate operations, maintenance, prognostics, supply chain, customer support services, training and technical data, but the system has struggled with a lack of real-time connectivity, clumsy interfaces and much more.

As a result, the report states, “the substantial number of checklists and the difficulty in accessing the corrections cause complacency when users consult the necessary maintenance procedures”.

The accident in question occurred on March 15, when a three-person maintenance team was completing a Time Compliance Technical Directive on the F-35 to “install a measurement buffer on the engine fuel line and perform a leak check on the new measurement buffer while the engine was running,” according to the report.

After the plug was installed, one maintainer conducted a tool inventory check before another maintainer performed a "Before Maintenance Operations" inspection of the engine. For this, the maintainer used a flashlight to inspect the engine inlet and left it on the edge of the inlet.

The maintainer who performed the engine inspection then ran the engine for five minutes to check for fuel leaks. During this time, the cockpit showed no indication of foreign object damage to the engine, but when the engine was shut down, the team reported hearing abnormal noises. The maintainer who ran the engine performed another inspection and identified the damage, while the maintainer who completed the first tool inventory check performed another and noticed that a flashlight was missing.

In total, the engine suffered damage to the second stage rotor, the third stage rotor, the fifth stage rotor, the sixth stage rotor, the fuel nozzle, the bypass duct, the high pressure compressor (HPC), the high pressure turbine (HPT) and the variable fan inlet vane, valued at US$ 3,933,106.

Investigators found that the maintainer who conducted the inspection before the engine run did not follow the Joint Technical Data warnings to remove all loose items before entering the aircraft inlet and to ensure that all engine inlets and exhausts were free of foreign and loose objects. The airman also did not follow the instructions of the Department of the Air Force (DAF) to "perform a visual inventory" of the toolkit after completing each task.

Finally, the report also concluded that the local practice of the 62nd Aircraft Maintenance Unit did not fully follow the DAF instructions, which require the individual who signed out the toolkit to perform the visual inventory checks. Instead, the unit's practice was to have the individual who ran the engine conduct the inventory check. As a result, the two airmen involved in the accident each thought that the flashlight had been accounted for.

The ALIS factor in the accident marks another problem for the troubled F-35 sustainment enterprise. The program has been plagued by high costs and technical problems, and lawmakers have expressed frustration with ALIS before. The Joint Program Office is in the process of moving to a new "Operational Data Integrated Network" (ODIN), but officials have described it as a gradual effort; it has already been in the works for four years.

Source: Air & Space Forces Magazine

Tags: ALIS, Military Aviation, F-35 Lightning II, Incidents, USAF - United States Air Force / U.S. Air Force

Fernando Valduga

Aviation photographer and pilot since 1992, he has participated in several events and air operations, such as Cruzex, AirVenture, Dayton Airshow and FIDAE. He has works published in specialized aviation magazines in Brazil and abroad. He uses Canon equipment during his photographic work in the world of aviation.

Text

A3 Ultra VMs With NVIDIA H200 GPUs Pre-launch This Month

Powerful infrastructure advancements for your AI-first future

To improve performance, ease of use, and cost-effectiveness for customers, Google Cloud made enhancements throughout the AI Hypercomputer stack this year. At the App Dev & Infrastructure Summit, Google Cloud announced:

Trillium, Google’s sixth-generation TPU, is currently available for preview.

Next month, A3 Ultra VMs with NVIDIA H200 Tensor Core GPUs will be available for preview.

Google’s new, highly scalable clustering system, Hypercompute Cluster, will be accessible beginning with A3 Ultra VMs.

C4A virtual machines (VMs), based on Axion, Google’s custom Arm processor, are now generally available.

AI workload-focused enhancements to Titanium, Google Cloud’s host offload capability, and to Jupiter, its data center network.

Google Cloud’s AI/ML-focused block storage service, Hyperdisk ML, is generally available.

Trillium: A new era of TPU performance

TPUs power Google’s most sophisticated models like Gemini, well-known Google services like Maps, Photos, and Search, as well as scientific innovations like AlphaFold 2, whose developers were recently awarded a Nobel Prize. Google Cloud users can now preview Trillium, Google’s sixth-generation TPU.

Taking advantage of NVIDIA Accelerated Computing to broaden perspectives

Google Cloud also keeps investing in its partnership and capabilities with NVIDIA, fusing the best of its data center, infrastructure, and software expertise with the NVIDIA AI platform, as exemplified by A3 and A3 Mega VMs powered by NVIDIA H100 Tensor Core GPUs.

Google Cloud announced that the new A3 Ultra VMs featuring NVIDIA H200 Tensor Core GPUs will be available on Google Cloud starting next month.

Compared to earlier versions, A3 Ultra VMs offer a notable performance improvement. They are built on servers equipped with the new Titanium ML network adapter, which builds on NVIDIA ConnectX-7 network interface cards (NICs) and is tailored to provide a secure, high-performance cloud experience for AI workloads. When paired with Google’s datacenter-wide 4-way rail-aligned network, A3 Ultra VMs provide non-blocking 3.2 Tbps of GPU-to-GPU traffic using RDMA over Converged Ethernet (RoCE).

Compared to A3 Mega, A3 Ultra provides:

Double the GPU-to-GPU networking bandwidth, supported by Google’s Jupiter data center network and Google Cloud’s Titanium ML network adapter.

Up to 2 times higher LLM inferencing performance, with almost twice the memory capacity and 1.4 times the memory bandwidth.

Capacity to scale to tens of thousands of GPUs in a dense cluster, with performance optimized for heavy HPC and AI workloads.
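The memory claims can be sanity-checked against per-GPU specifications. The sketch below is illustrative only: the H100 and H200 figures are assumptions taken from NVIDIA's public datasheets for the SXM parts, not numbers stated in this post, and the variable names are hypothetical.

```python
# Assumed per-GPU figures from NVIDIA's public datasheets (not from this post).
H100 = {"memory_gb": 80, "bandwidth_tb_s": 3.35}   # GPU used in A3 / A3 Mega
H200 = {"memory_gb": 141, "bandwidth_tb_s": 4.8}   # GPU used in A3 Ultra

# Ratio of H200 to H100 for each memory metric.
capacity_ratio = H200["memory_gb"] / H100["memory_gb"]
bandwidth_ratio = H200["bandwidth_tb_s"] / H100["bandwidth_tb_s"]

print(f"memory capacity:  {capacity_ratio:.2f}x")
print(f"memory bandwidth: {bandwidth_ratio:.2f}x")
```

Under these assumptions the ratios land close to the "almost twice" capacity and "1.4 times" bandwidth quoted above. The "up to 2 times" inferencing figure is a workload-level claim that this arithmetic does not reproduce.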

Google Kubernetes Engine (GKE), which offers an open, portable, extensible, and highly scalable platform for large-scale training and AI workloads, will also offer A3 Ultra VMs.

Hypercompute Cluster: Simplify and expand clusters of AI accelerators

It’s not just about individual accelerators or virtual machines, though; when dealing with AI and HPC workloads, you have to deploy, maintain, and optimize a huge number of AI accelerators along with the networking and storage that go along with them. This may be difficult and time-consuming. For this reason, Google Cloud is introducing Hypercompute Cluster, which simplifies the provisioning of workloads and infrastructure as well as the continuous operations of AI supercomputers with tens of thousands of accelerators.

Fundamentally, Hypercompute Cluster integrates the most advanced AI infrastructure technologies from Google Cloud, enabling you to deploy and manage a large number of accelerators as a single, seamless unit. You can run your most demanding AI and HPC workloads with confidence thanks to Hypercompute Cluster’s exceptional performance and resilience, which includes features like targeted workload placement, dense resource co-location with ultra-low latency networking, and sophisticated maintenance controls to reduce workload disruptions.

For dependable and repeatable deployments, you can use pre-configured and validated templates to set up a Hypercompute Cluster with just one API call. These include containerized software with orchestration (e.g., GKE, Slurm), frameworks and reference implementations (e.g., JAX, PyTorch, MaxText), and well-known open models like Gemma2 and Llama3. Each pre-configured template is available as part of the AI Hypercomputer architecture and has been verified for efficiency and performance, allowing you to concentrate on business innovation.

Hypercompute Cluster will first be available with A3 Ultra VMs next month.

An early look at the NVIDIA GB200 NVL72

Google Cloud is also looking forward to the advances made possible by NVIDIA GB200 NVL72 GPUs, and will provide more information about this development soon. In the meantime, here is a preview of the racks Google is building to bring the NVIDIA Blackwell platform’s performance advantages to Google Cloud’s cutting-edge, environmentally friendly data centers in the early months of next year.

Redefining CPU efficiency and performance with Google Axion Processors

Although TPUs and GPUs are superior at specialized jobs, CPUs are a cost-effective solution for a variety of general-purpose workloads, and they are frequently used alongside AI workloads to build complex applications. Google announced Axion Processors, its first custom Arm-based CPUs for the data center, at Google Cloud Next ’24. Google Cloud customers can now benefit from C4A virtual machines, the first Axion-based VM series, which offer up to 10% better price-performance compared to the newest Arm-based instances offered by other top cloud providers.

Additionally, compared to comparable current-generation x86-based instances, C4A offers up to 60% more energy efficiency and up to 65% better price performance for general-purpose workloads such as media processing, AI inferencing applications, web and app servers, containerized microservices, open-source databases, in-memory caches, and data analytics engines.

Titanium and Jupiter Network: Making AI possible at the speed of light

Titanium, the offload technology system that supports Google’s infrastructure, has been improved to accommodate workloads related to artificial intelligence. Titanium provides greater compute and memory resources for your applications by lowering the host’s processing overhead through a combination of on-host and off-host offloads. Furthermore, although Titanium’s fundamental features can be applied to AI infrastructure, the accelerator-to-accelerator performance needs of AI workloads are distinct.

Google has released a new Titanium ML network adapter to address these demands, which incorporates and expands upon NVIDIA ConnectX-7 NICs to provide further support for virtualization, traffic encryption, and VPCs. The system offers best-in-class security and infrastructure management along with non-blocking 3.2 Tbps of GPU-to-GPU traffic across RoCE when combined with its data center’s 4-way rail-aligned network.

Google’s Jupiter optical circuit switching network fabric and its updated data center network significantly expand Titanium’s capabilities. With native 400 Gb/s link rates and a total bisection bandwidth of 13.1 Pb/s (a practical bandwidth metric that reflects how one half of the network can connect to the other), Jupiter could handle a video conversation for every person on Earth at the same time. In order to meet the increasing demands of AI computation, this enormous scale is essential.
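To put the "video conversation for every person on Earth" comparison in perspective, the bisection bandwidth can be divided by the world population. This is a rough sketch: the population of 8 billion is an assumption not stated in the post, and the variable names are hypothetical.

```python
# Figure quoted in the text.
BISECTION_BANDWIDTH_BPS = 13.1e15   # 13.1 Pb/s expressed in bits per second

# Assumption for illustration: roughly 8 billion people on Earth.
WORLD_POPULATION = 8e9

# Bandwidth available to each person if the bisection capacity were shared evenly.
per_person_bps = BISECTION_BANDWIDTH_BPS / WORLD_POPULATION
per_person_mbps = per_person_bps / 1e6

print(f"about {per_person_mbps:.2f} Mb/s per person")
```

Under that assumption each person gets on the order of a megabit or two per second, which is in the range a compressed video call typically needs.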

Hyperdisk ML is generally available

High-performance storage is essential to keep computing resources effectively utilized, maximize system-level performance, and stay cost-efficient. Google launched its AI/ML-focused block storage solution, Hyperdisk ML, in April 2024. Now generally available, it adds dedicated storage for AI and HPC workloads to the networking and computing advancements.

Hyperdisk ML efficiently speeds up data load times. It drives up to 11.9x faster model load time for inference workloads and up to 4.3x quicker training time for training workloads.

You can attach 2,500 instances to the same volume and get 1.2 TB/s of aggregate throughput per volume. This is more than 100 times what major block storage competitors offer.

Reduced accelerator idle time and increased cost efficiency are the results of shorter data load times.

Multi-zone volumes are now automatically created for your data by GKE. In addition to quicker model loading with Hyperdisk ML, this enables you to run across zones for more computing flexibility (such as lowering Spot preemption).
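The per-volume figures above imply a per-instance share when a volume is fully attached. A minimal sketch, assuming throughput is split evenly across instances (real workloads will not divide it this neatly) and using decimal units; the variable names are hypothetical.

```python
# Figures quoted in the text.
AGGREGATE_THROUGHPUT_BYTES_S = 1.2e12   # 1.2 TB/s per volume (decimal terabytes)
MAX_ATTACHED_INSTANCES = 2500           # instances attached to one volume

# Assumption: throughput is shared evenly across all attached instances.
per_instance_bytes_s = AGGREGATE_THROUGHPUT_BYTES_S / MAX_ATTACHED_INSTANCES
per_instance_mb_s = per_instance_bytes_s / 1e6

print(f"about {per_instance_mb_s:.0f} MB/s per instance at full attachment")
```

Attaching fewer instances leaves a larger share for each one, which is where the shorter model load times come from.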

Developing AI’s future

Google Cloud enables companies and researchers to push the limits of AI innovation with these developments in AI infrastructure. It anticipates that this strong foundation will give rise to revolutionary new AI applications.

Read more on Govindhtech.com

#A3UltraVMs#NVIDIAH200#AI#Trillium#HypercomputeCluster#GoogleAxionProcessors#Titanium#News#Technews#Technology#Technologynews#Technologytrends#Govindhtech

Text

Data Center Accelerator Market Set to Transform AI Infrastructure Landscape by 2031

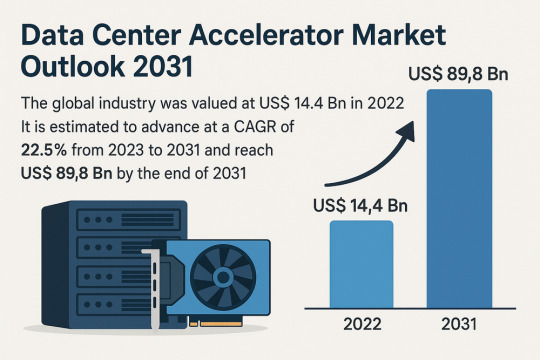

The global data center accelerator market is poised for exponential growth, projected to rise from USD 14.4 Bn in 2022 to a staggering USD 89.8 Bn by 2031, advancing at a CAGR of 22.5% during the forecast period from 2023 to 2031. Rapid adoption of Artificial Intelligence (AI), Machine Learning (ML), and High-Performance Computing (HPC) is the primary catalyst driving this expansion.
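The headline figures can be cross-checked with the standard compound annual growth rate formula. The sketch below assumes nine compounding years (the 2022 base value growing through 2031), which is an interpretation rather than something the report states; the function names are hypothetical.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and period."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at the given annual rate."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text, in USD billions.
START_2022 = 14.4
END_2031 = 89.8
YEARS = 9  # assumption: 2022 base year compounding through 2031

implied = cagr(START_2022, END_2031, YEARS)
projected = project(START_2022, 0.225, YEARS)

print(f"implied CAGR: {implied:.1%}")
print(f"2031 value at 22.5% CAGR: USD {projected:.1f} Bn")
```

Under that assumption the quoted start value, end value, and 22.5% growth rate agree with one another to within rounding.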

Market Overview: Data center accelerators are specialized hardware components that improve computing performance by efficiently handling intensive workloads. These include Graphics Processing Units (GPUs), Tensor Processing Units (TPUs), Field Programmable Gate Arrays (FPGAs), and Application-Specific Integrated Circuits (ASICs), which complement CPUs by expediting data processing.

Accelerators enable data centers to process massive datasets more efficiently, reduce reliance on servers, and optimize costs, a significant advantage in a data-driven world.

Market Drivers & Trends

Rising Demand for High-performance Computing (HPC): The proliferation of data-intensive applications across industries such as healthcare, autonomous driving, financial modeling, and weather forecasting is fueling demand for robust computing resources.

Boom in AI and ML Technologies: The computational requirements of AI and ML are driving the need for accelerators that can handle parallel operations and manage extensive datasets efficiently.

Cloud Computing Expansion: Major players like AWS, Azure, and Google Cloud are investing in infrastructure that leverages accelerators to deliver faster AI-as-a-service platforms.

Latest Market Trends

GPU Dominance: GPUs continue to dominate the market, especially in AI training and inference workloads, due to their capability to handle parallel computations.

Custom Chip Development: Tech giants are increasingly developing custom chips (e.g., Meta’s MTIA and Google's TPUs) tailored to their specific AI processing needs.

Energy Efficiency Focus: Companies are prioritizing the design of accelerators that deliver high computational power with reduced energy consumption, aligning with green data center initiatives.

Key Players and Industry Leaders

Prominent companies shaping the data center accelerator landscape include:

NVIDIA Corporation – A global leader in GPUs powering AI, gaming, and cloud computing.

Intel Corporation – Investing heavily in FPGA and ASIC-based accelerators.

Advanced Micro Devices (AMD) – Recently expanded its EPYC CPU lineup for data centers.

Meta Inc. – Introduced Meta Training and Inference Accelerator (MTIA) chips for internal AI applications.

Google (Alphabet Inc.) – Continues deploying TPUs across its cloud platforms.

Other notable players include Huawei Technologies, Cisco Systems, Dell Inc., Fujitsu, Enflame Technology, Graphcore, and SambaNova Systems.

Recent Developments

March 2023 – NVIDIA introduced a comprehensive Data Center Platform strategy at GTC 2023 to address diverse computational requirements.

June 2023 – AMD launched new EPYC CPUs designed to complement GPU-powered accelerator frameworks.

2023 – Meta Inc. revealed the MTIA chip to improve performance for internal AI workloads.

2023 – Intel announced a four-year roadmap for data center innovation focused on Infrastructure Processing Units (IPUs).

Gain an understanding of key findings from our Report in this sample - https://www.transparencymarketresearch.com/sample/sample.php?flag=S&rep_id=82760

Market Opportunities

Edge Data Center Integration: As computing shifts closer to the edge, opportunities arise for compact and energy-efficient accelerators in edge data centers for real-time analytics and decision-making.

AI in Healthcare and Automotive: As AI adoption grows in precision medicine and autonomous vehicles, demand for accelerators tuned for domain-specific processing will soar.

Emerging Markets: Rising digitization in emerging economies presents substantial opportunities for data center expansion and accelerator deployment.

Future Outlook

With AI, ML, and analytics forming the foundation of next-generation applications, the demand for enhanced computational capabilities will continue to climb. By 2031, the data center accelerator market will likely transform into a foundational element of global IT infrastructure.

Analysts anticipate increasing collaboration between hardware manufacturers and AI software developers to optimize performance across the board. As digital transformation accelerates, companies investing in custom accelerator architectures will gain significant competitive advantages.

Market Segmentation

By Type:

Central Processing Unit (CPU)

Graphics Processing Unit (GPU)

Application-Specific Integrated Circuit (ASIC)

Field-Programmable Gate Array (FPGA)

Others

By Application:

Advanced Data Analytics

AI/ML Training and Inference

Computing

Security and Encryption

Network Functions

Others

Regional Insights

Asia Pacific dominates the global market due to explosive digital content consumption and rapid infrastructure development in countries such as China, India, Japan, and South Korea.

North America holds a significant share due to the presence of major cloud providers, AI startups, and heavy investment in advanced infrastructure. The U.S. remains a critical hub for data center deployment and innovation.

Europe is steadily adopting AI and cloud computing technologies, contributing to increased demand for accelerators in enterprise data centers.

Why Buy This Report?

Comprehensive insights into market drivers, restraints, trends, and opportunities

In-depth analysis of the competitive landscape

Region-wise segmentation with revenue forecasts

Includes strategic developments and key product innovations

Covers historical data from 2017 and forecast till 2031

Delivered in convenient PDF and Excel formats

Frequently Asked Questions (FAQs)

1. What was the size of the global data center accelerator market in 2022? The market was valued at US$ 14.4 Bn in 2022.

2. What is the projected market value by 2031? It is projected to reach US$ 89.8 Bn by the end of 2031.

3. What is the key factor driving market growth? The surge in demand for AI/ML processing and high-performance computing is the major driver.

4. Which region holds the largest market share? Asia Pacific is expected to dominate the global data center accelerator market from 2023 to 2031.

5. Who are the leading companies in the market? Top players include NVIDIA, Intel, AMD, Meta, Google, Huawei, Dell, and Cisco.

6. What type of accelerator dominates the market? GPUs currently dominate the market due to their parallel processing efficiency and widespread adoption in AI/ML applications.

7. What applications are fueling growth? Applications like AI/ML training, advanced analytics, and network security are major contributors to the market's growth.

Explore Latest Research Reports by Transparency Market Research:

Tactile Switches Market: https://www.transparencymarketresearch.com/tactile-switches-market.html

GaN Epitaxial Wafers Market: https://www.transparencymarketresearch.com/gan-epitaxial-wafers-market.html

Silicon Carbide MOSFETs Market: https://www.transparencymarketresearch.com/silicon-carbide-mosfets-market.html

Chip Metal Oxide Varistor (MOV) Market: https://www.transparencymarketresearch.com/chip-metal-oxide-varistor-mov-market.html

About Transparency Market Research Transparency Market Research, a global market research company registered at Wilmington, Delaware, United States, provides custom research and consulting services. Our exclusive blend of quantitative forecasting and trends analysis provides forward-looking insights for thousands of decision makers. Our experienced team of Analysts, Researchers, and Consultants use proprietary data sources and various tools & techniques to gather and analyses information. Our data repository is continuously updated and revised by a team of research experts, so that it always reflects the latest trends and information. With a broad research and analysis capability, Transparency Market Research employs rigorous primary and secondary research techniques in developing distinctive data sets and research material for business reports. Contact: Transparency Market Research Inc. CORPORATE HEADQUARTER DOWNTOWN, 1000 N. West Street, Suite 1200, Wilmington, Delaware 19801 USA Tel: +1-518-618-1030 USA - Canada Toll Free: 866-552-3453 Website: https://www.transparencymarketresearch.com Email: [email protected] of Form



Text

Available Cloud Computing Services at Fusion Dynamics

We Fuel The Digital Transformation Of Next-Gen Enterprises!

Fusion Dynamics provides future-ready IT and computing infrastructure that delivers high performance while being cost-efficient and sustainable. We envision, plan and build next-gen data and computing centers in close collaboration with our customers, addressing their business’s specific needs. Our turnkey solutions deliver best-in-class performance for all advanced computing applications such as HPC, Edge/Telco, Cloud Computing, and AI.

With over two decades of expertise in IT infrastructure implementation and an agile approach that matches the lightning-fast pace of new-age technology, we deliver future-proof solutions tailored to the niche requirements of various industries.

Our Services

We decode and optimise the end-to-end design and deployment of new-age data centers with our industry-vetted services.

System Design

When designing a cutting-edge data center from scratch, we follow a systematic and comprehensive approach. First, our front-end team connects with you to draw a set of requirements based on your intended application, workload, and physical space. Following that, our engineering team defines the architecture of your system and deep dives into component selection to meet all your computing, storage, and networking requirements. With our highly configurable solutions, we help you formulate a system design with the best CPU-GPU configurations to match the desired performance, power consumption, and footprint of your data center.

Why Choose Us

We bring a potent combination of over two decades of experience in IT solutions and a dynamic approach to continuously evolve with the latest data storage, computing, and networking technology. Our team constitutes domain experts who liaise with you throughout the end-to-end journey of setting up and operating an advanced data center.

With a profound understanding of modern digital requirements, backed by decades of industry experience, we work closely with your organisation to design the most efficient systems to catalyse innovation. From sourcing cutting-edge components from leading global technology providers to seamlessly integrating them for rapid deployment, we deliver state-of-the-art computing infrastructures to drive your growth!

What We Offer

The Fusion Dynamics Advantage!

At Fusion Dynamics, we believe that our responsibility goes beyond providing a computing solution to help you build a high-performance, efficient, and sustainable digital-first business. Our offerings are carefully configured to not only fulfil your current organisational requirements but to future-proof your technology infrastructure as well, with an emphasis on the following parameters –

Performance density

Rather than focusing solely on absolute processing power and storage, we strive to achieve the best performance-to-space ratio for your application. Our next-generation processors outrival the competition on processing as well as storage metrics.
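As a rough illustration of the performance-to-space idea, the sketch below compares two hypothetical server configurations by compute per rack unit. The configuration names, TFLOPS figures, and rack-unit heights are made-up assumptions for illustration only, not Fusion Dynamics specifications.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ServerConfig:
    name: str         # hypothetical label, not a real product
    tflops: float     # assumed peak compute per server
    rack_units: int   # server height in rack units (U)


def performance_density(cfg: ServerConfig) -> float:
    """Return compute per rack unit (TFLOPS per U)."""
    if cfg.rack_units <= 0:
        raise ValueError("rack_units must be positive")
    return cfg.tflops / cfg.rack_units


def densest(configs: Sequence[ServerConfig]) -> ServerConfig:
    """Pick the configuration with the best performance-to-space ratio."""
    return max(configs, key=performance_density)


# Illustrative numbers only.
configs = [
    ServerConfig("big-box", tflops=80.0, rack_units=4),
    ServerConfig("dense-node", tflops=50.0, rack_units=2),
]
best = densest(configs)
print(best.name, performance_density(best))
```

In this toy comparison the smaller node wins on density even though the larger box has more absolute compute, which is the trade-off the paragraph above describes.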

Flexibility

Our solutions are configurable at practically every design layer, even down to the choice of processor architecture – ARM or x86. Our subject matter experts are here to assist you in designing the most streamlined and efficient configuration for your specific needs.

Scalability

We prioritise your current needs with an eye on your future targets. Deploying a scalable solution ensures operational efficiency as well as smooth and cost-effective infrastructure upgrades as you scale up.

Sustainability

Our focus on future-proofing your data center infrastructure includes the responsibility to manage its environmental impact. Our power- and space-efficient compute elements offer the highest core density and performance/watt ratios. Furthermore, our direct liquid cooling solutions help you minimise your energy expenditure. Therefore, our solutions allow rapid expansion of businesses without compromising on environmental footprint, helping you meet your sustainability goals.

Stability

Your compute and data infrastructure must operate at optimal performance levels irrespective of fluctuations in data payloads. We design systems that can withstand extreme fluctuations in workloads to guarantee operational stability for your data center.

Leverage our prowess in every aspect of computing technology to build a modern data center. Choose us as your technology partner to ride the next wave of digital evolution!

#Keywords#services on cloud computing#edge network services#available cloud computing services#cloud computing based services#cooling solutions#hpc cluster management software#cloud backups for business#platform as a service vendors#edge computing services#server cooling system#ai services providers#data centers cooling systems#integration platform as a service#https://www.tumblr.com/#cloud native application development#server cloud backups#edge computing solutions for telecom#the best cloud computing services#advanced cooling systems for cloud computing#c#data center cabling solutions#cloud backups for small business#future applications of cloud computing


Text

In the fast-paced digital world of today, businesses and industries are relying more than ever on efficient and scalable solutions for managing their infrastructure. One of the most promising innovations is the combination of cloud computing infrastructure and artificial intelligence (AI). Together, they are transforming how we handle infrastructure asset management and optimizing industries such as energy. This blog explains how these technologies work together and the impact they are having across a wide array of sectors, including the US energy markets.

What is Cloud Computing Infrastructure?

Cloud computing infrastructure refers to the systems that serve as the basis for delivering cloud services. This may include virtual servers, storage systems, networking capabilities, and databases. They are offered to businesses and consumers through the internet. Instead of having to hold expensive physical infrastructure, a company can use cloud infrastructure solutions to scale its operations very efficiently.

Businesses no longer need to carry the capital expense of maintaining and upgrading on-premises infrastructure. Cloud providers supply management tools that let organizations monitor and control their digital resources seamlessly. In the energy industry, for example, companies can run simulations in the cloud and manage the output without buying large, expensive hardware.

Changing the Face of Computing Infrastructure

The Role of AI Technology

Artificial intelligence (AI) refers to computer systems designed to perform tasks that typically require human intelligence, such as learning, reasoning, and problem-solving. AI is revolutionizing how infrastructure is managed by enabling automated systems to make decisions based on data and real-time analysis.

In the energy industry, for instance, AI technology can analyze large volumes of data to optimize operations, predict failures, and recommend improvements. This is vital in industries like the US energy markets, where AI solutions can predict market fluctuations, optimize energy distribution, and increase overall efficiency.

Artificial Intelligence in Cloud Computing

When artificial intelligence is introduced into cloud computing, the possibilities expand considerably. AI-based cloud solutions allow businesses to benefit from AI capabilities without investing in dedicated hardware or a specialized team. For example, companies can use cloud-hosted AI to analyze large data sets stored in the cloud, forecast energy demand, or predict equipment failures in real time.
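To make the demand-forecasting example concrete, here is a minimal sketch of a one-step-ahead forecast. The post does not name a forecasting method, so simple exponential smoothing is used purely as a stand-in; the function name, the `alpha` value, and the sample readings are all illustrative assumptions.

```python
from typing import Sequence


def exponential_smoothing_forecast(demand: Sequence[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast using simple exponential smoothing.

    `alpha` controls how strongly recent observations outweigh older ones.
    """
    if not demand:
        raise ValueError("demand must contain at least one observation")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = demand[0]
    for observed in demand[1:]:
        # Blend the newest observation with the running estimate.
        level = alpha * observed + (1.0 - alpha) * level
    return level


# Made-up hourly demand readings in megawatts.
hourly_demand_mw = [100.0, 110.0, 120.0]
forecast = exponential_smoothing_forecast(hourly_demand_mw, alpha=0.5)
print(forecast)
```

A production forecasting service would likely use a richer model with seasonality and weather inputs; this only shows the shape of the calculation.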

AI and Cloud Computing for Asset Management

Infrastructure asset management is among the areas that benefit most from integrating AI with cloud computing infrastructure. Managing equipment, machines, and even digital services is complex. AI algorithms help by identifying patterns in asset data and predicting when assets will require maintenance or replacement.
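One very simple way to picture the maintenance-prediction idea is trend extrapolation: fit a straight line to recent sensor readings and estimate when it will reach a wear limit. This is a sketch under stated assumptions, not the method any particular product uses; the function name, the readings, and the threshold are hypothetical.

```python
from typing import Optional, Sequence


def steps_until_threshold(readings: Sequence[float], threshold: float) -> Optional[float]:
    """Fit a least-squares line to equally spaced readings and estimate how
    many more sampling intervals pass before the trend reaches `threshold`.

    Returns None when the fitted trend is not rising.
    """
    n = len(readings)
    if n < 2:
        raise ValueError("need at least two readings")
    mean_x = (n - 1) / 2.0
    mean_y = sum(readings) / n
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    sxy = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(readings))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_index = (threshold - intercept) / slope
    # Measure from the most recent reading; clamp if the limit is already passed.
    return max(0.0, crossing_index - (n - 1))


# Made-up vibration readings and an assumed alarm limit.
vibration_mm_s = [1.0, 2.0, 3.0, 4.0]
remaining = steps_until_threshold(vibration_mm_s, threshold=10.0)
print(remaining)
```

Real asset-management systems typically combine many signals and learned models, but the output has the same form: an estimate of how long an asset can run before it needs attention.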

#ai infrastructure#cloudstorage#cloud computing#artificial intelligence tools#artificial intelligence


Text

#High-Performance Computing HPC as a Service Market#High-Performance Computing HPC as a Service Market Share#High-Performance Computing HPC as a Service Market Size#High-Performance Computing HPC as a Service Market Research#High-Performance Computing HPC as a Service Industry#What is High-Performance Computing HPC as a Service?


Text

HPE Servers' Performance in Data Centers

HPE servers are widely regarded as high-performing, reliable, and well-suited for enterprise data center environments, consistently ranking among the top vendors globally. Here’s a breakdown of their performance across key dimensions:

1. Reliability & Stability (RAS Features)

Mission-Critical Uptime: HPE ProLiant (Gen10/Gen11), Synergy, and Integrity servers incorporate robust RAS (Reliability, Availability, Serviceability) features:

iLO (Integrated Lights-Out): Advanced remote management for monitoring, diagnostics, and repairs.

Smart Array Controllers: Hardware RAID with cache protection against power loss.

Silicon Root of Trust: Hardware-enforced security against firmware tampering.

Predictive analytics via HPE InfoSight for preemptive failure detection.

Result: High MTBF (Mean Time Between Failures) and minimal unplanned downtime.
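To show how MTBF relates to downtime, the sketch below uses the standard steady-state availability formula, MTBF / (MTBF + MTTR). MTTR (mean time to repair) is not mentioned in the post and is introduced here as an assumption, and the hour figures are illustrative rather than HPE measurements.

```python
def steady_state_availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Fraction of time a system is up, given mean time between failures
    and mean time to repair (both in hours)."""
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("mtbf_hours must be > 0 and mttr_hours must be >= 0")
    return mtbf_hours / (mtbf_hours + mttr_hours)


def expected_downtime_hours_per_year(availability: float,
                                     hours_per_year: float = 8760.0) -> float:
    """Expected unplanned downtime per year for a given availability."""
    return (1.0 - availability) * hours_per_year


# Illustrative numbers only: one failure per 9,990 hours, 10 hours to repair.
availability = steady_state_availability(mtbf_hours=9990.0, mttr_hours=10.0)
downtime = expected_downtime_hours_per_year(availability)
print(f"availability={availability:.4f}, downtime={downtime:.2f} h/year")
```

The formula makes the point in the text explicit: raising MTBF or shortening repair time (for example through remote management and predictive analytics) both push availability toward 1.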

2. Performance & Scalability

Latest Hardware: Support for the newest Intel Xeon Scalable and AMD EPYC CPUs, DDR5 memory, PCIe 5.0, and high-speed NVMe storage.

Workload-Optimized:

ProLiant DL/ML: Versatile for virtualization, databases, and HCI.

Synergy: Composable infrastructure for dynamic resource pooling.

Apollo: High-density compute for HPC/AI.

Scalability: Modular designs (e.g., Synergy frames) allow scaling compute/storage independently.

3. Management & Automation

HPE OneView: Unified infrastructure management for servers, storage, and networking (automates provisioning, updates, and compliance).

Cloud Integration: Native tools for hybrid cloud (e.g., HPE GreenLake) and APIs for Terraform/Ansible.

HPE InfoSight: AI-driven analytics for optimizing performance and predicting issues.

4. Energy Efficiency & Cooling

Silent Smart Cooling: Dynamic fan control tuned for variable workloads.

Thermal Design: Optimized airflow (e.g., HPE Apollo 4000 supports direct liquid cooling).

Energy Star Certifications: ProLiant servers often exceed efficiency standards, reducing power/cooling costs.

5. Security

Firmware Integrity: Silicon Root of Trust ensures secure boot.

Cyber Resilience: Runtime intrusion detection, encrypted memory (AMD SEV-SNP, Intel SGX), and secure erase.

Zero Trust Architecture: Integrated with HPE Aruba networking for end-to-end security.

6. Hybrid Cloud & Edge Integration

HPE GreenLake: Consumption-based "as-a-service" model for on-premises data centers.

Edge Solutions: Compact servers (e.g., Edgeline EL8000) for rugged/remote deployments.

7. Support & Services

HPE Pointnext: Proactive 24/7 support, certified spare parts, and global service coverage.

Firmware/Driver Ecosystem: Regular updates with long-term lifecycle support.

Ideal Use Cases

Enterprise Virtualization: VMware/Hyper-V clusters on ProLiant.

Hybrid Cloud: GreenLake-managed private/hybrid environments.

AI/HPC: Apollo systems for GPU-heavy workloads.

SAP/Oracle: Mission-critical applications on Superdome Flex.

Considerations & Challenges

Cost: Premium pricing vs. white-box/OEM alternatives.

Complexity: Advanced features (e.g., Synergy/OneView) require training.

Ecosystem Lock-in: Best with HPE storage/networking for full integration.

Competitive Positioning

vs Dell PowerEdge: Comparable performance; HPE leads in composable infrastructure (Synergy) and AI-driven ops (InfoSight).

vs Cisco UCS: UCS excels in unified networking; HPE offers broader edge-to-cloud portfolio.

vs Lenovo ThinkSystem: Similar RAS; HPE has stronger hybrid cloud services (GreenLake).

Summary: HPE Server Strengths in Data Centers

Reliability: Industry-leading RAS + iLO management.

Automation: AI-driven ops (InfoSight) + composability (Synergy).

Efficiency: Energy-optimized designs + liquid cooling support.

Security: End-to-end Zero Trust + firmware hardening.

Hybrid Cloud: GreenLake consumption model + consistent API-driven management.

Bottom Line: HPE servers excel in demanding, large-scale data centers prioritizing stability, automation, and hybrid cloud flexibility. While priced at a premium, their RAS capabilities, management ecosystem, and global support justify the investment for enterprises with critical workloads. For SMBs or hyperscale web-tier deployments, cost may drive consideration of alternatives.
