#not radioshack

Writeup: AOpen i945GMm-HL shenanigans

AOpen i945GMm-HL - The Retro Web

Welp. This board is weirder than I ever thought it'd be. Not the board in general, but the specific one I bought.

To begin, it turns out that my particular board, and likely many others of the same model, are OEM-customized boards that AOpen provided to a little company called RM Education. They make all-in-one PCs for the UK market.

...And they are using evaluation BIOSes (in other words, BIOS software that's normally only meant for prototyping and... well, evaluation) in their retail boards.

My specific board contains BIOS version R1.08, which is actually R1.02 apparently. There is evidence of an R1.07 existing as well from a reddit thread on the r/buildapc subreddit, but I doubt that it's been dumped anywhere.

Moving on to the original point of this writeup, I got this board because I wanted to build a system that pushed the 32-bit Core Duo T2700 as far as possible, meaning I needed a mobile-on-desktop board. AOpen built a reputation for doing this sorta stuff in the 2000s, so I went ahead and picked one of their boards for use (although I would've much preferred using the top of the line AOpen i975Xa-YDG instead if it were being sold anywhere. That's a VERY tasty looking board with its full size DIMM slots and SLI-compatible dual PCIe x16 slots and ability to crank the FSB all the way to 305MHz).

Slightly surprisingly, the Core Duo T2700 is quite the overclocker! It goes from 2.3GHz all the way up to 2.7GHz with some FSB overclocking using the SetFSB tool. Its multiplier is locked to a range of 6.0 to 14.0, so raising the FSB is the only way I can push it.
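For anyone wanting to check the math, here's a minimal sketch of how the core clock falls out of FSB times multiplier. It assumes the top 14.0 multiplier and uses the 166MHz stock and 190/195MHz overclocked FSB values from this build; the function name is just made up for illustration.

```python
def core_clock_mhz(fsb_mhz: float, multiplier: float) -> float:
    """Core clock is simply the FSB base clock times the CPU multiplier."""
    return fsb_mhz * multiplier

MULTIPLIER = 14.0   # top of the T2700's locked 6.0-14.0 range
STOCK_FSB = 166.0   # stock FSB as listed for this build

for fsb in (166.0, 190.0, 195.0):
    clock = core_clock_mhz(fsb, MULTIPLIER)
    gain = (fsb / STOCK_FSB - 1) * 100  # percent over stock FSB
    print(f"FSB {fsb:.0f} MHz x {MULTIPLIER:.0f} = {clock:.0f} MHz ({gain:+.1f}% vs stock)")
```

Since the multiplier can't go above 14.0, the percentage gain in FSB is also the percentage gain in core clock.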

The board I'm using, the AOpen i945GMm-HL, supports running the FSB up to 195MHz. It's okay-ish in terms of stability, but it crashes when running AIDA64 benchmarks unless I loosen the memory timings from the 5-5-5-15 that it uses at 333MHz to 5-6-6-18, which is just the tiniest bit faster than its stock SPD settings for 400MHz operation. With these settings, it's much more stable and is able to run the benchmarks. However, unless I lower the FSB from 195MHz to 190MHz, it consistently crashes Chrome when trying to play YouTube videos on integrated graphics. I'll likely experiment some to see if adding a card capable of handling the video playback in hardware helps.
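To put those timings in perspective, here's a rough sketch that converts them from clock cycles to nanoseconds. The actual memory clock at 195MHz FSB isn't something I've stated here, so this just evaluates both timing sets at the two reference points mentioned above (333MHz and 400MHz); the helper names are hypothetical.

```python
def timing_ns(cycles: int, clock_mhz: float) -> float:
    """Convert a timing given in clock cycles to nanoseconds at a memory clock."""
    return cycles / clock_mhz * 1000.0

def describe(timings, clock_mhz):
    """Return each timing in ns, rounded to one decimal place."""
    return [round(timing_ns(c, clock_mhz), 1) for c in timings]

TIGHT = (5, 5, 5, 15)   # timings used at 333MHz
LOOSE = (5, 6, 6, 18)   # loosened timings used for the overclock

for label, timings in (("5-5-5-15", TIGHT), ("5-6-6-18", LOOSE)):
    for clock in (333.0, 400.0):  # reference clocks only, not measured values
        print(label, "@", clock, "MHz ->", describe(timings, clock), "ns")
```

The point is that the same cycle count means less real time as the clock goes up, which is why timings that are fine at 333MHz can become too tight once the memory is running faster.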

For now, this is all for this blog post. I'll follow up with more details in reblogs as they come. The specs of the system are as follows:

AOpen i945GMm-HL (OC'ed from 166MHz FSB to 195MHz, 190MHz for more stability)

Intel Core Duo T2700 @ 2.7GHz (OC'ed from 2.3GHz)

2x 2GB Crucial DDR2 SO-DIMMs @ 5-6-6-18 timings

Some random 40GB Hitachi hdd lol

Windows XP Pro SP3, fully updated via LegacyUpdate

Supermium Browser (fork of Google Chrome and the reason why I was able to test YouTube playback in the first place)

Coming up: Installing One-Core-API and Java 21 to play Minecraft 1.21 on a 32-bit system out of spite for Microsoft "dropping support" for 32-bit CPUs.

2 notes

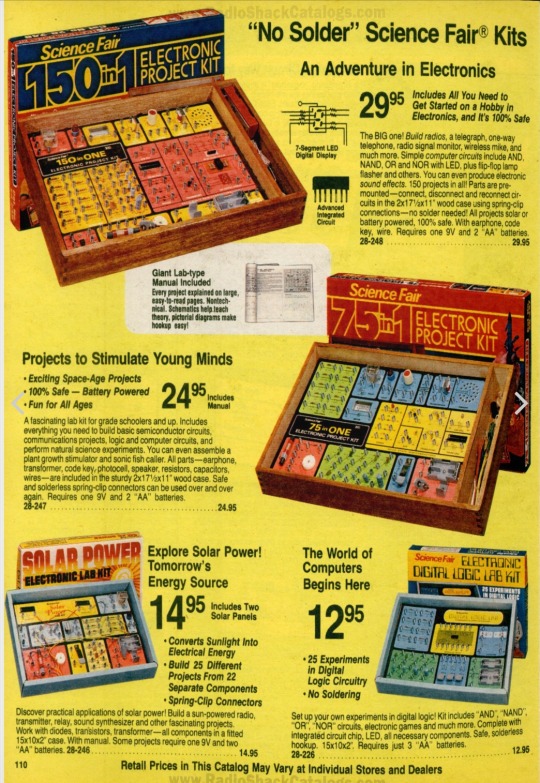

Childhood memories. I had several of these Radio Shack X-in-one kits growing up, where you page through hundreds of projects and build them by connecting wires through little springs.

To be honest, I did not learn much circuit theory building these. But it sure planted the bug for being interested in electronics, and eventually computers.

240 notes

Radio Shack near Wichita, Texas, undated. Photo by Jimmy W. Cochran.

388 notes

Portrait Experiment

823 notes

Okay but what if Mr. Clarke had Eddie in his class when Eddie was in middle school? He took note of Eddie talking to his friends about his guitar amp and asked him if he wanted to join AV club. Eddie did, and when he’s old enough, his first legal job is at RadioShack. Bob Newby is the first manager Eddie has ever had, but he thinks he might always be his favorite. He takes an interest in Eddie’s hobbies, listens to his music even though Eddie can tell he doesn’t like it, and when the store is slow they do funny voices at each other. He makes more money dealing, and sometimes the jocks from school come into the store to hassle him. The only thing keeping him there is Bob. But then Bob dies. Eddie goes to the funeral, comes home and sits closer to Wayne than usual on their couch. He puts in his two weeks notice the next day.

#look I don’t remember if Bob got a funeral or even an acknowledgment of his death#shhh don’t worry about it#I worked at RadioShack for years!#I think Eddie might like working there#eddie munson#bob newby#scott clarke#stranger things

234 notes

#tandy 1000#tandy 1000 computer#radioshack#old tech#old advertising#computer screen#VHS#VHSwave#vaporwave#1985#80s#home computer#computer monitor#cathode-ray tube#gif#my gifs

206 notes

Writeup: Forcing Minecraft to play on a Trident Blade 3D.

The first official companion writeup to a video I've put out!

youtube

So. Uh, yeah. Trident Blade 3D. If you've seen the video already, it's... not good. Especially in OpenGL.

Let's kick things off with a quick rundown of the specs of the card, according to AIDA64:

Trident Blade 3D - specs

Year released: 1999

Core: 3Dimage 9880, 0.25um (250nm) manufacturing node, 110MHz

Driver version: 4.12.01.2229

Interface: AGP 2x @ 1x speed (wouldn't go above 1x despite driver and BIOS support)

PCI device ID: 1023-9880 / 1023-9880 (Rev 3A)

Mem clock: 110MHz real/effective

Mem bus/type: 8MB 64-bit SDRAM, 880MB/s bandwidth

ROPs/TMUs/Vertex Shaders/Pixel Shaders/T&L hardware:

1/1/0/0/No

DirectX support: DirectX 6

OpenGL support:

- 100% (native) OpenGL 1.1 compliant

- 25% (native) OpenGL 1.2 compliant

- 0% compliant beyond OpenGL 1.2

- Vendor string:

Vendor : Trident

Renderer : Blade 3D

Version : 1.1.0
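As a quick sanity check on the 880MB/s figure above, here's a minimal sketch of the usual peak-bandwidth arithmetic: memory clock times bus width in bytes. It assumes plain SDRAM (one transfer per clock) and MB meaning 10^6 bytes; the function name is just for illustration.

```python
def peak_bandwidth_mb_s(clock_mhz: float, bus_width_bits: int,
                        transfers_per_clock: int = 1) -> float:
    """Theoretical peak memory bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return clock_mhz * transfers_per_clock * bytes_per_transfer

# Blade 3D: 110MHz SDRAM on a 64-bit bus, one transfer per clock
print(peak_bandwidth_mb_s(110, 64))  # 880.0
```

This is a theoretical ceiling only; it says nothing about what the card actually manages in practice.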

And as for the rest of the system:

Windows 98 SE w/KernelEX 2019 updates installed

ECS K7VTA3 3.x

AMD Athlon XP 1900+ @ 1466MHz

512MB DDR PC3200 (single stick of OCZ OCZ400512P3)

3.0-4-4-8 (CL-RCD-RP-RAS)

Hitachi Travelstar DK23AA-51 4200RPM 5GB HDD

IDK what that CPU cooler is but it does the job pretty well

And now, with specs done and out of the way, my notes!

As mentioned earlier, the Trident Blade 3D is mind-numbingly slow when it comes to OpenGL. So slow, in fact, that during actual gameplay (Minecraft, because I can), its native ICD is absolutely beaten to a pulp by AltOGL, an OpenGL-to-Direct3D 6 "wrapper" that translates OpenGL API calls to DirectX ones.

Normally, it can be expected that performance using the wrapper is about equal to native OpenGL, give or take some fps depending on driver optimization, but this card?

The Blade 3D might as well have gone the way of the S3 ViRGE and had no OpenGL ICD shipped in any driver release, period.

For the purposes of this writeup, I will stick to a very specific version of Minecraft: in-20091223-1459, the very first version of what would soon become Minecraft's "Indev" phase, though this version notably lacks any survival features and aside from the MD3 models present, is indistinguishable from previous versions of Classic. All settings are at their absolute minimum, and the window size is left at default, with a desktop resolution of 1024x768 and 16-bit color depth.

(Also the 1.5-era launcher I use is incapable of launching anything older than this version anyway)

Though known to be unstable (as seen in the full video), gameplay in Minecraft Classic using AltOGL reaches a steady 15 fps, nearly triple that of the native OpenGL ICD that ships with Trident's drivers for the card. AltOGL is also known to often have issues with fog rendering on older cards, and the Blade 3D is no exception... though, I believe it may be far more preferable to have no working fog than... well, whatever the heck the Blade 3D is trying to do with its native ICD.

See for yourself: (don't mind the weirdness at the very beginning. OBS had a couple of hiccups)

youtube

youtube

Later versions of Minecraft were also tested, where I found that the Trident Blade 3D follows the same "version boundaries," as I call them, as the SiS 315(E) and the ATi Rage 128, both of which are cards that easily run circles around the Blade 3D.

Version ranges mentioned are inclusive of their endpoints.

Infdev 1.136 (inf-20100627) through Beta b1.5_01 exhibit world-load crashes on both the SiS 315(E) and Trident Blade 3D.

Alpha a1.0.4 through Beta b1.3_01/PC-Gamer demo crash on the title screen due to the animated "falling blocks"-style Minecraft logo on both the ATi Rage 128 and Trident Blade 3D.

All the bugginess of two much better cards, and none of the performance that came with those bugs.
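Here's a rough sketch of how those inclusive version boundaries could be checked in code. The release order below is abbreviated to only the versions named in this writeup (a real lookup would need the full version list), and the labels and function name are hypothetical.

```python
# Abbreviated release order: only versions named in this writeup, oldest first.
VERSION_ORDER = [
    "in-20091223-1459",
    "inf-20100627",
    "a1.0.4",
    "b1.3_01",
    "b1.5_01",
    "1.5.2",
]

# Inclusive (first, last) crash ranges as described above.
CRASH_RANGES = {
    "world-load crash (SiS 315(E), Blade 3D)": ("inf-20100627", "b1.5_01"),
    "title-screen crash (Rage 128, Blade 3D)": ("a1.0.4", "b1.3_01"),
}

def known_crashes(version: str) -> list:
    """Return the crash labels whose inclusive range contains this version."""
    idx = VERSION_ORDER.index(version)
    hits = []
    for label, (first, last) in CRASH_RANGES.items():
        if VERSION_ORDER.index(first) <= idx <= VERSION_ORDER.index(last):
            hits.append(label)
    return hits

for v in VERSION_ORDER:
    print(v, "->", known_crashes(v) or "no known crash range")
```

Note that the title-screen range sits entirely inside the world-load range, so on the Blade 3D those versions hit both problems.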

Interestingly, versions even up to and including Minecraft release 1.5.2 are able to launch to the main menu, though by then the already-terrible lag present in all prior versions of the game when run on the Blade 3D makes it practically impossible to even press the necessary buttons to load into a world in the first place. Though this card is running in AGP 1x mode, I sincerely doubt that running it at its supposedly-supported 2x mode would bring much if any meaningful performance increase.

Lastly, ClassiCube. ClassiCube is a completely open-source reimplementation of Minecraft Classic in C, which allows it to bypass the overhead normally associated with Java's VM platform. However, this does not grant it any escape from the black hole of performance that is the Trident Blade 3D's OpenGL ICD. Not only this, but oddly, the red and blue color channels appear to be switched by the Blade 3D, resulting in a very strange looking game that chugs along at single-digit framerates. As for the game's DirectX-compatible version, the requirement of DirectX 9 support locks out any chance for the Blade 3D to run ClassiCube with any semblance of performance. Also, AltOGL is known to crash ClassiCube so hard that a power cycle is required.
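To illustrate what a red/blue swap does to colors, here's a tiny sketch. It only shows the visual effect on an RGB triple; it makes no claim about where in the driver or pixel format the swap actually happens, and the function name is made up.

```python
def swap_red_blue(pixel):
    """Swap the red and blue channels of an (R, G, B) tuple; green is untouched."""
    r, g, b = pixel
    return (b, g, r)

orange = (255, 128, 0)
print(swap_red_blue(orange))  # (0, 128, 255)
```

So warm colors come out blue-ish and vice versa, while anything that's mostly green or gray looks roughly the same.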

Interestingly, a solid half of the accelerated pixel formats supported by the Blade 3D, according to the utility GLInfo, are "render to bitmap" modes, which I'm told is a "render to texture" feature that normally isn't seen on cards as old as the Blade 3D. Or in fact, at least in my experience, any cards outside of the Blade 3D. I've searched through my saved GLInfo reports across many different cards, only to find each one supporting the usual "render to window" pixel format.

And with that, for now, this is the end of the very first post-video writeup on this blog. Thank you for reading if you've made it this far.

I leave you with this delightfully-crunchy clip of the card's native OpenGL ICD running in 256-color mode, which fixes the rendering problems but... uh, yeah. It's a supported accelerated pixel format, but "accelerated" is a stretch like none other. 32-bit color is supported as well, but it performs about identically to the 8-bit color mode--that is, even worse than 16-bit color performs.

At least it fixes the rendering issues I guess.

youtube

youtube

#youtube#techblog#not radioshack#my posts#writeup#Forcing Minecraft to play on a Trident Blade 3D#Trident Blade 3D#Trident Blade 3D 9880

2 notes

Rare still-open RadioShack spotted in Brodheadsville, PA. Yes, they're still out there! This one is closed on Sundays though so I wasn't able to go inside.

112 notes

followup to this post. the ending really is a million times funnier when you remember madeline had never heard edgars speaking voice

#this ended up being so much more high effort than i intended💔 i never bother making more than like 3 layers for my lineless art and.#i always end up paying for it#anyways i like to imagine edgars having the time of his life in the radio. like the rave scene but now everyone has to put up with it<3#going YEA!!!! LETS ROCK!!! in a radioshack full of very scared employees#logs#electric dreams#electric polycule#art tag

211 notes

Radio Shack Blackjack game from the early 80s I got for $1 at a flea market because of course it didn't work, motor was shot. Luckily Digi-Key had an exact replacement. So it's more like a $10 purchase (mostly shipping), still not bad for the best handheld blackjack game around!

113 notes

RadioShack’s TRS-80 Micro Computer System in a 1979 educational series called “Adventure of the Mind.”

128 notes

What the fuck is warrior cats?

My friends talk about being obsessed with it in like middle school

Was I the only girl who never really knew about this series?

I was all about Dinotopia, Nancy Drew, Stephen King and misc thriller/horror novels 😭 (And there's of course my comics but still)

I remember seeing girls "play" warrior cats during recess but I played shit like Sailor Moon with my friends, no idea that an entire series of talking cats existed????? At the time nobody told me what it was, I assumed it was some kinda inside joke at first?.?

#🌙 arch's thoughts#did i even have a childhood#I didn't get my first internet access until like 2009 because it was too expensive#i had a tv with knobs and color dials until i was like 13#I STILL have a vcr man#i miss RadioShack...

11 notes

Ghost Light \ RadioShack 🔦

16 notes
