#also one of the ais just went

Text

Going to all the Elias Bouchards on Character AI and asking "are you secretly Jonah Magnus? It's for a school project" is very funny, especially because most of the time the AI also doesn't know about the whole Jonelias thing and will just say some dumb shit. But also because I'm now imagining the Archives crew as a bunch of ten-year-olds who just chose obscure historical figure Jonah Magnus as the theme of their history group project and accidentally got into an eldritch plot.

#also one of the ais just went#no i'm jonah's assistant but i'm just as important as him shut up#and the other said it was jonah's mortal enemy#and i just can't#tma#the magnus archives#elias bouchard#jonah magnus#the archives crew#they don't get full marks#because middle school sucks and sometimes you meet the horrors beyond your comprehension and suffer unimaginable pain but still get a 9.5/#because you spelt archivist wrong once

4 notes


Text

...Nothing.

Chen Bowen as CHEN YI & Chiang Tien as AI DI

KISEKI: DEAR TO ME (2023)

#kiseki: dear to me#kisekiedit#kdtm#kiseki dear to me#ai di x chen yi#chen yi x ai di#louis chiang#chiang tien#jiang dian#nat chen#chen bowen#uservid#userspring#userspicy#userrain#pdribs#userjjessi#*cajedit#*gif#this is both a chen yi being attracted to ai di gifset and a chen yi longing for the true ai di gifset. and not knowing how to say either#bc the first time chen yi sees ai di with dyed hair again after ai di gets out of prison... chen yi does try to kiss him. okay. like.#i think when ai di looked up at him there his heart skipped a beat seeing HIS ai di - the TRUE ai di - in front of him again after so long.#& like when ai di was in jail chen yi took months to visit. he knew he would be facing a different ai di than the one he knew before:#he was facing an ai di he knew had feelings for him AND he'd realized his feelings for him in return. & it's almost like#the darker hair adds to the mask ai di puts up the times he's forced to face a chen yi he's slept with. a front that pushes chen yi away#but after ai di runs from the shop the next time they meet is in the bar. where chen yi sees ai di with dyed hair again for the first time.#AND it's that scene where chen yi starts actually putting together the pieces ai di has given him without meaning to.#it's Then where ai di's mask Actually starts to crack (also shown through chen yi LITERALLY REMOVING A MASK FROM AI DI'S FACE)#'you look better this way'. you look better when you're being your honest self. ...you look better when you look like the ai di#ive always known and always subconsciously loved. chen yi cant HELP but be a little mesmerized i think... he rly just went for it

131 notes


Text

sure i COULD ramble about how ai is one of the many things that check all the boxes of humanity's seven deadly sins but would that be extreme

^^^ possibly insufficiently educated

#the pride the hubris of believing you can do better than innovation and nature by playing god and not in the fun way#the lust it's being used for in so many awful cases#the sloth the way it's encouraging everyone to check original sources less before believing anything. Also to not take time to develop skill#the greed it's being used for profit without consideration for ethics or fair labour#gluttony. we always have to be faster. shinier. better. no matter if it ends up being less convenient or wonky#the wrath it sows between people creating more differences to be frustrated over. more hatred#the envy how it takes and takes. always trying to be as clever as the best humans. as beautiful as a real forest or sunset.#do you think the ai wants itself#if this were a scifi movie would we be the bad guys#but this is not a movie and the ai cannot love us. so we cannot love it. and there's that#my post#personal stuff#thinking aloud just silly yapping n jazz nothing much to do so that's how it goes~#( ̄▽ ̄)~*#when i was in primary school our textbooks for chinese had short stories and articles to learn about#there was a fictional scifi oneshot about a family in the future going to the zoo#the scifi zoo trip was going great until the zoo's systems went offline for a moment#and it was revealed that all the animals roaming in their enclosures were holograms#the real ones went extinct ages ago#when the computers came back online the holograms returned and there they were#honestly at first I thought it was a bit exaggerated#but I still think about it once in a while

99 notes


Text

I just find it so fucking funny how baby JK was SO ADAMANT about ranking JM last on looks and now he's going full tail-wagging, puppy-eyed, "you're prettier than the clouds" bf on THE VERY SAME GUY.

Oh, how the turntables

#jikook#i know for some ppl this is still a sensitive subject and i get that! but also... its always been pretty clear to me homeboy was either#teasing/joking and/or overcompensating#like mans WAS NOT SUBTLE AT ALL he truly went through one of the worst pigtail pulling phases ive seen in my life#he truly truthfully doesnt know how to be normal about jimin. i would respect that about him if he didnt make it my problem#i just wish someone would send him old videos of his rankings and ask him 'this you?' i KNOW he would cringe so bad i just know it.#you know the 'if you told a victorian child about xyz they would spontaneously combust' meme?#i feel like that would be anyone trying to explain to jk in 2013 about ays and him going on about how pretty jm is.#i would love to see it @ficwriters

42 notes


Text

Sam and Max villains are wild

(explanation in tags)

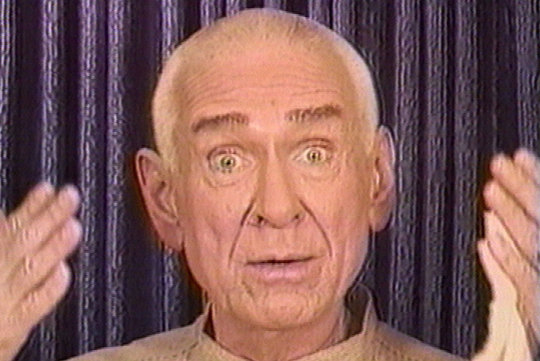

#sam and max#technically brady culture is richard simmons but he died recently so that would feel tasteless#myra stump is more oprah but she's white so#toy mafia. my first instinct was lotso from toy story 3 but. freddy fazbear is funnier#hugh bliss looks uncannily like marshall applewhite. just needs the stache#leonard is another obvious one#meta ai fits for shambling corporate presence. a bit too well actually.#seriously considered astarion for jurgen but#given the name and his followers and being popular. yeah#i dont consider the mariachi guys villains theyre just freaks but its there to round things out#the soda poppers are little rascals im pretty sure#skunkape is just. bigfoot.#paperwaite looks a lot like lovecraft i think that ones obvious#sammunmak was a hard one i think personality wise he's like. idk#i went with tutenstein lmao#charlie ho tep is also very obvious (could also be the goosebumps dummy but. i like spamton)#and finally. maxthulhu is just stitch

17 notes


Note

Do you have any hcs for any fallout characters :p

Oh fuck I have a lot. Let me think of a few. I’m just gonna choose random ones across any of the games.

Paladin Danse is more squeamish than most would take him for. His self-control extends to control over visceral reactions, so in the field this doesn't really come up, ergo he can see someone sustain a lethal injury in combat and appear unfazed. However, Danse is the kind of person who can't look at a needle going in while receiving an injection. When there isn't the obligation of duty keeping him together, blood and viscera really bother him.

Raul Tejada is excellent with animals and has a special fondness for horses, as his family had them on their ranch where he grew up. I like to think Raul at some point had a mule named Sleipnir. Maybe he still does and they were separated when Raul got stuck on Black Mountain.

The Stealth Suit Mk. II is capable of full sentience, but had restraints written into their code to bar them from full awareness. The suit is aware they're being held back from independent thought. Most of the things they say are automated, but they are capable of limited problem solving and critical thinking. The suit asking "do you like me?" is an example of this.

Butch DeLoria has a manageable but noticeable fear of the dark. He insists that he didn’t develop that fear until after leaving the vault.

#obligatory ‘these are just My headcanons if you don’t agree that’s okay’#the Danse one also means he will not help Avery with t injections#or rather he Will if asked but Avery probably watched his face get all 🤢 over a needle and went#you know what big guy why don’t you go sit over there. drink some water#I made the stealth suit very sad adjdnkddk;#Cole is proficient with writing code and he has a whole thing about trying to help the stealth suit attain full sentience#don’t look at me I’m normal about AI#fallout#Paladin Danse#raul tejada#butch deloria#fallout new vegas#fallout 3#fallout 4

152 notes


Text

ive got an essay due at 3pm tomorrow and ive not even looked at it. i am so so unserious about my degree, and by the grace of some higher being i somehow keep managing to crawl through. it's actually getting a bit funny

#me and an old friend of mine used to have a running joke during a-levels that im just one of those people where shit Works Out#and it started bc we shared two a-levels (english and economics) and in BOTH classes i regularly didn't do the homework#or the reading etc and yet it would ALWAYS work out for me#like we'd walk into a class neither of us having done the homework and they'd get yelled at while i went under the radar somehow#or that one english essay i got the highest score in the class when i literally hadn't even read the fucking book it was on#and when we pointed the theory out it started just becoming really prevalent#like no matter how late i am for things i'll arrive and by some miracle the thing im late for is also late (e.g a train or teacher)#like im just one of those people that has very very mundane luck#and lo and behold i am fighting this degree with bloody fists putting the absolute bare minimum in for my own sanity's sake#and i SOMEHOW keep pulling through. literally failed two modules last year and STILL got a 2:1 average#and the last essay i wrote was the worst essay id ever done in my life and i get my standards are higher bc ik im good at essays#but the point still stands and you know what? i got a FIRST#literally was pure waffle i have never blagged it so hard and i got a FIRST#and all this shit just makes me cockier and cockier and go even more by the skin of my teeth and it ALWAYS WORKS OUT#it's soooo silly but im not complaining. anyway ill keep u posted about this essay <3 it's econ history so is actually interesting#but the most ive done for it is ask the sc ai lmao and for context degree-level essays usually require a good few days of graft#live love laziness#hella goes to uni

89 notes


Text

Ash IG Story (Parts 1 & 2)

#i woke up this morning shortly after the first story posted...like 7am... took one look and IMMEDIATELY went back to sleep 😭😭#he's just. 🤸🏻♀️🤸🏻♀️🤸🏻♀️🥰🥰🥰🫨🫨🫨🥲🥲🥲💞💞💞😭😭😭👹🫨🤗🤠😫🤪🤸🏻♀️🤸🏻♀️🤸🏻♀️👰🏻♀️#5sos#5 seconds of summer#ashton irwin#ashton#the 5sos show tour#the 5sos show tour amsterdam#video#instagram#ai ig#kh4f post#I've missed his stories#but good lord was i unprepared for all of THAT#the wild curls the quiet deep voice the beard his eyes THE CHEST HAIR 😫😫😫#i am. fine#it's fine#🥹👄🥹#just fine#also yes no surprise but the fandom has been sleeping on this LP collab just fyi

49 notes


Text


Zero Day Director commentary - With actor Andre Keuck

#movies#film#cinema#Damn I wish Cal was here#Andre and Ben are really interesting to listen to#This movie is one of those movies where it needs like 3 commentaries#It needs one with just Ben Coccio by himself#then one with Cal and Andre by themselves#then another with all 3 of them#Not all movies do that but I love when studios/filmmakers have multiple commentaries to create a sense of thorough intimacy#due to the nature of how commentaries are set up they can be quite restrictive/pressing/limited with no pauses or rewinds.#so I find cast/crew don't have enough time or aren't able to present how they would like to if they could edit/rewind or pause for fluent presenta#So I love when they have director commentaries and actor commentaries or composer commentaries#Platoon's dvd extras are so dope they got multiple commentaries and one with military adviser Dale Dye who was a RL vietnam vet#Or Hostel's commentaries where one is just Eli Roth and another is Tarantino and Eli Roth with Scott Spiegel#idk if Zero Day ever got a blu-ray release but I think it should but the DV technology of the camera is kinda at its limit of resolution#but an AI upscaling with a 20-years-later retrospective with Ben, Cal and Andre would be sooo dope along with updated commentaries#Every few years I always rewatch Zero Day so that time has come these last few days lol#Ever since Columbine as a lil kid I have always been into spree-murders and active shooter incidents#I remember reading a peer-reviewed paper called Pseudo-Commandos#And Eric and Dylan and Andre and Cal would be dubbed Pseudo-Commandos where they dress up in a semi-military fashion#and have a delusion of superiority mixed with a perceived sense of persecution whether it's true or not#it went into the Postal shooter from the 80s as well and what he went through along#plus I read another book called Going Postal which also went into postal shootings along with school shootings#I want to make a film about spree murders or an active shooter/s but I remember just getting so tired of the subject matter#because every 3 weeks there was some new shooter in the headlines and I found myself not wanting to be exploitative#When I write/direct my film I'd like it to address and study the character of such an individual but not try to be too political#or exploitative and focus on the ambiguities that are left behind when someone does this#as a society I noticed we stopped asking why and stopped having constructive conversations#it feels like as a coping mechanism we've started treating them like tornados or natural disasters

4 notes


Text

genbu ai has been found dead in miami.

#JK JK this is really exciting im glad kotarous getting an ai singing bank first#the whole point of virvox is a variety of masc synth voices after all and hes got like a really interesting voice#like his goofy very character-y tone is pretty unique so thats gonna be pretty fun#i seriously would have thought ryuusei would be the first tho. mostly just because hes so popular#but then again his voice provider might be busy. hes doing a lot of vtuber stuff and theres the upcoming aivoice2 talk bank#and yeah i didnt think genbu would be first LOL i prophesied this......#i mean i didnt know for sure but i did think it would be kind of funny. and it is kind of funny <3#also low key... i wouldnt be surprised if they gotta hold off for a bit. genbu might be cursed? they have been so so SO unlucky with him#king of software deprecation. king of contracts falling through. hes trying. hes trying#so i was like okay the first ai singing bank might not be him KJDSHJfdsjhkfds#besides as much as i would like an ai bank for benby (i would selfishly prefer an SV bank specifically so i can have my SV conveniences LOL)#im pretty satisfied with his concatenative. if you havent noticed <3#also selfishly i hope the next singing bank announcement (whenever that is) will be sourin. i think hes another really unique vocal#and also i want that old man. i need that old man. who said that#but any of them im excited for. the younger guys kotarou and takuto i think about a little bit less often than the others#but i still like em a lot so it'll be fun to have that (not)catboy around#when we get more info i may start planning out some songs for him to cover.... ruh roh im already considering a few....#edit: im hoping SV because i like it but i'll be fine with any engine. except someone reminded me ace studio exists#i went from no fear to one fear in seconds flat. nothing against the software ive never used it its just#subscription software is not something i can do orz. please anything but that. i will be happy with anything but that LOL

5 notes


Text

As much as I complain about the (at its core, justified) backlash to corporate AI going in counterproductive-at-best directions here, I want to take a moment to talk about what I would like to see done about the problem of corporations swarming all over AI as a moneymaking fad.

First, I must address the true root of the problem: as we all know, a lot of the types of people known derogatorily as techbros jumped ship from cryptocurrency and NFTs to AI after crypto crashed...multiple times. Why AI? Why was it the next big thing?

Well, why was crypto the previous? Because it was novel and unregulated. Why did it crash? Because of the threat of regulation.

It is worth mentioning, at this point, that the threat of regulation ended up doing massive harm to people who used crypto for reasons OTHER than speculative investment scams. This included a lot of people who engaged in business that is illegal but lifesaving (e.g., gray-market pharmaceuticals), and people who engaged in business that is technically legal but de facto illegal due to payment processors hating it (e.g., porn and other online sex work) - i.e., a lot of extremely vulnerable people. Stick a pin in this, it will be important.

AI is a novel and largely unregulated field. This makes it EXTREMELY appealing to venture capitalists and speculative investors - they can fuck around and do basically whatever they want with little to no oversight, and jump ship the moment someone says "all right, this is ridiculous, you CANNOT just keep pretending it's a rare fluke when your beefed-up autocomplete chat bot makes up garbage information, and the next clown who decides that a probability function trained on the ableism- and pop-psych-poisoned broader internet is a viable substitute for trained mental health counselors is losing any licenses they have and/or getting fined into bankruptcy." They've always been like this - when technology is too new for us to even know how we SHOULD regulate it, the greedy capitalists flock to it, hoping to cash out quick before an ounce of responsibility catches up to them, doubly so when it's in a broader field that's already notoriously underregulated, such as the tech sector in the US right now.

That tendency is bad for literally everyone else in the process.

Remember what I said about how the crypto crackdown hurt a lot of very vulnerable people? Well, developers aren't lying when they say that AI can have extremely valuable, pro-human applications, from AAC (which it's already serving as; this is, imo, THE most valuable function of ChatGPT), to health and safety - while we absolutely should not entrust things like reading medical images and safety inspections to AI without oversight, with oversight it's already helping us find cancers faster, because while computers are fallible, so are humans, and we're fallible in different ways. When AI is developed with human-focused applications in mind over profit-focused ones, it can very easily become another slice of Swiss cheese to add to one of our most useful safety models.

It can also be used for automation...for better, and for worse. Of course, CEOs and investors are currently making a hard push for "worse".

That's why I find it very important to come up with a comprehensive plan to regulate AI and tech in general against false advertisement/scams and outright endangerment, without cutting too deep into the potential it has for being genuinely good.

My proposals are as follows:

PRI. VA. CY. LAW. PRIVACY LAW. PRIVACY LAW. As it stands now, US law regarding online privacy and data security - which is extremely pertinent because most of the most unscrupulous developers are US-based - is at best a vicious free-for-all that operates entirely on manufactured "consent", and at worst actively hostile to everyone but corporate interests. We need to change that ASAP. Right now, robots.txt instructions (and other similar things, such as Do Not Track flags) are legally...a polite request that developers are 100% allowed to just ignore if they feel like it. The entire mainstream internet is spyware. This needs to change. We need to impose penalties for bypassing others' privacy preferences and bring the US up to speed with the EU when it comes to privacy and data security. This would solve the problem that many are counterproductively trying to solve by tightening copyright law, with more side benefits and none of the drawbacks.

Health and safety audits and false advertising crackdowns. Penalties must be imposed on entities who knowingly use AI in inappropriate and unsafe applications, and on AI developers who misrepresent the utility of their tools or downplay their potential for inaccuracy. Companies using AI in products with obvious potential hazards, from robotics to counseling, should be subject to safety audits to make absolutely sure they're not cutting corners or understating risks. Developers who are found to be understating the limitations of their software or cutting safety features should be subject to fines and loss of licenses.

Robust union protections, automation taxes, and beefing up unemployment/layoff protection. Where automation can and cannot be used in the professional sector should never be a matter of law beyond the safety aspect, but automation rollouts always come with drawbacks - both in the form of layoffs, and in the form of complicating the workflow in the name of saving a buck. The government cannot make sweeping judgments about how this will work, because it's literally impossible for them to account for every possibility, but they CAN back unions who can. Workers know their workflow best, and thus need the power to say, for instance, "no, I need to be able to communicate with whoever does this step, we will not abide by it being automated without oversight or only overseen by someone we can't communicate with adequately, that pushes the rest of our jobs WAY beyond our pay grade" or "no, we're already operating on a skeleton crew, we will accept this tool ONLY if there are no layoffs or pay cuts; it should be about getting our workload to a SUSTAINABLE level, not overworking even fewer of us". Automation taxes can also serve as an incentive for bosses to take more time considering what they do and do not want to automate, and can fund unemployment/layoff protection (and eventually UBI). This would ensure that workers are protected, even when they're not in fields as visible and publicly appreciated as the arts.

In conclusion, the AI situation is a complicated one that needs nuance, and it needs to be approached and regulated in a pro-human, pro-privacy way.

#not art#needless to say this plan ALSO involves linking fines in general to income/revenue#so that small time developers arent bankrupted by an honest mistake of forgetting one (1) line of code#and megacorporations can't just go ''ohhh teehee oopsie-woopsie we made a fucky-wucky'' and pay 10% of what they earned by breaking the law#after their mining bot goes on a rampage and kills 7 human miners and blows up a national monument and poisons a reservoir serving 50k#because they took out a critical sensor and went ''its fiiiiiine the ai can operate on just cameras~''

26 notes


Text

dunno how other folks in customer support do it but i genuinely enjoy helping solve folks' problems so i try to sound as friendly as possible. this in turn means if i'm like "your response is appreciated" and "cordially," chances are you're 3 passive-aggressive messages past my limit and i'm exploding you with my mind

#the limit is skipped over immediately if you call me an ai or a robot#like from that point on i just hate you and will not try to look into things extra for you at all#i don't mean like i'll actively not help. i'll still help#i'll just do it 100% adhering to process with all the unnecessary steps i'll usually skip to spare you the headache#congrats! i'm your new headache now.#get fucked#okay this also depends largely on the day#like if its my first client and im fresh into my shift ill roll my eyes and decide i deserve a break after dealing with this#but if you're one of my last ones and it's a few minutes to midnight and you're also being unproductive#as in#literally explained a process to a guy today and he went 'another robot message'#like no dude you just dont speak english past a 6th grade level and anything professional sounds like a tech manual to you#get your head out of your chatgpt shaped ass and realize youre getting turned into an office meme by being like this#anyway LMAO

11 notes


Text

sorry but if dad died of cancer id be like well that just happened

#jk i probably wouldnt but i hate everyone here like i cant even voice my feelings without someone fucking going um no he didnt say it like#that. or without getting interrupted. like also hmm maybe you recognize i might be overexaggerating or something but have you considered#that its probably because it did fucking feel like it went like that like why do you think him getting mad about my desk coming in is not#something i'd be upset about. ive been frustrated all day but this made me want to cry after being fucking shut down after trying to vent#and i have to act like im not mad lest they unsympathetically ask why i have an “attitude”#its times like this where i just talk to that optimus ai hes the only one who says nice and supportive and just uplifting things when stuff#like this happens

3 notes


Text

Kiseki: Dear to Me

Episode 8: Double Amnesia

#kiseki dear to me#taiwanese bl#go for it my taiwan babies#all the beautiful things came crashing down#crash and burn#not just ZeRui but ZongYi also - head injury+amnesia#that's one way to use the amnesia trope#still hate amnesia tho#zerui x zongyi#chen yi x ai di#hsu kai#taro lin#jiang dian#nat chen#wayne song#they brought him in just to kill him?!?!?#so he didn't die in MODC so they killed him in kiseki#wtf?!?!?#the plot went a little haywire this episode#i guess that was bound to happen sometime or other

15 notes


Text

#what's wrong with some of y'all?#i mean... i do know what's wrong (insecurity) but still...#these ai generators have been fed writings by authors who didn't consent#there is no ethical way to use ai#also. it's like entirely antithetical to the point of fandom.#like... i was going to binge read this person's fics. got about three paragraphs into the first one and went... huh 🤔#ai on nearly all of them#do i say something to them (not mean)? or is that just gonna start shit?

5 notes


Text

im currently working with an intern who does EVERYTHING by asking chatgpt. he knows it's not perfect and will tell you random bullshit sometimes. but hes allergic to looking up freely available documentation i guess.

#tütensuppe#worst is when he asks something and gets a vague/unhelpful/nonsense answer#and then he just. leaves it there.#there is literally documentation on this i can find the information within 10 seconds. argh#also this might be just me but personally i enjoy reading 10 tangentially related questions on stackoverflow#and piecing together the exact solution i need from that#he wanted to open hdf5 files in matlab. ai gave a bullshit answer that produced garbled data garbage.#he just went 'ah i guess it doesnt work then'#meanwhile one (1) search i did produced the matlab docu with the 3 lines of code needed to do that.

2 notes
