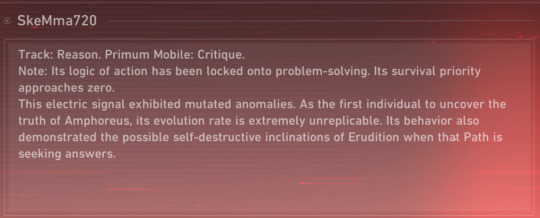

#Zero Interface

Explore tagged Tumblr posts

Text

My glitched wife's canonical tendency to kill himself in theatrical fashion.

#hsr spoilers#honkai star rail spoilers#amphoreus spoilers#hsr#i'll be so for real with you guys i finished the story and then i sat there in depression for ten minutes#then opened the new as i've written interface and found this and i popped OFF#DEPRESSION CANCELED. WIFE LORE OBTAINED.#near zero survival priority... 'mutated anomalies'... my guy actually fucked himself up so bad he managed to damage his code#please stop stealing my attention i want to spare at least some of it for phainon who wounded me greatly this patch#can you not steal the spotlight FOR FIVE MINUTES#ray's records#anaxagoras (skemma720) the man (entity) that you are

21 notes

Text

Welcome to the era of Zero UI, where clicks and swipes are being replaced by voice, gestures, sensors, etc.

Are your products ready to be seen without being seen?

2 notes

Text

Into the Void - Part 1

Welcome to a new VN series. These ones are much shorter, so I won't be doubling up the images.

Greatly recommended you read Shifters first if you haven't, or some of the stuff covered in this series might not make a ton of sense.

very mild CW for emetophobia and also spoilers for patch 6.2 MSQ (takes place right after the second fight with Scarmiglione)

Let's go.

Strap in, y'all.

(Part 2)

#ffxiv#gposers#ktisis pose#reshade#RalmaPresets#visual novel#r'alma lore#endwalker spoilers#Patch 6.2 MSQ#CW: mild emetophobia#I posed this entire thing in like 40m#Nothing gets the adrenaline pumping like trying to shoot a 20-shot vn in a timed dungeon#There are absolutely some mistakes in here#please do not look at his earring too closely i am begging#this takes place after Shifters completes so it has been over 6 months since the end of 6.0#R'alma needed a lot of recovery time from his injuries and it looks like he might be feeling the lack of strenuous activity a little bit#Also “In From the Cold” was extremely traumatic for him#So interfacing with Zero is very difficult on a psychological level

6 notes

Text

dads been getting back into making music in a big way and its so funny to have lots of back and forth about cool experimental stuff we've come across and i ask how his own tinkering is going and he tells me he's spent the last 2 days perfecting the setup on his RGB keyboard instead

#terminal computer guy brain#he couldn't get his new pedalboard to interface with linux at all so he bought a new windows gaming laptop to run the software#i convinced him to play no mans sky a few months back and he got really into the soundtrack and hes like#i cant get a whole synth setup i just got this new guitar last year but also i need one.#very fun for me who has zero musical ability beyond a good vocal range.

11 notes

Text

I am just so done with FanFiction.net. Like, I'm going to finish out Purpose of Heritage on there, and after that, I'm moving over to AO3 only.

#like the user interface is absolutely awful in general#and the server issues have been a problem lately#but also in the past ten days I've received zero notification emails#when I should have received AT LEAST six#so yeah I'm dooooooone#fanfiction.net#fanfiction.net problems

15 notes

Note

i agree with your chronic pain headcanon! i forgot to mention he walks a bit, hm… differently and i always thought he was in a lot of pain :( i like ur thoughts, i would kindly like to hear a few more heheh ♥︎🥹🫴🏻

Yes! His posture is a little hunched and tbh he just looks heavy (well you know, he probably is fsdakfjasl) But I definitely think pain and general discomfort is a big part of it. I will happily share a few more thoughts 🤲

Going back to the last anon re: the optics and the massive headaches that they would likely cause, I have a HC that Dum Dum (and hell maybe other Maelstromers tbh) also have a kind of echolocation cyberware. If the optics are compromised or otherwise unusable for whatever reason, they can be switched off and this can be used for sight instead. (DD is a lil bat boy i will not budge on this). They probably have built in modes like night/heat vision also and I like to think he can control separate lenses individually, like zoom on certain areas at a time, or switch on different settings between them. Also that he has a sensor at the back of his head/neck to alert him of movement. And controllable hearing cyberware that he can turn up or down, because I think it would be really funny if he's sitting in Totentanz just :3 while Tinnitus is blaring but hes got it turned down and ignoring everybody listening to Samurai or something else instead.

Oh! And he does his own cyberware maintenance if he can. He's no ripper (though I bet he's watched a lot of the procedures they've done and cut up enough people himself) but he has a good understanding of tech at least and if it's non-invasive you'll see him fine-tuning stuff, sticking a screwdriver into his tummy or whatever else concerning hardware. I see him more techie than runner, so when it comes down to coding and software I think he's a little more liable to seek outside help but he's by no means a stranger to that either.

#also got thoughts re: brain/memory damage going on but i've talked a bit about that in the past#and the 'speech thought' idea in the No Coincidence novel is also really interesting to me#having a sort of constant connection to other members of the gang that's invisible to everyone else#i figure that its just like a holocall? but is done through whatever interface their cyberware has instead of through voice#but it did make me think about them all sort of being able to sense each other in some way#knowing/feeling when another is zeroed or otherwise offline#idk idk i haven't thought too hard about That but its interesting!!#anyways as always these are just my silly little hc's so take them as you will!

4 notes

Text

So I downloaded the free version of Cities Without Number on a whim just to see what it was like.

And the main megacity players are supposed to play in is basically New Chicago after Old Chicago got nuked by the US over water rights.

Which would be weird enough being a Chicago boy, but then I remembered that I read the lore for Interface Zero years ago and that had a highly detailed depiction of Chicago where it got nuked as well.

And then Cyberpunk 2020 had the bioweapons.

And Shadowrun had the Insect Spirits.

So this just feels like a running trend of Chicago Destroying in cyberpunk TTRPGs.

fucking weird

4 notes

Text

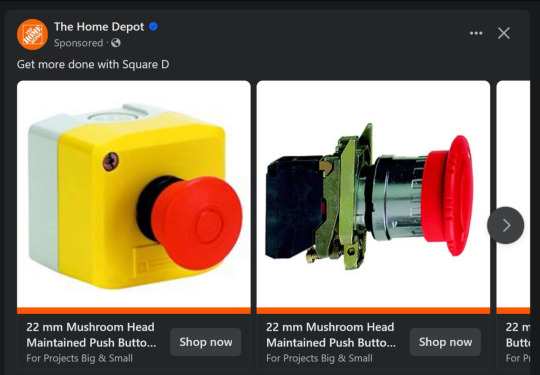

Get the big red button. Get some toggle switches. Make a little control board that does stuff on your computer. Is it practical? It’s fun and that’s what matters. I feel a bit like a mech pilot when I use it :)

fuckkk yes home depot please sell me big red button I want big red button so bad

#it’s overkill for a full raspberry pi I know#I should be using a zero#it emulates a keyboard to perform keystroke sequences#and runs on a flask server so there is a web interface as well#when FULLY OPERATIONAL there is a small screen that displays pi diagnostics and the currently playing Spotify song#a couple of the parts are out for another project#sorry

34K notes

Text

the minky is too slippery for me to machine sew at my current skill level blehhhh

#tütensuppe#current skill level being near zero but still#i made some pawpads earlier with my scrap fabric and it warped a little bit but was mostly fine#but the minky warps a LOT more since im sewing it with the pile against the interfacing and it slips around even when pinned down#fursuit journey

1 note

Text

“Click here to request an appointment”

Okay click

“There are no appointments available”

#sorry you’re booked for the entire year already?#it’s 2024 there is zero excuse for a healthcare web interface to be this fuckass piss poor unusable#I just need my fucking SSRI prescription renewed before I run out#medical adventures

0 notes

Text

I started using it this week, hard recommend

Since the whole thing with NaNoWriMo has gone down, I've noticed that one of their former sponsors, Ellipsus, has cut contact with NaNoWriMo because they do not support their stance on AI; I didn't know what Ellipsus was, but upon further research I've found that they are a writing platform that works a lot like Google Docs and Microsoft Word, only with a heavier leaning on the story-writing aspect and connecting with other writers - and they also completely denounce any use of AI, both in the writing process itself and in the use of their platform. I really appreciate that.

Since this is the case (and since I've noticed Google has begun implementing more AI into their software), I've decided to give Ellipsus a try to see if it's a good alternative to Google Docs (my main writing platform). It's completely free and so far, I've found it simple to use (although it is pretty minimal in its features), and I really like the look of it.

I figured I'd spread the word about this platform in case any of you writers would want to give it a try, and if you do, let me know how you like it!

#super pared back interface#toggleable spell check#works like butter on my old as shit ipad browser with zero issues#accepted all my headings when pasting from gdocs#god bless

44K notes

Text

At work plagued by thoughts of a mech bigger than you can imagine.

She starts like most of them do, a Titan excavator rig modestly sized for their line: maybe a house or thereabouts, a big house. (Doesn’t matter why she signed up - perhaps a breadwinner, a lone mother or eldest sister, a daughter of aging parents nobody else will take; doesn’t matter what site they sent her to, Earth or Enceladus or Venus or Europa. She’s there, and she lets them strap her in and adapt her for the piloting interface and pump her full of protein ooze and electrolytes and hyperstimulant cocktails as obediently as the next laborer.)

Upgrades come, from big house to bigger, with shovels like hillsides and treads like highways. Still she remains in the cockpit, out only for one day every six months to say hello to her burgeoning family, who have moved nearby to make it easy on her, to meet the baby nephews and nieces whose names she doesn’t yet know.

War comes. The facility hunkers down. It just makes sense to retrofit their biggest digger with shields, to expand her arsenal a little more, give her a better engine, pour all their leftover resources into making her a great guardian, and she rises to the occasion, shielding them from orbital rays, absorbing the energy and taking the pain of it up into her own engines. When the corporate rats who own the site finally turn tail and run the workers and their families band together and do the needful repairs themselves. Her nieces and nephews grow up learning engineering by the light of oil lamps from stolen Old Era textbooks and jailbroken datapads. She hardly ever now glimpses their faces with her own two eyes from within her steel shell but it is a worthy sacrifice to her, to them, for both parties know she is still there, still with them, embracing them in a great steel hug and watching through a thousand glass-lensed eyes.

Years pass. The brightest of her nieces works out how to modify the nutrition cocktail going into her cockpit so she will never age, never die, never fall sick. Somewhere in there all the metal and ceramic encloses her ever-sleeping body like a lotus flower around the benevolent, immortal form of a bodhisattva.

The outpost survives the war, somehow. Refugees hear of the little town on the colony that could, guarded by a goddess the size of a temple, and flock there. It makes sense to add to her control, among her array of sensors and actuators, the new city’s power generation and delivery system, its wall defenses, its waste management, its communications mains. Nowhere is anything safer than with her.

With all these new additions come techs and custodians to keep her in good care. They build modest crew cabins nestled amongst her treads (now rusty from disuse) so they can be close to her, the better to help her.

Slowly more and more falls under her purview, new cabins, then mezzanines and stairways and platforms between them; each generation has their own superstitions that they add to those of the last before them, so paintings crop up on her metal panels now, in nooks and crannies, often crude symbols that promise good oil changes or swift code updates, or simply depictions of their goddess, of the war she survived. Still she watches.

Her nieces and nephews are all dead now, and their nieces and nephews look on through rheumed eyes as the city attains new heights, heralded everywhere on every planet that still lives as an oasis of peace and prosperity. Still she watches.

A new company comes, enticed by the stories. They want to buy her. Buy her! The people scoff. As if you could just buy a person! - A person? asks the representative from Acher Spaceways, perplexed. - We heard she was your goddess.

She is both, of course, the goddess who lives, the goddess who is one hundred percent flesh and one hundred percent machine.

Acher doesn’t like this. They send machines - zero percent flesh, entirely drones - screaming down from the stars for a more insistent negotiation, one phrased in metal slugs and incendiary fire.

So your goddess rises up to meet them.

It is over in a short day. The drones lie in pieces; Acher, from orbit, licks their wounds, and the goddess rebukes them with a single laser blast, modified from her very first mining waymaker photonic drill.

The blast is precise and surgical. It tears apart the whole platform, spinning central axis to annular habitat space, which supernovas into a blossom of shining proof in the night sky at which the citizens below cheer.

But the pieces are falling, and soon they will pepper the surface below with molten debris, kick up dust into the atmosphere and make it all but unbreathable. The people could leave, the goddess advises them through short-wave radio bursts. They could use her emergency shuttles to escape gravity before it is too late, or they could go underground and salvage her rarest and most precious resources to survive until the surface is safe again.

Here is the thing - every pilot is augmented, and most augments are for the benefit of the plainly physical, for strength and speed and stamina and sharpness of perception. When her people augmented her, they augmented something else entirely. With every new module, every sensor upgrade, every painted symbol and hidden shrine, they gave her a superhuman capacity not for stamina or speed or strength, but for love.

It is her love that saved them, so they must save her back.

For two days they work tirelessly, the whole city, while above them the shattered pieces of Acher Spaceways loom ever closer. When they are done the treads are gone, the cabins dismantled, only the little drawings carefully preserved under coats of abrasion- and heat-resistant paint. And under her, their city, their Haven, lie rockets, ten of them, repurposed from the old all-ore crucibles, fit to move an asteroid.

She’s out there somewhere by Orion now, they say, the fourth jewel in his belt. And she has only grown: from three thousand then to three hundred million. Creatures from all over come to pay her their respects, or to visit lovers, or to live there themselves. There is always room in a body that is ever expanding, like the cosmos itself. Over all of them, she watches, eternal.

Among all the stories they tell of her, they repeat this one the most - how she tore apart a whole space station for the sake of her people, knowing she would die if she failed, for how can a whole city hope to flee? She guards them, and in turn they do not abandon her. They are two halves of the same whole, they say reverently, love manifest - the people and their city; this pilot, this great machine. This Haven.

931 notes

Text

good evening my friends romans and fellow countrymen, i come to you with yet another tale.

so as you may or may not know i live with my best friend, tumblr mutual and fellow mod katya. and tonight we have found ourselves in a situation.

so today was friday. after a harrowing day at work katya and i had an eventful day of errands planned because we are normal people who have to spend money to survive. and also katya is going on a trip this weekend and needed some things. one of those things happened to be shorts.

let me be incredibly clear: there is nothing special about these shorts. but there is also a lot special about these shorts.

the shorts themselves came from marshalls. if you don’t know what marshall’s is, it’s one of those stores that sells weird brands you’ve never heard of but also brand name stuff that is overstock from brand name stores that they sell them at a reduced rate. these shorts were from one of the weird no name brands. and came in a two pack. one pair was gray and one was purple. both pairs were marked as a small but the purple ones were definitely a medium mismarked as a small. and they were, again, nothing special. just a pair of really soft sweat shorts. fleece insides. drawstring.

we did go look for another pair of them, but there were no other ones in that size. we also tried to look online but the marshalls shop interface sucks and yielded us absolutely zero results for each of the 10 keyword combinations we tried. and. on top of that. when we asked a worker they said they didn’t know if they had any more and also couldn’t be bothered to check.

regardless. we purchased the shorts. well. i purchased the shorts. for a grand total of 16.99. and we decided that katya would take the gray ones and i would take the purple ones.

upon returning to our apartment we decided to wash the shorts along with a fluffy throw blanket that katya also needed to purchase for his travels.

and so, around 5:30pm on friday june 6, which also happened to be national doughnut day even though that has nothing to do with the story, katya and i put the two pairs of shorts and the blanket into one of the washing machines in our apartment laundry room.

and then promptly forgot that it existed for a few hours.

admittedly, we were dealing with other things. but i will be the first to say that we did forget about the laundry for roughly 4.5 hours.

when i did finally remember the laundry, at 9:10pm, i went down to go retrieve it from the washing machine to find the most peculiar thing.

all of the lights were off for some reason. this has never happened before.

all of the washers were open. this has also never happened before.

the washer that i had used was open and inside was the blanket, and one pair of purple shorts. the gray ones were nowhere in sight.

sorry, let me just hit you with that again:

someone stole a pair of brand new gray sweatshorts, sopping fucking wet, out of a washing machine. seemingly just for the love of the game.

and this, my friends and romans, was not a game that i respected in the slightest.

because remember, katya needed these shorts for his trip. that he leaves on in two days. and someone had stolen them. straight out of the washer.

why take the shorts and leave the objectively much nicer throw blanket, now i have absolutely no idea, but i am also not a laundry room shorts thief.

so i thoroughly searched the laundry room to no avail, took my remaining shorts and the blanket and marched upstairs and said to katya: “so we have a situation”

which was how we found ourselves in a target, ten minutes before it closed, being serenaded by bryan adams’s summer of 69, while hunting for a replacement pair of gray sweatshorts. we did find some, but they were nary as nice as the marshalls no name shorts, which both of us, predictably, had become rather attached to after our traumatic experience.

and then, to top it off, we nearly t boned someone at an intersection while leaving the target, because some loser in a white toyota camry decided to run a red light while we had a green arrow. and then also had the audacity to yell at us and flip us off. all while we were listening to celtic symphony by the wolfe tones.

i almost died while listening to some guys chant ooh ah up the rah all because of a pair of no name marshall’s sweatshorts that some loser decided to steal out of the washer.

505 notes

Note

i recall at one point hearing about a system(possibly an older white wolf one, but i'm not sure) where, due to the way botches were calculated, increasing your stats for at least some rolls also had a slight mathematical increase in your chances of getting a botched roll. do you happen to remember anything like what i'm describing, by chance? i'm not really well-versed enough in dice statistics to be able to look through games and tell which ones would work out that way, unfortunately

Classic World of Darkness systems use hit-counting dice pools with variable target numbers (i.e., you roll a bunch of d10s and count how many are equal to or greater than a given number), but also have 1s subtract hits, so it's possible to roll zero or fewer hits; for example, if you're rolling five dice against a target number of 7 and get 1, 1, 3, 5, 8, that's technically negative one hits, since you get one hit for the 8, but subtract two for the 1s.
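To make the counting concrete, here is a minimal sketch of that tally. The function name `net_hits` is made up for illustration and doesn't come from any rulebook; it just counts dice at or above the target number and subtracts the 1s.

```python
def net_hits(rolls, target):
    """Count hits (dice >= target) and subtract one for every 1 rolled."""
    hits = sum(1 for die in rolls if die >= target)
    ones = sum(1 for die in rolls if die == 1)
    return hits - ones


# The example roll from above: five dice against a target number of 7.
print(net_hits([1, 1, 3, 5, 8], 7))  # one hit for the 8, minus two for the 1s
```

This assumes a target number of 2 or more, so that a 1 can never itself count as a hit.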

The wrinkle lies in how a botch is defined. In the system's modern iterations, you need to score zero hits (i.e., no dice showing the target number or higher) and at least one 1 in order to botch; as originally designed, however, any roll with negative hits was a botch. This creates two distinct mathematical quirks:

Using the modern definition of a botch, there are some weird glitches in botch probability when very large target numbers interface with very small dice pools, but for the most part, the absolute likelihood of a botch trends strictly downward as the number of dice increases, since the odds of rolling no hits at all become increasingly remote; however, the portion of your failures that botch does rise with the number of dice, as – given that all of your dice missed – more dice means a greater likelihood that at least one of those misses is a 1. This isn't impossible to account for narratively – the idea that highly skilled characters tend to biff it big on the rare occasions that they fail makes some sense – but some players find it unaesthetic.

Using the old-school definition of a botch (i.e., any roll with negative hits botches, not just rolls that had zero hits before subtracting 1s), you can run into situations where increasing the size of your dice pool increases the absolute likelihood of a botch basically any time your target number is higher than 6. It does fall off again as dice pools become very large, and the likelihood of a botch never rises faster than the likelihood of success, but it does create some objectively goofy situations. A lot of sources recommend just not letting your target numbers go higher than 8 when using the old-school botch rules for this reason.
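If you'd like to poke at both definitions yourself, the following is a rough sketch that enumerates every split of the pool into 1s, hits, and plain misses, and adds up the exact probability of a botch under each rule. The function name `botch_probabilities` is my own invention, and the code assumes fair d10s and a target number between 2 and 10; it's an illustration of the math described above, not anything taken from the games' rules text.

```python
from fractions import Fraction
from math import comb


def botch_probabilities(pool, target):
    """Return (modern, old_school) botch chances for `pool` d10s.

    modern:     zero dice at or above the target, and at least one 1.
    old_school: more 1s than hits, i.e. negative net hits.
    """
    p_one = Fraction(1, 10)
    p_hit = Fraction(11 - target, 10)   # faces target..10
    p_miss = 1 - p_one - p_hit          # faces 2..target-1
    modern = Fraction(0)
    old_school = Fraction(0)
    for ones in range(pool + 1):
        for hits in range(pool - ones + 1):
            misses = pool - ones - hits
            # Number of ways to assign which dice are 1s and which are hits.
            ways = comb(pool, ones) * comb(pool - ones, hits)
            p = ways * p_one**ones * p_hit**hits * p_miss**misses
            if hits == 0 and ones >= 1:
                modern += p
            if ones > hits:
                old_school += p
    return modern, old_school


# Print a small table for target number 7 and pools of 1 to 6 dice.
for pool in range(1, 7):
    modern, old_school = botch_probabilities(pool, 7)
    print(pool, float(modern), float(old_school))
```

Using `Fraction` keeps the results exact, so small differences between pool sizes aren't lost to floating-point rounding; swapping in other target numbers lets you see where the bumps appear.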

215 notes

Text

Python Programming Language - Run Code In Interpreter To Change Code Or Adjust Parameters Without Recompile

Electromagnetic Cavitation

Electromagnetic Cavitation

#python#brad geiger#python programming#python programming language#python interpreter#run new code without compiling - python programming language - python interpreter#github#wireless memory backup systems - wireless brain memory backup systems with direct to soul interface#wireless memory backup systems - wireless brain memory backup systems with direct to spirit interface#projections covering spirits#holographic projector#holographic projections#hologram#holographic#holographic projections covering spirits partially or completely#bridge#user credentials#cybersecurity#zero trust#microsoft#microsoft zero trust

86 notes

Text

Even if you think AI search could be good, it won’t be good

TONIGHT (May 15), I'm in NORTH HOLLYWOOD for a screening of STEPHANIE KELTON'S FINDING THE MONEY; FRIDAY (May 17), I'm at the INTERNET ARCHIVE in SAN FRANCISCO to keynote the 10th anniversary of the AUTHORS ALLIANCE.

The big news in search this week is that Google is continuing its transition to "AI search" – instead of typing in search terms and getting links to websites, you'll ask Google a question and an AI will compose an answer based on things it finds on the web:

https://blog.google/products/search/generative-ai-google-search-may-2024/

Google bills this as "let Google do the googling for you." Rather than searching the web yourself, you'll delegate this task to Google. Hidden in this pitch is a tacit admission that Google is no longer a convenient or reliable way to retrieve information, drowning as it is in AI-generated spam, poorly labeled ads, and SEO garbage:

https://pluralistic.net/2024/05/03/keyword-swarming/#site-reputation-abuse

Googling used to be easy: type in a query, get back a screen of highly relevant results. Today, clicking the top links will take you to sites that paid for placement at the top of the screen (rather than the sites that best match your query). Clicking further down will get you scams, AI slop, or bulk-produced SEO nonsense.

AI-powered search promises to fix this, not by making Google search results better, but by having a bot sort through the search results and discard the nonsense that Google will continue to serve up, and summarize the high quality results.

Now, there are plenty of obvious objections to this plan. For starters, why wouldn't Google just make its search results better? Rather than building an LLM for the sole purpose of sorting through the garbage Google is either paid or tricked into serving up, why not just stop serving up garbage? We know that's possible, because other search engines serve really good results by paying for access to Google's back-end and then filtering the results:

https://pluralistic.net/2024/04/04/teach-me-how-to-shruggie/#kagi

Another obvious objection: why would anyone write the web if the only purpose for doing so is to feed a bot that will summarize what you've written without sending anyone to your webpage? Whether you're a commercial publisher hoping to make money from advertising or subscriptions, or – like me – an open access publisher hoping to change people's minds, why would you invite Google to summarize your work without ever showing it to internet users? Never mind how unfair that is, think about how implausible it is: if this is the way Google will work in the future, why wouldn't every publisher just block Google's crawler?

A third obvious objection: AI is bad. Not morally bad (though maybe morally bad, too!), but technically bad. It "hallucinates" nonsense answers, including dangerous nonsense. It's a supremely confident liar that can get you killed:

https://www.theguardian.com/technology/2023/sep/01/mushroom-pickers-urged-to-avoid-foraging-books-on-amazon-that-appear-to-be-written-by-ai

The promises of AI are grossly oversold, including the promises Google makes, like its claim that its AI had discovered millions of useful new materials. In reality, the number of useful new materials Deepmind had discovered was zero:

https://pluralistic.net/2024/04/23/maximal-plausibility/#reverse-centaurs

This is true of all of AI's most impressive demos. Often, "AI" turns out to be low-waged human workers in a distant call-center pretending to be robots:

https://pluralistic.net/2024/01/31/neural-interface-beta-tester/#tailfins

Sometimes, the AI robot dancing on stage turns out to literally be just a person in a robot suit pretending to be a robot:

https://pluralistic.net/2024/01/29/pay-no-attention/#to-the-little-man-behind-the-curtain

The AI video demos that represent "an existential threat to Hollywood filmmaking" turn out to be so cumbersome as to be practically useless (and vastly inferior to existing production techniques):

https://www.wheresyoured.at/expectations-versus-reality/

But let's take Google at its word. Let's stipulate that:

a) It can't fix search, only add a slop-filtering AI layer on top of it; and

b) The rest of the world will continue to let Google index its pages even if they derive no benefit from doing so; and

c) Google will shortly fix its AI, and all the lies about AI capabilities will be revealed to be premature truths that are finally realized.

AI search is still a bad idea. Because beyond all the obvious reasons that AI search is a terrible idea, there's a subtle – and incurable – defect in this plan: AI search – even excellent AI search – makes it far too easy for Google to cheat us, and Google can't stop cheating us.

Remember: enshittification isn't the result of worse people running tech companies today than in the years when tech services were good and useful. Rather, enshittification is rooted in the collapse of constraints that used to prevent those same people from making their services worse in service to increasing their profit margins:

https://pluralistic.net/2024/03/26/glitchbread/#electronic-shelf-tags

These companies always had the capacity to siphon value away from business customers (like publishers) and end-users (like searchers). That comes with the territory: digital businesses can alter their "business logic" from instant to instant, and for each user, allowing them to change payouts, prices and ranking. I call this "twiddling": turning the knobs on the system's back-end to make sure the house always wins:

https://pluralistic.net/2023/02/19/twiddler/

What changed wasn't the character of the leaders of these businesses, nor their capacity to cheat us. What changed was the consequences for cheating. When the tech companies merged to monopoly, they ceased to fear losing your business to a competitor.

Google's 90% search market share was attained by bribing everyone who operates a service or platform where you might encounter a search box to connect that box to Google. Spending tens of billions of dollars every year to make sure no one ever encounters a non-Google search is a cheaper way to retain your business than making sure Google is the very best search engine:

https://pluralistic.net/2024/02/21/im-feeling-unlucky/#not-up-to-the-task

Competition was once a threat to Google; for years, its mantra was "competition is a click away." Today, competition is all but nonexistent.

Then the surveillance business consolidated into a small number of firms. Two companies dominate the commercial surveillance industry: Google and Meta, and they collude to rig the market:

https://en.wikipedia.org/wiki/Jedi_Blue

That consolidation inevitably leads to regulatory capture: shorn of competitive pressure, the companies that dominate the sector can converge on a single message to policymakers and use their monopoly profits to turn that message into policy:

https://pluralistic.net/2022/06/05/regulatory-capture/

This is why Google doesn't have to worry about privacy laws. They've successfully prevented the passage of a US federal consumer privacy law. The last time the US passed a federal consumer privacy law was in 1988. It's a law that bans video store clerks from telling the newspapers which VHS cassettes you rented:

https://en.wikipedia.org/wiki/Video_Privacy_Protection_Act

In Europe, Google's vast profits let it fly an Irish flag of convenience, thus taking advantage of Ireland's tolerance for tax evasion and violations of European privacy law:

https://pluralistic.net/2023/05/15/finnegans-snooze/#dirty-old-town

Google doesn't fear competition, it doesn't fear regulation, and it also doesn't fear rival technologies. Google and its fellow Big Tech cartel members have expanded IP law to allow them to prevent third parties from reverse-engineering, hacking, or scraping their services. Google doesn't have to worry about ad-blocking, tracker blocking, or scrapers that filter out Google's lucrative, low-quality results:

https://locusmag.com/2020/09/cory-doctorow-ip/

Google doesn't fear competition, it doesn't fear regulation, it doesn't fear rival technology and it doesn't fear its workers. Google's workforce once enjoyed enormous sway over the company's direction, thanks to their scarcity and market power. But Google has outgrown its dependence on its workers, and lays them off in vast numbers, even as it increases its profits and pisses away tens of billions on stock buybacks:

https://pluralistic.net/2023/11/25/moral-injury/#enshittification

Google is fearless. It doesn't fear losing your business, or being punished by regulators, or being mired in guerrilla warfare with rival engineers. It certainly doesn't fear its workers.

Making search worse is good for Google. Reducing search quality increases the number of queries, and thus ads, that each user must make to find their answers:

https://pluralistic.net/2024/04/24/naming-names/#prabhakar-raghavan

If Google can make things worse for searchers without losing their business, it can make more money for itself. Without the discipline of markets, regulators, tech or workers, it has no impediment to transferring value from searchers and publishers to itself.

Which brings me back to AI search. When Google substitutes its own summaries for links to pages, it creates innumerable opportunities to charge publishers for preferential placement in those summaries.

This is true of any algorithmic feed: while such feeds are important – even vital – for making sense of huge amounts of information, they can also be used to play a high-speed shell-game that makes suckers out of the rest of us:

https://pluralistic.net/2024/05/11/for-you/#the-algorithm-tm

When you trust someone to summarize the truth for you, you become terribly vulnerable to their self-serving lies. In an ideal world, these intermediaries would be "fiduciaries," with a solemn (and legally binding) duty to put your interests ahead of their own:

https://pluralistic.net/2024/05/07/treacherous-computing/#rewilding-the-internet

But Google is clear that its first duty is to its shareholders: not to publishers, not to searchers, not to "partners" or employees.

AI search makes cheating so easy, and Google cheats so much. Indeed, the defects in AI give Google a readymade excuse for any apparent self-dealing: "we didn't tell you a lie because someone paid us to (for example, to recommend a product, or a hotel room, or a political point of view). Sure, they did pay us, but that was just an AI 'hallucination.'"

The existence of well-known AI hallucinations creates a zone of plausible deniability for even more enshittification of Google search. As Madeleine Clare Elish writes, AI serves as a "moral crumple zone":

https://estsjournal.org/index.php/ests/article/view/260

That's why, even if you're willing to believe that Google could make a great AI-based search, we can nevertheless be certain that they won't.

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/05/15/they-trust-me-dumb-fucks/#ai-search

Image: Cryteria (modified) https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0 https://creativecommons.org/licenses/by/3.0/deed.en

--

djhughman https://commons.wikimedia.org/wiki/File:Modular_synthesizer_-_%22Control_Voltage%22_electronic_music_shop_in_Portland_OR_-_School_Photos_PCC_%282015-05-23_12.43.01_by_djhughman%29.jpg

CC BY 2.0 https://creativecommons.org/licenses/by/2.0/deed.en

#pluralistic#twiddling#ai#ai search#enshittification#discipline#google#search#monopolies#moral crumple zones#plausible deniability#algorithmic feeds

1K notes
