#Self-DrivingCars

Link

#autonomousdrivingtechnology#autonomousvehicletechnology#AutonomousVehicles#DassaultSystemes#digitaltwin#Futurride#ride-hailing#robocars#robotaxi#self-drivingcars#self-drivingvehicles#sustainablemobility#Tesla#Waymo

0 notes

Text

AI-Driven Cars Have Transformed from Science Fiction to Street Reality in 2024

Have you ever caught yourself dreaming of riding around town in a self-driving car? Get ready, because that future is right around the corner, and you may not even know it: the race to build autonomous cars is at its peak, and artificial intelligence is the central pillar.

But before we dive headlong into this field, I'd like to stop for a moment and think about why anyone would want a self-driving car. Try to imagine this scenario: you're driving along the freeway and suddenly you see a chicken and a sloth playing pickleball on the side of the road (sorry, that's the best I could come up with). In a normal car, you'd probably be concentrating on the road and miss this strange sight (which, let's face it, sucks). But what if you were in a self-driving car? You'd be able to enjoy the show as you please, without fear of drifting into oncoming traffic.

The need for autonomous cars

Let's face it, the previous example was the lamest of them all, and it's certainly not the most appropriate. But I'd like you to keep this in mind: around 90% of car accidents in the United States are due to human error, according to the National Highway Traffic Safety Administration. Let's be honest, we humans are flawed drivers. We're (always) distracted, we're tired, we make bad decisions. Why do we behave as if we were 16 at the wheel?

This is where AI steps in, promising to be the wise and responsible adult in the car. Unlike us, the AI doesn't get distracted by an incredible song on the radio, or by a discussion about whether that's Ryan Reynolds we've just passed on the street. It keeps its eyes (that's a metaphor, okay!) on the road at all times.

How does AI power autonomous vehicles?

The question on everyone's mind is how can AI enable a car to drive itself? Well, it's not magic (even if it looks a little like magic, that is). Rather, it's about giving the car a 2.0 version of the five senses, and a brain to make sense of the hubbub.

Key AI Technologies Enabling Self-Driving Cars

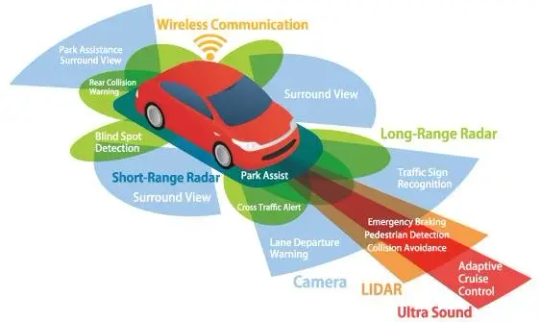

The Car's Eyes and Ears

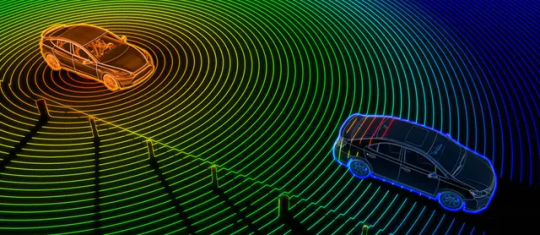

Lidar in Autonomous Driving: Pros, Cons & More

dubizzle

First of all, we have the car's eyes. They come in the form of cameras, radars and a device called LIDAR, which works like radar but with lasers. (In case you didn't know, this technology was used in Avengers: Infinity War, in the scene where we see Thanos' ship land on a city: it wasn't a photo, a video or even a 3D city.) These sensors scan the environment all the time, looking for absolutely everything, whether it's other cars, pedestrians or even the chicken and sloth we mentioned earlier. (Good! I promise this is the last time we'll talk about them.)

Vehicle tracking using ultrasonic sensors & joined particle weighting

Semantic Scholar

Next, we have the ears. These are ultrasonic sensors that work like bat sonar, bouncing sound waves off nearby targets to estimate their distance. This is very useful for parking because, let's be honest, not even the AI wants to parallel park if it can avoid it.

Then there's the sense of touch, which in this case consists of feeling the car's movements. Accelerometers and gyroscopes track the car's acceleration and rotation, a bit like the inner ear does for humans (but without the risk of carsickness, otherwise it would have been weird).

The Brains of the Operation

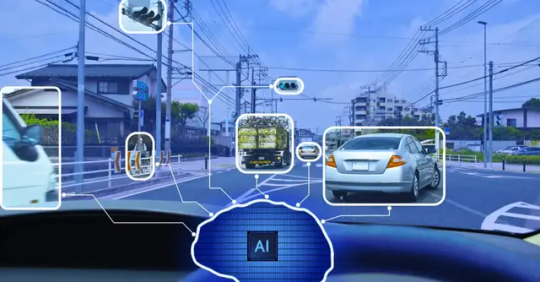

active learning and autonomous-vehicles-cloudfactory.com

All this sensory information is fed to the car's brain, a sort of hyper-powerful on-board computer running artificial intelligence algorithms. And this is where the magic happens. The AI uses all this data to build a real-time 3D model of the world around the car. It's a bit like The Matrix, but instead of dodging bullets, it dodges potholes and pedestrians.

Machine Learning: Practice Makes Perfect

But it's not enough for AI to see the world; it has to understand it. For this, we need machine learning. By analyzing millions of hours of driving data, the AI learns to recognize patterns and make anticipations, or in more appropriate terms, predictions.

If a car overtakes, is it likely to change lanes? That pedestrian, is he about to step off the sidewalk? The AI asks itself these kinds of questions all the time, answering them continuously and far more quickly than a human ever could.

Path Planning and Navigation

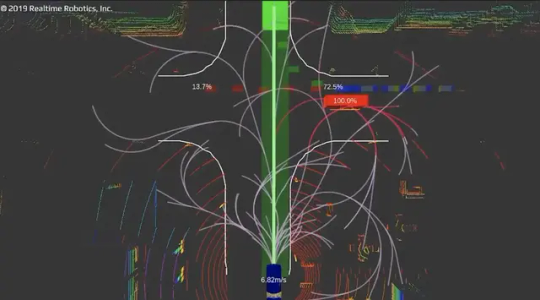

Autonomous Driving and the Need For Motion Planning- Realtime Robotics

Once the AI has understood what's going on around it, it needs to decide what to do. This is where it really starts to get exciting (and a little philosophical, I'd say). The AI has to be programmed with a set of rules and priorities. Should it put the safety of its passengers ahead of that of pedestrians? How should it handle moral dilemmas like the famous trolley problem?

These are difficult questions, and it's because of situations like these that we're not all driving around in self-driving cars yet.

But progress is being made. Companies like Tesla, Waymo and Uber have covered millions of kilometers in autonomous cars, and they're teaching their AIs how to handle all kinds of situations, from rush-hour traffic to unexpected weather conditions.

Levels of Vehicle Automation

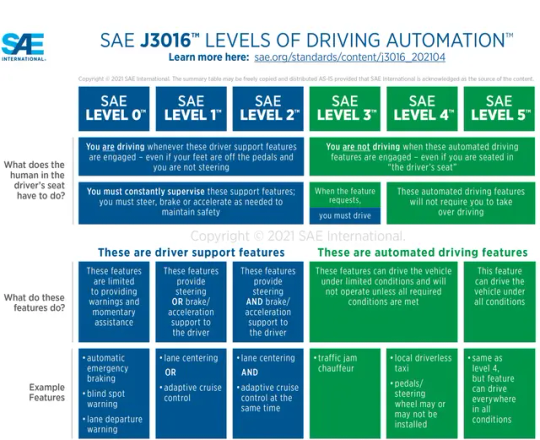

SAE Levels of Driving Automation™ Refined for Clarity and- SAE international

Speaking of progress, let's talk about autonomous driving levels. In case you didn't already know, the Society of Automotive Engineers (SAE) has kindly and carefully defined six levels for us, from 0 to 5.

Autonomy levels

- Level 0 corresponds to grandfather's old pickup truck, with no automation whatsoever.

- Level 1 can, and I mean can, have adaptive cruise control or lane-keeping assistance, but not both at once. Other than that, zilch.

- We're currently seeing a lot of Level 2 cars on the road, which can handle steering and speed together and nothing more, and still need a human supervising and ready to take over at any given moment.

- It's at level 3 that we get a first taste of autonomous driving. These cars can handle the driving themselves under certain conditions, but still need US, the human saviors, to take over when the car asks.

- Level 4 cars can drive themselves without a human backup, but only within set conditions. When the going gets tough, like in bad weather, they stop safely or gently give way to a human.

- And level 5? This is the Holy Grail, the pearl of pearls: a car capable of driving itself in all conditions, in all situations, without the need for human intervention.

Now, I've had some personal experiences with this technology that I'd like to share. I recently test-drove a car with Level 2 autonomy, and let me tell you, it was both exhilarating and terrifying. Watching the steering wheel move on its own as the car navigated a curve was like witnessing magic. But I also found myself constantly hovering my hands over the wheel, ready to take control at a moment's notice. It's a stark reminder that we're still in a transitional phase, where AI driving automation in the automotive industry is impressive but not yet perfect.

Technical Challenges

You may be thinking: all this sounds cool, but when am I going to be able to buy this thing? That's the million-dollar (or multi-billion-dollar) question.

The truth is, we're not there yet. While companies like Tesla keep refining advanced driver assistance systems, truly autonomous vehicles are still a long, long way off; in fact, they're still in the testing phase. Personally, I couldn't care less about autonomous cars, and it's not something I'm looking forward to. But if autonomous cars tempt you, some analysts think that by 2030, up to 15% of cars sold could be fully autonomous. It may not sound like much, but in 2010 electric cars accounted for less than 1% of new car sales. Today, they account for almost 10% in many countries. Technology has a way of surprising us.

The catch (there is always a catch)

As always, and this is starting to get on my nerves, there are challenges to be met. One of the most important is ensuring that these AI drivers are capable of handling unpredictable real-world events. It's one thing to navigate a well-mapped city street, and quite another to handle a country road that's not on any map, or a construction zone that appears overnight. These are the kinds of scenarios that keep AI engineers up at night.

There's also the question of public confidence. Many people are unwilling or afraid to entrust absolute control to a computer. It's going to take time, time and more time (I don't think the 2030 forecasts still hold) and proof of safety, a lot of proof of safety, before most of us feel comfortable taking a little nap while our car takes us to work. In any case, if we can be persuaded to implant a Neuralink chip in our brains, this will be a piece of cake.

And yes! We're already entrusting our lives to artificial intelligence. Every time you board a commercial aircraft, you're trusting the artificial intelligence systems that manage much of the flight. And let's be honest, most of us trust Google Maps more than our own sense of direction.

Is it worth fighting for?

By the time we get to fully autonomous cars, we'll have traveled a long and tedious road, with plenty of potholes along the way. But if we consider the benefits it could bring, it might just be worth it. Isn't a world where road accidents are drastically reduced, where the elderly and disabled can move around freely, where traffic flows like water because every car communicates with every other car, worth it?

Yes! It's worth working for, even if we have to deal with a few bugs along the way. After all, as any programmer will tell you, the first step in fixing a bug is to find it. Not to mention that test vehicles cover millions of miles every year, which could eliminate these bugs at a hell of a rate.

So if you happen to be stuck in one of those pesky traffic jams and think about this article and the day when you'll be able to sit back and let your car do the driving, remember our little secret: that day will come. Maybe not tomorrow, maybe not next year, maybe not even in the next 10 years. But it's coming. And when it does, it will change the way we travel forever.

Conclusion

In the meantime, keep your eyes on the road. And if you happen to see a chicken and a sloth playing pickleball, well (damn! I promised I wouldn't talk about that anymore)... maybe it's time to stop and check if you're dreaming. Or if you've stumbled into a Pixar movie. Either way, it might be best to let a human take the wheel on this particular journey.

Read the full article

#AIadvancementsinautonomousvehiclenavigation#AIalgorithmsforself-drivingcars#AIdrivingautomationintheautomotiveindustry#AIinautonomousvehicles#AIinself-drivingcars#AI-basedautonomousvehiclesystems#AI-poweredself-drivingcarsoftware#AI'simpactonautonomousvehicletechnology#autonomousvehicles#benefitsofAIinautonomousdriving#futureofAIinself-drivingcars#self-drivingcarsafetywithAI#self-drivingcars#self-drivingtechnology#theroleofAIindevelopingself-drivingtechnology

0 notes

Text

The Robotics Revolution All Around We Don't See

Despite being romanticised as futuristic, robots are already indispensable in our lives, making them better and exploring other worlds like the Moon, writes Satyen.

Read More. https://www.sify.com/ai-analytics/the-robotics-revolution-all-around-we-dont-see/

#RoboticsRevolution#AI#ArtificialIntelligence#Robot#Automation#Chornobyl#AutonomousVehicle#Self-DrivingCars

0 notes

Photo

The mansion in Succession’s season 4 premiere belongs to a real-life billionaire with a struggling company

The fourth and final season of the hit dark-comedy series Succession kicked off with the perfect backdrop to Roy family scheming: a sprawling clifftop property in the Pacific Palisades, Los Angeles. Read more...

https://qz.com/succession-season-4-premiere-austin-russell-mansion-1850268981

#billionaire#succession#goresmetropoulos#tomhanks#forbes#luminar#volvo#luminartechnologies#velodyne#articles#business2cfinance#park#lidar#stanforduniversity#self drivingcar#roy#pacificpalisades#technology#austinrussell#applicationsoftware#emergingtechnologies#Julia Malleck#Quartz

0 notes

Text

driverless #self-drivingcars #Autonomousdriving #technology #future #innovation #car #AI #autonomousvehicles #tech #automotive #vehicle #selfdriving #auto

1 note

·

View note

Link

Self-driving Cars Market by Component (Radar, LiDAR, Ultrasonic, & Camera Unit), Vehicle (Hatchback, Coupe & Sports Car, Sedan, SUV), Level of Autonomy (L1, L2, L3, L4, L5), Mobility Type, EV and Region

#self-driving cars market#SelfDrivingCarsMarket#self-drivingcars#SelfDrivingCars#SelfDrivingCarsMarketSize#SelfDrivingCarsMarketShare#SelfDrivingCarsMarketGrowth#SelfDrivingCarsMarketTrends#SelfDrivingCarsMarketAnalysis#SelfDrivingCarsMarketOverview#SelfDrivingCarsMarketTechnology#SelfDrivingCarsIndustry

0 notes

Text

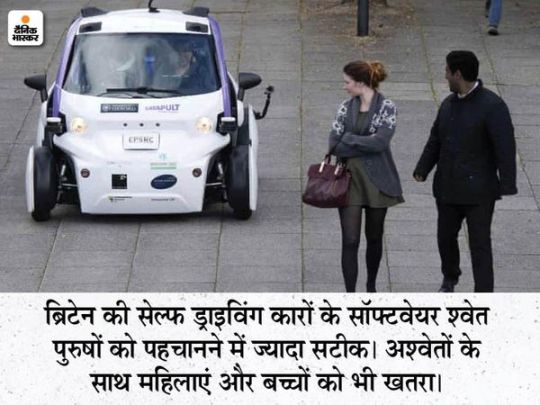

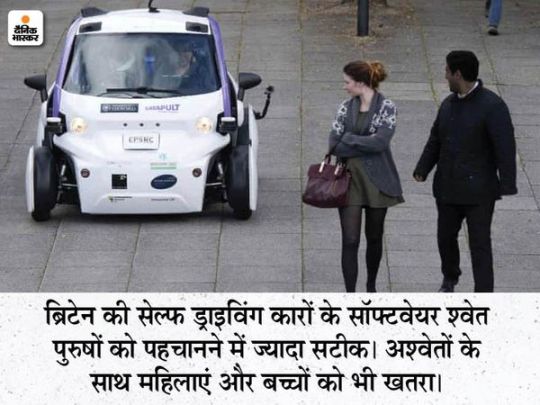

Human bias in machine thinking: self-driving cars are racist too, failing to recognize Black faces; British Law Commission warns the consequences could be dangerous

Human racism has crept into the thinking of self-driving cars, which are supposed to reason and decide for themselves. Surprised? But it's true. Britain's Law Commission has issued a warning about self-driving cars…

View On WordPress

#America#artificial technology#Autonomous Driving Car#britain#Cars#cars 2 racist#google facial recognition system#Google image recognition system#Law Commission#Racism#Racist#racist self driving car#Self-driving cars#Self-Driving Cars Are Racist#self-driving cars can not detect dark skin#self-driving cars hitting people#Self-DrivingCars

0 notes

Link

We live in the Technology Era. Humanity has already made great strides in technology. The wheel, invented by ancient people, started a great revolution, and one of the greatest designs it ever made possible is the vehicle. Automobile technology has now reached an advanced level, and the technology in modern vehicles is sometimes astonishing. Some vehicles even use artificial intelligence, and some can drive themselves. Self-driving once seemed like something that belonged to the future, but it is what we look forward to now.

#History Of Self-Driving Cars How Self-Driving Cars Detect Objects? Self-Driving Cars Technology#HistoryOfSelf-DrivingCars HowSelf-DrivingCarsDetectObjects Self-DrivingCars TTechnolog

1 note

·

View note

Photo

The Golden State of Scooter Surveillance #privacy #Scooters #Self-drivingCars #transportation https://t.co/a83WE9Pwpy http://twitter.com/iandroideu1/status/1234894087884435456

— iAndroid.eu (@iandroideu1) March 3, 2020

0 notes

Text

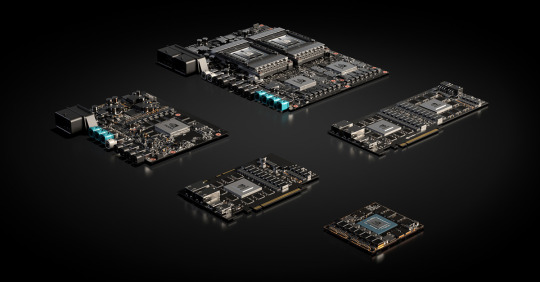

Nvidia acquisition of Arm has self-driving car implications

Arm’s technology, already embedded in Nvidia’s self-driving computers, will enable the next generation of autonomous vehicle technology from companies like Voyage.

Read the full article

#ArmLimited#artificialintelligence#Chrysler#CPU#CPUdesign#FCA#GHSP#Nvidia#robotaxi#self-drivingcar#SoftBank#supercomputers#Voyage

0 notes

Text

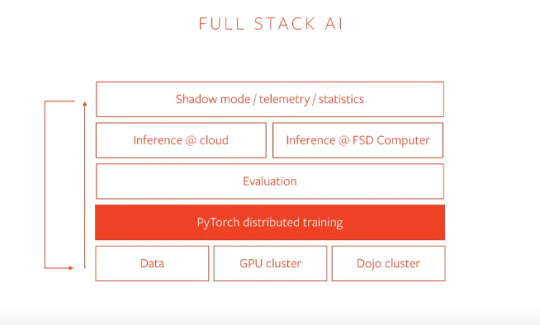

How Tesla Uses PyTorch

Last week, Tesla Motors made the news by delivering the Smart Summon feature on its cars. The cars can now be made to move around a parking lot with just a click. A myriad of tools and frameworks run in the background to make Tesla’s futuristic features a success. One such framework is PyTorch.

PyTorch has gained popularity over the past couple of years, and it now powers Tesla’s full-autonomy ambitions.

During a talk at the recently concluded PyTorch developer conference, Andrej Karpathy, who plays a key role in Tesla’s self-driving capabilities, spoke about how the full AI stack utilises PyTorch in the background.

Tesla WorkFlow With PyTorch

via PyTorch

Tesla Motors is known for pioneering the self-driving vehicle revolution in the world. They are also known for achieving high reliability in autonomous vehicles without the use of either LIDAR or high definition maps. Tesla cars depend entirely upon computer vision.

Tesla is a fairly vertically integrated company, and that is also true when it comes to the intelligence of Autopilot. Everything that goes into making Tesla Autopilot the best in the world is based on machine learning and the raw video streams that come from 8 cameras around the vehicle. The footage from these cameras is processed through convolutional neural networks (CNNs) for object detection and, eventually, for other tasks.

The collected data is labelled, training is done on on-premise GPU clusters and then it is taken through the entire stack. The networks are run on Tesla’s own custom hardware giving them full control over the lifecycle of all these features, which are deployed to almost 4 million Teslas around the world.

For instance, a single frame from the footage of a single camera can contain the following:

- Road markings
- Traffic lights
- Overhead signs
- Crosswalks
- Moving objects
- Static objects
- Environment tags

So this quickly becomes a multi-task setting. In a typical Tesla computer vision workflow, all these tasks are connected to a shared, ResNet-50-like backbone running on images of roughly 1000 x 1000 pixels. The backbone is shared because having a separate neural network for every single task is costly and inefficient. These shared-backbone networks are called Hydra Nets.

There are multiple Hydra Nets for multiple tasks and the information gathered from all these networks can be used to solve recurring tasks. This requires a combination of data-parallel and model-parallel training.

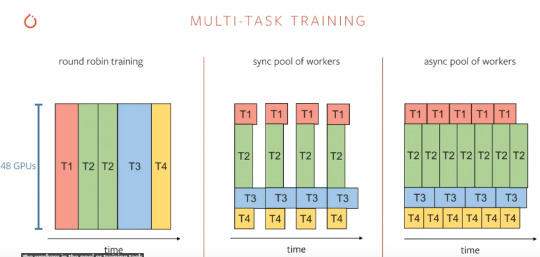

via PyTorch

Multi-task training can be done in three main ways, as illustrated above. Though round-robin looks straightforward, it is not efficient; a pool of workers is more effective because it can work on multiple tasks simultaneously. The engineers at Tesla find PyTorch well suited to this kind of multitasking.

For autopilot, Tesla trains around 48 networks that do 1,000 different predictions and it takes 70,000 GPU hours. Moreover, this training is not a one-time affair but an iterative one and all these workflows should be automated while making sure that these 1,000 different predictions don’t regress over time.
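One simple way to automate a "don't regress" check is to compare each prediction's metric from a newly trained model against a stored baseline and flag anything that dropped by more than a tolerance. The sketch below assumes accuracy-like metrics where higher is better. The function name `find_regressions`, the metric names and the 0.01 tolerance are illustrative assumptions, not details from the talk.

```python
from typing import Dict, List


def find_regressions(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    tolerance: float = 0.01,
) -> List[str]:
    """Return names of predictions whose metric fell by more than `tolerance`.

    A prediction missing from the candidate counts as a regression.
    """
    regressed = []
    for name, old_score in baseline.items():
        new_score = candidate.get(name)
        if new_score is None or old_score - new_score > tolerance:
            regressed.append(name)
    return sorted(regressed)


# Hypothetical scores for three of the many predictions.
baseline = {"stop_sign": 0.97, "lane_line": 0.97, "crosswalk": 0.91}
candidate = {"stop_sign": 0.98, "lane_line": 0.92, "crosswalk": 0.905}

failures = find_regressions(baseline, candidate)
print(failures)
```

In an automated workflow, a non-empty result would block the new model from being deployed until someone looks at the flagged predictions.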

Why PyTorch?

Smart summon in action

When it comes to machine learning frameworks, TensorFlow and PyTorch are widely popular with practitioners. No other framework comes even remotely close to what these two products, from Google and Facebook respectively, have in store. Both frameworks have found their niche within the AI community. PyTorch, especially, has become the go-to framework for machine learning researchers.

PyTorch citations in papers on ArXiv grew 194% in the first half of 2019 alone while the number of contributors to the platform has grown more than 50%.

Companies like Microsoft, Uber, and other organisations across industries are increasingly using it as the foundation for their most important machine learning research and production workloads.

And now with the release of PyTorch 1.3, the platform got a much needed boost as it now includes experimental support for features such as seamless model deployment to mobile devices, model quantisation for better performance at inference time, and front-end improvements, like the ability to name tensors and create clearer code with less need for inline comments.
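Model quantisation, mentioned above, stores numbers as small integers instead of 32-bit floats, trading a little precision for smaller and faster models. The following is a minimal plain-Python sketch of the general idea (affine 8-bit quantisation). It is not PyTorch's implementation or API, and `quantize` and `dequantize` are hypothetical helper names.

```python
from typing import List, Tuple


def quantize(values: List[float]) -> Tuple[List[int], float, int]:
    """Map floats onto unsigned 8-bit integers in the range 0..255."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for constant input
    zero_point = round(-lo / scale)
    q = [min(255, max(0, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate floats from the 8-bit codes."""
    return [(x - zero_point) * scale for x in q]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]  # made-up example weights
q, scale, zero_point = quantize(weights)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error)
```

Each value now fits in one byte instead of four, and the round-trip error stays within one quantisation step (`scale`).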

The team also has plans in place for launching a number of additional tools and libraries to support model interpretability and bringing multimodal research to production.

Additionally, the PyTorch team has collaborated with Google and Salesforce to add broad support for Cloud Tensor Processing Units, providing a significantly accelerated option for training large-scale deep neural networks.

These timely releases from PyTorch coincide with the deadlines Elon Musk has set for his Tesla team. With the success of Smart Summon, Tesla aims to go fully autonomous in the next couple of years, and we can safely say that it has rightly chosen PyTorch to do the heavy lifting.

Read the full article

0 notes

Text

Putting the 'auto' in automobiles

Dinesh Elumalai looks at the past and the future of self-driving cars, and how this technology will make rides safer

Read More. https://www.sify.com/ai-analytics/putting-the-auto-in-automobiles/

0 notes

Photo

Elon Musk readies to unveil Tesla’s third master plan at the company’s first investor day

Tesla CEO Elon Musk will address investors tomorrow (March 1) at the company’s electric vehicle gigafactory in Austin, Texas. Read more...

https://qz.com/tesla-investor-day-2023-musk-ev-twitter-1850168313

#tesla#elonmusk#theboringcompany#business2cfinance#whitesouthafricanpeople#nikolatesla#spacex#self drivingcar#carclassifications#teslapowerwall#cars#motorvehicles#electriccar#bernardarnault#teslamodels#hyperloop#andresmanuellopezobrador#Diego Lasarte#Quartz

0 notes

Text

Self-driving car demo is the first to cross the US-Canada border

As a rule, self-driving car tests tend to be limited to the country where they started. But that's not how people drive — what happens when your autonomous vehicle crosses the border? Continental and Magna plan to find out. They're planning to pilot… Read entire story.

Source: Engadget RSS Feed

View On WordPress

1 note

·

View note

Photo

I took a ride in the self-driving vehicle in Eiheiji Town, Fukui Prefecture. A demonstration experiment of autonomous driving is running there from June 24 to December 20, 2019. There is a driver on board, but they don't operate the steering wheel or the brakes 😊 At 19 km/h, the speed lets you enjoy the surrounding scenery 🍁 The vehicle runs along an electromagnetic induction wire embedded in the road surface. I also filmed it from above with a drone.

#福井

#永平寺町

#自動走行車両

#自動走行

#実証実験

#電磁誘導

#ドローン

#空撮

#fukui

#Eiheiji

#selfdrivingcar

#demonstrationexperiment

#drone

#aerialphotography

1 note

·

View note