#Open source code

Text

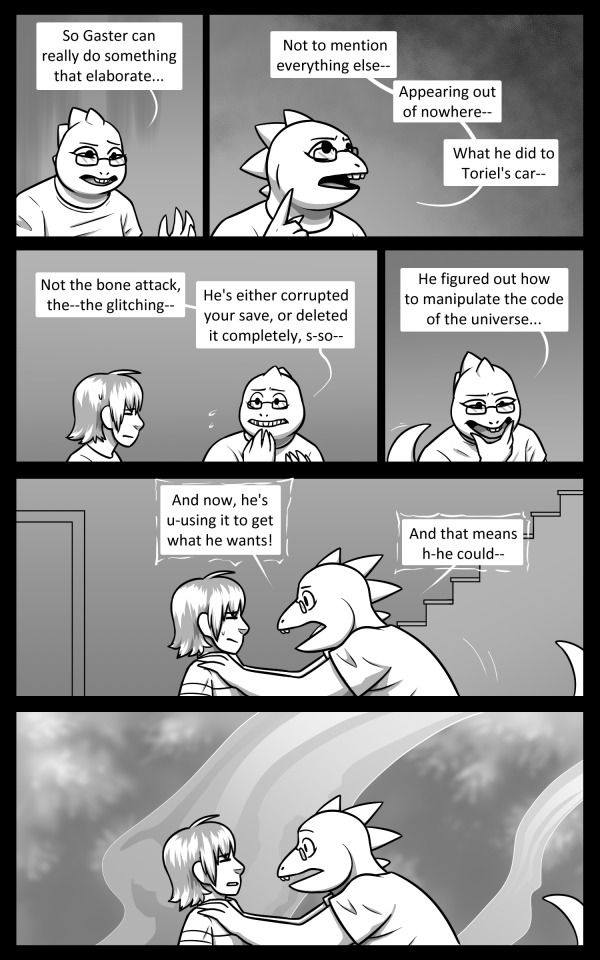

Unexpected Guests Chapter 10, Act Two: Page 6


Out of sight doesn't mean out of mind.... Gaster won't let anything interfere with his goal.

Look for the next update on Nov. 16th!

#undertale#undertale comic#unexpected guests comic#frisk (undertale)#alphys (undertale)#it was nice to draw alphys nerding out -u-#that's a real theory she's discussing but it's basically one guy who advocates for it--typically not a good sign for validity in science >>#but it's great for worlds based on meta video games :>#i couldn't find an easy way to use undertale's source code so that's... just the opening narration in binary =u=;;;#so. no secrets there >>;

2K notes


Text

feel like i've finally gotten to the point where the answer to "will i be able to figure out how to do the thing" is almost always yes, and then the operative question becomes "will i be able to figure out how to do the thing quickly". and unfortunately the answer there is usually no

213 notes


Text

"Open" "AI" isn’t

Tomorrow (19 Aug), I'm appearing at the San Diego Union-Tribune Festival of Books. I'm on a 2:30PM panel called "Return From Retirement," followed by a signing:

https://www.sandiegouniontribune.com/festivalofbooks

The crybabies who freak out about The Communist Manifesto appearing on university curriculum clearly never read it – chapter one is basically a long hymn to capitalism's flexibility and inventiveness, its ability to change form and adapt itself to everything the world throws at it and come out on top:

https://www.marxists.org/archive/marx/works/1848/communist-manifesto/ch01.htm#007

Today, leftists signal this protean capacity of capital with the -washing suffix: greenwashing, genderwashing, queerwashing, wokewashing – all the ways capital cloaks itself in liberatory, progressive values, while still serving as a force for extraction, exploitation, and political corruption.

A smart capitalist is someone who, sensing the outrage at a world run by 150 old white guys in boardrooms, proposes replacing half of them with women, queers, and people of color. This is a superficial maneuver, sure, but it's an incredibly effective one.

In "Open (For Business): Big Tech, Concentrated Power, and the Political Economy of Open AI," a new working paper, Meredith Whittaker, David Gray Widder and Sarah Myers West document a new kind of -washing: openwashing:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4543807

Openwashing is the trick that large "AI" companies use to evade regulation and neutralize critics, by casting themselves as forces of ethical capitalism, committed to the virtue of openness. No one should be surprised to learn that the products of the "open" wing of an industry whose products are neither "artificial," nor "intelligent," are also not "open." Every word AI huxters say is a lie; including "and," and "the."

So what work does the "open" in "open AI" do? "Open" here is supposed to invoke the "open" in "open source," a movement that emphasizes a software development methodology that promotes code transparency, reusability and extensibility, which are three important virtues.

But "open source" itself is an offshoot of a more foundational movement, the Free Software movement, whose goal is to promote freedom, and whose method is openness. The point of software freedom was technological self-determination, the right of technology users to decide not just what their technology does, but who it does it to and who it does it for:

https://locusmag.com/2022/01/cory-doctorow-science-fiction-is-a-luddite-literature/

The open source split from free software was ostensibly driven by the need to reassure investors and businesspeople so they would join the movement. The "free" in free software is (deliberately) ambiguous, a bit of wordplay that sometimes misleads people into thinking it means "Free as in Beer" when really it means "Free as in Speech" (in Romance languages, these distinctions are captured by translating "free" as "libre" rather than "gratis").

The idea behind open source was to rebrand free software in a less ambiguous – and more instrumental – package that stressed cost-savings and software quality, as well as "ecosystem benefits" from a co-operative form of development that recruited tinkerers, independents, and rivals to contribute to a robust infrastructural commons.

But "open" doesn't merely resolve the linguistic ambiguity of libre vs gratis – it does so by removing the "liberty" from "libre," the "freedom" from "free." "Open" changes the pole-star that movement participants follow as they set their course. Rather than asking "Which course of action makes us more free?" they ask, "Which course of action makes our software better?"

Thus, by dribs and drabs, the freedom leeches out of openness. Today's tech giants have mobilized "open" to create a two-tier system: the largest tech firms enjoy broad freedom themselves – they alone get to decide how their software stack is configured. But for all of us who rely on that (increasingly unavoidable) software stack, all we have is "open": the ability to peer inside that software and see how it works, and perhaps suggest improvements to it:

https://www.youtube.com/watch?v=vBknF2yUZZ8

In the Big Tech internet, it's freedom for them, openness for us. "Openness" – transparency, reusability and extensibility – is valuable, but it shouldn't be mistaken for technological self-determination. As the tech sector becomes ever-more concentrated, the limits of openness become more apparent.

But even by those standards, the openness of "open AI" is thin gruel indeed (that goes triple for the company that calls itself "OpenAI," which is a particularly egregious openwasher).

The paper's authors start by suggesting that the "open" in "open AI" is meant to imply that an "open AI" can be scratch-built by competitors (or even hobbyists), but that this isn't true. Not only is the material that "open AI" companies publish insufficient for reproducing their products, even if those gaps were plugged, the resource burden required to do so is so intense that only the largest companies could do so.

Beyond this, the "open" parts of "open AI" are insufficient for achieving the other claimed benefits of "open AI": they don't promote auditing, or safety, or competition. Indeed, they often cut against these goals.

"Open AI" is a wordgame that exploits the malleability of "open," but also the ambiguity of the term "AI": "a grab bag of approaches, not… a technical term of art, but more … marketing and a signifier of aspirations." Hitching this vague term to "open" creates all kinds of bait-and-switch opportunities.

That's how you get Meta claiming that LLaMa-2 is "open source," despite being licensed in a way that is absolutely incompatible with any widely accepted definition of the term:

https://blog.opensource.org/metas-llama-2-license-is-not-open-source/

LLaMa-2 is a particularly egregious openwashing example, but there are plenty of other ways that "open" is misleadingly applied to AI: sometimes it means you can see the source code, sometimes that you can see the training data, and sometimes that you can tune a model, all to different degrees, alone and in combination.

But even the most "open" systems can't be independently replicated, due to raw computing requirements. This isn't the fault of the AI industry – the computational intensity is a fact, not a choice – but when the AI industry claims that "open" will "democratize" AI, they are hiding the ball. People who hear these "democratization" claims (especially policymakers) are thinking about entrepreneurial kids in garages, but unless these kids have access to multi-billion-dollar data centers, they can't be "disruptors" who topple tech giants with cool new ideas. At best, they can hope to pay rent to those giants for access to their compute grids, in order to create products and services at the margin that rely on existing products, rather than displacing them.

The "open" story, with its claims of democratization, is an especially important one in the context of regulation. In Europe, where a variety of AI regulations have been proposed, the AI industry has co-opted the open source movement's hard-won narrative battles about the harms of ill-considered regulation.

For open source (and free software) advocates, many tech regulations aimed at taming large, abusive companies – such as requirements to surveil and control users to extinguish toxic behavior – wreak collateral damage on the free, open, user-centric systems that we see as superior alternatives to Big Tech. This leads to the paradoxical effect of passing regulation to "punish" Big Tech that ends up simply shaving an infinitesimal percentage off the giants' profits, while destroying the small co-ops, nonprofits and startups before they can grow to be a viable alternative.

The years-long fight to get regulators to understand this risk has been waged by principled actors working for subsistence nonprofit wages or for free, and now the AI industry is capitalizing on lawmakers' hard-won consideration for collateral damage by claiming to be "open AI" and thus vulnerable to overbroad regulation.

But the "open" projects that lawmakers have been coached to value are precious because they deliver a level playing field, competition, innovation and democratization – all things that "open AI" fails to deliver. The regulations the AI industry is fighting also don't necessarily raise the free-speech concerns that are core to protecting free software:

https://www.eff.org/deeplinks/2015/04/remembering-case-established-code-speech

Just think about LLaMa-2. You can download it for free, along with the model weights it relies on – but not detailed specs for the data that was used in its training. And the source-code is licensed under a homebrewed license cooked up by Meta's lawyers, a license that only glancingly resembles anything from the Open Source Definition:

https://opensource.org/osd/

Core to Big Tech companies' "open AI" offerings are tools, like Meta's PyTorch and Google's TensorFlow. These tools are indeed "open source," licensed under real OSS terms. But they are designed and maintained by the companies that sponsor them, and optimize for the proprietary back-ends each company offers in its own cloud. When programmers train themselves to develop in these environments, they are gaining expertise in adding value to a monopolist's ecosystem, locking themselves in with their own expertise. This is a classic example of software freedom for tech giants and open source for the rest of us.

One way to understand how "open" can produce a lock-in that "free" might prevent is to think of Android: Android is an open platform in the sense that its sourcecode is freely licensed, but the existence of Android doesn't make it any easier to challenge the mobile OS duopoly with a new mobile OS; nor does it make it easier to switch from Android to iOS and vice versa.

Another example: MongoDB, a free/open database tool that was adopted by Amazon, which subsequently forked the codebase and tuned it to work on their proprietary cloud infrastructure.

The value of open tooling as a stickytrap for creating a pool of developers who end up as sharecroppers who are glued to a specific company's closed infrastructure is well-understood and openly acknowledged by "open AI" companies. Zuckerberg boasts about how PyTorch ropes developers into Meta's stack, "when there are opportunities to make integrations with products, [so] it’s much easier to make sure that developers and other folks are compatible with the things that we need in the way that our systems work."

Tooling is a relatively obscure issue, primarily debated by developers. A much broader debate has raged over training data – how it is acquired, labeled, sorted and used. Many of the biggest "open AI" companies are totally opaque when it comes to training data. Google and OpenAI won't even say how many pieces of data went into their models' training – let alone which data they used.

Other "open AI" companies use publicly available datasets like the Pile and CommonCrawl. But you can't replicate their models by shoveling these datasets into an algorithm. Each one has to be groomed – labeled, sorted, de-duplicated, and otherwise filtered. Many "open" models merge these datasets with other, proprietary sets, in varying (and secret) proportions.

Quality filtering and labeling for training data is incredibly expensive and labor-intensive, and involves some of the most exploitative and traumatizing clickwork in the world, as poorly paid workers in the Global South make pennies for reviewing data that includes graphic violence, rape, and gore.

Not only is the product of this "data pipeline" kept a secret by "open" companies, the very nature of the pipeline is likewise cloaked in mystery, in order to obscure the exploitative labor relations it embodies (the joke that "AI" stands for "absent Indians" comes out of the South Asian clickwork industry).

The most common "open" in "open AI" is a model that arrives built and trained, which is "open" in the sense that end-users can "fine-tune" it – usually while running it on the manufacturer's own proprietary cloud hardware, under that company's supervision and surveillance. These tunable models are undocumented blobs, not the rigorously peer-reviewed transparent tools celebrated by the open source movement.

If "open" was a way to transform "free software" from an ethical proposition to an efficient methodology for developing high-quality software, then "open AI" is a way to transform "open source" into a rent-extracting black box.

Some "open AI" has slipped out of the corporate silo. Meta's LLaMa was leaked by early testers, republished on 4chan, and is now in the wild. Some exciting stuff has emerged from this, but despite this work happening outside of Meta's control, it is not without benefits to Meta. As an infamous leaked Google memo explains:

Paradoxically, the one clear winner in all of this is Meta. Because the leaked model was theirs, they have effectively garnered an entire planet's worth of free labor. Since most open source innovation is happening on top of their architecture, there is nothing stopping them from directly incorporating it into their products.

https://www.searchenginejournal.com/leaked-google-memo-admits-defeat-by-open-source-ai/486290/

Thus, "open AI" is best understood as "free product development" for large, well-capitalized AI companies, conducted by tinkerers who will not be able to escape these giants' proprietary compute silos and opaque training corpuses, and whose work product is guaranteed to be compatible with the giants' own systems.

The instrumental story about the virtues of "open" often invokes auditability: the fact that anyone can look at the source code makes it easier for bugs to be identified. But as open source projects have learned the hard way, the fact that anyone can audit your widely used, high-stakes code doesn't mean that anyone will.

The Heartbleed vulnerability in OpenSSL was a wake-up call for the open source movement – a bug that endangered every secure webserver connection in the world, which had hidden in plain sight for years. The result was an admirable and successful effort to build institutions whose job it is to actually make use of open source transparency to conduct regular, deep, systemic audits.

In other words, "open" is a necessary, but insufficient, precondition for auditing. But when the "open AI" movement touts its "safety" thanks to its "auditability," it fails to describe any steps it is taking to replicate these auditing institutions – how they'll be constituted, funded and directed. The story starts and ends with "transparency" and then makes the unjustifiable leap to "safety," without any intermediate steps about how the one will turn into the other.

It's a Magic Underpants Gnome story, in other words:

Step One: Transparency

Step Two: ??

Step Three: Safety

https://www.youtube.com/watch?v=a5ih_TQWqCA

Meanwhile, OpenAI itself has gone on record as objecting to "burdensome mechanisms like licenses or audits" as an impediment to "innovation" – all the while arguing that these "burdensome mechanisms" should be mandatory for rival offerings that are more advanced than its own. To call this a "transparent ruse" is to do violence to good, hardworking transparent ruses all the world over:

https://openai.com/blog/governance-of-superintelligence

Some "open AI" is much more open than the industry-dominating offerings. There's EleutherAI, a donor-supported nonprofit whose model comes with documentation and code, licensed Apache 2.0. There are also some smaller academic offerings: Vicuna (UCSD/CMU/Berkeley); Koala (Berkeley) and Alpaca (Stanford).

These are indeed more open (though Alpaca – which ran on a laptop – had to be withdrawn because it "hallucinated" so profusely). But to the extent that the "open AI" movement invokes (or cares about) these projects, it is in order to brandish them before hostile policymakers and say, "Won't someone please think of the academics?" These are the poster children for proposals like exempting AI from antitrust enforcement, but they're not significant players in the "open AI" industry, nor are they likely to be for so long as the largest companies are running the show:

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4493900

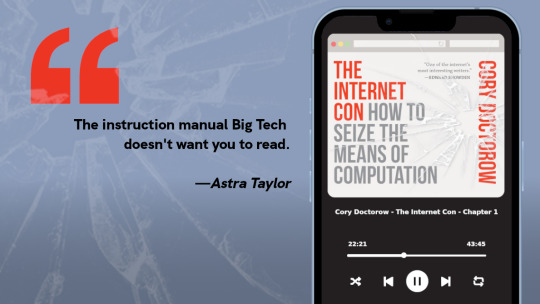

I'm kickstarting the audiobook for "The Internet Con: How To Seize the Means of Computation," a Big Tech disassembly manual to disenshittify the web and make a new, good internet to succeed the old, good internet. It's a DRM-free book, which means Audible won't carry it, so this crowdfunder is essential. Back now to get the audio, Verso hardcover and ebook:

http://seizethemeansofcomputation.org

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/08/18/openwashing/#you-keep-using-that-word-i-do-not-think-it-means-what-you-think-it-means

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#llama-2#meta#openwashing#floss#free software#open ai#open source#osi#open source initiative#osd#open source definition#code is speech

250 notes


Text

The “no one would ever work for free” crowd never really considered the eternal bond between humans and increasingly complex tools

#anarchism#anarchist#anarchocommunism#praxis#communism#communist#revolution#leftism#leftist#politics#work#free work#open source#coding#code

142 notes


Text

Some screen sharing of challenging backend Java code that I was testing, although it was quite basic, to be honest.

Additionally, my notebook contained a pretty basic code base for a producer class that I wrote by hand. I want to become very skilled in creating Java classes based on the producer-consumer pattern using Kafka.
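For anyone curious what the producer-consumer pattern looks like in Java, here is a minimal sketch. It is not the code from my notebook and it does not use the Kafka client API: an in-memory `BlockingQueue` stands in for a Kafka topic so the example runs with the standard library alone. The class name, the `event-N` messages, and the end-of-stream sentinel are all hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

/** In-memory producer-consumer sketch; the queue is an assumed stand-in for a Kafka topic. */
final class ProducerConsumerSketch {

    // Hypothetical sentinel that tells the consumer no more messages will arrive.
    private static final String POISON_PILL = "__END__";

    /** Produces {@code count} messages on one thread and consumes them on another. */
    static List<String> run(int count) throws InterruptedException {
        // Bounded queue: put() blocks when it is full, take() blocks when it is empty.
        BlockingQueue<String> queue = new ArrayBlockingQueue<>(2);
        List<String> consumed = new ArrayList<>();

        Thread producer = new Thread(() -> {
            try {
                for (int i = 0; i < count; i++) {
                    queue.put("event-" + i);
                }
                queue.put(POISON_PILL); // signal end of stream
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });

        Thread consumer = new Thread(() -> {
            try {
                while (true) {
                    String message = queue.take();
                    if (message.equals(POISON_PILL)) {
                        break;
                    }
                    consumed.add(message);
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });

        producer.start();
        consumer.start();
        // join() guarantees the consumer's writes to the list are visible here.
        producer.join();
        consumer.join();
        return consumed;
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(run(5));
    }
}
```

The bounded queue is the point of the sketch: a fast producer is forced to wait for a slow consumer instead of piling up messages. A real Kafka setup would replace the queue with a topic and the two threads with separate producer and consumer clients.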

#coding#linux#programming#programmer#developer#software development#software#student#study aesthetic#study blog#university student#studyblr#study motivation#studying#university#learning#study#studynotes#studyblr community#java#vim#neovim#vimterminal#clivim#iusevimbtw#linuxposting#open source#computers#debian#notebook

29 notes


Text

Can we upgrade open-source decentralized services, tools, and networks so that decentralization can be the default option for startups and developers? Can we achieve big tech's security and stability with decentralized networks?

Share any thoughts or ideas you have... what do you think?

NOTICE: I'm not talking about web3 or crypto.

96 notes


Text

My critique of WH is also not that the narrator seems to have such a biased, strange inner moral code/ sense of justice, that really would only make sense to him, that really only reflects his own emotional truth (I think many people have that); it’s that everyone around him reflects this in a way that feels … unrealistic.

#How convenient and transparent is it#that in the opening chapter of tmatl#outside and ‘objective’ characters affirm his view#that Norris was arrogant and no ‘true’ gentleman#but that Wyatt was#the person he destroyed vs the person he spared#esp bcus there is really no contemporary source that spoke ill of Norris#Besides George I would say that his was the accusation#that most were recorded as not believing#because it didn’t reflect what they knew of his character and reputation#the whole inciting incident being a masque that dances on Wolsey’s grave being part of that … odd moral code#I mean despite the other suggestions in the text Cromwell was ; as my friend said#so singular and unique#he really seems to have one thing in common with many others — blaming those of ‘evil influence’ > Henry himself#who was the only one that ordered wolsey’s actual arrest#despite what his personal misgivings might have been or not been

15 notes


Text

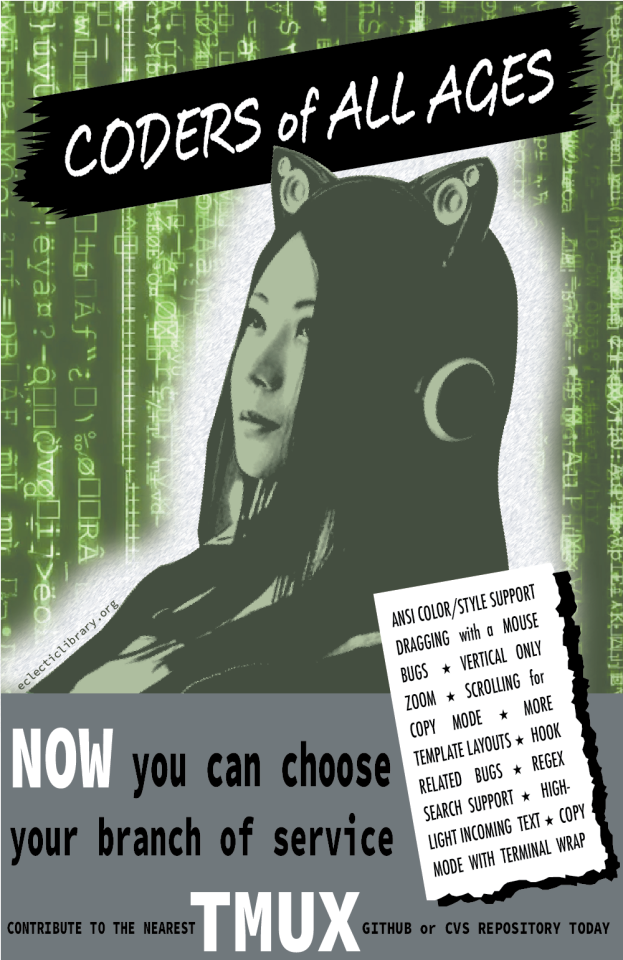

One of the most intimidating and also scariest facts I've been coming to terms with is how true that one xkcd comic is about how many people & libraries are reliant on some package that has been thanklessly maintained by one person in Nebraska since 2003.

In an entirely unrelated discovery, I learned today that tmux (you know, that one terminal multiplexer that is used by god knows how many people) is functionally maintained & developed by one dude, Nicholas Marriott, who was the original creator of the program back in 2007. There is a contribution guide, and he does have a list of recommended issues to work on. So, if you're looking for an open source project to contribute to, and you use tmux, consider :)

13 notes


Text

i had free photoshop because one of my classes was LEARNING photoshop and you'd never guess whose student subscription to adobe products ran out

#truly hoping my game design class also offers unlimited access to adobe products because it's in the same facility#or my art classes offer it...#i HATEEE krita and i know it's open sourced so i can probably code it to be to my liking but i havebnoooo clue how to do that#somehow. mspaint is more fun to use#at least i KNOW i'll have access to unity when i school year starts up again + blender#but. WAHHHH I WNANA DRAW RN!!!!!#babble#my doodles

8 notes


Text

How to dip your 🦶 into Open Source

Contributing to Open Source projects can be a valuable experience for beginner programmers. It can help you learn new skills, build your portfolio, make connections, make a difference, and gain recognition for your work.

But... how do I start? Well here's an option to get you started ☟

Google's Summer of Code (GSoC) is an online mentorship program that matches beginner contributors to open source software development with mentors from open source projects ❋

GSoC kicks off every year in mid-January, with contributor registration usually opening around mid-March. Unfortunately, for this year (2023) the application deadline is tomorrow シ (April 4)

However! There are so many open source projects available this year, so I highly encourage you to try to apply even if it's past April 4. You might get lucky 🍀

The key to getting accepted by an org is to interact with their developer community and keep in contact with the org's mentors. This is why I believe that even if the application period has ended, if you join the org's online communication app, involve yourself in the community and keep sharing your skills and expertise with them, you will still have a good chance of being able to join!

Best of luck! (づ。◕‿‿◕。)づ

(even if it doesn't work out this year there will always be the next and the next and the next... I recommend checking out the archive for how the previous years went to better prepare yourself to jump on this opportunity next year!!)

#GSoC#Google Summer of Code#Google#Open Source#Code#progblr#codeblr#resources#programming#software development#computer science#mentor

83 notes


Text

Switzerland now requires that the source code of software used by public bodies be disclosed. This makes it easier to check the software in use for errors and to hand its maintenance over to other providers.

5 notes


Text

The Quiz App: Final.

Hello everyone,

If you follow me or happen to have seen the post I made on the 21st of August (this post precisely), I casually made a request because I was tired of coding alone (still am 😆), and people showed interest, starting with @xiacodes. Right away, we casually talked about what we wanted to do, and it ended up being "The Quiz App". We had a few calls on the Codeblr Discord to decide what we wanted to do and where we would stop, with @lazar-codes joining us later on to contribute. We digressed a bit and talked about other things lol but you get it.

Active Contributors

@xiacodes did 99% of the styling (she really loves styling) and it turned out great.

@lazar-codes suggested Trello (a task-management system), which we use to organize tasks and check progress.

I did the documentation, helped with the quiz-app logic, and theme mode and I was generally just all over the place offering help here and there, also being a sponge absorbing all the new pieces of information. 🤩🤩

Today, I merged the testing branch with the master branch, bringing an end to an experience that I want to cherish forever.

(I clearly suck at Geography lol)

⚠⚠⚠

I also want to take this opportunity to encourage Web Developers + programmers to contribute to open-source projects: It's one of the best ways to learn from other people, share ideas and put your Git knowledge into practice.

Check out The Quiz App here

The Quiz App (mmnldm.github.io)

Special Shout-Out to @a-fox-studies Your presence is always appreciated!

#open source#codeblr#coding#coding community#tech#programming#programmers#developer#web development#code#html

28 notes


Text

You won't be able to do everything (and that's okay), it's best to prioritize things to get them done well.

(09/03/2023)

Hello everyone.

How are you?

I'll explain why I posted a screenshot of my GitHub, but first I wanted to talk about prioritizing tasks.

I read in some article about time optimization about listing your tasks and then ranking them by priority, so you know what you should focus more energy on or do first. I don't remember which article it was, but I recommend searching for it on Google if you like this type of thing.

Within that, I wanted to share that making choices is a difficult thing, even more so if you are anxious and keep doing calculations of probabilities that could go wrong if you choose the x path UHEUHHEUHUE.

BUT that's life and I even admit I like it sometimes.

During the next 44 days I will be focusing 100% of my time on achieving my goal.

And with that I reduced my code study time.

Which will make me study from 30m to 1 hour a day.

And that's where the screenshot of my GitHub comes in: I feel satisfaction in things well done and well aligned. And seeing these green dots not forming a straight sequence pisses me off (I take into account that it also excludes certain repositories) UHEHUUHEHUE.

So my goal now is to study and put it on my github every day, until I can only do 15 minutes a day. I want this github to have constant contributions from March to December.

So that's about it, sometimes you'll see me just talking about 1 minimal topic I learned that day and I'm fine with that, because I decided to prioritize something that will change my life if it works out. (And I will fight for that)

So I hope that you who are reading know how to prioritize your tasks/choices in the best way and have great studies, stay well!

ABOUT OUTREACHY: As I'm not anxious about getting the scholarship, I'll work on it calmly until April 3rd. I'm still learning about project governance models and you'll see more about it on weekends around here.

#computerscience#computer science#computing#open source#software engineer#software development#software#womaninstem#womanintech#codeblr#100 days of code#learn to code

74 notes


Text

I have some cool open-source projects. Is Tumblr a good place to share them? Would the community here appreciate learning about my ideas, regardless of whether they are great or not?

62 notes


Text

Check Out my Youtube Clone

# Youtube 2.0 Next.js Supabase

# Live Site - https://youtube-nextjs.netlify.app/

# Time to Finish This Project: 2 Months (working time: 2 hours/day)

# Live Working

# Features

- Upload/Edit/Delete Video

- Comment/Reply/ShoutOut

- Search/ Tag Filter, Voice Search

- Emoticon supported 🙂

- Like/Dislike Comment, Replies, Videos

- Subscribe/Unsubscribe

- Subscriptions Feed Recommendation

- Save to Watch Later

- Liked Video Playlist

- Studio Features ✨

- Edit Video Thumbnail

- Customization - Change Channel Image, Banner Image, ChannelName, Location

- Add Social Links

- Dark Mode Feature

## Tech Stacks Used

- Next.js

- Supabase (For Auth And Backend)

- React Emoji Picker

- React Context Api

### I have added most of the features. If you want to contribute, you are welcome :) You can add features I have missed, or fix some bugs.

GITHUB LINK:

#programming#webdev#web developers#codeblr#code#html#reactjs#coder#website#webdesign#frontend#coding#open source

56 notes


Photo

🤠

#it's a bean!#she says excitedly to the 3 people who remember her earliest simblr gameplay days 🥲#I've settled on beatrice bean as it sounds somewhat western#she was cloned from the same genetic source code as the rest of the beans#so naturally she's got me pining for a save game I haven't opened since 2018 😅

93 notes
