#Squidward ai Squidward sings

Text

I’m back, and you’re all welcome.

#spongebob squarepants#Squidward ai Squidward sings#in the end#linkin park#squidward tentacles#Squidward#I’m sorry

2 notes


Text

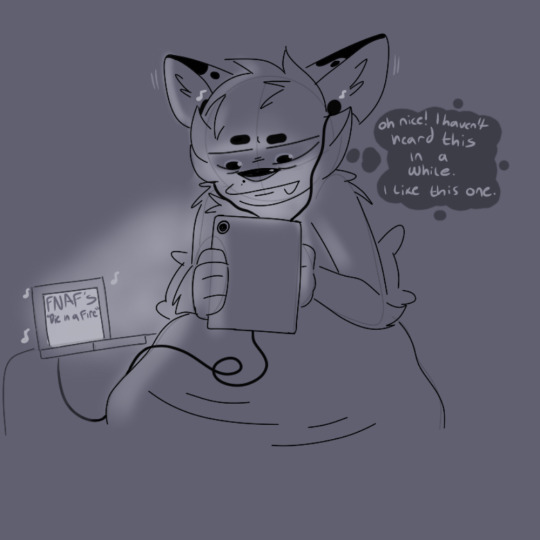

I don't know what I expected from a YouTube mix made based off of my likes but the whiplash caused me to stop whatever I was drawing to try and recreate the visceral reaction I had

#ruckis vandalizes#art#artists on tumblr#original character#fursona#anthro#furry#shit post#shitpost#funny#Meme#Die in a Fire BUT SQUIDWARD SINGS IT (AI Cover)

19 notes


Text

youtube

Okay I know there’s a lot of controversy surrounding the use of AI to create things. But if I hadn’t known this was made using AI, I deadass would’ve believed that Rodger Bumpass decided to make a god tier shitpost that ironically came out really good.

#i honestly believed rodger bumpass had sang this before reading that this was actually made using an AI#it’s high key incredible and terrifying that technology has progressed to a point where a voice can be so accurately replicated#to the point that you can make it say and sing things that the original voice never did#i can think of several ways this could be used in a VERY sinister way#but i’m happy that people seem more interested in using it to make squidward sing fnaf songs than commit crimes…for now#everything aside this lowkey slaps#Youtube

0 notes

Text

Kaden MacKay - Your Stupid Face (Lyrics) - YouTube

I feel like I should make an ai of Squidward singing this

24 notes


Text

i know im the one constantly complaining about people who say sv is the superior software & every vocal should have an sv bank but im a voca scene defender first & foremost & if u start trying to exclude it and other "ai" programs just because u let the internet train u into recoiling from any mention of ai then maybe u shouldnt be starting events u claim are centered around the community as a whole. its ai in the sense of an algorithm so the default tuning isnt quite as "robotic" it's still ur same ethically sampled voice it still requires quite a bit of user effort. it is so fucking far from one click and done auto generation. if ur saying this i know uve never worked with or seen anyone actually working with them because damn the "ai" is so off and so bad sometimes looking at default pitch lines is one of my favorite pastimes because of it. perhaps its making the process just a bit easier but its still so immensely different from going to an ai generation site to make say squidward sing a britney spears song. even tools like voice to midi or vocalochanger arent really touching that. its not the same thing. if u really know what ur doing u can do everything those 2 tools do only manually its really just helping streamline the process. its not even mandatory u can forego them entirely. are you really going to sit there and say songs with hours of effort put into the vocal synth, to get the notes in, tune everything, keep it timed, edit the phonemes so it sounds more like the intended words, all of that means nothing because it has a few tools with a name you dont like. im not sitting here and arguing for ai learning like chatgpt or whatever. there is in fact a difference from mass sourcing information or art from nonconsenting and often completely unaware people and taking a few more hours of samples from a paid consenting voice provider to make an instrument sound a little more like them with a little less user effort. 
these are still SYNTHS these are INSTRUMENTS just because one guitar plugin sounds more like a real guitar than a different one doesnt mean u need to boycott the more realistic one. also, hell, for a lot of if not all of (i havent kept up with all of them) v6 voicebanks there are, in terms of use, things that they tell you not to have them sing about. very specifically saying thats what they do and do not consent to having their voices used for. and it Does vary per synth its not a cover all. some of u need to learn to stop jumping at buzz words and actually learn what it is ur dealing with.

#im normal about vocal synths#if this incites violence in my inbox may i remind u im an adult with a job & we both have better things to be doing#if ur a classic voca fan then have the nerve to say that instead of wording things for the general community#only to later retract it and say u only think certain synths count

10 notes


Text

sorry i fucking love AI. SO MANY SONGS ARE IMPROVED BY MICHAEL JACKSON SINGING THEM. OR BRITNEY. OR EVEN SQUIDWARD WTF.

9 notes


Text

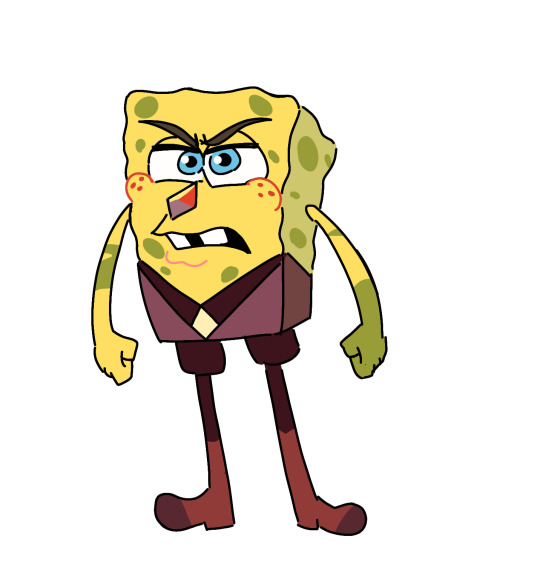

I MADE SOMETHING CURSED TODAY

based on: Stronger Than You but Squidward sings it (AI Cover) - YouTube and Mr. Krabs sings Stronger Than You (Parody) - YouTube

#cursed i guess#cursed art#bob sponge#spongebob#undertale#sans#sans undertale#steven universe#jasper#shitpost#god can't stop me#krusty krab#spongebob squarepants

19 notes


Text

What if I said this was under a video of an ai cover of plankton and squidward singing “something stupid”

11 notes


Text

youtube

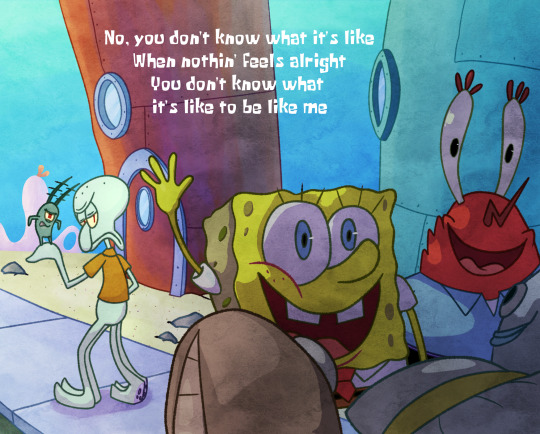

A picture for my SpongeBob AI cover. This time, Squidward and Plankton are singing "Welcome to My Life" by Simple Plan.

I just felt like this is a song that is appropriate to Squidward. And also I wanted Plankton to have a duet as well.

I am pretty sure this is self-explanatory if you know the show. I had so many ideas for how the picture could have looked, based on how the scenario would go (like getting stuck inside a dark building and trying to get out, or being beaten up), but I just went with something lighter instead.

14 notes


Text

You know, after hearing Squidward singing Megadeth, I don't think AI is such a bad thing anymore.

8 notes


Text

Introduction - Vocal Synth Terminology - Part 2

This is a continuation of my previous post. I highly recommend checking that one out first before reading this post.

Append: This term was originally used to describe an additional voice library, originating with Hatsune Miku Append (shown below) and Kagamine Rin and Len Append. Appends provide vocal synths with different tones, such as Miku’s Soft append giving her a more gentle, breathy voice, and her Dark append, which provides her with a more mature, motherly tone.

Now, the word “append” can be used for any vocal synth of a character that differs from their usual voice, such as Gumi’s “Adult” voicebank, though the correct word to address these voicebanks is just… voicebank. Proper appends are commonly seen among UTAU voicebanks, like Kasane Teto’s Weak Append, and Yamine Renri’s EDGE Append!

Fanloid: Also known as "derivatives", these are fanmade vocal synth characters. Fanloids do not have their own voicebanks; instead, their voices are made by editing the parameters of a pre-existing vocal synth. For example, Akita Neru (shown below) is a high-pitched Miku or a low-pitched Rin, Yowane Haku is a low-pitched Miku, Hatsune Mikuo is literally a gender-bend of Miku with the voicebank’s gender factor increased, and Honne Dell is made from Kagamine Len with his gender and brightness factors lowered.

Retrieval-based Voice Conversion: Commonly known as RVC (and sometimes expanded as “Realistic Voice Cloning”), this phenomenon is loathed by pretty much every vocal synth user. If you have been on the internet since 2023ish, you have probably seen things like “Mr. Beast AI sings IDOL by YOASOBI” (shown below), or “Dio Brando sings Colleen Ballinger’s Apology”. These AI modules basically involve taking voice samples of a celebrity, fictional character, or literally ANYTHING, and creating a voice module that can sing any audio track provided to it.

It should not be a big surprise that AI has made its way to the music industry as well, but as cool as they sound, most RVC modules are illegal and essentially harmful to the vocal synth community, and the music industry in general. People make these modules without the knowledge of the “voice providers” and gain a ton of views for doing LITERALLY NOTHING aside from mixing the vocal track with the instrumental, and often make money off of someone else’s voice. They could also make the module say something obscure, offensive, or lewd, and put the blame on the voice provider. I do not think we need to go into details about what is wrong with these consequences, but long story short, they fucking suck.

The icing on the cake is that people would make AI modules of VOCAL SYNTHS as well, which is why most people think Miku is like Squidward AI or whatever. Not only does it harm the voice providers who put their time and effort into creating amazing voicebanks, but it also gives the public a nasty impression of the producer and cover artist community.

youtube

Jinriki UTAUs: Similar to the RVC phenomenon, these are UTAU voicebanks that are made without the knowledge of the voice providers. Again, Jinrikis can be anything, from Mario to Baldi from Baldi’s Basics in Education and Learning, to a YouTuber or a LoveLive! character. Yeah… they can be quite cursed. Like RVC, these voicebanks can be considered illegal. However, it's not much of a problem if you keep these voicebanks to yourself and don’t advertise them, distribute them, or use them commercially.

Jinriki voicebanks should not be confused with porting voicebanks (second video below) from other software, like putting MEIKO into OpenUtau. This is completely okay, as long as you port the voicebank yourself and do not distribute it.

youtube

youtube

AI Voicebanks: These are actual paid voicebanks that use AI technology to enhance the quality of the vocals, to make the tuning sound realistic, and to make the overall tuning process much easier for producers. The AI technology analyzes the pitch deviation and other factors of a file and smooths out and cleans up anything that sounds too flat or janky. In fact, even when a file is untuned or flat, AI voicebanks will try to create a smooth transition and can provide users with a nice start. As useful as they sound, sometimes these voicebanks can be unpredictable and not provide you with the desired output, but with a bit of practice you can create beautiful melodies with them.

The popularity of AI voicebanks started with CeVIO and Synthesizer V, but now we have AI voicebanks in VOCALOID as well! However, the V6 voicebanks are… not exactly the best, but I will get into them in another post. The most popular AI voicebanks as of now are Chis-A (CeVIO + VoiSona), Eleanor Forte (Synthesizer V), KAFU (CeVIO + Synthesizer V), ONE (Synthesizer V), Megpoid Gumi AI (VOCALOID + Synthesizer V Studio), and recently, Kasane Teto AI (Synthesizer V; shown below)!

youtube

Diffusion Singing Voice Conversion Model: Commonly known as Diff-SVC, this is a program used to make AI voicebanks that works similarly to UTAU; you record your own singing and use AI to turn it into a voicebank that sounds human. These voicebanks are slowly “trained” to sound more realistic, and can produce beautiful vocals! These should not be mixed up with RVC modules, as these voicebanks are either made by the voice providers or with their consent, and they have to be tuned like in any other synthesizer software.

youtube

Talkloid: These are commonly seen on the memeish side of the vocal synth community. Basically, instead of making voicebanks sing, you tune them to make them talk. Talkloids are typically shitposts of vocal synths in bizarre scenarios (with a ton of swearing and Generation Z/Alpha jokes… some talkloids are really unhinged and/or cursed), but there are a few hidden gems, such as cute conversations or stories tied to a producer’s lore about their favourite vocal synths.

youtube

youtube

PV: This acronym stands for Promotional Video. PVs accompany vocal synth songs and covers to enhance the listening experience. PVs can range from still artwork with lyrics underneath to animatics and full-on animations.

youtube

Project Diva: A rhythm game series created by SEGA, starring the Cryptonloids alongside Kasane Teto, Yowane Haku, and Akita Neru. The games are known not only for their nightmarish beatmaps (looking at you, The Intense Singing of Hatsune Miku), but for their stunning 3D PVs as well. Producers often record PVs of the vocal synth of their choice in whatever costume they desire, and if the singer is a character who is not in the game, there are tons of mods on GameBanana (example with Otomachi Una shown below; the Teto AI Ghost Rule cover I posted above is another instance of this)!

youtube

Project Sekai! Colorful Stage!: Hell. Absolute hell.

Just kidding, I have a strong feeling that you already know what this is, but in case you do not, Project Sekai! is a mobile rhythm game created by Sega and Piapro starring the Cryptonloids and five music groups of original characters, all with their own stories and songs. Like any other mobile rhythm game, you can pull for characters you want with gacha currency and participate in events that may or may not relate to the main story for prizes.

A lot of new VOCALOID fans come from the Project Sekai! fandom, and although much of the fandom is a mess of entitled, bratty teenagers on Twitter, some people genuinely want a better understanding of how vocal synths work, software aside from VOCALOID, and songs aside from those in the game. This is one of the reasons why I made this blog: to properly explain how vocal synths work. I hope this will give you a better understanding of this precious community.

Also… I may add my EN ID to my about page. Maybe.

MikuMikuDance: Commonly known as “MMD”, this is a community-driven animation software. In MMD, you can create your own motions, cameras, stages, accessories and models to create stunning PVs. You can also use other software like Blender to enhance your animations.

As the name implies, MMD was initially designed for VOCALOID and UTAU fans to create music videos of their favourite characters, but it has since grown far beyond that. You are sure to find models and stages from your favourite video game or TV/anime series, and there are tons of motions and cameras out there.

If you were in the vocal synth fandom in the late 2000s, you may remember the MMD CUP series. These animations are an example of common memes people will make with this software, alongside PVs for Talkloids or a short motion of your favourite character doing whatever dance is currently popular on TikTok.

youtube

Digital Audio Workstation: Known by the shorthand “DAW”, this is software used for making music! DAWs are extremely versatile, allowing you to create a huge variety of projects in them. You can mix an exported .wav file of a tuned VSQx with an off-vocal track, edit audio in general, or use the virtual instruments to create your own arrangements! In the vocal synth community, people do all of these things! The most popular DAWs include FL Studio (shown below), Studio One, Logic Pro, Cubase, Ableton Live, Reaper, Cakewalk, and Waveform!

Unsurprisingly, DAWs are quite expensive, usually costing more than the actual vocal synthesizer as well. Not to mention, plug-ins can add additional expenses. When picking a DAW, it’s honestly better to pick quality over cost, all while seeing what fits your budget and needs.

Oh, I’m going to regret exposing myself here, but in case anyone asks, I have no idea how to mix and probably will not make a tutorial on it. I have never used a proper DAW aside from Audacity and Soundtrap by Spotify, and those mixes turned out HORRENDOUS. Not to mention, I’m practically broke so I can’t invest in a good DAW. I’m trying to figure out how to use FL Studio 20 (the free, limited version of this software), but my smooth brain can not wrap my head around the process, even with two helpful tutorials I found. Plus, I do not have the time to learn.

However, I may share some resources in the future relating to using a DAW from people with a brain cell, so keep your eye out for those. But I promise, the day I get decent experience in mixing is when I will make a guide on it.

That is all of the definitions I can think of for now! I know I did not go over all of the parameters like "growl" and "cross synthesis", and that is because I will explain how all of the parameters work in a planned post. I also took some notes from Minnemi's video on basic VOCALOID terminology and the Vocaloid wiki. If I am able to think of any other important jargon later on, I will update this post.

Thank you for reading this! I hope your knowledge on vocal synths has expanded, and I apologize for the huge post!

#vocaloid tutorial#vocaloid#vocal synth#vocaloidproducer#ai that are not paid voicebanks must die#mmdmikumikudance#mikumikudance#project diva#fanloid#synthesizer v#utau#long post#vocaloid resource

3 notes


Text

the temptation to make playlists curating ai covers based on whether i personally feel they would listen to the original song (spongebob and mr krabs would listen to just about any song based on the tastes they display in canon; characters like squidward or plankton would be more selective) or whether i personally feel like they’re applicable or relatable to the character (for example mr krabs singing the world we knew by frank sinatra)

issue is that would obviously be like... effort? good heavens.

5 notes


Text

I have very conflicted feelings about the AI song covers because like I listen to computers singing all the time not only miku and other vocaloids I listen to absolutely any computer synthesized sounds and with shit like *insert politician/celebrity here* AI cover of popular song I kind of get it because who would want to sit there and collect the samples by hand EXCEPT that's what we did, that's exactly what we used UTAU for, all the spongebob, trump and even hetalia character covers, made in UTAU or even worse by hand in sony vegas or fl studio, are very important to me, because there is a human behind it, someone who put in the time and effort and passion to create something like this and now you open some AI and have that handed to you, I ask you where is the fun, you listen to it and it has no story to tell, no joy of creation to share with others and you may say I'm a hypocrite for enjoying shit like Jiafei (there's inherent beauty in the collective meme of floptropica, as there is in goncharov) while the hitler AI covers of barbie girl (setting the obvious problematic aspect of it aside if anyone is concerned) are just kinda not there for me because I know that there should be someone willing to deconstruct the samples by hand eventually and make it and if they didn't do it, it simply means no one needed it as much and thus it's very disposable art, disposable like a pretty shampoo package not a cherished memory encased in a piece of art and people put those covers on spotify and make money off of it while the guy who made a spongebob X squidward Magnet cover can't share the voicebank because it's "illegal" because they have more sense of humanity towards the artists that created the source material and people with no passion and understanding for the act of creation will continue to profit.
I'm not saying all forms of AI are inherently bad, used for example in automation of voice synthesis it can produce amazing effects, but I fear the era where people will stop creating new music and expect the computers to just give it to them, AI generated lyrics, AI generated melody, AI generated backstory too while to me the most important part is the things people want to show to others through creating something new and of their own

how many covers of barbie girl can you handle before you want something new?

would blue jeans and bloody tears give you cha cha cha?

2 notes


Note

Hey worstie i'm about to head down a rabbit hole of ai song covers in Plankton's voice. Sounds promising

Have you tried squidward and spongebob yet?

I personally need to hear Patrick sing chandelier

3 notes


Text

I think the unfortunate truth about "AI" tools like speech synthesizers, chatbots, image generators, etc. is that there probably isn't a way to keep them as silly things to play with, because they HAVE to scrape their stuff off other people's (real humans!) work.

Like we already know about AI stealing art from artists, but a VA on twitter asked somebody to take down an AI cover using her voice, because it is her voice used without her permission and without pay, and AI bros bullied her off the site.

I love listening to tf2 characters reading the Wikipedia article for cock and ball torture and I love squidward singing frank sinatra, but like. Where do we draw the line after funny memes? When is it creepy, when is it taking jobs away from voice actors? When is it actually putting words in someone's mouth without their knowledge or consent? Idk it's just. Weird times we're living in.

4 notes


Text

Some of these AI covers of songs actually sound so good i heard squidward singing I wanna rock with you by mj and it actually sounded so good wtf

18 notes
