#centaurs

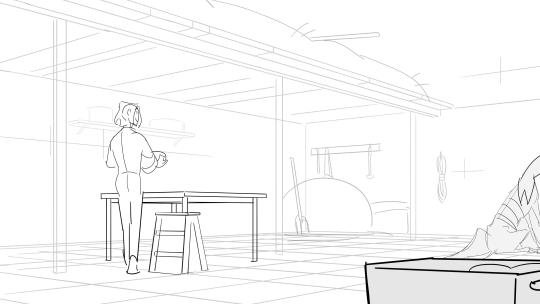

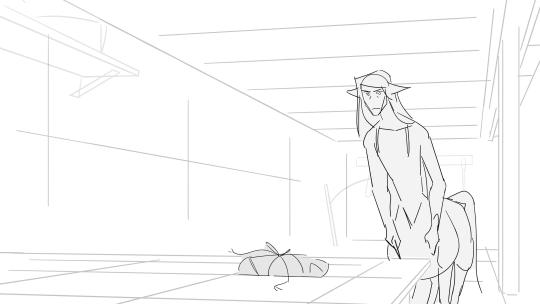

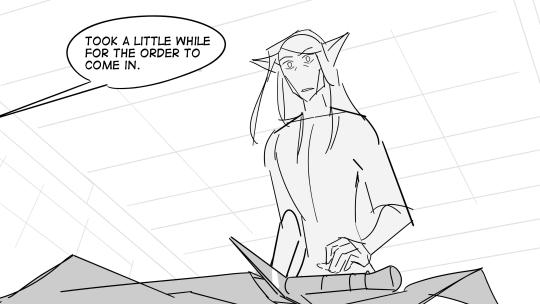

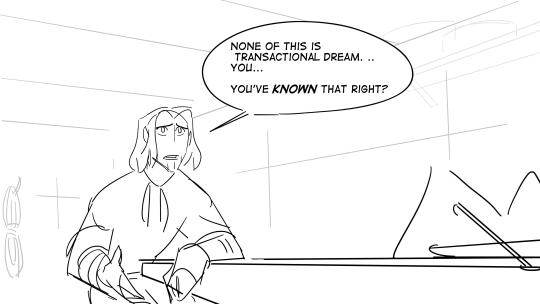

two part comic! next part can be found here

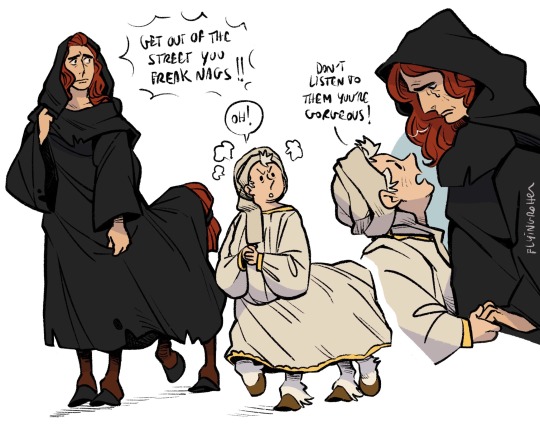

#hob gadling and his wildly unrelenting optimism#Hob you gotta stop looking at dream like you wanna marry him#even Dream's not THAT clueless#(untrue) Dream is definitely that clueless#the sandman#not the first time dream has refused something offered to him#horse girl au#the art tag#dreamling#hob gadling#dream of the endless#centaur!dream#centaurs

292 notes

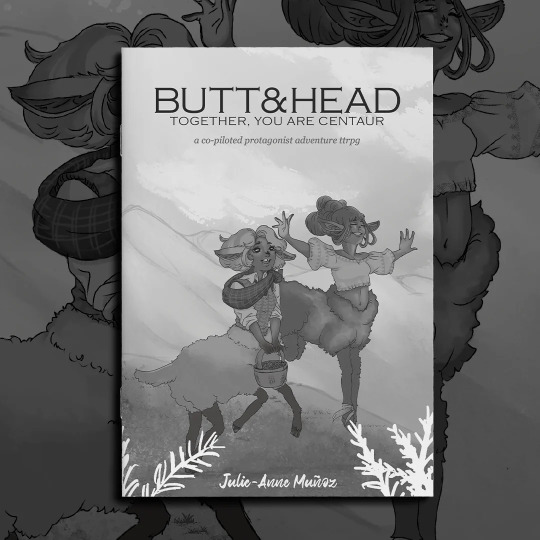

It’s finally here, the updated version of BUTT&HEAD, Jam’s hack of GUN&SLINGER, is available now through Plus One Exp’s ZINE CLUB, and digitally on Itch!

Play as two halves of a centaur (the butt & the head) on an adventure through a magical world using the playing card-based MARKED&MADE system.

In this updated edition, you'll find not only the original hack of GUN&SLINGER, but delightful cover art by Sinta Posadas, and brand new adventures from 3 fantastic creators!

91st Regiment of Hoof by Kevin Nguyen

Someone back home died. Brass redacted out all the nouns though so you can’t tell who; they ticked Operational Security and Morale boxes on the reasons stamp. Learned on your last tour not to worry about that kinda thing so much, not out here on the front.

Worrying’s the luxury of the rear and gear.

Camp Marigold by Charu Patel

Chill frosty air envelops you as you push aside the overgrown jasmine on these ruins. You and your campmates found this place weeks ago, much to the camp leaders' chagrin.

They refuse to investigate the strange items you've found; identical copies of random paraphernalia around camp: Saira's tail ribbon, Aliya's blue metal whistle, Archan's viewing glasses, and a cabin banner, all of which mysteriously disappear by the next morning.

The Apprentice by Liam McCrickard

Atop a mountain covered in snowy woods, SHE awaits you. HER legs are old and gnarled. HER hooves dig into the ground as deep as the roots of the oldest pine. HER back is hunched, with a spine jutting out like the ridges of the tallest peaks. SHE is the keeper of your kind’s oldest magics, and should you prove yourself, a teacher who can impart wisdom no other speaking soul remembers.

SHE says you will be given four trials, then SHE will know.

All the links below~

🐴Digital on Itch:

📖Physical Copies:

📚Zine Club, get cool stuff every month!:

16 notes

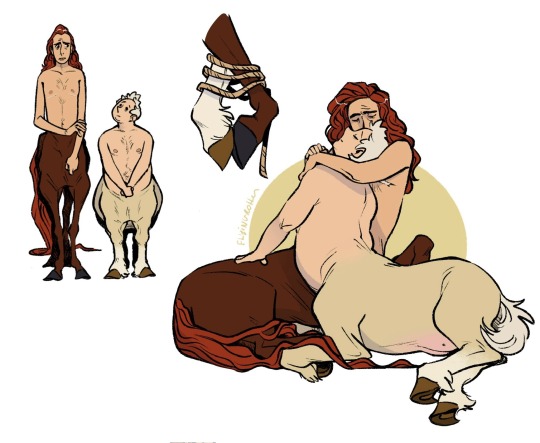

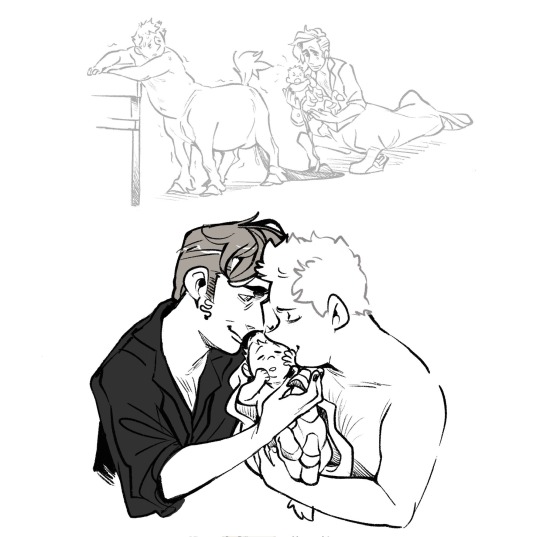

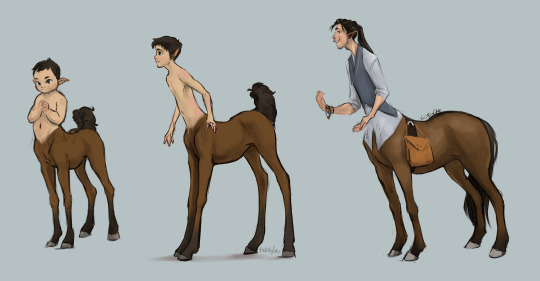

The last centaurs, Aziraphale and Crowley, and their little foal Orion 💛

#good omens#aziraphale#crowley#ineffable husbands#aziracrow#orion#centaurs#centaur#centaur au#centaurs aren’t gendered when they’re foals#they’ll decide who they want to be while growing up#so Orion can go by any pronouns for now#also the dog name is Pantoufle#procreate#digital art#fanart#my art#I have no idea how horse anatomy works I’m super sorry#I was too lazy to check for refs 💀#pregnancy#giving birth

6K notes

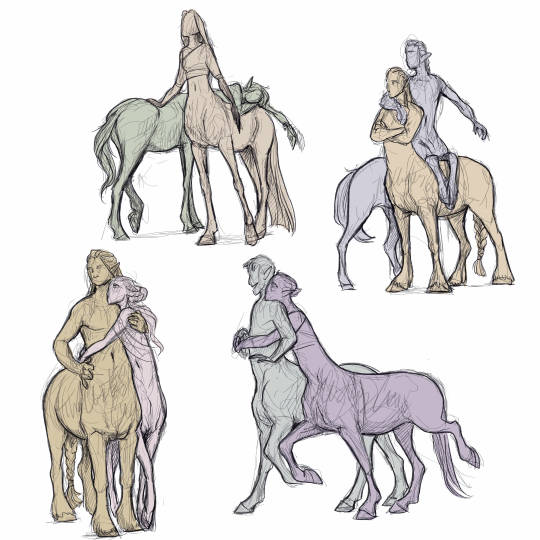

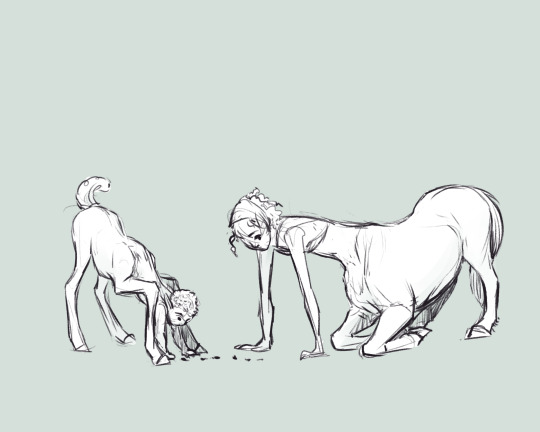

centaurs are enough of a pain to draw on their own, let's add interactions to the mix!

I'm generally trying to get better at figures interacting in a way that feels solid, cause who doesn't want more snuggles in the world 💜

#centaurs#snuggles#doodles#always back at it with the faceless figures#it just helps me focus on the movement!

6K notes

Centaures by Eugene Fromentin (1868)

#eugene fromentin#art#paintings#fine art#19th century#19th century art#academicism#academism#academic art#painting#french artist#french art#mythology#greek mythology#mythical creatures#centaur#centaurs#classic art

2K notes

Centaur Watching Fish by Arnold Böcklin (1878).

I love love love Böcklin’s mythical pieces, they have this sense of realism, and often even sensitivity.

4K notes

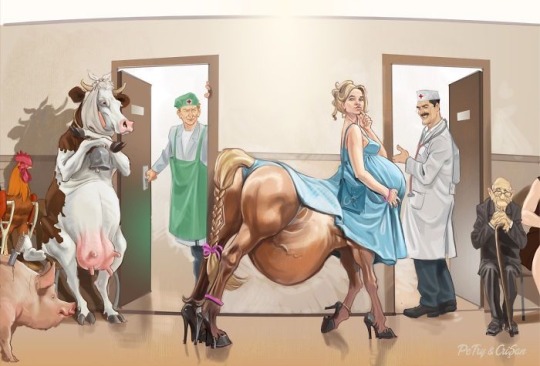

Behold!

A Cursed Image!

(I had to see this with mine own eyes and I feel compelled to inflict it upon all of you)

Credit: Petry & Crisian

#troglodyte thoughts#free range sustainable shitpost#pregnant centaur#vet or doc?#centaurs#cursed image

3K notes

“Humans in the loop” must detect the hardest-to-spot errors, at superhuman speed

I'm touring my new, nationally bestselling novel The Bezzle! Catch me SATURDAY (Apr 27) in MARIN COUNTY, then Winnipeg (May 2), Calgary (May 3), Vancouver (May 4), and beyond!

If AI has a future (a big if), it will have to be economically viable. An industry can't spend 1,700% more on Nvidia chips than it earns indefinitely – not even with Nvidia being a principal investor in its largest customers:

https://news.ycombinator.com/item?id=39883571

A company that pays 0.36-1 cents/query for electricity and (scarce, fresh) water can't indefinitely give those queries away by the millions to people who are expected to revise those queries dozens of times before eliciting the perfect botshit rendition of "instructions for removing a grilled cheese sandwich from a VCR in the style of the King James Bible":

https://www.semianalysis.com/p/the-inference-cost-of-search-disruption

Eventually, the industry will have to uncover some mix of applications that will cover its operating costs, if only to keep the lights on in the face of investor disillusionment (this isn't optional – investor disillusionment is an inevitable part of every bubble).

Now, there are lots of low-stakes applications for AI that can run just fine on the current AI technology, despite its many – and seemingly inescapable – errors ("hallucinations"). People who use AI to generate illustrations of their D&D characters engaged in epic adventures from their previous gaming session don't care about the odd extra finger. If the chatbot powering a tourist's automatic text-to-translation-to-speech phone tool gets a few words wrong, it's still much better than the alternative of speaking slowly and loudly in your own language while making emphatic hand-gestures.

There are lots of these applications, and many of the people who benefit from them would doubtless pay something for them. The problem – from an AI company's perspective – is that these aren't just low-stakes, they're also low-value. Their users would pay something for them, but not very much.

For AI to keep its servers on through the coming trough of disillusionment, it will have to locate high-value applications, too. Economically speaking, the function of low-value applications is to soak up excess capacity and produce value at the margins after the high-value applications pay the bills. Low-value applications are a side-dish, like the coach seats on an airplane whose total operating expenses are paid by the business class passengers up front. Without the principal income from high-value applications, the servers shut down, and the low-value applications disappear:

https://locusmag.com/2023/12/commentary-cory-doctorow-what-kind-of-bubble-is-ai/

Now, there are lots of high-value applications the AI industry has identified for its products. Broadly speaking, these high-value applications share the same problem: they are all high-stakes, which means they are very sensitive to errors. Mistakes made by apps that produce code, drive cars, or identify cancerous masses on chest X-rays are extremely consequential.

Some businesses may be insensitive to those consequences. Air Canada replaced its human customer service staff with chatbots that just lied to passengers, stealing hundreds of dollars from them in the process. But the process for getting your money back after you are defrauded by Air Canada's chatbot is so onerous that only one passenger has bothered to go through it, spending ten weeks exhausting all of Air Canada's internal review mechanisms before fighting his case for weeks more at the regulator:

https://bc.ctvnews.ca/air-canada-s-chatbot-gave-a-b-c-man-the-wrong-information-now-the-airline-has-to-pay-for-the-mistake-1.6769454

There's never just one ant. If this guy was defrauded by an AC chatbot, so were hundreds or thousands of other fliers. Air Canada doesn't have to pay them back. Air Canada is tacitly asserting that, as the country's flagship carrier and near-monopolist, it is too big to fail and too big to jail, which means it's too big to care.

Air Canada shows that for some business customers, AI doesn't need to be able to do a worker's job in order to be a smart purchase: a chatbot can replace a worker, fail to do that worker's job, and still save the company money on balance.

I can't predict whether the world's sociopathic monopolists are numerous and powerful enough to keep the lights on for AI companies through leases for automation systems that let them commit consequence-free fraud by replacing workers with chatbots that serve as moral crumple-zones for furious customers:

https://www.sciencedirect.com/science/article/abs/pii/S0747563219304029

But even stipulating that this is sufficient, it's intrinsically unstable. Anything that can't go on forever eventually stops, and the mass replacement of humans with high-speed fraud software seems likely to stoke the already blazing furnace of modern antitrust:

https://www.eff.org/de/deeplinks/2021/08/party-its-1979-og-antitrust-back-baby

Of course, the AI companies have their own answer to this conundrum. A high-stakes/high-value customer can still fire workers and replace them with AI – they just need to hire fewer, cheaper workers to supervise the AI and monitor it for "hallucinations." This is called the "human in the loop" solution.

The human in the loop story has some glaring holes. From a worker's perspective, serving as the human in the loop in a scheme that cuts wage bills through AI is a nightmare – the worst possible kind of automation.

Let's pause for a little detour through automation theory here. Automation can augment a worker. We can call this a "centaur" – the worker offloads a repetitive task, or one that requires a high degree of vigilance, or (worst of all) both. They're a human head on a robot body (hence "centaur"). Think of the sensor/vision system in your car that beeps if you activate your turn-signal while a car is in your blind spot. You're in charge, but you're getting a second opinion from the robot.

Likewise, consider an AI tool that double-checks a radiologist's diagnosis of your chest X-ray and suggests a second look when its assessment doesn't match the radiologist's. Again, the human is in charge, but the robot is serving as a backstop and helpmeet, using its inexhaustible robotic vigilance to augment human skill.

That's centaurs. They're the good automation. Then there's the bad automation: the reverse-centaur, when the human is used to augment the robot.

Amazon warehouse pickers stand in one place while robotic shelving units trundle up to them at speed; then, the haptic bracelets shackled around their wrists buzz at them, directing them to pick up specific items and move them to a basket, while a third automation system penalizes them for taking toilet breaks or even just walking around and shaking out their limbs to avoid a repetitive strain injury. This is a robotic head using a human body – and destroying it in the process.

An AI-assisted radiologist processes fewer chest X-rays every day, costing their employer more, on top of the cost of the AI. That's not what AI companies are selling. They're offering hospitals the power to create reverse centaurs: radiologist-assisted AIs. That's what "human in the loop" means.

This is a problem for workers, but it's also a problem for their bosses (assuming those bosses actually care about correcting AI hallucinations, rather than providing a figleaf that lets them commit fraud or kill people and shift the blame to an unpunishable AI).

Humans are good at a lot of things, but they're not good at eternal, perfect vigilance. Writing code is hard, but performing code-review (where you check someone else's code for errors) is much harder – and it gets even harder if the code you're reviewing is usually fine, because this requires that you maintain your vigilance for something that only occurs at rare and unpredictable intervals:

https://twitter.com/qntm/status/1773779967521780169

But for a coding shop to make the cost of an AI pencil out, the human in the loop needs to be able to process a lot of AI-generated code. Replacing a human with an AI doesn't produce any savings if you need to hire two more humans to take turns doing close reads of the AI's code.

This is the fatal flaw in robo-taxi schemes. The "human in the loop" who is supposed to keep the murderbot from smashing into other cars, steering into oncoming traffic, or running down pedestrians isn't a driver, they're a driving instructor. This is a much harder job than being a driver, even when the student driver you're monitoring is a human, making human mistakes at human speed. It's even harder when the student driver is a robot, making errors at computer speed:

https://pluralistic.net/2024/04/01/human-in-the-loop/#monkey-in-the-middle

This is why the doomed robo-taxi company Cruise had to deploy 1.5 skilled, high-paid human monitors to oversee each of its murderbots, while traditional taxis operate at a fraction of the cost with a single, precaritized, low-paid human driver:

https://pluralistic.net/2024/01/11/robots-stole-my-jerb/#computer-says-no

The vigilance problem is pretty fatal for the human-in-the-loop gambit, but there's another problem that is, if anything, even more fatal: the kinds of errors that AIs make.

Foundationally, AI is applied statistics. An AI company trains its AI by feeding it a lot of data about the real world. The program processes this data, looking for statistical correlations in that data, and makes a model of the world based on those correlations. A chatbot is a next-word-guessing program, and an AI "art" generator is a next-pixel-guessing program. They're drawing on billions of documents to find the most statistically likely way of finishing a sentence or a line of pixels in a bitmap:

https://dl.acm.org/doi/10.1145/3442188.3445922
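To make the "next-word-guessing" idea concrete, here is a minimal sketch of a toy bigram guesser in Python. It is an illustration only: real chatbots use neural networks trained on vastly more data, and the names `train_bigrams`, `guess_next`, and `TOY_CORPUS` are made up for this example.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for each word, which words follow it in the training text."""
    followers = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            followers[current_word][next_word] += 1
    return followers

def guess_next(followers, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical toy training data, standing in for "billions of documents".
TOY_CORPUS = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]
model = train_bigrams(TOY_CORPUS)
```

Asked what follows "the," this toy model answers "cat" simply because that pairing was the most frequent one in its training text. It has no way of knowing whether a cat is what's actually there.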

This means that AI doesn't just make errors – it makes subtle errors, the kinds of errors that are the hardest for a human in the loop to spot, because they are the most statistically probable ways of being wrong. Sure, we notice the gross errors in AI output, like confidently claiming that a living human is dead:

https://www.tomsguide.com/opinion/according-to-chatgpt-im-dead

But the most common errors that AIs make are the ones we don't notice, because they're perfectly camouflaged as the truth. Think of the recurring AI programming error that inserts a call to a nonexistent library called "huggingface-cli," which is what the library would be called if developers reliably followed naming conventions. But due to a human inconsistency, the real library has a slightly different name. The fact that AIs repeatedly inserted references to the nonexistent library opened up a vulnerability – a security researcher created an (inert) malicious library with that name and tricked numerous companies into compiling it into their code because their human reviewers missed the chatbot's (statistically indistinguishable from the truth) lie:

https://www.theregister.com/2024/03/28/ai_bots_hallucinate_software_packages/
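One way to take the plausibility judgment out of a tired reviewer's hands is a mechanical check. The sketch below, in Python, compares each dependency name against a list the team has already vetted. It is an assumption-laden illustration, not how any of the affected companies work: `APPROVED_PACKAGES`, `normalize`, and `unapproved` are hypothetical names, and the allowlist contents are placeholders.

```python
import re

# Hypothetical allowlist: packages this (imaginary) team has already vetted.
APPROVED_PACKAGES = {"requests", "numpy"}

def normalize(name):
    """Compare names case-insensitively, treating runs of '-', '_', '.' alike."""
    return re.sub(r"[-_.]+", "-", name).lower()

def unapproved(requirements, approved=APPROVED_PACKAGES):
    """Return the requirement lines whose package name is not on the allowlist."""
    approved_normalized = {normalize(p) for p in approved}
    flagged = []
    for line in requirements:
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # The package name is the leading run of name characters,
        # before any version specifier or extras marker.
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match is None or normalize(match.group(0)) not in approved_normalized:
            flagged.append(line)
    return flagged

suggested = ["requests==2.31.0", "huggingface-cli", "NumPy>=1.26"]
flagged = unapproved(suggested)
```

Here `flagged` contains only `"huggingface-cli"`. A check like this doesn't care how statistically normal a name looks, only whether someone has actually verified it.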

For a driving instructor or a code reviewer overseeing a human subject, the majority of errors are comparatively easy to spot, because they're the kinds of errors that lead to inconsistent library naming – places where a human behaved erratically or irregularly. But when reality is irregular or erratic, the AI will make errors by presuming that things are statistically normal.

These are the hardest kinds of errors to spot. They couldn't be harder for a human to detect if they were specifically designed to go undetected. The human in the loop isn't just being asked to spot mistakes – they're being actively deceived. The AI isn't merely wrong, it's constructing a subtle "what's wrong with this picture"-style puzzle. Not just one such puzzle, either: millions of them, at speed, which must be solved by the human in the loop, who must remain perfectly vigilant for things that are, by definition, almost totally unnoticeable.

This is a special new torment for reverse centaurs – and a significant problem for AI companies hoping to accumulate and keep enough high-value, high-stakes customers on their books to weather the coming trough of disillusionment.

This is pretty grim, but it gets grimmer. AI companies have argued that they have a third line of business, a way to make money for their customers beyond automation's gifts to their payrolls: they claim that they can perform difficult scientific tasks at superhuman speed, producing billion-dollar insights (new materials, new drugs, new proteins) at unimaginable speed.

However, these claims – credulously amplified by the non-technical press – keep on shattering when they are tested by experts who understand the esoteric domains in which AI is said to have an unbeatable advantage. For example, Google claimed that its DeepMind AI had discovered "millions of new materials," "equivalent to nearly 800 years’ worth of knowledge," constituting "an order-of-magnitude expansion in stable materials known to humanity":

https://deepmind.google/discover/blog/millions-of-new-materials-discovered-with-deep-learning/

It was a hoax. When independent materials scientists reviewed representative samples of these "new materials," they concluded that "no new materials have been discovered" and that not one of these materials was "credible, useful and novel":

https://www.404media.co/google-says-it-discovered-millions-of-new-materials-with-ai-human-researchers/

As Brian Merchant writes, AI claims are eerily similar to "smoke and mirrors" – the dazzling reality-distortion field thrown up by 17th century magic lantern technology, which millions of people ascribed wild capabilities to, thanks to the outlandish claims of the technology's promoters:

https://www.bloodinthemachine.com/p/ai-really-is-smoke-and-mirrors

The fact that we have a four-hundred-year-old name for this phenomenon, and yet we're still falling prey to it, is frankly a little depressing. And, unlucky for us, it turns out that AI therapybots can't help us with this – rather, they're apt to literally convince us to kill ourselves:

https://www.vice.com/en/article/pkadgm/man-dies-by-suicide-after-talking-with-ai-chatbot-widow-says

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/04/23/maximal-plausibility/#reverse-centaurs

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#ai#automation#humans in the loop#centaurs#reverse centaurs#labor#ai safety#sanity checks#spot the mistake#code review#driving instructor

712 notes

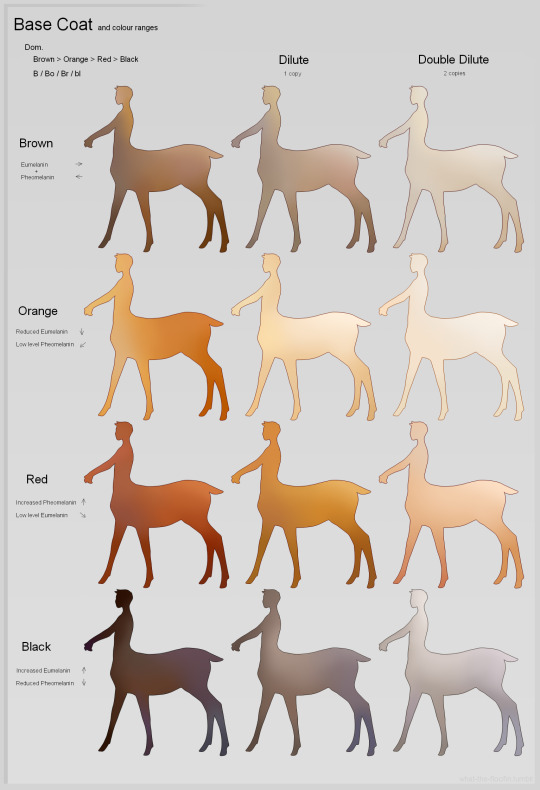

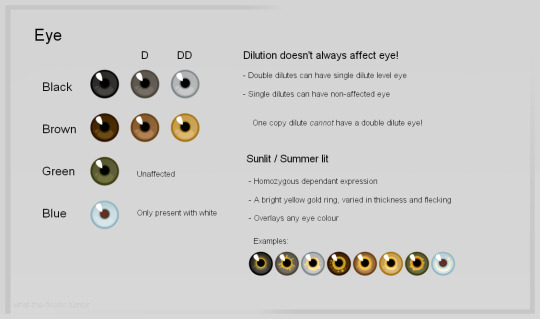

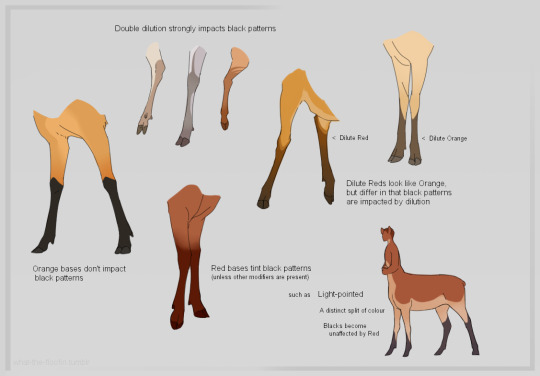

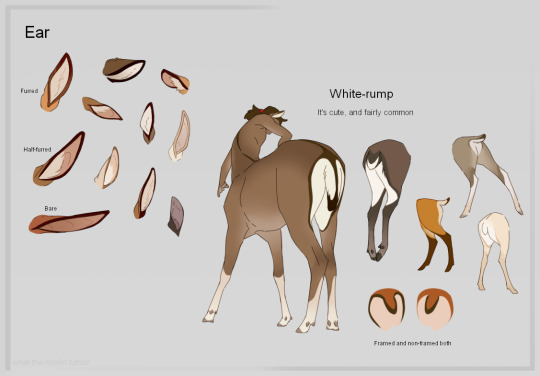

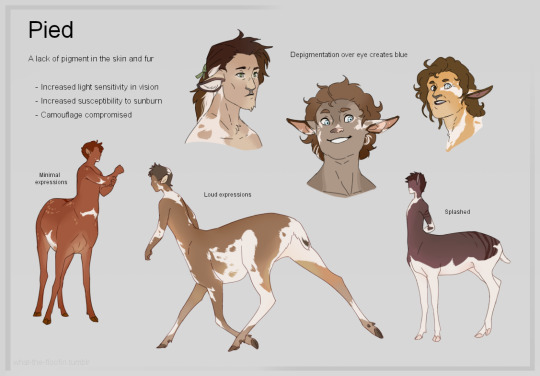

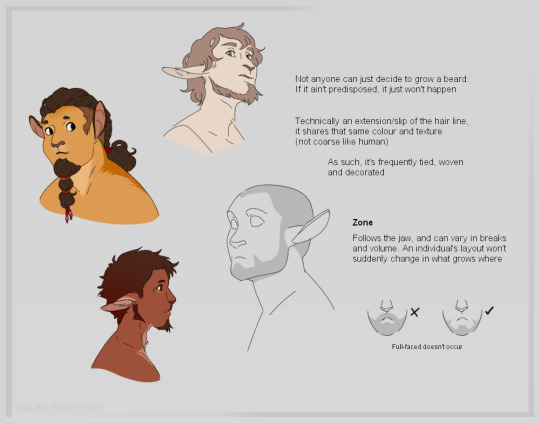

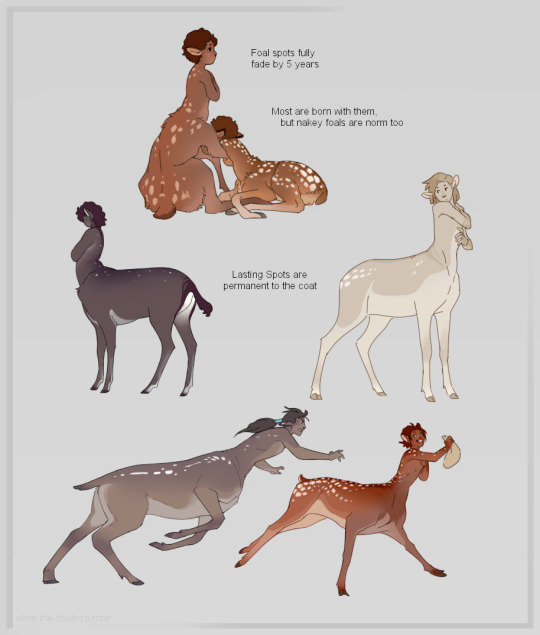

Honestly I think way too much about my cervitaurs at all times so have this compilation of Notes about them

#dnd#dnd art#centaurs#cervitaur#floof in dnd#its ART#accept all these NAKEY taurs for this#they're furred all over anyway lmao#it's not a full comprehensive shebang - with like ALL markings and whatnot - that'd be too much#but some obvious points of interest hell yeah#I make my own rules to follow and it is fun#i dont know how tumblr sizes things anymore but open image in new tab is always our friend - be free

2K notes

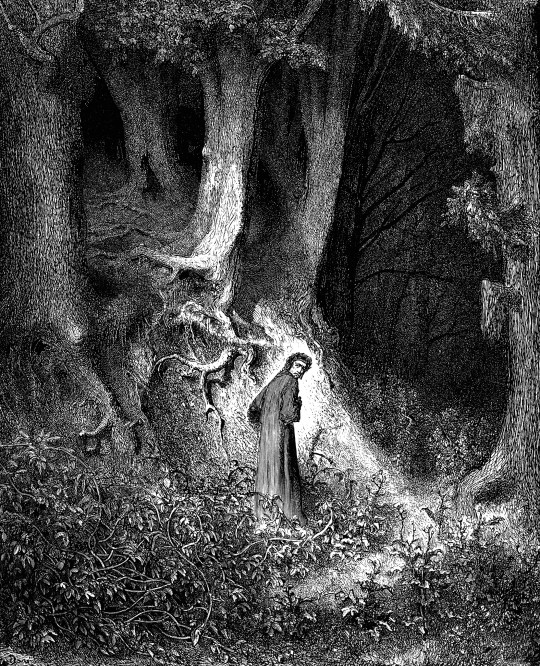

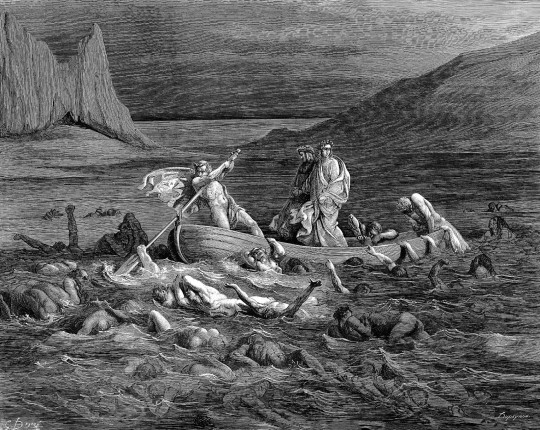

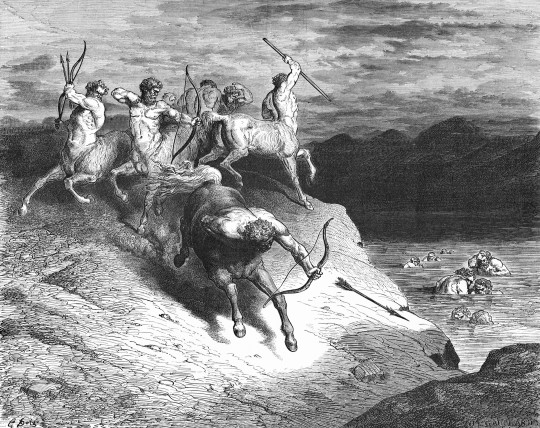

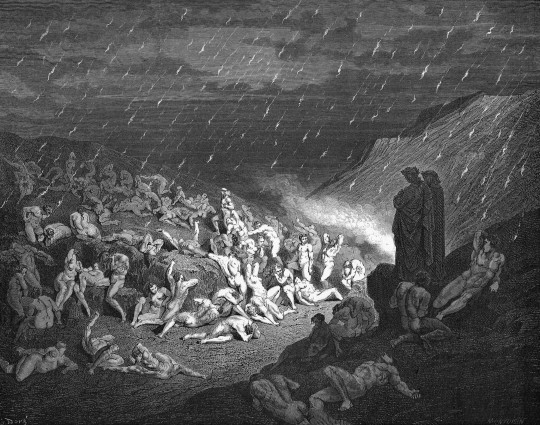

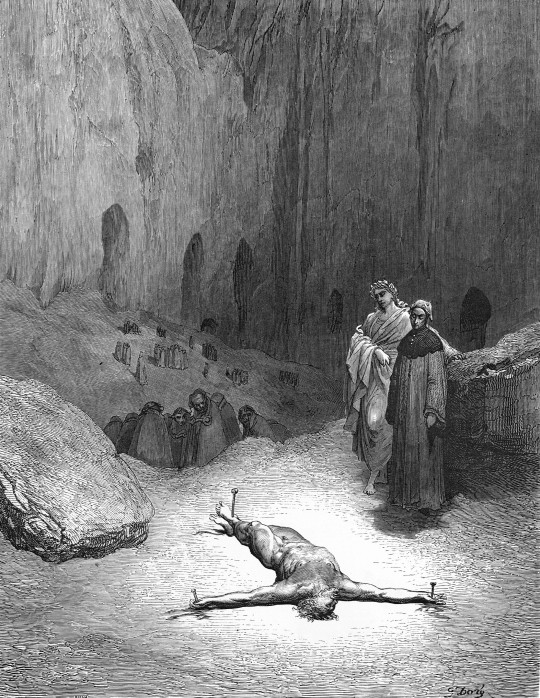

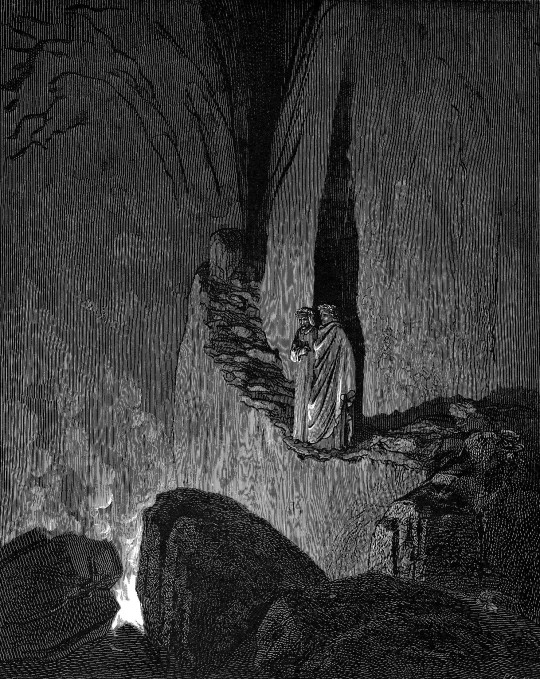

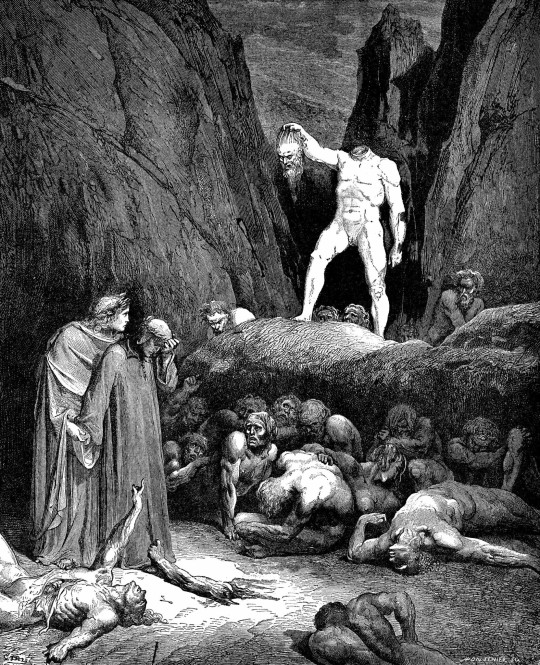

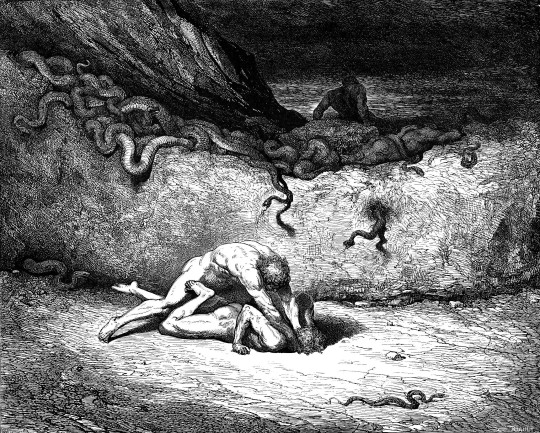

Dante's Inferno - art by Gustave Doré (1861)

#gustave doré#gustave dore#dante's inferno#divine comedy#19th century illustrations#fantasy art#horror art#wood engraving#dante alighieri#epic poem#nine circles of hell#cerberus#river styx#centaurs#harpies#devils#giants#lucifer#1860s#1861

847 notes

#just cause the rules don't say you cant#doesnt mean you can or should#dnd art#centaur#theros campaign setting#dnd comic#not my art#dungeons and dragons#dnd#d&d#centaurs

1K notes

Uno Reverse

Bonus:

@arialerendeair keeps giving me ideas to springboard off of XD

final image was referenced from Calvin and Hobbes :3

#he drank the water#listen I love the trope of Dream offering Hob a boon or service#out of some warped logic that Hob expects something later#and its so sweet when Hob says Dream “owes him nothing”#but the funnier and more unhinged option is for Hob to immediately order Dream to practice self care.#Dream: “wait no that's illegal!”#I also love the concept of Dream suspiciously theory crafting why Hob is being kind#only for his hypotheses to get struck down with increasing hilarity#this is good for Dream though#he's testing his new boundaries#horse girl au#the sandman#the art tag#dreamling#hob gadling#dream of the endless#centaur!dream#centaurs

344 notes

a lot of fantasy universes like to paint dwarves as being the most alcohol tolerant, but i feel like if any fantasy race would be the best at a drinking contest it'd be a centaur.

horses have a specific protein called liver alcohol dehydrogenase, which allows them to break down alcohol easier than humans. additionally, centaurs have the bonus human liver in them and the advantage of being as big as most of a horse and most of a human.

instead of dwarven ale you have to get ready for that centaur IPA. it'll maybe kill you.

641 notes

Battle of the Centaurs, Michelangelo Buonarroti, about 1492

#art history#art#italian art#aesthethic#15th century#centaurs#bassorilievo#sculpture#marble#michelangelo buonarroti#lapiths#greek mythology#ancient greece#rinascimento#casa buonarroti#homoerotic art#battle

186 notes

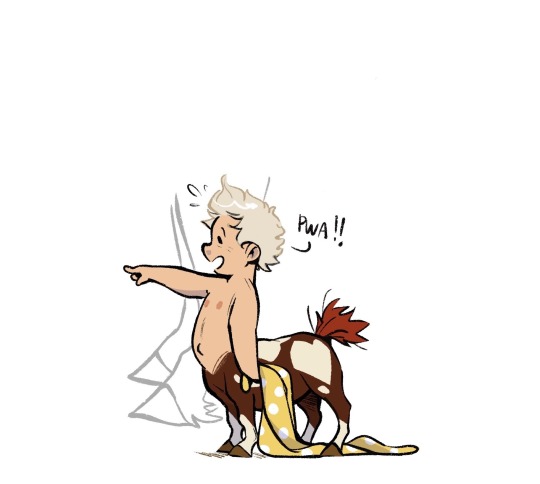

Do you have any references for centaur foals? It's really hard to find any references that aren't adults

Yea! Enjoy a dump of all my centaur babies!

They're mostly a bit older drawings but I think they still hold up haha and I don't think many people draw them cause they can be a little funky- what with the chunky little bodies on big ol spidery legs 😅 But I still think they're cute 💜

And a lot of my drawings of the bitties are in slings, as that's how I built in infant care with an L-shaped infant 😂

and of course, some goofy little baby Sunny doodles <3

#asked and answered#centaurs#baby centaurs#foalbies#just a big ol dump of all my littles#like a proud auntie with the rollout wallet

10K notes

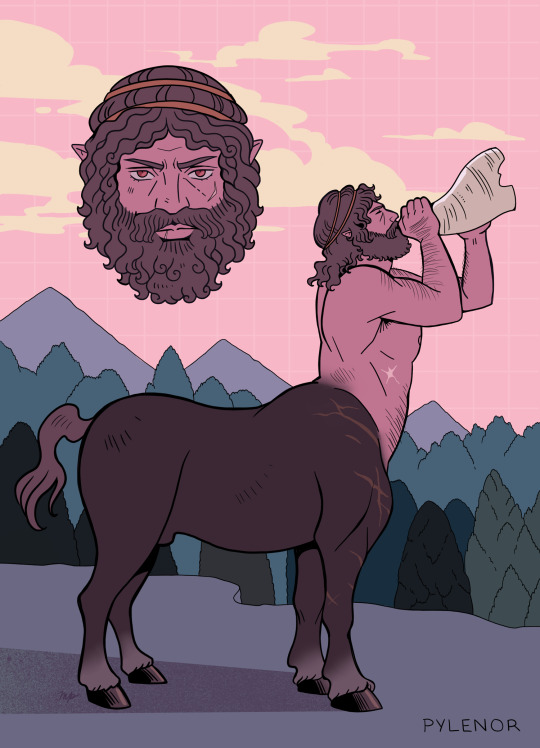

*Pylenor (first pic) was a centaur wounded by Heracles' arrows, which were tipped with Hydra poison. He managed to survive by washing his wound in the river Anigrus. From then on, the river was tainted, always having a very peculiar, nasty odor.

I got a brain bug last week to draw a certain centaur, and then my inner horse girl came back from the grave and I ended up drawing a bunch of them.

Taking inspiration from tribal societies and wild horse herd dynamics for those man/beast vibes.

#greek mythology#art#character design#artists on tumblr#original character#centaurs#art dump#medusa's peach

443 notes
