#algorithmic scale

Text

Revolutionize Your Business Landscape - Algorithmic Scale Unveils Digital Business Design in Sweden!

Embark on a transformative journey with Algorithmic Scale as we introduce unparalleled Digital Business Design in Sweden. Tailored to amplify your brand's digital footprint, our innovative solutions seamlessly blend technology and strategy. From user-centric interfaces to data-driven insights, we redefine success in the digital realm. Trust Algorithmic Scale to be your partner in navigating Sweden's dynamic business landscape. Elevate your enterprise, embrace the future – where precision meets design, and every click sparks growth. Welcome to a realm where Digital Business Design in Sweden sets the stage for your unprecedented success. To know more, connect with us at [email protected] or +46 72 208 05 17.

#algorithmic scale#digital operating model#digital business#digital business development#digital business design company in sweden

0 notes

Text

okay, I’m cackling at this eBay listing. Those images are masterpieces.

this one!!!

This seller has my utmost respect. We are kindred spirits.

#to be perfectly honest#I was not looking up 1/6 scale straight jackets#the algorithm just worked out like that…

10 notes

Text

i am. perplexed as to why one of my libraries is suddenly adding vast numbers of spanish translations of english books to their ebook collections, when they do not have the english versions of those books, and by vast numbers i mean both a wide range of titles and also sometimes absurd numbers of copies like 100 copies when they usually only have 1-3 ebook copies of most other books

is there some kind of bug in their ordering system? what is happening

#none of these books ever seem to be on loan#which does not surprise me bc the library is not in an area that has a lot of spanish speakers#like if you asked me to rank what not-english books they should buy#spanish would be nowhere near top of the list#i feel like they maybe imported a purchasing algorithm and didn't tweak it to their demographics#hence both the choices and the scale#(but like. a spanish translation of 'bad gays' and no english copy? why?)

8 notes

Note

This is a silly question but HOW are all the pictures of the dragons you post so high quality. I save my dragon and post it and it has like 3 pixels to its name

not a silly question at all! no one really specifically mentions it much anymore, it's kinda one of those old internet osmosis things from a while back LOL

i upscale my dragon images with waifu2x ! just make sure to click the button in the upper corner for the full image png once it's done, otherwise it'll have weird color distortion in the background

#murmur#asks#tumblr's forced image sizes crunches things up constantly bcoz it uses a traditional method for scaling#whereas waifu2x has specific algorithms that it uses to preserve as much of the original lines as it can#since it was made as a way to cleanly upscale anime screenshots & things like that#ALSO i would personally recommend no noise reduction for fr pics; their images are all pretty clean already so they don't rly need it!

2 notes

Text

Unfriendly reminder that ancap is an oxymoron

#god you say maybe the temperature scale we use isnt stupid and evil once and suddenly all of tumblrs algorithm thinks ur fash#dear fellow reds: please interact#trying to make my blog as inhospitable to these ppl as possible so they dont think i agree with them

1 note

Text

my deep dark secret is i left one of the most popular negative reviews for a very popular indie game on steam and i feel bad because gamers support gamers but also i didn't think it was worth the hype i had to speak my truth

#i think the steam review system is really shitty and i wouldn't have left it if it were a less popular game#bc i don't like how there's no sliding scale and how it impacts algorithm sales etc#but that game already has thousands of good reviews so it doesn't change that stuff#so like i wanna be like gamers support gamers! but im sorry its not good#warlock wartalks

3 notes

Text

i just scrolled through my blog and i realised i have only two modes: weird pseudo-philosophical rambling. and absolutely unhinged yelling. AND I TELL YOUUUU IT'S SO FUNNYYYYYYY because i spent so long trying to curate my voice and sound like a normal, fun, easy to approach person back when i first made this blog!

then again it's been 3.5 years so i guess my voice changed naturally 🤨 i'm not smart enough for this 😮💨

#nia.musings#sorry even using this tag makes me snort. wdym musing girlie. are u a philosopher. big brain? 🤩🤩 2024 me is bullying 2020 me#also not me saying “im not smart enough for this” for anything that requires me to use more than 2 braincells#couldn't be bothered trying to make sense for more a second#kickstarting my own brainless era and i wear my crown so well#also random but i'm soooooo ready to infest this blog with jjk. i probably won't do that because that piece of art traumatises me#by that i mean i like it and keep up with it far too much for someone who claims theyre traumatised#my emotional scale is SHOT because of it. more pain than preferable. but i do quite enjoy it#and considering i go through sooooo much jjk content on tumblr it's only fair that i showcase it all on my blog :3#i have about 700 draft reblogs on a sideblog i made to save posts when i wasnt active here. i made it this year but theres SO much now#also lowkey regret not being active (though i had no energy) here in 2021 2022 2023 because i had so many thoughts about bnha#and now it's nearly over#like what do you meannnn i didnt get to yap about my spinner era from 2021.#what do you mean my love to hate and back to love arc for dabi didnt get documented in the annals of tumblr dot com#AND WHAT DO YOU MEAN MY MELTDOWN LAST YEAR RE: HAWKS' QUIRK DIDNT GET PUBLICISED#this is all a joke because i for real (FR FR) had ZERO chance of being here because life was putting me through its TRIALS#still is. but that's the way life is. we go on. <3.#speaking of trials. no one here was privy (wait i think i mentioned it in an rb) to my jason grace breakdown when i found out What Happened#sucks !!!!!!!!!!!!!!!#i wasnt made for emotional pain.#also it's funny to me how none of my followers have unfollowed me so far.#are u guys also all inactive or do u just not see me anymore because tumblr's dash algorithm gives u random posts now#thats the only thing i dislike about tumblr now. 
i LOVE how it lets you edit tags now. also will always miss the old layout

0 notes

Text

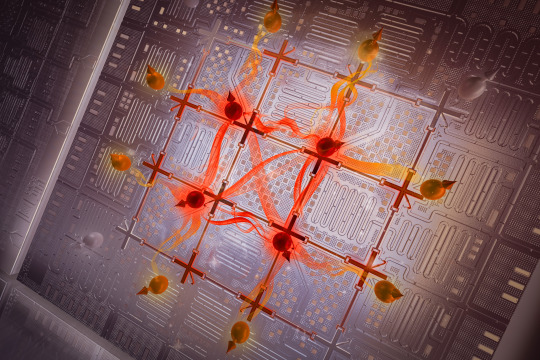

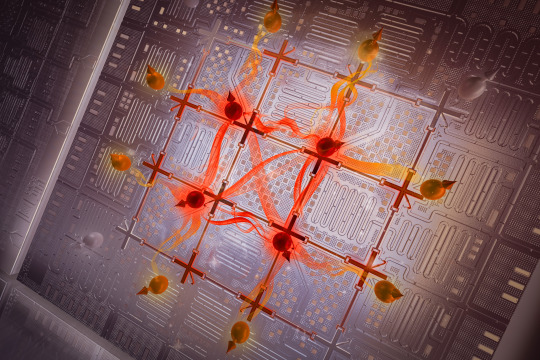

MIT scientists tune the entanglement structure in an array of qubits

New Post has been published on https://thedigitalinsider.com/mit-scientists-tune-the-entanglement-structure-in-an-array-of-qubits/

Entanglement is a form of correlation between quantum objects, such as particles at the atomic scale. This uniquely quantum phenomenon cannot be explained by the laws of classical physics, yet it is one of the properties that explains the macroscopic behavior of quantum systems.

Because entanglement is central to the way quantum systems work, understanding it better could give scientists a deeper sense of how information is stored and processed efficiently in such systems.

Qubits, or quantum bits, are the building blocks of a quantum computer. However, it is extremely difficult to make specific entangled states in many-qubit systems, let alone investigate them. There are also a variety of entangled states, and telling them apart can be challenging.

Now, MIT researchers have demonstrated a technique to efficiently generate entanglement among an array of superconducting qubits that exhibit a specific type of behavior.

Over the past years, the researchers at the Engineering Quantum Systems (EQuS) group have developed techniques using microwave technology to precisely control a quantum processor composed of superconducting circuits. In addition to these control techniques, the methods introduced in this work enable the processor to efficiently generate highly entangled states and shift those states from one type of entanglement to another — including between types that are more likely to support quantum speed-up and those that are not.

“Here, we are demonstrating that we can utilize the emerging quantum processors as a tool to further our understanding of physics. While everything we did in this experiment was on a scale which can still be simulated on a classical computer, we have a good roadmap for scaling this technology and methodology beyond the reach of classical computing,” says Amir H. Karamlou ’18, MEng ’18, PhD ’23, the lead author of the paper.

The senior author is William D. Oliver, the Henry Ellis Warren professor of electrical engineering and computer science and of physics, director of the Center for Quantum Engineering, leader of the EQuS group, and associate director of the Research Laboratory of Electronics. Karamlou and Oliver are joined by Research Scientist Jeff Grover, postdoc Ilan Rosen, and others in the departments of Electrical Engineering and Computer Science and of Physics at MIT, at MIT Lincoln Laboratory, and at Wellesley College and the University of Maryland. The research appears today in Nature.

Assessing entanglement

In a large quantum system comprising many interconnected qubits, one can think about entanglement as the amount of quantum information shared between a given subsystem of qubits and the rest of the larger system.

The entanglement within a quantum system can be categorized as area-law or volume-law, based on how this shared information scales with the geometry of subsystems. In volume-law entanglement, the amount of entanglement between a subsystem of qubits and the rest of the system grows proportionally with the total size of the subsystem.

On the other hand, area-law entanglement depends on how many shared connections exist between a subsystem of qubits and the larger system. As the subsystem expands, the amount of entanglement only grows along the boundary between the subsystem and the larger system.
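The difference between the two scalings can be made concrete by counting. The following is a minimal sketch, not the researchers' method: it assumes a square grid with nearest-neighbour connections only (the article does not specify the device's connectivity), and the function name `subsystem_counts` is hypothetical. It does not compute any entanglement entropy; it only counts the two quantities the scaling laws are proportional to, namely the number of qubits inside a subsystem (volume) and the number of connections crossing its edge (area).

```python
from itertools import product


def subsystem_counts(grid_size: int, block: int) -> tuple[int, int]:
    """Return (qubits inside, connections cut) for a block x block corner
    subsystem of a grid_size x grid_size lattice.

    Assumption: qubits are connected only to their nearest neighbours.
    """
    inside = set(product(range(block), repeat=2))
    cut = 0
    for r, c in inside:
        for dr, dc in ((1, 0), (0, 1), (-1, 0), (0, -1)):
            nr, nc = r + dr, c + dc
            in_grid = 0 <= nr < grid_size and 0 <= nc < grid_size
            # A connection is "cut" when it links an inside qubit to an
            # outside qubit that still belongs to the grid.
            if in_grid and (nr, nc) not in inside:
                cut += 1
    return len(inside), cut


# 4 x 4 grid, matching the 16-qubit layout described in the article.
for block in range(1, 4):
    volume, boundary = subsystem_counts(4, block)
    print(f"{block}x{block} subsystem: {volume} qubits, {boundary} cut connections")
```

For a corner block of side b, the qubit count grows as b squared while the number of cut connections grows only as 2b, which is why volume-law entanglement outpaces area-law entanglement as the subsystem expands.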

In theory, the formation of volume-law entanglement is related to what makes quantum computing so powerful.

“While we have not yet fully abstracted the role that entanglement plays in quantum algorithms, we do know that generating volume-law entanglement is a key ingredient to realizing a quantum advantage,” says Oliver.

However, volume-law entanglement is also more complex than area-law entanglement and practically prohibitive at scale to simulate using a classical computer.

“As you increase the complexity of your quantum system, it becomes increasingly difficult to simulate it with conventional computers. If I am trying to fully keep track of a system with 80 qubits, for instance, then I would need to store more information than what we have stored throughout the history of humanity,” Karamlou says.
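A rough back-of-the-envelope calculation shows why the storage requirement grows so quickly. This sketch assumes the simplest classical approach, a dense state vector with one complex amplitude per basis state, stored at 16 bytes per amplitude (two 64-bit floats). Both the storage format and the function name `state_vector_bytes` are assumptions made for illustration; other simulation methods have different costs.

```python
def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold a dense state vector of n_qubits qubits.

    An n-qubit state has 2**n complex amplitudes. The default of 16 bytes
    per amplitude is an assumption (two 64-bit floats).
    """
    return (2 ** n_qubits) * bytes_per_amplitude


for n in (16, 40, 80):
    print(f"{n} qubits: {state_vector_bytes(n):.3e} bytes")
```

Under these assumptions the 16-qubit device in this experiment needs about one mebibyte, while 80 qubits would need 2^84 bytes, roughly 1.9 × 10^25. Each added qubit doubles the total.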

The researchers created a quantum processor and control protocol that enable them to efficiently generate and probe both types of entanglement.

Their processor comprises superconducting circuits, which are used to engineer artificial atoms. The artificial atoms are utilized as qubits, which can be controlled and read out with high accuracy using microwave signals.

The device used for this experiment contained 16 qubits, arranged in a two-dimensional grid. The researchers carefully tuned the processor so all 16 qubits have the same transition frequency. Then, they applied an additional microwave drive to all of the qubits simultaneously.

If this microwave drive has the same frequency as the qubits, it generates quantum states that exhibit volume-law entanglement. However, as the microwave frequency increases or decreases, the qubits exhibit less volume-law entanglement, eventually crossing over to entangled states that increasingly follow an area-law scaling.

Careful control

“Our experiment is a tour de force of the capabilities of superconducting quantum processors. In one experiment, we operated the processor both as an analog simulation device, enabling us to efficiently prepare states with different entanglement structures, and as a digital computing device, needed to measure the ensuing entanglement scaling,” says Rosen.

To enable that control, the team put years of work into carefully building up the infrastructure around the quantum processor.

By demonstrating the crossover from volume-law to area-law entanglement, the researchers experimentally confirmed what theoretical studies had predicted. More importantly, this method can be used to determine whether the entanglement in a generic quantum processor is area-law or volume-law.

“The MIT experiment underscores the distinction between area-law and volume-law entanglement in two-dimensional quantum simulations using superconducting qubits. This beautifully complements our work on entanglement Hamiltonian tomography with trapped ions in a parallel publication published in Nature in 2023,” says Peter Zoller, a professor of theoretical physics at the University of Innsbruck, who was not involved with this work.

“Quantifying entanglement in large quantum systems is a challenging task for classical computers but a good example of where quantum simulation could help,” says Pedram Roushan of Google, who also was not involved in the study. “Using a 2D array of superconducting qubits, Karamlou and colleagues were able to measure entanglement entropy of various subsystems of various sizes. They measure the volume-law and area-law contributions to entropy, revealing crossover behavior as the system’s quantum state energy is tuned. It powerfully demonstrates the unique insights quantum simulators can offer.”

In the future, scientists could utilize this technique to study the thermodynamic behavior of complex quantum systems, which is too complex to be studied using current analytical methods and practically prohibitive to simulate on even the world’s most powerful supercomputers.

“The experiments we did in this work can be used to characterize or benchmark larger-scale quantum systems, and we may also learn something more about the nature of entanglement in these many-body systems,” says Karamlou.

Additional co-authors of the study are Sarah E. Muschinske, Cora N. Barrett, Agustin Di Paolo, Leon Ding, Patrick M. Harrington, Max Hays, Rabindra Das, David K. Kim, Bethany M. Niedzielski, Meghan Schuldt, Kyle Serniak, Mollie E. Schwartz, Jonilyn L. Yoder, Simon Gustavsson, and Yariv Yanay.

This research is funded, in part, by the U.S. Department of Energy, the U.S. Defense Advanced Research Projects Agency, the U.S. Army Research Office, the National Science Foundation, the STC Center for Integrated Quantum Materials, the Wellesley College Samuel and Hilda Levitt Fellowship, NASA, and the Oak Ridge Institute for Science and Education.

#2023#Algorithms#analog#artificial#atomic#atomic scale#atoms#Behavior#benchmark#Building#classical#college#complexity#computer#Computer Science#Computer science and technology#computers#computing#defense#Defense Advanced Research Projects Agency (DARPA)#Department of Energy (DoE)#education#Electrical Engineering&Computer Science (eecs)#Electronics#energy#Engineer#engineering#Explained#form#Foundation

0 notes

Text

#AI trends#Competitive Strategies#competitor research#Content creation#content update#digital marketing#Engaging Content#Expired Domain Abuse#Google Algorithm#Google Core Update#Google News Ranking#Google Search Results#google spam policies#google updates#Long-form Content#Manipulative Behaviors#March 2024 Core Update#organic traffic#Originality#Scaled Content Abuse#Search Engine Optimization#SEO Impact#Site Reputation Abuse#Spam Policies#User Engagement.#Website Quality

0 notes

Text

Embracing Digital Transformation: The Operating Model in Sweden

Delve into Sweden's impressive path towards digital transformation and innovation. Gain insights into how Sweden's operational framework has adapted to encompass state-of-the-art technologies, sustainability initiatives, and a digital excellence ethos. Uncover the pivotal elements contributing to Sweden's ascent as a frontrunner in the global digital era.

For more details, connect with us at

📧[email protected]

🌐 www.algorithmicscale.com

#digital business#digital operating model#algorithmic scale#product development#operating model#product development company#digital business development

0 notes

Text

I do often wonder what it feels like to like the popular thing.

"Dude what are you talking about, you're literally a Marvel stan-" I know, that's not what I mean.

I mean like... Okay, example, Destiel. You don't have to search it out, you don't have to force your algorithm to show you, it just does, because it's popular (on Tumblr anyway). I don't follow anything Supernatural related and I still see it.

And I like the jokes, but I honestly couldn't care less otherwise. The only SPN character I actually care about is Sam and maybe Jack (but he's in the later seasons that I didn't really watch so...). And so much of the fandom is focused on Destiel that it sometimes frustrates me when I have to go out of my way to see what I want instead of it.

That kind of thing.

What does it feel like for your opinions to align so much with the popular fandom consensus? To not have to go out of your way to see your faves and biases, to not have to be careful about what you interact with, lest it fucks up your algorithm and you have to do it all over again. To not have it even work most of the time.

More examples.

I really like Louis Tomlinson but I've never been on a site where the algorithm didn't try to shove Larry and Harry Styles down my throat when I started interacting with posts about him. (Naturally, I ignore those posts, but they're such a big part of the fandom that ignoring them makes the algorithm stop showing me posts about Louis too. I've honestly given up.)

My BTS bias is so far from the most popular member that my FYP doesn't even show me posts with him, like, period. (Not to mention how many people who don't stan BTS actively hate them and/or the fandom, and/or make awful jokes about them)

And then there's my whole thing with Captain America where none of my irl friends even like him and so when I have to ramble about him I have to go scream into the internet void and hope it screams back.

So, as someone who always seems to fall for the fandom underdog... I just wonder what it feels like to not have to do all this.

#tw vent#personal#ranting#fandom#algorithm#the scale of what it feels like is#frustrating#annoying#neutral#i am very on the annoyed rn#oh and also#exhausting#i just want my louis edits#get fucked with conspiracy theories

0 notes

Text

general music recommendations don't work because everything is going to be somebody's favorite thing of all time but also some people are going to not like it

#and then i feel like i should be liking it and i mean sometimes i do end up doing that but other times i just don't#I also don't necessarily mean it should be like algorithmic but like limit it to smaller spaces where more people will probably like it#also there's just like no sense of scale tbh

0 notes

Text

An open letter to @staff

I already submitted this to Support under "Feedback," but I'm sharing it here too as I don't expect it to get a response, and I feel like putting it out in public may be more effective than sending it off into the void.

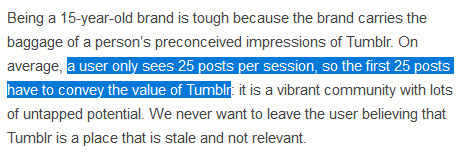

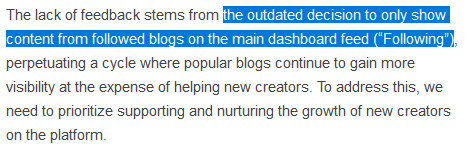

The recent post on the Staff blog about changing tumblr to an algorithmic feed features a large amount of misinformation that I feel staff needs to address, openly and honestly, with information on where this data was sourced at the very least.

Claim 1: Algorithms help small creators.

This is false, as algorithms are designed to push content that gets engagement in order to get it more engagement, thereby assuring that the popular remain popular and the small remain small except in instances of extreme luck.

This can already be seen on the tumblr radar, which is a combination of staff picks (usually the same half-dozen fandoms or niche special interests like Lego photography) which already have a ton of engagement, or posts that are getting enough engagement to hit the radar organically. Tumblr has an algorithm that runs like every other socmed algorithm on the planet, and it will decimate the reach of small creators just like every other platform before it.

Claim 2: Only a small portion of users utilize the chronological feed.

You can find a poll by user @darkwood-sleddog here that, at the time of writing this, sits at over 40 THOUSAND responses showing that over 96 percent of them use the chronological feed*. Claiming otherwise isn't just a misstatement, it's a lie. You are lying to your core userbase and expecting them to accept it as fact. It's not just unethical, it's insulting to people who have been supporting your platform for over a decade.

Claim 3: Tumblr is not easy to use.

This is also 100% false and you ABSOLUTELY know it. Tumblr is EXTREMELY easy to use; the issue is that the documentation, the explanations of features, and often even the stability of the service are subpar. All of this would be very easy for staff to fix, if they would invest in the creation of walkthroughs and clear explanations of how various site features work, as well as finally fixing the search function. Your inability to explain how your service works should not result in completely ignoring the needs and wants of your core long-term userbase. The fact that you're more willing to invest in the very systems that have made every other form of social media so horrifically toxic than in trying to make it easier for people to use the service AS IT WORKS NOW and fixing the parts that don't work as well speaks volumes toward what tumblr staff actually cares about.

You will not get a paycheck if your platform becomes defunct, and the thing that makes it special right now is that it is the ONLY large-scale socmed platform on THE ENTIRE INTERNET with a true chronological feed and no aggressive algorithmic content serving. The recent post from staff indicates that you are going to kill that, and are insisting that it's what we want. It is not. I'd hazard to guess that most of the dev team knows it isn't what we want, but I assume the money people don't care. The user base isn't relevant, just how much money they can bring in.

The CEO stated he wanted this to remain as sort of the last bastion of the Old Internet, and yet here we are, watching you declare you intend to burn it to the ground.

You can do so much better than this.

Response to the Update

Under the cut for readability, because everything said above still applies.

I already said this in a reblog on the post itself, but I'm adding it to this one for easy access: people read it that way because that's what you said.

Staff considers the main feed as it exists to be "outdated," to the point that you literally used that word to describe it, and the main goal expressed in this announcement is to figure out what makes "high-quality content" and serve that to users moving forward.

People read it that way because that is what you said.

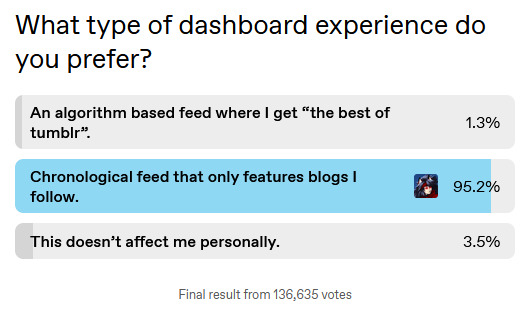

*The final results of the poll, after 24 hours:

136,635 votes breaks down thusly:

An algorithm based feed where I get "the best of tumblr." @ 1.3% (roughly 1,776 votes)

Chronological feed that only features blogs I follow. @ 95.2% (roughly 130,077 votes)

This doesn't affect me personally. @ 3.5% (roughly 4,782 votes)

24K notes

Text

no cuz i really do have to emphasize just how amazing the scope of kgpr is like these people were not signed on to create any of this initially like jin just posted some songs and one of them (kagerou days) went super viral, and then wannyanpuu, with no monetary incentive not even officially working with jin at that point, creates a fan music video that is so impressive that it goes viral as well and not to mention how like, this one interview w jin translated by vgperson (ill link it later) stated just how uncommon something like this was, this being 1.) a vocaloid song featuring a character other than the vocaloid themselves and 2.) a fmv of this scale. cuz like during that period of time, the earliest of early vocaloid music just had still illustrations with the song playing, yes people have been creating more and more impressive mvs during this period (strobe light and mozaik role predate kagerou days fmv) but in spite of that, wannyanpuu's kagerou daze still stood out immensely for being incredibly unique for that time period (and it honestly still holds up amazingly even if its not canon to kagepro lore) and then just 3 years later that song and those characters from this super viral song and music video end up appearing in an anime being screened across the nation and abroad like do you know how insane that is? can you imagine just being a hobbyist with some OCs and then suddenly receiving an entire television series in less than 5 years like what even... its almost difficult to comprehend

#even harder to comprehend with the algorithms of today and how a million is no longer the massive number it used to be#like the most recent example i can compare jin to would be toby fox i think. obvs toby fox is way more popular than jin now#but like thats what im trying to convey. how something so indie can grow to an insanely popular scale.... like wow#kgprambling

0 notes

Text

To anyone concerned about KOSA and the state of the web

My wife, @utopicwork, is working hard on a "next internet" with the primary goal of being a place where marginalized people can safely and privately communicate without being restricted by the whims of advertising algorithms and malicious bills.

This would be a decentralized peer-to-peer network, which means

A) it won't be easily shut down

B) it's built around the best aspect of tumblr: being able to choose who you do and don't connect to

She is a highly qualified computer scientist with years of experience in cybersecurity, web development and network technology. However, she can't do this alone. A trans woman is fighting hard for the future of free communication so please support her.

3K notes

Text

hello dear instagram please consider this: maybe i don't want to be snatched and hot and stylish and aesthetic and darkly feminine maybe i just want to have a good time, thanks!

#listen up algorithm:#i'm not marketable#i'm not a self-improvement project#i'm not your target audience#and i will stay that way#i will not bend to your culture of shame#i am free of your rot-inducing influence#you cannot stop me from seeing the beauty within myself#independent from your definition of what is beautiful#listen:#YOU HAVE NO POWER HERE#this is small-scale rebellion#and it HAS an impact#even if it's only on my own life#rant over#yqly#social media#self care#self compassion#healing#boundary setting

1 note
