#art style going everywhere

Text

its his time to shine!!!!

#twst#twisted wonderland#my art#twst ace#ace trappola#twst deuce#deuce spade#adeuce#art style going everywhere#using a tablet i dont usually use and it makes my hands confuse#a few more days and then i can get my pen oughhhh cant wait to work on the chibs#hes reminded of his boyfie...

252 notes

Text

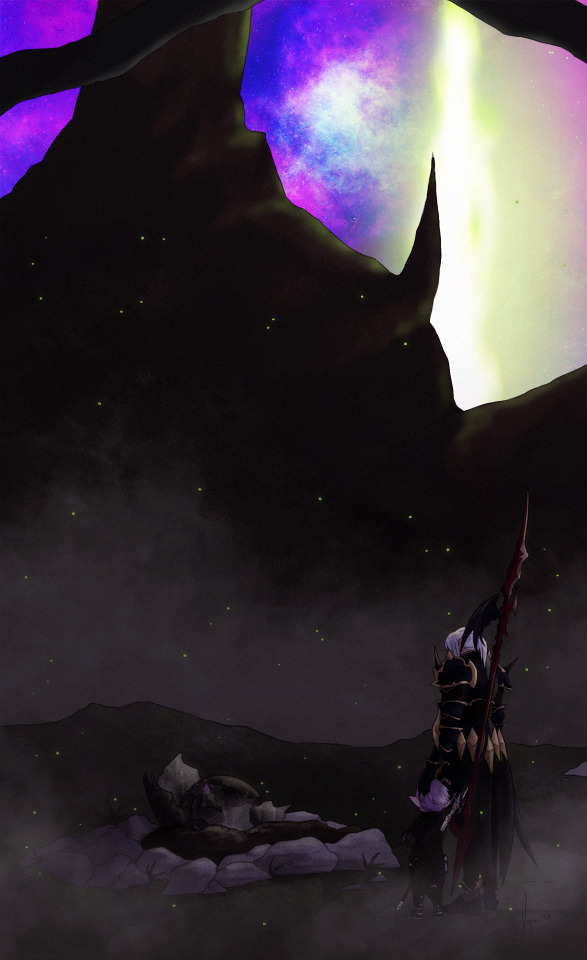

I'm glad you're here with me

At the end of all things...

#ffxiv#Endwalker#Ninira Nira#Estinien#Estinien Wyrmblood#Estinien Varlineau#Estinien x wol#wolstinien#art: mine#I started this picture 2000 years ago#I remember wanting to experiment with a different render style and surprise! getting frustrated#so it's been sitting as a sketch since#then the other day Cyan was like hey this song gave me Estinini vibes#And I was like l o l slides Estinini playlist back across the table and circles Sans Soleil like that's because it is#and I was like man I should go back to that picture#and just kinda yolo the render bc beating myself up about not being successful in my last render will never get it done#so here it is in the usual idk what's happening slap colours everywhere flavour#Nini could have used a bit more work re getting her size right maybe but we're not caring right now#I DID it at least checks the box and somehow does another piece of art in under a month idk what's happening rn energy I guess#Ultima Thule makes me normal#This part of MSQ was f i n e :')

226 notes

Text

I have not actually drawn anything in like 9 months at this point I don't know what I'm doing. Take some Vaughnothy I did for an art trade with @thelegendarypusheen-art

alts w different overlays and blur levels under the cut because im indecisive

#vaughnothy#borderlands#timothy lawrence#vaughn the money man#my art#goose pointed out the fact tims mask hinges are rusted and I HAVE to draw them rusted now#timmy paints vaughns nails for him this is canon because I said so#but timmy having big ass hands causes the polish to get everywhere#the style inconsistencies ARE intentional#i add more grey to timmys hair because that man is STRESSED and also I think it looks good on him :3#going thru an art style crisis#rarepair posting on main

62 notes

Text

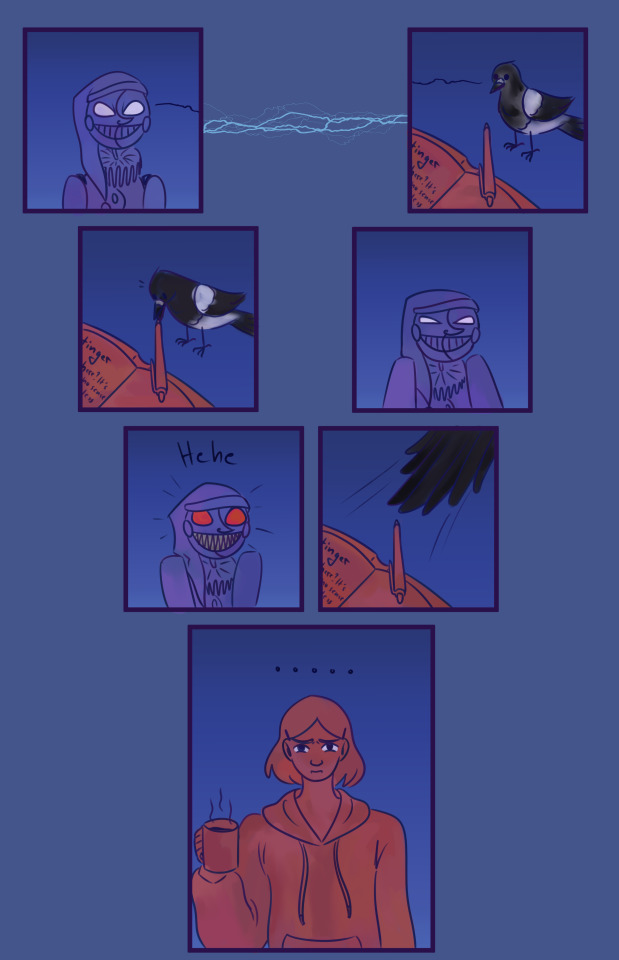

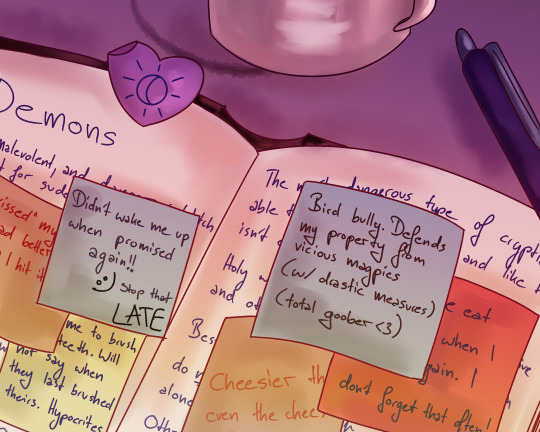

"No more reveal comics" Okay but what about domestic post-reveal shenanigans?

@naffeclipse at this point I have no more excuses, have another

Also bonus, what they were writing:

They have a lot of amendments to make <3

#post let luce#my art#fnaf sb#fnaf sb au#cryptid sightings#naffeclipse#cryptid!moon#every day is the same. i think about cryptid sightings. i thumbnail a comic. i sit down at my laptop go insane for a few hours. done#when will it end!! /j#bonus doodle messy bc 1) kinda experiment combining styles and 2) i did run out of steam#dinner time soon and I need to prep#me slapping a (simplified version of) my tattoo everywhere dca related: hehe sunmoon go brr#it's been ten very very productive days since ch3/ me posting the steady heart comic and i sold my soul to this fic apparently

425 notes

Text

internet without artists would suck huge enormous balls fyi

#thanks artists#this isnt self aggrandizing I prommy.. I just see lots of cool art everywhere and go huh. I dont think people value that cool 3d rendered#anime demon as much as they should. internet = bringing art from everywhere and every time and of every style to every one#and its like. hey thats cool. and pretty weird and new...

82 notes

Text

Yeehaw himbo bf with his sweet goth gf

#jk on the goth#(it’s more grudge LOL)#she has very more styles she just… has a very practical builder outfit LOL#also her clothing style is everywhere#it’s her best friends and Vivi’s fault#teehee one more post in Monday and Ima go afk for a while :P#iykyk xD#my time at sandrock#mtas logan#mtas fanart#mtas oc#mtas builder#My Builder Jay#can u tell I finish the shadin while I was so sick…#my art

65 notes

Text

pinoy hazbin hotel fans can confirm that lucifer is behind the duck hair clip trend

(context: the latest trend here in the Philippines is wearing a duck hair clip on your head or even clipping it on your bag as an accessory.)

Instagram || Twitter || Ko-fi

#hazbin hotel#lucifer hazbin hotel#my art#hazbin hotel fanart#i was debating whether i would draw luci here in my default art style or not#but its cuter in this style so there you go#people are selling these duck hair clips everywhere here#its kinda cute#there's also small windmill hair clips#but i see more ducks on people's head more#pinoytrend#duckhairclip

18 notes

Text

too little gfx in the tags lately this is so unfortunate

#pros of tumblr: backwater hell. cons of tumblr: backwater hell#actually add another con: hostile to all image makers now ig#nothing but gifs..................... please..... graphics where r u#tho tbh idk where the graphics makers live because i literally have never seen any on twitter either#a discord for transparent renders that i lurk in opened a channel to share your edits and like#some of them are a bit cluttered but they're still very nice and nothing like tumblr's usual edit style#they're very . digital ig? full of textures text stickers and random bits and bobs everywhere its interesting#some of them also have the magazine mockup thing going on so its got block font bold as hell style usually main focus is drippy splash art#which like . WHERE DO YOU ALL POST YOUR WORK?? SHOW PLEASE ive never seen any style like it in my life#all are like really cool as hell though im impressed and inspired#esp these are all single image focused whereas tumblr's full of like photosets that make up 1 coherent thing#so it's a different kind of challenge... sooooooooo cool though#lowkey wanna try it zzzzz theyre all so neat... scratches a good itch..#ramblings!

9 notes

Photo

First post of the new year! Finally sharing the artwork I did for the @kuvirazine back in 2021! I was incredibly flattered to be asked to work on the project, and of course I had to bring those comfy gym vibes ✨💪💚

#Baatar Jr.#Kuvira#LoK#Legend of Korra#Baavira#Kuvira Zine Vol 2#Kuvira Zine#[ imagine me getting asked to do a LoK art zine and I DIDN'T bring Baatar along#LOL of course I have to take him everywhere#but yeah aaah so happy to finally be sharing these!#despite these being from like mid 2021 I still like them!#My art style back then was (and still is now tbh) very inconsistent#but I was going for a specific look and I think I achieved it#that second piece is def my fav though sobs#I was really fighting with Kuvira's face bc at this time I'd hardly drawn her LMAO#but their faces there came out perfect to me I love them so much ;;#thanks so much again to the team for asking me to be a part of it!! ]#Neon Ocean Art

127 notes

Text

Joanne/Joan Fanart

I made fanart for @artsandstoriesandstuff's new OC Joanne/Joan. They're a housewife who's just a tiny bit...Stressed.

This is a rough MS Paint sketch because I don't have the energy for a proper CSP drawing. And I wanted to get this idea out! I was surprised how similar the sketch was to how I envisioned her when I suggested her, lol.

The quality is kinda shit. Sorry.

No reposting, editing, claiming, stealing, or tracing this. No using it for any program, NFT, or AI.

Hope you like it haha. Just a little thing.

#estee's posts#estee's art#gift art#others ocs#mutual's ocs#yeah it's very rough as you can see#but I had fun#sometimes you just gotta draw something small#I have bigger projects on the back-burner anyway and needed a break#artsandstoriesandstuff#I kinda tried to give her that beehive hairstyle#but that style with the flipped up ends is literally my favourite hairstyle ever#maybe she's rich and that's where she got the pearls...#OR. maybe she's got the cheaper ones#so then there's that scene where the necklace breaks and the pearls go everywhere#because they don't do that with richer pearls. there's knots between them#I'm rambling. it's your story ofc

4 notes

Text

Conqueror of Demons

#xiao#原神#genshin impact#genshin#fanart#iztb#xiao was my first 5*#my meow meow#at the time of this art i was still getting used to drawing on a screen so my style was kinda going everywhere#this was an experimental painting

40 notes

Text

started naoki urasawa's Monster bc i want to procrastinate on studying but no one told me id be bombarded with my course material right on screen

#what do you mean neurosurgeon#i dont wanna revise neuro and my procrastianime wanna make me revise#naoki urasawa art style so good and distinctive and i love how he draws facial features#but it's so obvious who he has made out to be a bad guy#like#it's a very old and very tried and tested character design technique im sure#but the dr's girlfriend was gonna be a bitch the moment i saw her bc of how she's drawn#not because of my judgement but he literally draws bad characters that way !!!!#ik this is going to explore a lot of gray morality and everything and this anime is the highest rated literally everywhere#and that has to be for a reason#and i appreciate them not half-assing the medical stuff#bc bruh#i forgot what was my tag for stuff like this#what he's facing is going to be my reality the coming year#i have seen pluto#and im watching monster rn#i wish i was given the chance to trust the characters just once before they broke my heart#by being bougie bastards or whatever#but the artstyle remains as much a part of the storytelling as anything else#am i being treated like a child#i wonder#monster#liveblog

3 notes

Text

wild finding out that people legitimately hate having imagine dragons on the arcane soundtrack. some of the best animation that's come out of the last decade has imagine dragons on the track. listen to sexy downbeats of enemy and think about how sick it would be to see jayce smash someone's head in to it. the imagine dragons is essential to the lol experience and acting like it would be a better show without it is stupid, and more importantly, embarrassing. its like walking into a neighborhood bar that everyone hates and complaining about the one thing people actually kind of like about the original thing right after it got a wicked makeover.

#arcane is a legitimate work of art. the level of incredible technical work and artistry that went into that kind of#animation style? the rigging??? THE PAINTING???????? holy shit#but its also very much. exactly what it is. astounding beauty comes from everything and everywhere#and acting like arcane is somehow less impressive because imagine dragons is on the track??? im going to rip your kneecaps out

6 notes

Text

i think if i make my neocities site cool enough ill ditch my carrd for real actually

#like i could just. add it as a page on the site. and like i dont wanna link to my carrd AND my website everywhere lol thats dumb#the gamer speaks uwu#coding it is so fun tho its frustrating as fuck bc i forgot how inherently annoying coding is but well its still ok ^__^#css die and go to hell CHALLENGE !!!!!!!!!!!!!!!!!!!!!!!!!!!#html my babygirl. javascript my soon to be babygirl idk how to write it really just edit and read through it#like i was obsessed with scratch when i was little and learned it on my own so like that alone already is like#just fully if then statements#if then statements my so beloved theyre so simple i should learn js properly lol#i need to tho i need to integrate the auto post archival script i found into my thing but the js is like#not cooperating with my css . and my css broke lmao#so i have deleted everything and restarted so rn i just have my main page done and the style sheet is in the main html#not ideal ! not ideal at all but it works bb#like see#i want multiple pages and i want to be able to blog here and there to go more in depth about my art if i feel like it#to achieve this i need every page on the site to have the same color palette decor etc#i could one by one update each page to style them individually but if i ever change my layout. i have to update it one by one#and if i make blog posts those are in theory a new page every post as well. so you see this is inefficient and sucks in the long run#easy in the short term or for a small site tho !#so i need to make a css file to collect everything where i only have to change the css to style every single page on the site linked to it#i had this working for a minute but for some reason my main page wouldnt link to my css file OUT OF NO WHERE ???#but the js file that formats the blog posts see it has like a specific format for text and everything so they look right#and i think this conflicted with some info in my css file that ALSO specifically formats some text#so it fucked everything up !#so im right now just with p much an individual page for html and css and im going to start again#copy my css i have right now first of all into an actual css file. link it to the html#then i really really have to scour and gut the script file before implementing it so we dont have everything break again#decent plan i have the energy to do actual work now tho so i wont be doing it until later when i burn out of drawing and need to do smth#tech shid#screaming in the tags

3 notes
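The shared-stylesheet plan in the tags above can be sketched roughly like this. It's a minimal Python sketch under assumptions, not the poster's actual site: the page names, the colour palette and the `.archived-post` class are all hypothetical. It writes one `style.css` plus a few HTML pages that each pull it in with `<link rel="stylesheet" href="style.css">`, so editing that one file restyles every linked page. Scoping the archive script's text rules under their own class is one common way to keep them from clashing with site-wide text rules.

```python
from pathlib import Path
import tempfile

# Hypothetical page names; the original post doesn't list the site's pages.
PAGES = {"index.html": "Home", "art.html": "Art", "blog.html": "Blog"}

SHARED_CSS = """\
/* One palette for every page: edit here and every linked page updates. */
body { background: #1b1b2f; color: #f0e6ff; font-family: sans-serif; }
/* Hypothetical class: scope the blog-archive text rules under their own
   class so they can't collide with site-wide text styles. */
.archived-post p { margin: 0.5em 0; }
"""


def page_html(title: str) -> str:
    """Return a minimal page that takes its look from the shared style.css."""
    return f"""<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <title>{title}</title>
  <link rel="stylesheet" href="style.css">
</head>
<body>
  <h1>{title}</h1>
</body>
</html>
"""


def build_site(out_dir: Path) -> list[Path]:
    """Write style.css once plus one HTML file per page; return the page paths."""
    out_dir.mkdir(parents=True, exist_ok=True)
    (out_dir / "style.css").write_text(SHARED_CSS, encoding="utf-8")
    written = []
    for filename, title in PAGES.items():
        path = out_dir / filename
        path.write_text(page_html(title), encoding="utf-8")
        written.append(path)
    return written


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for p in build_site(Path(tmp)):
            print(p.name)
```

Changing a colour in `SHARED_CSS` and rebuilding restyles all three pages at once, which is the "only have to change the css" payoff; adding a page is just one more entry in `PAGES`.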

Text

idk what kind of microchip they implemented in my brain but for some fucking reason i can instantly recognize when a drawing is a nico di angelo fanart. im not in the pj fandom. i havent had friends in that fandom for eons. i dont fucking know whats ab that edgy italian fucker that it takes me 1 second to id his ass every time he pops up on pinterest

#it's not an outfit thing cus he doesnt always seem to have the same one#is it cus pj fans always have THAT art style? i have no idea#everywhere i go i see his face type beat#not every fluffy black hair guy drawing i see is of him but when it is i just fucking know#it's like the lamest superpower ever#'the point of fanarts is that u recognize the character' HES JUST A GUY HE DOESNT LOOK SPECIAL OK#if we were talking ab someone w a veeery specific design then id agree w you#is it cause of his phantom connection to transmasc ppl? do we all have the same power? maybe im not alone.

0 notes

Text

The darling Glaze “anti-ai” watermarking system is a grift that stole code/violated the GPL (which the creator admits to). It uses the same exact technology as Stable Diffusion. It’s not going to protect you from LoRAs (smaller models that imitate a certain style, character, or concept).

An invisible watermark is never going to work. “De-glazing” training images is as easy as running them through a denoising upscaler. If someone really wanted to make a LoRA of your art, Glaze and Nightshade are not going to stop them.

If you really want to protect your art from being used as positive training data, use a proper, obnoxious watermark, with your username/website, with “do not use” plastered everywhere. Then, at the very least, it’ll be used as a negative training image instead (telling the model “don’t imitate this”).

There is never a guarantee your art hasn’t been scraped and used to train a model. Training sets aren’t commonly public. Once you share your art online, you don’t know every person who has seen it, saved it, or drawn inspiration from it. Similarly, you can’t name every influence and inspiration that has affected your art.

I suggest that anti-AI art people get used to the fact that sharing art means letting go of the fear of being copied. Nothing is truly original. Artists have always copied each other, and now programmers copy artists.

Capitalists, meanwhile, are excited that they can pay less for “less labor”. Automation and technology are an excuse to undermine and cheapen human labor: if you work in the entertainment industry, it’s adopt AI, quicken your workflow, or lose your job because you’re less productive. This is not a new phenomenon.

You should be mad at management. You should unionize and demand that your labor is compensated fairly.

10K notes
