#Data bias

Text

“Several studies conducted over the past decade or so show that letters of recommendation are another seemingly gender-neutral part of a hiring process that is in fact anything but. One U.S. study found that female candidates are described with more communal (warm; kind; nurturing) and less active (ambitious; self-confident) language than men. And having communal characteristics included in your letter of recommendation makes it less likely that you will get the job, particularly if you're a woman: while 'team-player' is taken as a leadership quality in men, for women the term ‘can make a woman seem like a follower’”. - Caroline Criado Perez (Invisible Women: Data Bias in a World Designed for Men)

#Caroline Criado Perez#womanhood#feminism#sex based discrimination#Invisible Women: Data Bias in a World Designed for Men#letter of recommendation#data bias#work culture#sexism

177 notes

Text

Book of the Day – Invisible Women

Today’s Book of the Day is Invisible Women, written by Caroline Criado Pérez in 2019 and published by Abrams Press. Caroline Criado Pérez is a British writer, journalist, and activist known for her rigorous research and commitment to gender equality. She gained prominence through campaigns such as the fight to keep a woman’s image on British banknotes and the push for a statue of suffragist…

#bias#Book Of The Day#book recommendation#book review#books#data bias#discrimination#feminism#gender#gender bias#international women's day#politics#Raffaello Palandri#society#women

11 notes

Text

You ever randomly remember excerpts from Invisible Women: Exposing Data Bias in a World Designed for Men by Caroline Criado-Perez and get mad all over again? Like there's no place on Earth where a woman won't be overlooked, ignored, taken for granted, expected to be in pain, or expected to suffer and die.

3 notes

Text

I love good Audiobooks on new tech.

#Accessibility#AI#AI 2041#AI and Global Power#AI Ethics#AI hidden costs#AI history#AI risk#AI successes and setbacks#AI systems#Ajay Agrawal#Alexa#Algorithms of Oppression#Artificial Intelligence: A Guide for Thinking Humans#Atlas of AI#Audible#Audiobooks#Brian Christian#Caroline Criado Perez#Data bias#Ethical Machines#Future of artificial intelligence#Google's AI#Inclusivity#Invisible Women#Kai-Fu Lee#Kate Crawford#Literature consumption#Mark Coeckelbergh#Melanie Mitchell

2 notes

Text

This is an informative, related book.

“Using the cover story that a start-up communications company was looking for a head for its marketing department, sociologist Shelley Correll and colleagues found that, compared with paper nonmothers, identical paper mother applicants were rated about 10 percent less competent, 15 percent less committed to the workplace and worthy of $11,000 less salary. Moreover, only 47 percent of mothers, compared with 84 percent of nonmothers were recommended for hire. One only hopes that the little paper children are worth the career sacrifice.

As a follow-up, over the course of eighteen months Correll and her colleagues sent out a total of 1,276 fictitious résumés and cover letters for real marketing and business jobs advertised in the press. Each employer was sent two applications from two equally qualified applicants. They were both the same sex (sometimes both male, other times both female), but only one was identifiable as a parent. (The researchers counterbalanced which applicant was the parent.) Then the researchers sat back and waited to see who got the most callbacks from the potential employers.

While parenthood served as no disadvantage at all to men, there was evidence of a substantial ‘motherhood penalty’. Mothers received only half as many callbacks as their identically qualified childless counterparts. Ongoing research is investigating whether these days it is especially mothers who are discriminated against.”

- Cordelia Fine, Delusions of Gender

128 notes

Text

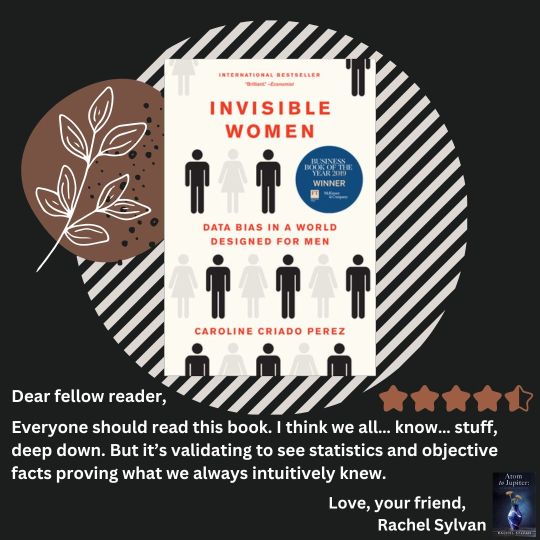

"Invisible Women" by Caroline Criado-Perez

Thank you @womensbookclub_paris for the rec! ❤️

#Invisible women#Data Bias#Bias#Sexism#Patriarchy#Feminism#How the world works#Women stories#women experiences#lived experiences#science#caroline criado perez#statistics#good statistics#why

0 notes

Text

TRYING AGAIN WITH CLEARER WORDING. PLS READ BEFORE VOTING

*Meaning: When did you stop wearing a mask to a majority of your public activities? Wearing a mask when you feel sick or very rarely for specific events/reasons counts as “stopping”

[More Questions Here]

#poll#covid#covid 19#reblog for sample size#I could tag this post ‘environmental storytelling’ cause goddamn#if you reblogged the original please reblog this one#I just want my DATA#hey#excellent demonstration of the scientific process here#needing to correct for confusion on your participants end to get the results you want#can’t fix the tumblr sampling bias but apparently there’s anti-maskers here too#peoples life circumstance can vary so no judgement from me OP on when you stopped#unless you never masked. then I am judging you#like you didn’t even TRY?

623 notes

Photo

something something biased data presentation... no yellow at all, just jumps from These Countries Allow 15 Year Old Kids to Do the Icky to And Here's Everyone Who Does It Right

also didn't i read something the other day about kids being allowed to marry at fourteen or less in certain us states? somehow i cannot imagine they'd let people do that and then come back and have rules saying they have to wait several years to have the wedding night. i bet there's some sort of 'religious freedom' exception for marriage, might have to look that up...

Age of consent by country

121 notes

Text

YOU, the person who watches Once Upon A Witchlight, are autistic

#bias data pool of 1. me#well ok#2. me and my friend#once upon a witchlight#ouaw#legends of avantris#text#this is a true fact

255 notes

Text

“A UK Department for Transport study highlighted the stark difference between male and female perceptions of danger, finding that 62% of women are scared walking in multi-story car parks, 60% are scared waiting on train platforms, 49% are scared waiting at the bus stop, and 59% are scared walking home from a bus stop or station.

The figures for men are 31%, 25%, 20% and 25%, respectively. Fear of crime is particularly high among low-income women, partly because they tend to live in areas with higher crime rates, but also because they are likely to be working odd hours and often come home from work in the dark. Ethnic-minority women tend to experience more fear for the same reasons, as well as having the added danger of (often gendered) racialised violence to contend with.” - Caroline Criado Perez (Invisible Women: Data Bias in a World Designed for Men)

#Invisible Women: Data Bias in a World Designed for Men#Caroline Criado Perez#crime#violence against women#so based violence#dibs#data bias#womanhood#feminism#patriarchy#women’s safety#this is why we need feminism#statistic#poor women

3 notes

Text

The Epistemology of Algorithmic Bias Detection: A Multidisciplinary Exploration at the Intersection of Linguistics, Philosophy, and Artificial Intelligence

We live in an increasingly data-driven world, where algorithms permeate nearly every facet of our existence, from the mundane suggestions of online retailers and products to the critical decisions impacting healthcare and justice systems. These algorithms, while often presented as objective and impartial, are inherently products of human design and the data…

#Algorithm#algorithm design#algorithmic bias#Artificial Intelligence#bias#confirmation bias#critical discourse analysis#critical reflection#data bias#dataset#Deep Learning#deontology#epistemology#epistēmē#ethical principles#fairness#inequality#interdisciplinary collaboration#justice#Language#linguistics#Machine Learning#natural language processing#objectivity#Philosophy#pragmatics#prohairesis#Raffaello Palandri#sampling bias#Sapir-Whorf hypothesis

1 note

Text

One of my favourite things about Ford is that he's like WAIT SAFETY about some things when he disagrees (the Fiddleford becoming president thing, him being like it's too stressful for Fiddleford), BUT when it's something he's interested in he's like endangering my life is fine and normal (ie, him jumping into the abyss of the alien spaceship with a magnetic gun, a ship that also has a security system).

The irony of it is amusing to me, but I also think it's a very good example of how, as much as Ford likes to say he's a Scientist™ and governed by Logic™... he's actually first and foremost driven by his emotions, and the logic comes second, when he needs an explanation. Case in point: he frames his relationship and contact with Bill as making new discoveries for mankind... when in TBOB it becomes plainly obvious that the main reason he called on Bill was that he was desperately lonely (driven by emotion), less so his scientific discovery (driven by logic). And I think there's a very human, relatable aspect to it, because we all do this. That's why arguing with climate deniers and citing study after study about climate change, or even dealing with racist people and talking about equality and abstract morals, doesn't work; there's an emotional aspect that drives people, always beyond our tower of cards of logic...

#hugin rambles#hugin rambles gf#ford pines#stanford pines#gravity falls#gravity falls stanford#bill cipher#billford#the book of bill#as a biologist and a scientist... it's all a big sham about emotions. nothing is ever not biased#we like to lie about that. so it's always amusing to me to see characters that are like I'm a Scientist™ and mean being very logical when in#fact they are by and large driven by emotion. yes you should consciously not bias your data and outputs but you can NEVER remove your bias#in entirety#but yeah. fictional scientists driven by emotion my beloved#stanford pines meta#if tiny

204 notes

Text

#Adversarial testing#AI#Artificial Intelligence#Auditing#Bias detection#Bias mitigation#Black box algorithms#Collaboration#Contextual biases#Data bias#Data collection#Discriminatory outcomes#Diverse and representative data#Diversity in development teams#Education#Equity#Ethical guidelines#Explainability#Fair AI systems#Fairness-aware learning#Feedback loops#Gender bias#Inclusivity#Justice#Legal implications#Machine Learning#Monitoring#Privacy and security#Public awareness#Racial bias

0 notes

Text

Tackling Misinformation: How AI Chatbots Are Helping Debunk Conspiracy Theories

New Post has been published on https://thedigitalinsider.com/tackling-misinformation-how-ai-chatbots-are-helping-debunk-conspiracy-theories/

Misinformation and conspiracy theories are major challenges in the digital age. While the Internet is a powerful tool for information exchange, it has also become a hotbed for false information. Conspiracy theories, once limited to small groups, now have the power to influence global events and threaten public safety. These theories, often spread through social media, contribute to political polarization, public health risks, and mistrust in established institutions.

The COVID-19 pandemic highlighted the severe consequences of misinformation. The World Health Organization (WHO) called this an “infodemic,” in which false information about the virus, treatments, vaccines, and origins spread faster than the virus itself. Traditional fact-checking methods, like human fact-checkers and media literacy programs, struggled to keep up with the volume and speed of misinformation. This urgent need for a scalable solution led to the rise of Artificial Intelligence (AI) chatbots as essential tools in combating misinformation.

AI chatbots are not just a technological novelty. They represent a new approach to fact-checking and information dissemination. These bots engage users in real-time conversations, identify and respond to false information, provide evidence-based corrections, and help create a more informed public.

The Rise of Conspiracy Theories

Conspiracy theories have been around for centuries. They often emerge during periods of uncertainty and change, offering simple, sensationalist explanations for complex events. From rumors about secret societies to government cover-ups, these narratives have always fascinated people. In the past, their spread was limited by slower information channels like printed pamphlets, word of mouth, and small community gatherings.

The digital age has changed this dramatically. The Internet and social media platforms like Facebook, Twitter, YouTube, and TikTok have become echo chambers where misinformation thrives. Algorithms designed to keep users engaged often prioritize sensational content, allowing false claims to spread quickly. For example, a 2021 report by the Center for Countering Digital Hate (CCDH) found that just twelve individuals, known as the “disinformation dozen,” were responsible for up to 65% of the anti-vaccine content it analyzed on Facebook and Twitter. This shows how a small group can have a huge impact online.

The consequences of this unchecked spread of misinformation are serious. Conspiracy theories weaken trust in science, media, and democratic institutions. They can lead to public health crises, as seen during the COVID-19 pandemic, when false information about vaccines and treatments hindered efforts to control the virus. In politics, misinformation fuels division and makes rational, fact-based discussion harder. A 2023 study published in the Harvard Kennedy School Misinformation Review found that many Americans reported encountering false political information online, highlighting how widespread the problem is. As these trends continue, the need for effective tools to combat misinformation is more urgent than ever.

How AI Chatbots Are Equipped to Combat Misinformation

AI chatbots are emerging as powerful tools to fight misinformation. They use AI and Natural Language Processing (NLP) to interact with users in a human-like way. Unlike traditional fact-checking websites or apps, AI chatbots can hold dynamic conversations. They provide personalized responses to users’ questions and concerns, which makes them well suited to the complex, emotional nature of conspiracy theories.

These chatbots use advanced NLP algorithms to understand and interpret human language, analyzing the intent and context behind a user’s query. When a user submits a statement or question, the chatbot looks for keywords and patterns that match known misinformation or conspiracy theories. For example, if a user mentions a claim about vaccine safety, the chatbot cross-references it with a database of verified information from reputable sources like the WHO and CDC, or independent fact-checkers like Snopes.
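The keyword-matching step can be illustrated with a toy lookup. This is a minimal sketch only, assuming a hypothetical in-memory table of previously fact-checked claims: the claim IDs, keyword sets, correction text, and the `match_claim` and `respond` functions are all made up for the example. Real chatbots, especially LLM-based ones, rely on far richer language understanding than simple keyword overlap.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class KnownClaim:
    """A hypothetical entry in a database of previously fact-checked claims."""
    claim_id: str
    keywords: frozenset[str]
    correction: str
    sources: tuple[str, ...]


# Hypothetical sample data for illustration only; not real fact-check records.
CLAIM_DB = [
    KnownClaim(
        claim_id="vaccine-microchip",
        keywords=frozenset({"vaccine", "microchip"}),
        correction="Vaccines do not contain tracking microchips.",
        sources=("WHO", "CDC"),
    ),
    KnownClaim(
        claim_id="5g-covid",
        keywords=frozenset({"5g", "covid"}),
        correction="5G networks do not spread COVID-19.",
        sources=("WHO",),
    ),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def match_claim(message: str, db: list[KnownClaim],
                min_overlap: float = 1.0) -> KnownClaim | None:
    """Return the known claim whose keywords best overlap the message.

    min_overlap is the fraction of a claim's keywords that must appear in
    the message; 1.0 means every keyword must be present.
    """
    tokens = tokenize(message)
    best, best_score = None, 0.0
    for claim in db:
        score = len(claim.keywords & tokens) / len(claim.keywords)
        if score >= min_overlap and score > best_score:
            best, best_score = claim, score
    return best


def respond(message: str) -> str:
    """Build a reply: a correction with its sources, or a fallback message."""
    claim = match_claim(message, CLAIM_DB)
    if claim is None:
        return "I couldn't match that to a known claim; a human fact-checker may help."
    return f"{claim.correction} (Sources: {', '.join(claim.sources)})"


if __name__ == "__main__":
    print(respond("Is it true the COVID vaccine has a microchip?"))
```

Keyword overlap is used here only because it is easy to follow. It misses paraphrases and can misfire on messages that debunk a claim rather than assert it, which is one reason production systems lean on NLP models to interpret intent and context.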

One of AI chatbots’ biggest strengths is real-time fact-checking. They can instantly access vast databases of verified information, allowing them to present users with evidence-based responses tailored to the specific misinformation in question. Beyond offering direct corrections, they provide explanations, sources, and follow-up information to help users understand the broader context. These bots operate 24/7 and can handle thousands of interactions simultaneously, offering scalability far beyond what human fact-checkers can provide.

Several case studies show the effectiveness of AI chatbots in combating misinformation. During the COVID-19 pandemic, organizations like the WHO used AI chatbots to address widespread myths about the virus and vaccines. These chatbots provided accurate information, corrected misconceptions, and guided users to additional resources.

AI Chatbots Case Studies from MIT and UNICEF

Research has shown that AI chatbots can significantly reduce belief in conspiracy theories and misinformation. For example, a study by MIT Sloan researchers found that conversations with GPT-4 Turbo can substantially reduce belief in conspiracy theories. The study engaged over 2,000 participants in personalized, evidence-based dialogues with the AI, leading to an average 20% reduction in belief in various conspiracy theories. Remarkably, about one-quarter of participants who initially believed in a conspiracy shifted to uncertainty after their interaction. These effects were durable, lasting for at least two months after the conversation.

Likewise, UNICEF’s U-Report chatbot played an important role in combating misinformation during the COVID-19 pandemic, particularly in regions with limited access to reliable information. The chatbot provided real-time health information to millions of young people across Africa and other areas, directly addressing concerns about COVID-19 and vaccine safety.

The chatbot played a vital role in enhancing trust in verified health sources by allowing users to ask questions and receive credible answers. It was especially effective in communities where misinformation was widespread and literacy levels were low, helping to reduce the spread of false claims. This engagement with young users proved important in promoting accurate information and debunking myths during the health crisis.

Challenges, Limitations, and Future Prospects of AI Chatbots in Tackling Misinformation

Despite their effectiveness, AI chatbots face several challenges. They are only as effective as the data they are trained on, and incomplete or biased datasets can limit their ability to address all forms of misinformation. Additionally, conspiracy theories are constantly evolving, requiring regular updates to the chatbots.

Bias and fairness are also concerns. Chatbots may reflect the biases in their training data, potentially skewing responses. For example, a chatbot trained mainly on Western media might not fully understand non-Western misinformation. Diversifying training data and ongoing monitoring can help ensure balanced responses.

User engagement is another hurdle. It can be difficult to convince individuals who are deeply entrenched in their beliefs to interact with AI chatbots. Transparency about data sources and offering verification options can build trust. Using a non-confrontational, empathetic tone can also make interactions more constructive.

The future of AI chatbots in combating misinformation looks promising. Advancements in AI technology, such as deep learning and AI-driven moderation systems, are likely to enhance chatbots’ capabilities. Moreover, collaboration between AI chatbots and human fact-checkers can provide a robust approach to misinformation.

Beyond health and political misinformation, AI chatbots can promote media literacy and critical thinking in educational settings and serve as automated advisors in workplaces. Policymakers can support the effective and responsible use of AI through regulations encouraging transparency, data privacy, and ethical use.

The Bottom Line

In conclusion, AI chatbots have emerged as powerful tools in fighting misinformation and conspiracy theories. They offer scalable, real-time solutions that surpass the capacity of human fact-checkers. By delivering personalized, evidence-based responses, they help build trust in credible information and promote informed decision-making.

While challenges such as data bias and user engagement persist, advancements in AI and collaboration with human fact-checkers hold promise for an even stronger impact. With responsible deployment, AI chatbots can play a vital role in developing a more informed and truthful society.

#000#2023#Africa#ai#AI chatbots#Algorithms#approach#apps#artificial#Artificial Intelligence#Bias#bots#cdc#change#chatbot#chatbots#Collaboration#Community#conspiracy theory#content#covid#data#data bias#data privacy#Database#databases#datasets#Deep Learning#democratic#deployment

0 notes

Note

Are you familiar with this frog?

Yeah pretty sure that's Larry from down the pub. 'Ullo, Larry!

But in all seriousness, I'm afraid I cannot help without location information. Orientation within Bufonidae without location is a nightmare. If this is Africa, we're talking genus Sclerophrys. If it's the USA, it's probably Anaxyrus. If it's Europe, it's probably Bufo. If it's South America we're in Rhinella territory. And so on, and so forth.

#toad#Bufonidae#probably Anaxyrus based on user bias of the hellsite#but even then getting further to ID is really hard without moderately precise location data#please do not dox yourself for frog ID though#but do share on iNaturalist if you want more reliable and also faster identifications#answers by Mark#broomfrog

171 notes

Text

**I’m aware some of these are vastly different genres and are not necessarily comparable (e.g. some people value drama more than comedy and vice versa), and they all also have very different stakes in terms of the overall story they’re trying to tell, so let that sway you if you want! These are legit just the seasons of shows that have made me go 10/10 no notes 👏 both from a storytelling pov and in terms of my own personal enjoyment

#basically I was going through my skam tag and I started thinking about this#bc skam s3 was for sure the first time I was like ‘ok this is a perfect season’#I don’t know what my fave would be currently#probably the bear or iwtv but that may be recency bias lmao#mine#is throwing 911 in here going to skew the data??#probably asgdjsk

66 notes
