#INFOGRAPHICS

Text

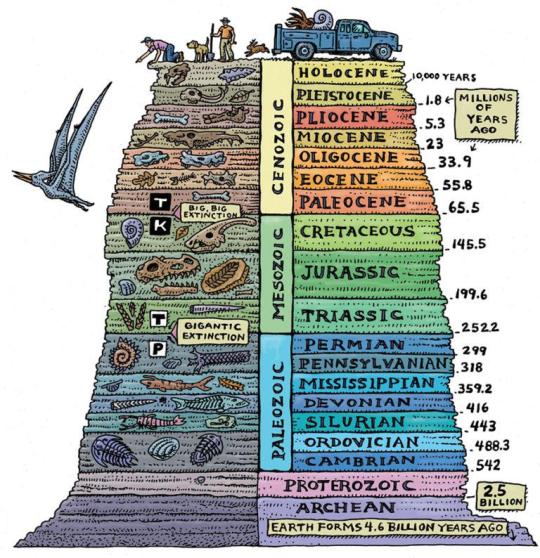

Guide For The Geological Time Periods In Order

#geology#geologists#charts#graphs#infographics#infographic#time periods#educational#earth#science#interesting

10K notes

Photo

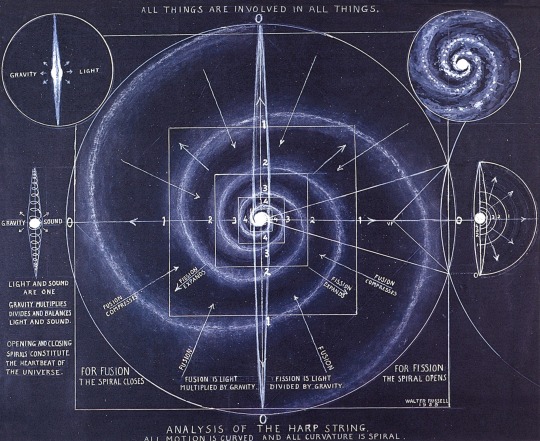

"Motion is Curved and All Curvature is Spiral" - Walter Russell

3K notes

Text

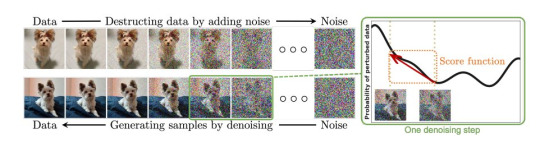

PLEASE JUST LET ME EXPLAIN REDUX

AI {STILL} ISN'T AN AUTOMATIC COLLAGE MACHINE

I'm not judging anyone for thinking so. The reality is difficult to explain and requires a cursory understanding of complex mathematical concepts - but there's still no plagiarism involved. Find the original thread on Twitter here: https://x.com/reachartwork/status/1809333885056217532

A longpost!

This is a reimagining of the legendary "Please Just Let Me Explain Pt 1" - much like Marvel, I can do nothing but regurgitate my own ideas.

You can read that thread, which covers slightly different ground and is much wordier, here: https://x.com/reachartwork/status/1564878372185989120

This longpost will:

- Give you an approximately ELI13-level understanding of how it works
- Provide mostly appropriate side reading for people who want to learn
- Look like a corporate presentation

This longpost won't:

- Debate the ethics of image scraping
- Valorize NFTs or cryptocurrency, which are the devil
- Suck your dick

WHERE DID THIS ALL COME FROM?

The very short, very pithy version of *modern multimodal AI* (that means AI that can turn text into images - multimodal means basically "it can operate on more than one -type- of information") is that we ran an image captioner in reverse.

The process of creating a "model" (the term for the AI's ""brain"", the mathematical representation where the information lives, it's not sentient though!) is necessarily destructive - information about original pictures is not preserved through the training process.

The following is a more in-depth explanation of how exactly the training process works. The entire thing operates off of turning all the images put in it into mush! There's nothing left for it to "memorize". Even if you started with the exact same noise pattern you'd get different results.
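For the curious, here is a toy sketch of the "turning images into mush" step. It assumes a diffusion-style setup, where training images are progressively blended with random noise and the model learns to undo that; the post itself doesn't name the method. The function names `add_noise` and `correlation`, the `keep` parameter, and the sine-wave stand-in for an "image" are all made up for illustration and aren't taken from any real model's code.

```python
import math
import random


def add_noise(pixels, keep, rng):
    """Blend pixel values with Gaussian noise.

    keep=1.0 returns the input unchanged; keep=0.0 returns pure noise.
    (Hypothetical helper for illustration only.)
    """
    signal_weight = math.sqrt(keep)
    noise_weight = math.sqrt(1.0 - keep)
    return [signal_weight * p + noise_weight * rng.gauss(0.0, 1.0) for p in pixels]


def correlation(a, b):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    # A stand-in "image": 2000 values from a sine wave (an assumption, not real data).
    image = [math.sin(i / 10.0) for i in range(2000)]
    for keep in (1.0, 0.5, 0.1, 0.0):
        noisy = add_noise(image, keep, rng)
        print(f"keep={keep:.1f}  correlation with original={correlation(image, noisy):.3f}")
```

As `keep` drops toward zero, the correlation with the original falls toward zero too: by the last step the values are statistically just noise.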

SO IF IT'S NOT MEMORIZING, WHAT IS IT DOING?

Great question! It's constructing something called "latent space", which is an internal representation of every concept you can think of and many you can't, and how they all connect to each other both conceptually and visually.

CAN'T IT ONLY MAKE THINGS IT'S SEEN?

Actually, only being able to make things it's seen is a sign of a really bad AI! The desired end-goal is a model capable of producing "novel information" (novel meaning "new").

Let's talk about monkey butts and cigarettes again.

BUT I SAW IT DUPLICATE THE MONA LISA!

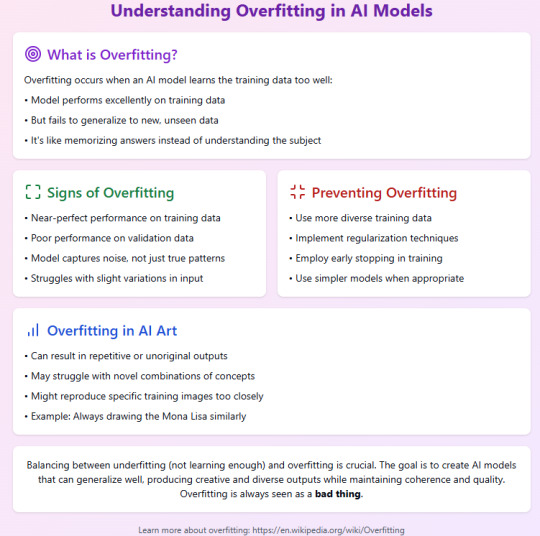

This is called overfitting, and like I said in the last slide, this is a sign of a bad, poorly trained AI, or one with *too little* data. You especially don't want overfitting in a production model!

To quote myself - "basically there are so so so many versions of the mona lisa/starry night/girl with the pearl earring in the dataset that they didn't deduplicate (intentionally or not) that it goes "too far" in that direction when you try to "drive there" in the latent vector and gets stranded."

Anyway, like I said, this is not a technical overview but a primer for people who are concerned about the AI "cutting and pasting bits of other people's artworks". All the information about how it trains is public knowledge, and it definitely Doesn't Do That.

There are probably some minor inaccuracies and oversimplifications in this thread for the purpose of explaining to people with no background in math, coding, or machine learning. But, generally, I've tried to keep it digestible. I'm now going to eat lunch.

Post Script: This is not a discussion about capitalists using AI to steal your job. You won't find me disagreeing that doing so is evil and to be avoided. I think corporate HQs worldwide should spontaneously be filled with dangerous animals.

Cheers!

666 notes

Text

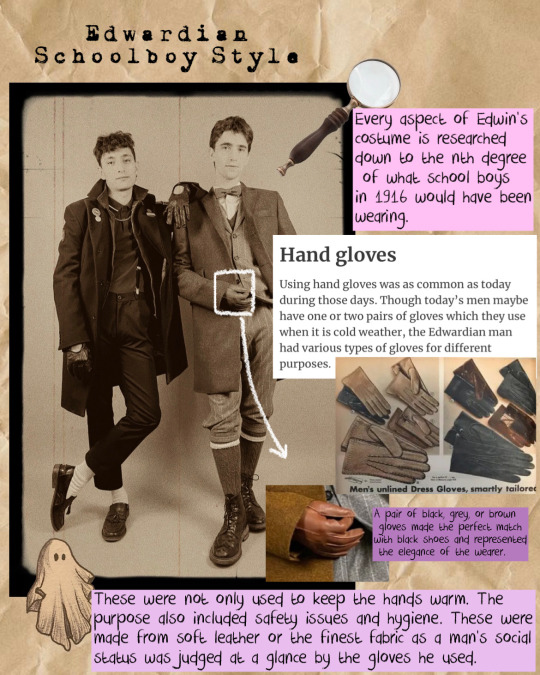

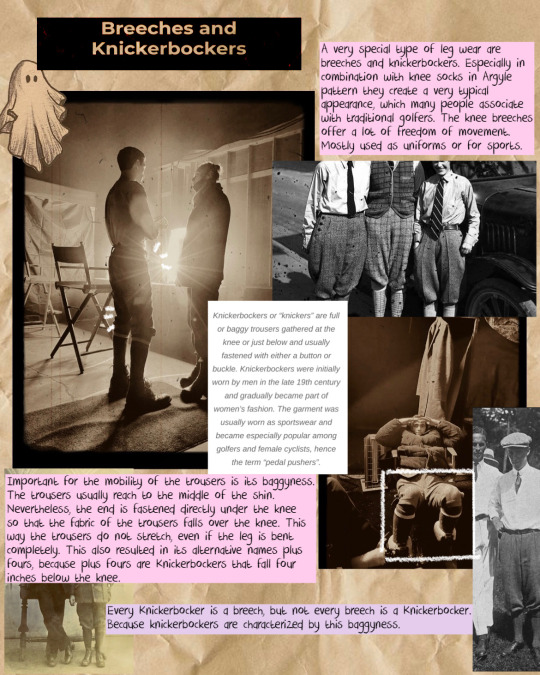

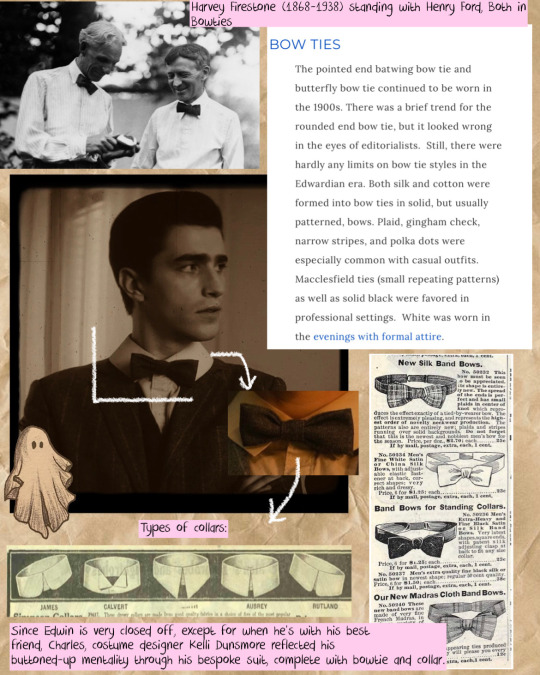

Edwin Payne and his Edwardian clothes:

A breakdown of his outfit, based on interviews and hours of research into Edwardian wardrobes.

#dead boy detective agency#dead boy detectives#edwin payne#george rexstrew#charles rowland#jayden revri#details#costume#edwardian#style#clothes#the sandman#kelli dunsmore#infographics

850 notes

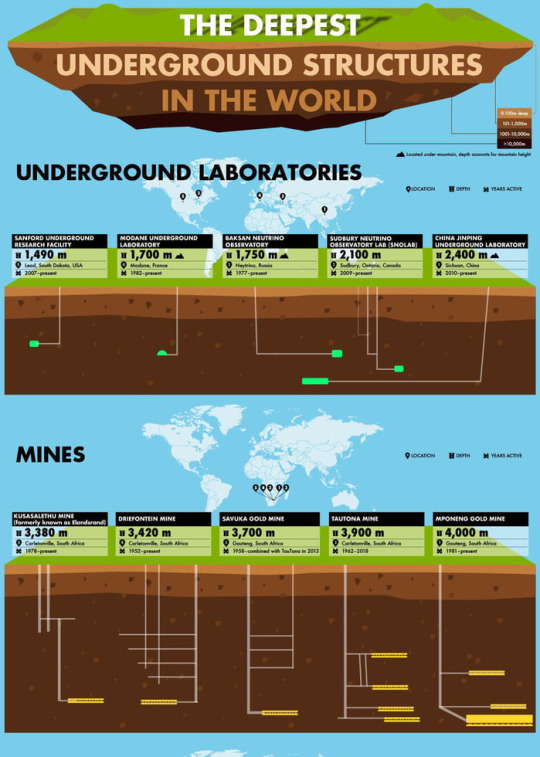

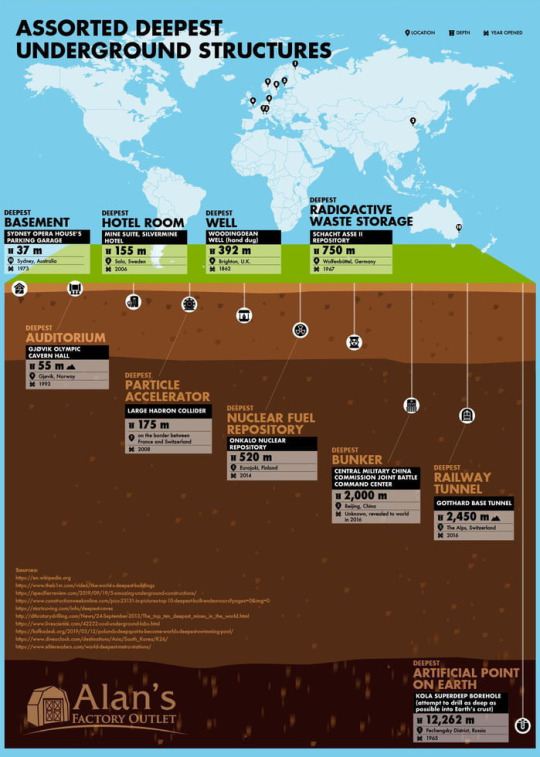

Photo

Mines, caves and subway stations.

2K notes

Text

#Figure 931#The baseball#baseball#noodler#first bass#second bass#third bass#vampire#why am I even here#not wearing a seal costume#left field#has seen doom foretold#diagram#diagrams#infographics#infographic

174 notes

Text

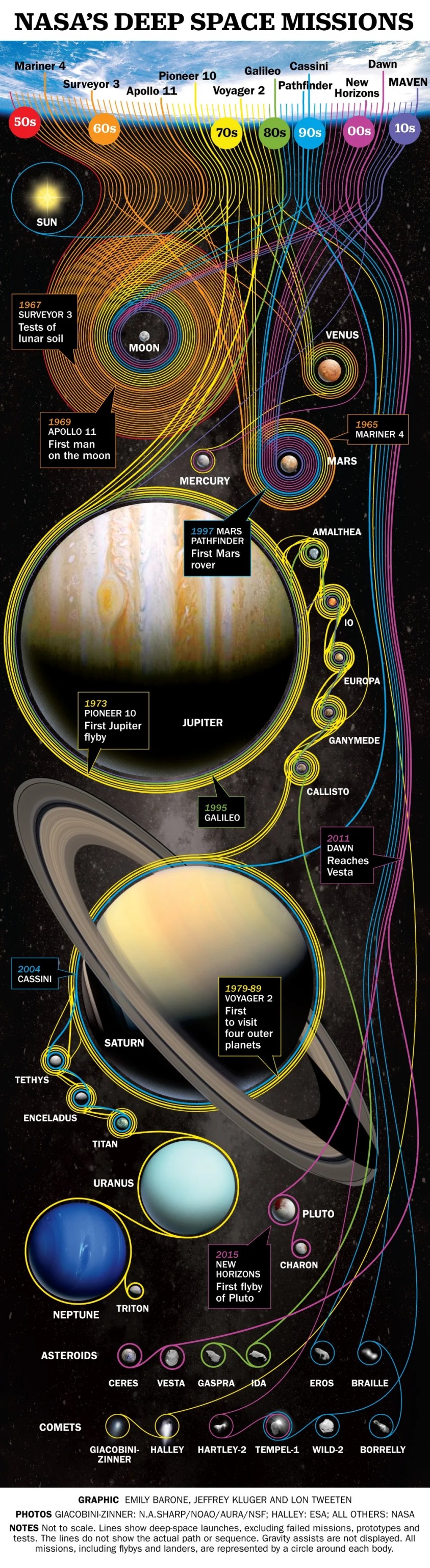

NASA's deep space missions - an infographic made for Time Magazine by Lon Tweeten

2K notes

Text

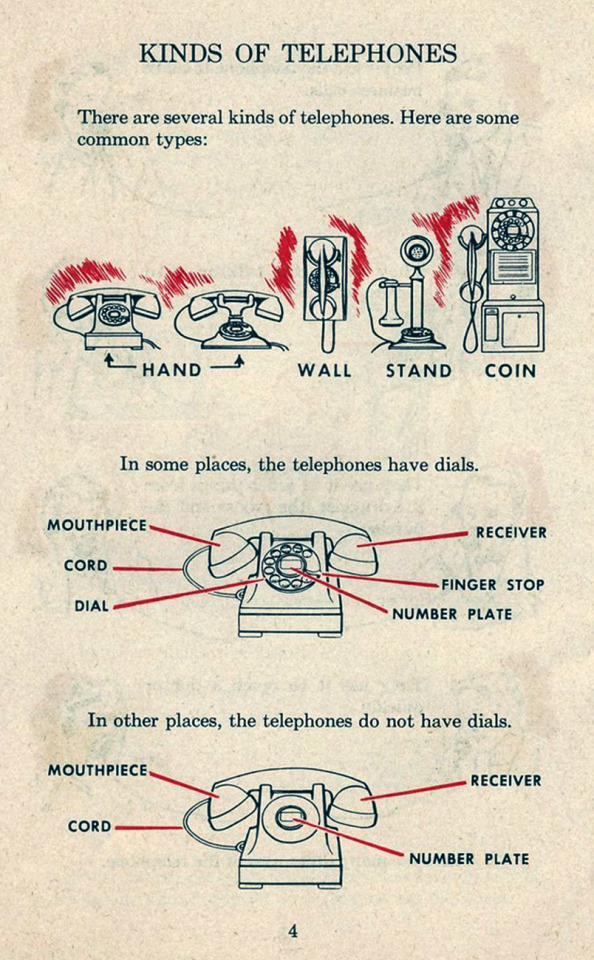

Kinds of telephones.

#vintage illustration#infographics#telephones#vintage telephones#telephone dial#coin operated phones

235 notes

Text

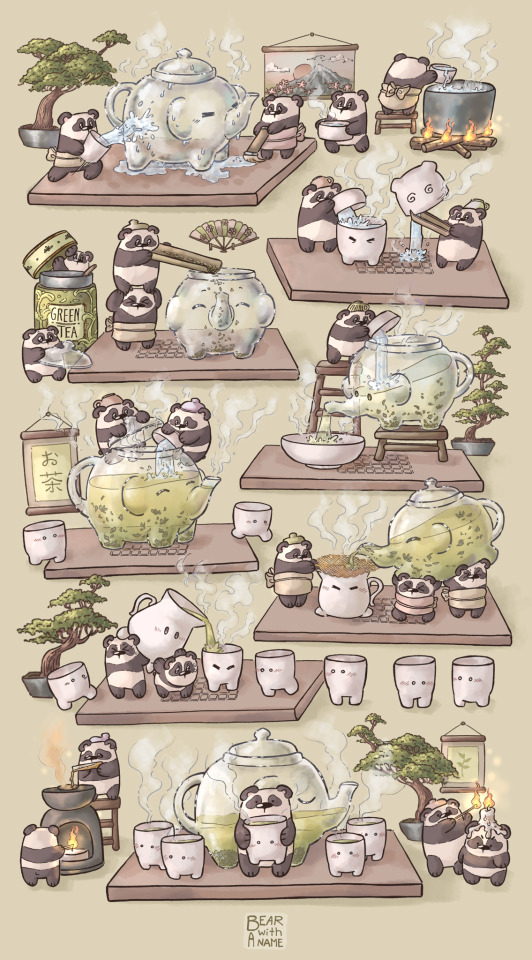

Panda tea ceremony 🍵

Also you can check out this speedpaint ✨

This post was sponsored by my cringe uni professor👍/silly

(more about it under read more if you’re curious)

Okay so I didn’t plan on posting anything till I draw something tnmn related

But I’m finishing up my semester and one of the requirements to pass my digital illustration exam is

“upload your finished assignment to your youtube channel and generate qr code to it 🤓”

This is literally the only way to submit your work 💥💀

So well

since I’m posting the speedpaint might as well share the art 🐼🍵

#bear stuff 🐻❄️#Youtube#this professor is getting on my nerves I swear 💥#(please like this speedpaint so she’s jealous 😈/silly)#also#tell me which tea you is your favourite in the comments 🎤#(or reblogs)#speedpaint#speed paiting#digital art#megadrawing#big drawings#detailed art#tea ceremony#poster art#cute art#pandasblr#pandas#panda#panda art#tea 🍵#infographics#digital artwork#artist of tumblr#speed paint#green vibes#green aesthetic#green tea#artists on tumblr

127 notes

Text

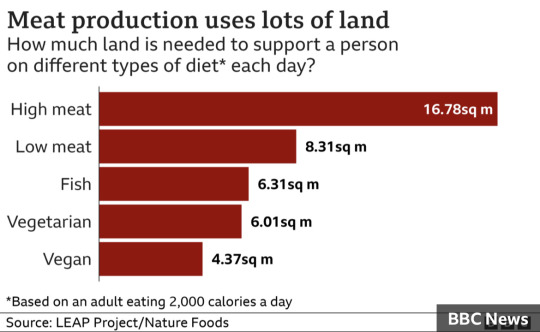

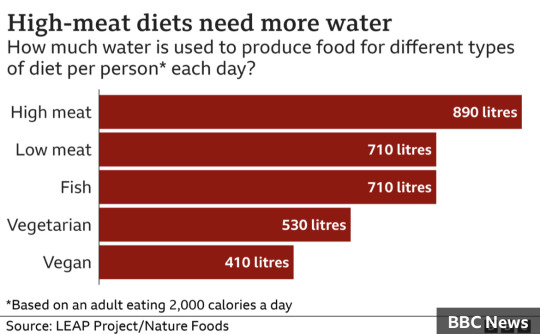

Prof Scarborough surveyed 55,000 people, who were divided into five groups: big meat-eaters, who ate more than 100g of meat a day (equivalent to a big burger); low meat-eaters, whose daily intake was 50g or less (approximately a couple of chipolata sausages); fish-eaters; vegetarians; and vegans.

The analysis is the first to look at the detailed impact of diets on other environmental measures all together. These are land use, water use, water pollution and loss of species, usually caused by loss of habitat because of expansion of farming. In all cases high meat-eaters had a significantly higher adverse impact than other groups.

#vegan#meat#dairy#climate crisis#climate change#environment#studies#infographics#articles#news#to add

538 notes

Photo

Carl Hauser / 139 / Field Recorder (Concept) / 2020

310 notes

Text

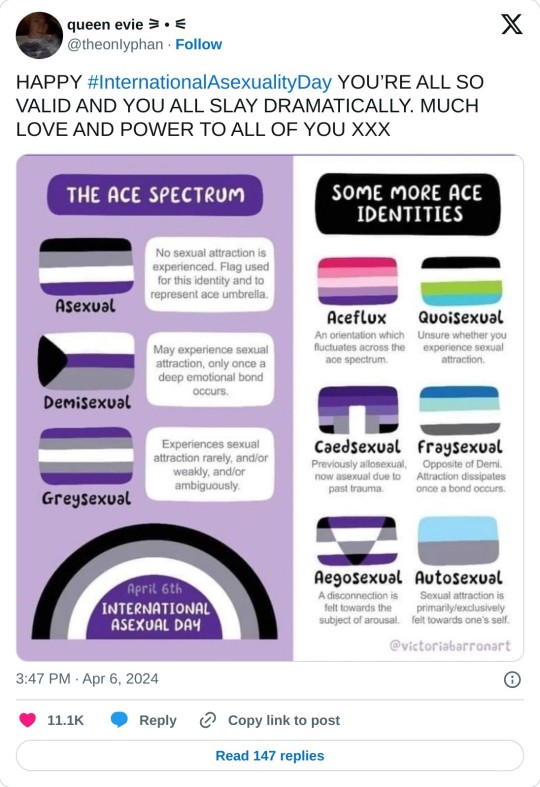

HOW CURRENCIES GOT THEIR NAMES 🤑💰💸

Credit to etymologynerd [Instagram]

#etymology#linguistics#nerd#money#💰#daily#infographic#infographics#currency#economics#history#socioeconomics

83 notes

Photo

The World’s Wettest and Driest Countries

189 notes

Text

Here is another wip for the disability thing

Again, critique is appreciated

#disabled#disability#mobility aid#walkable cities#disability justice#disability rights#comic#infographics#benches

290 notes
