#Linux and Servers operating systems


Tesla Uses Linux Open Source.

Tesla's in-vehicle infotainment system uses a Linux-based operating system, specifically a modified version of Ubuntu Linux. This OS powers the central display, user interface, and various features like the web browser, video games, and streaming audio.

Penguin

#Tesla #Linux


The crash of Windows OS-driven systems around the world reminds me of an incident

The crash of Windows OS-driven systems around the world reminds me of an incident I had in my previous organization. I was the project manager of a small project with about 10 developers. The client was and is one of the biggest companies in India. We were in the last week before the project go-live. All coding was completed and just awaiting client sign-off. It was a Friday. We all went home…

#blogging#crowdstrike crash#linux#ubuntu#visual studio server#windows 2007 server#windows operating systems#writing


Installing Linux Mint On A Server | Gaming In A Shack | Printing Issues

Dream 1: In this dream, I was at a fictional version of the library, in the IT Room, installing Linux on a new server for the first time. Instead of installing a normal server operating system like Ubuntu Server, they had me installing Linux Mint. This dream was inspired by me watching the SomeOrdinaryGamers YouTube video called Delete Windows Today… before I went to…


#Classmate#Gaming#Library#Linux#Linux Mint#Mobile Phone#Operating System#Password#Printer#Server#Shack#Television#Video Game#Video Game Console


Linux or Windows? What OS to choose for your server?

Before purchasing and setting up a server, you need to consider which operating system you want to use with it. An average user probably won’t feel the difference between a Linux and a Windows server OS; however, businesses might have specific technical requirements that could be affected by the choice of an operating system. The choice between Linux and Windows operating systems depends on…


Best Server OS in 2024 in Five Categories


When thinking about the best server operating system in 2024, keep in mind that servers are used for a wide range of use cases. Given that variety, it makes sense to list the best server OS in each category. From virtualization and containers to file sharing and security, this guide explores the best server OS in 2024 across five key categories. We will attempt to provide…


I don't think people realize how absolutely wild Linux is.

Here we have an Operating system that now has 100 different varieties, all of them with their own little features and markets that are also so customizable that you can literally choose what desktop environment you want. Alongside that it is the OS of choice for Supercomputers, most Web servers, and even tiny little toy computers that hackers and gadget makers use. It is the Operating System running on most of the world's smartphones. That's right. Android is a version of Linux.

It can run on literally anything up to and including a potato, and as of now desktop Linux Distros like Ubuntu and Mint are so easy to use and user friendly that technological novices can use them. This Operating system has had App stores since the 90s.

Oh, and what's more, this operating system was fuckin' built by volunteers and users alongside businesses and universities because they needed an all purpose operating system so they built one themselves and released it for free. If you know how to, you can add to this.

Oh, and its founder wasn't some corporate hotshot. It's an introverted Swedish-speaking Finn who, while he was a student, started making his own Operating system after playing around with someone else's OS. He was going to call it Freax but the guy he got server space from named the folder of his project "Linux" (Linus Unix) and the name stuck. He operates this project from his Home office which is painted in a colour used in asylums. Man's so fucking introverted he developed Git, the world's most widely used version control system, so he didn't have to deal with drama and email.

Steam adopted it, meaning a LOT of games now natively run in Linux and what cannot be run natively can be adapted to run. It's now the OS used on their consoles (Steam Deck) and on top of this, a lot of people have found games run better on Linux than on Windows. More computers run Steam on Linux than on macOS.

On top of that the Arctic World Archive (basically the Svalbard Seed bank, but for Data) have this OS saved in their databanks so if the world ends the survivors are going to be using it.

On top of this? It's Free! No "Freemium" bullshit, no "pay to unlock" shit, no licenses, no tracking or data harvesting. If you have an old laptop that still works and a 16GB USB drive, you can go get it and install it and have a functioning computer because it uses less fucking resources than Windows. Got a shit PC? Linux Mint XFCE or Xubuntu is lightweight af. This shit is stopping eWaste.

What's more, it doesn't even scrimp on style. KDE, XFCE, Gnome, Cinnamon, all look pretty and are functional and there's even a load of people who try to make their installs look pretty AF as a hobby called "ricing" with a subreddit (/r/unixporn) dedicated to it.

Linux is fucking wild.


!Important Warning!

These Days some Mods containing Malware have been uploaded on various Sites.

The Sims After Dark Discord Server has posted the following Info regarding the Issue:

+++

Malware Update: What We Know Now To recap, here are the mods we know for sure were affected by the recent malware outbreak: "Cult Mod v2" uploaded to ModTheSims by PimpMySims (impostor account) "Social Events - Unlimited Time" uploaded to CurseForge by MySims4 (single-use account) "Weather and Forecast Cheat Menu" uploaded to The Sims Resource by MSQSIMS (hacked, real account) "Seasons Cheats Menu" uploaded to The Sims Resource by MSQSIMS (hacked, real account)

Due to this malware using an exe file, we believe that anyone using a Mac or Linux device is completely unaffected by this.

If the exe file was downloaded and executed on your Windows device, it has likely stolen a vast amount of your data and saved passwords from your operating system, your internet browser (Chrome, Edge, Opera, Firefox, and more all affected), Discord, Steam, Telegram, and certain crypto wallets. Thank you to anadius for decompiling the exe.

To quickly check if you have been compromised, press Windows + R on your keyboard to open the Run window. Enter %AppData%/Microsoft/Internet Explorer/UserData in the prompt and hit OK. This will open up the folder the malware was using. If there is a file in this folder called Updater.exe, you have unfortunately fallen victim to the malware. We are unaware at this time if the malware has any function which would delete the file at a later time to cover its tracks.

To quickly remove the malware from your computer, Overwolf has put together a cleaner program to deal with it. This program should work even if you downloaded the malware outside of CurseForge. Download SimsVirusCleaner.exe from their github page linked here and run it. Once it has finished, it will give you an output about whether any files have been removed.

+++

For more Information please check the Sims After Dark Server News Channel! Or here https://scarletsrealm.com/malware-mod-information/

TwistedMexi made a Mod to help detect & block such Mods in the Future: https://www.patreon.com/posts/98126153

CurseForge took action and added mechanics to prevent such Files from being uploaded, so downloading there should be safe.

In general be careful, where and what you download, and do not download my Mods at any other Places than my own Sites and my CurseForge Page.


How I ditched streaming services and learned to love Linux: A step-by-step guide to building your very own personal media streaming server (V2.0: REVISED AND EXPANDED EDITION)

This is a revised, corrected and expanded version of my tutorial on setting up a personal media server that previously appeared on my old blog (donjuan-auxenfers). I expect that that post is still making the rounds (hopefully with my addendum on modifying group share permissions in Ubuntu to circumvent 0x8007003B "Unexpected Network Error" messages in Windows 10/11 when transferring files) but I have no way of checking. Anyway this new revised version of the tutorial corrects one or two small errors I discovered when rereading what I wrote, adds links to all products mentioned and is just more polished generally. I also expanded it a bit, pointing more adventurous users toward programs such as Sonarr/Radarr/Lidarr and Overseerr which can be used for automating user requests and media collection.

So then, what is this tutorial? This is a tutorial on how to build and set up your own personal media server using Ubuntu as an operating system and Plex (or Jellyfin) to not only manage your media, but to also stream that media to your devices both at home and abroad anywhere in the world where you have an internet connection. Its intent is to show you how building a personal media server and stuffing it full of films, TV, and music that you acquired through ~~indiscriminate and voracious media piracy~~ various legal methods will free you to completely ditch paid streaming services. No more will you have to pay for Disney+, Netflix, HBOMAX, Hulu, Amazon Prime, Peacock, CBS All Access, Paramount+, Crave or any other streaming service that is not named Criterion Channel. Instead whenever you want to watch your favourite films and television shows, you’ll have your own personal service that only features things that you want to see, with files that you have control over. And for music fans out there, both Jellyfin and Plex support music streaming, meaning you can even ditch music streaming services. Goodbye Spotify, Youtube Music, Tidal and Apple Music, welcome back unreasonably large MP3 (or FLAC) collections.

On the hardware front, I’m going to offer a few options catered towards different budgets and media library sizes. Getting a media server up and running using this guide will cost you anywhere from $450 CAD/$325 USD at the low end to $1500 CAD/$1100 USD at the high end (it could go higher). My server was priced closer to the higher figure, but I went and got a lot more storage than most people need. If that seems like a little much, consider for a moment, do you have a roommate, a close friend, or a family member who would be willing to chip in a few bucks towards your little project provided they get access? Well that's how I funded my server. It might also be worth thinking about the cost over time, i.e. how much you spend yearly on subscriptions vs. a one time cost of setting up a server. Additionally there's just the joy of being able to scream "fuck you" at all those show cancelling, library deleting, hedge fund vampire CEOs who run the studios through denying them your money. Drive a stake through David Zaslav's heart.

On the software side I will walk you step-by-step through installing Ubuntu as your server's operating system, configuring your storage as a RAIDz array with ZFS, sharing your zpool to Windows with Samba, running a remote connection between your server and your Windows PC, and then a little about getting started with Plex/Jellyfin. Every terminal command you will need to input will be provided, and I even share a custom #bash script that will make used vs. available drive space on your server display correctly in Windows.

If you have a different preferred flavour of Linux (Arch, Manjaro, Redhat, Fedora, Mint, OpenSUSE, CentOS, Slackware etc. et. al.) and are aching to tell me off for being basic and using Ubuntu, this tutorial is not for you. The sort of person with a preferred Linux distro is the sort of person who can do this sort of thing in their sleep. Also I don't care. This tutorial is intended for the average home computer user. This is also why we’re not using a more exotic home server solution like running everything through Docker Containers and managing it through a dashboard like Homarr or Heimdall. While such solutions are fantastic and can be very easy to maintain once you have it all set up, wrapping your brain around Docker is a whole thing in and of itself. If you do follow this tutorial and had fun putting everything together, then I would encourage you to return in a year’s time, do your research and set up everything with Docker Containers.

Lastly, this is a tutorial aimed at Windows users. Although I was a daily user of OS X for many years (roughly 2008-2023) and I've dabbled quite a bit with various Linux distributions (mostly Ubuntu and Manjaro), my primary OS these days is Windows 11. Many things in this tutorial will still be applicable to Mac users, but others (e.g. setting up shares) you will have to look up for yourself. I doubt it would be difficult to do so.

Nothing in this tutorial will require feats of computing expertise. All you will need is a basic computer literacy (i.e. an understanding of what a filesystem and directory are, and a degree of comfort in the settings menu) and a willingness to learn a thing or two. While this guide may look overwhelming at first glance, it is only because I want to be as thorough as possible. I want you to understand exactly what it is you're doing, I don't want you to just blindly follow steps. If you half-way know what you’re doing, you will be much better prepared if you ever need to troubleshoot.

Honestly, once you have all the hardware ready it shouldn't take more than an afternoon or two to get everything up and running.

(This tutorial is just shy of seven thousand words long so the rest is under the cut.)

Step One: Choosing Your Hardware

Linux is a lightweight operating system; depending on the distribution there's close to no bloat. There are recent distributions available at this very moment that will run perfectly fine on a fourteen year old i3 with 4GB of RAM. Moreover, running Plex or Jellyfin isn’t resource intensive in 90% of use cases. All this is to say, we don’t require an expensive or powerful computer. This means that there are several options available: 1) use an old computer you already have sitting around but aren't using, 2) buy a used workstation from eBay, or, what I believe to be the best option, 3) order an N100 Mini-PC from AliExpress or Amazon.

Note: If you already have an old PC sitting around that you’ve decided to use, fantastic, move on to the next step.

When weighing your options, keep a few things in mind: the number of people you expect to be streaming simultaneously at any one time, the resolution and bitrate of your media library (4k video takes a lot more processing power than 1080p) and most importantly, how many of those clients are going to be transcoding at any one time. Transcoding is what happens when the playback device does not natively support direct playback of the source file. This can happen for a number of reasons, such as the playback device's native resolution being lower than the file's internal resolution, or because the source file was encoded in a video codec unsupported by the playback device.

Ideally we want any transcoding to be performed by hardware. This means we should be looking for a computer with an Intel processor with Quick Sync. Quick Sync is a dedicated core on the CPU die designed specifically for video encoding and decoding. This specialized hardware makes for highly efficient transcoding both in terms of processing overhead and power draw. Without these Quick Sync cores, transcoding must be brute forced through software. This takes up much more of a CPU’s processing power and requires much more energy. But not all Quick Sync cores are created equal and you need to keep this in mind if you've decided either to use an old computer or to shop for a used workstation on eBay.

Any Intel processor from second generation Core (Sandy Bridge circa 2011) onward has Quick Sync cores. It's not until 6th gen (Skylake), however, that the cores support the H.265 HEVC codec. Intel’s 10th gen (Comet Lake) processors introduce support for 10bit HEVC and HDR tone mapping. And the recent 12th gen (Alder Lake) processors brought with them hardware AV1 decoding. As an example, while an 8th gen (Coffee Lake) i5-8500 will be able to hardware transcode a H.265 encoded file, it will fall back to software transcoding if given a 10bit H.265 file. If you’ve decided to use that old PC or to look on eBay for an old Dell Optiplex keep this in mind.
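If you're not sure which generation a given chip belongs to, the model number usually tells you. Here's a minimal sketch of a shell helper that reads it off a model string. The name core_generation is my own invention, and it assumes desktop-style Core model numbers like i5-8500 or i7-10700K. Mobile parts (e.g. i7-1165G7) and non-Core chips like the N100 follow other naming schemes and are not handled.

```shell
# Hypothetical helper: guess the Intel Core generation from a model string.
# Assumes desktop-style model numbers such as i5-8500 or i7-10700K.
core_generation() {
    # Extract the 4 or 5 digit model number that follows "i3-", "i5-", etc.
    digits=$(printf '%s\n' "$1" | sed -n 's/.*i[3579]-\([0-9]\{4,5\}\).*/\1/p')
    # No match means this isn't a model string we know how to read.
    [ -n "$digits" ] || return 1
    # Dropping the last three digits leaves the generation number.
    printf '%s\n' "${digits%???}"
}
```

On a machine that's already running Linux you could feed it the CPU name directly with core_generation "$(grep -m1 'model name' /proc/cpuinfo)" and then compare the result against the generations listed above.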

Note 1: The price of old workstations varies wildly and fluctuates frequently. If you get lucky and go shopping shortly after a workplace has liquidated a large number of their workstations you can find deals for as low as $100 on a barebones system, but generally an i5-8500 workstation with 16gb RAM will cost you somewhere in the area of $260 CAD/$200 USD.

Note 2: The AMD equivalent to Quick Sync is called Video Core Next, and while it's fine, it's not as efficient and not as mature a technology. It was only introduced with the first generation Ryzen CPUs and it only got decent with their newest CPUs, and we want something cheap.

Alternatively you could forgo having to keep track of what generation of CPU is equipped with Quick Sync cores that feature support for which codecs, and just buy an N100 mini-PC. For around the same price as a used workstation, or less, you can pick up a mini-PC with an Intel N100 processor. The N100 is a four-core processor based on the 12th gen Alder Lake architecture and comes equipped with the latest revision of the Quick Sync cores. These little processors offer astounding hardware transcoding capabilities for their size and power draw. Otherwise they perform equivalent to an i5-6500, which isn't a terrible CPU. A friend of mine uses an N100 machine as a dedicated retro emulation gaming system and it does everything up to 6th generation consoles just fine. The N100 is also a remarkably efficient chip, it sips power. In fact, the difference between running one of these and an old workstation could work out to hundreds of dollars a year in energy bills depending on where you live.
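If you want to put a number on the energy question for your own situation, the arithmetic is simple: watts × 24 hours × 365 days ÷ 1000 gives kWh per year, which you multiply by your electricity rate. A rough sketch follows. The function name annual_energy_cost is hypothetical, and the wattage and price you feed it are your own estimates, not figures from this guide.

```shell
# Hypothetical helper: yearly electricity cost of a machine running 24/7.
# $1 = average power draw in watts, $2 = price per kWh in your currency.
annual_energy_cost() {
    awk -v watts="$1" -v price="$2" \
        'BEGIN { printf "%.2f\n", watts * 24 * 365 / 1000 * price }'
}
```

Run it once for each machine you're considering (for example annual_energy_cost 60 0.15) and subtract one result from the other to see the yearly difference.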

You can find these Mini-PCs all over Amazon or for a little cheaper on AliExpress. They range in price from $170 CAD/$125 USD for a no name N100 with 8GB RAM to $280 CAD/$200 USD for a Beelink S12 Pro with 16GB RAM. The brand doesn't really matter, they're all coming from the same three factories in Shenzhen, go for whichever one fits your budget or has features you want. 8GB RAM should be enough, Linux is lightweight and Plex only calls for 2GB RAM. 16GB RAM might result in a slightly snappier experience, especially with ZFS. A 256GB SSD is more than enough for what we need as a boot drive, but going for a bigger drive might allow you to get away with things like creating preview thumbnails for Plex, but it’s up to you and your budget.

The Mini-PC I wound up buying was a Firebat AK2 Plus with 8GB RAM and a 256GB SSD. It looks like this:

Note: If you decide to order a Mini-PC from AliExpress, check the type of power adapter it ships with. The mini-PC I bought came with an EU power adapter and I had to supply my own North American power supply. Thankfully this is a minor issue as barrel plug 30W/12V/2.5A power adapters are easy to find and can be had for $10.

Step Two: Choosing Your Storage

Storage is the most important part of our build. It is also the most expensive. Thankfully it’s also the most easily upgrade-able down the line.

For people with a smaller media collection (4TB to 8TB), a more limited budget, or who will only ever have two simultaneous streams running, I would say that the most economical course of action would be to buy a USB 3.0 8TB external HDD. Something like this one from Western Digital or this one from Seagate. One of these external drives will cost you in the area of $200 CAD/$140 USD. Down the line you could add a second external drive or replace it with a multi-drive RAIDz set up such as detailed below.

If a single external drive is the path for you, move on to step three.

For people with larger media libraries (12TB+), who prefer media in 4k, or who care about data redundancy, the answer is a RAID array featuring multiple HDDs in an enclosure.

Note: If you are using an old PC or used workstation as your server and have the room for at least three 3.5" drives, and as many open SATA ports on your motherboard, you won't need an enclosure, just install the drives into the case. If your old computer is a laptop or doesn’t have room for more internal drives, then I would suggest an enclosure.

The minimum number of drives needed to run a RAIDz array is three, and seeing as RAIDz is what we will be using, you should be looking for an enclosure with three to five bays. I think that four disks makes for a good compromise for a home server. Regardless of whether you go for a three, four, or five bay enclosure, do be aware that in a RAIDz1 array the space equivalent of one of the drives will be dedicated to parity, leaving a usable fraction of 1 − 1/n, where n is the number of drives. For example, in a four bay enclosure equipped with four 12TB drives configured as a RAIDz1 array, we would be left with a total of 36TB of usable space (48TB raw size). The reason why we might sacrifice storage space in such a manner will be explained in the next section.
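To play with the numbers for your own enclosure, the capacity math above can be sketched as a tiny shell function. The name raidz_usable_tb is made up for illustration, it assumes all drives are the same size, and it ignores ZFS's own metadata and padding overhead, so treat the result as an upper bound rather than what you'll actually see on disk.

```shell
# Hypothetical helper: approximate usable space of a RAIDz vdev, in TB.
# $1 = number of drives, $2 = size of each drive in TB,
# $3 = number of drives' worth of parity (1 for RAIDz1, 2 for RAIDz2).
raidz_usable_tb() {
    echo $(( ($1 - $3) * $2 ))
}
```

For the four-bay example, raidz_usable_tb 4 12 1 works through the same (4 − 1) × 12 calculation as the paragraph above.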

A four bay enclosure will cost somewhere in the area of $200 CAD/$140 USD. You don't need anything fancy, we don't need anything with hardware RAID controls (RAIDz is done entirely in software) or even USB-C. An enclosure with USB 3.0 will perform perfectly fine. Don’t worry too much about USB speed bottlenecks. A mechanical HDD will be limited by the speed of its mechanism long before it will be limited by the speed of a USB connection. I've seen decent looking enclosures from TerraMaster, Yottamaster, Mediasonic and Sabrent.

When it comes to selecting the drives, as of this writing, the best value (dollar per gigabyte) are those in the range of 12TB to 20TB. I settled on 12TB drives myself. If 12TB to 20TB drives are out of your budget, go with what you can afford, or look into refurbished drives. I'm not sold on the idea of refurbished drives but many people swear by them.

When shopping for hard drives, search for drives designed specifically for NAS use. Drives designed for NAS use typically have better vibration dampening and are designed to be active 24/7. They will also often make use of CMR (conventional magnetic recording) as opposed to SMR (shingled magnetic recording). This nets them a sizable read/write performance bump over typical desktop drives. Seagate IronWolf and Toshiba NAS are both well regarded brands when it comes to NAS drives. I would avoid Western Digital Red drives at this time. WD Reds were a go to recommendation up until earlier this year when it was revealed that they feature firmware that will throw up false SMART warnings telling you to replace the drive at the three year mark, quite often when there is nothing at all wrong with that drive. It will likely even be good for another six, seven, or more years.

Step Three: Installing Linux

For this step you will need a USB thumbdrive of at least 6GB in capacity, an .ISO of Ubuntu, and a way to make that thumbdrive bootable media.

First download a copy of Ubuntu desktop. (For best performance we could download the Server release, but for new Linux users I would recommend against it. The server release is strictly command line interface only, and having a GUI is very helpful for most people. Not many people are wholly comfortable doing everything through the command line, I'm certainly not one of them, and I grew up with DOS 6.0.) 22.04.3 Jammy Jellyfish is the current Long Term Support release, this is the one to get.

Download the .ISO and then download and install balenaEtcher on your Windows PC. BalenaEtcher is an easy to use program for creating bootable media, you simply insert your thumbdrive, select the .ISO you just downloaded, and it will create a bootable installation media for you.

Once you've made a bootable media and you've got your Mini-PC (or your old PC/used workstation) in front of you, hook it directly into your router with an ethernet cable, and then plug in the HDD enclosure, a monitor, a mouse and a keyboard. Now turn that sucker on and hit whatever key gets you into the BIOS (typically ESC, DEL or F2). If you’re using a Mini-PC check to make sure that the PL1 and PL2 power limits are set correctly, my N100's PL1 limit was set at 10W, a full 20W under the chip's power limit. Also make sure that the RAM is running at the advertised speed. My Mini-PC’s RAM was set at 2333MHz out of the box when it should have been 3200MHz. Once you’ve done that, key over to the boot order and place the USB drive first in the boot order. Then save the BIOS settings and restart.

After you restart you’ll be greeted by Ubuntu's installation screen. Installing Ubuntu is really straightforward. Select the "minimal" installation option, as we won't need anything on this computer except for a browser (Ubuntu comes preinstalled with Firefox) and Plex Media Server/Jellyfin Media Server. Also remember to delete and reformat that Windows partition! We don't need it.

Step Four: Installing ZFS and Setting Up the RAIDz Array

Note: If you opted for just a single external HDD skip this step and move onto setting up a Samba share.

Once Ubuntu is installed it's time to configure our storage by installing ZFS to build our RAIDz array. ZFS is a "next-gen" file system that is both massively flexible and massively complex. It's capable of snapshot backups and self-healing error correction, and ZFS pools can be configured with drives operating in a supplemental manner alongside the storage vdev (e.g. fast cache, dedicated secondary intent log, hot swap spares etc.). It's also a file system very amenable to fine tuning. Block and sector size are adjustable to use case and you're afforded the option of different methods of inline compression. If you'd like a very detailed overview and explanation of its various features and tips on tuning a ZFS array check out these articles from Ars Technica. For now we're going to ignore all these features and keep it simple: we're going to pull our drives together into a single vdev running in RAIDz which will be the entirety of our zpool, no fancy cache drive or SLOG.

Open up the terminal and type the following commands:

sudo apt update

then

sudo apt install zfsutils-linux

This will install the ZFS utility. Verify that it's installed with the following command:

zfs --version

Now, it's time to check that the HDDs we have in the enclosure are healthy, running, and recognized. We also want to find out their device IDs and take note of them:

sudo fdisk -l

Note: You might be wondering why some of these commands require "sudo" in front of them while others don't. "Sudo" is short for "super user do". When and where "sudo" is used has to do with the way permissions are set up in Linux. Only the "root" user has the access level to perform certain tasks in Linux. As a matter of security and safety regular user accounts are kept separate from the "root" user. It's not advised (or even possible) to boot into Linux as "root" with most modern distributions. Instead by using "sudo" our regular user account is temporarily given the power to do otherwise forbidden things. Don't worry about it too much at this stage, but if you want to know more check out this introduction.

If everything is working you should get a list of the various drives detected along with their device IDs which will look like this: /dev/sdc. You can also check the device IDs of the drives by opening the disk utility app. Jot these IDs down as we'll need them for our next step, creating our RAIDz array.

RAIDz is similar to RAID-5 in that instead of striping your data over multiple disks, exchanging redundancy for speed and available space (RAID-0), or mirroring your data by writing two copies of every piece (RAID-1), it instead writes parity blocks across the disks in addition to striping. This provides a balance of speed, redundancy and available space. If a single drive fails, the parity blocks on the working drives can be used to reconstruct the entire array as soon as a replacement drive is added.

Additionally, RAIDz improves over some of the common RAID-5 flaws. It's more resilient and capable of self healing, as it is capable of automatically checking for errors against a checksum. It's more forgiving in this way, and it's likely that you'll be able to detect when a drive is dying well before it fails. A RAIDz array can survive the loss of any one drive.

Note: While RAIDz is indeed resilient, if a second drive fails during the rebuild, you're fucked. Always keep backups of things you can't afford to lose. This tutorial, however, is not about proper data safety.

To create the pool, use the following command:

sudo zpool create "zpoolnamehere" raidz "device IDs of drives we're putting in the pool"

For example, let's creatively name our zpool "mypool". This pool will consist of four drives which have the device IDs: sdb, sdc, sdd, and sde. The resulting command will look like this:

sudo zpool create mypool raidz /dev/sdb /dev/sdc /dev/sdd /dev/sde

If, as an example, you bought five HDDs and decided you wanted more redundancy by dedicating two drives to this purpose, we would modify the command to "raidz2" and the command would look something like the following:

sudo zpool create mypool raidz2 /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf

An array configured like this is known as RAIDz2 and is able to survive two disk failures.
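Since zpool create is not a command you want to fat-finger, it can help to build the command as text first and eyeball it before running anything. Below is a minimal dry-run sketch. build_zpool_cmd is a hypothetical helper of my own, it only prints the command and never executes it, and the minimum of four drives for raidz2 is my assumption (the three-drive minimum for raidz is the one used in this guide).

```shell
# Hypothetical dry-run helper: prints a zpool create command, runs nothing.
# $1 = pool name, $2 = raidz or raidz2, remaining args = device names (sdb ...).
build_zpool_cmd() {
    pool=$1
    level=$2
    shift 2
    # Minimum drive counts: 3 for raidz per this guide, 4 for raidz2 is assumed.
    case $level in
        raidz)  min=3 ;;
        raidz2) min=4 ;;
        *) echo "unknown level: $level" >&2; return 1 ;;
    esac
    if [ "$#" -lt "$min" ]; then
        echo "$level needs at least $min drives, got $#" >&2
        return 1
    fi
    # Append each device as /dev/<name> to the command line.
    cmd="sudo zpool create $pool $level"
    for dev in "$@"; do
        cmd="$cmd /dev/$dev"
    done
    echo "$cmd"
}
```

Calling build_zpool_cmd mypool raidz sdb sdc sdd sde prints the same four-drive command shown earlier, which you can then check against your jotted-down device IDs and paste into the terminal once you're happy with it.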

Once the zpool has been created, we can check its status with the command:

zpool status

Or more concisely with:

zpool list

The nice thing about ZFS as a file system is that a pool is ready to go immediately after creation. If we were to set up a traditional RAID-5 array using mdadm, we'd have to sit through a potentially hours long process of reformatting and partitioning the drives. Instead we're ready to go right out the gates.

The zpool should be automatically mounted to the filesystem after creation, check on that with the following:

df -hT | grep zfs

Note: If your computer ever loses power suddenly, say in event of a power outage, you may have to re-import your pool. In most cases, ZFS will automatically import and mount your pool, but if it doesn’t and you can't see your array, simply open the terminal and type sudo zpool import -a.

By default a zpool is mounted at /"zpoolname". The pool should be under our ownership but let's make sure with the following command:

sudo chown -R "yourlinuxusername" /"zpoolname"

Note: Changing file and folder ownership with "chown" and file and folder permissions with "chmod" are essential commands for much of the admin work in Linux, but we won't be dealing with them extensively in this guide. If you'd like a deeper tutorial and explanation you can check out these two guides: chown and chmod.
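If you'd like to see whether the chown was even necessary, you can check ownership first. A small sketch, with check_owned being a hypothetical helper name: it uses the shell's -O test, which is true when the path exists and is owned by the user running the command. Note that it only looks at the folder itself, not at everything inside it.

```shell
# Hypothetical helper: report whether the current user owns a directory.
# Only checks the directory itself, not the files and folders inside it.
check_owned() {
    if [ -O "$1" ]; then
        echo "owned"
    else
        echo "not owned"
    fi
}
```

With a pool named "mypool" you would run check_owned /mypool, and if it reports "not owned" the chown command above is what fixes it.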

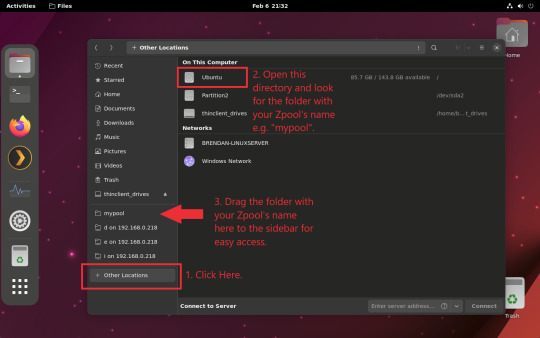

You can access the zpool file system through the GUI by opening the file manager (the Ubuntu default file manager is called Nautilus) and clicking on "Other Locations" on the sidebar, then entering the Ubuntu file system and looking for a folder with your pool's name. Bookmark the folder on the sidebar for easy access.

Your storage pool is now ready to go. Assuming that we already have some files on our Windows PC we want to copy over, we're going to need to install and configure Samba to make the pool accessible in Windows.

Step Five: Setting Up Samba/Sharing

Samba is what's going to let us share the zpool with Windows and allow us to write to it from our Windows machine. First let's install Samba with the following commands:

sudo apt-get update

then

sudo apt-get install samba

Next create a password for Samba.

sudo smbpasswd -a "yourlinuxusername"

It will then prompt you to create a password. Just reuse your Ubuntu user password for simplicity's sake.

Note: if you're using just a single external drive, replace the zpool location in the following commands with wherever your external drive is mounted. For more information see this guide on mounting an external drive in Ubuntu.

After you've created a password we're going to create a shareable folder in our pool with this command:

mkdir /"zpoolname"/"foldername"

Now we're going to open the smb.conf file and make that folder shareable. Enter the following command.

sudo nano /etc/samba/smb.conf

This will open the .conf file in nano, the terminal text editor program. Now at the end of smb.conf add the following entry:

["foldername"]

path = /"zpoolname"/"foldername"

available = yes

valid users = "yourlinuxusername"

read only = no

writable = yes

browseable = yes

guest ok = no

Ensure that there are no line breaks between the lines and that there's a space on both sides of the equals sign. Our next step is to allow Samba traffic through the firewall:

sudo ufw allow samba

Finally restart the Samba service:

sudo systemctl restart smbd
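For reference, the share entry added to smb.conf in this step can also be produced by a small script, which avoids typos when you set up more than one share. This is only a sketch. The function name `samba_share_stanza` and the example names tank, media and alice are placeholders, not anything Samba itself provides.

```shell
#!/bin/sh
# Prints a Samba share stanza for one folder inside a pool.
# Arguments: pool name, folder name, Linux username.
samba_share_stanza() {
    pool=$1
    folder=$2
    user=$3
    cat <<EOF
[$folder]
path = /$pool/$folder
available = yes
valid users = $user
read only = no
writable = yes
browseable = yes
guest ok = no
EOF
}

# Example with placeholder names:
samba_share_stanza tank media alice
```

You could paste the output at the end of smb.conf, then run testparm -s to have Samba check the file for syntax errors before restarting the service.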

At this point we'll be able to access the pool, browse its contents, and read and write to it from Windows. But there's one more thing left to do. Windows doesn't natively support the ZFS file system and will read the used/available/total space in the pool incorrectly. Windows will read available space as total drive space, and all used space as null. This leads to Windows only displaying a dwindling amount of "available" space as the drives are filled. We can fix this! Functionally this doesn't actually matter, we can still write and read to and from the disk, it just makes it difficult to tell at a glance the proportion of used/available space, so this is an optional step but one I recommend (this step is also unnecessary if you're just using a single external drive). What we're going to do is write a little shell script in bash. Open nano in the terminal with the command:

nano

Now insert the following code:

#!/bin/bash
CUR_PATH=`pwd`
# Capture only the error output of zfs; it says "not a ZFS ..." for non-ZFS paths
ZFS_CHECK_OUTPUT=$(zfs get type $CUR_PATH 2>&1 > /dev/null) > /dev/null
if [[ $ZFS_CHECK_OUTPUT == *not\ a\ ZFS* ]]
then
    IS_ZFS=false
else
    IS_ZFS=true
fi
if [[ $IS_ZFS = false ]]
then
    # Not ZFS: report total and available blocks straight from df
    df $CUR_PATH | tail -1 | awk '{print $2" "$4}'
else
    # ZFS: convert bytes to 1K blocks and report total (used + available) and available
    USED=$((`zfs get -o value -Hp used $CUR_PATH` / 1024)) > /dev/null
    AVAIL=$((`zfs get -o value -Hp available $CUR_PATH` / 1024)) > /dev/null
    TOTAL=$(($USED+$AVAIL)) > /dev/null
    echo $TOTAL $AVAIL
fi

Save the script as "dfree.sh" to /home/"yourlinuxusername" then change the permissions of the file to make it executable with this command:

sudo chmod 774 dfree.sh

Now open smb.conf with sudo again:

sudo nano /etc/samba/smb.conf

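Now add this entry to the top of the configuration file to direct Samba to use the results of our script when Windows asks for a reading on the pool's used/available/total drive space (Ubuntu's default smb.conf already has a [global] section near the top, so you can put the dfree command line under that existing header instead of adding a second one):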

[global]

dfree command = /home/"yourlinuxusername"/dfree.sh

Save the changes to smb.conf and then restart Samba again with the terminal:

sudo systemctl restart smbd

Now there’s one more thing we need to do to fully set up the Samba share, and that’s to modify a hidden group permission. In the terminal window type the following command:

sudo usermod -a -G sambashare "yourlinuxusername"

Then restart samba again:

sudo systemctl restart smbd

If we don’t do this last step, everything will appear to work fine, and you will even be able to see and map the drive from Windows and begin transferring files, but you'd soon run into a lot of frustration: every ten minutes or so a file would fail to transfer and you would get a window announcing “0x8007003B Unexpected Network Error”. This window would require your manual input to continue the transfer with the file next in the queue. And at the end it would reattempt to transfer whichever files failed the first time around. 99% of the time they’ll go through on that second try, but this is still all a major pain in the ass, especially if you’ve got a lot of data to transfer or you want to step away from the computer for a while.

It turns out samba can act a little weirdly with the higher read/write speeds of RAIDz arrays and transfers from Windows, and will intermittently crash and restart itself if this group option isn’t changed. Inputting the above command will prevent you from ever seeing that window.
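If you want to confirm the group change actually took, one way is to look for sambashare in the list of groups your user belongs to. A small sketch, assuming the space-separated output of `id -nG`. The helper name `has_sambashare` and the sample group lists are made up for illustration.

```shell
#!/bin/sh
# Succeeds (exit status 0) if the space-separated group list on stdin
# contains a group named exactly "sambashare".
has_sambashare() {
    tr ' ' '\n' | grep -qx 'sambashare'
}

# Real usage: id -nG "yourlinuxusername" | has_sambashare && echo "in group"
# Demonstration with a made-up group list:
if echo "alice sudo sambashare" | has_sambashare; then
    echo "in group"
else
    echo "not in group"
fi
```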

The last thing we're going to do before switching over to our Windows PC is grab the IP address of our Linux machine. Enter the following command:

hostname -I

This will spit out this computer's IP address on the local network (it will look something like 192.168.0.x). Write it down. It might be a good idea once you're done here to go into your router settings and reserve that IP for your Linux system in the DHCP settings. Check the manual for your specific model of router on how to access its settings. Typically they can be accessed by opening a browser and typing http://192.168.0.1 in the address bar, but your router may be different.
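On a machine with more than one network interface (or with Docker installed), hostname -I prints several addresses on one line. A minimal sketch for keeping only the first one follows. The helper name `first_address` and the sample addresses are hypothetical, and the first address is usually but not always the one on your home network, so double-check it against your router.

```shell
#!/bin/sh
# `hostname -I` prints every address assigned to the machine, separated by
# spaces. This helper reads that output on stdin and keeps only the first one.
first_address() {
    awk '{ print $1; exit }'
}

# Real usage: hostname -I | first_address
# Demonstration with a made-up sample line:
echo "192.168.0.42 172.17.0.1 " | first_address
```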

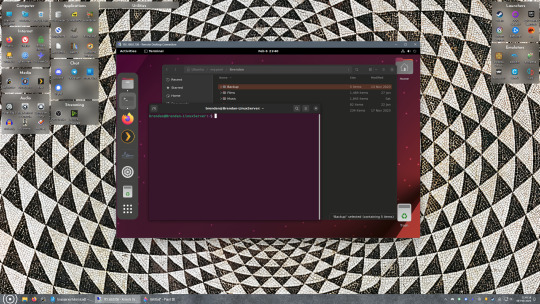

Okay we’re done with our Linux computer for now. Get on over to your Windows PC, open File Explorer, right click on Network and click "Map network drive". Select Z: as the drive letter (you don't want to map the network drive to a letter you could conceivably be using for other purposes) and enter the IP of your Linux machine and the name of the share like so: \\"LINUXCOMPUTERLOCALIPADDRESSGOESHERE"\"foldername" (the share name is the bracketed name you gave the entry in smb.conf). Windows will then ask you for your username and password, enter the ones you set earlier in Samba and you're good. If you've done everything right it should look something like this:

You can now start moving media over from Windows to the share folder. It's a good idea to have a hard line running to all machines. Moving files over Wi-Fi is going to be torturously slow. The only thing that’s going to make the transfer time tolerable (hours instead of days) is a solid wired connection between both machines and your router.

Step Six: Setting Up Remote Desktop Access to Your Server

After the server is up and going, you’ll want to be able to access it remotely from Windows. Barring serious maintenance/updates, this is how you'll access it most of the time. On your Linux system open the terminal and enter:

sudo apt install xrdp

Then:

sudo systemctl enable xrdp

Once it's finished installing, open “Settings” on the sidebar and turn off "automatic login" in the User category. Then log out of your account. Attempting to remotely connect to your Linux computer while you’re logged in will result in a black screen!

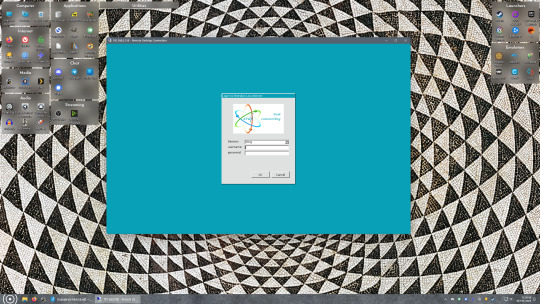

Now get back on your Windows PC, open search and look for "RDP". A program called "Remote Desktop Connection" should pop up, open this program as an administrator by right-clicking and selecting “run as an administrator”. You’ll be greeted with a window. In the field marked “Computer” type in the IP address of your Linux computer. Press connect and you'll be greeted with a new window and prompt asking for your username and password. Enter your Ubuntu username and password here.

If everything went right, you’ll be logged into your Linux computer. If the performance is sluggish, adjust the display options. Lowering the resolution and colour depth does a lot to make the interface feel snappier.

Remote access is how we're going to be using our Linux system from now on, barring edge cases like needing to get into the BIOS or upgrading to a new version of Ubuntu. Everything else, from performing maintenance like a monthly zpool scrub to checking zpool status and updating software, can be done remotely.

This is how my server lives its life now, happily humming and chirping away on the floor next to the couch in a corner of the living room.

Step Seven: Plex Media Server/Jellyfin

Okay we’ve got all the groundwork finished and our server is almost ready. We’ve got Ubuntu up and running, our storage array is primed, we’ve set up remote connections and sharing, and maybe we’ve moved over some of our favourite movies and TV shows.

Now we need to decide on the media server software to use which will stream our media to us and organize our library. For most people I’d recommend Plex. It just works 99% of the time. That said, Jellyfin has a lot to recommend it too, even if it is rougher around the edges. Some people run both simultaneously, it’s not that big of an extra strain. I do recommend doing a little bit of your own research into the features each platform offers, but as a quick rundown, consider some of the following points:

Plex is closed source and is funded through PlexPass purchases while Jellyfin is open source and entirely user driven. This means a number of things: for one, Plex requires you to purchase a “PlexPass” (purchased as a one time lifetime fee $159.99 CDN/$120 USD or paid for on a monthly or yearly subscription basis) in order to access certain features, like hardware transcoding (and we want hardware transcoding) or automated intro/credits detection and skipping, while Jellyfin offers some of these features for free through plugins. Plex supports a lot more devices than Jellyfin and updates more frequently. That said, Jellyfin's Android and iOS apps are completely free, while the Plex Android and iOS apps must be activated for a one time cost of $6 CDN/$5 USD. But that $6 fee gets you a mobile app that is much more functional and features a unified UI across platforms. The Plex mobile apps are simply a more polished experience. The Jellyfin apps are a bit of a mess and the iOS and Android versions are very different from each other.

Jellyfin’s actual media player is more fully featured than Plex's, but on the other hand Jellyfin's UI, library customization and automatic media tagging really pale in comparison to Plex. Streaming your music library is free through both Jellyfin and Plex, but Plex offers the PlexAmp app for dedicated music streaming which boasts a number of fantastic features, unfortunately some of those fantastic features require a PlexPass. If your internet is down, Jellyfin can still do local streaming, while Plex can fail to play files unless you've got it set up a certain way. Jellyfin has a slew of neat niche features like support for Comic Book libraries with the .cbz/.cbt file types, but then Plex offers some free ad-supported TV and films, they even have a free channel that plays nothing but Classic Doctor Who.

Ultimately it's up to you, I settled on Plex because although some features are pay-walled, it just works. It's more reliable and easier to use, and a one-time fee is much easier to swallow than a subscription. I had a pretty easy time getting my boomer parents and tech illiterate brother introduced to and using Plex and I don't know if I would've had as easy a time doing that with Jellyfin. I do also need to mention that Jellyfin does take a little extra bit of tinkering to get going in Ubuntu, you’ll have to set up process permissions, so if you're more tolerant to tinkering, Jellyfin might be up your alley and I’ll trust that you can follow their installation and configuration guide. For everyone else, I recommend Plex.

So pick your poison: Plex or Jellyfin.

Note: The easiest way to download and install either of these packages in Ubuntu is through Snap Store.

After you've installed one (or both), opening either app will launch a browser window into the browser version of the app allowing you to set all the options server side.

The process of creating media libraries is essentially the same in both Plex and Jellyfin. You create separate libraries for Television, Movies, and Music and add the folders which contain the respective types of media to their respective libraries. The only difficult or time consuming aspect is ensuring that your files and folders follow the appropriate naming conventions:

Plex naming guide for Movies

Plex naming guide for Television

Jellyfin follows the same naming rules but I find their media scanner to be a lot less accurate and forgiving than Plex's. Once you've selected the folders to be scanned the service will scan your files, tagging everything and adding metadata. Although I do find Plex more accurate, it can still erroneously tag some things and you might have to manually clean up some tags in a large library. (When I initially created my library it tagged the 1963-1989 Doctor Who as some Korean soap opera and I needed to manually select the correct match after which everything was tagged normally.) It can also be a bit testy with anime (especially OVAs), so be sure to check TVDB to ensure that you have your files and folders structured and named correctly. If something is not showing up at all, double check the name.
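To make the naming conventions concrete, here is a rough sketch of the folder and file layout as I understand it from the Plex naming guides: a "Title (Year)" folder and file for movies, and "Show/Season NN/Show - sNNeNN" for television. The helper names `episode_path` and `movie_path` are made up for this example, and the linked guides remain the authority on edge cases like specials and multi-part episodes.

```shell
#!/bin/sh
# Builds a TV episode path in the "Show/Season NN/Show - sNNeNN.ext" layout.
# Arguments: show name, season number, episode number, file extension.
# Pass the numbers without leading zeros (1, not 01).
episode_path() {
    printf '%s/Season %02d/%s - s%02de%02d.%s\n' "$1" "$2" "$1" "$2" "$3" "$4"
}

# Builds a movie path in the "Title (Year)/Title (Year).ext" layout.
# Arguments: title, release year, file extension.
movie_path() {
    printf '%s (%s)/%s (%s).%s\n' "$1" "$2" "$1" "$2" "$3"
}

# Examples:
episode_path "Doctor Who (1963)" 1 1 mkv
movie_path "Star Trek II The Wrath of Khan" 1982 mkv
```

The first call prints Doctor Who (1963)/Season 01/Doctor Who (1963) - s01e01.mkv, which is the kind of path the scanner expects to find inside your Television library folder.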

Once that's done, organizing and customizing your library is easy. You can set up collections, grouping items together to fit a theme or collect together all the entries in a franchise. You can make playlists, and add custom artwork to entries. It's fun setting up collections with posters to match, there are even several websites dedicated to help you do this like PosterDB. As an example, below are two collections in my library, one collecting all the entries in a franchise, the other follows a theme.

My Star Trek collection, featuring all eleven television series, and thirteen films.

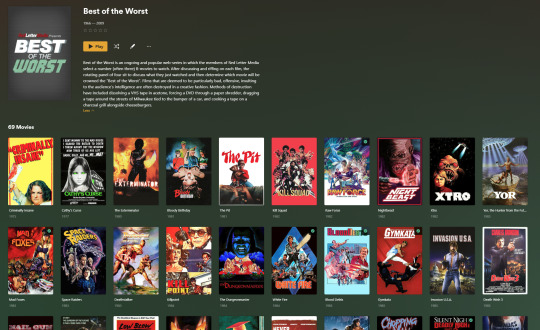

My Best of the Worst collection, featuring sixty-nine films previously showcased on RedLetterMedia’s Best of the Worst. They’re all absolutely terrible and I love them.

As for settings, ensure you've got Remote Access going (it should work automatically), and be sure to set your upload speed after running a speed test. In the library settings set the database cache to 2000MB to ensure a snappier and more responsive browsing experience, and then check that playback quality is set to original/maximum. If you’re severely bandwidth limited on your upload and have remote users, you might want to limit the remote stream bitrate to something more reasonable. As a point of comparison, Netflix’s 1080p bitrate is approximately 5 Mbps, although almost anyone watching through a Chromium-based browser is streaming at 720p and 3 Mbps. Other than that you should be good to go. For actually playing your files, there's a Plex app for just about every platform imaginable. I mostly watch television and films on my laptop using the Windows Plex app, but I also use the Android app which can broadcast to the Chromecast connected to the TV in the office and the Android TV app for our smart TV. Both are fully functional and easy to navigate, and I can also attest to the OS X version being equally functional.
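When picking a remote bitrate limit, it helps to work out how many simultaneous remote streams your upload can actually carry. A quick sketch of that division follows. The helper name `max_remote_streams` and the 20 Mbps upload figure are assumptions for illustration, so use the number from your own speed test.

```shell
#!/bin/sh
# How many remote streams fit in a given upload speed at a given
# per-stream bitrate cap? Both arguments are whole numbers in Mbps.
# Integer division rounds down, which is the safe direction here.
max_remote_streams() {
    echo $(( $1 / $2 ))
}

# Example: 20 Mbps upload, streams capped at 5 Mbps each.
max_remote_streams 20 5
```

In that example four remote viewers would saturate the connection, so a lower cap (or fewer simultaneous users) leaves headroom for everything else on your network.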

Part Eight: Finding Media

Now, this is not really a piracy tutorial, there are plenty of those out there. But if you’re unaware, BitTorrent is free and pretty easy to use, just pick a client (qBittorrent is the best) and go find some public trackers to peruse. Just know now that all the best trackers are private and invite only, and that they can be exceptionally difficult to get into. I’m already on a few, and even then, some of the best ones are wholly out of my reach.

If you decide to take the left hand path and turn to Usenet you’ll have to pay. First you’ll need to sign up with a provider like Newshosting or EasyNews for access to Usenet itself, and then to actually find anything you’re going to need to sign up with an indexer like NZBGeek or NZBFinder. There are dozens of indexers, and many people cross post between them, but for more obscure media it’s worth checking multiple. You’ll also need a binary downloader like SABnzbd. That caveat aside, Usenet is faster, bigger, older, less traceable than BitTorrent, and altogether slicker. I honestly prefer it, and I'm kicking myself for taking this long to start using it because I was scared off by the price. I’ve found so many things on Usenet that I had sought in vain elsewhere for years, like a 2010 Italian film about a massacre perpetrated by the SS that played the festival circuit but never received a home media release; some absolute hero uploaded a rip of a festival screener DVD to Usenet. Anyway, figure out the rest of this shit on your own and remember to use protection, get yourself behind a VPN, use a SOCKS5 proxy with your BitTorrent client, etc.

On the legal side of things, if you’re around my age, you (or your family) probably have a big pile of DVDs and Blu-Rays sitting around unwatched and half forgotten. Why not do a bit of amateur media preservation, rip them and upload them to your server for easier access? (Your tools for this are going to be Handbrake to do the ripping and AnyDVD to break any encryption.) I went to the trouble of ripping all my SCTV DVDs (five box sets worth) because none of it is on streaming nor could it be found on any pirate source I tried. I’m glad I did, forty years on it’s still one of the funniest shows to ever be on TV.

Part Nine/Epilogue: Sonarr/Radarr/Lidarr and Overseerr

There are a lot of ways to automate your server for better functionality or to add features you and other users might find useful. Sonarr, Radarr, and Lidarr are a part of a suite of “Servarr” services (there’s also Readarr for books and Whisparr for adult content) that allow you to automate the collection of new episodes of TV shows (Sonarr), new movie releases (Radarr) and music releases (Lidarr). They hook in to your BitTorrent client or Usenet binary newsgroup downloader and crawl your preferred Torrent trackers and Usenet indexers, alerting you to new releases and automatically grabbing them. You can also use these services to manually search for new media, and even replace/upgrade your existing media with better quality uploads. They’re really a little tricky to set up on a bare metal Ubuntu install (ideally you should be running them in Docker Containers), and I won’t be providing a step by step on installing and running them, I’m simply making you aware of their existence.

The other bit of kit I want to make you aware of is Overseerr which is a program that scans your Plex media library and will serve recommendations based on what you like. It also allows you and your users to request specific media. It can even be integrated with Sonarr/Radarr/Lidarr so that fulfilling those requests is fully automated.

And you're done. It really wasn't all that hard. Enjoy your media. Enjoy the control you have over that media. And be safe in the knowledge that no hedgefund CEO motherfucker who hates the movies but who is somehow in control of a major studio will be able to disappear anything in your library as a tax write-off.

1K notes

Note

Thoughts on Linux (the OS)

Misconception!

I don't want to be obnoxiously pedantic, but Linux is not an OS. It is a kernel, which is just part of an OS. (Like how Windows contains a lot more than just its kernel, ntoskrnl.exe.) A very, very important piece, which directly shapes the ways that all the other programs will talk to each other. Think of it like a LEGO baseplate.

Everything else is built on top of the kernel. But, a baseplate does not a city make. We need buildings! A full operating system is a combination of a kernel and kernel-level (get to talk to hardware directly) utilities for talking to hardware (drivers), and userspace (get to talk to hardware ONLY through the kernel) utilities ranging in abstraction level from stuff like window management and sound servers and system bootstrapping to app launchers and file explorers and office suites. Every "Linux OS" is a combination of that LEGO baseplate with some permutation of low and high-level userspace utilities.

Now, a lot of Linux-based OSes do end up feeling (and being) very similar to each other. Sometimes because they're directly copying each other's homework (AKA forking, it's okay in the open source world as long as you follow the terms of the licenses!) but more generally it's because there just aren't very many options for a lot of those utilities.

Want your OS to be more than just a text prompt? Your pick is between X.org (old and busted but...well, not reliable, but a very well-known devil) and Wayland (new hotness, trying its damn hardest to subsume X and not completely succeeding). Want a graphics toolkit? GTK or Qt. Want to be able to start the OS? systemd or runit. (Or maybe SysVinit if you're a real caveman true believer.) Want sound? ALSA is a given, but on top of that your options are PulseAudio, PipeWire, and JACK. Want an office suite? LibreOffice is really the only name in the game at present. Want terminal utilities? Well, they're all gonna have to conform to the POSIX spec in some capacity. GNU coreutils, busybox, toybox, all more or less the same programs from a user perspective.

Only a few ever get away from the homogeneity, like Android. But I know that you're not asking about Android. When people say "Linux OS" they're talking about the homogeneity. The OSes that use terminals. The ones that range in looks from MacOS knockoff to Windows knockoff to 'impractical spaceship console'. What do I think about them?

I like them! I have my strongly-felt political and personal opinions about which building blocks are better than others (generally I fall into the 'functionality over ideology' camp; Nvidia proprietary over Nouveau, X11 over Wayland, Systemd over runit, etc.) but I like the experience most Linux OSes will give me.

I like my system to be a little bit of a hobby, so when I finally ditched Windows for the last time I picked Arch Linux. Wouldn't recommend it to anyone who doesn't want to treat their OS as a hobby, though. There are better and easier options for 'normal users'.

I like the terminal very much. I understand it's intimidating for new users, but it really is an incredible tool for doing stuff once you're in the mindset. GUIs are great when you're inexperienced, but sometimes you just wanna tell the computer what you want with your words, right? So many Linux programs will let you talk to them in the terminal, or are terminal-only. It's very flexible.

I also really, really love the near-universal concept of a 'package manager' -- a program which automatically installs other programs for you. Coming from Windows it can feel kinda restrictive that you have to go through this singular port of entry to install anything, instead of just looking up the program and running an .msi file, but I promise that if you get used to it it's very hard to go back. Want to install discord? yay -S discord. Want to install firefox? yay -S firefox. Minecraft? yay -S minecraft-launcher. etc. etc. No more fucking around in the Add/Remove Programs menu, it's all in one place! Only very rarely will you want to install something that isn't in the package manager's repositories, and when you do you're probably already doing something that requires technical know-how.

Not a big fan of the filesystem structure. It's got a lot of history. 1970s mainframe computer operation procedure history. Not relevant to desktop users, or even modern mainframe users. The folks over at freedesktop.org have tried their best to get at least the user's home directory cleaned up but...well, there's a lot of historical inertia at play. It's not a popular movement right now but I've been very interested in watching some people try to crack that nut.

Aaaaaand I think those are all the opinions I can share without losing everyone in the weeds. Hope it was worth reading!

223 notes

Text

I made it easier to back up your blog with tumblr-utils

Hey friends! I've seen a few posts going around about how to back up your blog in case tumblr disappears. Unfortunately the best backup approach I've seen is not the built-in backup option from tumblr itself, but a python app called tumblr-utils. tumblr-utils is a very, very cool project that deserves a lot of credit, but it can be tough to get working. So I've put together something to make it a bit easier for myself that hopefully might help others as well.

If you've ever used Docker, you know how much of a game-changer it is to have a pre-packaged setup for running code that someone else got working for you, rather than having to cobble together a working environment yourself. Well, I just published a tumblr-utils Docker container! If you can get Docker running on your system - whether Windows, Linux, or Mac - you can tell it to pull this container from dockerhub and run it to get a full backup of your tumblr blog that you can actually open in a web browser and navigate just like the real thing!

This is still going to be more complicated than grabbing a zip file from the tumblr menu, but hopefully it lowers the barrier a little bit by avoiding things like python dependency errors and troubleshooting for your specific operating system.

If you happen to have an Unraid server, I'm planning to submit it to the community apps repository there to make it even easier.

Drop me a message or open an issue on github if you run into problems!

207 notes

Text

the op of that "you should restart your computer every few days" post blocked me so i'm going to perform the full hater move of writing my own post to explain why he's wrong

why should you listen to me: took operating system design and a "how to go from transistors to a pipelined CPU" class in college, i have several servers (one physical, four virtual) that i maintain, i use nixos which is the linux distribution for people who are even bigger fucking nerds about computers than the typical linux user. i also ran this past the other people i know that are similarly tech competent and they also agreed OP is wrong (haven't run this post by them but nothing i say here is controversial).

anyway the tl;dr here is:

you don't need to shut down or restart your computer unless something is wrong or you need to install updates

i think this misconception that restarting is necessary comes from the fact that restarting often fixes problems, and so people think that the problems are because of the not restarting. this is, generally, not true. in most cases there's some specific program (or part of the operating system) that's gotten into a bad state, and restarting that one program would fix it. but restarting is easier since you don't have to identify specifically what's gone wrong. the most common problem i can think of that wouldn't fall under this category is your graphics card drivers fucking up; that's not something you can easily reinitialize without restarting the entire OS.

this isn't saying that restarting is a bad step; if you don't want to bother trying to figure out the problem, it's not a bad first go. personally, if something goes wrong i like to try to solve it without a restart, but i also know way, way more about computers than most people.

as more evidence to point to this, i would point out that servers are typically not restarted unless there's a specific need. this is not because they run special operating systems or have special parts; people can and do run servers using commodity consumer hardware, and while linux is much more common in the server world, it doesn't have any special features to make it more capable of long operation. my server with the longest uptime is 9 months, and i'd have one with even more uptime than that if i hadn't fucked it up so bad two months ago i had to restore from a full disk backup. the laptop i'm typing this on has about a month of uptime (including time spent in sleep mode). i've had servers with uptimes measuring in years.

there's also a lot of people that think that the parts being at an elevated temperature just from running is harmful. this is also, in general, not true. i'd be worried about running it at 100% full blast CPU/GPU for months on end, but nobody reading this post is doing that.

the other reason i see a lot is energy use. the typical energy use of a computer not doing anything is like... 20-30 watts. this is about two or three lightbulbs worth. that's not nothing, but it's not a lot to be concerned over. in terms of monetary cost, that's maybe $10 on your power bill. if it's in sleep mode it's even less, and if it's in full-blown hibernation mode it's literally zero.
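to put rough numbers on that: energy is watts times hours, and cost is that times whatever you pay per kWh. here's a tiny sketch of the arithmetic. `idle_cost` is a made-up helper name, and the 25 W draw and 0.15 per kWh price are assumptions picked for illustration, so plug in your own.

```shell
#!/bin/sh
# Cost of leaving a machine idling around the clock.
# Arguments: idle draw in watts, electricity price per kWh, number of days.
idle_cost() {
    awk -v w="$1" -v price="$2" -v days="$3" \
        'BEGIN { printf "%.2f\n", w / 1000 * 24 * days * price }'
}

# Example: 25 W idle, an assumed 0.15 per kWh, over 30 days.
idle_cost 25 0.15 30
```

with those example inputs a month of idling comes to 2.70 in whatever currency your rate is in, and a full year (365 days) to 32.85.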

there are also people in the replies to that post giving reasons. all of them are false.

temporary files generally don't use enough disk space to be worth worrying about

programs that leak memory return it all to the OS when they're closed, so it's enough to just close the program itself. and the OS generally doesn't leak memory.

'clearing your RAM' is not a thing you need to do. neither is resetting your registry values.

your computer can absolutely use disk space from deleted files without a restart. i've taken a server that was almost completely full, deleted a bunch of unnecessary files, and it continued fine without a restart.

1K notes

Text

How to replace Microsoft and support the BDS boycott

reach out to your tech friends about replacing windows with an alternative operating system, such as linux or a *BSD OS. if you decide to do this on your own, make sure to back up your hard drive first. windows profits off your usage data (even if you never paid for it) and can use it to train their AI, which is arming israel.

if your work or school requires you to acquire windows, look up massgrave (it's very simple to activate windows).

duckduckgo is just microsoft's bing in a trench coat. they have made a secret exception for microsoft's tracking services in the past. check out Searx instances, or try alternative indie web search engines such as Marginalia or Wiby.

if you're using microsoft's outlook for email, consider Tuta or Disroot (avoid proton; it's all privacy theatre that's only somewhat better than other email providers, and the CEO has voiced support for trump).

don't pay to watch the minecraft movie that's coming out. i've heard it's incredibly underwhelming anyway.

insist on playing minecraft but don't want to give microsoft money? avoid bedrock edition. check out UltimMC if you need a way to acquire java edition and you don't own it. if you're a server operator, you can set your server to offline mode in server.properties which allows people who acquire minecraft the cool way to connect, but this should be paired with a server-side authentication plugin/mod for safety reasons (in offline mode, anyone can log in with any username, including a whitelisted or operator username, and there are bots scanning for servers to grief). don't use realms. disable telemetry with mods if you can.

get a vpn (i recommend airvpn for p2p connections) and download qBittorrent. in case you're interested in media published by microsoft. or just in general. learn to torrent, and make sure all your torrent traffic goes through your vpn service.

if you're using microsoft edge, consider switching to an alternative browser such as LibreWolf (basically firefox with better privacy and security out of the box; mozilla is not the innocent robin hood figure they're made out to be) or Ungoogled-Chromium (chromium without the google spyware; unfortunately lacks auto-update in most cases).

if you're using microsoft's AI for anything, consider getting a library card instead.

86 notes

Text

Being neurodivergent is like you're a computer running some variation of Unix while the rest of the world runs Windows. You have the exact same basic components as other machines, but you think differently. You organize differently. You do things in a way that Windows machines don't always understand, and because of that, you can't use programs written for Windows. If you're lucky, the developer will write a special version of their program specifically with your operating system in mind that will work just as well as the original, and be updated in a similar time frame. But if not? You'll either be stuck using emulators or a translator program like Wine, which come with an additional resource load and a host of other challenges to contend with, or you'll have to be content with an equivalent, which may or may not have the same features and the ability to read files created by the other program.

However, that doesn't mean you're not just as powerful. Perhaps you're a desktop that just happens to run Mac or Linux. Maybe you're a handheld device, small and simple but still able to connect someone to an entire world. Or perhaps you're an industrial computer purpose-built to perform a limited number of tasks extremely well, but only those tasks. You may not even have a graphical user interface. You could even be a server proudly hosting a wealth of media and information for an entire network to access, perhaps even the entire Internet. They need only ask politely. You may not be able to completely understand other machines, but you are still special in your own way.

#actually autistic#autism#neurodivergent#neurodiversity#computers#this is so nerdy i'm sorry#i also hope it doesn't offend people#adhd#actually adhd#unix#linux#macintosh#windows#actually neurodivergent

1K notes

Note

what is the best way to get safer/more anonymous online

Ok, security and anonymity are not the same thing, but when you combine them you can enhance your online privacy.

My question is: how tech literate are you and what is your aim? As in do you live in a country where your government would benefit from monitoring private (political) conversations or do you just want to degoogle? Because the latter is much easier for the average user.

Some general advice:

Leave Windows and Mac operating systems and switch to Linux distributions like Fedora and Ubuntu (both very user friendly). Switch from Microsoft Office or Pages/Numbers/Keynote (Mac) to LibreOffice.

You want to go more hardcore with a very privacy-focused operating system? There are Whonix and Tails (portable operating system).

Try to replace all your closed source apps with open source ones.

Now, when it comes to browsers, leave Chrome behind. Switch to Firefox (or Firefox Focus if you're on mobile). Want to go a step further? Use LibreWolf (a modified version of Firefox that increases protection against tracking), Brave (good for beginners but it has its controversies), DuckDuckGo or Bromite. You like ecofriendly alternatives? Check Ecosia out.

Are you, like, a journalist or political activist? Then you probably know Tor and other anonymous networks like I2P, Freenet, Lokinet, RetroShare, IPFS and GNUnet.

For whistleblowers there are tools like SecureDrop (requires Tor), GlobaLeaks (alternative to SecureDrop), Haven (Android) and OnionShare.

Search engines?

There are Startpage (obtains Google's results but with more privacy), MetaGer (open source), DuckDuckGo (partially open source), Searx (open source). You can see the comparisons here.

Check LibRedirect out. It redirects requests from popular social media websites to privacy-friendly frontends.

Alternatives to YouTube that value your privacy? Odysee, PeerTube and DTube.

Decentralized apps and social media? Mastodon (Twitter alternative), Friendica (Facebook alternative), diaspora* (Google+ RIP), PixelFed (Insta alternative), Aether (Reddit alternative).

Messaging?

I know we all use shit like Viber, Messenger, Telegram, WhatsApp, Discord etc. but there are:

Signal (feels like WhatsApp but it's secure and has end-to-end encryption)

Session (doesn't even require a phone or e-mail address to sign up)

Status (no phone or e-mail address again)

Threema (for mobile)

Delta Chat (you can chat with people if you know their e-mail without them having to use the app)

Team chatting?

Open source options:

Element (an alternative to Discord)

Rocket.chat (good for companies)

Revolt.chat (good for gamers and a good alternative to Discord)

Video/voice messaging?

Brave Talk (the one who creates the talk needs to use the browser but the others can join from any browser)

Jami

Linphone

Jitsi (no account required, video conferencing)

Then for Tor there are various options like Briar (good for activists), Speek! and Cwtch (user friendly).

Georestrictions? You don't want your Internet Provider to see exactly what you're doing online?

As long as it's legal in your country, you need to hide your IP with a VPN (authoritarian regimes tend to make them illegal for a reason), preferably one that has a no-log policy, runs RAM-only servers, does not operate in one of the 14 Eyes countries, supports OpenVPN (protocol), accepts cash payment and uses strong encryption.

NordVPN (based in Panama)

ProtonVPN (Switzerland)

Cyberghost

Mullvad (Sweden)

Surfshark (Netherlands)

Private e-mails?

ProtonMail

StartMail

Tutamail

Mailbox (ecofriendly option)

Want to hide your real e-mail address to avoid spam etc.? SimpleLogin (open source)

E-mail clients?

Thunderbird

Canary Mail (for Android and iOS)

K-9 Mail (Android)

Too many complex passwords that you can't remember?

NordPass

BitWarden

LessPass

KeePassXC

Two Factor Authenticators?

2FAS

ente Authenticator

Aegis Authenticator

andOTP

Tofu (for iOS)
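
Curious what these apps actually compute? As far as I know they all support TOTP, the time-based one-time password scheme from RFC 6238. Here's a minimal sketch using only Python's standard library, assuming the common defaults (HMAC-SHA1, 30-second steps, 6 digits). The `totp` function name and the demo secret are just for illustration; use a real authenticator app for your actual accounts.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret_b32: str, at: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    key = base64.b32decode(secret_b32.upper())
    now = time.time() if at is None else at
    counter = int(now // step)  # number of time steps since the Unix epoch
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    number = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


# Demo secret: the ASCII test key from the RFC, Base32-encoded the way
# a site would hand it to you when you set up 2FA.
demo_secret = base64.b32encode(b"12345678901234567890").decode()
print(totp(demo_secret))
```

The site and your app share the secret once (usually via a QR code), and after that both sides can compute the same short-lived code without talking to each other.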

Want to encrypt your files? VeraCrypt (for your disk), GNU Privacy Guard (for your e-mail), Hat.sh (encryption in your browser), Picocrypt (Desktop encryption).

Want to encrypt your Dropbox, Google Drive etc.? Cryptomator.

Encrypted cloud storage?

NordLocker

MEGA

Proton Drive

Nextcloud

Filen

Encrypted photo storage?

ente

Cryptee

Piwigo

Want to remove metadata from your images and videos? ExifCleaner. For Android? ExifEraser. For iOS? Metapho.

Cloak your images to counter facial recognition? Fawkes.

Encrypted file sharing? Send.

Do you menstruate? Do you want an app that tracks your menstrual cycle but doesn't collect your data? drip.

What about your sexual health? Euki.

Want a fitness tracker without a closed source app and the need to transmit your personal data to the company's servers? Gadgetbridge.

34 notes

Text

Ok so I talked about this in tags of a post earlier but I need to talk about it properly

So a couple weeks ago I finally pulled the trigger, I dual booted Linux Mint on my laptop

It has fewer of my vital files on it than my PC, but I use it more for videos and general Internet stuff, so I would know if I liked it

Installing was scary but after a bit of troubleshooting with disabling BitLocker it was easy, and let me be clear, that's a Windows thing, because Microsoft really really doesn't want you to have freedom over your machine.

So I booted in

And like

I literally love it so much

I knew people talked about how much better Linux is and how it speeds up literally anything it's put on over windows, but like WOW

It doesn't take 2 minutes to boot up or shut down, my CPU doesn't idle at 25% for no reason, the search for files feature doesn't take 40 minutes only to show me Internet results instead of files, it's wonderful.

The default theme is (in my opinion) pretty ugly, sorry whoever made it, it's just not for me.

But that's the great thing, you can literally customize this almost however you would like.

Maybe you shouldn't trust my opinion on what looks nice because I instantly installed a theme that replicated Windows 7

But I got bored of the default colors so I literally found the files where the home bar is saved and changed them to be more "minty"

That along with some CSS color editing gave me this:

You just can't do anything like this in Windows 10/11. You can change the color on windows but if I wanted, in Mint, I could completely change everything, centered icons on the taskbar, icons left justified on the taskbar, no taskbar, make it look like windows 95, it's all yours to do with whatever you want.

There are issues, I won't lie, the biggest one that will probably haunt Linux forever is compatibility.

Simply put, most developers don't make native Linux versions of their software, you are lucky if there is even a Mac version.

Lots and lots of Windows software CAN work on Linux through compatibility layers like Wine and Steam's Proton, but it's not 100%

My biggest problem is FL Studio and Clip Studio, I couldn't get either of these working with Wine or Proton so far. I'm hoping in the future I will find a way to make this work, or transition to free and open source alternatives, but for now I'm stuck with a win 10 pc.

The other issue I've faced is that Linux seems to have a hard time recognizing and remembering my wired headphones. Like sometimes it just works, but most of the time it fails to do so.

My solution to this until I have time to troubleshoot more is to use my stupid headphone jack to USB C dongle that I bought for my stupid phone with no headphone jack.

Luckily it works fine and the type C port on my laptop literally doesn't get used otherwise.

All in all, I'm like excited to use a computer again. I used to only be excited for the programs it allowed me to use, but for the first time in a long time, the "magic" of the PC has returned for me.

Once I save up the money, my next PC will be Linux, Windows doesn't cut it anymore for me.

Ok now I'm going to kinda just talk about Linux for a bit, unrelated to my experience because my brain has been buzzing about this topic lately.

I get why guys who run Linux are so annoying about it now, because it's me now, I love this stupid OS and everyone has to hear about it.

And chances are, you've used Linux before already!

Linux is used in a ridiculous number of places because of its open source nature.

Most servers and other cloud computing systems run Linux, many public terminals and screens run Linux, every supercomputer on the TOP500 list runs Linux, if you were in the education system in the past ~13 years you might have used ChromeOS, which is built on Linux, and if you have ever used an Android device you have used Linux.

It's never going to take over Windows as the go to operating system in the home, most people don't even know they could switch, and if they don't know that there's no way they are willing to put up with some of the headaches Linux brings.

Although I've spent way more time troubleshooting Windows issues than I have Linux ones so far, so maybe Microsoft stuffing so much bloated spyware into their system is starting to cause Windows to rip at the seams, idk.

When I try to explain Linux to people who literally don't understand any of this I use a car metaphor

Windows is like a hatchback SUV, you buy it from a dealer and it works well enough for most people that they don't complain.

Linux is like a project vehicle in a lot of ways, the mechanic can tune it up exactly to the specifications they want, tear a bit out and put a different one in, it requires some work under the hood but once that mechanic gets it the way they want it, it's incredible.

It's not a perfect metaphor but I think it gets the idea across.

Uh IDK how to finish this post, please try Linux if you can, changed my life.

#Long post about Linux ahead don't click read more if you don't want that#Linux#Linux mint#open source#Mantis thoughts

26 notes

Text

I have a suggestion

27 notes
