Understanding CAD Computer Requirements: Essential Guide for Optimal Performance

If you’re diving into the world of Computer-Aided Design (CAD), ensuring that your system is properly equipped to handle the demands of software like AutoCAD is crucial. Whether you are an architect, engineer, or designer, having the right hardware and software configuration will not only improve your workflow but also guarantee smoother performance and better results. In this blog, we’ll walk through the key computer requirements for running AutoCAD and other CAD software smoothly.

Why Understanding CAD Computer Requirements Matters

Running CAD software efficiently requires more than just having a standard computer. CAD applications, especially AutoCAD, are resource-intensive and demand high computing power. Without a suitable setup, you might experience lagging, crashes, or long rendering times that could affect productivity. Understanding these requirements ensures that your system is up to the task and can handle the software’s robust functionalities without compromising performance.

Key CAD Computer Requirements for Optimal Performance

1. Processor (CPU): The Brain of Your CAD System

The processor is the brain of your CAD system. CAD software requires a multi-core processor to handle complex calculations and data. AutoCAD, for example, performs better on processors that can handle multiple tasks at once.

Recommended: A multi-core processor, ideally with 4 or more cores, such as Intel i7/i9 or AMD Ryzen 7/9.

Minimum: Intel Core i5 or AMD Ryzen 5 (6th generation or newer).

Choosing a higher-end processor will significantly enhance your CAD experience, especially when working with complex designs or large files.

2. Graphics Card (GPU): Visuals and Rendering Performance

The graphics card is crucial for rendering 3D models and visualizing designs in AutoCAD. A powerful GPU will ensure smooth navigation, rendering, and model manipulation in both 2D and 3D spaces.

Recommended: NVIDIA GeForce RTX or NVIDIA Quadro series, or AMD Radeon Pro series.

Minimum: NVIDIA GeForce GTX or AMD Radeon RX series.

For demanding 3D modeling tasks, consider upgrading to a workstation-grade GPU like the NVIDIA Quadro series, which is optimized for professional CAD workflows.

3. Memory (RAM): Smooth Multitasking

When working with large files or running multiple applications, ample RAM is necessary to avoid system slowdowns or crashes. CAD software requires significant memory to store large drawings, 3D models, and complex calculations.

Recommended: 16GB or more of RAM.

Minimum: 8GB of RAM.

For more intensive CAD tasks or multitasking (like running AutoCAD with other software), investing in 32GB or more of RAM is ideal.
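As a rough illustration, the RAM tiers above can be written as a small shell function. The function name `ram_tier` and the label wording are made up for this sketch; the 8GB, 16GB and 32GB thresholds come from the figures listed above.

```shell
# Classify an amount of RAM (whole GB) against the tiers listed above.
# ram_tier is a hypothetical helper name used only for this sketch.
ram_tier() (
    gb=$1
    if [ "$gb" -lt 8 ]; then
        echo "below minimum"
    elif [ "$gb" -lt 16 ]; then
        echo "minimum"
    elif [ "$gb" -lt 32 ]; then
        echo "recommended"
    else
        echo "ideal for intensive work"
    fi
)

ram_tier 16
```

Running it with 16 prints the "recommended" tier; 32 or more lands in the tier suggested for heavier multitasking.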

4. Storage: Quick Access to Large Files

CAD designs often involve large files that need fast access and ample storage space. A slow hard drive can create bottlenecks when loading files or saving work, hindering your productivity. Opting for an SSD (Solid-State Drive) will significantly improve file loading times and overall system responsiveness.

Recommended: 512GB or higher SSD for storage.

Minimum: 256GB SSD or a 1TB HDD (though SSD is always recommended).

For the best performance, SSDs should be used for the operating system and primary software installation, while larger HDDs can be used for archival purposes.

5. Display: Crisp and Accurate Visualization

A high-resolution display is essential for accurately visualizing detailed designs and models. AutoCAD users often work with intricate 2D and 3D elements, making a large, high-resolution monitor an essential component of the setup.

Recommended: A 24” or larger screen with 1920x1080 resolution (Full HD) or higher, ideally with IPS technology for better color accuracy.

Minimum: 21” screen with 1920x1080 resolution.

For better productivity, you may even consider a dual monitor setup to increase workspace and improve multitasking efficiency.

6. Operating System: AutoCAD Compatibility

The operating system you use can impact the compatibility and performance of your CAD software. AutoCAD supports both Windows and macOS, but Windows remains the dominant platform for CAD applications due to better driver support and compatibility.

Recommended: Windows 10 64-bit (or newer), or macOS Mojave 10.14 or later.

Minimum: Windows 8.1 (64-bit) or macOS High Sierra 10.13 or later.

For those using Windows, make sure to keep your OS updated to take advantage of the latest performance and security enhancements.

7. Internet Connection: Cloud Integration and Updates

While not a direct hardware requirement, a reliable internet connection is important for downloading software updates, using cloud-based storage, and collaborating on projects. AutoCAD’s cloud integration features, such as AutoCAD Web and AutoCAD Mobile, rely on internet connectivity for seamless operation.

Recommended: Stable broadband connection with speeds of at least 10 Mbps.

Minimum: Basic internet connection for updates and cloud features.

Additional Tips for Optimizing Your CAD System

Ensure Regular Software Updates: Keeping your AutoCAD software and drivers up to date ensures compatibility and optimizes performance.

Consider External Storage for Backup: Large CAD files can quickly fill up your system’s storage, so having an external drive or cloud storage option for backup and archiving is a good idea.

Use CAD-Specific Peripherals: A high-quality mouse and keyboard designed for CAD work can enhance precision and reduce strain during long working hours.

Conclusion

Setting up a system to run AutoCAD and other CAD software efficiently isn’t just about meeting the bare minimum requirements — it’s about ensuring that your system can handle complex design tasks without compromising on speed or performance. By investing in a high-performance processor, powerful graphics card, sufficient RAM, and an SSD for fast storage, you’ll experience smoother, more efficient CAD workflows.

To learn more about AutoCAD system requirements, be sure to check out Virto Solar’s AutoCAD System Requirements page. This guide will help you make the right decisions for your setup, ensuring that your CAD design work is always at its best.

Are you ready to upgrade your system for seamless CAD experiences? Make sure your system is optimized for success with the right components, and get started on your next project with confidence!

Designing and manufacturing custom doors is a complex task due to the bespoke design needs of customers. Technological advancements, such as design automation using visual CAD configurators, have accelerated design development time for custom doors. These configurators simplify door design customization and generate accurate manufacturing drawings faster. Read the full article.

How I ditched streaming services and learned to love Linux: A step-by-step guide to building your very own personal media streaming server (V2.0: REVISED AND EXPANDED EDITION)

This is a revised, corrected and expanded version of my tutorial on setting up a personal media server that previously appeared on my old blog (donjuan-auxenfers). I expect that that post is still making the rounds (hopefully with my addendum on modifying group share permissions in Ubuntu to circumvent 0x8007003B "Unexpected Network Error" messages in Windows 10/11 when transferring files) but I have no way of checking. Anyway this new revised version of the tutorial corrects one or two small errors I discovered when rereading what I wrote, adds links to all products mentioned and is just more polished generally. I also expanded it a bit, pointing more adventurous users toward programs such as Sonarr/Radarr/Lidarr and Overseerr which can be used for automating user requests and media collection.

So then, what is this tutorial? This is a tutorial on how to build and set up your own personal media server using Ubuntu as an operating system and Plex (or Jellyfin) to not only manage your media, but to also stream that media to your devices both at home and abroad anywhere in the world where you have an internet connection. Its intent is to show you how building a personal media server and stuffing it full of films, TV, and music that you acquired through ~~indiscriminate and voracious media piracy~~ various legal methods will free you to completely ditch paid streaming services. No more will you have to pay for Disney+, Netflix, HBOMAX, Hulu, Amazon Prime, Peacock, CBS All Access, Paramount+, Crave or any other streaming service that is not named Criterion Channel. Instead whenever you want to watch your favourite films and television shows, you’ll have your own personal service that only features things that you want to see, with files that you have control over. And for music fans out there, both Jellyfin and Plex support music streaming, meaning you can even ditch music streaming services. Goodbye Spotify, YouTube Music, Tidal and Apple Music, welcome back unreasonably large MP3 (or FLAC) collections.

On the hardware front, I’m going to offer a few options catered towards different budgets and media library sizes. Getting a media server up and running using this guide will cost you anywhere from $450 CAD/$325 USD at the low end to $1500 CAD/$1100 USD at the high end (it could go higher). My server was priced closer to the higher figure, but I went and got a lot more storage than most people need. If that seems like a little much, consider for a moment, do you have a roommate, a close friend, or a family member who would be willing to chip in a few bucks towards your little project provided they get access? Well that's how I funded my server. It might also be worth thinking about the cost over time, i.e. how much you spend yearly on subscriptions vs. a one time cost of setting up a server. Additionally there's just the joy of being able to scream "fuck you" at all those show cancelling, library deleting, hedge fund vampire CEOs who run the studios through denying them your money. Drive a stake through David Zaslav's heart.

On the software side I will walk you step-by-step through installing Ubuntu as your server's operating system, configuring your storage as a RAIDz array with ZFS, sharing your zpool to Windows with Samba, running a remote connection between your server and your Windows PC, and then a little about getting started with Plex/Jellyfin. Every terminal command you will need to input will be provided, and I even share a custom bash script that will make used vs. available drive space on your server display correctly in Windows.

If you have a different preferred flavour of Linux (Arch, Manjaro, Red Hat, Fedora, Mint, OpenSUSE, CentOS, Slackware etc.) and are aching to tell me off for being basic and using Ubuntu, this tutorial is not for you. The sort of person with a preferred Linux distro is the sort of person who can do this sort of thing in their sleep. Also I don't care. This tutorial is intended for the average home computer user. This is also why we’re not using a more exotic home server solution like running everything through Docker Containers and managing it through a dashboard like Homarr or Heimdall. While such solutions are fantastic and can be very easy to maintain once you have it all set up, wrapping your brain around Docker is a whole thing in and of itself. If you do follow this tutorial and have fun putting everything together, then I would encourage you to return in a year’s time, do your research and set up everything with Docker Containers.

Lastly, this is a tutorial aimed at Windows users. Although I was a daily user of OS X for many years (roughly 2008-2023) and I've dabbled quite a bit with various Linux distributions (mostly Ubuntu and Manjaro), my primary OS these days is Windows 11. Many things in this tutorial will still be applicable to Mac users, but others (e.g. setting up shares) you will have to look up for yourself. I doubt it would be difficult to do so.

Nothing in this tutorial will require feats of computing expertise. All you will need is a basic computer literacy (i.e. an understanding of what a filesystem and directory are, and a degree of comfort in the settings menu) and a willingness to learn a thing or two. While this guide may look overwhelming at first glance, it is only because I want to be as thorough as possible. I want you to understand exactly what it is you're doing, I don't want you to just blindly follow steps. If you half-way know what you’re doing, you will be much better prepared if you ever need to troubleshoot.

Honestly, once you have all the hardware ready it shouldn't take more than an afternoon or two to get everything up and running.

(This tutorial is just shy of seven thousand words long so the rest is under the cut.)

Step One: Choosing Your Hardware

Linux is a lightweight operating system; depending on the distribution there's close to no bloat. There are recent distributions available at this very moment that will run perfectly fine on a fourteen year old i3 with 4GB of RAM. Moreover, running Plex or Jellyfin isn’t resource intensive in 90% of use cases. All this is to say, we don’t require an expensive or powerful computer. This means that there are several options available: 1) use an old computer you already have sitting around but aren't using, 2) buy a used workstation from eBay, or what I believe to be the best option, 3) order an N100 Mini-PC from AliExpress or Amazon.

Note: If you already have an old PC sitting around that you’ve decided to use, fantastic, move on to the next step.

When weighing your options, keep a few things in mind: the number of people you expect to be streaming simultaneously at any one time, the resolution and bitrate of your media library (4k video takes a lot more processing power than 1080p) and most importantly, how many of those clients are going to be transcoding at any one time. Transcoding is what happens when the playback device does not natively support direct playback of the source file. This can happen for a number of reasons, such as the playback device's native resolution being lower than the file's internal resolution, or because the source file was encoded in a video codec unsupported by the playback device.
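To make the two transcoding triggers described above concrete, here's a minimal sketch of the decision as a shell function. This is a simplified model for illustration only, not how Plex or Jellyfin actually decide; the function name `playback_mode` and its arguments are made up for this example.

```shell
# Decide whether a file can be played directly or needs transcoding.
# Arguments: file codec, file height in pixels, space-separated list of
# codecs the playback device supports, device's maximum height in pixels.
# Simplified illustration only; real media servers check much more.
playback_mode() (
    file_codec=$1
    file_height=$2
    device_codecs=$3
    device_max_height=$4

    codec_ok=no
    for codec in $device_codecs; do
        if [ "$codec" = "$file_codec" ]; then
            codec_ok=yes
        fi
    done

    if [ "$codec_ok" = no ]; then
        echo "transcode: codec $file_codec unsupported"
    elif [ "$file_height" -gt "$device_max_height" ]; then
        echo "transcode: ${file_height}p exceeds ${device_max_height}p"
    else
        echo "direct play"
    fi
)

# A 4k HEVC file sent to a 1080p device that understands HEVC.
playback_mode hevc 2160 "h264 hevc" 1080
```

Every client that ends up on a "transcode" branch at the same time is work the server has to do, which is why the number of simultaneous transcodes matters more than the number of simultaneous streams.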

Ideally we want any transcoding to be performed by hardware. This means we should be looking for a computer with an Intel processor with Quick Sync. Quick Sync is a dedicated core on the CPU die designed specifically for video encoding and decoding. This specialized hardware makes for highly efficient transcoding both in terms of processing overhead and power draw. Without these Quick Sync cores, transcoding must be brute forced through software. This takes up much more of a CPU’s processing power and requires much more energy. But not all Quick Sync cores are created equal and you need to keep this in mind if you've decided either to use an old computer or to shop for a used workstation on eBay.

Any Intel processor from second generation Core (Sandy Bridge circa 2011) onward has Quick Sync cores. It's not until 6th gen (Skylake), however, that the cores support the H.265 HEVC codec. Intel’s 7th gen (Kaby Lake) processors introduced full hardware support for 10bit HEVC. And the 11th gen (Tiger Lake and Rocket Lake) processors brought with them hardware AV1 decoding. As an example, while a 6th gen (Skylake) i5-6500 will be able to hardware transcode an 8bit H.265 encoded file, it will fall back to software transcoding if given a 10bit H.265 file. If you’ve decided to use that old PC or to look on eBay for an old Dell Optiplex keep this in mind.

Note 1: The price of old workstations varies wildly and fluctuates frequently. If you get lucky and go shopping shortly after a workplace has liquidated a large number of their workstations you can find deals for as low as $100 on a barebones system, but generally an i5-8500 workstation with 16GB RAM will cost you somewhere in the area of $260 CAD/$200 USD.

Note 2: The AMD equivalent to Quick Sync is called Video Core Next, and while it's fine, it's not as efficient and not as mature a technology. It was only introduced with the first generation Ryzen CPUs and it only got decent with their newest CPUs, and we want something cheap.

Alternatively you could forgo having to keep track of what generation of CPU is equipped with Quick Sync cores that feature support for which codecs, and just buy an N100 mini-PC. For around the same price as a used workstation, or less, you can pick up a mini-PC with an Intel N100 processor. The N100 is a four-core processor based on the 12th gen Alder Lake architecture and comes equipped with the latest revision of the Quick Sync cores. These little processors offer astounding hardware transcoding capabilities for their size and power draw. Otherwise they perform equivalent to an i5-6500, which isn't a terrible CPU. A friend of mine uses an N100 machine as a dedicated retro emulation gaming system and it does everything up to 6th generation consoles just fine. The N100 is also a remarkably efficient chip, it sips power. In fact, the difference between running one of these and an old workstation could work out to hundreds of dollars a year in energy bills depending on where you live.

You can find these Mini-PCs all over Amazon or for a little cheaper on AliExpress. They range in price from $170 CAD/$125 USD for a no name N100 with 8GB RAM to $280 CAD/$200 USD for a Beelink S12 Pro with 16GB RAM. The brand doesn't really matter, they're all coming from the same three factories in Shenzhen, go for whichever one fits your budget or has features you want. 8GB RAM should be enough, Linux is lightweight and Plex only calls for 2GB RAM. 16GB RAM might result in a slightly snappier experience, especially with ZFS. A 256GB SSD is more than enough for what we need as a boot drive. Going for a bigger drive might allow you to get away with things like creating preview thumbnails for Plex, but it’s up to you and your budget.

The Mini-PC I wound up buying was a Firebat AK2 Plus with 8GB RAM and a 256GB SSD. It looks like this:

Note: If you decide to order a Mini-PC from AliExpress, be sure to note the type of power adapter it ships with. The mini-PC I bought came with an EU power adapter and I had to supply my own North American power supply. Thankfully this is a minor issue as barrel plug 30W/12V/2.5A power adapters are easy to find and can be had for $10.

Step Two: Choosing Your Storage

Storage is the most important part of our build. It is also the most expensive. Thankfully it’s also the most easily upgrade-able down the line.

For people with a smaller media collection (4TB to 8TB), a more limited budget, or who will only ever have two simultaneous streams running, I would say that the most economical course of action would be to buy a USB 3.0 8TB external HDD. Something like this one from Western Digital or this one from Seagate. One of these external drives will cost you in the area of $200 CAD/$140 USD. Down the line you could add a second external drive or replace it with a multi-drive RAIDz set up such as detailed below.

If a single external drive is the path for you, move on to step three.

For people with larger media libraries (12TB+), who prefer media in 4k, or who care about data redundancy, the answer is a RAID array featuring multiple HDDs in an enclosure.

Note: If you are using an old PC or used workstation as your server and have the room for at least three 3.5" drives, and as many open SATA ports on your motherboard, you won't need an enclosure, just install the drives into the case. If your old computer is a laptop or doesn’t have room for more internal drives, then I would suggest an enclosure.

The minimum number of drives needed to run a RAIDz array is three, and seeing as RAIDz is what we will be using, you should be looking for an enclosure with three to five bays. I think that four disks makes for a good compromise for a home server. Regardless of whether you go for a three, four, or five bay enclosure, do be aware that in a RAIDz array the space equivalent of one of the drives will be dedicated to parity, leaving a usable fraction of 1 − 1/n of the raw capacity, where n is the number of drives. For example, in a four bay enclosure equipped with four 12TB drives, if we configured our drives in a RAIDz1 array we would be left with a total of 36TB of usable space (48TB raw size). The reason why we might sacrifice storage space in such a manner will be explained in the next section.
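The capacity arithmetic above can be checked with a few lines of shell. This is a back-of-the-envelope sketch that ignores filesystem overhead and assumes equal-sized drives measured in whole TB; the function name `raidz_usable_tb` is made up for this example.

```shell
# Usable capacity of a RAIDz vdev in TB, ignoring filesystem overhead.
# Arguments: number of drives, size of each drive in TB, parity level
# (1 for RAIDz1, 2 for RAIDz2). Hypothetical helper for illustration.
raidz_usable_tb() (
    drives=$1
    size_tb=$2
    parity=$3
    # One drive's worth of space is given up per parity level.
    echo $(( (drives - parity) * size_tb ))
)

# Four 12TB drives in RAIDz1: 48TB raw, 36TB usable.
raidz_usable_tb 4 12 1
```

The same function shows the cost of extra redundancy: five 12TB drives with two drives' worth of parity also come out to 36TB usable.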

A four bay enclosure will cost somewhere in the area of $200 CAD/$140 USD. You don't need anything fancy, we don't need anything with hardware RAID controls (RAIDz is done entirely in software) or even USB-C. An enclosure with USB 3.0 will perform perfectly fine. Don’t worry too much about USB speed bottlenecks. A mechanical HDD will be limited by the speed of its mechanism long before it will be limited by the speed of a USB connection. I've seen decent looking enclosures from TerraMaster, Yottamaster, Mediasonic and Sabrent.

When it comes to selecting the drives, as of this writing, the best value (dollar per gigabyte) are those in the range of 12TB to 20TB. I settled on 12TB drives myself. If 12TB to 20TB drives are out of your budget, go with what you can afford, or look into refurbished drives. I'm not sold on the idea of refurbished drives but many people swear by them.

When shopping for hard drives, search for drives designed specifically for NAS use. Drives designed for NAS use typically have better vibration dampening and are designed to be active 24/7. They will also often make use of CMR (conventional magnetic recording) as opposed to SMR (shingled magnetic recording). This nets them a sizable read/write performance bump over typical desktop drives. Seagate Ironwolf and Toshiba NAS are both well regarded brands when it comes to NAS drives. I would avoid Western Digital Red drives at this time. WD Reds were a go to recommendation up until earlier this year when it was revealed that they feature firmware that will throw up false SMART warnings telling you to replace the drive at the three year mark quite often when there is nothing at all wrong with that drive. It will likely even be good for another six, seven, or more years.

Step Three: Installing Linux

For this step you will need a USB thumbdrive of at least 6GB in capacity, an .ISO of Ubuntu, and a way to make that thumbdrive bootable media.

First download a copy of Ubuntu desktop. For best performance we could download the Server release, but for new Linux users I would recommend against it. The server release is strictly command line interface only, and having a GUI is very helpful for most people. Not many people are wholly comfortable doing everything through the command line, I'm certainly not one of them, and I grew up with DOS 6.0. 22.04.3 Jammy Jellyfish is the current Long Term Support release, this is the one to get.

Download the .ISO and then download and install balenaEtcher on your Windows PC. BalenaEtcher is an easy to use program for creating bootable media, you simply insert your thumbdrive, select the .ISO you just downloaded, and it will create a bootable installation media for you.

Once you've made your bootable media and you've got your Mini-PC (or your old PC/used workstation) in front of you, hook it directly into your router with an ethernet cable, and then plug in the HDD enclosure, a monitor, a mouse and a keyboard. Now turn that sucker on and hit whatever key gets you into the BIOS (typically ESC, DEL or F2). If you’re using a Mini-PC check to make sure that the PL1 and PL2 power limits are set correctly, my N100's PL1 limit was set at 10W, a full 20W under the chip's power limit. Also make sure that the RAM is running at the advertised speed. My Mini-PC’s RAM was set at 2333MHz out of the box when it should have been 3200MHz. Once you’ve done that, key over to the boot order and place the USB drive first in the boot order. Then save the BIOS settings and restart.

After you restart you’ll be greeted by Ubuntu's installation screen. Installing Ubuntu is really straightforward, select the "minimal" installation option, as we won't need anything on this computer except for a browser (Ubuntu comes preinstalled with Firefox) and Plex Media Server/Jellyfin Media Server. Also remember to delete and reformat that Windows partition! We don't need it.

Step Four: Installing ZFS and Setting Up the RAIDz Array

Note: If you opted for just a single external HDD skip this step and move onto setting up a Samba share.

Once Ubuntu is installed it's time to configure our storage by installing ZFS to build our RAIDz array. ZFS is a "next-gen" file system that is both massively flexible and massively complex. It's capable of snapshot backup and self healing error correction, and ZFS pools can be configured with drives operating in a supplemental manner alongside the storage vdev (e.g. fast cache, dedicated separate intent log, hot spares etc.). It's also a file system very amenable to fine tuning. Block and sector size are adjustable to use case and you're afforded the option of different methods of inline compression. If you'd like a very detailed overview and explanation of its various features and tips on tuning a ZFS array check out these articles from Ars Technica. For now we're going to ignore all these features and keep it simple, we're going to pull our drives together into a single vdev running in RAIDz which will be the entirety of our zpool, no fancy cache drive or SLOG.

Open up the terminal and type the following commands:

sudo apt update

then

sudo apt install zfsutils-linux

This will install the ZFS utility. Verify that it's installed with the following command:

zfs --version

Now, it's time to check that the HDDs we have in the enclosure are healthy, running, and recognized. We also want to find out their device IDs and take note of them:

sudo fdisk -l

Note: You might be wondering why some of these commands require "sudo" in front of them while others don't. "Sudo" is short for "super user do". When and where "sudo" is used has to do with the way permissions are set up in Linux. Only the "root" user has the access level to perform certain tasks in Linux. As a matter of security and safety regular user accounts are kept separate from the "root" user. It's not advised (or even possible) to boot into Linux as "root" with most modern distributions. Instead by using "sudo" our regular user account is temporarily given the power to do otherwise forbidden things. Don't worry about it too much at this stage, but if you want to know more check out this introduction.

If everything is working you should get a list of the various drives detected along with their device IDs which will look like this: /dev/sdc. You can also check the device IDs of the drives by opening the disk utility app. Jot these IDs down as we'll need them for our next step, creating our RAIDz array.
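If the fdisk output feels like a lot to read through, another option is the lsblk utility, which can print one line per drive. The sketch below is only an illustration: the `list_disks` function name is made up, and the sample input stands in for what `lsblk -d -n -o NAME,SIZE,TYPE` might print on your machine.

```shell
# Read "NAME SIZE TYPE" lines, as produced by
#   lsblk -d -n -o NAME,SIZE,TYPE
# and print the /dev path and size of every whole disk.
# list_disks is a hypothetical helper for illustration.
list_disks() {
    while read -r name size type; do
        if [ "$type" = "disk" ]; then
            echo "/dev/$name $size"
        fi
    done
}

# Sample input standing in for real lsblk output:
printf 'sda 238.5G disk\nsdb 10.9T disk\nsr0 1024M rom\n' | list_disks
```

On the server itself you would pipe the real command into the function instead of the sample text.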

RAIDz is similar to RAID-5 in that instead of striping your data over multiple disks, exchanging redundancy for speed and available space (RAID-0), or mirroring your data by writing two copies of every piece (RAID-1), it instead writes parity blocks across the disks in addition to striping. This provides a balance of speed, redundancy and available space. If a single drive fails, the parity blocks on the working drives can be used to reconstruct the entire array as soon as a replacement drive is added.

Additionally, RAIDz improves on some of the common RAID-5 flaws. It's more resilient and capable of self healing, as it is capable of automatically checking for errors against a checksum. It's more forgiving in this way, and it's likely that you'll be able to detect when a drive is dying well before it fails. A RAIDz array can survive the loss of any one drive.

Note: While RAIDz is indeed resilient, if a second drive fails during the rebuild, you're fucked. Always keep backups of things you can't afford to lose. This tutorial, however, is not about proper data safety.

To create the pool, use the following command:

sudo zpool create "zpoolnamehere" raidz "device IDs of drives we're putting in the pool"

For example, let's creatively name our zpool "mypool". This pool will consist of four drives which have the device IDs: sdb, sdc, sdd, and sde. The resulting command will look like this:

sudo zpool create mypool raidz /dev/sdb /dev/sdc /dev/sdd /dev/sde

If as an example you bought five HDDs and decided you wanted more redundancy, dedicating two drives to this purpose, we would modify the command to "raidz2" and the command would look something like the following:

sudo zpool create mypool raidz2 /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf

An array configured like this is known as RAIDz2 and is able to survive two disk failures.
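Since a typo in this command can point ZFS at the wrong drive, it can help to assemble and read the command before running it. Here's a small sketch that only prints the command and never executes it. The function name `build_zpool_cmd` is made up, and the device names are the placeholder IDs from the examples above.

```shell
# Print (but do not run) the zpool create command for a pool.
# Arguments: pool name, parity level (1 or 2), then the device names.
# build_zpool_cmd is a hypothetical helper; sdb, sdc etc. are placeholders
# for whatever device IDs you jotted down earlier.
build_zpool_cmd() (
    pool=$1
    parity=$2
    shift 2
    if [ "$parity" -eq 1 ]; then
        vdev_type=raidz
    else
        vdev_type="raidz$parity"
    fi
    cmd="sudo zpool create $pool $vdev_type"
    for dev in "$@"; do
        cmd="$cmd /dev/$dev"
    done
    echo "$cmd"
)

build_zpool_cmd mypool 1 sdb sdc sdd sde
```

The printed line matches the four-drive example above; passing 2 as the parity level with five device names reproduces the RAIDz2 command.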

Once the zpool has been created, we can check its status with the command:

zpool status

Or more concisely with:

zpool list
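If you'd rather have a one-line answer than read the whole status table, zpool list can print just the pool name and health in a script-friendly form with -H -o name,health. The sketch below filters that output for anything that isn't ONLINE; the `unhealthy_pools` function name and the sample pool names are made up for illustration.

```shell
# Read "name<TAB>health" lines, as produced by
#   zpool list -H -o name,health
# and print any pool whose health is not ONLINE.
# unhealthy_pools is a hypothetical helper for illustration.
unhealthy_pools() {
    while IFS="$(printf '\t')" read -r name health; do
        if [ "$health" != "ONLINE" ]; then
            echo "$name is $health"
        fi
    done
}

# Sample input standing in for real zpool output:
printf 'mypool\tONLINE\nbackup\tDEGRADED\n' | unhealthy_pools
```

No output means every pool reported ONLINE. On the server you would pipe the real zpool list command into the function.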

The nice thing about ZFS as a file system is that a pool is ready to go immediately after creation. If we were to set up a traditional RAID-5 array using mdadm, we'd have to sit through a potentially hours long process of reformatting and partitioning the drives. Instead we're ready to go right out the gates.

The zpool should be automatically mounted to the filesystem after creation, check on that with the following:

df -hT | grep zfs

Note: If your computer ever loses power suddenly, say in event of a power outage, you may have to re-import your pool. In most cases, ZFS will automatically import and mount your pool, but if it doesn’t and you can't see your array, simply open the terminal and type sudo zpool import -a.

By default a zpool is mounted at /"zpoolname". The pool should be under our ownership but let's make sure with the following command:

sudo chown -R "yourlinuxusername" /"zpoolname"

Note: Changing file and folder ownership with "chown" and file and folder permissions with "chmod" are essential commands for much of the admin work in Linux, but we won't be dealing with them extensively in this guide. If you'd like a deeper tutorial and explanation you can check out these two guides: chown and chmod.

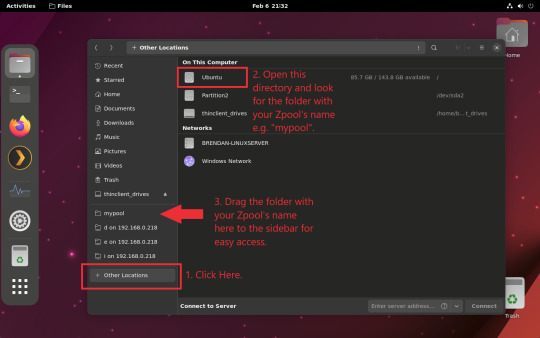

You can access the zpool file system through the GUI by opening the file manager (the Ubuntu default file manager is called Nautilus) and clicking on "Other Locations" on the sidebar, then entering the Ubuntu file system and looking for a folder with your pool's name. Bookmark the folder on the sidebar for easy access.

Your storage pool is now ready to go. Assuming that we already have some files on our Windows PC we want to copy over, we're going to need to install and configure Samba to make the pool accessible in Windows.

Step Five: Setting Up Samba/Sharing

Samba is what's going to let us share the zpool with Windows and allow us to write to it from our Windows machine. First let's install Samba with the following commands:

sudo apt-get update

then

sudo apt-get install samba

Next create a password for Samba.

sudo smbpasswd -a "yourlinuxusername"

It will then prompt you to create a password. Just reuse your Ubuntu user password for simplicity's sake.

Note: if you're using just a single external drive, replace the zpool location in the following commands with wherever your external drive is mounted. For more information see this guide on mounting an external drive in Ubuntu.

After you've created a password we're going to create a shareable folder in our pool with this command

mkdir /"zpoolname"/"foldername"

Now we're going to open the smb.conf file and make that folder shareable. Enter the following command.

sudo nano /etc/samba/smb.conf

This will open the .conf file in nano, the terminal text editor program. Now at the end of smb.conf add the following entry:

["foldername"]

path = /"zpoolname"/"foldername"

available = yes

valid users = "yourlinuxusername"

read only = no

writable = yes

browseable = yes

guest ok = no

Ensure that there are no line breaks between the lines and that there's a space on both sides of the equals sign. Our next step is to allow Samba traffic through the firewall:

sudo ufw allow samba

Finally restart the Samba service:

sudo systemctl restart smbd
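If you'd rather not type the share entry by hand, something like the following could generate it. This is a sketch, not the method used in this guide: print_share_stanza is a made-up helper name, and the arguments are the same placeholders used above (folder name, pool name, Linux username).

```shell
#!/bin/bash
# Hypothetical helper: prints a share entry like the one added to smb.conf above.
# Arguments: folder (share) name, pool name, Linux user name.
print_share_stanza() {
    local folder="$1" pool="$2" user="$3"
    cat <<EOF
[$folder]
path = /$pool/$folder
available = yes
valid users = $user
read only = no
writable = yes
browseable = yes
guest ok = no
EOF
}
```

For example, print_share_stanza media tank alice | sudo tee -a /etc/samba/smb.conf would append an entry for a folder called media in a pool called tank (all three names are placeholders). Running testparm -s afterwards, a tool that ships with Samba, should flag any syntax problems in the file before you restart the service.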

At this point we'll be able to access the pool, browse its contents, and read and write to it from Windows. But there's one more thing left to do. Windows doesn't natively support the ZFS file system and will read the used/available/total space in the pool incorrectly: it reads available space as total drive space, and all used space as null. This leads to Windows only displaying a dwindling amount of "available" space as the drives are filled. Functionally this doesn't actually matter, since we can still read from and write to the disk; it just makes it difficult to tell at a glance the proportion of used/available space. So this is an optional step, but one I recommend, and we can fix it! (This step is also unnecessary if you're just using a single external drive.) What we're going to do is write a little shell script in bash. Open nano in the terminal with the command:

nano

Now insert the following code:

#!/bin/bash
CUR_PATH=`pwd`
ZFS_CHECK_OUTPUT=$(zfs get type $CUR_PATH 2>&1 > /dev/null) > /dev/null
if [[ $ZFS_CHECK_OUTPUT == *not\ a\ ZFS* ]]
then
    IS_ZFS=false
else
    IS_ZFS=true
fi
if [[ $IS_ZFS = false ]]
then
    df $CUR_PATH | tail -1 | awk '{print $2" "$4}'
else
    USED=$((`zfs get -o value -Hp used $CUR_PATH` / 1024)) > /dev/null
    AVAIL=$((`zfs get -o value -Hp available $CUR_PATH` / 1024)) > /dev/null
    TOTAL=$(($USED+$AVAIL)) > /dev/null
    echo $TOTAL $AVAIL
fi

Save the script as "dfree.sh" to /home/"yourlinuxusername" then change the permissions of the file to make it executable with this command:

sudo chmod 774 dfree.sh
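The arithmetic in the ZFS branch of the script is easier to see in isolation. Samba's dfree command expects one line containing two numbers, total space and available space, counted in 1024-byte blocks by default, while zfs get -Hp reports bytes. The sketch below uses a made-up function name (dfree_line) and takes the byte counts as arguments instead of querying ZFS, so it can be tried on any machine.

```shell
#!/bin/bash
# Hypothetical helper mirroring the ZFS branch of dfree.sh.
# Arguments: used bytes, available bytes (as `zfs get -Hp` would print them).
# Output: "<total> <available>" in 1024-byte blocks.
dfree_line() {
    local used_kib=$(( $1 / 1024 ))
    local avail_kib=$(( $2 / 1024 ))
    echo "$(( used_kib + avail_kib )) $avail_kib"
}
```

A pool with 3 GiB used and 1 GiB free (dfree_line 3221225472 1073741824) yields "4194304 1048576", which is what lets Windows show a 4 GiB drive with 1 GiB free instead of a drive that appears to shrink.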

Now open smb.conf with sudo again:

sudo nano /etc/samba/smb.conf

Now add this entry to the top of the configuration file to direct Samba to use the results of our script when Windows asks for a reading on the pool's used/available/total drive space:

[global]

dfree command = /home/"yourlinuxusername"/dfree.sh

Save the changes to smb.conf and then restart Samba again with the terminal:

sudo systemctl restart smbd

Now there’s one more thing we need to do to fully set up the Samba share, and that’s to modify a hidden group permission. In the terminal window type the following command:

sudo usermod -a -G sambashare "yourlinuxusername"

Then restart samba again:

sudo systemctl restart smbd

If we don’t do this last step, everything will appear to work fine, and you will even be able to see and map the drive from Windows and begin transferring files, but you'd soon run into a lot of frustration: every ten minutes or so a file would fail to transfer and you would get a window announcing “0x8007003B Unexpected Network Error”. This window would require your manual input to continue the transfer with the next file in the queue, and at the end it would reattempt whichever files failed the first time around. 99% of the time they’ll go through on that second try, but this is still a major pain in the ass, especially if you’ve got a lot of data to transfer or you want to step away from the computer for a while.

It turns out samba can act a little weirdly with the higher read/write speeds of RAIDz arrays and transfers from Windows, and will intermittently crash and restart itself if this group option isn’t changed. Inputting the above command will prevent you from ever seeing that window.

The last thing we're going to do before switching over to our Windows PC is grab the IP address of our Linux machine. Enter the following command:

hostname -I

This will spit out this computer's IP address on the local network (it will look something like 192.168.0.x). Write it down. It might be a good idea once you're done here to go into your router settings and reserve that IP for your Linux system in the DHCP settings. Check the manual for your specific model of router on how to access its settings; typically it can be accessed by opening a browser and typing http://192.168.0.1 in the address bar, but your router may be different.
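On machines with more than one network interface, or with IPv6 enabled, hostname -I can print several addresses on one line. A small filter like the one below picks out the first IPv4-looking one. The function name first_ipv4 is made up for this sketch, and it only checks the dotted-quad shape, not whether each number is in range.

```shell
#!/bin/bash
# Hypothetical helper: read `hostname -I` output on stdin and print
# the first address that looks like a dotted-quad IPv4 address.
first_ipv4() {
    tr -s ' ' '\n' | grep -E -m 1 '^[0-9]{1,3}(\.[0-9]{1,3}){3}$'
}
```

Usage would be hostname -I | first_ipv4.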

Okay we’re done with our Linux computer for now. Get on over to your Windows PC, open File Explorer, right click on Network and click "Map network drive". Select Z: as the drive letter (you don't want to map the network drive to a letter you could conceivably be using for other purposes) and enter the IP of your Linux machine and the name of the share like so: \\"LINUXCOMPUTERLOCALIPADDRESSGOESHERE"\"foldernamegoeshere"\. The share name is the one you put in square brackets in smb.conf. Windows will then ask you for your username and password; enter the ones you set earlier in Samba and you're good. If you've done everything right it should look something like this:

You can now start moving media over from Windows to the share folder. It's a good idea to have a hard line running to all machines. Moving files over Wi-Fi is going to be torturously slow; the only thing that’s going to make the transfer time tolerable (hours instead of days) is a solid wired connection between both machines and your router.

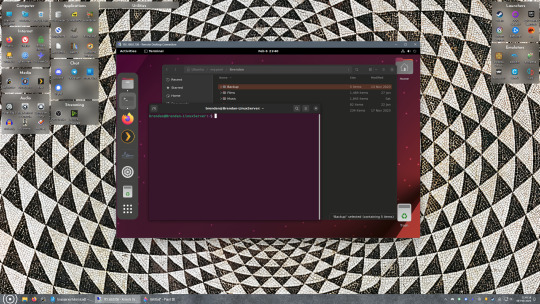

Step Six: Setting Up Remote Desktop Access to Your Server

After the server is up and going, you’ll want to be able to access it remotely from Windows. Barring serious maintenance/updates, this is how you'll access it most of the time. On your Linux system open the terminal and enter:

sudo apt install xrdp

Then:

sudo systemctl enable xrdp

Once it's finished installing, open “Settings” on the sidebar and turn off "automatic login" in the User category. Then log out of your account. Attempting to remotely connect to your Linux computer while you’re logged in will result in a black screen!

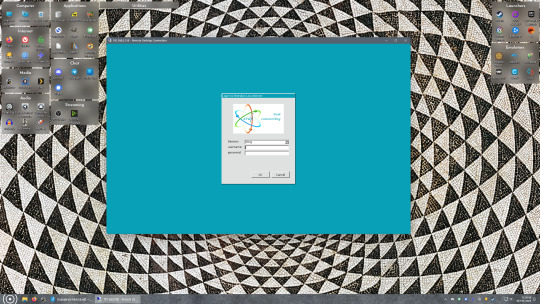

Now get back on your Windows PC, open search and look for "RDP". A program called "Remote Desktop Connection" should pop up, open this program as an administrator by right-clicking and selecting “run as an administrator”. You’ll be greeted with a window. In the field marked “Computer” type in the IP address of your Linux computer. Press connect and you'll be greeted with a new window and prompt asking for your username and password. Enter your Ubuntu username and password here.

If everything went right, you’ll be logged into your Linux computer. If the performance is sluggish, adjust the display options. Lowering the resolution and colour depth does a lot to make the interface feel snappier.

Remote access is how we're going to be using our Linux system from now on, barring edge cases like needing to get into the BIOS or upgrading to a new version of Ubuntu. Everything else, from performing maintenance like a monthly zpool scrub to checking zpool status and updating software, can be done remotely.
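As a rough sketch of what that monthly maintenance could look like from a remote terminal: zpool scrub and zpool status -x are standard ZFS commands, but the wrapper function names here are made up, and the health check relies on zpool status -x printing "all pools are healthy" when there is nothing to report.

```shell
#!/bin/bash
# Hypothetical wrapper names; pass your own pool name as the argument.

# Read `zpool status -x` output on stdin; succeed only if ZFS reports
# that every pool is healthy.
pools_healthy() {
    grep -q 'all pools are healthy'
}

# Start a scrub on the named pool, then print a one-line health summary.
scrub_and_report() {
    local pool="$1"
    sudo zpool scrub "$pool"
    if zpool status -x | pools_healthy; then
        echo "OK: all pools healthy"
    else
        echo "WARNING: run 'zpool status' for details"
    fi
}
```

The scrub itself runs in the background, so the summary reflects the pool's state at the moment the scrub starts; check zpool status again later to see the scrub's progress and result.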

This is how my server lives its life now, happily humming and chirping away on the floor next to the couch in a corner of the living room.

Step Seven: Plex Media Server/Jellyfin

Okay we’ve got all the ground work finished and our server is almost up and running. We’ve got Ubuntu up and running, our storage array is primed, we’ve set up remote connections and sharing, and maybe we’ve moved over some of our favourite movies and TV shows.

Now we need to decide on the media server software to use which will stream our media to us and organize our library. For most people I’d recommend Plex. It just works 99% of the time. That said, Jellyfin has a lot to recommend it too, even if it is rougher around the edges. Some people run both simultaneously; it’s not that big of an extra strain. I do recommend doing a little bit of your own research into the features each platform offers, but as a quick rundown, consider some of the following points:

Plex is closed source and is funded through PlexPass purchases while Jellyfin is open source and entirely user driven. This means a number of things. For one, Plex requires you to purchase a “PlexPass” (bought as a one-time lifetime fee of $159.99 CDN/$120 USD, or paid for on a monthly or yearly subscription basis) to access certain features, like hardware transcoding (and we want hardware transcoding) or automated intro/credits detection and skipping; Jellyfin offers some of these features for free through plugins. Plex supports a lot more devices than Jellyfin and updates more frequently. That said, Jellyfin's Android and iOS apps are completely free, while the Plex Android and iOS apps must be activated for a one-time cost of $6 CDN/$5 USD. But that $6 fee gets you a mobile app that is much more functional and features a unified UI across platforms; the Plex mobile apps are simply a more polished experience. The Jellyfin apps are a bit of a mess and the iOS and Android versions are very different from each other.

Jellyfin’s actual media player is more fully featured than Plex's, but on the other hand Jellyfin's UI, library customization and automatic media tagging really pale in comparison to Plex. Streaming your music library is free through both Jellyfin and Plex, but Plex offers the PlexAmp app for dedicated music streaming which boasts a number of fantastic features, unfortunately some of those fantastic features require a PlexPass. If your internet is down, Jellyfin can still do local streaming, while Plex can fail to play files unless you've got it set up a certain way. Jellyfin has a slew of neat niche features like support for Comic Book libraries with the .cbz/.cbt file types, but then Plex offers some free ad-supported TV and films, they even have a free channel that plays nothing but Classic Doctor Who.

Ultimately it's up to you, I settled on Plex because although some features are pay-walled, it just works. It's more reliable and easier to use, and a one-time fee is much easier to swallow than a subscription. I had a pretty easy time getting my boomer parents and tech illiterate brother introduced to and using Plex and I don't know if I would've had as easy a time doing that with Jellyfin. I do also need to mention that Jellyfin does take a little extra bit of tinkering to get going in Ubuntu, you’ll have to set up process permissions, so if you're more tolerant to tinkering, Jellyfin might be up your alley and I’ll trust that you can follow their installation and configuration guide. For everyone else, I recommend Plex.

So pick your poison: Plex or Jellyfin.

Note: The easiest way to download and install either of these packages in Ubuntu is through Snap Store.

After you've installed one (or both), opening either app will launch a browser window into the browser version of the app allowing you to set all the options server side.

The process of creating media libraries is essentially the same in both Plex and Jellyfin. You create separate libraries for Television, Movies, and Music and add the folders which contain the respective types of media to their respective libraries. The only difficult or time-consuming aspect is ensuring that your files and folders follow the appropriate naming conventions:

Plex naming guide for Movies

Plex naming guide for Television

Jellyfin follows the same naming rules but I find their media scanner to be a lot less accurate and forgiving than Plex. Once you've selected the folders to be scanned the service will scan your files, tagging everything and adding metadata. Although I do find Plex more accurate, it can still erroneously tag some things and you might have to manually clean up some tags in a large library. (When I initially created my library it tagged the 1963-1989 Doctor Who as some Korean soap opera and I needed to manually select the correct match after which everything was tagged normally.) It can also be a bit testy with anime (especially OVAs), so be sure to check TVDB to ensure that you have your files and folders structured and named correctly. If something is not showing up at all, double check the name.
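To make the naming conventions concrete, here is a sketch of two helpers that build paths in the layout the Plex guides describe: "Title (Year)/Title (Year).ext" for movies and "Show/Season NN/Show - sNNeNN.ext" for television. The function names are made up for illustration, and the season and episode should be passed as plain integers without leading zeros.

```shell
#!/bin/bash
# Hypothetical helpers that build paths in the layout the Plex naming
# guides describe. Pass season/episode as plain integers (no leading zeros).

movie_path() {
    local title="$1" year="$2" ext="$3"
    printf '%s (%s)/%s (%s).%s\n' "$title" "$year" "$title" "$year" "$ext"
}

tv_path() {
    local show="$1" season="$2" episode="$3" ext="$4"
    printf '%s/Season %02d/%s - s%02de%02d.%s\n' \
        "$show" "$season" "$show" "$season" "$episode" "$ext"
}
```

For instance, tv_path "Doctor Who (1963)" 1 2 mkv prints "Doctor Who (1963)/Season 01/Doctor Who (1963) - s01e02.mkv". Including the year in the show name is one way to help the scanner tell apart series that share a title.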

Once that's done, organizing and customizing your library is easy. You can set up collections, grouping items together to fit a theme or collect together all the entries in a franchise. You can make playlists, and add custom artwork to entries. It's fun setting up collections with posters to match, there are even several websites dedicated to help you do this like PosterDB. As an example, below are two collections in my library, one collecting all the entries in a franchise, the other follows a theme.

My Star Trek collection, featuring all eleven television series, and thirteen films.

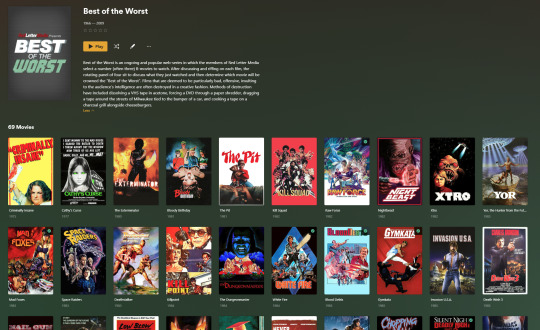

My Best of the Worst collection, featuring sixty-nine films previously showcased on RedLetterMedia’s Best of the Worst. They’re all absolutely terrible and I love them.

As for settings, ensure you've got Remote Access going (it should work automatically), and be sure to set your upload speed after running a speed test. In the library settings set the database cache to 2000MB to ensure a snappier and more responsive browsing experience, and then check that playback quality is set to original/maximum. If you’re severely bandwidth limited on your upload and have remote users, you might want to limit the remote stream bitrate to something more reasonable. As a point of comparison, Netflix’s 1080p bitrate is approximately 5 Mbps, although almost anyone watching through a Chromium-based browser is streaming at 720p and 3 Mbps. Other than that you should be good to go. For actually playing your files, there's a Plex app for just about every platform imaginable. I mostly watch television and films on my laptop using the Windows Plex app, but I also use the Android app, which can broadcast to the Chromecast connected to the TV in the office, and the Android TV app for our smart TV. Both are fully functional and easy to navigate, and I can also attest to the OS X version being equally functional.
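A quick way to pick a remote bitrate cap is to divide your measured upload speed by the per-stream bitrate and see how many simultaneous remote viewers that allows. The sketch below uses a made-up function name and whole-number Mbps values, and it ignores protocol overhead and anything else sharing the connection.

```shell
#!/bin/bash
# Hypothetical helper: how many simultaneous remote streams fit in a
# given upload speed. Both arguments are whole numbers in Mbps.
max_streams() {
    local upload_mbps="$1" stream_mbps="$2"
    echo $(( upload_mbps / stream_mbps ))
}
```

With an assumed 20 Mbps upload and a 5 Mbps cap, max_streams 20 5 gives 4 streams; with 12 Mbps upload the same cap gives only 2.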

Part Eight: Finding Media

Now, this is not really a piracy tutorial, there are plenty of those out there. But if you’re unaware, BitTorrent is free and pretty easy to use, just pick a client (qBittorrent is the best) and go find some public trackers to peruse. Just know now that all the best trackers are private and invite only, and that they can be exceptionally difficult to get into. I’m already on a few, and even then, some of the best ones are wholly out of my reach.

If you decide to take the left hand path and turn to Usenet you’ll have to pay. First you’ll need to sign up with a provider like Newshosting or EasyNews for access to Usenet itself, and then to actually find anything you’re going to need to sign up with an indexer like NZBGeek or NZBFinder. There are dozens of indexers, and many people cross post between them, but for more obscure media it’s worth checking multiple. You’ll also need a binary downloader like SABnzbd. That caveat aside, Usenet is faster, bigger, older, less traceable than BitTorrent, and altogether slicker. I honestly prefer it, and I'm kicking myself for taking this long to start using it because I was scared off by the price. I’ve found so many things on Usenet that I had sought in vain elsewhere for years, like a 2010 Italian film about a massacre perpetrated by the SS that played the festival circuit but never received a home media release; some absolute hero uploaded a rip of a festival screener DVD to Usenet. Anyway, figure out the rest of this shit on your own and remember to use protection, get yourself behind a VPN, use a SOCKS5 proxy with your BitTorrent client, etc.

On the legal side of things, if you’re around my age, you (or your family) probably have a big pile of DVDs and Blu-Rays sitting around unwatched and half forgotten. Why not do a bit of amateur media preservation, rip them and upload them to your server for easier access? (Your tools for this are going to be Handbrake to do the ripping and AnyDVD to break any encryption.) I went to the trouble of ripping all my SCTV DVDs (five box sets worth) because none of it is on streaming nor could it be found on any pirate source I tried. I’m glad I did, forty years on it’s still one of the funniest shows to ever be on TV.

Part Nine/Epilogue: Sonarr/Radarr/Lidarr and Overseerr

There are a lot of ways to automate your server for better functionality or to add features you and other users might find useful. Sonarr, Radarr, and Lidarr are a part of a suite of “Servarr” services (there’s also Readarr for books and Whisparr for adult content) that allow you to automate the collection of new episodes of TV shows (Sonarr), new movie releases (Radarr) and music releases (Lidarr). They hook in to your BitTorrent client or Usenet binary newsgroup downloader and crawl your preferred Torrent trackers and Usenet indexers, alerting you to new releases and automatically grabbing them. You can also use these services to manually search for new media, and even replace/upgrade your existing media with better quality uploads. They’re really a little tricky to set up on a bare metal Ubuntu install (ideally you should be running them in Docker Containers), and I won’t be providing a step by step on installing and running them, I’m simply making you aware of their existence.

The other bit of kit I want to make you aware of is Overseerr which is a program that scans your Plex media library and will serve recommendations based on what you like. It also allows you and your users to request specific media. It can even be integrated with Sonarr/Radarr/Lidarr so that fulfilling those requests is fully automated.

And you're done. It really wasn't all that hard. Enjoy your media. Enjoy the control you have over that media. And be safe in the knowledge that no hedgefund CEO motherfucker who hates the movies but who is somehow in control of a major studio will be able to disappear anything in your library as a tax write-off.

Note

Any tips for getting 30 prebuilt PCs for $1500 each

We're going to play a game where I show tumblr what I do at work by doing it on tumblr. You can answer my questions in successive anonymous asks. My responses to you will be bracketed by dashed lines, with instructions and commentary before and after.

---------------------------

Hi Anon!

I can definitely help you with your desktop needs. Can you tell me whether you're looking to minimize your costs, or get the maximum amount of computer that I can get you for a per-unit price of $1500?

Here are some details that will help me narrow down options that are a good fit for your situation:

Very generally, what will these be for? Basic office use (browsing, office suite)? Video Production? CAD? Finance? Medical providers? Educators?

What date are you looking to have these machines in place?

Is there a specific type of software that you know will be installed on these devices, and if so can you get me the hardware specs required by the software vendor?

Please let me know if you've got any questions, or if there is anything that I can do for you.

Thanks! - Ms-D

-----------------------------

The average cost of business desktops that I sell at work is $700-$900; these are devices that I would give an anticipated lifespan of 7 years, with hardware upgrades planned at 5 years. This is for a mid-range desktop with a 3-year next business day onsite warranty, no software, and does not include the cost of tax, shipping, or configuration. The cost of labor can come close to the cost of the machine for configuration. If I were *PERSONALLY* deploying these machines (pulling them out of the box, debloating, creating profiles, installing software, reboxing, transporting to the site, installing and connecting to peripherals) I'd probably charge around $200-300 per device. My work charges a lot more. Because of that, a 1500 computer is quite likely to be a 700 computer with three hours of estimated labor. If you've got an in-house IT department and aren't going to be paying through the nose for setup, you can get *a lot* of business-class computer for $1500.

If someone at work asked me for a $1500 computer, I would assume that was the cost of the machine ONLY, no peripherals, no configuration, no installation, no software, though I would try to consider both tax and our markup and would look for devices that would maximize performance while under-but-close-to the mark. If I found something that was slightly over (say by up to $70), I would drop our markup to get closer to the client's budget.

What this means for YOU, the computer consumer, is that when you're looking at a computer you need to consider the following in your budget, NOT just the sticker price.

Computer Cost

Software Cost

Setup Cost (if you're not doing it yourself)

Shipping Cost

Tax

Peripherals (computers almost all come with a mouse and a keyboard, these are usually inexpensive but very sturdy; if you want a nice keyboard and an ergonomic mouse you have to buy your own)

Whether you will LOSE peripherals when you replace your current device - do you need to buy an external optical disk drive if your old machine had a CD drive but the new machine doesn't?

Those things can add hundreds of dollars to your total cost, so figure out how much that will be so that you can figure out what your ACTUAL budget for your computer is.
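The budgeting step above is just subtraction, sketched here with a made-up function name. The first argument is the total you can spend and the rest are the extra costs from the list; every figure in the usage note is a placeholder, not a quote.

```shell
#!/bin/bash
# Hypothetical helper: subtract every extra cost from the total budget
# and print what is left for the computer itself (whole dollars).
hardware_budget() {
    local total="$1"; shift
    local cost
    for cost in "$@"; do
        total=$(( total - cost ))
    done
    echo "$total"
}
```

For example, hardware_budget 1500 100 250 50 90 60 (software, setup, shipping, tax, peripherals) leaves 950 for the machine.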

(Also your computer shouldn't be plugged directly into the wall; if you're getting ready to replace a machine and you don't already own a desktop UPS, a desktop UPS should be part of the cost of your next machine!)

Note

Very much agree with your colleagues recommending Logitech - personally, mine is a G502 Lightspeed and has been an absolute trooper for the last five years (prior to it, I used the Performance MX for 10 years). Yes, it's comfy, and the macro buttons can be super useful if only for going forwards or back online with the side buttons, but the scroll wheel is the real star of the show. It has a handy button just behind it that toggles the clicks on or off - off and the scroll wheel spins freely, on and it goes from click to click - so absurdly useful for scanning through PDFs or similar tasks. (I got into the habit of using it in the computer labs at uni for datasheets and CAD functions and... yeah I'm not going back unless Logitech just stops making mice with that feature). Downside (other than price) is that it is very much right handed only, and I've never really used it in wireless configuration much because it seems to eat through its battery life (so it's easier to just have it forever plugged in to my desktop).

See price and excessive tech features are what kind of stop me. I'm not a gamer nor do I do engineer/coding so I feel like those things would be kind of a waste for me? I can see how it could be beneficial based on what you said, but I'm not sure how often I'd be using features like that especially price wise. I mean if someone wants to buy me it, sure, but until then I think something a bit more simple might be the route I need? I really appreciate all that info though bc just telling me a mouse is great, but telling me all that info is even better! So thank you!

Text

Back again, after more experimenting had to be done to get the linkage right. I found I couldn't get it to move right, and going back to the fundamental math I found it would never work in the configuration I had it, so it was time for a full mechanism revamp. Luckily this time I found a tool to do it much better! This tool I'm showing is from the amazing Dave, which you can check out here. I used his linkage simulator to come up with a new design; the final version (left) you can see here.

I am already putting this into CAD to prepare for printing so I know I will be ready soon, especially because next weekend is the start of spring break! So I will have plenty of time and no other plans to get this new design into production!

#engineering#design#mechanism#robotics#biotech#bugs#cad#robot#leg#machinery#Linkage#springbreak#ontroprenur

Note

i really enjoy your opinions on different builds! build-wise, do you have a favorite character for each campaign? (simply build-wise, so i suppose the question would be better phrased as "favorite build per campaign")

Hi anon!

Campaign 1 is hard simply because between the cast learning the system and there not being a huge number of options, most of the builds were pretty simple. Percy, Pike, and Keyleth don't really have many build choices at all - they're all good builds, and the players played them well, but it's pretty much as simple as "this is my class and subclass ok done" because Pike and Keyleth are both prepared casters. Grog taking fighter levels is solid but fairly straightforward. Vax gets points for narrative integration but it was so item-dependent and base assassin rogue is so weak. I am torn between Vex and Scanlan and I think I'm calling a tie, which I also am going to do for C3:

Vex makes the most out of a limited base subclass, and the observant feat is an excellent touch. The choices she made for terrain and enemies were well-integrated into her story, as was her choice to multiclass into rogue (assassin rogue as a multiclass for a dex-based combat class is a different story than for base class). Her spell list was solid to the point that she was a strong secondary healer, unusual for a ranger.

Scanlan had a good spread of feats (warcaster and tough) but what puts him here is that he was often the only full caster in a position to cast on the field, since Keyleth was frequently wildshaped and Pike absent. This is incredibly hard for a bard, and his spell list was an extremely good one that had to straddle healing and arcane utility all at once, and he did an admirable job.

Campaign 2 is Fjord; this has been discussed extensively, feel free to check my archives.

Campaign 3 is tough but I'm going for a tie between our Crownkeeper members, Fearne and Orym:

Fearne's level of rogue, while it initially made me take a sidelong glance, is why she rolled that 30 on persuasion this episode, and also is a very true choice to her character. A lot of the rest is, to be honest, the fact that her subclass is one of the best out there, but I've found her spell choices to generally be very well done, she's adapted to the divine damager role (honorable mention to Laura for doing this adeptly with Jester in C2 once Cad showed up), and I have to applaud taking ASIs instead of feats when appropriate. Her stat configuration also makes perfect sense for the character.

Orym as a fighter is strong out the gate but his choices of battlemaster moves make perfect sense for the role he played and are very useful in a party with two glass cannons but also two damage reduction tanks; it's good to have a dodge tank in the mix. His feats are focused on rounding out his skills and his optimized perception is truly ridiculous.

Text

Mastering Finite Element Analysis: A Comprehensive Guide to Doing Your Abaqus Assignment

Finite Element Analysis (FEA) stands as a cornerstone in the realm of structural and mechanical engineering, enabling engineers to simulate and understand the behavior of complex structures under various conditions. Abaqus, a powerful FEA software, empowers users to conduct intricate simulations, but mastering its capabilities requires a thoughtful approach. In this comprehensive guide, we'll explore the intricacies of using Abaqus to simulate a dynamic loading scenario on a complex mechanical structure. For those seeking assistance with their Abaqus assignments, this guide serves as a valuable resource to navigate through the challenges.

Understanding the Task:

Imagine a scenario where a mechanical structure, comprising interconnected components with diverse material properties and geometric configurations, undergoes dynamic loading. The task at hand for your Abaqus assignment is to simulate and analyze this complex system using Abaqus. The goal is to provide accurate results while considering computational efficiency, convergence, and the significance of selecting appropriate element types and integration schemes.

Section 1: Modeling Approach

1.1 Geometry and CAD Import

To kick off the simulation process, importing the CAD geometry accurately into Abaqus is crucial. This step ensures that the virtual model faithfully represents the physical system.

1.2 Meshing Strategy

Developing a meshing strategy involves finding the right balance between accuracy and computational efficiency. Areas of high stress or deformation may require mesh refinement, and selecting appropriate element types for each component is key to achieving reliable results.

1.3 Material Assignments

Accurate material assignments are paramount. Understanding the behavior of materials under dynamic loading conditions is essential for realistic simulations. Abaqus allows users to define material properties with precision, enhancing the fidelity of the analysis.

1.4 Boundary Conditions

Applying realistic boundary conditions is crucial to replicate the physical constraints of the structure accurately. This step ensures that the simulation mirrors the actual behavior of the system under the specified loading conditions.

Section 2: Simulation Challenges and Strategies

2.1 Convergence Issues

Convergence issues can arise during the simulation, affecting the accuracy and reliability of results. Monitoring the simulation for convergence problems and adjusting criteria and time increments are essential steps. Sub-stepping techniques can be employed to enhance stability.

2.2 Contact and Interaction

In a complex structure, components may interact and come into contact. Defining contact pairs, choosing appropriate contact algorithms, and validating contact behavior are crucial for a realistic simulation.

2.3 Solver Settings

Optimizing solver settings is vital for computational efficiency. Parallel processing and solution controls can be utilized to manage convergence and enhance the efficiency of the simulation process.

Section 3: Element Types and Integration Schemes

3.1 Element Selection

Choosing the right element types is fundamental for accurate representation. Tetrahedral, hexahedral, and shell elements, when strategically employed, contribute to a comprehensive simulation that captures the nuances of the structure's deformation.

3.2 Integration Schemes

The selection of integration schemes is a delicate balance between accuracy and computational cost. High-order integration schemes may offer more precise results but could increase computation time. Careful consideration is required to find the optimal trade-off.
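As one concrete instance of this trade-off, consider Gauss-Legendre quadrature, the rule commonly used to evaluate element integrals in finite element codes. This is a general property of the rule offered as an illustration, not a statement about any particular assignment. In one dimension an n-point rule is

```latex
\int_{-1}^{1} f(\xi)\, d\xi \;\approx\; \sum_{i=1}^{n} w_i\, f(\xi_i),
\qquad \text{exact for polynomials of degree} \le 2n - 1 .
```

For a hexahedral element with n points in each direction, the number of integration points, and so roughly the cost per element, grows as n^3. A linear brick with full integration uses 2 x 2 x 2 = 8 points, while the reduced-integration version uses a single point, which is cheaper but may need hourglass control to stay stable.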

Conclusion: Navigating the Abaqus Landscape

In the world of finite element analysis, mastering Abaqus is a journey that demands attention to detail and a strategic approach. Simulating a dynamic loading scenario on a complex mechanical structure involves a series of critical decisions, from modeling and meshing to addressing convergence issues and selecting the right element types.

For those seeking assistance with their Abaqus assignments, understanding the nuances of the software and the finite element analysis process is crucial. This guide serves as a roadmap to navigate through the challenges posed by complex simulations, ensuring that the virtual representation aligns with the physical reality.

In the words of simulation experts, tackling an Abaqus assignment involves not just running the software but orchestrating a symphony of decisions that shape the accuracy and reliability of the results. As the virtual world converges with the physical, the mastery of Abaqus becomes an art and science, providing engineers with the insights needed to design and optimize structures in the real world.

#mechanical engineering assignment help#assignment help#students#educational website#university#education#study tips#educational service#mechanical engineering#mechanical engineering assignment#abaqus assignment helper#abaqus

Text

The Evolution of Leaf Spring Technology in the Automotive Industry

Leaf springs have been a fundamental component of vehicle suspension systems for centuries, providing stability and support to a wide range of vehicles. Their evolution over time has been a remarkable journey, showcasing advancements in material science, design, and engineering to meet the changing needs of the automotive industry.

Early Beginnings

The concept of leaf springs dates back to ancient civilizations, where horse-drawn carriages used flexible wooden or metal strips for support and cushioning. As the automotive industry emerged, these springs were adapted for the first automobiles. Initially, simple semi-elliptical springs made of multiple layers of steel carried the vehicle's weight and smoothed out the ride.

Transition to Modern Materials

As steelmaking advanced in the wake of the industrial revolution, vehicle manufacturers shifted towards high-strength steel alloys. These alloys offered greater durability and resilience than traditional materials. By the mid-20th century, advancements in metallurgy allowed for the production of leaf springs with superior strength-to-weight ratios, enhancing their overall performance and longevity.

Multi-Leaf and Mono-Leaf Spring Designs

In the mid-20th century, engineers began experimenting with different leaf spring designs to improve suspension performance. Multi-leaf springs, which featured several thinner leaves stacked on top of each other, gained popularity due to their ability to distribute weight more evenly and provide a smoother ride.

Later, the mono-leaf spring design emerged, utilizing a single, thicker leaf with varying thicknesses along its length to optimize load distribution and enhance ride comfort. Mono-leaf springs offered advantages in terms of reduced weight, improved handling, and ease of manufacturing.

Composite Leaf Springs

In recent decades, the automotive industry has explored innovative materials such as composite materials for leaf springs. Composite leaf springs are manufactured using reinforced plastics or composite fibers, offering significant weight savings compared to traditional steel leaf springs. This reduction in weight contributes to improved fuel efficiency and reduces the vehicle's overall carbon footprint.

Composite leaf springs are also known for their corrosion resistance, enhanced fatigue life, and the ability to be precisely tailored to specific load requirements. They have become increasingly prevalent in both commercial and passenger vehicles, representing a modern and sustainable approach to leaf spring technology.

Computer-Aided Design and Simulation

The advent of computer-aided design (CAD) and simulation technologies has revolutionized leaf spring design and optimization. Engineers can now use sophisticated software to model, analyze, and simulate various leaf spring configurations, enabling them to fine-tune designs for optimal performance, durability, and cost-effectiveness. This technology has significantly accelerated the development process and facilitated the creation of highly efficient leaf spring designs.

Future Trends and Innovations

Looking ahead, the future of leaf spring technology in the automotive industry appears promising. Advancements in materials science, such as the integration of carbon fiber and other advanced composites, hold the potential to further reduce weight and enhance the performance of leaf springs.

Moreover, research into smart materials and adaptive suspension systems may lead to leaf springs that can adjust their characteristics in real-time, optimizing the ride and handling based on driving conditions and load variations. These innovations will play a crucial role in shaping the automotive landscape, promoting sustainability, fuel efficiency, and improved driving experiences.

In conclusion, the evolution of leaf spring technology in the automotive industry has been a remarkable journey of innovation and adaptation. From humble beginnings as simple wooden or metal strips to the sophisticated composite leaf springs of today, this fundamental suspension component continues to evolve, driven by advancements in materials, design methodologies, and engineering techniques. As we continue to push the boundaries of technology, the leaf spring remains a vital element in ensuring a safe, comfortable, and efficient ride for vehicles of all kinds.

Text

Get the Finest Precision with CNC Machining

CNC machining is a subtractive manufacturing technique in which computer-controlled tools cut material precisely into the required shape. Unlike conventional machining, which depends largely on manual intervention, CNC machining automates the production process, improving precision, repeatability, and efficiency.

The process starts with a digital design produced in computer-aided design (CAD) software. The design acts as a guide for how the CNC machine should operate. Once the design is complete, it is converted into a machine-readable format, usually a G-code program, which gives the CNC machine its instructions.
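As a rough illustration of what such a program looks like, the sketch below generates G-code for tracing a rectangular outline. The function name `rectangle_gcode` and its parameters are hypothetical, and real programs are normally produced by CAM software. Only widely used codes appear here: G21 (millimetre units), G90 (absolute positioning), G0 (rapid move), G1 (linear feed move), M3 (spindle on), M5 (spindle off), and M30 (end of program). Controller dialects vary, so treat the output as a sketch rather than something to run on a machine unchecked.

```python
def rectangle_gcode(width, height, depth, feed=300, safe_z=5.0, spindle_rpm=8000):
    """Return G-code that traces a width x height rectangle at the given depth.

    Dimensions are in millimetres. Tool radius compensation is ignored,
    so the tool centre follows the outline exactly.
    """
    lines = [
        "G21",                     # millimetre units
        "G90",                     # absolute positioning
        f"G0 Z{safe_z:.3f}",       # retract to a safe height
        "G0 X0.000 Y0.000",        # rapid move to the start corner
        f"M3 S{spindle_rpm}",      # spindle on, clockwise
        f"G1 Z{-depth:.3f} F{feed}",  # plunge to cutting depth
    ]
    # Visit the four corners in order, returning to the start.
    for x, y in [(width, 0.0), (width, height), (0.0, height), (0.0, 0.0)]:
        lines.append(f"G1 X{x:.3f} Y{y:.3f} F{feed}")
    lines += [
        f"G0 Z{safe_z:.3f}",       # retract
        "M5",                      # spindle off
        "M30",                     # end of program
    ]
    return "\n".join(lines)


program = rectangle_gcode(width=40.0, height=20.0, depth=1.5)
print(program)
```

Each line is one instruction for the controller, which is why the same stored program yields the same tool path every time it is run.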

The material, which may be a metal, polymer, or composite, is selected according to the requirements of the final product. It is then prepared by sawing it to the required size, either manually or automatically.

The prepared material is firmly secured to the work table of the CNC machine. Once the cutting tools, such as drills, end mills, or turning tools, are mounted, the CNC program is loaded into the machine's controller.

After the machine is configured, the CNC controller reads the G-code program and gives precise instructions to the machine's motors to move the cutting tools. The cutting tools execute operations including drilling, milling, turning, and more to remove material in line with the design parameters.

Throughout the machining process, quality control procedures are used to make sure that the part's dimensions, tolerances, and surface finishes meet the required standards. Accuracy is checked with measuring equipment such as calipers, micrometers, or coordinate measuring machines (CMMs).
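The dimensional part of such a check comes down to comparing each measured value against its nominal size and tolerance band. The sketch below shows that comparison. The `Dimension` class, the feature names, and the measured values are all hypothetical examples, not data from a real part.

```python
from dataclasses import dataclass


@dataclass
class Dimension:
    """A toleranced dimension in millimetres (hypothetical example type)."""
    name: str
    nominal: float
    tol_minus: float  # allowed deviation below nominal
    tol_plus: float   # allowed deviation above nominal

    def accepts(self, measured):
        lower = self.nominal - self.tol_minus
        upper = self.nominal + self.tol_plus
        return lower <= measured <= upper


def inspect(dimensions, measurements):
    """Return a dict mapping each dimension name to True (pass) or False (fail)."""
    return {d.name: d.accepts(measurements[d.name]) for d in dimensions}


# Illustrative drawing requirements and made-up measurements.
drawing = [
    Dimension("bore_diameter", nominal=25.0, tol_minus=0.05, tol_plus=0.05),
    Dimension("slot_width", nominal=10.0, tol_minus=0.0, tol_plus=0.1),
]
measured = {"bore_diameter": 25.03, "slot_width": 10.15}

report = inspect(drawing, measured)
for name, passed in report.items():
    print(f"{name}: {'PASS' if passed else 'FAIL'}")
```

In this made-up case the bore is within its band and the slot is oversize, so the part would be flagged for rework or rejection.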

The advantages of CNC machining

CNC machining is well known for its precision and accuracy. Because the process is computer-controlled, it yields consistent and dependable results, making it well suited to applications that call for tight tolerances and complex geometries.

CNC machines can work with a variety of materials, such as composites, metals, plastics, and wood. This versatility makes it possible to produce a wide range of components for different industries.

Complex parts can be produced quickly and efficiently using CNC machining. Once set up, the machine can run with little operator intervention. This shortens production time and increases overall output.

CNC machining, including CNC prototype machining, produces identical components with great repeatability. Because the CNC program can be stored and reused, manufacturers can reliably make large numbers of components.

Main source: https://cneasteel.wordpress.com/

#CNC Machining#CNC Machining process#CNC Machining Techniques#CNC Prototype Machining#CNC Machining China

Text

Revolutionize Factory Floor Planning with Autodesk Utilities

Transform Your Manufacturing Space: Mastering Factory Layouts with Cutting-Edge Tools

In today’s competitive manufacturing landscape, efficiency and precision are paramount. Optimizing your factory floor layout not only boosts productivity but also enhances safety and reduces operational costs. Leveraging advanced tools like Autodesk Factory Design Utilities can revolutionize the way you approach factory planning, enabling you to create smarter, more efficient layouts with ease.

Autodesk Factory Design Utilities is a powerful plugin that seamlessly integrates with familiar CAD platforms such as AutoCAD and Inventor. This integration allows engineers and factory managers to visualize, simulate, and optimize their production spaces in a 3D environment. By doing so, you can identify potential bottlenecks, improve material flow, and ensure that every inch of your factory is used effectively.

One of the standout features of Autodesk Factory Design Utilities is its ability to quickly generate multiple layout options. Using drag-and-drop functionality, you can experiment with different configurations without the need for time-consuming manual adjustments. This flexibility ensures that you can find the most efficient layout tailored to your specific manufacturing process, whether you operate a small workshop or a large-scale plant.
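One simple way to compare layout options is to score each by the total distance material travels between stations. The sketch below does this for two candidate layouts. It is not part of Autodesk Factory Design Utilities and does not use its API. The station names, coordinates, and trip counts are hypothetical, and rectilinear (aisle-style) distance is an assumption.

```python
def flow_cost(layout, flows):
    """Sum of trips x rectilinear distance over all station pairs.

    layout: dict mapping station name to (x, y) position in metres.
    flows:  dict mapping (from_station, to_station) to trips per shift.
    """
    total = 0.0
    for (src, dst), trips in flows.items():
        (x1, y1), (x2, y2) = layout[src], layout[dst]
        total += trips * (abs(x1 - x2) + abs(y1 - y2))
    return total


# Hypothetical material flow between three stations.
flows = {("saw", "mill"): 30, ("mill", "inspect"): 20}

# Two hypothetical candidate layouts for the same stations.
candidates = {
    "layout_a": {"saw": (0, 0), "mill": (10, 0), "inspect": (20, 0)},
    "layout_b": {"saw": (0, 0), "mill": (20, 0), "inspect": (10, 0)},
}

costs = {name: flow_cost(layout, flows) for name, layout in candidates.items()}
best = min(costs, key=costs.get)
print(costs, "->", best)
```

A score like this ignores safety clearances, utilities, and aisle widths, so it works best as a first filter before the visual review described above.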

Furthermore, these tools facilitate collaboration among your team members by providing clear, visual representations of proposed layouts. This transparency reduces misunderstandings and accelerates decision-making, ultimately saving you both time and money. As you iterate on your designs, you gain valuable insights into space utilization, equipment placement, and safety considerations.

Investing in Autodesk Factory Design Utilities also means gaining access to a robust library of factory components, including machinery, workstations, and storage units. This library streamlines the design process, allowing you to create realistic models quickly. Plus, the ability to simulate workflows means you can predict and address potential issues before they arise on the shop floor.

For businesses looking to maximize their return on investment, understanding the cost of these utilities is crucial. Review the pricing for Autodesk Factory Design Utilities to determine the best licensing option for your organization. Remember, the right tools not only improve your layout efficiency but also contribute to a safer, more organized workspace that supports your operational goals.

Adopting Autodesk Factory Design Utilities is a strategic move toward Industry 4.0, where digital transformation and automation are key. By embracing these innovative solutions, you position your manufacturing business for sustained growth and competitiveness in a rapidly evolving market. Start transforming your factory floor today and unlock new levels of productivity and efficiency.

Photo

Did you know that the best gaming keyboards don’t have to cost $200? The Gamakay x NaughShark NS68 proves affordability can still deliver top-tier response with Hall effect switches and configurable RGB lighting. At just around 50 CAD, this keyboard offers rapid trigger, 8000Hz polling, and PBT keycaps for durability. It’s an incredible budget option for gamers who want quick reactions and solid build quality without breaking the bank. Discover the power of smart, cost-effective gear that elevates your gaming experience. Are you ready to upgrade your setup with affordable, high-performance gear? Check out GroovyComputers.ca for custom builds and gear that fit YOUR budget. What’s your must-have feature in a gaming keyboard?

Text

Revit for Architectural Building Design

Building design has evolved significantly over the years, and architects now rely on advanced software to create precise and efficient designs. One such powerful tool is Autodesk Revit. Revit is Building Information Modeling (BIM) software that helps architects, engineers, and construction professionals design, plan, and manage building projects efficiently. Unlike traditional drafting tools, Revit allows users to create intelligent 3D models that contain real-world information, making the design process more streamlined and effective.

What is Revit?

Revit is a software application developed by Autodesk that enables architects and designers to create detailed digital models of buildings. It is specifically designed for BIM, which means that every component in a Revit model is connected and intelligent. If a designer makes a change to one part of the model, it automatically updates related elements, ensuring consistency and reducing errors.

Unlike traditional CAD software, which focuses on 2D drawings, Revit creates a complete 3D model that incorporates data related to materials, dimensions, and construction phases. This ability to integrate detailed project information makes Revit a valuable tool in the architecture, engineering, and construction (AEC) industry, allowing teams to work more collaboratively and efficiently.
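The idea that one change updates every related element can be sketched with a toy data model in which each view is computed from a single shared source of truth. This is a conceptual illustration only. It does not use the Revit API, and the `Wall` and `BuildingModel` classes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Wall:
    """A hypothetical building element carrying geometry and material data."""
    name: str
    length_m: float
    height_m: float
    material: str


class BuildingModel:
    """Single source of truth; views are derived on demand, never stored."""

    def __init__(self, walls):
        self.walls = {wall.name: wall for wall in walls}

    def plan_view(self):
        # A plan view only needs each wall's length.
        return {name: wall.length_m for name, wall in self.walls.items()}

    def material_schedule(self):
        # Total wall area per material, in square metres.
        areas = {}
        for wall in self.walls.values():
            area = wall.length_m * wall.height_m
            areas[wall.material] = areas.get(wall.material, 0.0) + area
        return areas


model = BuildingModel([
    Wall("W1", length_m=4.0, height_m=3.0, material="brick"),
    Wall("W2", length_m=5.0, height_m=3.0, material="concrete"),
])

before = model.material_schedule()
model.walls["W1"].length_m = 6.0  # one edit to the model
after = model.material_schedule()
print(before, "->", after, model.plan_view())
```

Because the plan view and the schedule are both computed from the same wall objects, the single edit appears in both without any manual redrawing, which is the consistency benefit described above.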

Benefits of Using Revit in Architectural Design

1. Efficient Design Process

Revit simplifies the architectural design process by allowing users to create floor plans, elevations, sections, and 3D views within a single platform. The software provides an intuitive interface that enables architects to design buildings more efficiently and make real-time modifications. With Revit, designers can quickly explore different design concepts and configurations without having to start from scratch each time.

2. BIM-Based Collaboration