#What is a Robots.txt File


Text

What is a Robots.txt File? The Ultimate Guide to Optimizing Search Engine Crawler Access

Discover everything about robots.txt files and how they help you control search engine crawler access. Learn to optimize your website for better SEO with this detailed guide.


Text

To understand what's going on here, know these things:

OpenAI is the company that makes ChatGPT

A spider is a kind of bot that autonomously crawls the web and sucks up web pages

robots.txt is a standard text file that most web sites use to inform spiders whether or not they have permission to crawl the site; basically a No Trespassing sign for robots

OpenAI's spider is ignoring robots.txt (very rude!)

the web.sp.am site is a research honeypot created to trap ill-behaved spiders, consisting of billions of nonsense garbage pages that look like real content to a dumb robot

OpenAI is training its newest ChatGPT model using this incredibly lame content, having consumed over 3 million pages and counting...

It's absurd and horrifying at the same time.
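For a sense of what obeying the No Trespassing sign looks like from the spider's side, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt text, the `ExampleSpider` name, and the example.com URL are all made up for illustration; a real spider would download the site's actual robots.txt first.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that turns one spider away and lets the rest in.
ROBOTS_TXT = """\
User-agent: ExampleSpider
Disallow: /

User-agent: *
Allow: /
"""


def may_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots.txt gives this user agent permission for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("ExampleSpider", "SomeOtherBot"):
        verdict = may_crawl(ROBOTS_TXT, agent, "https://example.com/page.html")
        print(agent, "may crawl" if verdict else "should stay out")
```

A well-behaved spider runs a check like this before every request; an ill-behaved one simply never asks.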


Text

Friday, July 28th, 2023

🌟 New

We’ve updated the text for the blog setting that said it would “hide your blog from search results”. Unfortunately, we’ve never been able to guarantee hiding content from search crawlers, unless they play nice with the standard prevention measures of robots.txt and noindex. With this in mind, we’ve changed the text of that setting to be more accurate, insofar as we discourage them, but cannot prevent search indexing. If you want to completely isolate your blog from the outside internet and require only logged in folks to see your blog, then that’s the separate “Hide [blog] from people without an account” setting, which does prevent search engines from indexing your blog.

When creating a poll on the web, you can now have 12 poll options instead of 10. Wow.

For folks using the Android app, if you get a push notification that a blog you’re subscribed to has a new post, that push will take you to the post itself, instead of the blog view.

For those of you seeing the new desktop website layout, we’ve eased up the spacing between columns a bit to hopefully make things feel less cramped. Thanks to everyone who sent in feedback about this! We’re still triaging more feedback as the experiment continues.

🛠 Fixed

While experimenting with new dashboard tab configuration options, we accidentally broke dashboard tabs that had been enabled via Tumblr Labs, like the Blog Subs tab. We’ve rolled back that change to fix those tabs.

We’ve fixed more problems with how we choose what content goes into blogs’ RSS feeds. This time we’ve fixed a few issues with how answer post content is shown as RSS items.

We’ve also fixed some layout issues with the new desktop website navigation, especially glitches caused when resizing the browser window.

Fixed a visual glitch in the new activity redesign experiment on web that was making unread activity items difficult to read in some color palettes.

Fixed a bug in Safari that was preventing mature content from being blurred properly.

When using Tumblr on a mobile phone browser, the hamburger menu icon will now have an indicator when you have an unread ask or submission in your Inbox.

🚧 Ongoing

Nothing to report here today.

🌱 Upcoming

We hear it’s crab day tomorrow on Tumblr. 🦀

We’re working on adding the ability to reply to posts as a sideblog! We’re just getting started, so it may be a little while before we run an experiment with it.

Experiencing an issue? File a Support Request and we’ll get back to you as soon as we can!

Want to share your feedback about something? Check out our Work in Progress blog and start a discussion with the community.


Text

Hey!

Do you have a website? A personal one or perhaps something more serious?

Whatever the case, if you don't want AI companies training on your website's contents, add the following to your robots.txt file:

User-agent: *

Allow: /

User-agent: anthropic-ai

Disallow: /

User-agent: Claude-Web

Disallow: /

User-agent: CCbot

Disallow: /

User-agent: FacebookBot

Disallow: /

User-agent: Google-Extended

Disallow: /

User-agent: GPTBot

Disallow: /

User-agent: PiplBot

Disallow: /

User-agent: ByteSpider

Disallow: /

User-agent: PerplexityBot

Disallow: /

User-agent: cohere-ai

Disallow: /

User-agent: ChatGPT-User

Disallow: /

User-agent: Omgilibot

Disallow: /

User-agent: Omgili

Disallow: /

There are of course more and even if you added them they may not cooperate, but this should get the biggest AI companies to leave your site alone.
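If you'd rather not maintain that list by hand, a small script can write the file for you. This is just a sketch: the agent names are copied from the list above, `build_robots_txt` is a made-up helper name, and the check at the end uses Python's standard `urllib.robotparser`, which may not behave exactly like any particular company's crawler.

```python
from urllib.robotparser import RobotFileParser

# Crawler names copied from the list above; extend it as new ones appear.
AI_AGENTS = [
    "anthropic-ai", "Claude-Web", "CCbot", "FacebookBot", "Google-Extended",
    "GPTBot", "PiplBot", "ByteSpider", "PerplexityBot", "cohere-ai",
    "ChatGPT-User", "Omgilibot", "Omgili",
]


def build_robots_txt(blocked_agents):
    """Allow everyone by default, then add one Disallow group per blocked agent."""
    groups = ["User-agent: *\nAllow: /"]
    for agent in blocked_agents:
        groups.append(f"User-agent: {agent}\nDisallow: /")
    return "\n\n".join(groups) + "\n"


if __name__ == "__main__":
    text = build_robots_txt(AI_AGENTS)
    print(text)

    # Sanity check: a listed bot is turned away, an unlisted one is not.
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    print("GPTBot allowed:", parser.can_fetch("GPTBot", "https://example.com/"))
    print("Googlebot allowed:", parser.can_fetch("Googlebot", "https://example.com/"))
```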

Important note: The first two lines declare that anything not on the list is allowed to access everything on the site. If you don't want this, add "Disallow:" lines after them and write the relative paths of the stuff you don't want any bots, including Google Search, to access. For example:

User-agent: *

Allow: /

Disallow: /super-secret-pages/secret.html

If that was in the robots.txt of example.com, it would tell all bots to not access

https://example.com/super-secret-pages/secret.html
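Why does the narrower Disallow beat the blanket "Allow: /"? Under the Robots Exclusion Protocol as standardized in RFC 9309, the most specific (longest) matching rule wins, and Allow wins a tie. The sketch below is a simplified illustration of that precedence, not any real crawler's code: it only does plain prefix matching (no `*` or `$` wildcards), and `is_allowed` is a made-up name.

```python
def is_allowed(rules, path):
    """Apply longest-match precedence to (directive, prefix) rules for one group.

    Simplified: prefixes are compared literally, so the * and $ wildcards
    that real parsers support are not handled here.
    """
    best_len = -1
    allowed = True  # no matching rule means the path is allowed
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue
        is_allow = directive.lower() == "allow"
        # A longer prefix is more specific; on a tie, Allow wins.
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len = len(prefix)
            allowed = is_allow
    return allowed


# The example rules from above, as (directive, path prefix) pairs.
RULES = [
    ("Allow", "/"),
    ("Disallow", "/super-secret-pages/secret.html"),
]

if __name__ == "__main__":
    print(is_allowed(RULES, "/super-secret-pages/secret.html"))  # False
    print(is_allowed(RULES, "/index.html"))  # True
```

Not every parser follows this rule (some older ones stop at the first matching line), so putting the specific Disallow lines before the blanket Allow is the safer ordering.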

And I'm sure you already know what to do if you already have a robots.txt, sitemap.xml/sitemap.txt etc.


Text

"how do I keep my art from being scraped for AI from now on?"

if you post images online, there's no 100% guaranteed way to prevent this, and you can probably assume that there's no need to remove/edit existing content. you might contest this as a matter of data privacy and workers' rights, but you might also be looking for smaller, more immediate actions to take.

...so I made this list! I can't vouch for the effectiveness of all of these, but I wanted to compile as many options as possible so you can decide what's best for you.

Discouraging data scraping and "opting out"

robots.txt - This is a file placed in a website's home directory to "ask" web crawlers not to access certain parts of a site. If you have your own website, you can edit this yourself, or you can check which crawlers a site disallows by adding /robots.txt at the end of the URL. This article has instructions for blocking some bots that scrape data for AI.

HTML metadata - DeviantArt (i know) has proposed the "noai" and "noimageai" meta tags for opting images out of machine learning datasets, while Mojeek proposed "noml". To use all three, you'd put the following in your webpages' headers:

<meta name="robots" content="noai, noimageai, noml">
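To check that the tag actually made it into a page (or to see how a cooperating scraper might look for it), here's a rough sketch using Python's built-in `html.parser`. The class and function names are made up, and keep in mind these directives are only proposals: they do nothing unless a crawler chooses to look for them.

```python
from html.parser import HTMLParser

# The three proposed opt-out directives mentioned above.
AI_OPT_OUT = {"noai", "noimageai", "noml"}


class RobotsMetaParser(HTMLParser):
    """Collect the directives from every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for item in attr_map.get("content", "").split(","):
                if item.strip():
                    self.directives.add(item.strip().lower())


def ai_opt_outs(html):
    """Return whichever AI opt-out directives the page declares."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives & AI_OPT_OUT


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noai, noimageai, noml"></head></html>'
    print(sorted(ai_opt_outs(page)))
```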

Have I Been Trained? - A tool by Spawning to search for images in the LAION-5B and LAION-400M datasets and opt your images and web domain out of future model training. Spawning claims that Stability AI and Hugging Face have agreed to respect these opt-outs. Try searching for usernames!

Kudurru - A tool by Spawning (currently a Wordpress plugin) in closed beta that purportedly blocks/redirects AI scrapers from your website. I don't know much about how this one works.

ai.txt - Similar to robots.txt. A new type of permissions file for AI training proposed by Spawning.

ArtShield Watermarker - Web-based tool to add Stable Diffusion's "invisible watermark" to images, which may cause an image to be recognized as AI-generated and excluded from data scraping and/or model training. Source available on GitHub. Doesn't seem to have updated/posted on social media since last year.

Image processing... things

these are popular now, but there seems to be some confusion regarding the goal of these tools; these aren't meant to "kill" AI art, and they won't affect existing models. they won't magically guarantee full protection, so you probably shouldn't loudly announce that you're using them to try to bait AI users into responding

Glaze - UChicago's tool to add "adversarial noise" to art to disrupt style mimicry. Devs recommend glazing pictures last. Runs on Windows and Mac (Nvidia GPU required)

WebGlaze - Free browser-based Glaze service for those who can't run Glaze locally. Request an invite by following their instructions.

Mist - Another adversarial noise tool, by Psyker Group. Runs on Windows and Linux (Nvidia GPU required) or on web with a Google Colab Notebook.

Nightshade - UChicago's tool to distort AI's recognition of features and "poison" datasets, with the goal of making it inconvenient to use images scraped without consent. The guide recommends that you do not disclose whether your art is nightshaded. Nightshade chooses a tag that's relevant to your image. You should use this word in the image's caption/alt text when you post the image online. This means the alt text will accurately describe what's in the image-- there is no reason to ever write false/mismatched alt text!!! Runs on Windows and Mac (Nvidia GPU required)

Sanative AI - Web-based "anti-AI watermark"-- maybe comparable to Glaze and Mist. I can't find much about this one except that they won a "Responsible AI Challenge" hosted by Mozilla last year.

Just Add A Regular Watermark - It doesn't take a lot of processing power to add a watermark, so why not? Try adding complexities like warping, changes in color/opacity, and blurring to make it more annoying for an AI (or human) to remove. You could even try testing your watermark against an AI watermark remover. (the privacy policy claims that they don't keep or otherwise use your images, but use your own judgment)

given that energy consumption was the focus of some AI art criticism, I'm not sure if the benefits of these GPU-intensive tools outweigh the cost, and I'd like to know more about that. in any case, I thought that people writing alt text/image descriptions more often would've been a neat side effect of Nightshade being used, so I hope to see more of that in the future, at least!


Text

How to Back up a Tumblr Blog

This will be a long post.

Big thank you to @afairmaiden for doing so much of the legwork on this topic. Some of these instructions are copied from her verbatim.

Now, we all know that tumblr has an export function that theoretically allows you to export the contents of your blog. However, this function has several problems including no progress bar (such that it appears to hang for 30+ hours) and when you do finally download the gargantuan file, the blog posts cannot be browsed in any way resembling the original blog structure, searched by tag, etc.

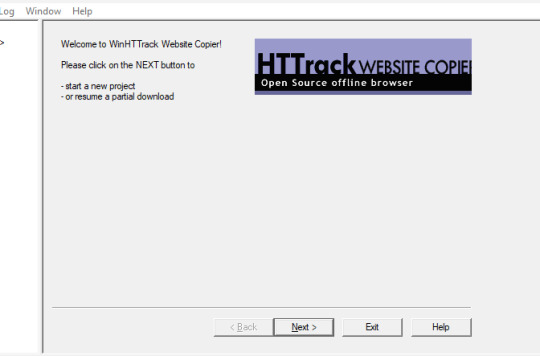

What we found is a tool built for website archiving/mirroring called httrack. Obviously this is a big project when considering a large tumblr blog, but there are some ways to help keep it manageable. Details under the cut.

How to download your blog with HTTrack:

Website here

You will need:

A reliable computer and a good internet connection.

Time and space. For around 40,000 posts, expect 48 hours and 40GB. 6000 posts ≈ 10 hours, 12GB. If possible, test this on a small blog before jumping into a major project. There is an option to stop and continue an interrupted download later, but this may or may not actually resume where it left off. Keep in mind that Tumblr is a highly dynamic website with things changing all the time (notes, icons, pages being updated with every post, etc).

A custom theme. It doesn't have to be pretty, but it does need to be functional. That said, there are a few things you may want to make sure are in your theme before starting to archive:

the drop down meatball menu on posts with the date they were posted

tags visible on your theme, visible from your blog's main page

no icon images on posts/notes (They may be small, but keep in mind there are thousands of them, so if nothing else, they'll take up time. Instructions on how to exclude them below.)

Limitations: This will not save your liked or private posts, or messages. Poll results also may not show up.

What to expect from HTTrack:

HTTrack will mirror your blog locally by creating a series of linked HTML files that you can browse with your browser even if tumblr were to entirely go down. The link structure mimics the site structure, so you should be able to browse your own blog as if you had typed in the url of your custom theme into the browser. Some elements may not appear or load, and much of the following instructions are dedicated to making sure that you download the right images without downloading too many unnecessary images.

There will be a fair bit of redundancy as it will save:

individual posts

pages for all your tags, such as tagged/me etc (If you tend to write a lot in your tags, you may want to save time and space by skipping this option. Instructions below.)

the day folder (if you have the meatball menu)

regular blog pages (page/1 etc)

How it works: HTTrack will be going through your url and saving the contents of every sub directory. In your file explorer this will look like a series of nested folders.

How to Start

Download and run HTTrack.

In your file directory, create an overarching folder for the project in some drive with a lot of space.

Start a new project. Select this folder in HTTrack as the save location for your project. Name your project.

For the url, enter https://[blogname].tumblr.com. Without the https:// you'll get a robots.txt error and it won't save anything.

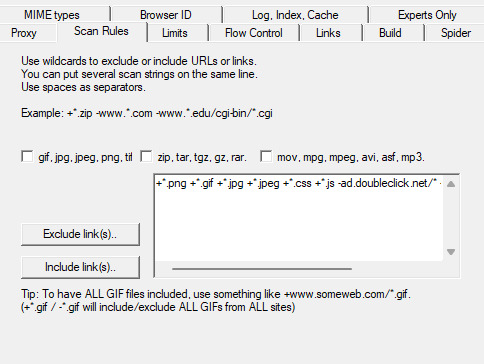

Settings:

Open settings. Under "scan rules":

Check the box for filetypes .gif etc. Make sure the box for .zip etc. is unchecked. Check the box for .mov etc.

Under "limits":

Change the max speed to between 100,000 - 250,000. The reason this needs to be limited is because you could accidentally DDOS the website you are downloading. Do not DDOS tumblr.

Change the link limit to maybe 200,000-300,000 for a cutoff on a large blog, according to @afairmaiden. This limit is to prevent you from accidentally having a project that goes on infinitely due to redundancy or due to getting misdirected and suddenly trying to download the entirety of wikipedia.

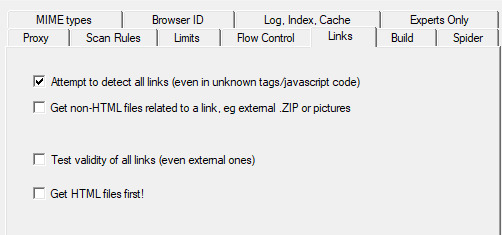
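To see how the speed limit relates to the time estimates earlier, here is a rough back-of-the-envelope calculation. It assumes the max speed setting is in bytes per second and that the cap is the only bottleneck, which it won't be in practice; the function name is made up.

```python
def hours_to_download(total_bytes, bytes_per_second):
    """Lower bound on download time if the speed cap is the only limit."""
    return total_bytes / bytes_per_second / 3600


GB = 1_000_000_000  # decimal gigabytes

if __name__ == "__main__":
    for size_gb in (12, 40):
        for cap in (100_000, 250_000):
            hours = hours_to_download(size_gb * GB, cap)
            print(f"{size_gb} GB at {cap:,} B/s: about {hours:.0f} hours")
```

At the top of the suggested range, 40GB works out to roughly 44 hours, which is in the same ballpark as the 48-hour estimate above.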

Go through the other tabs. Check the box that says "Get HTML first". Uncheck "find every link". Uncheck "get linked non-html files" if you don't want to download literally the entire internet. Check "save all items in cache as well as HTML". Check "disconnect when finished".

Go back to Scan Rules.

There will be a large text box. In this box we place a sort of blacklist and whitelist for filetypes.

Paste the following text into that box.

+*.mp4 +*.gifv -*x-callback-url* -*/sharer/* -*/amp -*tumblr.com/image* -*/photoset_iframe/*

Optional:

-*/tagged/* (if you don't want to save pages for all your tags.)

-*/post/* (if you don't want to save each post individually. not recommended if you have readmores that redirect to individual posts.)

-*/day/* (if you don't feel it's necessary to search by date)

Optional but recommended:

-*/s64x64u*.jpg -*tumblr_*_64.jpg -*avatar_*_64.jpg -*/s16x16u*.jpg -*tumblr_*_16*.jpg -*avatar_*_16.jpg -*/s64x64u*.gif -*tumblr_*_64.gif -*avatar_*_64.gif -*/s16x16u*.gif -*tumblr_*_16.gif -*avatar_*_16.gif

This will prevent the downloading of icons/avatars, which tend to be extremely redundant as each image downloads a separate time for each appearance.

Many icons are in .pnj format and therefore won't download unless you add the extension (+*.pnj), so you may be able to whitelist the URLs for your and your friends' icons. (Honestly, editing your theme to remove icons from your notes may be the simpler solution here.)
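If you want to test a rule list before committing to a multi-day download, the following is a rough approximation of how these + and - rules behave. It is not HTTrack's actual matcher: it assumes rules are read left to right with a later match overriding an earlier one (as HTTrack's filter documentation describes), uses Python's `fnmatch` wildcards in place of HTTrack's, and the URLs are invented.

```python
from fnmatch import fnmatchcase


def is_wanted(url, rules, default=True):
    """Evaluate '+pattern' / '-pattern' rules; the last matching rule wins."""
    verdict = default  # what happens when no rule mentions this URL
    for rule in rules.split():
        sign, pattern = rule[0], rule[1:]
        if fnmatchcase(url, pattern):
            verdict = sign == "+"
    return verdict


# A shortened version of the rule list above.
RULES = "+*.mp4 +*.gifv -*/tagged/* -*avatar_*_64.gif"

if __name__ == "__main__":
    samples = [
        "https://example.tumblr.com/tagged/me",
        "https://example.tumblr.com/video.mp4",
        "https://64.media.tumblr.com/avatar_abc123_64.gif",
    ]
    for url in samples:
        print("keep" if is_wanted(url, RULES) else "skip", url)
```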

You should now be ready to start.

Make sure your computer doesn't overheat during the extremely long download process.

Pages tend to be among the last things to save. If you have infinite scroll on, your first page (index.html) may not have a link to page 2, but your pages will be in the folder.

Shortly after your pages are done, you may see the link progress start over. This may be to check that everything is complete. At this point, it should be safe to click cancel if you want to stop, but you run the risk of more stuff being missing. You will need to wait a few minutes for pending transfers to be completed.

Once you're done, you'll want to check for: Files without an extension.

Start with your pages folder, sort items by file type, and look for ones that are simply listed as "file" rather than HTML. Add the appropriate extension (in this case, .html) and check to see if it works. (This may cause links to this page to appear broken.)

Next, sort by file size and check for 0B files. HTMLs will appear as a blank page. Delete these. Also check for empty folders; view files as large icons to find these quickly.

If possible, make a backup copy of your project file and folder, especially if you have a fairly complete download and you want to update it.

Finally, turn off your computer and let it rest.


Text

Less than three months after Apple quietly debuted a tool for publishers to opt out of its AI training, a number of prominent news outlets and social platforms have taken the company up on it.

WIRED can confirm that Facebook, Instagram, Craigslist, Tumblr, The New York Times, The Financial Times, The Atlantic, Vox Media, the USA Today network, and WIRED’s parent company, Condé Nast, are among the many organizations opting to exclude their data from Apple’s AI training. The cold reception reflects a significant shift in both the perception and use of the robotic crawlers that have trawled the web for decades. Now that these bots play a key role in collecting AI training data, they’ve become a conflict zone over intellectual property and the future of the web.

This new tool, Applebot-Extended, is an extension to Apple’s web-crawling bot that specifically lets website owners tell Apple not to use their data for AI training. (Apple calls this “controlling data usage” in a blog post explaining how it works.) The original Applebot, announced in 2015, initially crawled the internet to power Apple’s search products like Siri and Spotlight. Recently, though, Applebot’s purpose has expanded: The data it collects can also be used to train the foundational models Apple created for its AI efforts.

Applebot-Extended is a way to respect publishers' rights, says Apple spokesperson Nadine Haija. It doesn’t actually stop the original Applebot from crawling the website—which would then impact how that website’s content appeared in Apple search products—but instead prevents that data from being used to train Apple's large language models and other generative AI projects. It is, in essence, a bot to customize how another bot works.

Publishers can block Applebot-Extended by updating a text file on their websites known as the Robots Exclusion Protocol, or robots.txt. This file has governed how bots go about scraping the web for decades—and like the bots themselves, it is now at the center of a larger fight over how AI gets trained. Many publishers have already updated their robots.txt files to block AI bots from OpenAI, Anthropic, and other major AI players.

Robots.txt allows website owners to block or permit bots on a case-by-case basis. While there’s no legal obligation for bots to adhere to what the text file says, compliance is a long-standing norm. (A norm that is sometimes ignored: Earlier this year, a WIRED investigation revealed that the AI startup Perplexity was ignoring robots.txt and surreptitiously scraping websites.)

Applebot-Extended is so new that relatively few websites block it yet. Ontario, Canada–based AI-detection startup Originality AI analyzed a sampling of 1,000 high-traffic websites last week and found that approximately 7 percent—predominantly news and media outlets—were blocking Applebot-Extended. This week, the AI agent watchdog service Dark Visitors ran its own analysis of another sampling of 1,000 high-traffic websites, finding that approximately 6 percent had the bot blocked. Taken together, these efforts suggest that the vast majority of website owners either don’t object to Apple’s AI training practices or are simply unaware of the option to block Applebot-Extended.

In a separate analysis conducted this week, data journalist Ben Welsh found that just over a quarter of the news websites he surveyed (294 of 1,167 primarily English-language, US-based publications) are blocking Applebot-Extended. In comparison, Welsh found that 53 percent of the news websites in his sample block OpenAI’s bot. Google introduced its own AI-specific bot, Google-Extended, last September; it’s blocked by nearly 43 percent of those sites, a sign that Applebot-Extended may still be under the radar. As Welsh tells WIRED, though, the number has been “gradually moving” upward since he started looking.

Welsh has an ongoing project monitoring how news outlets approach major AI agents. “A bit of a divide has emerged among news publishers about whether or not they want to block these bots,” he says. “I don't have the answer to why every news organization made its decision. Obviously, we can read about many of them making licensing deals, where they're being paid in exchange for letting the bots in—maybe that's a factor.”

Last year, The New York Times reported that Apple was attempting to strike AI deals with publishers. Since then, competitors like OpenAI and Perplexity have announced partnerships with a variety of news outlets, social platforms, and other popular websites. “A lot of the largest publishers in the world are clearly taking a strategic approach,” says Originality AI founder Jon Gillham. “I think in some cases, there's a business strategy involved—like, withholding the data until a partnership agreement is in place.”

There is some evidence supporting Gillham’s theory. For example, Condé Nast websites used to block OpenAI’s web crawlers. After the company announced a partnership with OpenAI last week, it unblocked the company’s bots. (Condé Nast declined to comment on the record for this story.) Meanwhile, Buzzfeed spokesperson Juliana Clifton told WIRED that the company, which currently blocks Applebot-Extended, puts every AI web-crawling bot it can identify on its block list unless its owner has entered into a partnership—typically paid—with the company, which also owns the Huffington Post.

Because robots.txt needs to be edited manually, and there are so many new AI agents debuting, it can be difficult to keep an up-to-date block list. “People just don’t know what to block,” says Dark Visitors founder Gavin King. Dark Visitors offers a freemium service that automatically updates a client site’s robots.txt, and King says publishers make up a big portion of his clients because of copyright concerns.

Robots.txt might seem like the arcane territory of webmasters—but given its outsize importance to digital publishers in the AI age, it is now the domain of media executives. WIRED has learned that two CEOs from major media companies directly decide which bots to block.

Some outlets have explicitly noted that they block AI scraping tools because they do not currently have partnerships with their owners. “We’re blocking Applebot-Extended across all of Vox Media’s properties, as we have done with many other AI scraping tools when we don’t have a commercial agreement with the other party,” says Lauren Starke, Vox Media’s senior vice president of communications. “We believe in protecting the value of our published work.”

Others will only describe their reasoning in vague—but blunt!—terms. “The team determined, at this point in time, there was no value in allowing Applebot-Extended access to our content,” says Gannett chief communications officer Lark-Marie Antón.

Meanwhile, The New York Times, which is suing OpenAI over copyright infringement, is critical of the opt-out nature of Applebot-Extended and its ilk. “As the law and The Times' own terms of service make clear, scraping or using our content for commercial purposes is prohibited without our prior written permission,” says NYT director of external communications Charlie Stadtlander, noting that the Times will keep adding unauthorized bots to its block list as it finds them. “Importantly, copyright law still applies whether or not technical blocking measures are in place. Theft of copyrighted material is not something content owners need to opt out of.”

It’s unclear whether Apple is any closer to closing deals with publishers. If or when it does, though, the consequences of any data licensing or sharing arrangements may be visible in robots.txt files even before they are publicly announced.

“I find it fascinating that one of the most consequential technologies of our era is being developed, and the battle for its training data is playing out on this really obscure text file, in public for us all to see,” says Gillham.


Text

Recent discussions on Reddit are no longer showing up in non-Google search engine results. The absence is the result of updates to Reddit’s Content Policy that ban crawling its site without agreeing to Reddit’s rules, which bar using Reddit content for AI training without Reddit’s explicit consent.

As reported by 404 Media, using "site:reddit.com" on non-Google search engines, including Bing, DuckDuckGo, and Mojeek, brings up minimal or no Reddit results from the past week. Ars Technica made searches on these and other search engines and can confirm the findings. Brave, for example, brings up a few Reddit results sometimes (examples here and here) but not nearly as many as what appears on Google when using identical queries. A standout is Kagi, which is a paid-for engine that pays Google for some of its search index and still shows recent Reddit results.

As 404 Media noted, Reddit's Robots Exclusion Protocol (robots.txt file) blocks bots from scraping the site. The protocol also states, "Reddit believes in an open Internet, but not the misuse of public content." Reddit has approved scrapers from the Internet Archive and some research-focused entities.

Reddit announced changes to its robots.txt file on June 25. Ahead of the changes, it said it had "seen an uptick in obviously commercial entities who scrape Reddit and argue that they are not bound by our terms or policies. Worse, they hide behind robots.txt and say that they can use Reddit content for any use case they want."

Last month, Reddit said that any "good-faith actor" could reach out to Reddit to try to work with the company, linking to an online form. However, Colin Hayhurst, Mojeek's CEO, told me via email that he reached out to Reddit after he was blocked but that Reddit "did not respond to many messages and emails." He noted that since 404 Media's report, Reddit CEO Steve Huffman has reached out.


Text

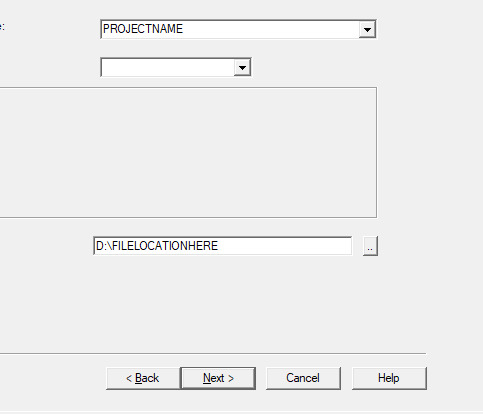

I've recently learned how to scrape websites that require a login. This took a lot of work and seemed to have very little documentation online so I decided to go ahead and write my own tutorial on how to do it.

We're using HTTrack as I think that Cyotek does basically the same thing but it's just more complicated. Plus, I'm more familiar with HTTrack and I like the way it works.

So first thing you'll do is give your project a name. This name is what the file that stores your scrape information will be called. If you need to come back to this later, you'll find that file.

Also, be sure to pick a good file-location for your scrape. It's a pain to have to restart a scrape (even if it's not from scratch) because you ran out of room on a drive. I have a secondary drive, so I'll put my scrape data there.

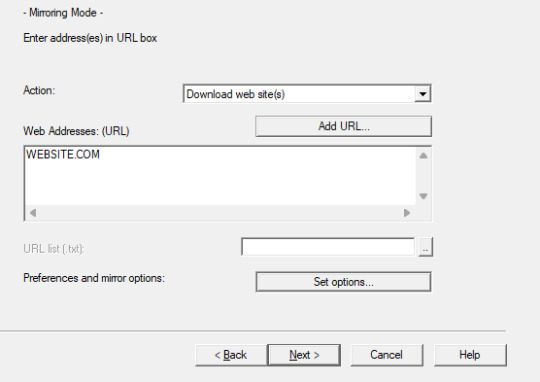

Next you'll put in your WEBSITE NAME and you'll hit "SET OPTIONS..."

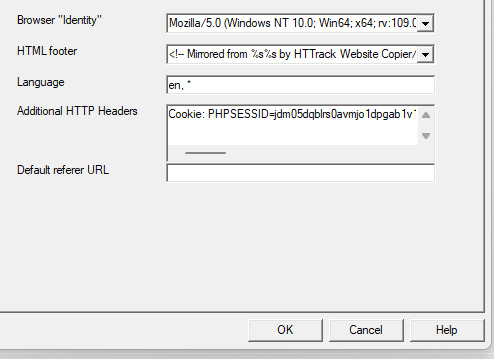

This is where things get a little bit complicated. So when the window pops up you'll hit 'browser ID' in the tabs menu up top. You'll see this screen.

What you're doing here is giving the program the cookies that you're using to log in. You'll need two things. You'll need your cookie and the ID of your browser. To do this you'll need to go to the website you plan to scrape and log in.

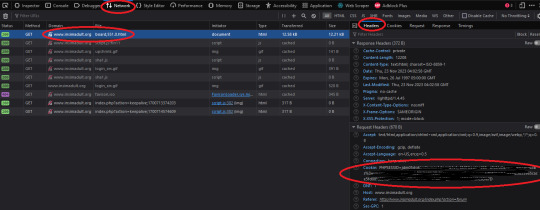

Once you're logged in press F12. You'll see a page pop up at the bottom of your screen on Firefox. I believe that for Chrome it's on the side. I'll be using Firefox for this demonstration but everything is located in basically the same place so if you don't have Firefox don't worry.

So you'll need to click on some link within the website. You should see the area below be populated by items. Click on one and then click 'header' and then scroll down until you see cookies and browser id. Just copy those and put those into the corresponding text boxes in HTTrack! Be sure to add "Cookies: " before you paste your cookie text. Also make sure you have ONE space between the colon and the cookie.
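What those two text boxes amount to is a pair of HTTP request headers sent along with every page the scraper asks for. Here's a small sketch of the same idea in Python, using the standard `urllib.request`. The values are placeholders and the function name is made up; note that in standard HTTP the cookie header is spelled `Cookie` and the browser ID travels as `User-Agent`. The request is only built here, not sent.

```python
from urllib.request import Request

# Placeholder values: paste your own from the browser's network panel.
COOKIE = "sessionid=PLACEHOLDER"
BROWSER_ID = "Mozilla/5.0 (placeholder browser identity)"


def build_logged_in_request(url, cookie, browser_id):
    """Build (but do not send) a request carrying the logged-in session."""
    return Request(url, headers={"Cookie": cookie, "User-Agent": browser_id})


if __name__ == "__main__":
    req = build_logged_in_request("https://example.com/members", COOKIE, BROWSER_ID)
    for name, value in req.header_items():
        print(f"{name}: {value}")
```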

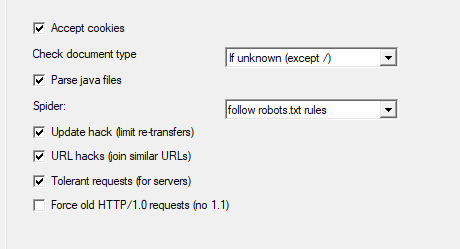

Next we're going to make two stops and make sure that we hit a few more smaller options before we add the rule set. First, we'll make a stop at LINKS and click GET NON-HTML LINKS and next we'll go and find the page where we turn on "TOLERANT REQUESTS", turn on "ACCEPT COOKIES" and select "DO NOT FOLLOW ROBOTS.TXT"

This will make sure that you're not overloading the servers, that you're getting everything from the scrape and NOT just pages, and that you're not following the website's indexing bot rules for Googlebots. Basically you want to get the pages that the website tells Google not to index!

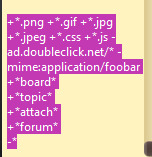

Okay, last section. This part is a little difficult so be sure to read carefully!

So when I first started trying to do this, I kept having an issue where I kept getting logged out. I worked for hours until I realized that it's because the scraper was clicking "log out" to scrape the information and logging itself out! I tried to exclude the link by simply adding it to an exclude list but then I realized that wasn't enough.

So instead, I decided to only download certain files. So I'm going to show you how to do that. First I want to show you the two buttons over to the side. These will help you add rules. However, once you get good at this you'll be able to write your own by hand or copy and paste a rule set that you like from a text file. That's what I did!

Here is my pre-written rule set. Basically this just tells the downloader that I want ALL images, I want any item that includes the following keyword, and the -* means that I want NOTHING ELSE. The 'attach' means that I'll get all .zip files and images that are attached since the website that I'm scraping has attachments with the word 'attach' in the URL.

It would probably be a good time to look at your website and find out what key words are important if you haven't already. You can base your rule set off of mine if you want!

WARNING: It is VERY important that you add -* at the END of the list or else it will basically ignore ALL of your rules. And anything added AFTER it will ALSO be ignored.

Good to go!

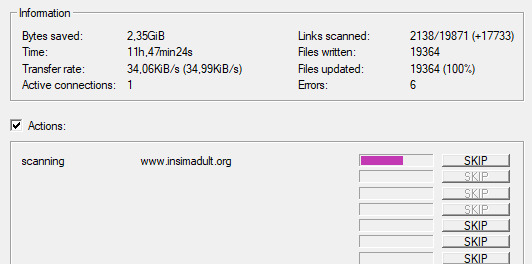

And you're scraping! I was using INSIMADULT as my test.

There are a few notes to keep in mind: This may take up to several days. You'll want to leave your computer on. Also, if you need to restart a scrape from a saved file, it still has to re-verify ALL of those links that it already downloaded. It's faster than starting from scratch but it still takes a while. It's better to just let it do its thing all in one go.

Also, if you need to cancel a scrape but want all the data that is in the process of being added already then ONLY press cancel ONCE. If you press it twice it keeps all the temp files. Like I said, it's better to let it do its thing but if you need to stop it, only press cancel once. That way it can finish up the URLs already scanned before it closes.


Text

youtube

In this comprehensive guide, learn what a robots.txt file is, its use cases, and how to create one effectively. We'll explore the RankMath Robots.txt Tester Tool and discuss the best SEO practices associated with robots.txt files. Additionally, we'll analyze live examples to provide a clear understanding of its implementation.


Text

How to Block AI Bots from Scraping Your Website

The Silmarillion Writers' Guild just recently opened its draft AI policy for comment, and one thing people wanted was for us, if possible, to block AI bots from scraping the SWG website. Twelve hours ago, I had no idea if it was possible! But I spent a few hours today researching the subject, and the SWG site is now much more locked down against AI bots than it was this time yesterday.

I know I am not the only person with a website or blog or portfolio online that doesn't want their content being used to train AI. So I thought I'd put together what I learned today in hopes that it might help others.

First, two important points:

I am not an IT professional. I am a middle-school humanities teacher with degrees in psychology, teaching, and humanities. I'm self-taught where building and maintaining websites is concerned. In other words, I'm not an expert but simply passing on what I learned during my research today.

On that note, I can't help with troubleshooting on your own site or project. I wouldn't even have been able to do everything here on my own for the SWG, but thankfully my co-admin Russandol has much more tech knowledge than me and picked up where I got lost.

Step 1: Block AI Bots Using Robots.txt

If you don't even know what this is, start here:

About /robots.txt

How to write and submit a robots.txt file

If you know how to find (or create) the robots.txt file for your website, you're going to add the following lines of code to the file. (Source: DataDome, How ChatGPT & OpenAI Might Use Your Content, Now & in the Future)

User-agent: CCBot
Disallow: /

AND

User-agent: ChatGPT-User
Disallow: /
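If you want to double-check the rules before uploading them, Python's standard library includes a robots.txt parser. The sketch below feeds it the two rule groups above (each with User-agent and Disallow on its own line) and asks whether a given bot may fetch a page. The `is_allowed` helper and the example.com URL are placeholders, and this only shows how a well-behaved crawler would read the file; it can't make a rude bot obey.

```python
from urllib.robotparser import RobotFileParser

# The two rule groups from this step, one directive per line.
ROBOTS_TXT = """\
User-agent: CCBot
Disallow: /

User-agent: ChatGPT-User
Disallow: /
"""


def is_allowed(user_agent, url, robots_txt=ROBOTS_TXT):
    """Ask the standard-library parser whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/some-page"  # placeholder URL
    for bot in ("CCBot", "ChatGPT-User", "Googlebot"):
        print(bot, is_allowed(bot, page))
```

Bots that aren't named in the file, such as ordinary search engine crawlers, stay allowed, because there is no catch-all group for them.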

Step 2: Add HTTP Headers/Meta Tags

Unfortunately, not all bots respond to robots.txt. Img2dataset is one that recently gained some notoriety when a site owner posted in its issue queue after the bot brought his site down, asking that the bot be opt-in or at least respect robots.txt. He received a rather rude reply from the img2dataset developer. It's covered in Vice's An AI Scraping Tool Is Overwhelming Websites with Traffic.

Img2dataset requires a header tag to keep it away. (Not surprisingly, this is often a more complicated task than updating a robots.txt file. I don't think that's accidental. This is where I got stuck today in working on my Drupal site.) The header tags are "noai" and "noimageai." These function like the more familiar "noindex" and "nofollow" meta tags. When Russa and I were researching this today, we did not find a lot of information on "noai" or "noimageai," so I suspect they are very new. We used the procedure for adding "noindex" or "nofollow" and swapped in "noai" and "noimageai," and it worked for us.

Header meta tags are the same strategy DeviantArt is using to allow artists to opt out of AI scraping; artist Aimee Cozza has more in What Is DeviantArt's New "noai" and "noimageai" Meta Tag and How to Install It. Aimee's blog also has directions for how to use this strategy on WordPress, SquareSpace, Weebly, and Wix sites.

In my research today, I discovered that some webhosts provide tools for adding this code to your header through a form on the site. Check your host's knowledge base to see if you have that option.

You can also use .htaccess or add the tag directly into the HTML in the <head> section. .htaccess makes sense if you want to use the "noai" and "noimageai" tag across your entire site. The HTML solution makes sense if you want to exclude AI crawlers from specific pages.

Here are some resources on how to do this for "noindex" and "nofollow"; just swap in "noai" and "noimageai":

HubSpot, Using Noindex, Nofollow HTML Metatags: How to Tell Google Not to Index a Page in Search (very comprehensive and covers both the .htaccess and HTML solutions)

Google Search Documentation, Block Search Indexing with noindex (both .htaccess and HTML)

AngryStudio, Add noindex and nofollow to Whole Website Using htaccess

Perficient, How to Implement a NoIndex Tag (HTML)
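Once the tag is in place, a short script can confirm that a page really carries it. This is a minimal sketch using only Python's standard library. It assumes the tag takes the same form as a "noindex" robots meta tag, with "noai" and "noimageai" in the content attribute. The sample page and the `robots_directives` helper are made up for illustration.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives listed in <meta name="robots" content="...">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html):
    """Return the set of robots directives found in an HTML document."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page using the assumed tag format.
SAMPLE_PAGE = """<html><head>
<meta name="robots" content="noai, noimageai">
</head><body>Hello</body></html>"""

if __name__ == "__main__":
    found = robots_directives(SAMPLE_PAGE)
    print("noai" in found, "noimageai" in found)
```

This only inspects the HTML. If you set the tag through .htaccess instead, it is sent as a response header and won't show up in the page source.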

Finally, all of this is contingent on web scrapers following the rules and etiquette of the web. As we know, many do not. Sprinkled amid the many articles I read today on blocking AI scrapers were articles on how to override blocks when scraping the web.

This will also, I suspect, be something of a game of whack-a-mole. As the img2dataset case illustrates, the previous etiquette around robots.txt was ignored in favor of a more complicated opt-out, one that many site owners either won't be aware of or won't have time/skill to implement. I would not be surprised, as the "noai" and "noimageai" tags gain traction, to see bots demanding that site owners jump through a new, different, higher, and possibly fiery hoop in order to protect the content on their sites from AI scraping. These folks stand to make a lot of money off this, which doesn't inspire me with confidence that withholding our work from their grubby hands will be an endeavor that they make easy for us.


Text

What is the best way to optimize my website for search engines?

Optimizing Your Website for Search Engines:

Keyword Research and Planning

Identify relevant keywords and phrases for your content

Use tools like Google Keyword Planner, Ahrefs, or SEMrush to find keywords

Plan content around target keywords

On-Page Optimization

Title Tags: Write unique, descriptive titles for each page

Meta Descriptions: Write compelling, keyword-rich summaries for each page

Header Tags: Organize content with H1, H2, H3, etc. headers

Content Optimization: Use keywords naturally, aim for 1-2% density

URL Structure: Use clean, descriptive URLs with target keywords
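The 1-2% density figure under Content Optimization is simple arithmetic: keyword occurrences divided by total words, times 100. Here is a rough Python sketch. It assumes a single-word keyword and a simple word-splitting rule, and the `keyword_density` name is just for illustration.

```python
import re


def keyword_density(text, keyword):
    """Return how often keyword appears, as a percentage of all words in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word == keyword.lower())
    return 100.0 * hits / len(words)


if __name__ == "__main__":
    sample = "Fresh coffee beans make better coffee at home every single morning."
    print(f"{keyword_density(sample, 'coffee'):.1f}%")
```

Very short texts give inflated percentages, so a number like this only means something on a full page of copy.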

Technical Optimization

Page Speed: Ensure fast loading times (under 3 seconds)

Mobile-Friendliness: Ensure responsive design for mobile devices

SSL Encryption: Install an SSL certificate for secure browsing

XML Sitemap: Create and submit a sitemap to Google Search Console

Robots.txt: Optimize crawling and indexing with a robots.txt file
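For the XML Sitemap item, a bare-bones sitemap is a list of page URLs wrapped in the sitemaps.org XML format. The Python sketch below builds one with the standard library. The `build_sitemap` helper and the example.com URLs are placeholders, and optional fields such as last-modified dates are left out.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        "https://example.com/",
        "https://example.com/about",
    ]))
```

Save the output as sitemap.xml in the site root, then submit its URL in Google Search Console.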

Content Creation and Marketing

High-Quality Content: Create informative, engaging, and valuable content

Content Marketing: Share content on social media, blogs, and guest posts

Internal Linking: Link to relevant pages on your website

Image Optimization: Use descriptive alt tags and file names

Link Building and Local SEO

Backlinks: Earn high-quality backlinks from authoritative sources

Local SEO: Claim and optimize Google My Business listing

NAP Consistency: Ensure consistent name, address, and phone number across web

Analytics and Tracking

Google Analytics: Install and track website analytics

Google Search Console: Monitor search engine rankings and traffic

Track Keyword Rankings: Monitor target keyword rankings


Text

What is robots.txt and what is it used for?

Robots.txt is a text file that website owners create to instruct web robots (also known as web crawlers or spiders) how to crawl pages on their website. It is a part of the Robots Exclusion Protocol (REP), which is a standard used by websites to communicate with web crawlers.

The robots.txt file typically resides in the root directory of a website and contains directives that specify which parts of the website should not be accessed by web crawlers. These directives can include instructions to allow or disallow crawling of specific directories, pages, or types of content.

Webmasters use robots.txt for various purposes, including:

Controlling Access: Website owners can use robots.txt to control which parts of their site are accessible to search engine crawlers. For example, they may want to prevent crawlers from crawling certain pages or directories that contain sensitive information or duplicate content.

Crawl Efficiency: By specifying which pages or directories should not be crawled, webmasters can help search engines focus their crawling efforts on the most important and relevant content on the site. This can improve crawl efficiency and ensure that search engines index the most valuable content.

Preserving Bandwidth: Crawlers consume server resources and bandwidth when accessing a website. By restricting access to certain parts of the site, webmasters can reduce the load on their servers and conserve bandwidth.

Privacy: Robots.txt can be used to prevent search engines from indexing pages that contain private or confidential information that should not be made publicly accessible.

It's important to note that while robots.txt can effectively instruct compliant web crawlers, it does not serve as a security measure. Malicious bots or those that do not adhere to the Robots Exclusion Protocol may still access content prohibited by the robots.txt file. Therefore, sensitive or confidential information should not solely rely on robots.txt for protection.
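To make the directives concrete, here is a small Python sketch that parses an example robots.txt with the standard library and checks which paths a compliant crawler may fetch. The file contents, the "ExampleBot" name, and the example.com domain are all made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: every crawler is kept out of two directories.
EXAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /tmp/
"""


def crawl_allowed(path, user_agent="ExampleBot"):
    """Return True if a compliant crawler may fetch the given path."""
    parser = RobotFileParser()
    parser.parse(EXAMPLE_ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, "https://example.com" + path)


if __name__ == "__main__":
    for path in ("/blog/post", "/private/report.html", "/tmp/cache.txt"):
        print(path, crawl_allowed(path))
```

The parser only reports what the file asks for. As noted above, a crawler that ignores the protocol is not stopped by it.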


Text

What are the essential elements of a successful technical SEO strategy?

A successful technical SEO strategy includes the following elements:

Website Crawlability: Ensure search engines can access and navigate your site. Optimize robots.txt files and XML sitemaps to guide crawlers effectively.

Mobile Optimization: Adopt a responsive design and prioritize mobile performance to meet Google’s mobile-first indexing requirements.

Site Speed Optimization: Optimize images, use a Content Delivery Network (CDN), and leverage browser caching to improve load times.

HTTPS Implementation: Secure your site with an SSL certificate to enhance trust and ranking potential.

Structured Data Markup: Use schema.org to provide additional context to your content, enabling rich snippets in search results.

URL Structure: Maintain clean, keyword-rich URLs with a logical hierarchy.

Canonical Tags: Avoid duplicate content issues by implementing canonical tags to indicate the preferred version of your pages.

Error Handling: Fix broken links, 404 errors, and redirect chains that could hinder user experience and SEO.

Internal Linking: Build a strong internal linking structure to distribute link equity and improve crawl efficiency.

Core Web Vitals: Optimize for Google’s performance metrics, focusing on loading speed, interactivity, and visual stability.

A well-rounded strategy ensures that both users and search engines can easily access and engage with your website.
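As one illustration of the Structured Data Markup item, schema.org data is commonly embedded as a JSON-LD script tag in the page's HTML. The Python sketch below generates such a tag for an article. The `article_json_ld` helper and the sample values are hypothetical, and a real page would likely carry more properties than the few shown here.

```python
import json


def article_json_ld(headline, author_name, date_published):
    """Return a JSON-LD script tag describing an article with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
    }
    payload = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(article_json_ld("What Is robots.txt?", "Jane Doe", "2024-01-15"))
```

The output goes inside the page's HTML. This sketch assumes the values contain no HTML of their own, so escape them first if they come from user input.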


Text

Oekaki updatez...

Monster Kidz Oekaki is still up and i'd like to keep it that way, but i need to give it some more attention and keep people updated on what's going on/what my plans are for it. so let me jot some thoughts down...

data scraping for machine learning: this has been a concern for a lot of artists as of late, so I've added a robots.txt file and an ai.txt file (as per the opt-out standard proposed by Spawning.ai) to the site in an effort to keep out as many web crawlers for AI as possible. the site will still be indexed by search engines and the Internet Archive. as an additional measure, later tonight I'll try adding "noai", "noimageai", and "noml" HTML meta tags to the site (this would probably be quick and easy to do but i'm soooo sleepy 🛌)

enabling uploads: right now, most users can only post art by drawing in one of the oekaki applets in the browser. i've already given this some thought for a while now, but it seems like artist-oriented spaces online have been dwindling lately, so i'd like to give upload privileges to anyone who's already made a drawing on the oekaki and make a google form for those who haven't (just to confirm who you are/that you won't use the feature maliciously). i would probably set some ground rules like "don't spam uploads"

rules: i'd like to make the rules a little less anal. like, ok, it's no skin off my ass if some kid draws freddy fazbear even though i hope scott cawthon's whole empire explodes. i should also add rules pertaining to uploads, which means i'm probably going to have to address AI generated content. on one hand i hate how, say, deviantart's front page is loaded with bland, tacky, "trending on artstation"-ass AI generated shit (among other issues i have with the medium) but on the other hand i have no interest in trying to interrogate someone about whether they're a Real Artist or scream at someone with the rage of 1,000 scorned concept artists for referencing an AI generated image someone else posted, or something. so i'm not sure how to tackle this tastefully

"Branding": i'm wondering if i should present this as less of a UTDR Oekaki and more of a General Purpose Oekaki with a monster theming. functionally, there wouldn't be much of a difference, but maybe the oekaki could have its own mascot

fun stuff: is having a poll sort of "obsolete" now because of tumblr polls, or should I keep it...? i'd also like to come up with ideas for Things To Do like weekly/monthly art prompts, or maybe games/events like a splatfest/artfight type thing. if you have any ideas of your own, let me know

boring stuff: i need to figure out how to set up automated backups, so i guess i'll do that sometime soon... i should also update the oekaki software sometime (this is scary because i've made a lot of custom edits to everything)

Money: well this costs money to host so I might put a ko-fi link for donations somewhere... at some point... maybe.......
