#Ben’s not

Text

Just another Treasure Planet X Wings of Fire doodle

This is James and Ben rn

#wings of fire#dragons#james pleiades hawkins#ben robot#ben#treasure planet#design#art#sketches#doodles#don’t question the color#hes mad#Ben’s not#ben is very loud

75 notes

Text

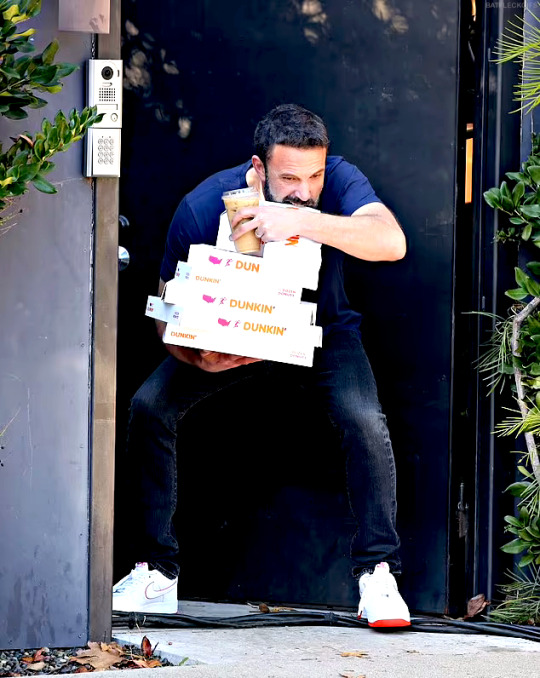

one time I used the ben affleck smoking reaction image in the family group chat and my mom replied with the funniest possible response which was: "mommy doesn't know who the guy is???" and that phrase has not left my brain since. I'll see blorbos on my dash that I don't recognize and I'll be like well it seems mommy doesn't know who the guy is.

#the funny thing is she DOES know who ben affleck is#mom you're the one who made me watch good will hunting!!!#ah well. mommy doesn't know who the guy is#I'm gonna start saying that as if it's a popular meme phrase that everyone knows. maybe i can gaslight pple into using it#....you know what. please reblog this actually. it's what mommy deserves

39K notes

Text

BEN AFFLECK

Jan 04 2024

#ben affleck#benaffleckedit#baffleckedit#affleckgifs#dilfsource#mensource#dccastedit#listen#lmao#mine.#misc: candids.#THERE'S ONE MORE

58K notes

Text

I'm back with another crack meme, let's see how well this one does

If it does as well as my New Yorker Post then I'll make a navigation list for the memes :D

Bonus:

#miles morales#hobie brown#gwen stacy#pavitr prabhakar#miguel o'hara#peter parker#peter b parker#margo kess#ganke lee#jessica drew#ben reilly#johnathan ohnn#spot#rio morales#jeff morales#spiderman#atsv#across the spiderverse#spiderverse

75K notes

Text

you're laughing. The umbrella academy's final season destroyed every character's personal growth and semi-healed traumas, left huge plot holes, completely abandoned some of its most beloved side characters that were crucial in previous seasons and you're laugh-oh. You're crying. My bad. Go ahead. Let it out. Understandable.

#the umbrella academy#tua#netflix#at least we got baby shark 😶#five hargreeves#where is#pogo#grace#HERB#SLOANE???!#ray???#sissy??????#vanya hargreeves#lila pitts#diego hargreeves#klaus hargreeves#allison hargreeves#reginald hargreeves#luther hargreeves#ben hargreeves#fiveya#kliego#viktor hargreeves

10K notes

Text

happy pride. or whatever

#creepypasta#creepypasta fanart#pride#lgbtq#gay#jeff the killer#eyeless jack#ben drowned#slenderman#ticci toby#the puppeteer#laughing jack#laughing jill#the rake#seed eater#sonic.exe#sally williams#homicidal liu#im so tired#this is entirely /j please dont take this seriously#some of these are real headcanons but im not saying which good fucking luck#my art#doodleboot

13K notes

Text

The darkness made him say that

12K notes

Text

INTERVIEW WITH THE VAMPIRE 2.08: And That's The End of It. There's Nothing Else IWTVTwT Version.

#iwtvedit#interview with the vampire#iwtv#amc iwtv#tvgifs#cinemapix#loustat#devil's minion#iwtv lestat#iwtv daniel#iwtv armand#lestat de lioncourt#louis de pointe du lac#iwtv loustat#ldpdl#daniel molloy#the vampire lestat#the vampire armand#filmtvdaily#filmtvcentral#dailytvfilmgifs#vampterview#sam reid#jacob anderson#assad zaman#ben daniels#eric bogosian#**#iwtv s2#text post

13K notes

Text

happy umbrella academy eve!! (redrew this)

#the umbrella academy#TUA#luther hargreeves#diego hargreeves#allison hargreeves#klaus hargreeves#five hargreeves#ben hargreeves#viktor hargreeves#lila pitts#artovna

13K notes

Text

“that character is a war criminal” that character is from a fictional fantasy world and did not attend the geneva convention

#this is my favorite tweet i ever made#fictional villains#the darkling#aleksander morovoza#anakin skywalker#halbrand#daemon targaryen#daenerys targeryan#house targaryen#rhysand#kylo ren#ben solo#jess speaks

87K notes

Text

Ben Conor (@bconor)

10K notes

Text

i am a tragedy enjoyer before i am human

#the raven cycle#noah czerny#richard gansey#the ballad of songbirds and snakes#sejanus plinth#lucy gray baird#the haunting of hill house#nellie crain#olivia crain#the haunting of bly manor#hannah grose#rebecca jessel#yellowjackets#jackie taylor#natalie scatorccio#laura lee#the umbrella academy#ben hargreeves#the gilded wolves#tristan maréchal#idk if he really haunted the narrative but he definitely impacted things!#la casa de papel#tokio lcdp#travis martinez#always forget one 😔

39K notes

Photo

THE UMBRELLA ACADEMY — Final Season, August 8 2024

#the umbrella academy#tua#umbrella academy#diego hargreeves#ben hargreeves#allison hargreeves#netflix#tuaedit#umbrelleacademyedit#netflixedit#tvedit#ben baby you look like shit i love it <333

11K notes

Text

source

#saw those pictures and immediately thought about my writing process#have a shitte meme i guess#writing#fanfiction#ben affleck#5k#8k#20k

20K notes

Text

#siblings

#tuaedit#theumbrellaacademyedit#umbrellaacademyedit#tua#the umbrella academy#umbrella academy#thcrin#tuserkaz#klaus hargreeves#ben hargreeves#allison hargreeves#luther hargreeves#diego hargreeves#tua spoilers#umbrella academy spoilers#five hargreeves#viktor hargreeves#lila pitts#and we're back lol#mystuff#1k#5k#10k

10K notes

Text

A new tool lets artists add invisible changes to the pixels in their art before they upload it online so that if it’s scraped into an AI training set, it can cause the resulting model to break in chaotic and unpredictable ways.

The tool, called Nightshade, is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission. Using it to “poison” this training data could damage future iterations of image-generating AI models, such as DALL-E, Midjourney, and Stable Diffusion, by rendering some of their outputs useless—dogs become cats, cars become cows, and so forth. MIT Technology Review got an exclusive preview of the research, which has been submitted for peer review at computer security conference Usenix.

AI companies such as OpenAI, Meta, Google, and Stability AI are facing a slew of lawsuits from artists who claim that their copyrighted material and personal information was scraped without consent or compensation. Ben Zhao, a professor at the University of Chicago, who led the team that created Nightshade, says the hope is that it will help tip the power balance back from AI companies towards artists, by creating a powerful deterrent against disrespecting artists’ copyright and intellectual property. Meta, Google, Stability AI, and OpenAI did not respond to MIT Technology Review’s request for comment on how they might respond.

Zhao’s team also developed Glaze, a tool that allows artists to “mask” their own personal style to prevent it from being scraped by AI companies. It works in a similar way to Nightshade: by changing the pixels of images in subtle ways that are invisible to the human eye but manipulate machine-learning models to interpret the image as something different from what it actually shows.

Continue reading article here

#Ben Zhao and his team are absolute heroes#artificial intelligence#plagiarism software#more rambles#glaze#nightshade#ai theft#art theft#gleeful dancing

22K notes
