#linux commands tutorial

Essential Linux Commands: Mastering the Basics of Command-Line Operations

Linux, a powerful and versatile operating system, offers a command-line interface that empowers users with unprecedented control over their systems. While the graphical user interface (GUI) provides ease of use, understanding the fundamental Linux commands is essential for anyone seeking to harness the full potential of this open-source platform. In this article, we will explore some of the…

Essential Linux Commands help users navigate, manage files, and control system processes. Here are some key ones:

ls – List directory contents

cd – Change directory

pwd – Show current directory path

mkdir – Create a new directory

rm – Remove files or directories

cp – Copy files or directories

mv – Move or rename files

cat – View file contents

grep – Search text in files

chmod – Change file permissions

top – Monitor system processes

ps – Display running processes

kill – Terminate a process

sudo – Execute commands as a superuser

Mastering these commands boosts productivity and system control!
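To make the list above concrete, here is a minimal sketch that exercises several of those commands inside a throwaway directory. The function name `basic_commands_demo` and the file names are made up for illustration; nothing outside the scratch directory is touched.

```shell
# Hypothetical helper: runs a few basic commands in a scratch directory.
# The body runs in a subshell ( ... ) so the cd does not affect your session.
basic_commands_demo() (
    workdir="$(mktemp -d)" || exit 1         # scratch directory
    cd "$workdir" || exit 1
    mkdir notes                              # mkdir: create a directory
    printf 'alpha\nbeta\ngamma\n' > notes/list.txt
    cp notes/list.txt notes/copy.txt         # cp: copy a file
    mv notes/copy.txt notes/renamed.txt      # mv: rename the copy
    ls notes                                 # ls: list directory contents
    grep -c 'beta' notes/renamed.txt         # grep: count lines containing "beta"
    chmod 600 notes/renamed.txt              # chmod: owner read/write only
    cd / && rm -r "$workdir"                 # rm: delete the scratch directory
)

basic_commands_demo
```

Running it prints the two file names from `ls` followed by the match count from `grep`.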

Linux Administration: The Complete Linux Bootcamp for 2024

This Linux Administration course covers every major topic, including using AI and Natural Language to administer Linux systems (ChatGPT & ShellGPT), all important Linux commands, the Linux Filesystem, File Permissions, Process Management, User Account Management, Software Management, Networking in Linux, System Administration, Bash Scripting, Containerizing Apps with Podman, Iptables/Netfilter Firewall, Linux Security and many more!

I’m constantly updating the course to be the most comprehensive, yet straightforward, Linux Administration course on the market!

This course IS NOT like any other Linux Administration course you can take online. At the end of this course, you will MASTER the key concepts and you will become an effective Linux System Engineer or Administrator.

This is a brand new Linux Administration course that is constantly updated to teach you the skills the future will require.

The world is changing constantly and at a fast pace! The technology-driven future in which we’ll live is filled with promise but also challenges. Linux powers the servers of the Internet, and by enrolling in this course you’ll master the essential Linux concepts and commands. This Linux Administration course is really different! You’ll learn what matters and get the skills to get ahead and gain an edge.

Mastering Regular Expressions and the grep Command in Linux | Linux Tuto...

Welcome to our Linux tutorial where we dive deep into Regular Expressions (regex) and the powerful grep command! Whether you're a beginner o

Introduction to Linux for DevOps: Why It’s Essential

Linux serves as the backbone of most DevOps workflows and cloud infrastructures. Its open-source nature, robust performance, and extensive compatibility make it the go-to operating system for modern IT environments. Whether you're deploying applications, managing containers, or orchestrating large-scale systems, mastering Linux is non-negotiable for every DevOps professional.

Why Linux is Critical in DevOps

1. Ubiquity in Cloud Environments
   - Most cloud platforms, such as AWS, Azure, and Google Cloud, use Linux-based environments for their services.
   - Tools like Kubernetes and Docker are designed to run seamlessly on Linux systems.

2. Command-Line Mastery
   - Linux empowers DevOps professionals with powerful command-line tools to manage servers, automate processes, and troubleshoot issues efficiently.

3. Flexibility and Automation
   - The ability to script and automate tasks in Linux reduces manual effort, enabling faster and more reliable deployments.

4. Open-Source Ecosystem
   - Linux integrates with numerous open-source DevOps tools like Jenkins, Ansible, and Terraform, making it an essential skill for streamlined workflows.

Key Topics for Beginners

- Linux Basics
  - What is Linux?
  - Understanding Linux file structures and permissions.
  - Common Linux distributions (Ubuntu, CentOS, Red Hat Enterprise Linux).

- Core Linux Commands
  - File and directory management: `ls`, `cd`, `cp`, `mv`.
  - System monitoring: `top`, `df`, `free`.
  - Networking basics: `ping`, `ifconfig`, `netstat`.

- Scripting and Automation
  - Writing basic shell scripts.
  - Automating tasks with `cron` and `at`.

- Linux Security
  - Managing user permissions and roles.
  - Introduction to firewalls and secure file transfers.

Why You Should Learn Linux for DevOps

- Cost-Efficiency: Linux is free and open-source, making it a cost-effective solution for both enterprises and individual learners.
- Career Opportunities: Proficiency in Linux is a must-have skill for DevOps roles, enhancing your employability.
- Scalability: Whether managing a single server or a complex cluster, Linux provides the tools and stability to scale effortlessly.

Hands-On Learning

- Set up a Linux virtual machine or cloud instance.
- Practice essential commands and file operations.
- Write and execute your first shell script.
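As one possible "first shell script", the sketch below reports how full a filesystem is using `df`, one of the monitoring commands listed earlier. The function names `usage_percent` and `check_disk` and the 90% threshold are illustrative assumptions, not part of any standard tool.

```shell
#!/bin/sh
# usage_percent PATH
# Prints the capacity figure (digits only) that df reports for PATH.
usage_percent() {
    # -P forces the portable one-line-per-filesystem layout; column 5 is capacity.
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# check_disk PATH LIMIT
# Prints a warning when usage is at or above LIMIT percent, otherwise an OK line.
check_disk() {
    used="$(usage_percent "$1")"
    if [ "$used" -ge "$2" ]; then
        echo "WARNING: $1 is ${used}% full"
    else
        echo "OK: $1 is ${used}% full"
    fi
}

check_disk / 90
```

Saved to a file and made executable with `chmod +x`, a script like this is also a natural candidate for scheduling with `cron` once the basics feel comfortable.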

Who Should Learn Linux for DevOps?

- Aspiring DevOps engineers starting their career journey.
- System administrators transitioning into cloud and DevOps roles.
- Developers aiming to improve their understanding of server environments.

How to Navigate File System in Linux Terminal

This is a basic introduction to Linux commands and how you can navigate the file system using the terminal (command line).

im disgusted with myself but im going to have to learn how to operate linux, my worst nightmare has come true

#it’s not that i hate linux#i just can’t seem to remember commands#or how to make them work together#i dont think it helps that i got TRAUMATIZED by a teacher#he used to give us graded assignements during class#they lasted hours#and we had to install servers and such with commands we didn’t even learn#he gave us youtube tutorials#results: i didn’t learn shit#and now im lost at work lmao#like i know i don’t need to learn but i feel like i kind of do

dusted off my cohost and bluesky to follow people since i saw some people linking their accounts here... i don't currently have any plans to go anywhere, but my username is spellbot or spellbot_ on most sites (except neopets, where i'm fudgelade)

also if you're worried about people you follow migrating to sites you don't wanna join, check out my pinned post about social media RSS feeds. if you're interested in making your own web site and buying a domain for it, check out namesilo or namecheap. and you can use a custom domain on a neocities site if you become a neocities supporter for $5 a month (and if you don't care about custom domains you can just use neocities for free)

#if you want php/mysql i would recommend digitalocean but with a disclaimer that it's kind of for advanced users#like you would be managing the server yourself in a command line. so it's cheaper but you gotta wrestle in the mud with linux#they also got a free 60-day trial + tutorials if you want to try it out to decide if you HATE mud wrestling with linux! YECCCK!!!! PTOOEY#silly storie

Nmap Ping Sweep: Home Lab Network Ping Scan

There is perhaps not a better known network scan tool for cybersecurity than Nmap. It is an excellent tool I have used for quite some time when you have a rogue device on a network and you want to understand what type of device it is. Nmap provides this functionality along with many others. Let’s look at the Nmap Ping Sweep and see how we can use it as a network vulnerability ping scan to…

How I ditched streaming services and learned to love Linux: A step-by-step guide to building your very own personal media streaming server (V2.0: REVISED AND EXPANDED EDITION)

This is a revised, corrected and expanded version of my tutorial on setting up a personal media server that previously appeared on my old blog (donjuan-auxenfers). I expect that that post is still making the rounds (hopefully with my addendum on modifying group share permissions in Ubuntu to circumvent 0x8007003B "Unexpected Network Error" messages in Windows 10/11 when transferring files) but I have no way of checking. Anyway this new revised version of the tutorial corrects one or two small errors I discovered when rereading what I wrote, adds links to all products mentioned and is just more polished generally. I also expanded it a bit, pointing more adventurous users toward programs such as Sonarr/Radarr/Lidarr and Overseerr which can be used for automating user requests and media collection.

So then, what is this tutorial? This is a tutorial on how to build and set up your own personal media server using Ubuntu as an operating system and Plex (or Jellyfin) to not only manage your media, but to also stream that media to your devices both at home and abroad anywhere in the world where you have an internet connection. Its intent is to show you how building a personal media server and stuffing it full of films, TV, and music that you acquired through ~~indiscriminate and voracious media piracy~~ various legal methods will free you to completely ditch paid streaming services. No more will you have to pay for Disney+, Netflix, HBOMAX, Hulu, Amazon Prime, Peacock, CBS All Access, Paramount+, Crave or any other streaming service that is not named Criterion Channel. Instead whenever you want to watch your favourite films and television shows, you’ll have your own personal service that only features things that you want to see, with files that you have control over. And for music fans out there, both Jellyfin and Plex support music streaming, meaning you can even ditch music streaming services. Goodbye Spotify, YouTube Music, Tidal and Apple Music, welcome back unreasonably large MP3 (or FLAC) collections.

On the hardware front, I’m going to offer a few options catered towards different budgets and media library sizes. Getting a media server up and running using this guide will cost you anywhere from $450 CAD/$325 USD at the low end to $1500 CAD/$1100 USD at the high end (it could go higher). My server was priced closer to the higher figure, but I went and got a lot more storage than most people need. If that seems like a little much, consider for a moment, do you have a roommate, a close friend, or a family member who would be willing to chip in a few bucks towards your little project provided they get access? Well that's how I funded my server. It might also be worth thinking about the cost over time, i.e. how much you spend yearly on subscriptions vs. a one time cost of setting up a server. Additionally there's just the joy of being able to scream "fuck you" at all those show cancelling, library deleting, hedge fund vampire CEOs who run the studios through denying them your money. Drive a stake through David Zaslav's heart.
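The cost-over-time comparison mentioned above comes down to simple arithmetic. The sketch below is only an illustration: the function name `months_to_break_even` is made up, the hardware figures are the guide's own low-end and high-end USD estimates, and the $40 monthly subscription total is a hypothetical number you should replace with your own.

```shell
# months_to_break_even HARDWARE_COST MONTHLY_SUBSCRIPTIONS
# Prints the number of whole months after which the one-time cost is recovered.
months_to_break_even() {
    cost=$1; monthly=$2
    # Integer ceiling division: (a + b - 1) / b
    echo $(( (cost + monthly - 1) / monthly ))
}

# 325 and 1100 are the guide's USD estimates; 40 is a hypothetical monthly bill.
months_to_break_even 325 40
months_to_break_even 1100 40
```

With those assumed inputs the low-end build pays for itself in 9 months and the high-end build in 28.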

On the software side I will walk you step-by-step through installing Ubuntu as your server's operating system, configuring your storage as a RAIDz array with ZFS, sharing your zpool to Windows with Samba, running a remote connection between your server and your Windows PC, and then a little about getting started with Plex/Jellyfin. Every terminal command you will need to input will be provided, and I even share a custom #bash script that will make used vs. available drive space on your server display correctly in Windows.

If you have a different preferred flavour of Linux (Arch, Manjaro, Redhat, Fedora, Mint, OpenSUSE, CentOS, Slackware etc. et. al.) and are aching to tell me off for being basic and using Ubuntu, this tutorial is not for you. The sort of person with a preferred Linux distro is the sort of person who can do this sort of thing in their sleep. Also I don't care. This tutorial is intended for the average home computer user. This is also why we’re not using a more exotic home server solution like running everything through Docker Containers and managing it through a dashboard like Homarr or Heimdall. While such solutions are fantastic and can be very easy to maintain once you have it all set up, wrapping your brain around Docker is a whole thing in and of itself. If you do follow this tutorial and had fun putting everything together, then I would encourage you to return in a year’s time, do your research and set up everything with Docker Containers.

Lastly, this is a tutorial aimed at Windows users. Although I was a daily user of OS X for many years (roughly 2008-2023) and I've dabbled quite a bit with various Linux distributions (mostly Ubuntu and Manjaro), my primary OS these days is Windows 11. Many things in this tutorial will still be applicable to Mac users, but others (e.g. setting up shares) you will have to look up for yourself. I doubt it would be difficult to do so.

Nothing in this tutorial will require feats of computing expertise. All you will need is a basic computer literacy (i.e. an understanding of what a filesystem and directory are, and a degree of comfort in the settings menu) and a willingness to learn a thing or two. While this guide may look overwhelming at first glance, it is only because I want to be as thorough as possible. I want you to understand exactly what it is you're doing, I don't want you to just blindly follow steps. If you half-way know what you’re doing, you will be much better prepared if you ever need to troubleshoot.

Honestly, once you have all the hardware ready it shouldn't take more than an afternoon or two to get everything up and running.

(This tutorial is just shy of seven thousand words long so the rest is under the cut.)

Step One: Choosing Your Hardware

Linux is a lightweight operating system; depending on the distribution there's close to no bloat. There are recent distributions available at this very moment that will run perfectly fine on a fourteen year old i3 with 4GB of RAM. Moreover, running Plex or Jellyfin isn’t resource intensive in 90% of use cases. All this is to say, we don’t require an expensive or powerful computer. This means that there are several options available: 1) use an old computer you already have sitting around but aren't using, 2) buy a used workstation from eBay, or, what I believe to be the best option, 3) order an N100 Mini-PC from AliExpress or Amazon.

Note: If you already have an old PC sitting around that you’ve decided to use, fantastic, move on to the next step.

When weighing your options, keep a few things in mind: the number of people you expect to be streaming simultaneously at any one time, the resolution and bitrate of your media library (4k video takes a lot more processing power than 1080p) and most importantly, how many of those clients are going to be transcoding at any one time. Transcoding is what happens when the playback device does not natively support direct playback of the source file. This can happen for a number of reasons, such as the playback device's native resolution being lower than the file's internal resolution, or because the source file was encoded in a video codec unsupported by the playback device.
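The two transcoding triggers described above can be sketched as a small decision function. This is a simplified model under stated assumptions: the function name `needs_transcode` and its arguments are hypothetical, and real Plex or Jellyfin clients weigh more factors than resolution and video codec.

```shell
# needs_transcode FILE_CODEC FILE_HEIGHT DEVICE_HEIGHT SUPPORTED_CODECS
# Returns 0 (true) when the server would have to transcode, 1 when direct play works.
# SUPPORTED_CODECS is a space-separated list such as "h264 hevc".
needs_transcode() {
    file_codec=$1; file_height=$2; device_height=$3; supported=$4
    # Reason 1: the file's resolution is higher than the device's native resolution.
    [ "$file_height" -gt "$device_height" ] && return 0
    # Reason 2: the device cannot decode the file's video codec.
    case " $supported " in
        *" $file_codec "*) return 1 ;;   # codec supported: direct play
        *) return 0 ;;                   # codec unsupported: transcode
    esac
}

# A 4k HEVC file sent to a 1080p device that supports H.264 and HEVC.
if needs_transcode hevc 2160 1080 "h264 hevc"; then
    echo "transcode"
else
    echo "direct play"
fi
```

The example call prints `transcode` because of the resolution mismatch, even though the codec itself is supported.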

Ideally we want any transcoding to be performed by hardware. This means we should be looking for a computer with an Intel processor with Quick Sync. Quick Sync is a dedicated core on the CPU die designed specifically for video encoding and decoding. This specialized hardware makes for highly efficient transcoding both in terms of processing overhead and power draw. Without these Quick Sync cores, transcoding must be brute forced through software. This takes up much more of a CPU’s processing power and requires much more energy. But not all Quick Sync cores are created equal and you need to keep this in mind if you've decided either to use an old computer or to shop for a used workstation on eBay.

Any Intel processor from second generation Core (Sandy Bridge circa 2011) onward has Quick Sync cores. It's not until 6th gen (Skylake), however, that the cores support the H.265 HEVC codec. Intel’s 10th gen (Comet Lake) processors introduce support for 10bit HEVC and HDR tone mapping. And the recent 12th gen (Alder Lake) processors brought with them hardware AV1 decoding. As an example, while an 8th gen (Coffee Lake) i5-8500 will be able to hardware transcode a H.265 encoded file, it will fall back to software transcoding if given a 10bit H.265 file. If you’ve decided to use that old PC or to look on eBay for an old Dell Optiplex keep this in mind.

Note 1: The price of old workstations varies wildly and fluctuates frequently. If you get lucky and go shopping shortly after a workplace has liquidated a large number of their workstations you can find deals for as low as $100 on a barebones system, but generally an i5-8500 workstation with 16gb RAM will cost you somewhere in the area of $260 CAD/$200 USD.

Note 2: The AMD equivalent to Quick Sync is called Video Core Next, and while it's fine, it's not as efficient and not as mature a technology. It was only introduced with the first generation Ryzen CPUs and it only got decent with their newest CPUs, and we want something cheap.

Alternatively you could forgo having to keep track of what generation of CPU is equipped with Quick Sync cores that feature support for which codecs, and just buy an N100 mini-PC. For around the same price as a used workstation, or less, you can pick up a mini-PC with an Intel N100 processor. The N100 is a four-core processor based on the 12th gen Alder Lake architecture and comes equipped with the latest revision of the Quick Sync cores. These little processors offer astounding hardware transcoding capabilities for their size and power draw. Otherwise they perform on par with an i5-6500, which isn't a terrible CPU. A friend of mine uses an N100 machine as a dedicated retro emulation gaming system and it does everything up to 6th generation consoles just fine. The N100 is also a remarkably efficient chip, it sips power. In fact, the difference between running one of these and an old workstation could work out to hundreds of dollars a year in energy bills depending on where you live.

You can find these Mini-PCs all over Amazon or for a little cheaper on AliExpress. They range in price from $170 CAD/$125 USD for a no name N100 with 8GB RAM to $280 CAD/$200 USD for a Beelink S12 Pro with 16GB RAM. The brand doesn't really matter, they're all coming from the same three factories in Shenzhen, so go for whichever one fits your budget or has features you want. 8GB RAM should be enough, Linux is lightweight and Plex only calls for 2GB RAM. 16GB RAM might result in a slightly snappier experience, especially with ZFS. A 256GB SSD is more than enough for what we need as a boot drive, but going for a bigger drive might allow you to get away with things like creating preview thumbnails for Plex, but it’s up to you and your budget.

The Mini-PC I wound up buying was a Firebat AK2 Plus with 8GB RAM and a 256GB SSD. It looks like this:

Note: If you decide to order a Mini-PC from AliExpress, check which type of power adapter it ships with. The mini-PC I bought came with an EU power adapter and I had to supply my own North American power supply. Thankfully this is a minor issue as barrel plug 30W/12V/2.5A power adapters are easy to find and can be had for $10.

Step Two: Choosing Your Storage

Storage is the most important part of our build. It is also the most expensive. Thankfully it’s also the most easily upgrade-able down the line.

For people with a smaller media collection (4TB to 8TB), a more limited budget, or who will only ever have two simultaneous streams running, I would say that the most economical course of action would be to buy a USB 3.0 8TB external HDD. Something like this one from Western Digital or this one from Seagate. One of these external drives will cost you in the area of $200 CAD/$140 USD. Down the line you could add a second external drive or replace it with a multi-drive RAIDz set up such as detailed below.

If a single external drive is the path for you, move on to step three.

For people with larger media libraries (12TB+), who prefer media in 4k, or who care about data redundancy, the answer is a RAID array featuring multiple HDDs in an enclosure.

Note: If you are using an old PC or used workstation as your server and have the room for at least three 3.5" drives, and as many open SATA ports on your motherboard, you won't need an enclosure, just install the drives into the case. If your old computer is a laptop or doesn’t have room for more internal drives, then I would suggest an enclosure.

The minimum number of drives needed to run a RAIDz array is three, and seeing as RAIDz is what we will be using, you should be looking for an enclosure with three to five bays. I think that four disks makes for a good compromise for a home server. Regardless of whether you go for a three, four, or five bay enclosure, do be aware that in a RAIDz array the space equivalent of one of the drives will be dedicated to parity, leaving a usable fraction of 1 − 1/n of the raw capacity, where n is the number of drives. For example, in a four bay enclosure equipped with four 12TB drives configured as a RAIDz1 array, we would be left with a total of 36TB of usable space (48TB raw size). The reason why we might sacrifice storage space in such a manner will be explained in the next section.
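The capacity arithmetic above is easy to check for other drive counts. The sketch below assumes equal-sized drives and uses a made-up function name, `raidz_usable_tb`; it gives the nominal figure only and ignores filesystem overhead, so the space ZFS actually reports will be somewhat lower.

```shell
# raidz_usable_tb DRIVES SIZE_TB PARITY
# Prints nominal usable capacity in TB for DRIVES equal disks of SIZE_TB each,
# with PARITY disks' worth of space reserved (1 for RAIDz1, 2 for RAIDz2).
raidz_usable_tb() {
    drives=$1; size_tb=$2; parity=$3
    if [ "$drives" -le "$parity" ]; then
        echo "need more than $parity drives" >&2
        return 1
    fi
    echo $(( (drives - parity) * size_tb ))
}

raidz_usable_tb 4 12 1   # four 12TB drives in RAIDz1
raidz_usable_tb 5 12 2   # five 12TB drives with two drives' worth of parity
```

Both calls print 36: a fifth drive spent on a second parity disk adds redundancy without adding usable space.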

A four bay enclosure will cost somewhere in the area of $200 CAD/$140 USD. You don't need anything fancy; we don't need anything with hardware RAID controls (RAIDz is done entirely in software) or even USB-C. An enclosure with USB 3.0 will perform perfectly fine. Don’t worry too much about USB speed bottlenecks. A mechanical HDD will be limited by the speed of its mechanism long before it will be limited by the speed of a USB connection. I've seen decent looking enclosures from TerraMaster, Yottamaster, Mediasonic and Sabrent.

When it comes to selecting the drives, as of this writing, the best value (dollar per gigabyte) are those in the range of 12TB to 20TB. I settled on 12TB drives myself. If 12TB to 20TB drives are out of your budget, go with what you can afford, or look into refurbished drives. I'm not sold on the idea of refurbished drives but many people swear by them.

When shopping for hard drives, search for drives designed specifically for NAS use. Drives designed for NAS use typically have better vibration damping and are designed to be active 24/7. They will also often make use of CMR (conventional magnetic recording) as opposed to SMR (shingled magnetic recording). This nets them a sizable read/write performance bump over typical desktop drives. Seagate Ironwolf and Toshiba NAS are both well regarded brands when it comes to NAS drives. I would avoid Western Digital Red drives at this time. WD Reds were a go to recommendation up until earlier this year when it was revealed that they feature firmware that will throw up false SMART warnings telling you to replace the drive at the three year mark quite often when there is nothing at all wrong with that drive. It will likely even be good for another six, seven, or more years.

Step Three: Installing Linux

For this step you will need a USB thumbdrive of at least 6GB in capacity, an .ISO of Ubuntu, and a way to make that thumbdrive bootable media.

First download a copy of Ubuntu desktop. For best performance we could download the Server release, but for new Linux users I would recommend against it. The server release is strictly command line interface only, and having a GUI is very helpful for most people. Not many people are wholly comfortable doing everything through the command line, I'm certainly not one of them, and I grew up with DOS 6.0. 22.04.3 Jammy Jellyfish is the current Long Term Support release, this is the one to get.

Download the .ISO and then download and install balenaEtcher on your Windows PC. BalenaEtcher is an easy to use program for creating bootable media, you simply insert your thumbdrive, select the .ISO you just downloaded, and it will create a bootable installation media for you.

Once you've made a bootable media and you've got your Mini-PC (or your old PC/used workstation) in front of you, hook it directly into your router with an ethernet cable, and then plug in the HDD enclosure, a monitor, a mouse and a keyboard. Now turn that sucker on and hit whatever key gets you into the BIOS (typically ESC, DEL or F2). If you’re using a Mini-PC check to make sure that the P1 and P2 power limits are set correctly, my N100's P1 limit was set at 10W, a full 20W under the chip's power limit. Also make sure that the RAM is running at the advertised speed. My Mini-PC’s RAM was set at 2333MHz out of the box when it should have been 3200MHz. Once you’ve done that, key over to the boot order and place the USB drive first in the boot order. Then save the BIOS settings and restart.

After you restart you’ll be greeted by Ubuntu's installation screen. Installing Ubuntu is really straightforward: select the "minimal" installation option, as we won't need anything on this computer except for a browser (Ubuntu comes preinstalled with Firefox) and Plex Media Server/Jellyfin Media Server. Also remember to delete and reformat that Windows partition! We don't need it.

Step Four: Installing ZFS and Setting Up the RAIDz Array

Note: If you opted for just a single external HDD skip this step and move onto setting up a Samba share.

Once Ubuntu is installed it's time to configure our storage by installing ZFS to build our RAIDz array. ZFS is a "next-gen" file system that is both massively flexible and massively complex. It's capable of snapshot backup and self healing error correction, and ZFS pools can be configured with drives operating in a supplemental manner alongside the storage vdev (e.g. fast cache, dedicated secondary intent log, hot swap spares etc.). It's also a file system very amenable to fine tuning. Block and sector size are adjustable to use case and you're afforded the option of different methods of inline compression. If you'd like a very detailed overview and explanation of its various features and tips on tuning a ZFS array check out these articles from Ars Technica. For now we're going to ignore all these features and keep it simple, we're going to pull our drives together into a single vdev running in RAIDz which will be the entirety of our zpool, no fancy cache drive or SLOG.

Open up the terminal and type the following commands:

sudo apt update

then

sudo apt install zfsutils-linux

This will install the ZFS utility. Verify that it's installed with the following command:

zfs --version

Now, it's time to check that the HDDs we have in the enclosure are healthy, running, and recognized. We also want to find out their device IDs and take note of them:

sudo fdisk -l

Note: You might be wondering why some of these commands require "sudo" in front of them while others don't. "Sudo" is short for "super user do”. When and where "sudo" is used has to do with the way permissions are set up in Linux. Only the "root" user has the access level to perform certain tasks in Linux. As a matter of security and safety regular user accounts are kept separate from the "root" user. It's not advised (or even possible) to boot into Linux as "root" with most modern distributions. Instead by using "sudo" our regular user account is temporarily given the power to do otherwise forbidden things. Don't worry about it too much at this stage, but if you want to know more check out this introduction.
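One way to see the root/regular-user distinction for yourself is to look at your user ID: `id -u` prints 0 for root and some other number for everyone else. The helper below is a made-up illustration (the name `run_as_root_hint` is not a real utility); it only prints how a command would need to be typed and never runs it.

```shell
# run_as_root_hint COMMAND...
# Prints the command as the current user would need to type it:
# unchanged when already root (UID 0), prefixed with "sudo" otherwise.
run_as_root_hint() {
    if [ "$(id -u)" -eq 0 ]; then
        echo "$*"
    else
        echo "sudo $*"
    fi
}

run_as_root_hint apt update
```

On a normal desktop login this prints the `sudo`-prefixed form, since regular accounts do not run with UID 0.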

If everything is working you should get a list of the various drives detected along with their device IDs which will look like this: /dev/sdc. You can also check the device IDs of the drives by opening the disk utility app. Jot these IDs down as we'll need them for our next step, creating our RAIDz array.

RAIDz is similar to RAID-5. Instead of only striping your data over multiple disks, exchanging redundancy for speed and available space (RAID-0), or mirroring your data by writing two copies of every piece (RAID-1), it writes parity blocks across the disks in addition to striping. This provides a balance of speed, redundancy and available space. If a single drive fails, the parity blocks on the working drives can be used to reconstruct the entire array as soon as a replacement drive is added.
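The idea behind single parity can be shown with a few lines of shell arithmetic. This is a deliberately simplified sketch, not ZFS's actual on-disk layout: three small integers stand in for data blocks, and the parity block is their XOR, so any one missing block can be rebuilt from the others. The variable names and values are arbitrary.

```shell
# Three data "blocks" (small integers stand in for real disk blocks).
d1=173; d2=58; d3=201

# The parity block is the XOR of the data blocks.
parity=$(( d1 ^ d2 ^ d3 ))

# Pretend the disk holding d2 died: XOR the survivors with parity to rebuild it.
rebuilt_d2=$(( d1 ^ d3 ^ parity ))

echo "parity=$parity rebuilt_d2=$rebuilt_d2"
```

The rebuilt value matches the original `d2`. Lose two blocks at once, though, and a single parity value no longer carries enough information to recover them.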

Additionally, RAIDz improves over some of the common RAID-5 flaws. It's more resilient and capable of self healing, as it is capable of automatically checking for errors against a checksum. It's more forgiving in this way, and it's likely that you'll be able to detect when a drive is dying well before it fails. A RAIDz array can survive the loss of any one drive.

Note: While RAIDz is indeed resilient, if a second drive fails during the rebuild, you're fucked. Always keep backups of things you can't afford to lose. This tutorial, however, is not about proper data safety.

To create the pool, use the following command:

sudo zpool create "zpoolnamehere" raidz "device IDs of drives we're putting in the pool"

For example, let's creatively name our zpool "mypool". This pool will consist of four drives which have the device IDs: sdb, sdc, sdd, and sde. The resulting command will look like this:

sudo zpool create mypool raidz /dev/sdb /dev/sdc /dev/sdd /dev/sde
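Since creating a pool wipes whatever is on the drives, it can be worth previewing the exact command before running it. The sketch below only prints the command; it never touches a disk. The function name build_zpool_create is invented for this example, and it simply prefixes /dev/ onto any bare device ID you pass in.

```shell
# Hypothetical dry-run helper: print (do not run) a zpool create command.
# usage: build_zpool_create <pool name> <raidz|raidz2> <device IDs...>
build_zpool_create() {
    pool=$1
    layout=$2
    shift 2
    cmd="sudo zpool create $pool $layout"
    for dev in "$@"; do
        case $dev in
            /dev/*) cmd="$cmd $dev" ;;       # already a full path
            *)      cmd="$cmd /dev/$dev" ;;  # bare ID like "sdb"
        esac
    done
    printf '%s\n' "$cmd"
}

build_zpool_create mypool raidz sdb sdc sdd sde
```

Read the printed line over, check the device IDs against the ones you jotted down, and only then paste it into the terminal.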

If, as an example, you bought five HDDs and decided you wanted more redundancy, dedicating two drives to this purpose, we would change "raidz" to "raidz2" and the command would look something like the following:

sudo zpool create mypool raidz2 /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf

An array configured like this is known as RAIDz2 and is able to survive two disk failures.
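To get a rough feel for what the parity drives cost you in space, here is a back-of-the-envelope sketch. It assumes all drives are the same size and ignores ZFS metadata and padding overhead, so treat the result as an upper bound. The 8 TB drive size and the function name raidz_usable_tb are made up for illustration.

```shell
# Approximate usable capacity of a single RAIDz vdev, in TB.
# usage: raidz_usable_tb <number of drives> <parity drives> <TB per drive>
raidz_usable_tb() {
    echo $(( ($1 - $2) * $3 ))
}

raidz_usable_tb 4 1 8   # four 8 TB drives in RAIDz:  prints 24
raidz_usable_tb 5 2 8   # five 8 TB drives in RAIDz2: prints 24
```

In other words, under these assumptions the fifth drive in the RAIDz2 example buys a second drive's worth of failure tolerance rather than extra space.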

Once the zpool has been created, we can check its status with the command:

zpool status

Or more concisely with:

zpool list

The nice thing about ZFS as a file system is that a pool is ready to go immediately after creation. If we were to set up a traditional RAID-5 array using mdadm, we'd have to sit through a potentially hours long process of reformatting and partitioning the drives. Instead we're ready to go right out of the gate.

The zpool should be automatically mounted to the filesystem after creation. Check on that with the following:

df -hT | grep zfs

Note: If your computer ever loses power suddenly, say in event of a power outage, you may have to re-import your pool. In most cases, ZFS will automatically import and mount your pool, but if it doesn’t and you can't see your array, simply open the terminal and type sudo zpool import -a.
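If you'd like to script that check, a minimal sketch might look like the following. It assumes the zpool tool is installed and that your pool is called "mypool" as in the earlier example; the two function names are invented here.

```shell
# Succeeds if the named pool shows up in the list of imported pools.
pool_is_imported() {
    zpool list -H -o name 2>/dev/null | grep -qx "$1"
}

# Import all available pools, but only if the named one is missing.
ensure_pool_imported() {
    if pool_is_imported "$1"; then
        echo "$1 is already imported"
    else
        echo "$1 not found, importing all available pools"
        sudo zpool import -a
    fi
}

# Typical use:
#   ensure_pool_imported mypool
```

The -H flag drops the header line and -o name limits the output to pool names, which keeps the grep match simple.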

By default a zpool is mounted at /"zpoolname". The pool should be under our ownership but let's make sure with the following command:

sudo chown -R "yourlinuxusername" /"zpoolname"

Note: Changing file and folder ownership with "chown" and file and folder permissions with "chmod" are essential commands for much of the admin work in Linux, but we won't be dealing with them extensively in this guide. If you'd like a deeper tutorial and explanation you can check out these two guides: chown and chmod.

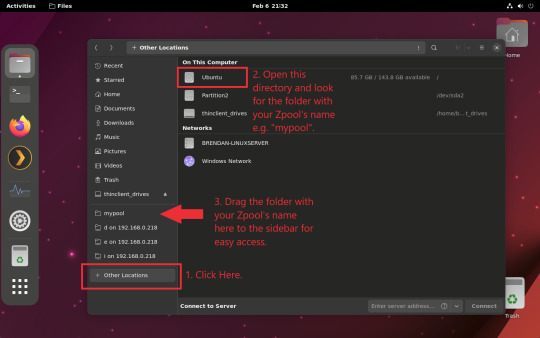

You can access the zpool file system through the GUI by opening the file manager (the Ubuntu default file manager is called Nautilus) and clicking on "Other Locations" on the sidebar, then entering the Ubuntu file system and looking for a folder with your pool's name. Bookmark the folder on the sidebar for easy access.

Your storage pool is now ready to go. Assuming that we already have some files on our Windows PC we want to copy to over, we're going to need to install and configure Samba to make the pool accessible in Windows.

Step Five: Setting Up Samba/Sharing

Samba is what's going to let us share the zpool with Windows and allow us to write to it from our Windows machine. First let's install Samba with the following commands:

sudo apt-get update

then

sudo apt-get install samba

Next create a password for Samba.

sudo smbpasswd -a "yourlinuxusername"

It will then prompt you to create a password. Just reuse your Ubuntu user password for simplicity's sake.

Note: if you're using just a single external drive replace the zpool location in the following commands with wherever it is your external drive is mounted, for more information see this guide on mounting an external drive in Ubuntu.

After you've created a password we're going to create a shareable folder in our pool with this command

mkdir /"zpoolname"/"foldername"

Now we're going to open the smb.conf file and make that folder shareable. Enter the following command.

sudo nano /etc/samba/smb.conf

This will open the .conf file in nano, the terminal text editor program. Now at the end of smb.conf add the following entry:

["foldername"]

path = /"zpoolname"/"foldername"

available = yes

valid users = "yourlinuxusername"

read only = no

writable = yes

browseable = yes

guest ok = no

Ensure that there are no line breaks between the lines and that there's a space on both sides of the equals sign. Our next step is to allow Samba traffic through the firewall:

sudo ufw allow samba

Finally restart the Samba service:

sudo systemctl restart smbd
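If typing the share entry into nano by hand feels error prone, the same entry can be generated by a small helper and then appended to smb.conf. This is only a sketch: the function name print_share_stanza and the example values (media, /mypool/media, alice) are placeholders, not anything Samba provides.

```shell
# Hypothetical helper: print a share entry like the one added to smb.conf above.
# usage: print_share_stanza <share name> <path> <linux username>
print_share_stanza() {
    cat <<EOF
[$1]
path = $2
available = yes
valid users = $3
read only = no
writable = yes
browseable = yes
guest ok = no
EOF
}

# Typical use (commented out so nothing is written by accident):
#   print_share_stanza media /mypool/media alice | sudo tee -a /etc/samba/smb.conf
#   testparm -s    # asks Samba to parse the config and report problems
```

Running testparm -s before restarting smbd is a cheap way to catch a typo in the config file.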

At this point we'll be able to access the pool, browse its contents, and read and write to it from Windows. But there's one more thing left to do: Windows doesn't natively support the ZFS file system and will read the used/available/total space in the pool incorrectly. Windows will read available space as total drive space, and all used space as null. This leads to Windows only displaying a dwindling amount of "available" space as the drives are filled. We can fix this! Functionally this doesn't actually matter, we can still write and read to and from the disk, it just makes it difficult to tell at a glance the proportion of used/available space, so this is an optional step but one I recommend (this step is also unnecessary if you're just using a single external drive). What we're going to do is write a little shell script in bash. Open nano in the terminal with the command:

nano

Now insert the following code:

#!/bin/bash
CUR_PATH=`pwd`
ZFS_CHECK_OUTPUT=$(zfs get type $CUR_PATH 2>&1 > /dev/null) > /dev/null
if [[ $ZFS_CHECK_OUTPUT == *not\ a\ ZFS* ]]
then
    IS_ZFS=false
else
    IS_ZFS=true
fi
if [[ $IS_ZFS = false ]]
then
    df $CUR_PATH | tail -1 | awk '{print $2" "$4}'
else
    USED=$((`zfs get -o value -Hp used $CUR_PATH` / 1024)) > /dev/null
    AVAIL=$((`zfs get -o value -Hp available $CUR_PATH` / 1024)) > /dev/null
    TOTAL=$(($USED+$AVAIL)) > /dev/null
    echo $TOTAL $AVAIL
fi

Save the script as "dfree.sh" to /home/"yourlinuxusername" then change the permissions of the file to make it executable with this command:

sudo chmod 774 dfree.sh
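To see what the ZFS branch of the script is actually computing, here is the same arithmetic pulled out into a standalone sketch. Samba's dfree command expects two numbers, total space and available space, counted in 1024-byte blocks by default, which is why the byte counts are divided by 1024. The function name dfree_line and the byte values are made up for the example.

```shell
# Turn "used" and "available" byte counts into the
# "<total> <available>" line (in 1 KiB blocks) that Samba reads.
# usage: dfree_line <used bytes> <available bytes>
dfree_line() {
    used_kib=$(( $1 / 1024 ))
    avail_kib=$(( $2 / 1024 ))
    echo "$(( used_kib + avail_kib )) $avail_kib"
}

dfree_line 1048576 2097152   # 1 MiB used, 2 MiB free: prints "3072 2048"
```

You can sanity check the real script the same way: cd into the pool, run it by hand, and confirm it prints two plain numbers separated by a space.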

Now open smb.conf with sudo again:

sudo nano /etc/samba/smb.conf

Now add this entry to the top of the configuration file to direct Samba to use the results of our script when Windows asks for a reading on the pool's used/available/total drive space:

[global]

dfree command = /home/"yourlinuxusername"/dfree.sh

Save the changes to smb.conf and then restart Samba again with the terminal:

sudo systemctl restart smbd

Now there’s one more thing we need to do to fully set up the Samba share, and that’s to modify a hidden group permission. In the terminal window type the following command:

sudo usermod -a -G sambashare "yourlinuxusername"

Then restart samba again:

sudo systemctl restart smbd

If we don't do this last step, everything will appear to work fine, and you will even be able to see and map the drive from Windows and begin transferring files, but you'd soon run into a lot of frustration: every ten minutes or so a file would fail to transfer and you would get a window announcing "0x8007003B Unexpected Network Error". This window would require your manual input to continue the transfer with the file next in the queue. And at the end it would reattempt to transfer whichever files failed the first time around. 99% of the time they'll go through on that second try, but this is still all a major pain in the ass. Especially if you've got a lot of data to transfer or you want to step away from the computer for a while.

It turns out samba can act a little weirdly with the higher read/write speeds of RAIDz arrays and transfers from Windows, and will intermittently crash and restart itself if this group option isn’t changed. Inputting the above command will prevent you from ever seeing that window.
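To confirm the group change actually took, you can check the user's group list. The sketch below wraps that check in a small function; the name user_in_group is invented for this example, and "alice" in the usage line is a placeholder for your own username.

```shell
# Succeeds if the given user is a member of the given group.
# usage: user_in_group <username> <group>
user_in_group() {
    id -nG "$1" | tr ' ' '\n' | grep -qx "$2"
}

# Typical use:
#   user_in_group alice sambashare && echo "alice is in sambashare"
```

The id -nG command prints every group the user belongs to on one line, so the helper splits that line up and looks for an exact match.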

The last thing we're going to do before switching over to our Windows PC is grab the IP address of our Linux machine. Enter the following command:

hostname -I

This will spit out this computer's IP address on the local network (it will look something like 192.168.0.x); write it down. It might be a good idea once you're done here to go into your router settings and reserve that IP for your Linux system in the DHCP settings. Check the manual for your specific model of router on how to access its settings; typically it can be accessed by opening a browser and typing http://192.168.0.1 in the address bar, but your router may be different.
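On some machines hostname -I prints more than one address (for example an IPv6 address, or one per network adapter). A rough way to pick out the first IPv4 address is sketched below; the function name first_ipv4 is made up, and the pattern only checks the dotted shape of the address, not that each number is in range.

```shell
# Read a space-separated list of addresses on stdin and
# print the first one that looks like an IPv4 address.
first_ipv4() {
    tr ' ' '\n' | grep -E '^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$' | head -n 1
}

# Typical use:
#   hostname -I | first_ipv4
```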

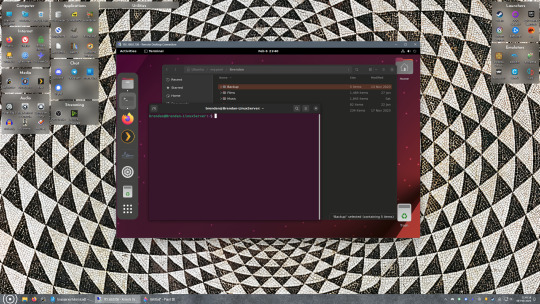

Okay we're done with our Linux computer for now. Get on over to your Windows PC, open File Explorer, right click on Network and click "Map network drive". Select Z: as the drive letter (you don't want to map the network drive to a letter you could conceivably be using for other purposes) and enter the IP of your Linux machine and the name of the share (the name in square brackets you added to smb.conf) like so: \\"LINUXCOMPUTERLOCALIPADDRESSGOESHERE"\"foldernamegoeshere"\. Windows will then ask you for your username and password; enter the ones you set earlier in Samba and you're good. If you've done everything right it should look something like this:

You can now start moving media over from Windows to the share folder. It's a good idea to have a hard line running to all machines. Moving files over Wi-Fi is going to be torturously slow; the only thing that's going to make the transfer time tolerable (hours instead of days) is a solid wired connection between both machines and your router.

Step Six: Setting Up Remote Desktop Access to Your Server

After the server is up and going, you’ll want to be able to access it remotely from Windows. Barring serious maintenance/updates, this is how you'll access it most of the time. On your Linux system open the terminal and enter:

sudo apt install xrdp

Then:

sudo systemctl enable xrdp

Once it's finished installing, open “Settings” on the sidebar and turn off "automatic login" in the User category. Then log out of your account. Attempting to remotely connect to your Linux computer while you’re logged in will result in a black screen!

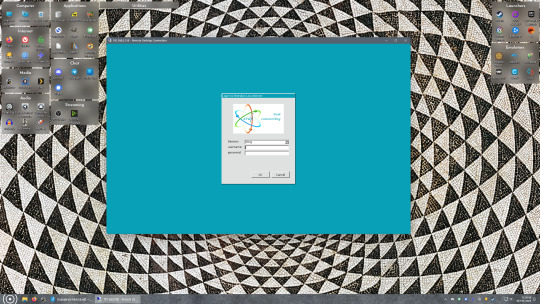

Now get back on your Windows PC, open search and look for "RDP". A program called "Remote Desktop Connection" should pop up, open this program as an administrator by right-clicking and selecting “run as an administrator”. You’ll be greeted with a window. In the field marked “Computer” type in the IP address of your Linux computer. Press connect and you'll be greeted with a new window and prompt asking for your username and password. Enter your Ubuntu username and password here.

If everything went right, you'll be logged into your Linux computer. If the performance is sluggish, adjust the display options. Lowering the resolution and colour depth does a lot to make the interface feel snappier.

Remote access is how we're going to be using our Linux system from now on, barring edge cases like needing to get into the BIOS or upgrading to a new version of Ubuntu. Everything else, from performing maintenance like a monthly zpool scrub to checking zpool status and updating software, can be done remotely.
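If you'd rather not rely on remembering the monthly scrub, it can be scheduled with cron. The sketch below just prints a crontab line for a given pool; the schedule (03:00 on the first of the month), the /usr/sbin/zpool path, and the function name scrub_cron_line are all assumptions for illustration, so check the path with "command -v zpool" first. Your Ubuntu install may also already ship a scheduled scrub with the ZFS package, in which case this is unnecessary.

```shell
# Hypothetical helper: print a crontab line that scrubs the given pool
# at 03:00 on the 1st of every month.
scrub_cron_line() {
    echo "0 3 1 * * /usr/sbin/zpool scrub $1"
}

scrub_cron_line mypool

# To install it, paste the printed line into root's crontab:
#   sudo crontab -e
```

After a scrub has run, zpool status will show when it finished and whether it repaired anything.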

This is how my server lives its life now, happily humming and chirping away on the floor next to the couch in a corner of the living room.

Step Seven: Plex Media Server/Jellyfin

Okay we've got all the ground work finished and our server is almost up and running. We've got Ubuntu up and running, our storage array is primed, we've set up remote connections and sharing, and maybe we've moved over some of our favourite movies and TV shows.

Now we need to decide on the media server software to use which will stream our media to us and organize our library. For most people I'd recommend Plex. It just works 99% of the time. That said, Jellyfin has a lot to recommend it too, even if it is rougher around the edges. Some people run both simultaneously; it's not that big of an extra strain. I do recommend doing a little bit of your own research into the features each platform offers, but as a quick run down, consider some of the following points:

Plex is closed source and is funded through PlexPass purchases while Jellyfin is open source and entirely user driven. This means a number of things: for one, Plex requires you to purchase a "PlexPass" (purchased as a one time lifetime fee $159.99 CDN/$120 USD or paid for on a monthly or yearly subscription basis) in order to access certain features, like hardware transcoding (and we want hardware transcoding) or automated intro/credits detection and skipping, while Jellyfin offers some of these features for free through plugins. Plex supports a lot more devices than Jellyfin and updates more frequently. That said, Jellyfin's Android and iOS apps are completely free, while the Plex Android and iOS apps must be activated for a one time cost of $6 CDN/$5 USD. But that $6 fee gets you a mobile app that is much more functional and features a unified UI across platforms; the Plex mobile apps are simply a more polished experience. The Jellyfin apps are a bit of a mess and the iOS and Android versions are very different from each other.

Jellyfin’s actual media player is more fully featured than Plex's, but on the other hand Jellyfin's UI, library customization and automatic media tagging really pale in comparison to Plex. Streaming your music library is free through both Jellyfin and Plex, but Plex offers the PlexAmp app for dedicated music streaming which boasts a number of fantastic features, unfortunately some of those fantastic features require a PlexPass. If your internet is down, Jellyfin can still do local streaming, while Plex can fail to play files unless you've got it set up a certain way. Jellyfin has a slew of neat niche features like support for Comic Book libraries with the .cbz/.cbt file types, but then Plex offers some free ad-supported TV and films, they even have a free channel that plays nothing but Classic Doctor Who.

Ultimately it's up to you, I settled on Plex because although some features are pay-walled, it just works. It's more reliable and easier to use, and a one-time fee is much easier to swallow than a subscription. I had a pretty easy time getting my boomer parents and tech illiterate brother introduced to and using Plex and I don't know if I would've had as easy a time doing that with Jellyfin. I do also need to mention that Jellyfin does take a little extra bit of tinkering to get going in Ubuntu, you’ll have to set up process permissions, so if you're more tolerant to tinkering, Jellyfin might be up your alley and I’ll trust that you can follow their installation and configuration guide. For everyone else, I recommend Plex.

So pick your poison: Plex or Jellyfin.

Note: The easiest way to download and install either of these packages in Ubuntu is through Snap Store.

After you've installed one (or both), opening either app will launch a browser window into the browser version of the app allowing you to set all the options server side.

The process of creating media libraries is essentially the same in both Plex and Jellyfin. You create separate libraries for Television, Movies, and Music and add the folders which contain the respective types of media to their respective libraries. The only difficult or time consuming aspect is ensuring that your files and folders follow the appropriate naming conventions:

Plex naming guide for Movies

Plex naming guide for Television

Jellyfin follows the same naming rules but I find their media scanner to be a lot less accurate and forgiving than Plex's. Once you've selected the folders to be scanned the service will scan your files, tagging everything and adding metadata. Although I do find Plex more accurate, it can still erroneously tag some things and you might have to manually clean up some tags in a large library. (When I initially created my library it tagged the 1963-1989 Doctor Who as some Korean soap opera and I needed to manually select the correct match after which everything was tagged normally.) It can also be a bit testy with anime (especially OVAs), so be sure to check TVDB to ensure that you have your files and folders structured and named correctly. If something is not showing up at all, double check the name.
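As a rough illustration of the naming conventions, the sketch below builds paths in the general shape the Plex guides describe: a folder per show with season subfolders and "sXXeYY" episode numbering, and a "Title (Year)" folder per movie. The function names and the example titles are made up, and the guides linked above remain the authority on edge cases like specials or multi-part episodes.

```shell
# usage: plex_episode_path <show> <season number> <episode number> <extension>
# Pass the numbers without leading zeros (1, not 01).
plex_episode_path() {
    printf '%s/Season %02d/%s - s%02de%02d.%s\n' "$1" "$2" "$1" "$2" "$3" "$4"
}

# usage: plex_movie_path <title> <year> <extension>
plex_movie_path() {
    printf '%s (%s)/%s (%s).%s\n' "$1" "$2" "$1" "$2" "$3"
}

plex_episode_path "Doctor Who" 1 2 mkv
plex_movie_path "Example Movie" 2010 mkv
```

Renaming a handful of files by this pattern and rescanning is usually the quickest way to tell whether a missing item is a naming problem or something else.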

Once that's done, organizing and customizing your library is easy. You can set up collections, grouping items together to fit a theme or collect together all the entries in a franchise. You can make playlists, and add custom artwork to entries. It's fun setting up collections with posters to match, there are even several websites dedicated to help you do this like PosterDB. As an example, below are two collections in my library, one collecting all the entries in a franchise, the other follows a theme.

My Star Trek collection, featuring all eleven television series, and thirteen films.

My Best of the Worst collection, featuring sixty-nine films previously showcased on RedLetterMedia’s Best of the Worst. They’re all absolutely terrible and I love them.

As for settings, ensure you've got Remote Access going (it should work automatically) and be sure to set your upload speed after running a speed test. In the library settings set the database cache to 2000MB to ensure a snappier and more responsive browsing experience, and then check that playback quality is set to original/maximum. If you're severely bandwidth limited on your upload and have remote users, you might want to limit the remote stream bitrate to something more reasonable. As a point of comparison, Netflix's 1080p bitrate is approximately 5Mbps, although almost anyone watching through a Chromium based browser is streaming at 720p and 3Mbps. Other than that you should be good to go. For actually playing your files, there's a Plex app for just about every platform imaginable. I mostly watch television and films on my laptop using the Windows Plex app, but I also use the Android app which can broadcast to the Chromecast connected to the TV in the office and the Android TV app for our smart TV. Both are fully functional and easy to navigate, and I can also attest to the OS X version being equally functional.

Part Eight: Finding Media

Now, this is not really a piracy tutorial, there are plenty of those out there. But if you’re unaware, BitTorrent is free and pretty easy to use, just pick a client (qBittorrent is the best) and go find some public trackers to peruse. Just know now that all the best trackers are private and invite only, and that they can be exceptionally difficult to get into. I’m already on a few, and even then, some of the best ones are wholly out of my reach.

If you decide to take the left hand path and turn to Usenet you’ll have to pay. First you’ll need to sign up with a provider like Newshosting or EasyNews for access to Usenet itself, and then to actually find anything you’re going to need to sign up with an indexer like NZBGeek or NZBFinder. There are dozens of indexers, and many people cross post between them, but for more obscure media it’s worth checking multiple. You’ll also need a binary downloader like SABnzbd. That caveat aside, Usenet is faster, bigger, older, less traceable than BitTorrent, and altogether slicker. I honestly prefer it, and I'm kicking myself for taking this long to start using it because I was scared off by the price. I’ve found so many things on Usenet that I had sought in vain elsewhere for years, like a 2010 Italian film about a massacre perpetrated by the SS that played the festival circuit but never received a home media release; some absolute hero uploaded a rip of a festival screener DVD to Usenet. Anyway, figure out the rest of this shit on your own and remember to use protection, get yourself behind a VPN, use a SOCKS5 proxy with your BitTorrent client, etc.

On the legal side of things, if you’re around my age, you (or your family) probably have a big pile of DVDs and Blu-Rays sitting around unwatched and half forgotten. Why not do a bit of amateur media preservation, rip them and upload them to your server for easier access? (Your tools for this are going to be Handbrake to do the ripping and AnyDVD to break any encryption.) I went to the trouble of ripping all my SCTV DVDs (five box sets worth) because none of it is on streaming nor could it be found on any pirate source I tried. I’m glad I did, forty years on it’s still one of the funniest shows to ever be on TV.

Part Nine/Epilogue: Sonarr/Radarr/Lidarr and Overseerr

There are a lot of ways to automate your server for better functionality or to add features you and other users might find useful. Sonarr, Radarr, and Lidarr are a part of a suite of “Servarr” services (there’s also Readarr for books and Whisparr for adult content) that allow you to automate the collection of new episodes of TV shows (Sonarr), new movie releases (Radarr) and music releases (Lidarr). They hook in to your BitTorrent client or Usenet binary newsgroup downloader and crawl your preferred Torrent trackers and Usenet indexers, alerting you to new releases and automatically grabbing them. You can also use these services to manually search for new media, and even replace/upgrade your existing media with better quality uploads. They’re really a little tricky to set up on a bare metal Ubuntu install (ideally you should be running them in Docker Containers), and I won’t be providing a step by step on installing and running them, I’m simply making you aware of their existence.

The other bit of kit I want to make you aware of is Overseerr which is a program that scans your Plex media library and will serve recommendations based on what you like. It also allows you and your users to request specific media. It can even be integrated with Sonarr/Radarr/Lidarr so that fulfilling those requests is fully automated.

And you're done. It really wasn't all that hard. Enjoy your media. Enjoy the control you have over that media. And be safe in the knowledge that no hedgefund CEO motherfucker who hates the movies but who is somehow in control of a major studio will be able to disappear anything in your library as a tax write-off.

1K notes


Text

In light of the recent Nintendo boycotts, I come bearing a gift

I'll copy/paste a message I've been sharing in discord servers

If you like Nintendo games but hate the company, today's your lucky day

This is totally illegal and you absolutely shouldn't do it because it's wrong, so I'm gonna tell you exactly what to do so that you guys know not to do it!

You guys absolutely should not download Azahar Nintendo 3DS emulator and then go onto Citra-emulator.com to find old Nintendo DS and Nintendo 3DS games and then open the games through Azahar to play for free, including Tomodachi life, ACNH, The Sims 3, Nintendogs + Cats and Flipnote Studio.

You really shouldn't do this stuff, it's illegal, but if you did it, it would totally work and no one could stop you. Also I work in tech and virus scanned random files and they all came up clean so it's safe but it's still illegal don't do it................. (But you totally could and no one would stop you)

The Citra emulator doesn't work because the dev got hit with a lawsuit. He went on to work on Azahar. They say not to do this for legal protection, but it fully works.

As far as I know, these games do not have piracy barriers EXCEPT Tomodachi Life (A large red cross over the character faces). I have a debug file that fixes this. If you guys come across another game that has a barrier let me know and I'll search for a debug

Tutorial

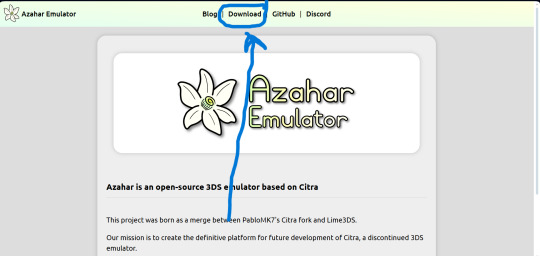

Use this link to download the emulator

https://azahar-emu.org/ scroll all the way up to "Download". Download the version that corresponds with your system (Windows, Mac, Linux, Android)

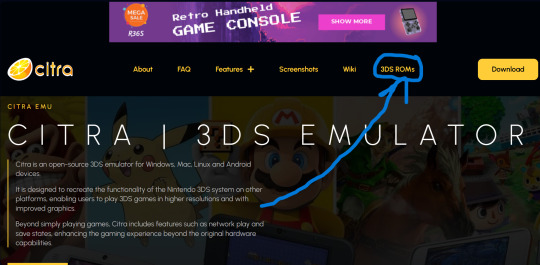

And this link to download the game files

https://citra-emulator.com/ Scroll all the way up to "3DS ROMS". There is an incomplete but still extensive collection of games, both Japanese and English titles as well as Pokemon ROM hacks

On Windows, place the game files on your desktop and open them. It will ask you what app you want to open the file with. Choose "Select app on PC", search for Azahar and select it then press "okay"

(I'm not 100% on the process for Linux and Mac but I'm sure they're similar. On Android I know for certain they are)

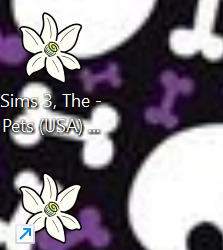

You'll know if it works because the game icons will switch from a paper file to the Azahar flower

Once you see these flowers, you are all set and ready to play!

And here is the error fix for Tomodachi life. Download this file and open it like normal. It will ask you what app you wish to open it with. Open it with Azahar.

Don't panic! A lowkey scary looking dialogue box will pop up for a moment and text will very quickly load onto it. This is Azahar reading the file and saving the commands. It will very quickly close itself. Once that window closes itself, you're all set to open Tomodachi Life and play like normal!

https://drive.google.com/file/d/1_BQfoGycmpaaOvBEm29LU1FKqy7cgG6j/view?usp=drive_link

(This is an upload from my own personal google drive account. I pinkie promise there's no virus on this. and if there is you have full permission to yell at me and put me on blast)

and that's everything I got! Feel free to reblog with other sites or tips you have! <3 Have fun lovelies!

#toby rambles#stardew valley#creepypasta#mouthwashing#hatsune miku#thats not my neighbor#animal crossing#The sims#simblr#tamagotchi#emulation#game emulator#activism#boycott nintendo#vocaloid#epic the musical#epic telemachus#epic odysseus#epic penelope#Stardew valley#stardew#sdv elliott#sdv sebastian#sdv haley

85 notes


Text

Sed is one of the most powerful tools on Linux and Unix-like systems. Learning it is worthwhile, so in this tutorial, we will start with the sed command syntax and examples. 👇
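As a small taste of the syntax, sed's substitute command has the shape s/pattern/replacement/flags. The sketch below wraps it in two helpers; the function names are made up, and because the arguments are dropped straight into the sed expression they are treated as regular expressions and must not contain a slash.

```shell
# Replace only the first match on each line of input.
# usage: swap_first <old> <new>
swap_first() {
    sed "s/$1/$2/"
}

# Replace every match on each line (the g flag means "global").
# usage: swap_all <old> <new>
swap_all() {
    sed "s/$1/$2/g"
}

echo "a-a-a" | swap_first a b   # prints b-a-a
echo "a-a-a" | swap_all a b     # prints b-b-b
```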

30 notes


Video

youtube

Linux Commands and Command Line Tools

#youtube#🖥️ Master Essential Linux Commands & Command Line Tools! 🚀 In this comprehensive tutorial dive into the powerful world of Linux command-li

0 notes

Text

My 3 days of Linux adventures

I figured out how to copy an iso onto a flashdrive to install linux and after realizing I was hitting the wrong BIOS menu button after a few hours of trial and error and a call to my more tech savvy sister

Started linux setup, got steam on there, realized how many of my games were windows only downloads, and proceeded to research for another couple hours how to get wine running and what front end to use because my computer has 3 gb of ram and I didn't trust that it could handle dual running OSs

Figure out there's literally a button for it in steam download options after which I say fuck it pass out and just reinstall linux the next morning hours faster than the first time I did.

Yay! Games installed!!

Download discord. Discord calls sound like I'm talking through a tin can on a landing strip no matter what settings I mess with. Assume it's something to do with the wifi cutting out. Investigate for hours to experiment with wifi power saving and settings and finally throw in the towel to talk to my sister again

My wifi despite showing two bars is actually faster than it's ever been and is downloading at ~100mbs. Give up for the night

Wake up the next morning to figure out what was fucking up, play around with mic settings and levels before finally reading a forum post from two years ago talking about Windows' auto installed noise cancellation drivers.

Resign myself to either needing to buy an external mic that's not right next to my computer's half broken fan, or needing to download specific noise canceling drivers from github

Struggle with figuring out how to run shit from github for an hour

Resign myself to the external mic pt 2, try to boot up my favorite little rpgmaker puzzle game and it runs like a slideshow. This is my limit. I need my little mimic chest puzzle.

Begin researching again. Learn about more drivers I could potentially try installing or the much simpler method of just dual booting (computer has no ram. She's so old you guys)

Finally throw in the towel completely and decide to unfortunately switch back to Windows10. Download the iso accidentally and struggle around with getting it on the usb before getting the rar I need and the program to reformat the usb to take it (thank you ventoy) and struggle to download it while making sense of tutorials

Try to boot it. Fail.

What the fuck is a partition

Finally realized at this point that the prefix 'sudo' in Ubuntu is the command to run as root. That would've been nice to know

Finally delete partitions, run windows and get it reinstalled.

Honestly a 10/10 experience had a blast would do again

13 notes


Note

Any recommendations/cautions about using Alpine Linux on the desktop? It's always intrigued me and you're the only person I've seen post about it

Alpine is pretty good for desktop, very stable, good security practice, professional development philosophy, broad package availability. You will run into some very obvious pitfalls, although they can mostly be obviated by using some modern applications.

The Alpine wiki is a little sparse and at times can be weirdly focussed, like spending a lot of the installation page talking about the very specific use case of a diskless install. Nonetheless, it's quite good and should be your first port of call. A lot of the things I'm mentioning here are well covered in the article on Daily Driving for Desktop use. I'm basically just editorializing here.

The installation procedure is command-line only, but pretty straightforward, you run setup-alpine and follow the prompts, assuming you want a basic system. If you need special disk partitioning, you'll usually have to do it yourself. There's a whole whackload of helpers to get you set up, like setup-desktop which will help you install any of 'gnome', 'plasma', 'xfce', 'mate', 'sway', or 'lxqt'. Most of these are called by setup-alpine for you, but not the desktop one. You can call it at any time though.

Most obviously, musl libc, no glibc. Packaged software will work fine. There's a compatibility shim called gcompat that will usually work, but might fall apart on more complicated software expecting glibc, for example I've had no luck running glibc AppImages. For more complex software, Flatpaks are a good option, e.g. Steam runs great on Alpine as a Flatpak, I run the Homestuck Companion Flatpak. Your last ditch is containerization and chroots, which are fortunately really easy to handle, just install podman and Distrobox and you can run anything that won't run on Alpine inside a Fedora or Debian or Whatever container seamlessly with your desktop.

Less obviously: no systemd. Systemd underpins some really common features of modern Linux and not having it around means you have to use a few different tools that are anywhere from comparable to a little worse for some tasks. Packaged applications will work smoothly, just learn the OpenRC invocations, Alpine has a really great wiki. For writing your own services, it's a lot more limited than systemd, you're not going to have full access to like, udev functionality, instead you get the good but kind of weird eudev system.
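If your muscle memory is all systemctl, a rough cheat sheet for the most common OpenRC invocations can be sketched as a function that prints the equivalent command. This only covers a handful of verbs and assumes the "default" runlevel; the function name openrc_equivalent is made up, and "sshd" in the usage lines is just an example service.

```shell
# Print the OpenRC command roughly equivalent to "systemctl <verb> <service>".
# usage: openrc_equivalent <verb> <service>
openrc_equivalent() {
    case $1 in
        enable)  echo "rc-update add $2 default" ;;
        disable) echo "rc-update del $2 default" ;;
        start|stop|restart|status) echo "rc-service $2 $1" ;;
        *) echo "no direct equivalent known for: $1" >&2; return 1 ;;
    esac
}

openrc_equivalent enable sshd    # prints rc-update add sshd default
openrc_equivalent restart sshd   # prints rc-service sshd restart
```

Note the argument order flips: systemctl takes the verb first, while rc-service takes the service name first.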

If you're mainly installing things from the repos you'll barely notice the difference, other than that every package is split up into three, <package>, <package>-doc, and <package>-dev. This is a container-y thing, to allow Alpine container images to install the smallest possible packageset. If you need man pages you'll have to install them specifically.

Alpine has a very solid main repo, and a community repo that's plenty good, and worth enabling on any desktop system. It'll generally be automatically enabled when you set up a desktop anyway, but just a notice if you're going manual. You can run Stable alpine, which updates every six months, or if you want you can run Edge, which is a rolling release of packages as they get added. Lots of very up-to-date software, and pretty stable as these go. You can go from Stable->Edge pretty easily, going back not so much.

There's also the Testing repo, only available on Edge, which I don't really recommend, especially since apkbuild files are so easy to run if you just need one thing that has most of its dependencies met.

Package management is with APK, which is fast and easy to work with. The wiki page will cover you.

Side note: if you want something more batteries-included, you could look at Postmarket, an Alpine derivative mainly focussed on running on smartphones but that is a pretty capable desktop OS, and which has a fairly friendly setup process. I run this on an ARM Chromebook and it's solid. Installation requires some reading between the lines because it's intended for the weird world of phones, so you'll probably want to follow the PMBootstrap route.

8 notes


Text

i started reading an article and couldn’t continue due to not having a subscription.

then i remembered. the answer lies in ✨disabling Javascript ✨

tutorial links below, for anyone who also can’t find The OG Tumblr Post:

Chrome:

Firefox:

#disabling javascript#javascript#dev tools#hacks#life hacks#privacy#chrome#firefox#browsing tips#tech tips#adblock#anti capitalism#shameless#i was reading about disability representation in shameless#reading tips#accessibility#internet freedom#fuck capitalism#punk

9 notes
