#government intelligence

People are being killed over this - UFO whistleblowers EXPOSE the deep state agenda | Redacted News

youtube

#UFO#deep state#steven greer#military industrial complex#government intelligence#CIA#politics#constitution#national security#cover up#whistleblower#military#military black sites#technology#congress#ufo disclosure#extraterrestrials#beyond top secret#black projects#black budget#special access programs#unacknowledged special access programs#space#faster than light#aliens#human trafficking#Youtube

4 notes


BREAKING: #FuckStalkerware pt. 7 - israeli national police found trying to purchase stalkerware

for the first time ever, we can prove governments, intelligence companies and data brokers have tried to strike deals with mSpy, a stalkerware company

#fuckstalkerware#maia arson crimew#stalkerware#infosec#security#cyber security#governments#intelligence#spyware#journalism

6K notes


#law. lying low for weeks: the plan is slowly coming together. the pieces are slotting into place. this WILL run smoothly this time.#luffy. the moment he touches land: I'm here to loudly overthrow the government with my sheer power of will#law's intelligence perplexes me because he is. so strategic. knows when and how to play his cards#and is insightful enough to be able to get on people's nerves with their greatest fears#but also. how has he not cottoned on to the fact that this is how luffy operates.#yes he will do the loudest most reckless thing for the sake of other people. (little girl he befriended 5 minutes ago)#yes he will completely discard your plan the moment it becomes inconvenient to his goal.#yes it will benefit law in the end. and he will be mad about it.#CJ's op watch-through#one piece#op#trafalgar law#monkey d. luffy#lawlu

3K notes


Firefox added AI features in a recent update. Here's how you can disable them.

1. Open about:config in your browser.
2. Accept the warning it gives.
3. Search for browser.ml. Blank all the text values and set the true/false values to false where necessary, as shown in the screenshot; anything that requires a number can be set to 0.
4. Restart the browser. You should no longer see the greyed-out checkbox checked, and the AI chatbot should be disabled for good.
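If you'd rather apply these settings from a file than edit them one by one, Firefox also reads a user.js file from your profile folder at every startup. The Python sketch below applies the same rule as the steps above (true/false values become false, numbers become 0, text is blanked) to a dictionary of prefs and prints matching user.js lines. The pref names and values in the example are assumptions for illustration only; copy the real browser.ml entries and their current values from your own about:config search.

```python
import json


def neutralize(prefs: dict) -> dict:
    """Return each pref's 'off' value using the rule from the post:
    booleans become false, numbers become 0, strings become blank."""
    off = {}
    for name, value in prefs.items():
        # Check bool first: in Python, bool is a subclass of int.
        if isinstance(value, bool):
            off[name] = False
        elif isinstance(value, (int, float)):
            off[name] = 0
        else:
            off[name] = ""
    return off


def to_user_js(prefs: dict) -> str:
    """Render prefs as user_pref(...) lines; json.dumps yields JS-style literals."""
    return "\n".join(
        f"user_pref({json.dumps(name)}, {json.dumps(value)});"
        for name, value in sorted(prefs.items())
    )


if __name__ == "__main__":
    # Hypothetical example values: replace with what about:config shows for browser.ml.
    current = {
        "browser.ml.chat.enabled": True,
        "browser.ml.chat.provider": "https://example.invalid/chat",
        "browser.ml.example.timeoutMs": 5000,  # hypothetical numeric pref
    }
    print(to_user_js(neutralize(current)))
```

Save the printed lines as user.js in your profile folder (about:profiles shows where it is) and restart. Because user.js is re-applied at every startup, remove the lines again if you ever want a feature back.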

#mozilla#mozilla firefox#firefox#web browsers#pro tip#protips#anti ai#fuck ai#internet#how to#diy#do it yourself#artificial intelligence#signal boost#signal b00st#signal boooooost#ausgov#politas#auspol#tasgov#taspol#australia#fuck neoliberals#neoliberal capitalism#anthony albanese#albanese government

360 notes


Ida is Constantine's good ex.

They separated on good terms after a few months because Ida wanted to start a family and Constantine didn't (for obvious reasons). Then, despite Ida's efforts to keep in touch as a long-distance friend, she soon stopped hearing from him altogether.

So when she sees him barely older and seemingly lost in her town… she takes him for a ghost who has kept a very human appearance. She rushes over to him, because she is surely not the only one to notice a "tourist" in town.

She takes a minute to pity him and apologize. He hadn't been ignoring her, he was dead!!! Then she starts explaining to him why, as a ghost, it's not safe for him here, even though it's very nice of him to visit <3

Constantine had never told Ida about magic; after all, she was one of his exes from before his cancer (around the time he stopped responding to her) and before he first sold his soul three times over to escape death. But he knows Ida well enough not to contradict her. Besides, she gives him all the information he is looking for in quick-fire notes.

…

Okay, he also missed Ida. It felt SO strange to see her old, but she apparently hadn't lost any of her sharpness.

This is how Constantine ended up invited to Ida's for tea, to catch up on lost time and get a more complete debrief of the Amity Park situation.

#dpxdc#dcxdp#dc x dp#dp x dc#dp x dc crossover#dc x dp crossover#ida manson#john constantine#amity park#I didn't write it but I'm not sure that Ida would be against going to bed with a ghost#after that I'm not sure on Constantine's side#Constantine doesn't have the heart to tell Ida that he was alive all the time#but that he had been so caught up in the magic tricks to dodge his cancer that he had completely skipped it#and even afterward he never thought about contacting her again#even though she's one of if not the friendliest ex of all.#if he had done it or at least talked to her about magic and his survival#maybe she would have contacted him before the situation got to this point#seriously there is a government agency that wants to start a dimensional war and a couple mad scientists far too intelligent for anyone's good#ida becoming a JLD contact? more likely than you think#my#my prompts#prompt

785 notes


the only Amis who have to Actually Care about their studies are the med students

all the rest are on a sliding scale from 'we don't actually know if they're even in college' to 'actively resisting the college they are enrolled in like they've been dumped behind enemy lines'

they are shit terrible students and that is actually canon

#Marius is a good student.#Marius is square as hell.#this is actually a part of the setup that i think is really hard to graft onto the US modern setting#college is so massively different now#it's expensive there are so many Requirements it's so hostile to like. Life.#why would most of them even be there#being a Good Student has absolutely no correlation with Being Intelligent or Being a Good Person or Overthrowing the Government#and it's less and less of a Given that even middle class kids will attend#so let me tell you about my Amis in Community College/ Community Classes AU--- *is pulled offstage by a giant comedy hook*

352 notes


separate post with the drawing by itself, original photo under cut by @qsmp-where-they-shouldnt-be

#qsmp#art#digital art#qsmp charlie slimecicle#qsmp juanaflippa#qsmp fanart#mcyt#mcyt fanart#slimecicle#charlie slimecicle#jort storm#juanaflippa#i loved drawing flippa so much she’s so silly#she has 17 allergies and is wanted by the central intelligence agency of at least 29 different governments#i miss her every day

479 notes


DARPA is and has been working on projects to connect the human mind to AI. 🤔

#pay attention#educate yourselves#educate yourself#knowledge is power#reeducate yourself#reeducate yourselves#think about it#think for yourselves#think for yourself#do your homework#do some research#do your own research#do your research#ask yourself questions#question everything#darpa#government corruption#ai#artificial intelligence#news

99 notes


#lmao#lol#tiktok#national security#chinese spy#byteDance#espionage#data privacy#cybersecurity#congressional hearings#china-us relations#social media#misinformation#manipulation#intelligence agencies#hypothetical threat#chinese government#user data privacy#tiktok ownership#foreign influence#online surveillance#misinformation campaign

118 notes


The CIA As Organized Crime: How Illegal Operations Corrupt America And The World

#c.i.a.#organized crime#government corruption#central intelligence agency#espionage#psyops#the deep state#books#📖

166 notes


Take action to stop chat control now!

Chat control is back on the agenda of EU governments.

EU governments are to express their position on the latest proposal on 23 September. EU Ministers of the Interior are to adopt the proposal on 10/11 October.

On Monday a new version of the globally unprecedented EU bill aimed at searching all private messages and chats for suspicious content (so-called chat control or child sexual abuse regulation) was circulated, and POLITICO leaked it soon after.

According to the latest proposal, providers would be free to decide whether or not to use 'artificial intelligence' to classify unknown images and text chats as 'suspicious'.

However, they would be obliged to search all chats for known illegal content and report it, even at the cost of breaking secure end-to-end messenger encryption.

#fascism#chat control#government surveillance#internet#privacy#artificial intelligence#ai#climate crisis#eu#european union#internet censorship#surveillance capitalism#2024#activism

39 notes


#aliens#ufo#ufos#us govt#us government#breaking news#news#castiel#destiel#spn#us intelligence#under oath#july 26 2023#non human#aliens and ufos#aliens are real#ufos are real

280 notes


The Collective Intelligence Institute

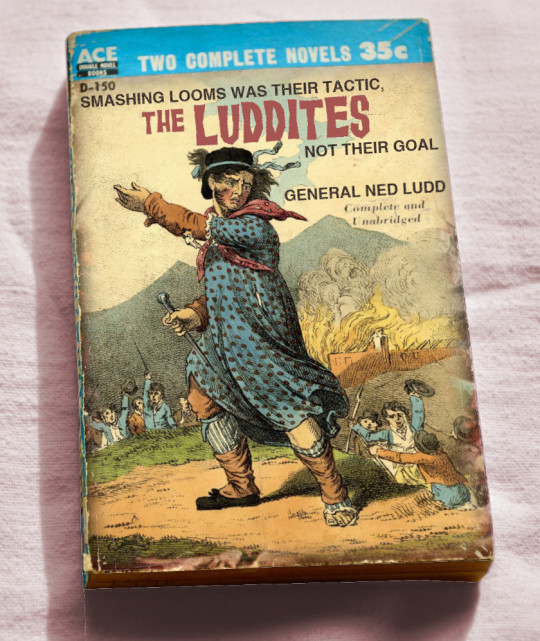

History is written by the winners, which is why Luddite is a slur meaning “technophobe” and not a badge of honor meaning, “Person who goes beyond asking what technology does, to asking who it does it for and who it does it to.”

https://locusmag.com/2022/01/cory-doctorow-science-fiction-is-a-luddite-literature/

If you’d like an essay-formatted version of this post to read or share, here’s a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/02/07/full-stack-luddites/#subsidiarity

Luddites weren’t anti-machine activists, they were pro-worker advocates, who believed that the spoils of automation shouldn’t automatically be allocated to the bosses who skimmed the profits from their labor and spent them on machines that put them out of a job. There is no empirical right answer about who should benefit from automation, only social contestation, which includes all the things that desperate people whose access to food, shelter and comfort are threatened might do, such as smashing looms and torching factories.

The question of who should benefit from automation is always urgent, and it's also always up for grabs. Automation can deepen and reinforce unfair arrangements, or it can upend them. No one came off a mountain with two stone tablets reading "Thy machines shall condemn labor to the scrapheap of history while capital amasses more wealth and power." We get to choose.

Capital's greatest weapon in this battle is inevitabilism, sometimes called "capitalist realism," summed up with Fredric Jameson's famous quote "It's easier to imagine the end of the world than the end of capitalism" (often misattributed to Žižek). A simpler formulation can be found in the doctrine of Margaret Thatcher: "There Is No Alternative," or even Dante's "Abandon hope all ye who enter here."

Hope — alternatives — lies in reviving our structural imagination, thinking through other ways of managing our collective future. Last May, Wired published a brilliant article that did just that, by Divya Siddarth, Danielle Allen and E. Glen Weyl:

https://www.wired.com/story/web3-blockchain-decentralization-governance/

That article, “The Web3 Decentralization Debate Is Focused on the Wrong Question,” set forth a taxonomy of decentralization, exploring ways that power could be distributed, checked, and shared. It went beyond blockchains and hyperspeculative, Ponzi-prone “mechanism design,” prompting me to subtitle my analysis “Not all who decentralize are bros”:

https://pluralistic.net/2022/05/12/crypto-means-cryptography/#p2p-rides-again

That article was just one installment in a long, ongoing project by the authors. Now, Siddarth has teamed up with Saffron Huang to launch the Collective Intelligence Project, "an incubator for new governance models for transformative technology."

https://cip.org/whitepaper

The Collective Intelligence Project’s research focus is “collective intelligence capabilities: decision-making technologies, processes, and institutions that expand a group’s capacity to construct and cooperate towards shared goals.” That is, asking more than how automation works, but who it should work for.

Collective Intelligence institutions include "markets…nation-state democracy…global governance institutions and transnational corporations, standards-setting organizations and judicial courts, the decision structures of universities, startups, and nonprofits." All of these institutions let two or more people collaborate, which is to say, they let us do superhuman things: things that transcend the limitations of the lone individual.

Our institutions are failing us. Confidence in democracy is in decline, and democratic states have failed to coordinate to solve urgent crises, like the climate emergency. Markets are also failing us, “flatten[ing] complex values in favor of over-optimizing for cost, profit, or share price.”

Neither traditional voting systems nor speculative markets are up to the task of steering our emerging, transformative technologies — neither machine learning, nor bioengineering, nor labor automation. Hence the mission of CIP: “Humans created our current CI systems to help achieve collective goals. We can remake them.”

The plan to do this is in two phases:

Value elicitation: “ways to develop scalable processes for surfacing and combining group beliefs, goals, values, and preferences.” Think of tools like Pol.is, which Taiwan uses to identify ideas that have the broadest consensus, not just the most active engagement.

Remake technology institutions: “technology development beyond the existing options of non-profit, VC-funded startup, or academic project.” Practically, that’s developing tools and models for “decentralized governance and metagovernance, internet standards-setting,” and consortia.

The founders pose this as a solution to “The Transformative Technology Trilemma” — that is, the supposed need to trade off between participation, progress and safety.

This trilemma usually yields one of three unsatisfactory outcomes:

Capitalist Acceleration: “Sacrificing safety for progress while maintaining basic participation.” Think of private-sector geoengineering, CRISPR experimentation, or deployment of machine learning tools. AKA “bro shit.”

Authoritarian Technocracy: “Sacrificing participation for progress while maintaining basic safety.” Think of the vulnerable world hypothesis weirdos who advocate for universal, total surveillance to prevent “runaway AI,” or, of course, the Chinese technocratic system.

Shared Stagnation: “Sacrificing progress for participation while maintaining basic safety.” A drive for local control above transnational coordination, unwarranted skepticism of useful technologies (AKA “What the Luddites are unfairly accused of”).

The Institute’s goal is to chart a fourth path, which seeks out the best parts of all three outcomes, while leaving behind their flaws. This includes deliberative democracy tools like sortition and assemblies, backed by transparent machine learning tools that help surface broadly held views from within a community, not just the views held by the loudest participants.

This dovetails with creating new tech development institutions to replace the default, venture-backed startup for "societally-consequential, infrastructural projects," including public benefit companies, focused research organizations, perpetual purpose trusts, co-ops, etc.

It’s a view I find compelling, personally, enough so that I have joined the organization as a volunteer advisor.

This vision resembles the watershed groups in Ruthanna Emrys's spectacular "A Half-Built Garden," which was one of the most inspiring novels I read last year (a far better source of stfnal inspo than the technocratic fantasies of the "Golden Age"):

https://pluralistic.net/2022/07/26/aislands/#dead-ringers

And it revives the long-dormant, utterly necessary spirit of the Luddites, which you can learn a lot more about in Brian Merchant's forthcoming, magisterial "Blood In the Machine: The Origins of the Rebellion Against Big Tech":

https://www.littlebrown.com/titles/brian-merchant/blood-in-the-machine/9780316487740/

This week (Feb 8–17), I’ll be in Australia, touring my book Chokepoint Capitalism with my co-author, Rebecca Giblin. We’ll be in Brisbane tomorrow (Feb 8), and then we’re doing a remote event for NZ on Feb 9. Next are Melbourne, Sydney and Canberra. I hope to see you!

[Image ID: An old Ace Double paperback. The cover illustration has been replaced with an 18th century illustration depicting a giant Ned Ludd leading an army of Luddites who have just torched a factory. The cover text reads: 'The Luddites. Smashing looms was their tactic, not their goal.']

#pluralistic#ai#artificial intelligence#b-corps#collective intelligence#full-stack luddism#full-stack luddites#governance#luddism#ml#sortition#subsidiarity

621 notes


Anybody know what Trump's drag name is? I'm guessing "Orange Blossom"? ...or "Meg O'Lomania"? ...or...?

#donald trump#drag#funny#hilarious#video#lgbtq#republicans#humor#politics#aesthetic#cute#artificial intelligence#photoshop#democrats#government#haha#us politics#gay#trans#beauty-funny-trippy#tiktok#drag ban#end drag ban

83 notes


#meta ai#ai#futurama#futurama meme#meme#memes#artificial intelligence#fuck off#a.i. art#a.i. generated#ai generated#ai art#ai artwork#ai girl#a.i.#ausgov#politas#auspol#tasgov#taspol#australia#fuck neoliberals#neoliberal capitalism#anthony albanese#albanese government#meta#facebook#social networks#social media#internet

349 notes

·

View notes

Text

The way to judge a country is to observe the way they treat their animals, their women, and their vulnerable (not necessarily in that order). That's my benchmark, always.

#spilled thoughts#awareness#personal development#personal values#personal growth#personal responsibility#internalized beliefs#internalized antisemitism#campus protests#gaza#israel#yemen#jerusalem#human rights#human experience#uk universities#uk elections#uk government#uk govt#palestine#kindness#emotional intelligence#spiritual growth#spiritual journey#spiritualgrowth#uk protests#gaza strip#humanity#student protests

20 notes
